Relato: Turking the business graph

A failed analytics startup post-mortem.

Warp (source: Pixabay)

In order to conquer a market, you must first understand it. We often speak of markets in the abstract, as addressable segments of the economy, defining them by examples of companies and by comparisons to others engaged in similar activities. Sales and marketing leaders have richer internal models of the markets they contest, and they use those models to guide their organizations in the fight for market share. In January 2015, I set out to build an external representation of a market every bit as rich as those in the minds of leading executives driving successful companies. I founded an analytics startup called Relato, which, unfortunately, did not succeed. In this post, I’ll present the story of the company and the work I did there, the entrepreneurship and network science involved, and some insight into how not to run a young analytics startup, along with a little about how to run one well.

My mission with Relato was to build a deeper understanding of the modern networked economy, a vast network in which companies are best defined according to their business relationships with other companies. When it comes to understanding companies, what matters is “who you know.” These relationships form the edges of the business graph, which links each company to its customers, partners, competitors, and investors.

Mission: Mapping markets

I started Relato with an experiment to see how much market intelligence I could gather from the business web. Having worked at LinkedIn, I missed their social graph. I wondered, “Could a copy of the business graph be collected from the open web?” The answer to that research question is what led me to found Relato.

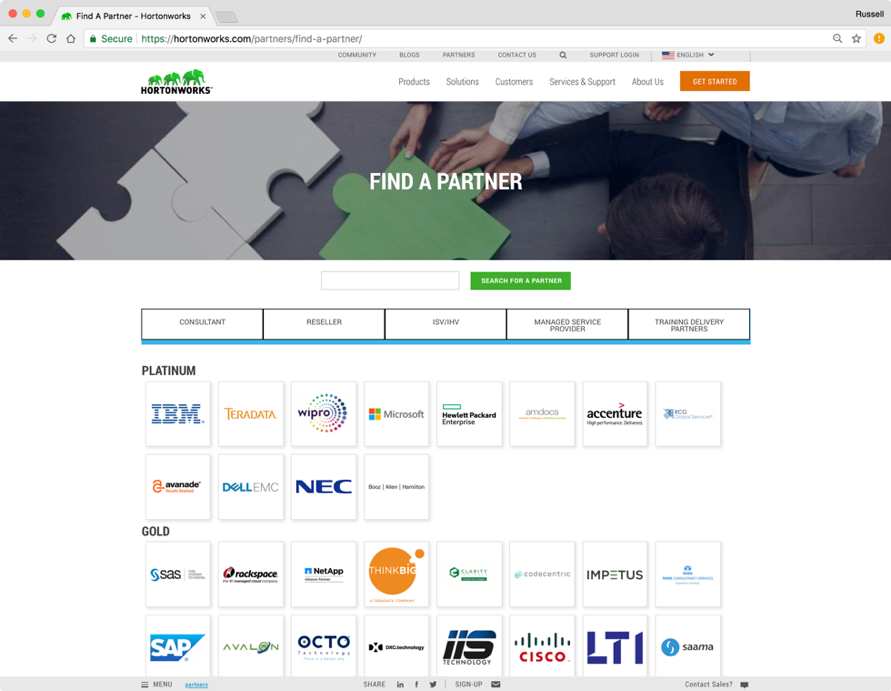

I started by surveying the state of the market for data on companies. I discovered that while basic firmographic data was available—things like address, industry code, website technologies—there was nothing that captured the actual business activity of companies. By contrast, when I surveyed the websites of businesses, I found a treasure trove of information about their business relationships with other companies. Starting with a market I knew—big data—I manually transcribed the partnership pages of the major players: Hortonworks, Cloudera, MapR, and Pivotal. The combined list came to hundreds of companies—not a bad survey of the big data market.

I saw opportunity! I could transcribe the companies listed on partnership pages to learn how companies actually did business. Then I could use graph analytics on this data to extract next-generation profiles on companies. This data could be used in lead scoring and lead generation systems to provide a breakthrough in their level of performance. In short, I could provide leads for enterprise customers that would convert to sales at a rate never before seen! I got excited. What if sales calls only came to people who wanted your product, because Relato told you so? I could optimize the economy and change the world! I was inspired.

I figured out roughly how I could collect this data using natural language processing, an area I know a little about but that is not one of my core skills. Building this model and making it good enough to be saleable would take at least a year. I did not have a year of cash to burn in the bank. Fortunately, there was a faster alternative.

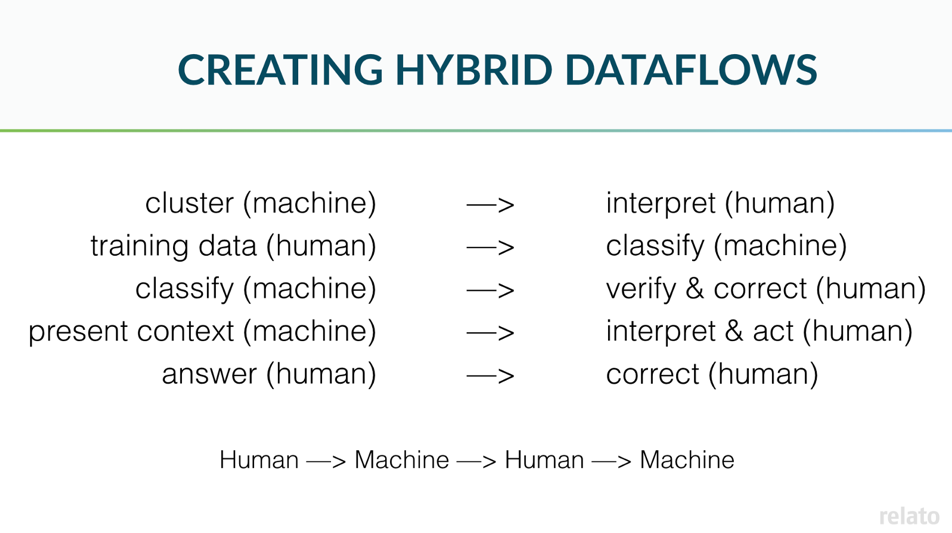

There was a shift in “big data” to hybrid human/machine processing, where humans located in places where wages are low would perform many instances of simple tasks to cheaply create data sets for machine learning systems. Using this method, there would ultimately be a higher cost per record collected because I would be paying real humans wages, but the up-front cost in development time was much lower. This way, I could get started much faster, shipping a beta product to customers using money from angel investors instead of venture capitalists.

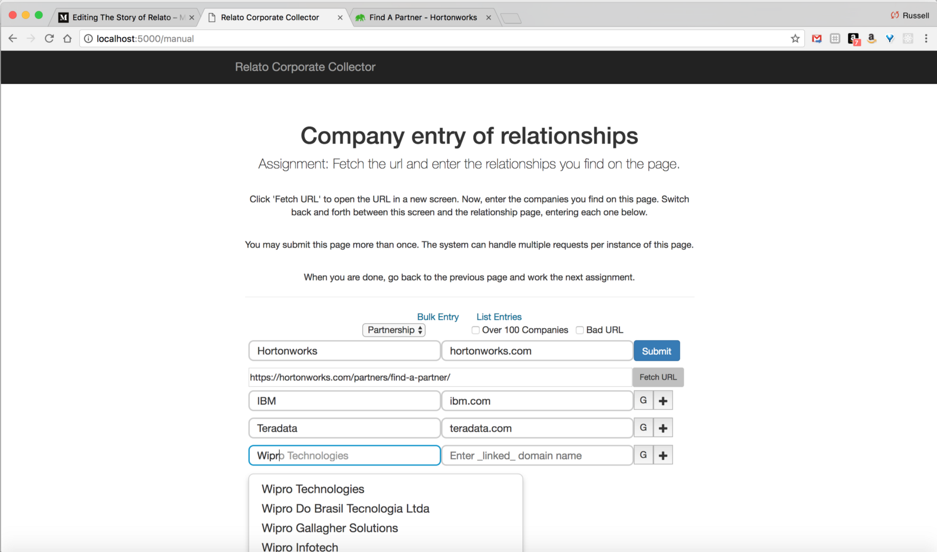

Most data scientists use application programming interfaces (APIs) like Amazon’s Mechanical Turk to automate delegating data processing tasks to humans. Rather than using an API, I built a data collection web application that was used by people I actually got to know: “mechanical Turks” I hired on a website called UpWork. They would transcribe partnership pages and enter them into a database using an application that did things like autocomplete company names to ensure the data was clean and consistent. If a page had thousands of partnerships, I automated the collection process using utilities I developed to extract the names and domains of companies.
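To make the autocomplete idea concrete, here is a minimal Python sketch of prefix matching against a list of canonical company names. It is not Relato's actual code: the function names `build_index` and `autocomplete` are hypothetical, and the sample list simply reuses the four companies named earlier in this post.

```python
from bisect import bisect_left

def build_index(names):
    """Return (lowercased key, canonical name) pairs sorted by key."""
    return sorted((name.lower(), name) for name in set(names))

def autocomplete(index, prefix, limit=5):
    """Return up to `limit` canonical names that start with `prefix` (case-insensitive)."""
    key = prefix.strip().lower()
    if not key:
        return []
    # Binary search for the first entry whose key is >= the prefix.
    start = bisect_left(index, (key,))
    matches = []
    for lowered, canonical in index[start:]:
        if not lowered.startswith(key):
            break  # sorted order means no later entry can match
        matches.append(canonical)
        if len(matches) == limit:
            break
    return matches

# Sample names taken from the companies mentioned in this post.
companies = ["Hortonworks", "Cloudera", "MapR", "Pivotal"]
index = build_index(companies)
print(autocomplete(index, "clo"))
```

Offering the canonical spelling as the worker types means every record for a company ends up under one name, rather than under several spelling variants that would later have to be merged.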

The system worked beautifully, and the workers became good at their jobs. I would spot check their work, offering corrections. Instead, they often corrected me! There is truly a wealth of talent available from underemployed individuals in areas of the world with less opportunity than we enjoy in the Bay Area. They are talented and hardworking, and you can get great work humanely—if you treat them with the respect they deserve—rather than as human computers at the other end of an API.

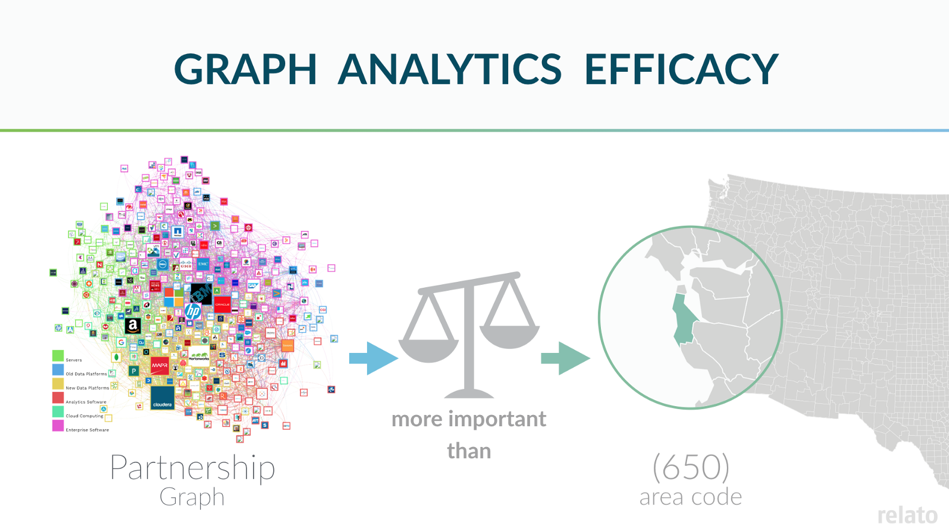

Data in hand, I developed algorithms using a graph database to calculate metrics describing the way companies worked together. I started by basing Relato’s lead scoring system on data from several commercial APIs to ensure my model had everything my competitors used. Then I added to the model the features I derived from the partnership graph. It worked! The accuracy of the model increased dramatically. I knew I was on to something when the model indicated that partnership centrality, a graph metric, was by far the most important feature determining lead scoring accuracy. It was even more important than the 650 area code (Silicon Valley) for a company selling to technology startups! Relato was off to a good start, algorithmically at least. This became a slide in our pitch deck (see Figure 4).
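The post does not say which centrality measure was used, so the following Python sketch assumes the simplest one, degree centrality: the share of other companies in the graph that a company partners with. The function name and the edge list are hypothetical placeholders, not Relato data.

```python
from collections import defaultdict

def degree_centrality(edges):
    """Map each company to (number of distinct partners) / (n - 1)."""
    neighbors = defaultdict(set)
    for a, b in edges:
        if a == b:
            continue  # ignore self-loops
        neighbors[a].add(b)
        neighbors[b].add(a)
    n = len(neighbors)
    if n < 2:
        return {company: 0.0 for company in neighbors}
    return {company: len(partners) / (n - 1)
            for company, partners in neighbors.items()}

# Hypothetical partnership edges; the names are placeholders, not real data.
edges = [
    ("VendorA", "PartnerX"),
    ("VendorA", "PartnerY"),
    ("VendorA", "PartnerZ"),
    ("VendorB", "PartnerX"),
]
scores = degree_centrality(edges)
for company, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(f"{company}: {score:.2f}")
```

A score like this can be appended to each company's feature vector alongside the firmographic fields, which is how a graph-derived feature would enter a lead scoring model.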

Lead generation: Minting hot leads

Relato built two things: a lead generation system for B2B sales, and MarketMaps, a business graph visualization tool. A lead scoring system is just a predictive model for inbound business contacts, scoring them by how likely they are to become customers. A lead generation system adds a database already populated with a “universe” full of contacts, plus a lot of plumbing to shuffle the data through the system, through the algorithms and back out to the customer. In goes a current customer list, out go recommended leads.

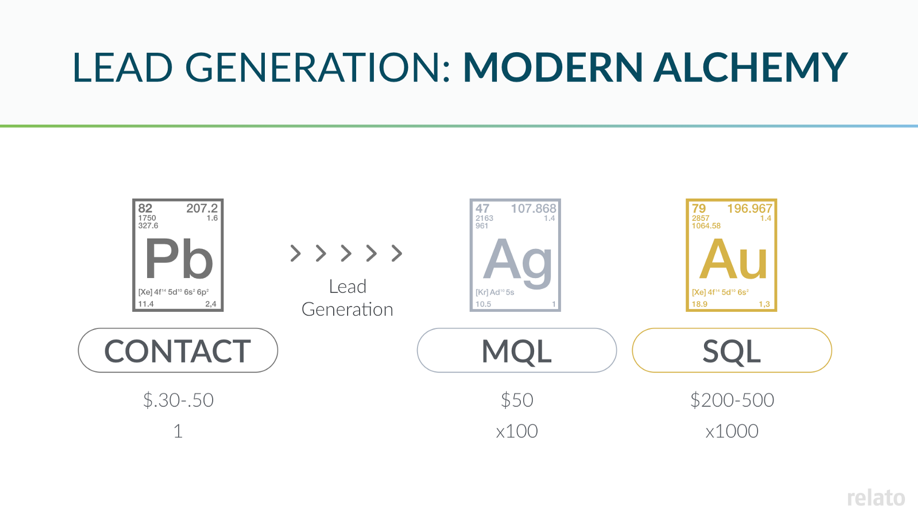

The idea of algorithmic lead generation is to take a database of business contacts representing the entire world of business and select those few “hot leads” for a specific company that will convert into real customers. In the process, this generates a ton of value. If you can pull this off, it’s a good business model—see Figure 5, which describes the price amplification as contacts travel through the marketing funnel on their way to becoming sales. Contacts sell for a fraction of a dollar. Good leads sell for $50 to as much as $500. That makes a lot of room for profit!

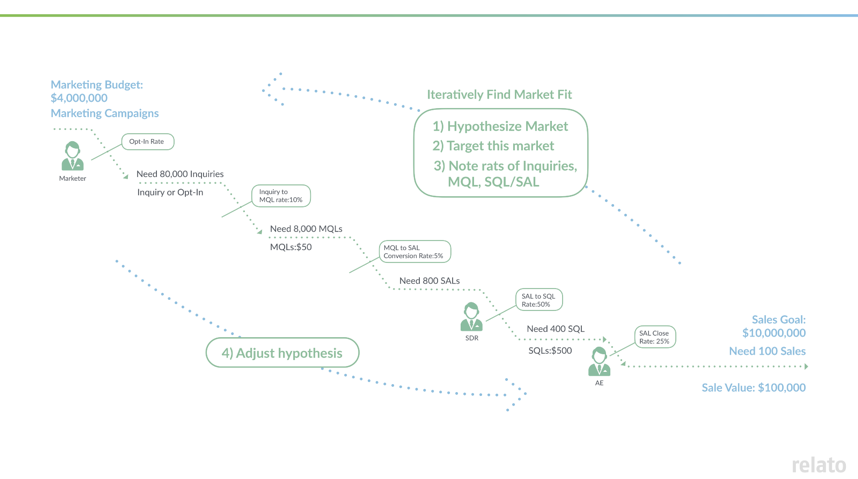

Figure 6 shows the marketing funnel itself for a business-to-business (B2B) company with a $4 million marketing budget. At the top of the funnel, a person’s contact details sell for a fraction of a dollar. The sale of lead lists is still a thriving market, although the accuracy of the contact information varies greatly.

It takes many leads in the top of the funnel to result in one sale at the bottom. In this case, we’ll need 80,000 responses from contacts to meet our revenue goal. A response would be opening or clicking on a link in an email or coming to the website from a web search.

The next step in the funnel is a marketing qualified lead (MQL). A lead becomes an MQL when an analysis of the lead’s behavior determines the lead is likely enough to buy that they merit attention from a real person. MQLs for enterprise companies go for about $50 apiece. Marketo and other marketing automation systems calculate a lead score based on a lead’s behavior, such as when they interact with your website (10 points!) or download a white paper (50 points!). When the score becomes high enough, contact is made by a lead qualifier. A lead qualifier is a junior salesperson who makes initial sales contacts by phone and email. The problem with marketing automation systems is that the scoring algorithm is usually arbitrary. As a result, there is widespread dissatisfaction with the results generated by the large investments enterprises have made in marketing automation over the last decade. This is what drives the lead scoring market.
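The rule-based scoring described above can be sketched in a few lines of Python. The point values (10 and 50) come from the text. The event labels and the threshold of 100 are assumptions made for illustration, and this is not how Marketo or any particular product implements scoring.

```python
# Point values from the text; the event names are hypothetical labels.
POINTS = {"website_visit": 10, "white_paper_download": 50}
MQL_THRESHOLD = 100  # assumed cutoff; the article does not give one

def lead_score(events):
    """Sum the points for each recorded behavior; unknown events score zero."""
    return sum(POINTS.get(event, 0) for event in events)

def is_mql(events, threshold=MQL_THRESHOLD):
    """A lead is handed to a lead qualifier once its score reaches the threshold."""
    return lead_score(events) >= threshold

events = [
    "website_visit",
    "website_visit",
    "white_paper_download",
    "white_paper_download",
]
print(lead_score(events), is_mql(events))
```

The arbitrariness is visible in the code: nothing ties the numbers in `POINTS` or the threshold to observed sales outcomes.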

Predictive lead scoring systems substitute machine learning for manual behavioral analysis to optimize the lead scoring algorithm. They replace arbitrary lead scores with artificial intelligence. Lead scoring systems then become a black box within the marketing funnel that uses past results (sales) to score future leads. Firmographic data, meaning data that characterizes a business, is limited, so behavior is the primary training data for these systems, which work better than marketing automation alone. These systems deliver a return on investment (ROI), but so far this has turned out to be an incremental improvement that has not revolutionized sales and marketing.

The next step in the marketing funnel is a sales qualified lead (SQL). A lead becomes an SQL when a live, in-person salesperson has spoken to the person behind the potential lead, and through conversation has determined they are a good fit for the company’s product. Top salespeople are fed SQLs from junior salespeople and they use their extensive skills to build relationships and close deals. The lead funnel has side branches: a good senior salesperson will also do his or her own lead generation, often bringing a rolodex from job to job, selling different products to the same contacts over and over.

For B2B sales, it is necessary to pour a lot of contacts (200 in our slide in Figure 6) into the sales and marketing funnel to generate a single sale. Most leads fail to mature to the next level at each level of the funnel. Relato’s mission was ambitious: to skip levels in the sales and marketing funnel, in order to mint contacts directly into leads every bit as good as sales qualified leads. Many other lead generation companies have attempted this task, but none that I could find used anything like Relato’s business graph to do so.
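The arithmetic behind "many contacts per sale" is a product of per-stage conversion rates. The Python sketch below uses hypothetical rates, chosen only so that they multiply out to the 200 contacts per sale quoted above. The actual stage-by-stage rates from Figure 6 are not given in the text.

```python
from fractions import Fraction
from math import ceil, prod

def contacts_per_sale(stage_rates):
    """Contacts needed at the top of the funnel to yield one sale."""
    overall = prod(stage_rates)  # probability a contact survives every stage
    return ceil(1 / overall)

# Hypothetical conversion rates, picked so the product is 1/200.
rates = {
    "contact_to_mql": Fraction(1, 10),
    "mql_to_sql": Fraction(1, 4),
    "sql_to_sale": Fraction(1, 5),
}
print(contacts_per_sale(rates.values()))

# Skipping a level, in this model, means starting with contacts that are
# already as good as SQLs, so only the final stage's rate applies.
print(contacts_per_sale([rates["sql_to_sale"]]))
```

Under these assumed rates, the first call gives 200 contacts per sale and the second gives 5, which shows why skipping funnel levels is such an attractive goal.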

In the next post, we’ll talk more about the products I built at Relato, and where I went wrong in steering the business.