Assumptions have a powerful effect on a product’s outcome

How the Hypothesis Progression Framework and Customer-Driven Cadence can help mitigate assumptions and guide you through customer and product development.

The labyrinth. (source: PublicDomainPictures.net)

In the summer of 2000, General Motors, an American car manufacturer, introduced the Pontiac Aztek, a radically new “crossover” vehicle—part sedan, part minivan, and part sports utility vehicle (see Figure 1). It was marketed as the do-it-all vehicle for 30-somethings. It was the car for people who enjoyed the outdoors, people with an “active lifestyle” and “none to one child.”

On paper, the Aztek appeared to be fully featured. It had a myriad of upgrades that included options for bike racks, a tent with an inflatable mattress, and an onboard air compressor. GM even included an option for an insulated cooler, to store beverages and cold items, between the passenger and driver seat. Their ideal customer was someone who would use the Aztek for everything from picking up groceries to camping out in the wilderness.

The Aztek had a polarizing visual aesthetic: many either loved or hated it (most hated it). Critics found its features, like the optional tent and cooler, awkward and downright gimmicky. GM insisted these were revolutionary ideas and suggested they were ahead of their time. They believed that, once customers took the Aztek for a test drive, they would quickly realize just what they were missing.

After a $30 million marketing push, it appeared the critics were right. The Aztek failed to make even a modest dent in the overall market. The year the Aztek was released, the American auto industry sold 17.4 million vehicles. The Aztek accounted for only 11,000 of those vehicles, roughly 0.06 percent of the total (a number that some believed was still generously padded).

To customers, the Aztek seemed to get in its own way. It was pushing an agenda by trying to convince customers how they should use their vehicles, rather than responding to how they wanted to use them.

It’s easy to point at this example in hindsight and ask, “How could GM spend so much time, money, and resources only to produce a car no one wanted?” Some suggested it was because the car was “designed by committee” or that it was a good idea with poor execution. Insiders blamed the “penny pinchers” for insisting on cost-saving measures that ultimately produced a hampered product that wasn’t at all consistent with the original vision.

The lead designer of the Aztek, Tom Peters, went on to create many successful designs, like the C6 Chevy Corvette and 2014 Camaro Z/28, and eventually won a lifetime achievement award. He suggested the poor design of the Aztek started with the team asking themselves, “What would happen if we put a Camaro and an S10 truck in a blender?”

The reality is that it was all of these reasons. Even though it appeared, at the time, that GM was “being innovative,” they had forgotten the most crucial element: the customer. They had fallen in love with a concept and tried to find a customer who would want it.

They were running focus groups and also doing their own market research. They probably even created personas or some variant of the “ideal customer” that was perfect for the Aztek. GM believed they were being customer-focused. Yet they weren’t paying attention to the right signals. They had respondents in focus groups saying, “Can they possibly be serious with this thing? I wouldn’t take it as a gift!”

While we can commend GM for trying to push the boundaries of the auto industry, we must admit that by not validating their assumptions and listening to their customers, they had created a solution in search of a problem.

We make assumptions about everything. It’s a way for us to make meaning of what we understand based on our prior beliefs. However, our assumptions aren’t always grounded in fact. They may come from “tribal knowledge,” experience, or conventional wisdom. These sources start with a kernel of truth, which makes them feel real, but too often we mistake assumptions for facts.

This is not to say that assumptions are a bad thing. They can be incredibly useful in tapping into our intuition. It’s when our assumptions go unchecked that we open ourselves to vulnerabilities in our design.

Unchecked assumptions can have a powerfully negative effect on our products, because they cause us to:

- Miss new opportunities or emerging market trends

- Make costly engineering mistakes by creating products that nobody will use

- Create technical debt by supporting features that customers aren’t using

- Respond to problems too late

What’s most dangerous about unchecked assumptions is that they become conventional wisdom and are carried so long that they create a false sense of security. Then a competitor swoops in with a better understanding of the customer and quickly takes over the entire market.

Henry Petroski, a professor at Duke University and expert in failure analysis, once said, “All conventional wisdom has an element of truth to it, but good design requires more than an element of truth—it requires an ensemble of correct assumptions and valid calculations.”

Teams therefore take on a high level of risk when they move forward with underlying assumptions that haven’t been formulated, tested, and validated.

What is the Hypothesis Progression Framework?

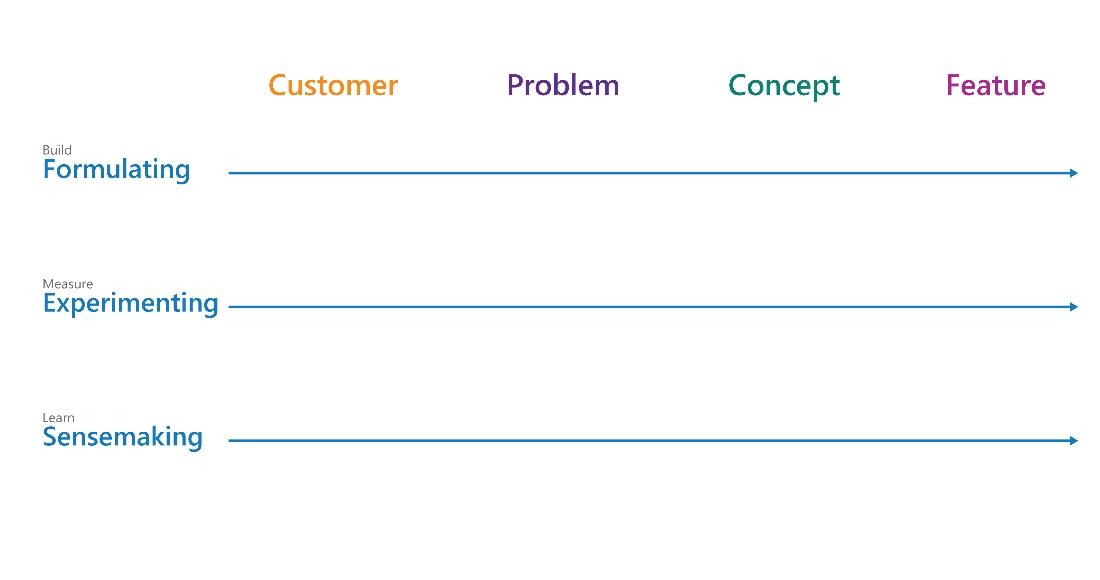

The Hypothesis Progression Framework (HPF) allows you to test your assumptions at any stage of the development process. At its heart, the HPF breaks up the development of products into four stages: Customer, Problem, Concept, and Feature (Figure 2).

Using the HPF, your team will:

- Formulate your assumptions into testable hypotheses

- Validate or invalidate your hypotheses by running experiments

- Make sense of what you’ve learned so you can plan your next move

As the name suggests, the HPF is founded on the principle that if you state your assumptions as hypotheses and try to validate them, you will remain objective and focused on what the customer is telling you rather than supporting unconfirmed assumptions.
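One way to picture this principle is to keep each assumption as an explicit record that names its HPF stage and whether an experiment has tested it yet. The snippet below is only a minimal sketch: the `Hypothesis`, `Stage`, and `Status` names are hypothetical, and the sample statements are illustrative ones drawn from the Aztek story, not artifacts from GM or from the framework itself.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    """The four HPF stages named in the text."""
    CUSTOMER = "Customer"
    PROBLEM = "Problem"
    CONCEPT = "Concept"
    FEATURE = "Feature"


class Status(Enum):
    """Hypothetical labels for where a hypothesis stands."""
    UNTESTED = "untested"
    VALIDATED = "validated"
    INVALIDATED = "invalidated"


@dataclass
class Hypothesis:
    stage: Stage
    statement: str
    status: Status = Status.UNTESTED


def untested(hypotheses):
    """Return the hypotheses that no experiment has tested yet."""
    return [h for h in hypotheses if h.status is Status.UNTESTED]


# Illustrative backlog; the statements are invented for this example.
backlog = [
    Hypothesis(Stage.CUSTOMER, "Active 30-somethings want one do-it-all vehicle."),
    Hypothesis(Stage.PROBLEM, "Packing a separate tent is a real frustration."),
    Hypothesis(Stage.CONCEPT, "A car that converts into a tent is valuable."),
]

# Suppose customer interviews contradicted the Concept hypothesis.
backlog[2].status = Status.INVALIDATED

for h in untested(backlog):
    print(f"[{h.stage.value}] {h.statement}")
```

Listing what is still untested makes it harder for an assumption to quietly become conventional wisdom.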

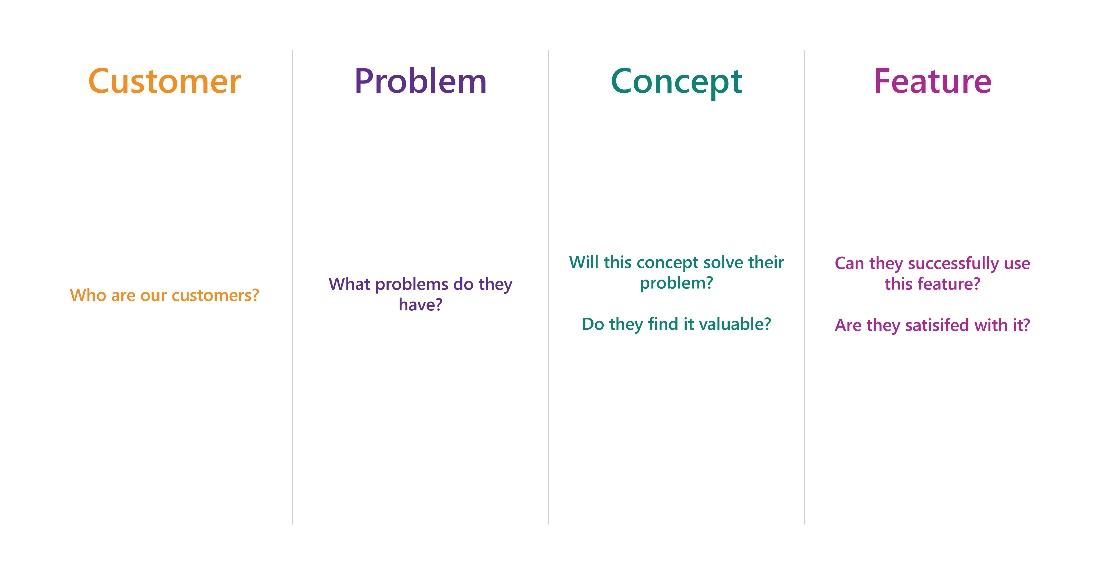

For now, understand that the stages in the HPF work together to address these fundamental questions (Figure 3):

Who are your customers?

When we sit down with teams, we will often hear something to the effect of, “Oh, we know who our customers are. That’s not our problem.” Then we’ll ask questions like:

- What environments do your customers live/work in?

- Why do they choose your product over your competitors’?

- What are they trying to achieve with your products?

- What unique attributes make your customers different from one another?

You may (or may not) be surprised how many teams have difficulty answering these types of questions.

Customer engagement is not customer development. It’s one thing to engage customers by having an ongoing dialog using social networks, support forums, and the like. That’s great. However, it’s another thing entirely to systematically learn from your customers and generate actionable insights.

Your customers are not in a fixed position. Their values and tastes change over time. Therefore, you must be willing to journey with them and continually refine your products to remain one step ahead of where they plan to go.

What problems do they have?

At times, we get so enamored and focused on our solution that we need to step back and ask ourselves, “How many people are really experiencing this problem?” or “How much of a frustration is this problem for our customers?” If GM had been willing to ask their customers, “How valuable is it to have your car convert into a tent?” they would have learned that the tent was not solving a necessary problem for most of their customers. We must appreciate that, to create successful products, it’s more than solving a problem—it’s a matter of solving the right problem.

Will this concept solve their problem and do they find it valuable?

There are many ways to solve a problem, but how can you be confident you’re solving it the right way? Are you sure that customers value the way you’re trying to solve the problem, or are you introducing new problems you hadn’t considered?

During the Concept stage, you’re trying to ensure that you’re solving the customer’s problem in a way they find valuable.

You want to leverage your customers’ feedback and continually confirm that your ideas are on the right track. You’ll establish the benefits of your concept (as well as its limitations) and increase your confidence that you’re building something customers want.

Can they successfully use this feature and are they satisfied with it?

We’ve all been excited for a product release only to be disappointed later because it didn’t deliver on its promises. Throughout the design and development process, you must ensure your concept works as expected and is successful in helping customers solve their problems. While the Concept stage ensures you are building the right thing, the Feature stage ensures you are building it the right way.

By using the HPF as a guide, your team will remain customer-focused as it progresses through customer and product development. Together, these stages represent your entire solution. It’s important to note that the HPF doesn’t necessarily need to be worked from left to right; it can be started at any stage. Depending on where you are in your product’s development, you may decide to start at the Concept stage or Problem stage. However, you may start at a later stage in the HPF only to discover that you need to answer fundamental questions in the earlier stages. We’ve had teams come to us, ready to conduct usability testing on a feature, only to realize they didn’t truly understand the customer or problem. That’s what makes the HPF so profound: it allows teams to easily understand the stages that need to be validated to ship a successful product.

As we’ve discussed, your customers’ needs evolve over time, and you must be willing to revisit your assumptions about your solution to ensure it’s meeting the right customer, solving the right problems, creating value, and making your customers successful.

The Customer-Driven Cadence

To remain Lean and customer-focused, teams must operate in a pattern of continuous learning and collaboration. The HPF has been designed to be used in parallel to your product development sprints or schedules. In short, you should be using the HPF while you’re building and refining your products.

In Eric Ries’s Lean Startup method, he calls for startups to engage in a “build, measure, learn” loop that accelerates learning and customer response.

We’ve found tremendous success with this approach and have refined it to align more closely with our framework and activities in our playbooks. The Customer-Driven Cadence has three fundamental actions that you’ll employ during each stage of the HPF: Formulating, Experimenting, and Sensemaking (Figure 4). Let’s look at each of these individually:

Formulating (a.k.a. Build)

Throughout the entire customer-driven process, from customer to product development, you’ll be formulating your assumptions, ideas, and hypotheses. This is an important practice because you will need to track the team’s learning along the way. Ries refers to these key decision points as moments where a team needs to “pivot” (change direction) or “persevere” (continue the course).

By continually formulating your assumptions and stating them as hypotheses, your team will create a structure that allows you to easily track your assumptions about the customer, their problems, and your ideas of how to respond to those problems. Finally, you’ll need to formulate a Discussion Guide. The Discussion Guide is the set of questions you’ll ask customers in order to prove/disprove your assumptions.

Experimenting (a.k.a. Measure)

Each stage of the HPF has activities where you need to test whether your assumptions were correct. By continually running experiments against your hypotheses, you’ll have the data you need to decide whether to pivot or persevere with your product’s direction. While there are many methods you can employ to test your hypotheses, our book heavily emphasizes talking directly with customers.

Sensemaking (a.k.a. Learn)

The customer-driven approach relies on your ability to continually collect and make sense out of customer data. So often, teams fall into a cycle of “build, measure, build, measure, build, measure” and miss the overall learning or broad understanding of their customer. We’ve provided an activity in each of our playbooks that allows your team to pause and reflect on what you’ve all learned so you can make informed decisions about where to go next.
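The three actions of the cadence can be sketched as one small loop. This is a rough illustration under stated assumptions, not the authors' method: the function names, the "We believe that" phrasing, the sample interview responses, and the 50 percent support threshold are all hypothetical placeholders. The framework as described here does not prescribe a numeric decision rule.

```python
from collections import Counter


def formulate(assumption):
    """Formulating: restate an assumption as a testable hypothesis."""
    return f"We believe that {assumption}."


def experiment(responses):
    """Experimenting: tally interview answers (True = supports the hypothesis)."""
    return Counter(responses)


def make_sense(tally, threshold=0.5):
    """Sensemaking: turn the tally into a pivot-or-persevere call.

    The threshold is an assumed placeholder, not a rule from the framework.
    """
    total = tally[True] + tally[False]
    if total == 0:
        return "keep experimenting"  # nothing learned yet
    return "persevere" if tally[True] / total >= threshold else "pivot"


hypothesis = formulate("customers want their car to convert into a tent")
# Invented sample data: one of five interviewees supported the hypothesis.
tally = experiment([False, False, True, False, False])
decision = make_sense(tally)
print(hypothesis, "->", decision)
```

Keeping sensemaking as its own step, separate from the tally, mirrors the point above: collecting measurements is not the same as pausing to decide what they mean.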