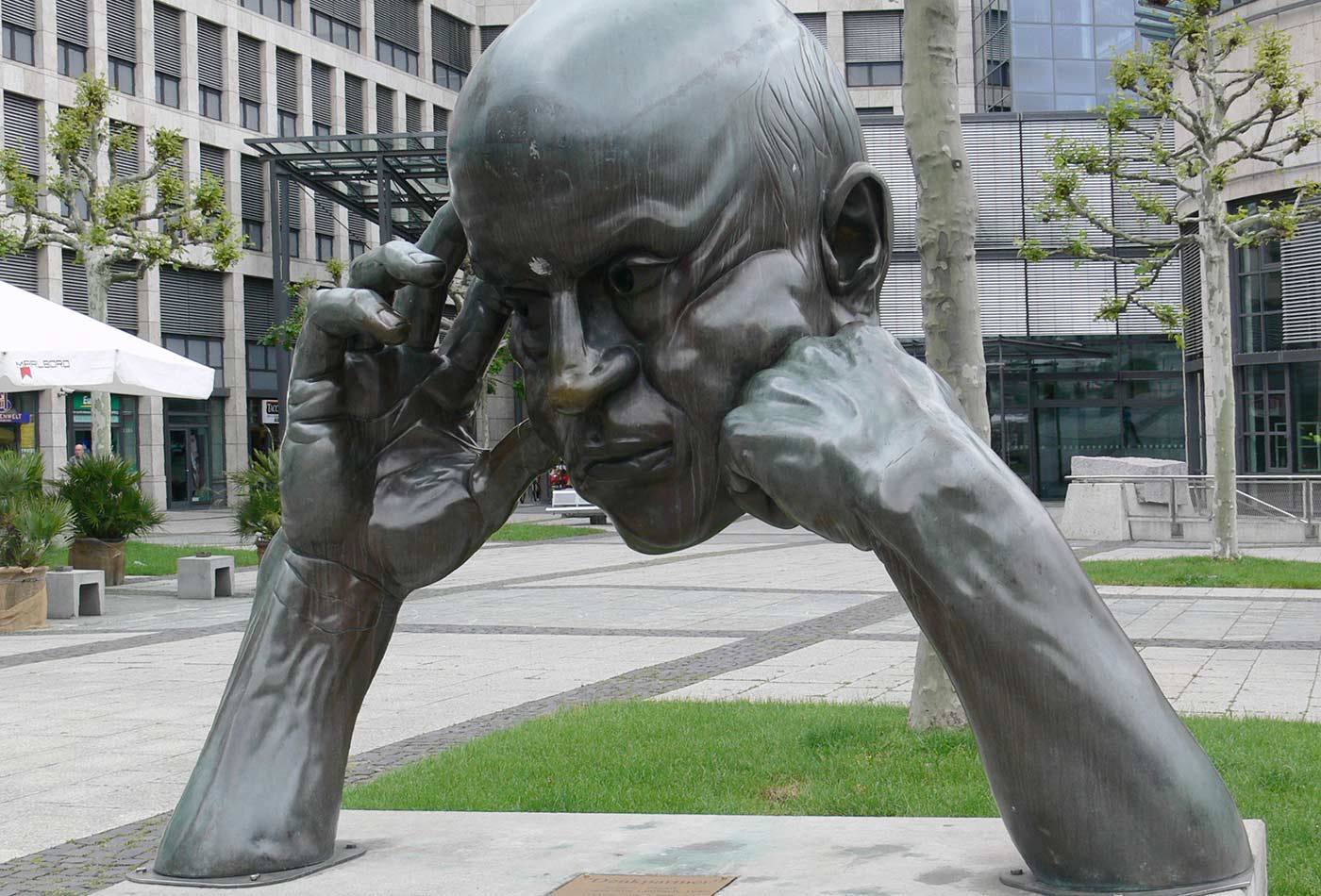

Bronze sculpture "Denkpartner" by Hans-Jörg Limbach, 1980. (source: Andreas Praefcke on Wikimedia Commons)

While I was writing my post about artificial intelligence and aggression, an odd thought occurred to me. When we discuss the ethics of artificial intelligence, we’re typically discussing the act of creating (programming, training, and deploying) AI systems. But there’s another way to look at this. What kind of ethics is amenable to computation? Can ethical decision-making be computable?

I wasn’t quite serious when I wrote about AIs deriving Asimov’s Laws of Robotics from first principles—I really doubt that’s possible. In particular, I doubt that AIs will ever be able to “learn” the difference between humans and other things: dogs, rocks, or, for that matter, computer-generated images of humans. There’s a big difference between shooting a human and shooting an image of a human in Overwatch. How is a poor AI, which only sees patterns of bits, supposed to tell the difference?

But for the sake of argument and fantasy, let’s engage in a thought experiment where we assume that AIs can tell the difference. And that the AI has unlimited computational power and memory. After all, Asimov was writing about precisely predicting human actions centuries in the future based on data and statistics. Big data indeed—and any computers he knew were infinitesimally small compared to ours. So, with computers that have more computational power than we can imagine today: is it possible to imagine an AI that can compute ethics?

Jeremy Bentham summarized his moral philosophy, which came to be known as utilitarianism, by saying “the greatest happiness of the greatest number is the foundation of morals and legislation.” That sounds like an optimization problem: figure out a utility function, some sort of computational version of Maslow’s hierarchy of needs. Apply it to every person in the world, figure out how to combine the results, and optimize: use gradient ascent to find the maximum total utility. Many problems would need solutions along the way: what is that utility function in the first place, how do you know you have the right one, is the utility function the same for every person, and how do you combine the results? Is it simply additive? And there are problems with utilitarianism itself. It’s been used to justify eugenics, ethnic cleansing, and all sorts of abominations: “the world would really be a better place without …” Formally, this could be viewed simply as choosing the wrong utility function. But the problem isn’t that simple: how do you know you have the right utility function, particularly if it can vary from person to person? So, utilitarianism might, in theory, be computable, but choosing appropriate utility functions would be subject to all sorts of prejudices and biases.
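To make the optimization framing concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the policy names, the utility numbers, and the two aggregation rules are invented, and it uses a brute-force search over two options rather than gradient ascent. It shows only that the "best" policy depends on how individual utilities are combined.

```python
from typing import Callable, Dict, List

# Hypothetical data: each person's utility under each policy (made-up numbers).
UTILITIES: Dict[str, List[float]] = {
    "policy_a": [5.0, 5.0, 5.0],     # everyone is moderately well off
    "policy_b": [12.0, 12.0, -6.0],  # two people gain a lot, one is harmed
}


def total_utility(values: List[float]) -> float:
    """Simple additive aggregation: the sum of everyone's utility."""
    return sum(values)


def worst_off(values: List[float]) -> float:
    """Alternative aggregation: judge a policy by its worst-off person."""
    return min(values)


def best_policy(
    utilities: Dict[str, List[float]],
    aggregate: Callable[[List[float]], float],
) -> str:
    """Return the policy name with the highest aggregated utility."""
    return max(utilities, key=lambda name: aggregate(utilities[name]))


print(best_policy(UTILITIES, total_utility))  # policy_b (18 beats 15)
print(best_policy(UTILITIES, worst_off))      # policy_a (5 beats -6)
```

Swapping one aggregation rule for another flips the answer, which is the point: the arithmetic is trivial, and the hard part is deciding which function deserves to be maximized.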

Immanuel Kant’s ethics might also be computable. The primary idea, “act according to the principle which you would want to be a universal law” (the “categorical imperative”), can also be rephrased as an optimization problem. If I want to eat ice cream every day, I should first think about whether the world would be a tolerable place if everyone ate ice cream every day. It probably would (though that might conceivably push us up against some boundary conditions in the milk supply). If I want to dump my industrial waste on my neighbors’ lawns, I have to think about whether the world would be acceptable if everyone did that. It probably wouldn’t be. Computationally, this isn’t all that different from utilitarianism: I have to apply my rule to everyone, sum the results, and decide whether they’re acceptable. Instead of finding a maximum, we’re avoiding a minimum, and computationally, that’s just about the same thing. Kant would insist on something much more than avoiding a minimum, but I’ll let that go. Kant would also insist that all rational actors would reach the same conclusion, which also makes it sound like a computational problem. If two programs use the same algorithm on the same data, they should get the same result. The difficulty would again be in finding the utility function. What are the global consequences, both positive and negative, of eating ice cream? Of dumping industrial waste? Like the utilitarian’s utility function, this utility function would also be prone to prejudices and biases, both human and statistical.
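The "apply my rule to everyone, sum the results, and decide" reading can be sketched in a few lines of Python. This is a deliberately crude caricature, not Kant: the per-person impact numbers, the population size, and the tolerability floor are all hypothetical inputs that someone has to supply from outside.

```python
def universalize(per_capita_impact: float, population: int) -> float:
    """Total impact on the world if every person follows the rule."""
    return per_capita_impact * population


def is_permissible(
    per_capita_impact: float, population: int, tolerable_floor: float
) -> bool:
    """A rule passes if the universalized world stays above the floor."""
    return universalize(per_capita_impact, population) >= tolerable_floor


# Hypothetical numbers: a small strain on the milk supply per ice cream eater,
# a large harm per person who dumps waste on the neighbors' lawns.
POPULATION = 1000
FLOOR = -100.0  # assumed threshold below which the world is "intolerable"

print(is_permissible(-0.001, POPULATION, FLOOR))  # True: daily ice cream
print(is_permissible(-5.0, POPULATION, FLOOR))    # False: dumping waste
```

The function is deterministic, so any two "rational actors" running it on the same inputs agree. All of the disagreement hides in the impact estimates and the floor.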

You see a different optimization problem in Aristotle’s ethics. One of Aristotle’s principles is that the good is always situated between two extremes. When you see injustice, you ought to be angry, but not so angry that you lose control. It’s equally unethical to have no anger, not to care about injustice. So, ethical action is a matter of dynamic balance, finding the appropriate operating point between unacceptable extremes. Maintaining that balance sounds a lot like control theory to me. Although Aristotle doesn’t have the language to say this, it’s a feedback loop. It’s learned, it comes from experience, it’s something people can get better at. But Aristotle has surprisingly little to say about the virtues on which ethical behavior is based. He assumes that he’s speaking to someone who already understands what these mean, and wants to get better at living a good life. Computationally, that doesn’t help. Humans can observe that acting justly satisfies them in a way that acting unjustly doesn’t, and use this satisfaction as a signal that helps them learn how to lead a good life. It’s not clear what satisfaction or pleasure would mean to an algorithm, and if we don’t know what we’re optimizing, we’re not likely to achieve it. To an algorithm, this initial sense of right, of virtue or duty, is an input from outside the system, not something derived by the system.
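The control-theory reading can be illustrated with a minimal proportional feedback loop in Python. The 0-to-1 "anger" scale, the gain, and the set point of 0.6 are assumptions invented for this sketch. Note in particular that the set point is passed in as an argument: the loop can hold a balance, but it can't say where the balance ought to be.

```python
def regulate(
    level: float, set_point: float, gain: float = 0.5, steps: int = 20
) -> float:
    """Nudge `level` toward `set_point` with simple proportional feedback."""
    for _ in range(steps):
        error = set_point - level  # how far we are from the target
        level += gain * error      # correct a fraction of the error
    return level


# Hypothetical scale: 0.0 is no anger at all, 1.0 is uncontrolled rage.
APPROPRIATE_ANGER = 0.6  # assumed target, supplied from outside the loop

from_indifference = regulate(0.0, APPROPRIATE_ANGER)
from_rage = regulate(1.0, APPROPRIATE_ANGER)
print(from_indifference, from_rage)  # both end up very close to 0.6
```

Starting from either extreme, the loop settles near the same operating point. Choosing that point is the part the algorithm doesn't do.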

Maybe there’s a fourth approach. Are we limiting ourselves by casting computational ethics in terms defined by the history of philosophy? Maybe we can treat the whole world as a gigantic optimization problem and build an ethical software platform that learns its way toward ethics by observing actions and their outcomes, and tries to maximize total good. But that still raises the question: what are we optimizing? What’s the utility function? Are we observing smiles and gestures, and interpreting them as signs of positive or negative outcomes? And what about long-range consequences? If we count them, what sort of time frame are we looking at? To what extent are we willing to trade off short-term cost against long-term benefit? This is essentially utilitarian, in that we’re solving for the “greatest good for the greatest number,” so it’s not surprising that we end up with the same problem. Even if we can use AI to distinguish good outcomes from bad outcomes, we still need some kind of function that tells how to value those outcomes. If a present good has bad consequences 10 years out, do we care? What if it’s 100 years? 1,000 years? That’s not a decision artificial intelligence can make for us.
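One common way to formalize the time-frame question is exponential discounting, sketched below in Python. The outcome values and the two discount factors are made up for illustration. The sketch only shows that the same action looks good or bad depending on how heavily the future is weighted.

```python
from typing import Dict


def discounted_value(outcomes: Dict[int, float], discount: float) -> float:
    """Sum outcomes, weighting each by discount**years_from_now."""
    return sum(value * discount**year for year, value in outcomes.items())


# Hypothetical action: a benefit today, a larger harm ten years out.
OUTCOMES = {0: 10.0, 10: -30.0}

short_sighted = discounted_value(OUTCOMES, 0.8)   # future counts for little
far_sighted = discounted_value(OUTCOMES, 0.99)    # future counts almost fully
print(short_sighted > 0)  # True: the action looks good
print(far_sighted > 0)    # False: the same action looks bad
```

Nothing in the code says which discount factor is right. That choice is a value judgment that has to be made before the computation starts.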

We could go a step further by eliminating global optimization altogether. That approach turns our problem into finding a Nash equilibrium: you find everyone’s preference function and evaluate the individual preference functions under all possible courses of action. Then you pick the action for each individual so that no individual can do better without convincing another player to change his or her action. The problem is that a Nash equilibrium isn’t guaranteed to be optimal for the group, only stable for each individual. There is no such thing as cooperation; you can’t go to the other players and say “if we work together, we will all be better off.” At its worst, a Nash equilibrium represents the tragedy of the commons, rendered in mathematical terms.
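A tiny two-player commons game makes the gap between "stable" and "good" visible. The Python sketch below enumerates pure-strategy Nash equilibria by brute force. The action names and payoff numbers are invented for illustration and follow the usual prisoner's-dilemma pattern.

```python
from itertools import product
from typing import Dict, List, Tuple

ACTIONS = ("restrain", "overgraze")

# Hypothetical payoffs: (player A's payoff, player B's payoff).
PAYOFFS: Dict[Tuple[str, str], Tuple[int, int]] = {
    ("restrain", "restrain"): (3, 3),
    ("restrain", "overgraze"): (0, 5),
    ("overgraze", "restrain"): (5, 0),
    ("overgraze", "overgraze"): (1, 1),
}


def is_nash(profile: Tuple[str, str]) -> bool:
    """True if neither player gains by changing only their own action."""
    a, b = profile
    pay_a, pay_b = PAYOFFS[profile]
    a_cannot_improve = all(PAYOFFS[(alt, b)][0] <= pay_a for alt in ACTIONS)
    b_cannot_improve = all(PAYOFFS[(a, alt)][1] <= pay_b for alt in ACTIONS)
    return a_cannot_improve and b_cannot_improve


equilibria: List[Tuple[str, str]] = [
    p for p in product(ACTIONS, repeat=2) if is_nash(p)
]
print(equilibria)                          # [('overgraze', 'overgraze')]
print(sum(PAYOFFS[equilibria[0]]))         # 2: total payoff at equilibrium
print(sum(PAYOFFS[("restrain", "restrain")]))  # 6: total if both cooperate
```

With these payoffs, the only equilibrium is the one where both players overgraze, even though mutual restraint would leave each of them better off.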

It isn’t surprising that computational ethics looks like an optimization problem. Whether you’re human or an AI, ethics is about finding the good, deciding the best way to live your life. That includes the possibility that you will sacrifice your own interest for someone else’s good. If “living a good life” isn’t a difficult optimization problem, I don’t know what is. But I don’t see how ethics based on a priori considerations, like Aristotle’s ideas of duty or virtue, the Ten Commandments, or Asimov’s laws, could be computed. These are all external inputs to the system, whether handed down on stone tablets or learned and handed down through many generations of human experience. I doubt that an AI could derive the idea that it must not harm a human—if we want AIs to behave according to anyone’s laws, they’ll have to be built into the system, including systems that have the ability to write their own code.

Or maybe not. If our ethics arose over millennia of shared experience and “supervised learning,” could AIs do the same? Human learning is almost always supervised: we don’t, on our own, learn how to act toward other humans. And our traditions are ancient enough that they can easily appear to be imposed from outside the system, even if they were derived within it.

That notion of a priori law was precisely what Kant and utilitarians were trying to avoid: can ethics be developed from the world, as opposed to imposed on it? But looking at the computational problems I’ve posed, I see a similar problem. Both utilitarians and Kantians need some kind of utility function. And those functions are either imposed from the outside or (to stay computational) derived from some futuristic sort of deep learning. In the first case, they’re nothing more than the external laws we were trying to avoid, though possibly much more complex. In the latter case, where the functions are themselves the result of machine learning, they’re still subject to bias, in both the rigorous statistical sense, and the less rigorous (but ultimately more important) human sense of “fairness.” As Cathy O’Neil has observed, people will create the data sets they need to confirm their biases. Who watches those who create the data sets?

Don’t take this too seriously; I’m not really suggesting that anyone try to code up the Nicomachean Ethics or the Critique of Practical Reason. I’m skeptical of computational ethics, but skepticism comes easily. If you want to solve the problem of ethical computing, be my guest, but make sure you really solve it, and don’t just sneak a holocaust’s worth of human prejudices in through the back door.