Sequence-to-sequence machine learning

Training deep learning models to code solutions: An interview with Oriol Vinyals.

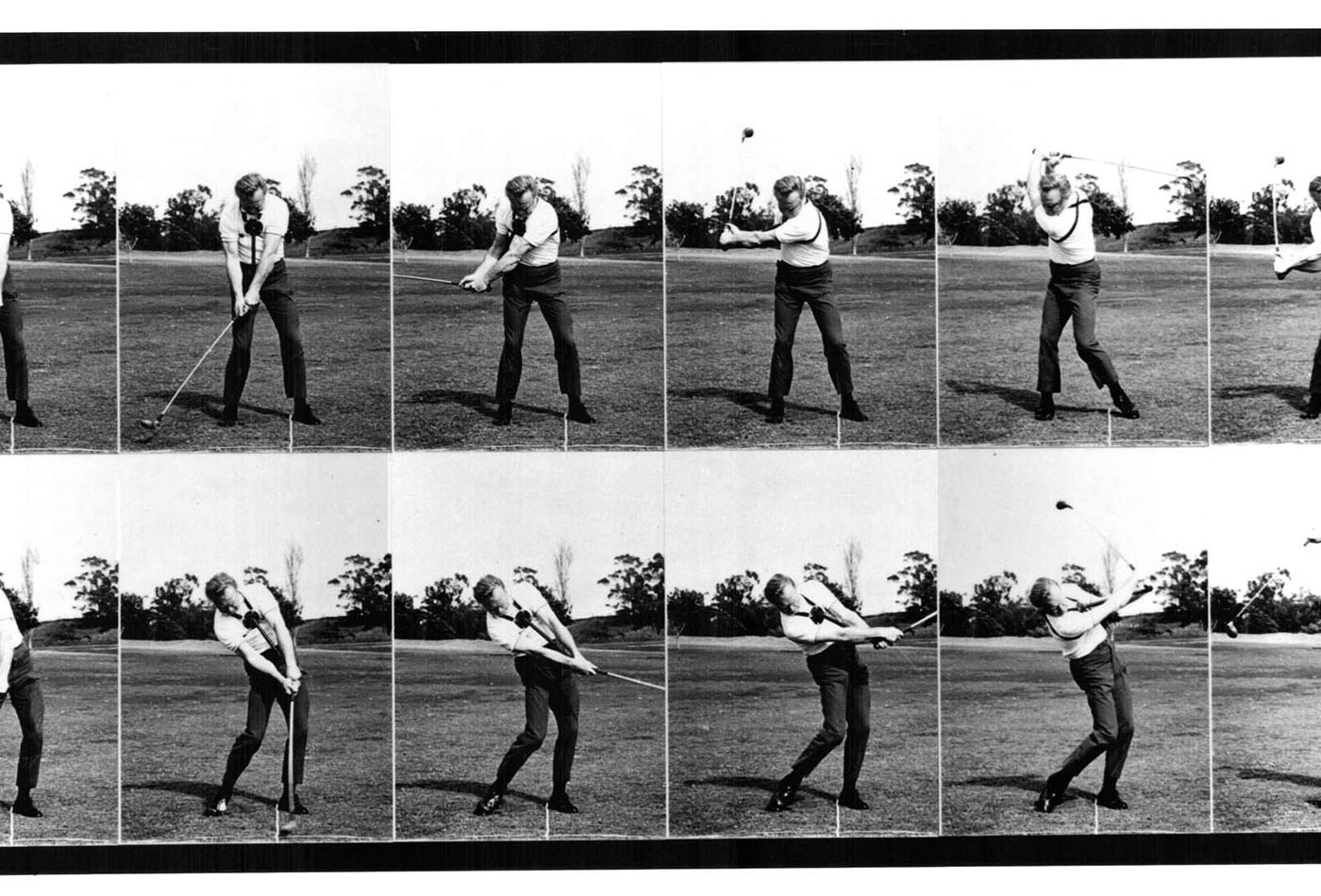

Mike Austin Sequence. (source: BlueDrew on Wikipedia)

Mike Austin Sequence. (source: BlueDrew on Wikipedia)

Oriol Vinyals is a research scientist at Google working on the DeepMind team by way of previous work with the Google Brain team. He holds a Ph.D. in EECS from University of California, Berkeley, and a master’s degree from University of California, San Diego.

Key takeaways

- Sequence-to-sequence learning using neural networks has delivered state-of-the-art performance in areas such as machine translation.

- While powerful, such approaches are constrained by a number of factors, including computational ones. LSTMs have gone a long way toward pushing the field forward.

- Besides image and text understanding, deep learning models can be taught to “code” solutions to a number of well-known algorithmic challenges, including the Traveling Salesman Problem.

David Beyer: Let’s start with your background.

Oriol Vinyals: I’m originally from Barcelona, Spain, where I completed my undergraduate studies in both mathematics and telecommunication engineering. Early on, I knew I wanted to study AI in the U.S. I spent nine months at Carnegie Mellon, where I finished my undergraduate thesis. Afterward, I received my master’s degree at UC San Diego before moving to Berkeley for my Ph.D. in 2009.

While interning at Google during my Ph.D., I met and worked with Geoffrey Hinton, which catalyzed my current interest in deep learning. By then, and as a result of wonderful internship experiences at both Microsoft and Google, I was determined to work in industry. In 2013, I joined Google full time. My initial research interest in speech recognition and optimization (with an emphasis on natural language processing and understanding) gave way to my current focus on solving these and other problems with deep learning, including, most recently, generating learning algorithms from data.

DB: Tell me about your change in focus as you moved away from speech recognition. What are the areas that excite you the most now?

OV: My speech background inspired my interest in sequences. Most recently, Ilya Sutskever, Quoc Le, and I published a paper on mapping from sequences-to-sequences so as to enable machine translation from French to English using a recurrent neural net.

For context, supervised learning has demonstrated success in cases where the inputs and outputs are vectors, features, or classes. An image fed into these classical models, for example, will output the associated class label. Until quite recently, we have not been able to feed an image into a model and output a sequence of words that describe said image. The rapid progress currently underway can be traced to the availability of high-quality data sets with image descriptions (MS COCO), and in parallel, to the resurgence of recurrent neural networks.

Our work recast the machine translation problem in terms of sequence-based deep learning. The results demonstrated that deep learning can map a sequence of words in English to a corresponding sequence of words in Spanish. By virtue of deep learning’s surprising power, we were able to wrangle state-of-the-art performance in the field rather quickly. These results alone suggest interesting new applications—for example, automatically distilling a video into four descriptive sentences.

DB: Where does the sequence-to-sequence approach not work well?

OV: Suppose you want to translate a single sentence of English to its French analog. You might use a large corpus of political speeches and debates as training data. A successful implementation could then convert political speech into any number of languages. You start to run into trouble, though, when you attempt to translate a sentence from, say, Shakespearean English, into French. This domain shift strains the deep learning approach, whereas classical machine translation systems use rules that make them resilient to such a shift.

Further complicating matters, we lack the computational resources to work on sequences beyond a certain length. Current models can match sequences of length 200 with corresponding sequences of length 200. As these sequences elongate, longer runtimes follow in tow. While we’re currently constrained to a relatively small universe of documents, I believe we’ll see this limit inevitably relax over time. Just as GPUs have compressed the turnaround time for large and complex models, increased memory and computational capacity will drive ever longer sequences.

Besides computational bottlenecks, longer sequences suggest interesting mathematical questions. Some years ago, Hochreiter introduced the concept of a vanishing gradient. As you read through thousands of words, you can easily forget information that you read three thousand words ago; with no memory of a key plot turn in chapter three, the conclusion loses its meaning. In effect, the challenge is memorization. Recurrent neural nets can typically memorize 10 to 15 words. But if you multiply a matrix 15 times, the outputs shrink to zero. In other words, the gradient vanishes along with any chance of learning.

One notable solution to this problem relies on Long Short Term Memory (LSTM). This structure offers a smart modification to recurrent neural nets, empowering them to memorize far in excess of their normal limits. I’ve seen LSTMs extend as far as 300 to 400 words. While sizable, such an increase is only the start of a long journey toward neural networks that can negotiate text of everyday scale.

Taking a step back, we’ve seen several models emerge over the last few years that address the notion of memory. I’ve personally experimented with the concept of adding such memory to neural networks: instead of cramming everything into a recurrent net’s hidden state, memories let you recall previously seen words toward the goal of optimizing the task at hand. Despite incredible progress in recent years, the deeper, underlying challenge of what it means to represent knowledge remains, in itself, an open question. Nevertheless, I believe we’ll see great progress along these lines in the coming years.

DB: Let’s shift gears to your work on producing algorithms. Can you share some background on the history of those efforts and their motivation?

OV: A classic exercise in demonstrating the power of supervised learning involves separating some set of given points into disparate classes: this is class A; this is class B, etc. The XOR (the “exclusive or” logical connective) problem is particularly instructive. The goal is to “learn” the XOR operation, i.e., given two input bits, learn what the output should be. To be precise, this involves two bits and thus four examples: 00, 01, 10 and 11. Given these examples, the output should be: 0, 1, 1 and 0. This problem isn’t separable in a way that a linear model could resolve, yet deep learning matches the task. Despite this, currently, limits to computational capacity preclude more complicated problems.

Recently, Wojciech Zaremba (an intern in our group) published a paper entitled “Learning to Execute,” which described a mapping from python programs to the result of executing those same programs using a recurrent neural network. The model could, as a result, predict the output of programs written in python merely by reading the actual code. This problem, while simply posed, offered a good starting point. So, I directed our attention to an NP-hard problem.

The algorithm in question is a highly complex and resource intensive approach to finding exactly the shortest path through all the points in the famous Traveling Salesman Problem. Since its formulation, this problem has attracted numerous solutions that use creative heuristics while trading off between efficiency and approximation. In our case, we investigated whether a deep learning system could infer useful heuristics on par with existing literature using the training data alone.

For efficiency’s sake, we scaled down to 10 cities, rather than the more common 10,000 or 100,000. Our training set input city locations and output the shortest paths. That’s it. We didn’t want to expose the network to any other assumptions about the underlying problem.

A successful neural net should be able to recover the behavior of finding a way to traverse all given points to minimize distance. Indeed, in a rather magical moment, we realized it worked.

The outputs, I should note, might be slightly sub-optimal because this is, after all, probabilistic in nature: but it’s a good start. We hope to apply this method to a range of new problems. The goal is not to rip and replace existing, hand-coded solutions. Rather, our effort is limited to replacing heuristics with machine learning.

DB: Will this approach eventually make us better programmers?

OV: Consider coding competitions. They kick off with a problem statement written in plain English: “In this program, you will have to find A, B, and C, given assumptions X, Y, and Z.” You then code your solution and test it on a server. Instead, imagine for a moment a neural network that could read a such a problem statement in natural language and afterward learn an algorithm that at least approximates the solution, and even perhaps returns it exactly. This scenario may sound far-fetched. Bear in mind, though, just a few years ago, reading python code and outputting an answer that approximates what the code returns sounded quite implausible.

DB: What do you see happening with your work over the next five years? Where are the greatest unsolved problems?

OV: Perhaps five years is pushing it, but the notion of a machine reading a book for comprehension is not too distant. In a similar vein, we should expect to see machines that answer questions by learning from the data, rather than following given rule sets. Right now, if I ask you a question, you go to Google and begin your search; after some number of iterations, you might return with an answer. Just like you, machines should be able to run down an answer in response to some question. We already have models that move us in this direction on very tight data sets. The challenges going forward are deep: how do you distinguish correct and incorrect answers? How do you quantify wrongness or rightness? These and other important questions will determine the course of future research.