Timoni West on nailing the virtual reality user experience

The O'Reilly Radar Podcast: VR UX hurdles, bringing VR mainstream, and preparing for user behavior.

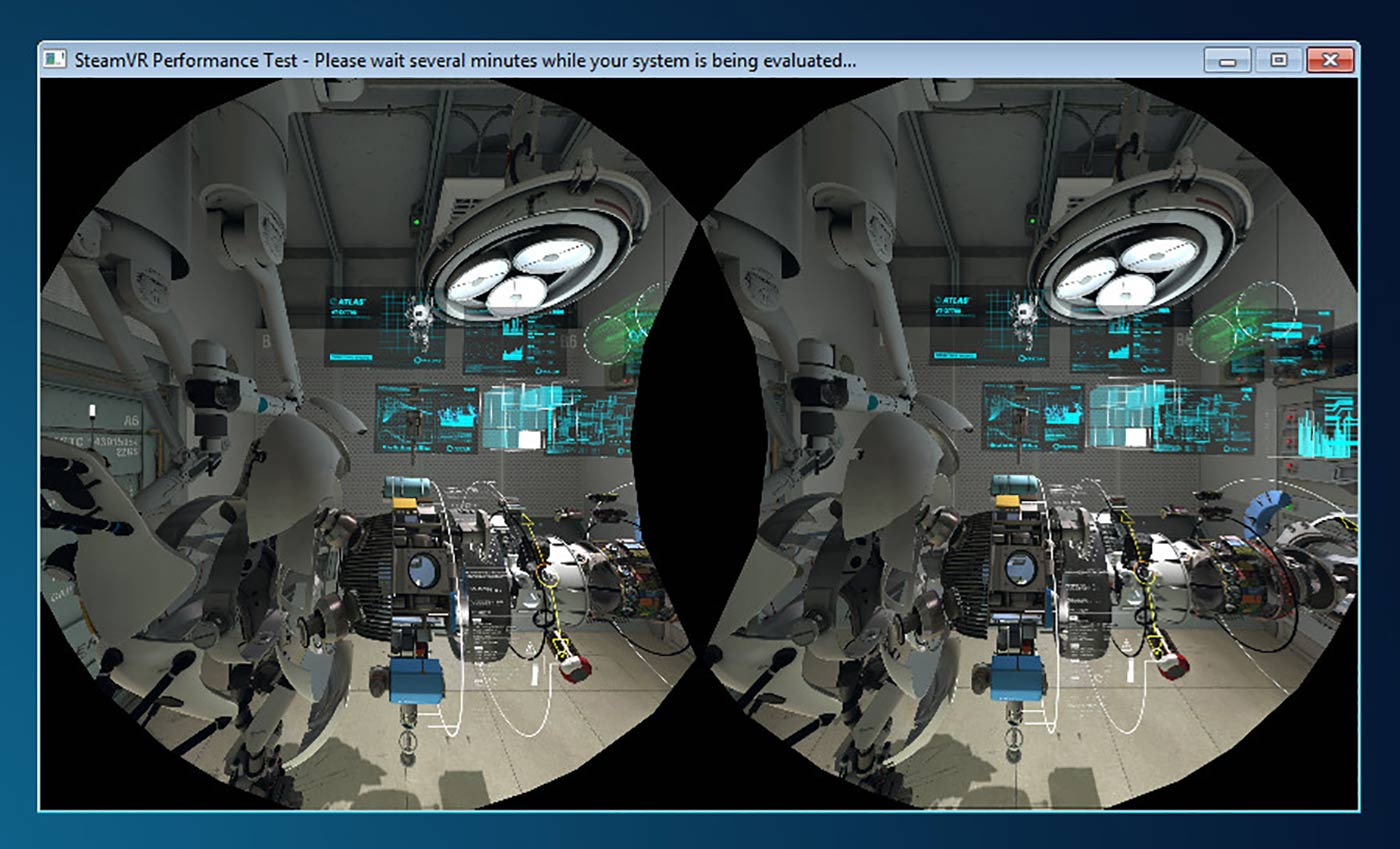

SteamVR Performance Test. (source: Steam)


This week, I chat with Timoni West, the principal designer at Unity Labs, where she specializes in virtual reality (VR) user experience. We talk about VR, the UX hurdles designers are tackling, what will drive mainstream adoption, and what we can expect from VR in the future.

West will be talking more about VR at Strata + Hadoop World San Jose 2016 in her session “Virtual reality in 2016 and in the future.”

Here are some highlights from our chat:

UX hurdles in VR

The biggest UX challenges I’ve seen people tackling in various ways are, first, locomotion: how do you move around a space if the space is larger than the physical space you have available to you? If you’re going to use some sort of movement mechanic to move the user’s camera forward, how do you do that without getting them sick? There’s a couple of really brilliant solutions to this already, so I think long term maybe that won’t be so much of a big issue as it will be just deciding which one you want to go with. Do you want to use blink locomotion? Do you want to have a slow moving track? Do you want to have a portal-like mechanism? And so on.

Another big one is how do you interact with objects in the world? Obviously, you can use the triggers to grab in a lot of different VR experiences right now. But having things like secondary hot keys if you want to interact with something in a slightly different way—what is the equivalent of the alt key in VR? That’s something that comes up a lot in my particular line of work because we’re taking a very complicated piece of software and trying to translate it into VR. There’s also a lot around button mappings because no one has used controllers like these before. The Oculus Touch controllers are a bit more like a conventional game controller, but the Vive controllers definitely have a fairly new interaction, having grip buttons on the side and having the thumb pads that you can use as sort of a secondary radial menu.

So, teaching people how to use that or figuring out when it’s best to use those types of interactions—it’s all new now. There’s no standardization, nor do I think there should be at this point, but just trying to set things up so that people can smoothly move into interacting in your particular world. I think it’s one of the biggest hurdles if you’re making a game, or you’re making an experience, or if you’re making an app, or whatever for VR. Right now, there’s a lot of special controllers that have huge text instructions next to them: ‘Point here. Click here. Swipe your thumb right here. Right here, look at the arrow, right here.’ Even then, people don’t necessarily get it.

Bringing VR mainstream

I’ve shown off a lot of demos to ordinary people or friends—I had my parents come in and my brothers come in, none of whom do anything related to technology. The gear stuff is fairly compelling, especially little kids love it, but when people try out things like Fantastic Contraption or Tilt Brush, where they’re actively creating and they are actively manipulating and making their own space in the world, that seems to be where people get the most excited—when they think they have some part of it or some ownership, they’re not just looking at a beautiful scene. You can play a lot of video games and never have it occur to you that you can make a video game, right? When you have these creation tools, then I think people really feel like they can do something with it, they can own it. I think that is the thing that tumbles you over that cliff into actually considering maybe dropping $2,000 on a VR headset and computer. It is a lot of money right now. On the other hand, I’m carrying around a $700 tiny computer in my pocket all the time. Clearly, people get used to it.

Hand gestures vs controllers

When I first started in VR design, I was very bullish on natural hand gestures. I was like, ‘Yeah, of course. Of course that’s what we’re going to do—we’re going to use nail polish that doubles as a sensor and have our fingers be tracked in space. It seems intuitive.’ But the longer that I work in VR, the more I’m bullish on controllers because they have very definite and definable actions attached to them. If I make a gesture with my hand, it could be a yes, it could be a no, it could be a thumbs up, it could be snapping my fingers. Those are things you want to do anyway, and having them remap to a specific verb in a specific app isn’t always what you want. I’d like to be able to wave my hand without it opening up a menu item.

Heads-up VR UX designers: Users will try to break everything

There’s an Apollo 11 VR experience. It was a pretty well-known Kickstarter. We have one of the demos on our computer. You’re actually in the Apollo 11 shuttle and there’s two astronauts sitting next to you, you’re the furthest one to the left. I make everyone stand up and actually walk out of the capsule because then you can see the moon going toward you and you can see the Earth getting farther away from you. It’s a really cool view.

I did the demo once just trying to peer out the tiny little capsule window before I was like, ‘Wait, I’m in VR, I can just go through the wall and go look. I don’t need to be looking through this tiny little capsule window.’ It feels so uncomfortable and people are like, ‘What? You want me to walk through the…what? I can do that?’ They sort of shuffle along really cautiously and then they get outside and they’re happy. Stuff like that is pretty great. VR experience designers should definitely keep in mind that everyone will try to break everything and stick their heads in weird places.