We’ve been talking about data science and data scientists for a decade now. While there’s always been some debate over what “data scientist” means, we’ve reached the point where many universities, online academies, and bootcamps offer data science programs: master’s degrees, certifications, you name it. The world was a simpler place when we only had statistics. But simplicity isn’t always healthy, and the diversity of data science programs demonstrates nothing if not the demand for data scientists.

As the field of data science has developed, any number of poorly distinguished specialties have emerged. Companies use the terms “data scientist” and “data science team” to describe a variety of roles, including:

- individuals who carry out ad hoc analysis and reporting (including BI and business analytics)

- people who are responsible for statistical analysis and modeling, which, in many cases, involves formal experiments and tests

- machine learning modelers who increasingly develop prototypes using notebooks

And that listing doesn’t include the people DJ Patil and Jeff Hammerbacher were thinking of when they coined the term “data scientist”: the people who are building products from data. These data scientists are most similar to the machine learning modelers, except that they’re building something: they’re product-centric, rather than researchers. They typically work across large portions of data products. Whatever the role, data scientists aren’t just statisticians; they frequently have doctorates in the sciences, with a lot of practical experience working with data at scale. They are almost always strong programmers, not just specialists in R or some other statistical package. They understand data ingestion, data cleaning, prototyping, bringing prototypes to production, product design, setting up and managing data infrastructure, and much more. In practice, they turn out to be the archetypal Silicon Valley “unicorns”: rare and very hard to hire.

What’s important isn’t that we have well-defined specialties; in a thriving field, there will always be huge gray areas. What made “data science” so powerful was the realization that there was more to data than actuarial statistics, business intelligence, and data warehousing. Breaking down the silos that separated data people from the rest of the organization—software development, marketing, management, HR—is what made data science distinct. Its core concept was that data was applicable to everything. The data scientist’s mandate was to gather, and put to use, all the data. No department went untouched.

When we can’t find unicorns, we break down their skills into specialties, and these started appearing as data science came into its own. We suddenly started seeing data engineers. Data engineers aren’t primarily mathematicians or statisticians, though they’re comfortable with math and statistics; they aren’t primarily software developers, though they’re comfortable with software. Data engineers are responsible for the operation and maintenance of the data stack. They can take a prototype that runs on a laptop and make it run reliably in production. They’re responsible for understanding how to build and maintain a Hadoop or Spark cluster, together with the many other tools that are part of the ecosystem: databases (such as HBase and Cassandra), streaming data platforms (Kafka, Spark Streaming, Apache Flink), and many more moving parts. They know how to do operations in the cloud, taking advantage of Amazon Web Services, Microsoft Azure, and Google Compute Engine.

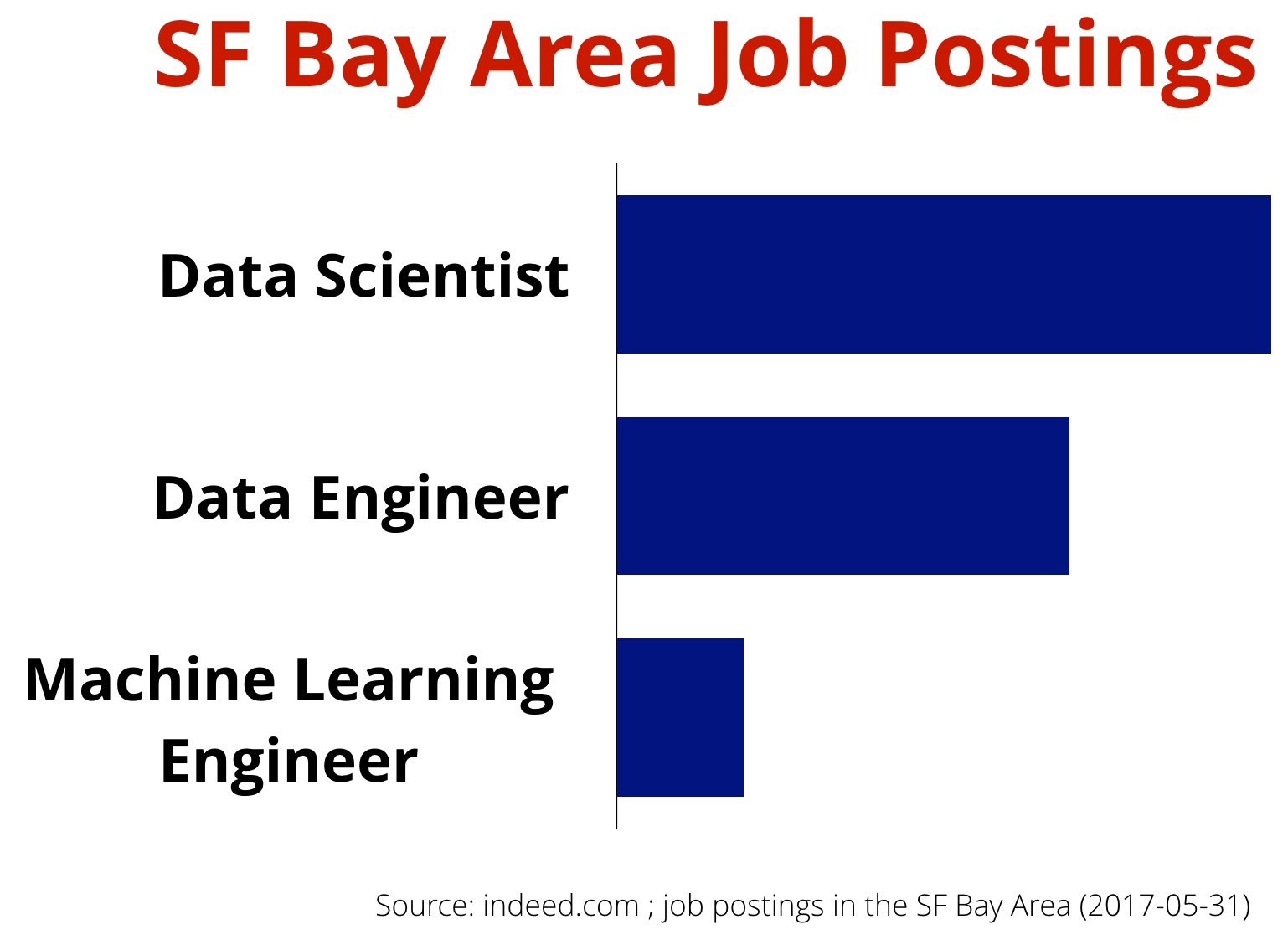

Now that we’re into the second decade of “data science,” and now that machine learning has come into its own, we’re seeing a refinement of the “data engineer.” A widely cited 2015 paper from Google highlighted the fact that real-world machine learning systems have many components aside from analytic models. Companies are beginning to focus on building data products and putting the technologies they’ve been adopting into production. In any application, the part that’s strictly “machine learning” is relatively small: someone needs to maintain the server infrastructure, watch over data collection pipelines, ensure there are sufficient computational resources, and more. To that end, we are beginning to hear more companies forming teams of machine learning engineers. This isn’t really a new specialty, as such; as machine learning (and, in particular, deep learning) became the rage in data science circles, data engineers were bound to look to the next step. But what distinguishes a machine learning engineer from a data engineer?

To some extent, machine learning engineers do what software engineers (and good data engineers) have done all along. Here are a few important characteristics of machine learning engineers:

- They have stronger software engineering skills than typical data scientists. Machine learning engineers are able to work with (and sometimes sit on the same teams as) engineers who maintain production systems. They understand software development methodology, agile practices, and the full range of tools that modern software developers use: everything from IDEs like Eclipse and IntelliJ to the components of a continuous deployment pipeline.

- Because their focus is on making data products work in production, they think holistically and factor in components like the logging or A/B testing infrastructure.

- They are up to speed on issues that are specific to monitoring data products in production. There are many resources on application monitoring, but machine learning has requirements that go even further. Data pipelines and models can go stale and need to be retrained, or they might be attacked by adversaries in ways that don’t make sense for traditional web applications. Can a machine learning system be distorted by compromising the data pipelines that feed it? Yes, and machine learning engineers will need to know how to detect these compromises.

- The rise of deep learning has led to a related but more specialized job title: deep learning engineer. We have also come across “DataOps,” though there seems to be less consensus (so far) as to what the term means.

Machine learning engineers are involved in software architecture and design; they understand practices like A/B testing; but more important, they don’t just “understand” A/B testing—they know how to do A/B testing on production systems. They understand issues like logging and security; and they know how to make log data useful to data engineers. Nothing is particularly new here: it’s a deepening of the role, rather than a change.

How does machine learning differ from “data science”? Data science is clearly the more inclusive term. But there is something significantly different about the way deep learning works. It was always convenient to think of a data scientist exploring the data: looking at alternate approaches and different models to find one that works. Classics like Tukey’s Exploratory Data Analysis set the tone for what much of data scientists have done: exploring and analyzing masses of data to find value that’s hiding in them.

Deep learning changes this model significantly. Your hands aren’t directly in the data; you know the result you want, but you’re letting the software discover it. You want to build a machine that beats Go champions, or that tags photos correctly, or that translates between languages. In machine learning, these goals aren’t attained through careful exploration; in many cases, there’s too much data to explore in any meaningful sense, and way too many dimensions. (What is the dimensionality of a Go game? Of a language?) The promise of machine learning is that it builds the model itself: it does its own data exploration and tuning.

As a result, data scientists don’t explore as much. Their goal isn’t to find significance in the data: they believe the significance is already there. Instead, their goal is to build the machine that can analyze the data and produce results: to create a neural network that works, that can be tuned to produce reliable results on the input. There’s less emphasis on statistics—indeed, the holy grail of machine learning is “democratization,” reaching a point where machine learning systems can be made by subject-matter experts, not AI Ph.D.s. We want a Go player to build the next-generation AlphaGo, not a researcher. We want a Spanish speaker to build the engine that does automatic translation into Spanish.

This change has a corresponding effect on the machine learning engineer. In machine learning, models aren’t static. Models can grow stale over time. Someone needs to monitor the system, retraining it when necessary. That’s not a job the developers who initially built the system will find appealing, but it’s deeply technical. Furthermore, it requires an understanding of monitoring tools, which haven’t been designed with data applications in mind.

Any practicing software developer or IT staffer should understand security. As far as we know, we haven’t yet seen significant attacks on machine learning systems. But they will be increasingly tempting targets. What new kinds of vulnerabilities does machine learning present? Is it possible to “poison” the data on which the system is trained, or to force a system to retrain when it shouldn’t? Because a machine learning system trains itself, we need to expect an entirely new class of vulnerabilities.

As tools get better, we will see more data scientists who can make the transition to production systems. Cloud environments and SaaS are making it easier for data scientists to deploy their data science prototypes to production, and open source tools like Clipper and Ground (new projects from UC Berkeley’s RISE Lab) are starting to appear. But we will still need data engineers and machine learning engineers: engineers who are literate in data science and machine learning, who understand how to deploy and run systems in production, and who are up to the challenges of supporting machine learning products.They’re the ultimate “humans in the loop.”

Related resources:

- What is data science?

- What is hardcore data science—in practice?: Mikio Braun on bringing data science into production

- When models go rogue: Hard-earned lessons about using machine learning in production: a forthcoming Strata Data New York talk by David Talby

- Becoming a machine learning engineer

- Data Jiujitsu – The Art of Turning Data Into Products, by DJ Patil