Will HTTP/2 make my site faster?

How latency, packet loss, content type, and third-party content affect performance.

Jet engine (source: WerbeFabrik via Pixabay)

“Will HTTP/2 make my site faster?” is a question often asked by companies focused on fast and reliable websites.

The general answer is “most likely, yes.” However, the mileage varies considerably from site to site because there are a few things that affect how much of a performance boost HTTP/2 will provide. Those are:

- Latency

- Packet loss

- Type of content the site contains

- Amount of third-party content

Below, I take a look at how each of these attributes affects the boost you can expect from HTTP/2.

Latency

In computer networks, latency is the amount of time it takes for a packet of data to get from one point to another. It is sometimes expressed as the time required for a packet to travel to the receiver and back to the sender, called Round Trip Time (RTT). Latency is generally measured in milliseconds.
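As a rough way to put a number on RTT, you can time how long it takes to open a TCP connection, since the handshake needs about one round trip. The following is a minimal sketch, not a precise measurement tool; the function name `tcp_connect_time_ms` is made up for this illustration, and the result also includes DNS lookup time when you pass a host name.

```python
import socket
import time


def tcp_connect_time_ms(host: str, port: int, timeout: float = 5.0) -> float:
    """Return the time needed to open a TCP connection, in milliseconds.

    Hypothetical helper for illustration: connecting completes after the
    TCP handshake, which takes roughly one round trip to the server.
    """
    start = time.perf_counter()
    # create_connection returns once the handshake has completed.
    with socket.create_connection((host, port), timeout=timeout):
        elapsed = time.perf_counter() - start
    return elapsed * 1000.0
```

Calling `tcp_connect_time_ms("www.example.com", 443)` a few times and taking the lowest value gives a usable approximation of the RTT to that server.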

HTTP/2 performs better than HTTP/1.1 over high-latency connections. This is because the binary framing and header compression built into the new version of the protocol make communication more efficient and require fewer round trips. This is especially beneficial on mobile networks, which usually have high latency and limited upstream bandwidth.

Packet Loss

Packet loss happens when packets of data traveling across a computer network fail to reach their destination; this is typically caused by network congestion. Packet loss is measured as a percentage of packets lost with respect to the number of packets sent.

High packet loss has a detrimental impact on page-load time over HTTP/2. That’s because HTTP/2 opens a single TCP connection, and TCP reduces its window size each time it detects loss or congestion. As a result, fewer bytes can be sent over the wire via HTTP/2’s single TCP connection than over the six connections that browsers typically open per host in HTTP/1.1.
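To see why one connection suffers more than six, here is a toy calculation. It assumes that a single lost packet makes the affected connection halve its window (the classic multiplicative-decrease behavior; other congestion control algorithms use different factors) and that the same total window is split evenly across connections. The function name and the window sizes are made up for this illustration.

```python
def aggregate_window_after_loss(connections: int, segments_per_connection: int) -> float:
    """Total window (in segments) after ONE connection halves its window.

    Assumption for illustration: a single loss event hits exactly one
    connection, and that connection cuts its window in half.
    """
    untouched = (connections - 1) * segments_per_connection
    halved = segments_per_connection / 2
    return untouched + halved


# Same starting total of 60 segments in both cases.
http2_window = aggregate_window_after_loss(connections=1, segments_per_connection=60)
http1_window = aggregate_window_after_loss(connections=6, segments_per_connection=10)

print(http2_window)  # 30.0 -> the single connection loses half its capacity
print(http1_window)  # 55.0 -> only one of six connections is slowed down
```

In this simplified model, one lost packet costs the single HTTP/2 connection half of its sending capacity, while the six HTTP/1.1 connections together lose only about 8%.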

The long-term solution to the problem of packet loss will come from the development of better underlying congestion control. Keep an eye out for technologies like QUIC, a new transport protocol that reduces latency compared to TCP, and BBR, an improved TCP congestion control algorithm.

Type of content

As I noted above, TCP’s congestion control can detrimentally affect HTTP/2 performance because less data will flow over the wire when there’s congestion. This will be more noticeable on pages that have larger objects because it will take much longer to download all the needed bytes through a smaller pipe.

On the other hand, pages that have a large number of small objects (150+) may see a 5-25% performance improvement for the objects delivered over HTTP/2, thanks to features like multiplexing (more requests can be made concurrently) and header compression (fewer bytes are sent to the server for each request).

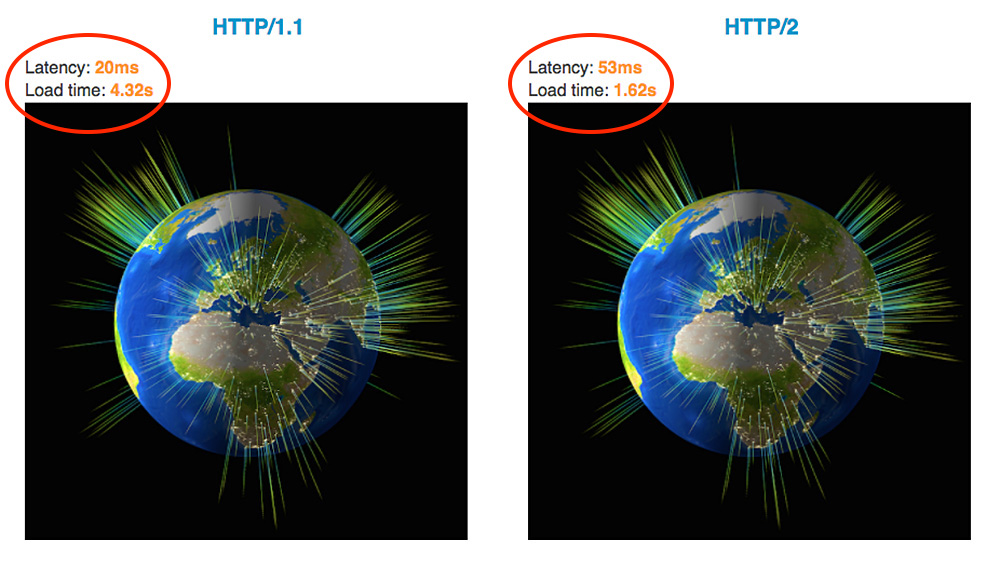

The picture below of an HTTP/2 performance demo clearly illustrates the performance impact of a page with many small objects.
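The effect of multiplexing on a page with many small objects can be sketched with a back-of-the-envelope model. It assumes that each "wave" of parallel requests costs one round trip, that HTTP/1.1 runs six requests at a time, and that HTTP/2 can have all 150 requests in flight at once. It ignores bandwidth, server time, and packet loss, so it greatly overstates real-world gains compared with the 5-25% mentioned above; the function names and the 100 ms RTT are made up for this illustration.

```python
import math


def request_waves(num_objects: int, parallel_requests: int) -> int:
    """Number of request 'waves' needed to ask for every object."""
    return math.ceil(num_objects / parallel_requests)


def request_wait_ms(num_objects: int, parallel_requests: int, rtt_ms: float) -> float:
    """Time spent waiting on round trips, assuming one RTT per wave."""
    return request_waves(num_objects, parallel_requests) * rtt_ms


# 150 small objects over a 100 ms RTT link (assumed values).
http1_wait = request_wait_ms(150, parallel_requests=6, rtt_ms=100)
http2_wait = request_wait_ms(150, parallel_requests=150, rtt_ms=100)

print(http1_wait)  # 2500 -> 25 waves of six requests
print(http2_wait)  # 100  -> one wave of multiplexed requests
```

The point of the model is only the direction of the effect: the more small objects a page has, the more round trips multiplexing can save.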

Third-party content

Many websites use various kinds of third-party content, such as analytics tools, external JS libraries, external fonts, and tags for tracking, social, and advertising platforms.

There have been many blog posts and studies written warning about the performance and security implications of using third-party content, so I am not going to rehash that argument here. However, I want to mention a mistake many people make when comparing the performance of a site delivered over HTTP/2 vs HTTP/1.1: When measuring the performance gained by switching to HTTP/2, you need to limit the comparison to the objects that are actually delivered over the newer protocol version.

For example, let’s say you have a web page that has 100 embedded objects. Of those, 10% belong to the base page domain and 90% come from external domains. When comparing HTTP/2 vs HTTP/1.1 performance, you would limit your measurements to just the 10% of objects on the base domain. Most likely, those external domains will not be using HTTP/2 features like dependencies and priorities, and the external results will skew the statistics. What may look like a mere 2% performance boost may well be more than 20% if you compare apples to apples.
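The arithmetic behind that example can be sketched as follows. All numbers are invented to match the 100-object scenario: every object takes 100 ms over HTTP/1.1, the 10 first-party objects drop to 80 ms over HTTP/2, and the 90 third-party objects do not change. Summing per-object times is a simplification of real page-load time, and the host names and helper functions are hypothetical.

```python
from urllib.parse import urlsplit

BASE_HOST = "www.example.com"  # hypothetical base page domain

first_party = [f"https://{BASE_HOST}/asset-{i}.js" for i in range(10)]
third_party = [f"https://cdn.thirdparty.example/tag-{i}.js" for i in range(90)]

# Per-object load times in milliseconds (invented for illustration).
h1_timings = {url: 100 for url in first_party + third_party}
h2_timings = {**{url: 80 for url in first_party},
              **{url: 100 for url in third_party}}


def total_ms(timings: dict, host: str = None) -> int:
    """Sum load times, optionally only for objects served from `host`."""
    return sum(ms for url, ms in timings.items()
               if host is None or urlsplit(url).hostname == host)


def improvement_pct(h1_ms: float, h2_ms: float) -> float:
    """Percentage reduction in load time going from HTTP/1.1 to HTTP/2."""
    return 100 * (h1_ms - h2_ms) / h1_ms


overall = improvement_pct(total_ms(h1_timings), total_ms(h2_timings))
base_only = improvement_pct(total_ms(h1_timings, BASE_HOST),
                            total_ms(h2_timings, BASE_HOST))

print(overall)    # 2.0  -> all 100 objects, skewed by third-party content
print(base_only)  # 20.0 -> only the objects on the base domain
```

With real data, the timings would come from your own measurements, for example a HAR export filtered by host name in the same way.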