The practical basics of Android app security

Learn where the vulnerabilities are, and how to address them.

Robot Hand (source: M. Levin, University of Washington (CC BY 2.0))

Today’s mobile applications are used for a million different purposes, from social media to online banking. Given the ubiquity of these applications (and the wealth of information that these applications might contain), it is understandable that hackers might try to find weaknesses in your mobile application to steal company (or customer) data. In this article, we are going to look at techniques that hackers might use to tear apart your application for vulnerabilities, data and code. In order to protect your application (and thus your company and its customers), you have to “know your enemy” and discover if your application is vulnerable to attack. We’ll look at ways to defend your app from these common vectors.

Today’s smartphones provide an entirely new set of data about each user. When I think about personal information, I initially think about location—since your phone is on your person, it knows everywhere you have been. But your phone knows so much more. Android devices can report the other apps installed, and thus know your bank. They can report all your contacts, your email addresses and more.

As an Android developer, your application can have access to this data, and it is likely that your customers do not want this information disclosed. Your app may also contain proprietary code that your company does not want disclosed. In my book, High Performance Android Apps, I discuss ways to speed up your application and to prevent crashes. An additional aspect of performance is the elimination of data leaks. Data leaks can lead to erroneous credit card charges, stalking, or even identity theft. Should critical company code or algorithms become publicly known, your company could lose its competitive edge in the market. Ensuring that your customers’ and your company’s private and personal information is properly secured is an aspect of performance not to be shortchanged.

According to a March 2015 study from IBM, 50% of 400 developers surveyed have 0% of their development budget allocated to security, and 40% have not tested their mobile app for security vulnerabilities. Of the 60% who do test for security issues, 33% find them. If we assume that ratio holds for the apps untested (and one app per company), and extrapolate this across all apps, it would mean that roughly 13% of all applications in the market (40% × 33%) have:

- No security tests run

- Major security vulnerabilities exposed

Reports come out regularly on the security vulnerabilities in mobile banking apps, dating apps, etc. The old adage that “any publicity is good publicity” probably does not hold here. When you think of the worst press your application could get, you’re probably thinking outage. But what if you accidentally exposed thousands of credit card numbers? Or made a mistake like one airline recently did, where modifying a URL in the app allowed fliers to access other passengers’ valid boarding passes? The app performance implications are huge. This IBM-sponsored study also stated that 73% of the developers felt that they did not have enough training to perform secure coding practices. So, let’s start to figure out some of the basic vectors that hackers might use to get information out of your application.

Ethical hacking or “know your enemy”

Note that the attack vectors in this article are simply an introduction to defending your mobile app. These are perhaps the most common attacks to penetrate your application (and as you’ll see, a number of apps in Google Play today suffer from these issues).

Customers are worried about security

Just as customers want mobile applications that are performant and fast, there are indications that smartphone users are getting worried about what mobile application developers are doing with their data, how it is being stored, and how it is protected. And they have reason to be concerned. Hardly a week goes by without warning of malware, or security breaches on mobile or elsewhere. Three days before the 2015 Super Bowl, it was announced that the NFL Mobile application was sending customer login and password combinations over the air via HTTP (meaning unencrypted and in cleartext). Just a week later, IBM reported that 60% of all dating applications had security concerns and were not holding customer data in a secure fashion.

Often it is the simple things that cause the biggest pains. In late 2013, Target lost 40 million credit card numbers, and it could have been prevented with simple malware detection and cleanup. So, let’s start with easy ways to secure your application.

Permissions

There is a lot of documentation about Android permissions, and how they work, so I won’t go into a lengthy description here. The five-second elevator conversation on what Android permissions are goes like this: Your Android application runs inside a unique sandbox that protects it from other applications, but the sandbox places limits on what it can do on the device. If you want your application to do things outside the “sandbox” (like vibrate the phone, use the camera, or connect to the internet) you need to add these permissions to your application (and anyone who wants to use your app must approve these permissions prior to install).

Up until Android Marshmallow, users had to accept all of an application’s permissions at download. Many Android users admit to just downloading the application they want without regard to (or perhaps without understanding) the permissions. While they have gotten better over time, many of the permissions are not straightforward, and customers don’t know if they are okay or not. A recent study showed that 47% of customers hesitate to download new apps due to potential misuse of private information. With that in mind, I’d caution you to only use the permissions you really need. Some customers won’t install applications that access too many “private” functions. Additionally, there are some permissions like READ_PHONE_STATE or CAMERA that are confusing to customers and might cause additional concern (“Why does Facebook need access to my camera?”). Writing a short explanation of how each permission is used in your application description might help allay fears that customers might have. Alternatively, link to your company’s privacy policy, and use that to outline the data your application collects, and how you use it.

Log files

When debugging your application, logging pertinent details is a great way to track what is going on in your application. However, it is possible to leak data in the logs. Prior to Jelly Bean, all applications had access to read debug logs on the device. On all devices, simply connecting a device via ADB and running adb logcat allows anyone to read the logs of any application running on the device.

For example, here are logs from a popular airline application (removing date and time for space):

(18468): Preference updated:com.analytics.MIN_BATCH_INTERVAL

(18468): PushService startService

(18468): *Received GCM Registration ID: <Yes, the GCM Cloud registration ID was here>*

(18468): Saving preference: com.analytics.push.APP_VERSION value: 22

(18468): Adding event: {"data":{"push_enabled":true,"carrier":"AT&T",

"session_id":"240d5059-c976-4fb3-b59d-44553649b08c",

"transport":"GCM","connection_type":"wifi","apid":"6dc3f0c0-e5f2-4bed-b24a-b165662f3f96"},

"type":"push_service_started","event_id":"171da614-50f9-468c-b60a-1a97d39e226c","time":"1424166468"}

The analytics provider in this case is reporting all of its actions in the log. We can see that preferences are being changed in the first few lines. When I start this application, Google Cloud Messaging (GCM) is established to send push messages about my flight. The analytics provider receives this ID, sends it to the log stream, and then updates preferences. I have redacted the GCM registration ID, but having access to this value would allow communication back to the server. Google specifically states in its GCM documentation for Android that this ID should be kept secret.

There are a number of build tools that can be configured to remove all logging from your app when building a production app. Android Studio applications use ProGuard by default. Simply adding:

-assumenosideeffects class android.util.Log {

<methods>;

}

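to the proguard-project.txt file will remove all logging calls from your production builds, which removes the possibility of any data inadvertently leaking through your debugging tools. Note that ProGuard only applies -assumenosideeffects rules when its optimization step is enabled, so a configuration containing -dontoptimize will leave the log calls in place.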
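If some logging has to stay in a build, a complementary approach is to scrub sensitive values before a message is written. The following is a minimal sketch in plain Java rather than anything taken from the airline app: the `LogScrubber` class, its `scrub` method and the list of sensitive keys are all hypothetical names chosen to match the fields in the sample log above.

```java
import java.util.regex.Pattern;

final class LogScrubber {
    // Hypothetical JSON keys whose values should never reach the log.
    private static final Pattern JSON_SECRET = Pattern.compile(
            "\"(session_id|apid|token|password)\"\\s*:\\s*\"[^\"]*\"");

    // Hypothetical pattern for a "Registration ID: <value>" message.
    private static final Pattern REGISTRATION_ID = Pattern.compile(
            "(Registration ID:\\s*)\\S+");

    private LogScrubber() {}

    /** Returns the message with the values of known sensitive fields replaced. */
    static String scrub(String message) {
        String out = JSON_SECRET.matcher(message).replaceAll("\"$1\":\"<redacted>\"");
        return REGISTRATION_ID.matcher(out).replaceAll("$1<redacted>");
    }

    public static void main(String[] args) {
        // In an Android app the scrubbed string would be handed to android.util.Log.
        System.out.println(scrub("Received GCM Registration ID: APA91bExample"));
        System.out.println(scrub("{\"carrier\":\"AT&T\",\"session_id\":\"240d5059\"}"));
    }
}
```

A deny-list like this only catches the fields you thought of in advance, so it is best treated as a safety net alongside stripping logs from release builds, not as a replacement for it.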

Your application files

As soon as people begin downloading your app from the storefront, you must assume that none of the files or code in your application is safe from prying eyes. For customers looking to find vulnerabilities in your app, simply installing and using it on a rooted device gives them access to all of the app files. Your APK can be pulled off of the device and de-compiled. Let’s break down these attack vectors, and ways to prevent (or at least dissuade) hackers from getting the information they desire.

Locally stored files

Your application has a protected local storage area that can be used to keep local files on the device. These files are stored in /data/data/<yourappname>/, and are generally not visible to the end user. Rooted phones give users full access to your sandbox. But even users on stock devices can get at this data, and investigate it. When customers run a backup of all their apps, a file is created that contains all of the saved data from each application, and the backup is stored on a computer. Gaining access to a phone (or a backup of a phone) is a great way to see all of the user’s data in one place, and depending on how your application saves data, these backup files can be used to extract vulnerabilities as well.

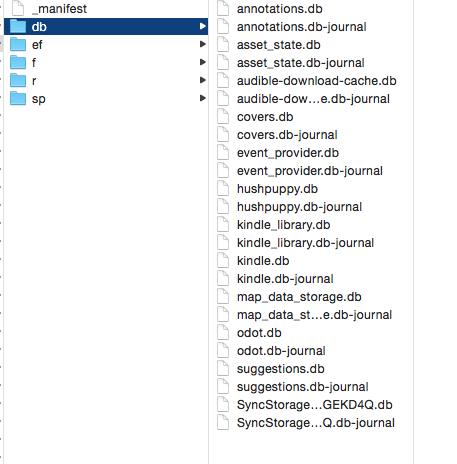

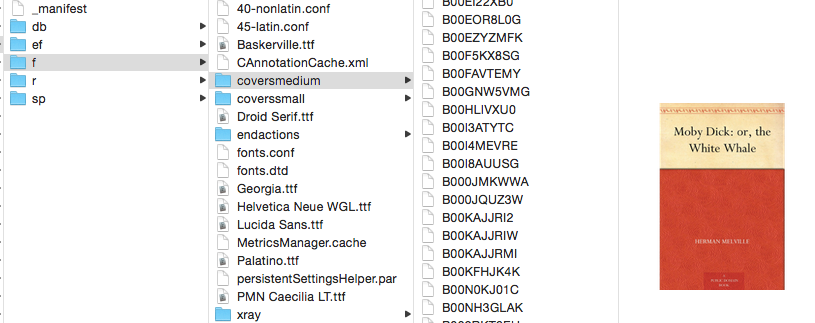

Let’s take a closer look at the files that are collected when we run a backup of an application. In the screenshot below, there are directories for databases, files, resources and shared preferences. Let’s take a look at each of these, and how they might be used to gain information to attack your app.

Databases

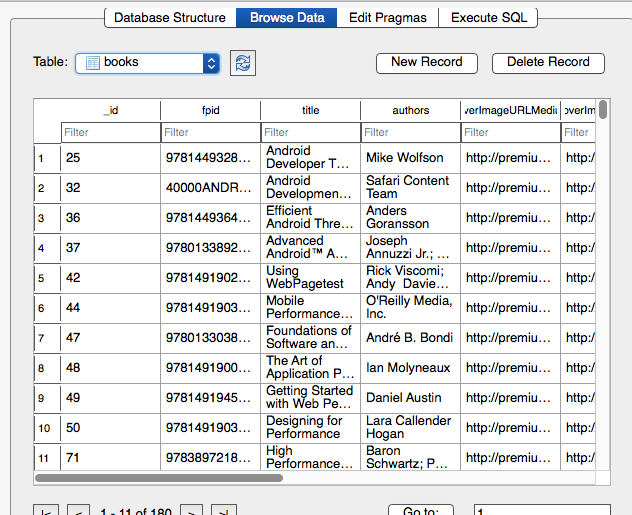

Let’s start at the easiest point of attack: database files. In the above figure you can see the list of the databases that are stored by the Amazon Kindle application on my device. Android apps typically use SQLite to store data (often user information), and these databases are not encrypted by default. Once you have the app files on a computer, any data stored in those files is open for observation. It is important to know that login information, tokens or other critical customer data should not be stored here without some form of encryption. (In fact, as we will see, no token or key is safe when stored locally, anywhere on the device.) The Kindle databases did not expose any personal information, but you can see the general type of data exposed in another app below.

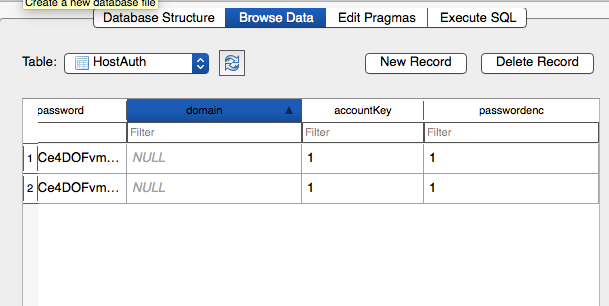

In the above figure you can see a list of all of the books I currently have in my Safari To Go app. Again, this data is not more than someone might see if they accessed my device. However, other application databases revealed logins (and passwords), tokens for services and other private data stored as cleartext that should have been encrypted. Below is a screenshot of the Exchange email client database. In the column for the password, the value has been encrypted, and therefore cannot be simply plucked from the database:

The tricky part about having an encrypted database is finding a secure place to store the key. In the next few sections we’ll look at ways to store a key (or other private information) securely inside your app’s code. As you might guess, there is no easy solution.

When using databases, you also must consider SQL injection as an attack vector. We’ll look at this (and others) in the section on penetration testing.

App files and resources

The ‘f’ directory often has files that are cached for later use, or analytics data that is being stored. I’ll pick on the Kindle app again as my example, showing that all of the book covers are stored in this directory (as are a number of fonts for viewing books):

Again, none of this data is highly confidential, and would be available from the Kindle app without a password request. The ‘r’ directory contains resources, which were mostly caches from webviews or analytics information.

Shared preferences

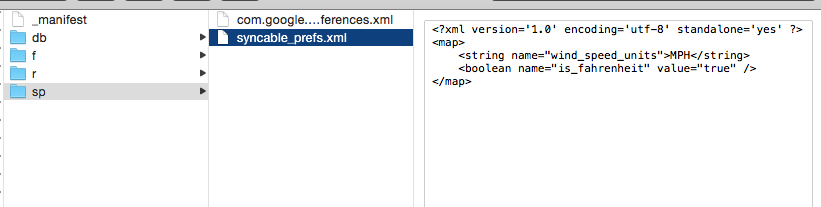

Another common place to store data is in the shared preferences. In the ‘sp’ folder of your app, various XML files can be found. There are games using this to store current levels and high scores, while others just save basic preferences:

The data shown above is for a Google weather application, and you can see that I prefer to get my weather in English units. It kind of goes without saying that it is crucial to ensure that all data in these files are either okay to be read by humans without loss of privacy, or are encrypted. It hopefully also goes without saying that storing any crypto keys in these files would not really slow down a hacker. As you might be coming to realize, there really is no 100% safe place to store the key in your application. With a bit of persistence, anyone can eventually read any file in your app.

Java files

As we have discussed in the last several sections, any file that you install locally on a device can potentially be pulled off the device and examined by a hacker. Running a backup did not expose any of your Java code, but don’t think that makes your code immune to examination. Since your .apk file is really just a zip file of your code, hackers can easily change your .apk to .zip and uncompress. Therefore, simply putting a decryption key, or AWS token, in your code is not a great idea. This is also a vector to steal IP (your code), or to find weaknesses or other exploits in your app.

To decompile and read an APK file, you need to pull it onto your computer. The first command below gives you a list of packages. The second returns the path of the APK for a given package, and the third pulls the package to your computer. The fourth uses Dex2Jar to turn your APK into a Java JAR file.

adb shell pm list packages

adb shell pm path <packagename>

adb pull <appPath>

sh dex2jar.sh <packageYouPulledOver>

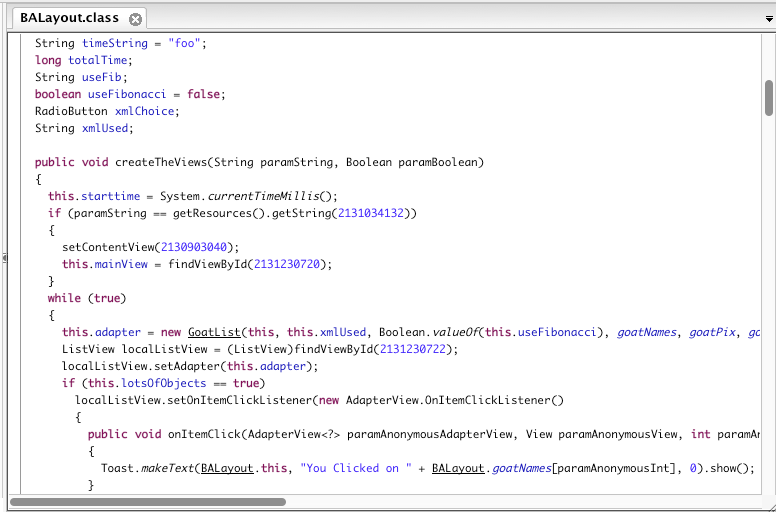

Now that you have converted this app into a JAR file, you can decompile it. I used the tool JD-GUI to open these JAR files and look at the code of “Is it a Goat?”:

As you can see, this code is nearly unchanged from the raw code in Android Studio. If a hacker wanted to, it would be pretty simple to figure out how the application works, borrow my code, or potentially find an exploit in my application. If I had tried to hide an encryption token here, the hacker’s work would be complete.

One quick way to make your code harder to read is to use obfuscation. There are a number of tools that will obscure your code, but Android Studio has ProGuard installed by default. When you enable ProGuard for your application, variables and objects are renamed and the code is harder to read. (You can view the “Is it a Goat?” app to view the setup I used. There are a number of posts on the web on ways to set up ProGuard, and other obfuscation tools, to configure your obfuscation.) This is not hack-proof, but you are making it harder for the intruder to figure out how to reverse engineer or find weaknesses in your application.

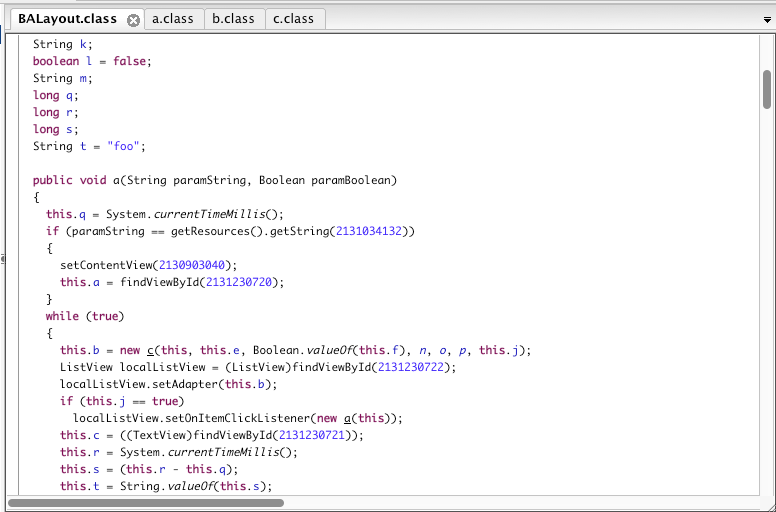

Recompiling “Is it a Goat?” with ProGuard set to true and then looking at the code, we immediately can see the difference:

The variables are now all letters (and reordered to make them harder to resolve): createTheViews is now ‘a’ and GoatList is ‘c’. The code is not optimized or changed a great deal, but by changing the variables to letters from descriptors, the code is harder to figure out (and it’s smaller too!). While your app’s behavior is not changed, by obscuring the names you make it harder for others to reverse engineer your code. This is obviously not going to stop a determined hacker (nor will it protect your encryption keys or tokens), but you are making their job harder.

In a quick survey of applications on my device, there are many applications that do not take this simple step to obscure their code and make the details harder to read.

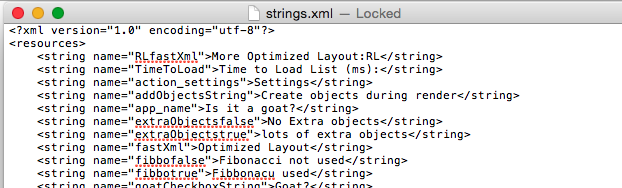

XML files

Your XML files are not safe either. Using apktool, you can decompile your Android app code into Smali, and the XML files are preserved. Obfuscation does not affect these files, so this would not be considered a safe place to hide your secret values. Any password or private information stored in shared preferences is also decoded by apktool and easily read.

All of these files are easily read (and this is from a ProGuard protected app).

Local storage of keys and tokens

There are additional ways to attempt to hide tokens or keys inside your application. Hiding the data inside an NDK class makes it harder to decipher, but a C++ library can be decompiled as well. To be absolutely positive that your application’s data is being stored securely, you will have to ask your users to log in at each use (keeping the key in your customer’s memory), or retrieve the key from a remote server (keeping the key off the device). Obviously, this prevents your application from running without a network connection, but if security of the data is paramount it is probably the only way to be 100% certain that no one is reading the data from your app.
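The first option, keeping the key in the customer’s memory, usually means deriving the encryption key from the password each time instead of storing it. Here is a minimal sketch using only the standard javax.crypto classes. The `PasswordVault` class, the blob layout (salt, then IV, then ciphertext) and the iteration count are assumptions made for illustration, not recommendations from the text above.

```java
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.security.SecureRandom;
import java.util.Arrays;
import javax.crypto.Cipher;
import javax.crypto.SecretKey;
import javax.crypto.SecretKeyFactory;
import javax.crypto.spec.GCMParameterSpec;
import javax.crypto.spec.PBEKeySpec;
import javax.crypto.spec.SecretKeySpec;

final class PasswordVault {
    private static final int SALT_BYTES = 16;
    private static final int IV_BYTES = 12;
    private static final int TAG_BITS = 128;
    private static final int ITERATIONS = 100_000; // assumed work factor
    private static final SecureRandom RANDOM = new SecureRandom();

    private PasswordVault() {}

    // The key exists only while the user's password is in memory; it is never written to disk.
    private static SecretKey deriveKey(char[] password, byte[] salt) throws GeneralSecurityException {
        PBEKeySpec spec = new PBEKeySpec(password, salt, ITERATIONS, 256);
        byte[] keyBytes = SecretKeyFactory.getInstance("PBKDF2WithHmacSHA256")
                .generateSecret(spec).getEncoded();
        return new SecretKeySpec(keyBytes, "AES");
    }

    /** Returns salt + IV + ciphertext; a fresh random salt and IV are used on every call. */
    static byte[] encrypt(char[] password, byte[] plaintext) {
        try {
            byte[] salt = new byte[SALT_BYTES];
            byte[] iv = new byte[IV_BYTES];
            RANDOM.nextBytes(salt);
            RANDOM.nextBytes(iv);
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            cipher.init(Cipher.ENCRYPT_MODE, deriveKey(password, salt), new GCMParameterSpec(TAG_BITS, iv));
            byte[] ciphertext = cipher.doFinal(plaintext);
            byte[] blob = new byte[SALT_BYTES + IV_BYTES + ciphertext.length];
            System.arraycopy(salt, 0, blob, 0, SALT_BYTES);
            System.arraycopy(iv, 0, blob, SALT_BYTES, IV_BYTES);
            System.arraycopy(ciphertext, 0, blob, SALT_BYTES + IV_BYTES, ciphertext.length);
            return blob;
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException("Encryption failed", e);
        }
    }

    /** Throws IllegalStateException if the password is wrong or the blob was modified. */
    static byte[] decrypt(char[] password, byte[] blob) {
        try {
            byte[] salt = Arrays.copyOfRange(blob, 0, SALT_BYTES);
            byte[] iv = Arrays.copyOfRange(blob, SALT_BYTES, SALT_BYTES + IV_BYTES);
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            cipher.init(Cipher.DECRYPT_MODE, deriveKey(password, salt), new GCMParameterSpec(TAG_BITS, iv));
            int offset = SALT_BYTES + IV_BYTES;
            return cipher.doFinal(blob, offset, blob.length - offset);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException("Wrong password or corrupted data", e);
        }
    }

    public static void main(String[] args) {
        char[] password = "correct horse battery staple".toCharArray();
        byte[] blob = encrypt(password, "api-token-123".getBytes(StandardCharsets.UTF_8));
        System.out.println(new String(decrypt(password, blob), StandardCharsets.UTF_8));
    }
}
```

The stored blob is useless without the password, which is the point. The trade-off is the one described above: the user has to type the password whenever the data is needed.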

App penetration tests

We’ve looked at ways to exploit the data stored on the device, or how it is reported in the logs, but can we trick your application to misbehave? Using tools like drozer, you can run different activities and interact with them. This allows you to audit activity behavior under different circumstances.

To run drozer, you must install the drozer agent APK on your device, and then:

adb forward tcp:31415 tcp:31415

drozer console connect

The drozer manual walks you through a number of possible penetration tests like starting activities without authentication or SQL injection into databases. For example, you can query the attack surface (areas that are potentially vulnerable) of each application:

In the above figure, drozer has identified seven activities, four broadcast receivers, three content providers and seven services that are available to external applications for potential manipulation. In general, unless there is a reason that an external process or application should access your application, the count here should be limited.

run app.activity.info -a <appname>

This command will give you a list of all Activities that can be launched without any permissions. In the drozer sample app, the activity com.mwr.example.sieve.PWList appears as an option. By launching this activity, you are able to bypass any authorization requirements, and can see a screen with a list of passwords.

Transmission (HTTPS and SPDY redux)

In High Performance Android Apps, I discuss in detail how to optimize network connections for speed. In addition to speeding up your network traffic for performance reasons, the transmission of unencrypted data is a common form of data leakage from an application. With the proliferation of Wi-Fi spoofing and other modes for capturing network traffic, it is crucial that all customer (and your company’s) private information be sent over an HTTPS connection.

Once you have encrypted the data, go back and test with Fiddler as a man-in-the-middle proxy (mitmproxy) to ensure that your HTTPS traffic is sending the expected data, and it is properly encrypted.
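One simple guard against cleartext requests slipping back into the code is to reject any URL that is not HTTPS before a connection is ever opened. The sketch below uses only java.net.URI. The `HttpsOnly` class and its `requireHttps` method are hypothetical helpers for illustration, not part of any Android API.

```java
import java.net.URI;
import java.net.URISyntaxException;

final class HttpsOnly {
    private HttpsOnly() {}

    /** Returns the parsed URI, or throws if the URL is malformed or not HTTPS. */
    static URI requireHttps(String url) {
        final URI uri;
        try {
            uri = new URI(url);
        } catch (URISyntaxException e) {
            throw new IllegalArgumentException("Not a valid URL: " + url, e);
        }
        if (!"https".equalsIgnoreCase(uri.getScheme()) || uri.getHost() == null) {
            throw new IllegalArgumentException("Refusing non-HTTPS URL: " + url);
        }
        return uri;
    }

    public static void main(String[] args) {
        System.out.println(requireHttps("https://api.example.com/v1/login"));
        try {
            requireHttps("http://api.example.com/v1/login");
        } catch (IllegalArgumentException e) {
            System.out.println(e.getMessage());
        }
    }
}
```

Routing every outgoing request through a single check like this makes an accidental HTTP endpoint fail loudly during development instead of leaking credentials in production.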

nogotofail

It is also crucial that your connections are safe from common vulnerabilities. In fall 2014, the Android security team released nogotofail, a suite of tests that checks for SSL security verification, bugs and other attacks that can be performed on apps (or servers) that do not have their SSL connections configured properly. It is named after the gotofail vulnerability, but it can also help you defend against attacks like POODLE, Heartbleed and others.

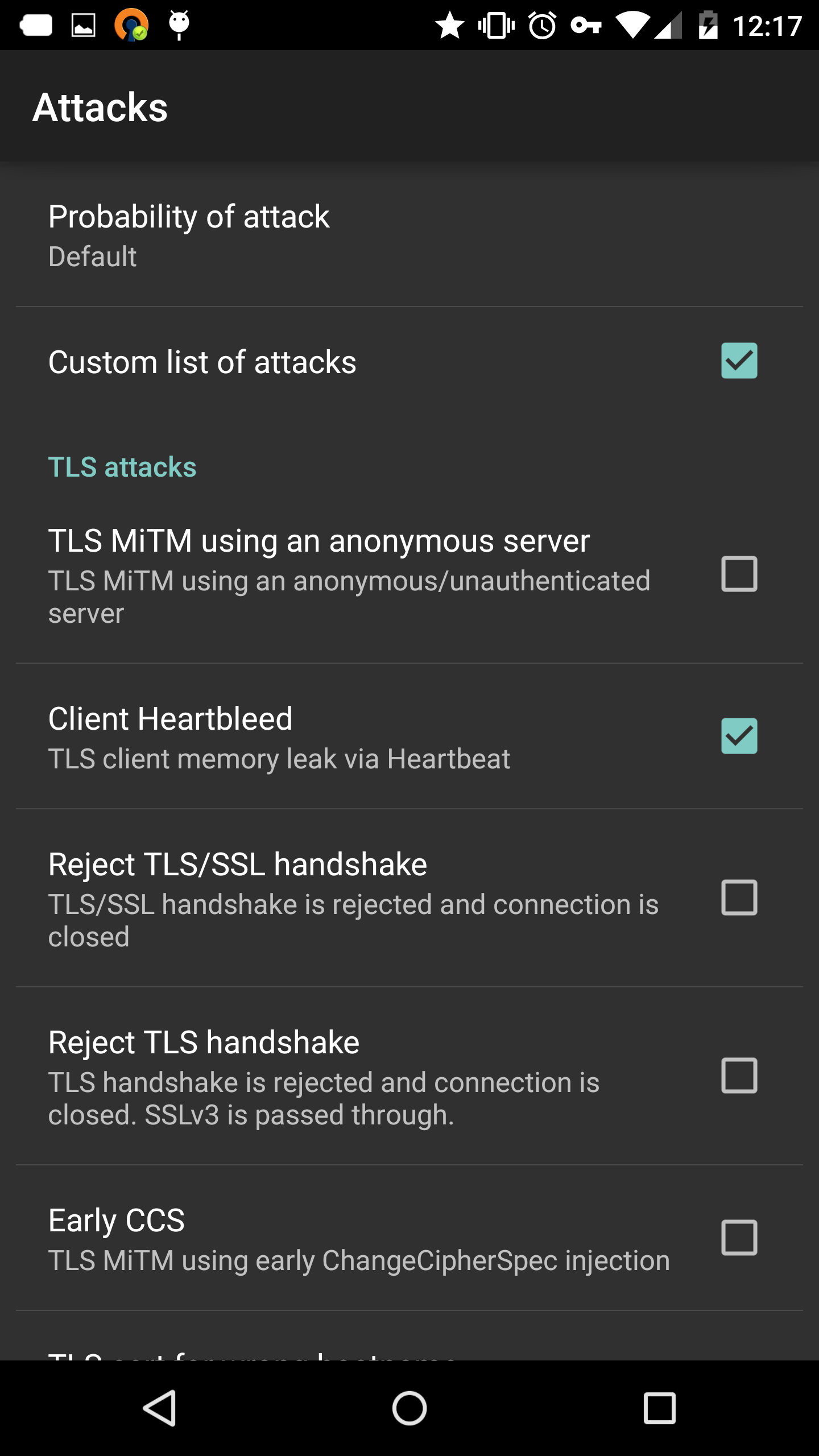

nogotofail consists of an Android app and an mitmproxy that runs on Linux. I followed the instructions to run the proxy on a Google Compute Instance, and connected my phone to the cloud server with OpenVPN (a free VPN app available in Google Play). By changing the attack vectors in the Android application (or the mitm.conf file on the server), you can see if your application fails any of the security issues. nogotofail can test your connections for:

- Evidence of connections on HTTP (rationale is HTTPS is more secure for all)

- Authorization headers in HTTP (sending credentials in headers that are not encrypted)

- Image replacement

- Attempt to strip HTTPS and force connection on HTTP

- Weak and insecure TLS ciphers

- Potential Heartbleed, POODLE and gotofail SSL vulnerabilities

The nogotofail proxy does not attack every connection; only a predefined percentage of connections are attacked. When a nogotofail attack on your application succeeds, details of the issue are written to the nogotofail MITM log (/var/log/nogotofail/mitm.log), so that you can pinpoint the issue in your app. The Android app will give you similar warnings on your device (through phone vibration and notification). If your application properly blocks the intrusion, there is no notification, but your application may not behave as expected (and it might crash!).

The nogotofail logs have the format:

date Time [<info or threat level>] [<phone IP><=><remote IP> <log or attack attempted>] (<phone> <appid> <version>) <information about the connection>

Looking at a few examples:

A:

2015-02-27 17:36:48,541 [INFO] [10.8.0.2:55400<=>54.192.7.72:443 clientheartbleed]

(client=google/shamu/shamu:5.0.1/LRX22C/1602158:

user/release-keys application="com.amazon.mShop.android.shopping"

version="502030") Selected for connection

2015-02-27 17:36:48,610 [INFO] [10.8.0.2:55400<=>54.192.7.72:443 clientheartbleed]

(client=google/shamu/shamu:5.0.1/LRX22C/1602158:

user/release-keys application="com.amazon.mShop.android.shopping"

version="502030") Connection closed

B:

2015-02-26 18:53:02,393 [ERROR] [10.8.0.2:40915<=>199.59.150.39:443 weaktlsversiondetection]

(client=samsung/t0lteatt/t0lteatt:4.1.2/JZO54K/I317UCALL1:user/release-keys

application="<app-name>" version="28")

Client enabled SSLv3 protocol without TLS_FALLBACK_SCSV

C:

2015-02-26 18:50:54,119 [CRITICAL] [10.8.0.2:41266<=>23.201.25.35:443 earlyccs]

(client=samsung/t0lteatt/t0lteatt:4.1.2/JZO54K/I317UCALL1:user/release-keys

application="<app-name>" version="28") Client is vulnerable to Early CCS attack!

D:

2015-02-27 20:01:50,864 [CRITICAL] [10.8.0.3:55685<=>216.186.48.22:80 httpauthdetection]

(client=google/shamu/shamu:5.0.1/LRX22C/1602158:user/release-keys application="<app-name>"

version="7") Authorization header in HTTP request

GET <domain>/PAPIService/REST/public/v1/<lots of params>/basicdata

2015-02-27 20:01:50,864 [ERROR] [10.8.0.3:55685<=>216.186.48.22:80 httpdetection]

(client=google/shamu/shamu:5.0.1/LRX22C/1602158:user/release-keys application="<app-name>"

version="7") HTTP request GET <domain>/PAPIService/REST/public/v1/<lots of params>/basicdata

E:

2015-02-27 22:03:13,686 [ERROR] [10.8.0.2:45094<=>72.21.206.6:443 insecurecipherdetection]

(client=google/shamu/shamu:5.0.1/LRX22C/1602158:user/release-keys application="<app-name>"

version="502012") Client enabled anonymous TLS/SSL cipher suites

TLS_DH_anon_WITH_AES_128_CBC_SHA, TLS_DH_anon_WITH_AES_256_CBC_SHA,

TLS_DH_anon_WITH_3DES_EDE_CBC_SHA

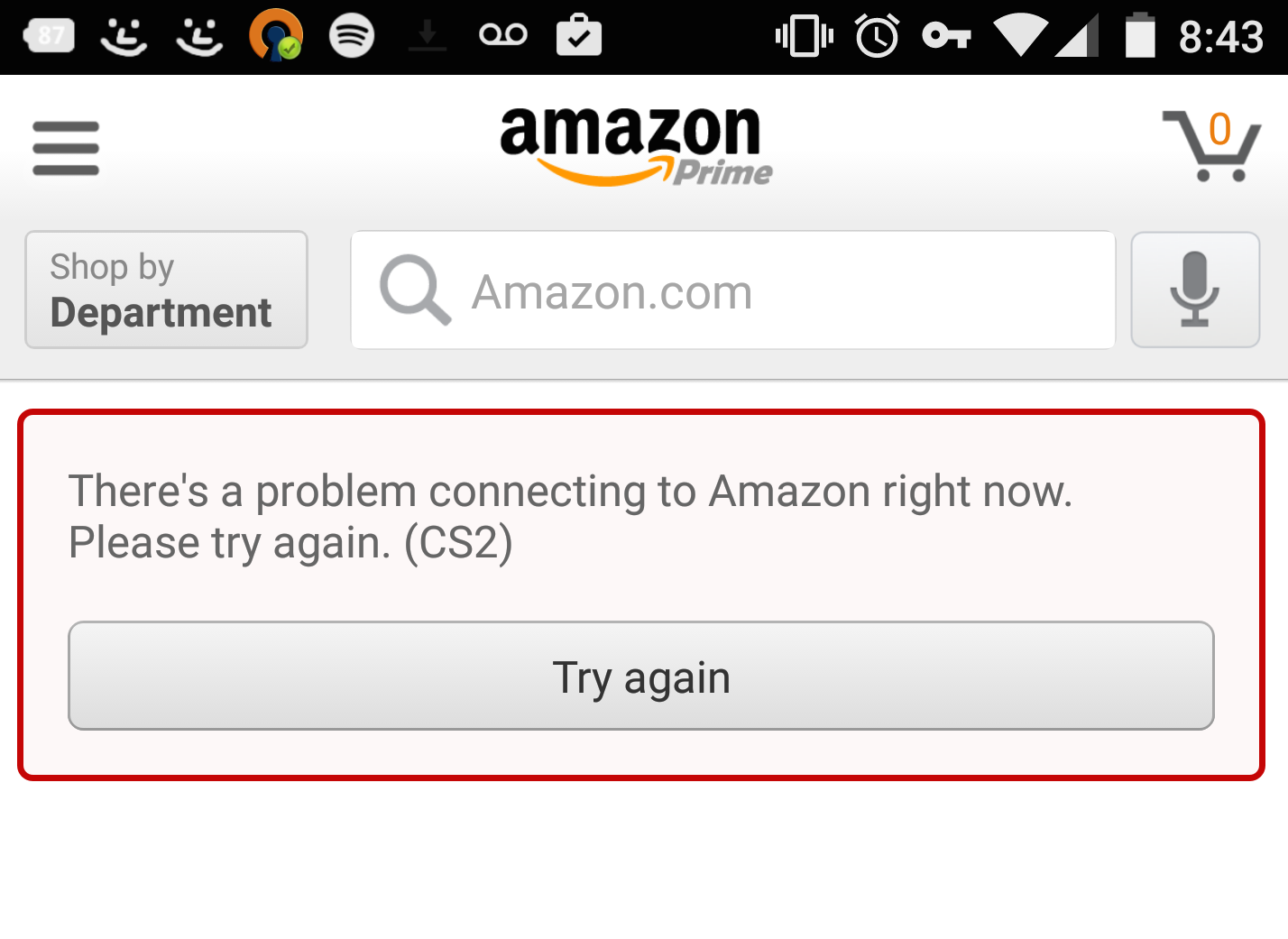

Example A shows that when we attempt a Heartbleed attack on the Amazon app, Amazon closes the connection. The application also shows an error, indicating that there is a connection issue (the smiley face notifications are nogotofail alerts):

In Example B, the application enabled SSLv3 without TLS_FALLBACK_SCSV, so a connection can be downgraded to SSLv3 even when both sides support something newer. This potentially exposes your app to the POODLE attack. Example C shows a client that is vulnerable to the Early ChangeCipherSpec (CCS) attack. Example D shows an application that connects via HTTP and shares authorization headers over HTTP, which is obviously not a confidential way to share these credentials. Example E shows an app that still enabled anonymous cipher suites.
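Entries like examples A through E can be triaged automatically once they are split into fields. The following sketch parses one entry with a regular expression that follows the log format described earlier. The `MitmLogEntry` class and its field names are hypothetical, the entry is assumed to have been joined onto a single line, and treating ERROR and CRITICAL as findings is an inference from the examples rather than documented nogotofail behavior.

```java
import java.util.Optional;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class MitmLogEntry {
    // date time [level] [phone<=>remote handler] (client info) message
    private static final Pattern ENTRY = Pattern.compile(
            "(\\S+ \\S+) \\[(\\w+)\\] \\[([^<\\s]+)<=>(\\S+) (\\w+)\\]\\s+\\((.*?)\\)\\s*(.*)");
    private static final Pattern APPLICATION = Pattern.compile("application=\"([^\"]*)\"");

    final String timestamp, level, phone, remote, handler, application, message;

    private MitmLogEntry(Matcher m) {
        timestamp = m.group(1);
        level = m.group(2);
        phone = m.group(3);
        remote = m.group(4);
        handler = m.group(5);
        Matcher app = APPLICATION.matcher(m.group(6));
        application = app.find() ? app.group(1) : "";
        message = m.group(7);
    }

    /** Parses one log entry; returns empty if the text does not follow the format. */
    static Optional<MitmLogEntry> parse(String entry) {
        Matcher m = ENTRY.matcher(entry.trim());
        return m.matches() ? Optional.of(new MitmLogEntry(m)) : Optional.empty();
    }

    /** Assumption: INFO entries are bookkeeping, ERROR and CRITICAL are real findings. */
    boolean isFinding() {
        return level.equals("ERROR") || level.equals("CRITICAL");
    }

    public static void main(String[] args) {
        String line = "2015-02-26 18:50:54,119 [CRITICAL] [10.8.0.2:41266<=>23.201.25.35:443 earlyccs] "
                + "(client=samsung/t0lteatt/t0lteatt:4.1.2/JZO54K/I317UCALL1:user/release-keys "
                + "application=\"<app-name>\" version=\"28\") Client is vulnerable to Early CCS attack!";
        parse(line).filter(MitmLogEntry::isFinding)
                .ifPresent(e -> System.out.println(e.handler + ": " + e.message));
    }
}
```

Filtering on `isFinding()` and grouping by `application` and `handler` gives a quick list of which apps failed which attack.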

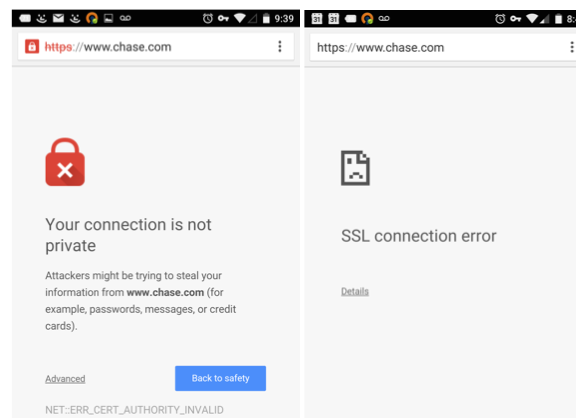

When you try to inject MITM attacks into some websites, Chrome also fails gracefully:

Other applications would just crash, telling you that the application has closed unexpectedly. From a performance perspective, a graceful fail is the best way to go.

Crypto algorithms

Keeping your (and your customers’) data secure in Android is not a trivial matter. We’ve covered the high level problems with storing passwords and data on a device in the clear. Even apps that attempt encryption face issues. A research paper published in 2013 found that 88% of nearly 12,000 apps made at least one implementation mistake, thereby weakening the security of the data being stored. Nearly 50% of all apps studied use the default Bouncy Castle values, which these researchers find to not be strong enough. The paper linked above lists six rules for secure cryptography. A follow-up blog post lists common ways to fail, and the correct ways to implement the six rules for secure data.

Ensuring proper cryptographic algorithms for your app is not something to do at the last minute or without forethought. Strong encryption will ensure that your data stays encrypted and secure from malfeasance.
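The paper’s six rules are not reproduced here, but one commonly cited mistake of this kind is relying on a provider’s default cipher settings. On the standard JDK provider, asking for plain "AES" resolves to ECB mode, in which identical plaintext blocks produce identical ciphertext blocks. The sketch below makes that visible and contrasts it with an explicitly requested AES/GCM transformation. The `CipherModeDemo` class is a hypothetical name, and the GCM helper discards its IV, which real code would need to store alongside the ciphertext.

```java
import java.security.GeneralSecurityException;
import java.security.SecureRandom;
import java.util.Arrays;
import javax.crypto.Cipher;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;
import javax.crypto.spec.GCMParameterSpec;

final class CipherModeDemo {
    private CipherModeDemo() {}

    static SecretKey newKey() {
        try {
            KeyGenerator generator = KeyGenerator.getInstance("AES");
            generator.init(128);
            return generator.generateKey();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Uses whatever mode and padding the provider picks for plain "AES". */
    static byte[] encryptWithDefaults(SecretKey key, byte[] plaintext) {
        try {
            Cipher cipher = Cipher.getInstance("AES");
            cipher.init(Cipher.ENCRYPT_MODE, key);
            return cipher.doFinal(plaintext);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Names the mode explicitly and uses a fresh random IV (dropped here for brevity). */
    static byte[] encryptWithGcm(SecretKey key, byte[] plaintext) {
        try {
            byte[] iv = new byte[12];
            new SecureRandom().nextBytes(iv);
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            cipher.init(Cipher.ENCRYPT_MODE, key, new GCMParameterSpec(128, iv));
            return cipher.doFinal(plaintext);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** True if the first two 16-byte ciphertext blocks are identical. */
    static boolean firstTwoBlocksEqual(byte[] ciphertext) {
        return Arrays.equals(Arrays.copyOfRange(ciphertext, 0, 16),
                Arrays.copyOfRange(ciphertext, 16, 32));
    }

    public static void main(String[] args) {
        SecretKey key = newKey();
        byte[] twoIdenticalBlocks = new byte[32]; // 32 zero bytes = two equal AES blocks
        System.out.println("default AES repeats blocks: "
                + firstTwoBlocksEqual(encryptWithDefaults(key, twoIdenticalBlocks)));
        System.out.println("AES/GCM repeats blocks: "
                + firstTwoBlocksEqual(encryptWithGcm(key, twoIdenticalBlocks)));
    }
}
```

Repeated ciphertext blocks leak structure about the data even though the key stays secret, which is why spelling out the full transformation string is safer than trusting the defaults.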

Security and SDKs/libraries

Getting information about how your customers are using your app (typically through an analytics SDK) helps you discover crashes, pain points and usability patterns so that you can improve your app. In addition to improving the performance of your app, these SDKs can also tell you about the phone’s signal strength, battery level, location (and more). Ensure that the permissions and the frequency of reporting of the SDKs you use are acceptable for your application.

When you add an analytics SDK or other third party libraries into your mobile application, it is your responsibility to ensure that the SDKs are doing what they say they are doing. Many of the reported Android malware attacks have been a result of infected SDKs/libraries added to legitimate applications. These added libraries/SDKs are essentially subcontractors on your project. They do provide a required function, but you (or your company) ultimately bear responsibility for how every facet of your application behaves (see Trust, but verify for an example of an SDK leaking customer data).

Conclusion

When your customers download your application, there is a mutual trust that is inherent. Your side of that trust is to protect the data of your customers. While it is impossible to ensure that your application is 100% secure, the steps outlined above are a good first primer into discovering any flaws in your Android application.

My hope is that you will use these exercises as an introduction into Android security, and further examine your app to make sure you are protecting your data in a secure way. If you are interested in making your app run faster, and crash less, look at my book, High Performance Android Apps.