Chapter 1. Utilizing Cache to Offload Scale to the Frontend

Since 2008 I have run, among other things, a site that handles around 500 million page views per month, hundreds of transactions per second, and is on the Alexa Top 50 Sites for the US. I’ve learned how to scale for that level of traffic without incurring a huge infrastructure and operating cost while still maintaining world-class availability. I do this with a handful of virtual machines and a small staff that also handles new features.

When we talk about scalability, we are often talking about capacity planning and being able to serve an increasing amount of traffic. We look at things like CPU cycles, thread counts, and HTTP requests. Those are all important data points to measure, monitor, and plan around, and plenty of books and articles cover them. But there is an aspect of scalability that often is not talked about at all: offloading your scaling to the frontend. In this chapter we look at what cache is, how to set cache, and the different types of cache.

What Is Cache?

Cache is a mechanism to store data as responses to future requests to prevent the need to look up and retrieve that data again. When talking about web cache, it is literally the body of a given HTTP response that is indexed and retrieved using a cache key, which is the HTTP method and URI of the request.
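To make the idea of a cache key concrete, here is a minimal sketch of a response cache indexed by HTTP method and URI. The names `ResponseCache` and `fake_origin` are hypothetical and exist only for illustration; a real web cache also honors freshness rules, which this sketch leaves out.

```python
from typing import Callable, Dict, Tuple

# The cache key described above: (HTTP method, URI).
CacheKey = Tuple[str, str]


class ResponseCache:
    """Stores response bodies keyed by (method, URI). Illustration only."""

    def __init__(self) -> None:
        self._store: Dict[CacheKey, bytes] = {}
        self.origin_hits = 0  # how many requests reached the origin

    def fetch(self, method: str, uri: str,
              origin: Callable[[str, str], bytes]) -> bytes:
        key = (method.upper(), uri)
        if key in self._store:
            # Cache hit: no lookup at the origin is needed.
            return self._store[key]
        # Cache miss: go to the origin once and remember the body.
        self.origin_hits += 1
        body = origin(method, uri)
        self._store[key] = body
        return body


def fake_origin(method: str, uri: str) -> bytes:
    """Hypothetical stand-in for the real web server."""
    return f"body of {uri}".encode()
```

Fetching the same URI twice through `ResponseCache.fetch` reaches `fake_origin` only once; the second call is answered from the stored body.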

Moving your scaling to the frontend allows you to serve content faster, incur far fewer origin hits (thus needing less backend infrastructure to maintain), and even have a higher level of availability. The most important concept involved in scaling at the frontend is intentional and intelligent use of cache.

Setting Cache

Leveraging cache is as easy as specifying the appropriate headers in the HTTP response. Let’s take a look at what that means.

When you open up your browser and type in the address of a website, the browser makes an HTTP request for the resource to the remote host. This request looks something like this:

    GET /assets/app.js HTTP/1.1
    Host: []
    Connection: keep-alive
    Cache-Control: max-age=0
    User-Agent: Mozilla/5.0 (Macintosh; Intel Mac OS X 10_10_4) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/51.0.2704.84 Safari/537.36
    Accept: */*
    Accept-Encoding: gzip, deflate, sdch
    Accept-Language: en-US,en;q=0.8
    If-Modified-Since: Thu, 09 Jun 2016 02:49:35 GMT

The first line of the request specifies the HTTP method (in this case GET), the URI of the requested resource, and the protocol. The remainder of the lines specify the HTTP request headers that outline all kinds of useful information about the client making the request, like what the browser/OS combination is, what the language preference is, etc.

The web server in turn will issue an HTTP response, and in this scenario, that is what is really interesting to us. The HTTP response will look something like this:

    HTTP/1.1 200 OK
    Date: Sat, 11 Jun 2016 02:08:40 GMT
    Server: Apache
    Cache-Control: max-age=10800, public, must-revalidate
    Connection: Keep-Alive
    Keep-Alive: timeout=15, max=98
    ETag: "c7c-2268d-534cf78e98dc0"

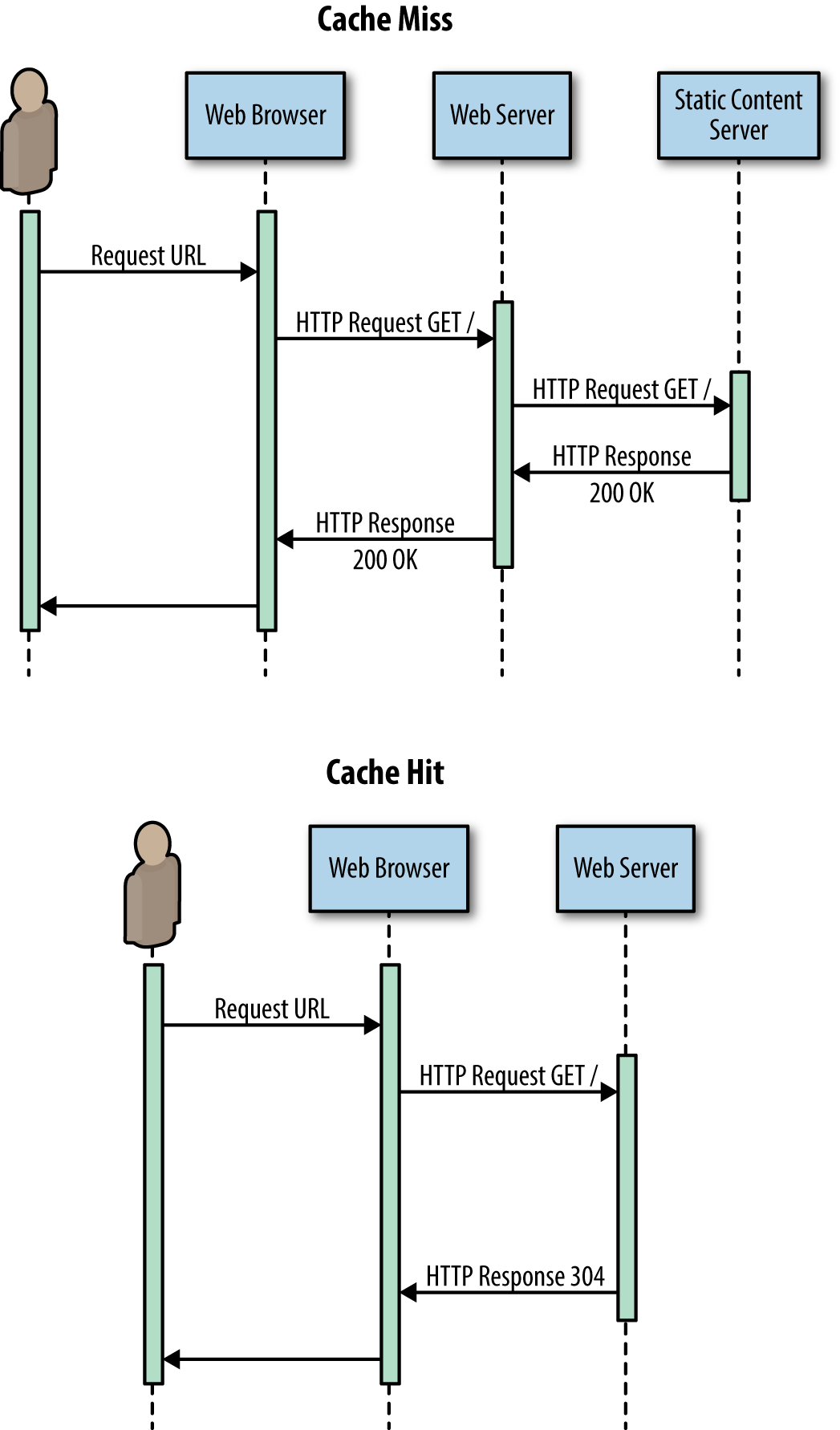

The first line of the HTTP response specifies the protocol and the status code. Generally you will see either a 200 OK for cache misses, a 304 Not Modified with an empty body for cache hits, or a 200 (from cache) for content served from browser cache.

The remainder of the lines are the HTTP response headers that detail specific data for that response.
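As a rough illustration of setting cache through response headers, the following sketch is a tiny WSGI application that attaches a Cache-Control header to a JavaScript asset. The `app` function, the `ASSET_BODY` constant, and the asset contents are assumptions made for this example; the `max-age=10800` value simply mirrors the response shown above.

```python
from typing import Callable, Dict, Iterable, List, Tuple

# Hypothetical asset contents, for illustration only.
ASSET_BODY = b"console.log('hello');"


def app(environ: Dict[str, str],
        start_response: Callable[[str, List[Tuple[str, str]]], None]
        ) -> Iterable[bytes]:
    """Minimal WSGI app that serves one asset with cache headers."""
    headers = [
        ("Content-Type", "text/javascript"),
        ("Content-Length", str(len(ASSET_BODY))),
        # Any cache may store this response and reuse it for three hours.
        ("Cache-Control", "public, max-age=10800"),
    ]
    start_response("200 OK", headers)
    return [ASSET_BODY]
```

To try it locally, the standard library's `wsgiref.simple_server.make_server("", 8000, app)` can serve the application; a framework or web server configuration would normally set these headers in production.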

Cache-Control

The most important header for caching is the Cache-Control header. It accepts a comma-delimited string that outlines the specific rules, called directives, for caching a particular piece of content that must be honored by all caching layers in the transaction. The following are some of the supported cache response directives that are outlined in the HTTP 1.1 specification:

- public

This indicates that the response is safe to cache, by any cache, and is shareable between requests. I would set most shared CSS, JavaScript libraries, or images to public.

- private

This indicates that the response is only safe to cache at the client, and not at a proxy, and should not be part of a shared cache. I would set personalized content to private, like an API call that returns a user’s shopping cart.

- no-cache

Despite its name, this does not prohibit caching. It says that a cache may store the response but must revalidate it with the origin server before every reuse.

- no-store

This is for content that legally cannot be stored on any other machine, like a DRM license or a user’s personal or financial information.

- no-transform

Some CDNs have features that will transform images at the edge for performance gains, but setting the no-transform directive will tell the cache layer to not alter or transform the response in any way.

- must-revalidate

This informs the cache layer that once the content has reached its expiration date, it must revalidate the content with the origin before serving it again rather than serve a stale copy.

- proxy-revalidate

This directive is the same as must-revalidate, except it applies only to proxy caches; browser cache can ignore this.

- max-age

This specifies, in seconds, how long the response is considered fresh, counted from the time the origin generated it.

- s-maxage

This directive is for shared caches and will override the max-age directive.
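The directives above can be read mechanically. The sketch below parses a Cache-Control value and answers two questions a cache layer has to ask: may I store this response, and for how long is it fresh? The function names are hypothetical, and the parser is deliberately simplified (it does not handle quoted arguments that contain commas).

```python
from typing import Dict, Optional


def parse_cache_control(value: str) -> Dict[str, Optional[str]]:
    """Split a Cache-Control value into {directive: argument or None}."""
    directives: Dict[str, Optional[str]] = {}
    for part in value.split(","):
        part = part.strip()
        if not part:
            continue
        name, sep, arg = part.partition("=")
        directives[name.strip().lower()] = arg.strip().strip('"') if sep else None
    return directives


def may_store(directives: Dict[str, Optional[str]], shared: bool) -> bool:
    """True if a cache (shared = proxy/CDN, not shared = browser) may store."""
    if "no-store" in directives:
        return False
    if shared and "private" in directives:
        return False
    return True


def freshness_lifetime(directives: Dict[str, Optional[str]],
                       shared: bool) -> Optional[int]:
    """Seconds the response stays fresh, or None if no lifetime is given."""
    # s-maxage overrides max-age, but only for shared caches.
    if shared and directives.get("s-maxage") is not None:
        return int(directives["s-maxage"])
    if directives.get("max-age") is not None:
        return int(directives["max-age"])
    return None
```

For the response shown earlier, `parse_cache_control("max-age=10800, public, must-revalidate")` yields a lifetime of 10800 seconds for both browser and shared caches.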

ETag

The ETag header, short for entity tag, is a unique identifier that the server assigns to a resource. It is an opaque identifier, meaning that it is designed to leak no information about what it represents.

When the server responds with an ETag, that ETag is saved by the client and used for conditional GET requests using the If-None-Match HTTP request header. If the ETag matches, then the server responds with a 304 Not Modified status code instead of a 200 OK to let the client know that the cached version of the resource is OK to use.
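The conditional GET exchange can be sketched as a single server-side function. Deriving the ETag from a hash of the body is an assumption made for this example (servers are free to generate the tag however they like), and `make_etag` and `respond` are hypothetical names. Weak validators (the `W/` prefix) are not handled here.

```python
import hashlib
from typing import Dict, Tuple


def make_etag(body: bytes) -> str:
    """Assumed scheme: a quoted, truncated SHA-256 of the body."""
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def respond(body: bytes,
            request_headers: Dict[str, str]) -> Tuple[int, Dict[str, str], bytes]:
    """Return (status, response headers, body) for a GET of `body`."""
    etag = make_etag(body)
    headers = {"ETag": etag}
    # If-None-Match may carry several comma-separated tags, or "*".
    candidates = [tag.strip()
                  for tag in request_headers.get("If-None-Match", "").split(",")]
    if etag in candidates or "*" in candidates:
        # The client's cached copy is still current: send no body.
        return 304, headers, b""
    return 200, headers, body
```

A first request with no If-None-Match header gets a 200 and an ETag; replaying that ETag in If-None-Match gets a 304 with an empty body until the content changes.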

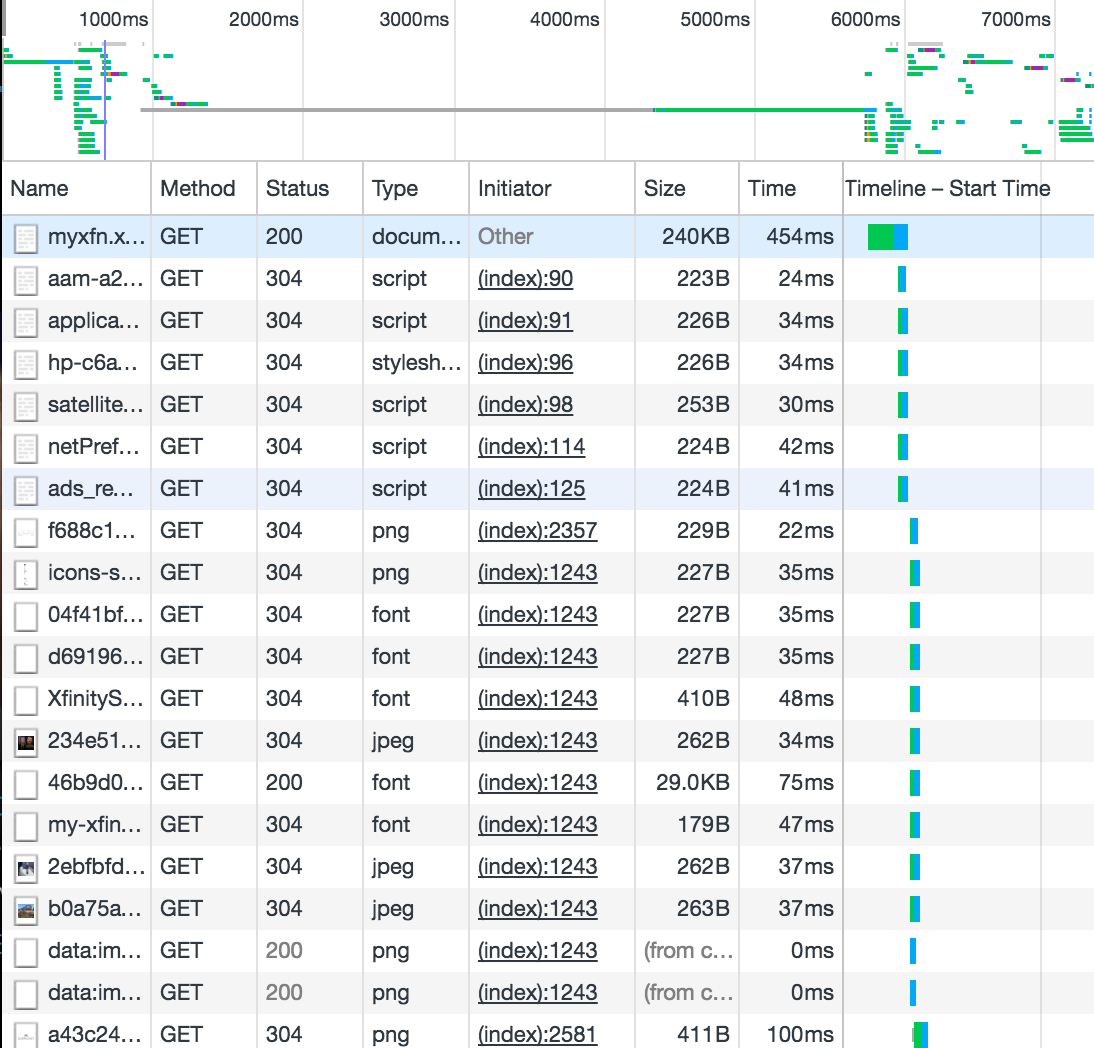

See the waterfall chart in Figure 1-1 and note the Status column. This shows the HTTP response status code.

Figure 1-1. Waterfall chart showing 304s indicating cache results

Vary

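The Vary header tells caches which request headers, in addition to the method and URI, the server took into consideration when constructing the response, so that a cached copy is reused only for requests whose values for those headers match. This is useful when specifying cache rules for content that might have the same URI but differs based on a request header such as User-Agent or Accept-Language.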
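One way to picture Vary is that a cache folds the values of the listed request headers into its cache key. The sketch below assumes exactly that design; `cache_key` is a hypothetical helper, and real caches may normalize header values more carefully.

```python
from typing import Dict, Optional, Tuple

Key = Tuple[str, str, Tuple[Tuple[str, str], ...]]


def cache_key(method: str, uri: str,
              request_headers: Dict[str, str], vary: str) -> Optional[Key]:
    """Build a cache key from method, URI, and the headers named in Vary.

    Returns None for "Vary: *", which means the response cannot be
    matched to any later request without going back to the origin.
    """
    if vary.strip() == "*":
        return None
    names = sorted(name.strip().lower()
                   for name in vary.split(",") if name.strip())
    # Header names are case-insensitive, so compare them in lowercase.
    lowered = {name.lower(): value for name, value in request_headers.items()}
    varied = tuple((name, lowered.get(name, "")) for name in names)
    return (method.upper(), uri, varied)
```

With `Vary: Accept-Language`, a request sending `en-US` and one sending `fr` for the same URI produce different keys, so each language gets its own cached copy, while a difference in User-Agent alone does not split the cache.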

Legacy response headers

The Pragma and Expires headers are two that were part of the HTTP 1.0 standard. But Pragma has since been replaced in HTTP 1.1 by Cache-Control. Even still, conventional wisdom says that it’s important to continue to include them for backward compatibility with HTTP 1.0 caches. What I have found is that applications built when HTTP 1.0 was the standard—legacy middleware tiers, APIs, and even proxies—look for these headers and if they are not present do not know how to handle caching.

Note

I personally ran into this with one of my own middleware tiers that I had inherited at some point in the past. We were building new components and found during load testing that nothing in the new section we were making was being cached. It took us a while to realize that the internal logic of the code was looking for the Expires header.

Pragma was designed to carry cache directives, much like Cache-Control does now, but it has since been deprecated and in practice is used only to specify no-cache.

Expires specifies a date/time value that indicates the freshness lifetime of a resource. After that date the resource is considered stale. In HTTP 1.1 the max-age and s-maxage directives replaced the Expires header. See Figure 1-2 to compare a cache miss versus a cache hit.
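The relationship between Expires and max-age can be sketched as a small freshness calculation: use max-age when it is present, otherwise fall back to the gap between Expires and Date. The function name `freshness_seconds` is hypothetical, and treating an unparseable Expires value as already expired is a choice made here to keep the sketch safe.

```python
import re
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Dict, Optional


def freshness_seconds(headers: Dict[str, str],
                      now: Optional[datetime] = None) -> Optional[int]:
    """Seconds a response is fresh, or None if neither header is present."""
    # max-age takes precedence over the legacy Expires header.
    match = re.search(r"(?:^|,)\s*max-age=(\d+)",
                      headers.get("Cache-Control", ""), re.IGNORECASE)
    if match:
        return int(match.group(1))

    expires = headers.get("Expires")
    if expires is None:
        return None
    try:
        expires_at = parsedate_to_datetime(expires)
        date = headers.get("Date")
        base = parsedate_to_datetime(date) if date else (
            now or datetime.now(timezone.utc))
        return max(0, int((expires_at - base).total_seconds()))
    except (TypeError, ValueError):
        # Invalid dates such as "0" are treated as already expired.
        return 0
```

When both headers are present, the max-age value wins; a response carrying only Date and Expires is fresh for the difference between the two.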

Figure 1-2. Sequence diagram showing the inherent efficiencies of a cached response versus a cache miss

Tiers of Cache

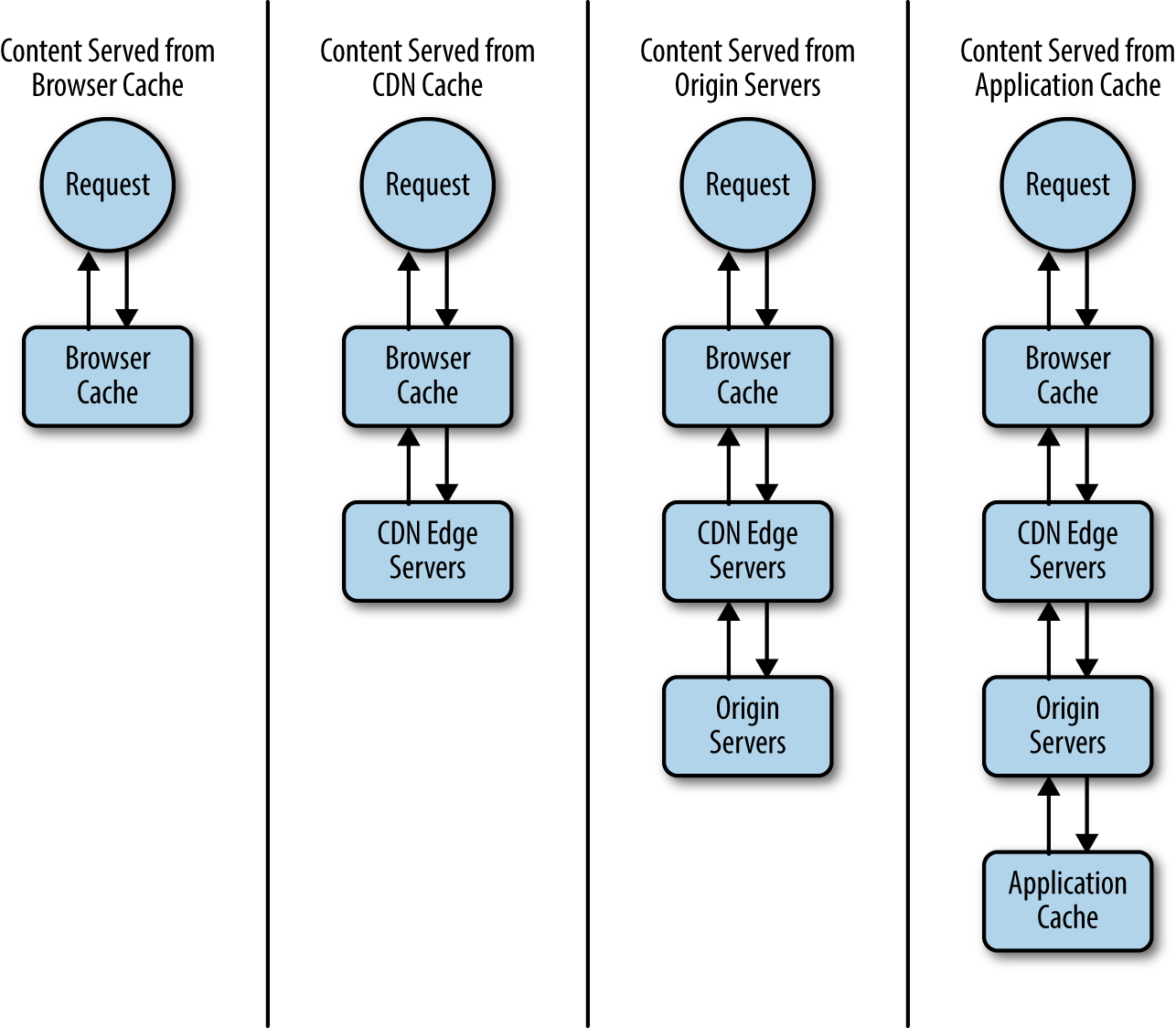

As web developers, we can leverage three main types of cache defined by where along the flow the cache is set:

- Browser cache

- Proxy cache

- Application cache

See Figure 1-3 for a diagram of each kind of cache, including a cache miss.

Figure 1-3. A request traversing different tiers of cache

Browser cache

Browser cache is the fastest cache to retrieve from and the easiest cache to use. But it is also the one that we have the least amount of control over. Specifically, we can’t invalidate browser cache on demand; our users have to clear their own cache. Also, certain browsers may choose to ignore rules that specify not to cache content, in favor of their own strategies for offline browsing.

With browser cache, the web browser takes the response from the web server, reads the cache control rules, and stores the response on the user’s computer. Then, for subsequent requests, the browser does not need to go to the web server; it simply pulls the content from the local copy.

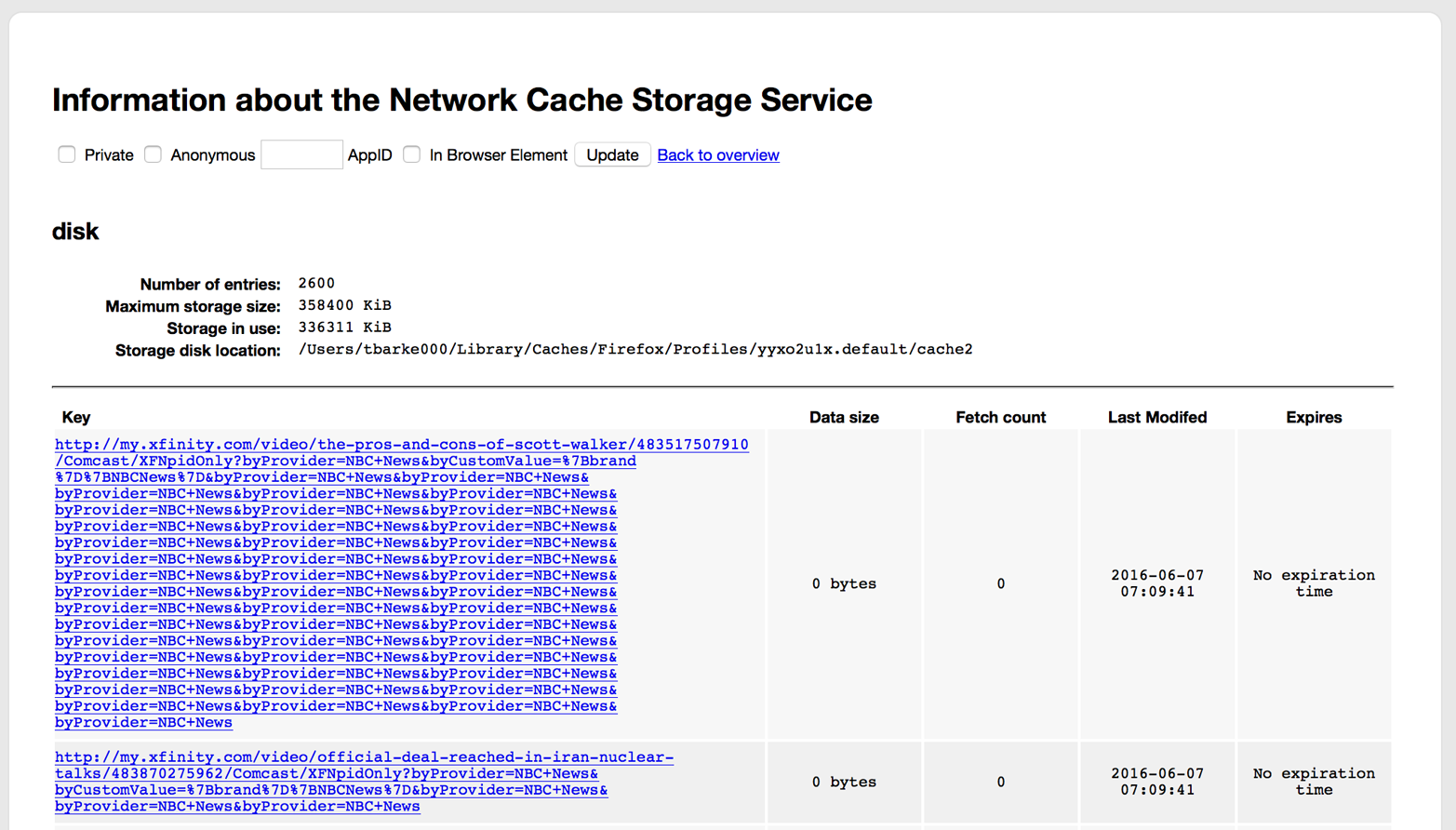

As an end user, you can see your browser’s cache and cache settings by typing about:cache in the location bar. Note this works for most browsers that are not Internet Explorer.

To leverage browser cache, all we need to do is properly set our cache control rules for the content that we want cached.

See Figure 1-4 for how Firefox shows its browser cache stored on disk in its about:cache screen.

Figure 1-4. Disk cache in Firefox’s about:cache screen

Proxy cache

Proxy cache is leveraging an intermediate tier to serve as a cache layer. Requests for content will hit this cache layer and be served cached content rather than ever getting to your origin servers.

In Chapter 2 we will discuss combining this concept with a CDN partner to serve edge cache.

Application cache

Application cache is where you implement a cache layer, like memcached, either inside your application or as a service available to it. It stores the results of API or database calls so that the data is available without having to make the same calls over and over again. This is generally implemented on the server side and makes your web server respond to requests faster because it doesn’t have to wait for an upstream service to respond with data.
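The pattern behind an application cache can be sketched with an in-process dictionary and a time-to-live. This is only a stand-in for a real cache layer such as memcached, not its API: `TTLCache`, `get_or_load`, and the example loader are hypothetical names invented for this illustration.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """In-memory stand-in for an application cache layer (illustration only)."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested
        self._items: Dict[str, Tuple[float, Any]] = {}

    def get_or_load(self, key: str, loader: Callable[[], Any]) -> Any:
        """Return the cached value, calling `loader` only on a miss or expiry."""
        now = self._clock()
        entry = self._items.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # still fresh: skip the upstream call
        value = loader()  # the expensive API or database call
        self._items[key] = (now + self._ttl, value)
        return value
```

A call such as `cache.get_or_load("user:42:cart", load_cart_from_database)`, where `load_cart_from_database` is whatever expensive call you want to avoid repeating, hits the upstream source once per TTL window and serves every other request from memory.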

See Figure 1-5 for a screenshot of https://memcached.org.

Figure 1-5. Homepage for memcached.org

Summary

Scaling at the backend involves allocating enough physical machines, virtual machines, or other resources to handle large amounts of traffic. This generally means that you have a large infrastructure to monitor and maintain. When a node goes down while it is still in the load balancer’s rotation, end users see intermittent errors, which hurts your site-availability metrics.

But when you leverage scale at the frontend, you need a drastically smaller infrastructure because far fewer hits are making it to your origin.
