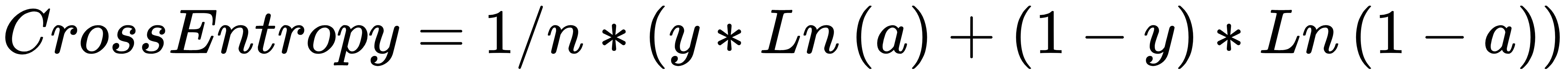

Cross entropy is a cost function designed so that its derivative, which drives each weight update during training, has a particularly convenient form. The function is given as follows:
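For reference, here is a common form of the binary cross-entropy cost for a sigmoid output neuron. The symbols are our own assumption and may differ from the book's notation: $y$ is the expected output, $a = \sigma(z)$ is the actual output, $z$ is the weighted input, and the sum runs over $n$ training examples $x$. The second line is the resulting gradient with respect to a weight $w_j$.

$$
C = -\frac{1}{n} \sum_{x} \left[ y \ln a + (1 - y) \ln (1 - a) \right], \qquad a = \sigma(z), \quad z = \sum_j w_j x_j + b
$$

$$
\frac{\partial C}{\partial w_j} = \frac{1}{n} \sum_{x} x_j \, (a - y)
$$

The sigmoid derivative $\sigma'(z) = a(1 - a)$ cancels out during differentiation. As a result, the gradient is proportional to the error $a - y$.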

Cross entropy lets the network learn faster when the difference between the expected and actual output is larger. In other words, the bigger the error, the faster the network learns. We will build an intuition for this using some simple code.
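The following is a minimal sketch of this idea, assuming a single sigmoid neuron with one input. All function names and the starting values (`w0 = 2.0`, `b0 = 2.0`, `x = 1.0`, `y = 0.0`) are hypothetical choices for illustration, not the book's code. The neuron starts out badly wrong, outputting about 0.98 when it should output 0. The script compares the initial gradient, and the output after 30 gradient-descent steps, under a quadratic cost and under cross-entropy.

```python
import math


def sigmoid(z):
    """Logistic activation: squashes z into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def cross_entropy(a, y):
    """Binary cross-entropy cost for one example (expected y, actual a)."""
    return -(y * math.log(a) + (1 - y) * math.log(1 - a))


def quadratic_grad(w, b, x, y):
    """Gradients (dC/dw, dC/db) of the quadratic cost C = (a - y)**2 / 2."""
    a = sigmoid(w * x + b)
    delta = (a - y) * a * (1 - a)  # sigmoid'(z) = a * (1 - a) shrinks the step
    return delta * x, delta


def cross_entropy_grad(w, b, x, y):
    """Gradients (dC/dw, dC/db) of the cross-entropy cost; sigmoid'(z) cancels."""
    a = sigmoid(w * x + b)
    delta = a - y  # proportional to the error itself
    return delta * x, delta


def train(grad_fn, w, b, x, y, lr, epochs):
    """Run plain gradient descent and return the neuron's final output."""
    for _ in range(epochs):
        dw, db = grad_fn(w, b, x, y)
        w -= lr * dw
        b -= lr * db
    return sigmoid(w * x + b)


# Hypothetical starting point: the neuron outputs ~0.98 when it should output 0.
w0, b0, x, y = 2.0, 2.0, 1.0, 0.0
print("initial output:", sigmoid(w0 * x + b0))
print("initial dC/dw, quadratic:    ", quadratic_grad(w0, b0, x, y)[0])
print("initial dC/dw, cross entropy:", cross_entropy_grad(w0, b0, x, y)[0])

for name, grad_fn in [("quadratic", quadratic_grad), ("cross entropy", cross_entropy_grad)]:
    a = train(grad_fn, w0, b0, x, y, lr=0.15, epochs=30)
    print(f"{name:>13}: output {a:.3f}, cross-entropy cost {cross_entropy(a, y):.3f}")
```

With the quadratic cost, every update is multiplied by the sigmoid derivative `a * (1 - a)`. That factor is tiny when the output is saturated near 0 or 1, so learning crawls exactly when the neuron is most wrong. With cross-entropy, the factor cancels, leaving a step proportional to the error `a - y`. After the same 30 steps, the cross-entropy neuron has moved most of the way toward the target, while the quadratic one has barely changed.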

As before, if you do not have Python installed yet, you can use an online alternative for now, such as https://www.jdoodle.com/python-programming-online. We will cover the installation and setup in Chapter 2, Creating a Real-Estate Price Prediction ...