Chapter 6

Watson and the Jeopardy! Challenge∗

How does Watson—IBM’s Jeopardy!-playing computer—work? Why does it need predictive modeling in order to answer questions, and what secret sauce empowers its high performance? How does the iPhone’s Siri compare? Why is human language such a challenge for computers? Is artificial intelligence possible?

January 14, 2011. The big day had come. David Gondek struggled to sit still, battling the butterflies of performance anxiety, even though he was not the one onstage. Instead, the spotlights shone down upon a machine he had helped build at IBM Research for the past four years. Before his eyes, it was launched into a battle of intellect, competing against humans in this country’s most popular televised celebration of human knowledge and cultural literacy, the quiz show Jeopardy!

Celebrity host Alex Trebek read off a clue, under the category “Dialing for Dialects”:

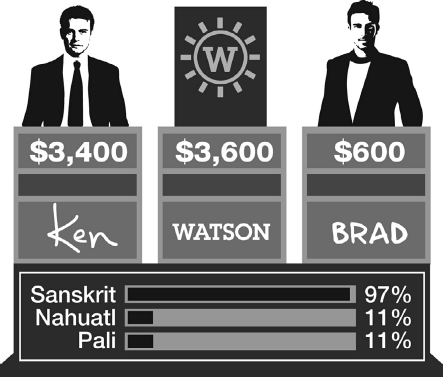

Watson, the electronic progeny of David and his colleagues, was competing against the two all-time champions across the game show’s entire 26-year televised history. These two formidable opponents were of a different ilk, holding certain advantages over the machine, but also certain disadvantages. They were human.

Watson competes against two humans on Jeopardy!

Watson buzzed in ...
