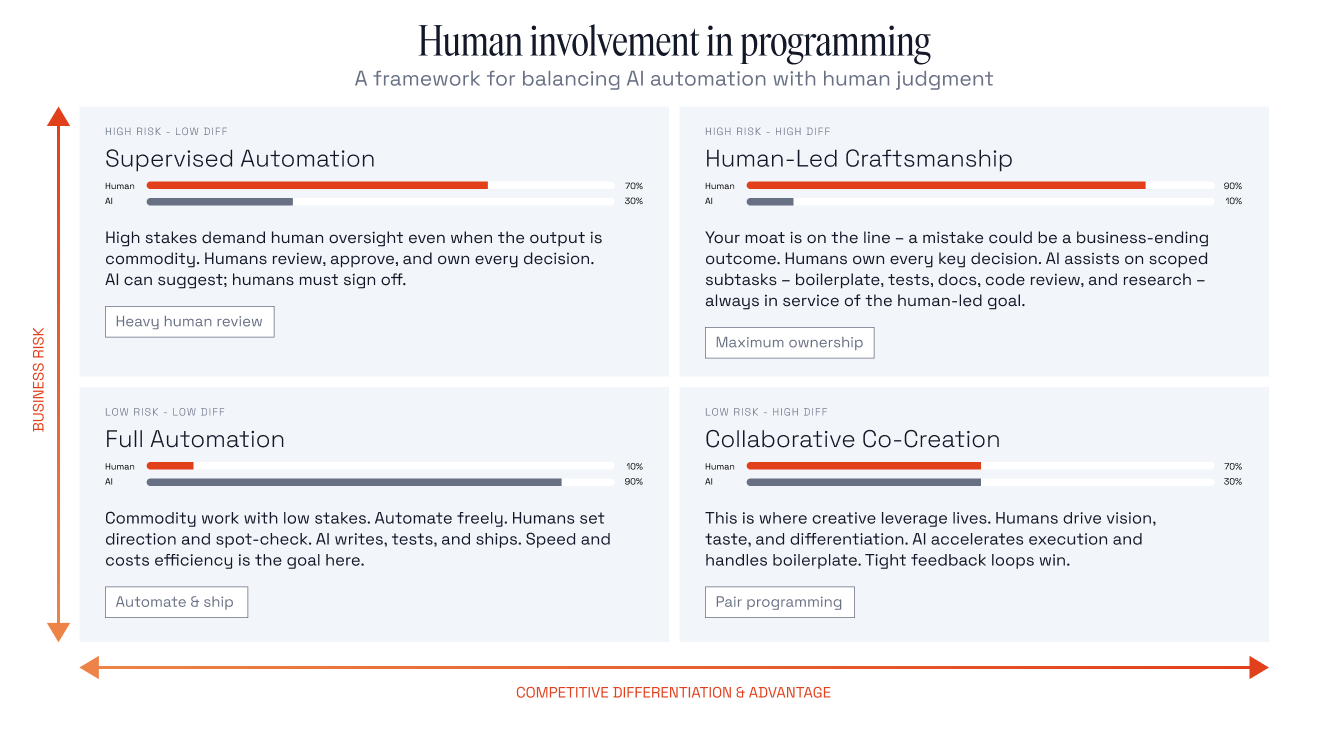

In “Don’t Automate Your Moat,” I argue that engineering organizations should match AI autonomy to two independent dimensions: business risk and competitive differentiation. I used AI Gateway cost controls as a worked example throughout the piece because a single feature touches all four quadrants depending on which piece you’re building.

A piece making that argument should probably be written that way. Otherwise the framework is just rhetoric. So here is what actually happened: The same quadrants, applied to the writing of the post, then the two practices that cut across all of them.

Full Automation: The citation mechanics

My post has eighteen footnotes, all of them needing consistent structure, working URLs, and clean formatting. This is the work the bottom-left quadrant exists for. If a URL is wrong, I fix it in the next pass and nobody outside the editing loop notices.

AI handled the mechanical assembly. I spot-checked.

Collaborative Co-Creation: The AI Gateway example and the build-versus-buy framing

Two things sit in this quadrant.

The AI Gateway example. Using a single feature as a lens across all four quadrants was a product decision for the post. But the choice of which feature, and how to slice it, was recoverable. A weaker example, or one split across three features, would have cost me a draft. AI accelerated execution once I had settled on cost controls. I drove the design choice and interrogated the trade-off.

The build-versus-buy framing. This one was collaborative. Claude proposed the concept and the analogy: that the token-funded generation loop is functionally a procurement decision, not a build decision, even though the code lives in your repo. I saw what the framing could do for the structure of the argument, that it could link cognitive debt to competitive differentiation, survive a skeptical CTO reading it cold, and give the post a through-line that held the whole piece together. From there we worked it together. My phrase “a buy decision wearing a build costume” came out of that back-and-forth, and the structure of the argument got reshaped around the framing until it actually carried. Neither of us would have produced the final version alone. That is what this quadrant is supposed to look like.

In both cases, AI moved fast on execution. The judgment about whether the contribution fit, and what work it had to do in the surrounding argument, stayed with me. Flip the ratio and the post gets worse. Not catastrophically. Just generic in places where specificity was the whole point.

Supervised Automation: The counterargument section

The research is thin. Most engineering work is maintenance and belongs in the automate quadrant regardless. Engineers can develop ownership of AI-generated code through study and iteration.

These are the objections any thoughtful reader would raise. Not because the post is anti-AI (it is not). The argument is that AI autonomy has to be matched with sufficient human understanding, and that argument has to defend itself against the case for letting AI run further with less. AI could draft the shape of those objections.

My job was verification. The bar was whether a thoughtful reader who disagrees with me would find the steelman fair. That meant tracing each concession back to make sure I was not giving away something I should have held, and each objection back to make sure I was representing the strongest version of the case rather than a convenient strawman.

The risk here is subtle. The section is unlikely to be flat-out wrong. The danger is that an unfair steelman quietly undermines the rest of the argument. A reader who notices a weak counterargument starts wondering what else is rigged. AI drafts, human verifies every path before merge.

Human-Led Craftsmanship: The parts I owned outright

This is where most of the actual time went.

The opening. The engineer who could not explain his own algorithm. The colleague paged about a service connected to a database nobody documented. Those examples were mine. The post only works if those scenes feel true, and a generated approximation of them would have read like exactly that. Not a risk worth delegating.

Defining the dimensions. Naming risk and differentiation as the two axes is one thing. Defining them in a way that holds up under pressure is another. The prose that establishes what business risk actually means (blast radius if this fails, from an afternoon to the business itself), and what competitive differentiation actually means (not the brand or the sales team, but the architecture, the algorithms, the institutional judgment that shaped them), is what every quadrant boundary depends on. If those definitions are vague, the quadrants become Rorschach tests. If they are sharp, the quadrants do real work. I wrote and rewrote those passages until a reader could apply them to their own systems without me there to translate.

The framework and the evidence behind it. The two-dimensional framing came out of my own thinking before Claude entered the loop. Once the dimensions existed, iterating with Claude on how to sharpen them was useful. It pushed me on where the dimensions overlapped and where the quadrant labels were doing too much work. But the seed had to be mine. A framework generated from a prompt would have read like one.

The evidence behind the framework worked the same way. I came in with a starter set of papers I already trusted: the METR productivity study, the MIT cognitive debt work, the Anthropic Fellows skill formation paper, the GitClear data on refactoring decline, and the Tilburg study on senior developer maintenance burden. Those were mine. From there, Claude expanded the research base, surfacing the Lancet endoscopy deskilling study, the OX Security and CodeRabbit and Apiiro analyses, and the survey work on LLM code generation in low-resource domains. That expansion was genuinely useful. It made the post broader and more current than what I would have assembled alone in the same time.

But expanding the source list is not the same as checking it. Every source Claude added had to be read against the specific claim it was being asked to support, because a framework is only as strong as the sources that anchor it. Generating a citation is mechanical. Reading a paper carefully enough to know what it proves, and whether the surrounding sentence reflects that, takes real time.

The Knight Capital loss figure was the clearest example. Different reports cite different numbers. The SEC enforcement order documents one figure. Bloomberg and other secondary sources round or reframe it. Claude pulled from whichever source it surfaced first on a given pass, and the number drifted across drafts. Catching that required going back to the primary source and pinning it.

The pattern repeated across other sources. Claude would attribute a claim to the right general area but the wrong specific paper. A finding about senior developer maintenance burden got mapped to a study that examined something adjacent but narrower. A claim about deskilling got pulled from a Lancet study that supports a more limited version of the argument than the way it had been phrased. Every structural source got reverified against what it actually proved. Several were corrected, replaced, or cut. Earlier drafts leaned on a real-world example whose causation was disputed in its sourcing. That example came out, and the Knight Capital section took its place because the SEC enforcement order documents the chain of causation directly.

This work could not be delegated. I had to own the mental model of what each paper actually proved and what it did not, the same way I had to own the mental model of the framework itself. The framework calls this the test of whether the engineer who built it could explain it in an incident review without looking at the code first. The writing equivalent is whether I could defend each citation in front of a skeptical reviewer without re-reading the abstract. The framework is the claim. The evidence is what makes it more than an opinion. Both had to be mine.

That covers the quadrants. Two practices cut across all of them and deserve their own treatment.

Using Claude as a critic

The most valuable thing Claude did on this post was push back. But you have to ask for it the right way.

Generic prompts produce generic critiques. “What do you think of this draft?” gets you a polite reaction with three suggestions. Useless. The prompt that actually works puts Claude in a specific adversarial seat. Mine looked roughly like this:

You are a pro-AI, token-maxing CTO watching your team and your competitors ship faster every quarter. You have a deeper than average understanding of AI. Provide a thorough critique of this article focusing on logic, completeness, and correctness. Be direct. Be brutal. This is not about the author’s feelings. It is about creating the best argument possible.

Three things make that prompt work. The persona is hostile to my thesis. The criteria are concrete: logic, completeness, correctness. And the explicit permission to be brutal lets the model drop the hedging it defaults to.

Working that way surfaced things I would have missed. Claude flagged that an early draft conflated cognitive debt as a risk problem with cognitive debt as a differentiation problem, and that collapsing them weakened both. It pointed out that one of the original real-world failure examples did not actually demonstrate the failure mode I was claiming, because the causation was disputed in the source material. It caught a passage where I was asserting a conclusion the evidence supported only in a narrower form.

Some of the pushback I accepted and rewrote around. Some I rejected, because Claude was applying a generic objection that did not fit the specific argument. (During one critique, Claude told me, “This post is sound advice. It did not need sixteen footnotes to establish it.” Fair point, but a bold claim from the model that couldn’t count to 18.) The point was not to follow every note. The point was to have the notes at all. A solo writer with a deadline does not get a skeptical reviewer on demand. Working this way, I did.

The same prompt structure works for structural critique. Swap the hostile CTO for a senior editor, keep the criteria concrete (where does the flow break, what arrives too late, what is Part 2 failing to deliver that Part 1 set up), and Claude will interrogate the architecture of the argument the same way it interrogated the content. Pulling the build-versus-buy framing forward in the final draft, and tightening the bridge between the risk and differentiation sections, came directly out of running that prompt.

This is what the research describes when it talks about AI use that preserves understanding. Interrogative, not delegated. Claude was stress-testing the argument I had already written, not writing it for me, the way a good editor or a skeptical colleague would.

Sounding like me, not like Claude

The hardest part of working with Claude on a post like this is not getting it to write. It’s getting it to stop writing like Claude. (Yes, I know. That’s the construction this section warns against. I, not Claude, wrote it on purpose.)

Models default to a recognizable voice. Em dashes everywhere. Rule-of-three lists at every cadence shift. “Not just X, it’s Y” as a reflexive contrast. Words like delve, leverage, robust, nuanced, comprehensive, pivotal. Transitions like moreover and furthermore. None of this is wrong, exactly. It is generic writing wearing a polished costume. Readers can feel it even when they cannot name it, and the moment they feel it, they stop trusting the argument.

The Redpanda voice is different. Smart, practical, playful, genuine. Short sentences mixed with long ones. Active voice. Plain English. The brand guide is explicit that we are not corporate, not academic, not polite-but-generic. If the post sounds like a polished bot, it has already failed before the argument starts.

The editing pass on voice was its own discipline, separate from the editing pass on argument or evidence. Claude would draft a paragraph that was structurally fine and full of tells. I would rewrite it. Forcing a sentence to sound like me usually meant cutting hedges, killing throat-clearing, and saying the thing directly. The corporate-academic register Claude defaults to is also the register that lets vague claims hide. Several places where the post is now sharper started as a voice fix that turned into a content fix.

A few of the patterns I usually cut survived in the final post. Two em dashes, one rule-of-three list, a “not X, but Y” construction. Each one earned its place. The em dashes carried a beat that commas would have flattened. The list of three was the cleanest way to render a specific argument without chopping it into fragments. The contrast was the only shape that made the claim land. The discipline is not avoiding the patterns absolutely. It is refusing to use them on autopilot.

The tagline was the purest version of this work. Velocity is table stakes. Code is a commodity. Understanding is the edge. That line went through more iterations than any other sentence in the post. Claude produced dozens of variants. None of them were quite right, because taste in a tagline is not a thing the model can verify for itself. The right version had to feel true to me when I read it out loud. The iteration was useful, but the judgment had to be mine.

The takeaway

The parts I delegated most heavily were the parts where being wrong was cheapest. The parts I owned most tightly were the parts where being wrong would have cost the argument or the reader’s trust. The most useful thing Claude did was push back, stress-test the structure, and force me to defend the work I was claiming as mine.

The friction we hit, the drifting Knight Capital figure, the misattributed citations, the model’s instinct to write like a model, did not mean the tool failed. It meant that without an owner holding the mental model, the output would have looked clean and been quietly broken. The framework decided where to spend that ownership. I made that call deliberately, and the post reflects it.