Machine Learning for Designers

This report introduces contemporary machine learning systems, but also provides a conceptual framework to help you integrate machine-learning capabilities into your user-facing designs.

Les machines de l'île (source: By Traaf on Flickr)

Introduction

Since the dawn of computing, we have dreamed of (and had nightmares about) machines that can think and speak like us. But the computers we’ve interacted with over the past few decades are a far cry from HAL 9000 or Samantha from Her. Nevertheless, machine learning is in the midst of a renaissance that will transform countless industries and provide designers with a wide assortment of new tools for better engaging with and understanding users. These technologies will give rise to new design challenges and require new ways of thinking about the design of user interfaces and interactions.

To take full advantage of these systems’ vast technical capabilities, designers will need to forge even deeper collaborative relationships with programmers. As these complex technologies make their way from research prototypes to user-facing products, programmers will also rely upon designers to discover engaging applications for these systems.

In the text that follows, we will explore some of the technical properties and constraints of machine learning systems as well as their implications for user-facing designs. We will look at how designers can develop interaction paradigms and a design vocabulary around these technologies and consider how designers can begin to incorporate the power of machine learning into their work.

Why Design for Machine Learning is Different

A Different Kind of Logic

In our everyday communication, we generally use what logicians call fuzzy logic. This form of logic relates to approximate rather than exact reasoning. For example, we might identify an object as being “very small,” “slightly red,” or “pretty nearby.” These statements do not hold an exact meaning and are often context-dependent. When we say that a car is small, this implies a very different scale than when we say that a planet is small. Describing an object in these terms requires an auxiliary knowledge of the range of possible values that exists within a specific domain of meaning. If we had only seen one car ever, we would not be able to distinguish a small car from a large one. Even if we had seen a handful of cars, we could not say with great assurance that we knew the full range of possible car sizes. Even with extensive experience, we could never be completely sure that we had seen the smallest and largest of all cars, but we could feel relatively certain that we had a good approximation of the range. Since the people around us will tend to have had relatively similar experiences of cars, we can meaningfully discuss them with one another in fuzzy terms.

Computers, however, have not traditionally had access to this sort of auxiliary knowledge. Instead, they have lived a life of experiential deprivation. As such, traditional computing platforms have been designed to operate on logical expressions that can be evaluated without the knowledge of any outside factor beyond those expressly provided to them. Though fuzzy logical expressions can be employed by traditional platforms through the programmer’s or user’s explicit delineation of a fuzzy term such as “very small,” these systems have generally been designed to deal with boolean logic (also called “binary logic”), in which every expression must ultimately evaluate to either true or false. One rationale for this approach, as we will discuss further in the next section, is that boolean logic allows a computer program’s behavior to be defined as a finite set of concrete states, making it easier to build and test systems that will behave in a predictable manner and conform precisely to their programmer’s intentions.

Machine learning changes all this by providing mechanisms for imparting experiential knowledge upon computing systems. These technologies enable machines to deal with fuzzier and more complex or “human” concepts, but also bring an assortment of design challenges related to the sometimes problematic nature of working with imprecise terminology and unpredictable behavior.

A Different Kind of Development

In traditional programming environments, developers use boolean logic to explicitly describe each of a program’s possible states and the exact conditions under which the user will be able to transition between them. This is analogous to a “choose-your-own-adventure” book, which contains instructions like, “if you want the prince to fight the dragon, turn to page 32.” In code, a conditional expression (also called an if-statement) is employed to move the user to a particular portion of the code if some predefined set of conditions is met.

In pseudocode, a conditional expression might look like this:

if ( mouse button is pressed and mouse is over the 'Login' button ), then show the 'Welcome' screen

Since a program comprises a finite number of states and transitions, which can be explicitly enumerated and inspected, the program’s overall behavior should be predictable, repeatable, and testable. This is not to say, of course, that traditional programmatic logic cannot contain hard-to-foresee “edge-cases,” which lead to undefined or undesirable behavior under some specific set of conditions that have not been addressed by the programmer. Yet, regardless of the difficulty of identifying these problematic edge-cases in a complex piece of software, it is at least conceptually possible to methodically probe every possible path within the “choose-your-own-adventure” and prevent the user from accessing an undesirable state by altering or appending the program’s explicitly defined logic.
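The idea of a program as a finite, explicitly enumerated set of states and transitions can be sketched in a few lines of Python. This is only an illustration under assumed names: the states, the events, and the `next_state` helper are hypothetical and do not come from any real application.

```python
# Hypothetical login flow written as an explicit table of states and transitions.
# Every path through the program can be listed and inspected by reading this table.
TRANSITIONS = {
    ("login", "click_login_button"): "welcome",
    ("welcome", "click_logout_button"): "login",
}


def next_state(state, event):
    """Return the state that follows `state` when `event` occurs.

    Any (state, event) pair missing from the table leaves the state unchanged,
    which is one explicit way of handling an unanticipated edge case.
    """
    return TRANSITIONS.get((state, event), state)


state = "login"
state = next_state(state, "click_login_button")
print(state)
```

Because the table is finite, a tester could in principle walk every entry, which is the property the paragraph above describes.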

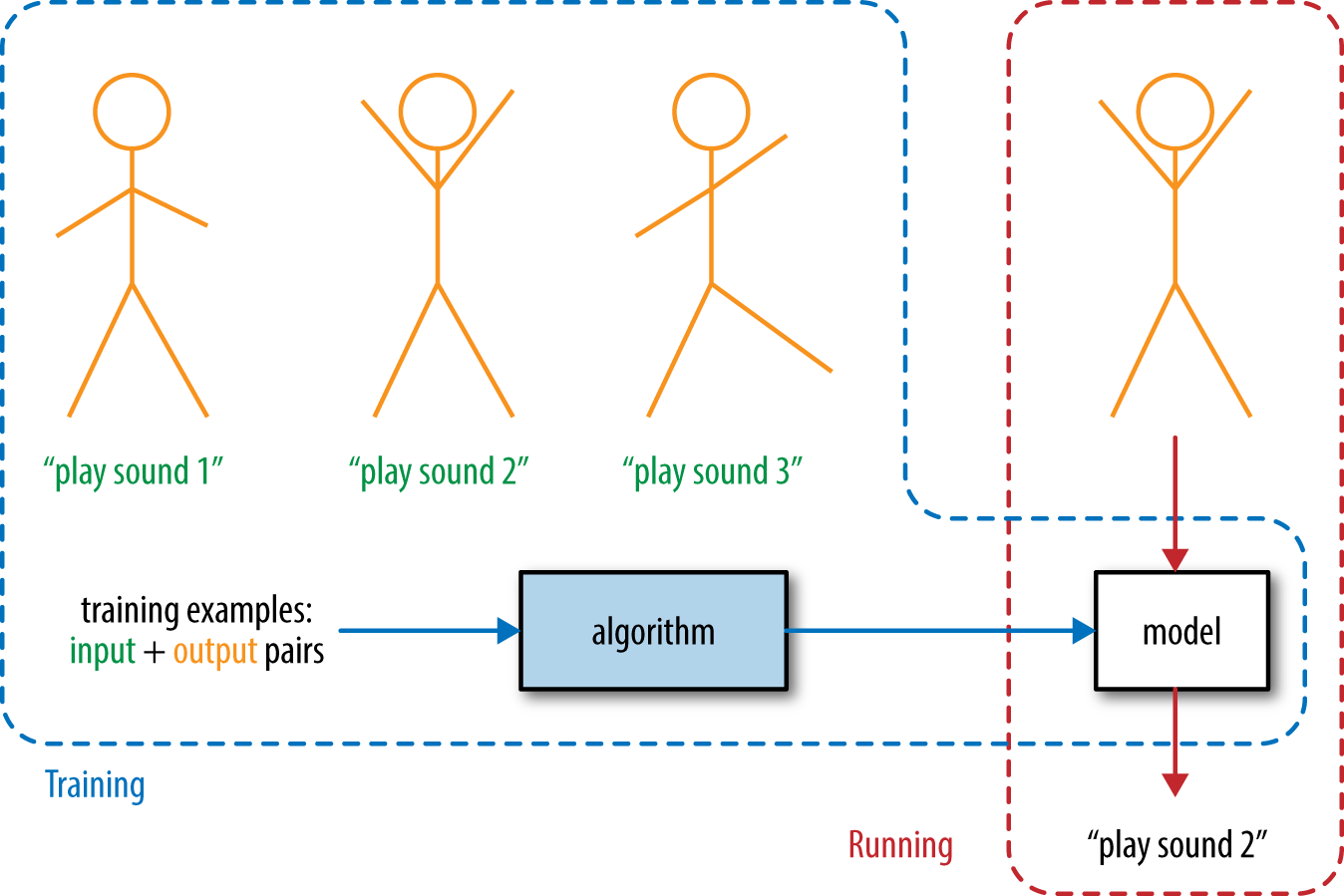

The behavior of machine learning systems, on the other hand, is not defined through this kind of explicit programming process. Instead of using an explicit set of rules to describe a program’s possible behaviors, a machine learning system looks for patterns within a set of example behaviors in order to produce an approximate representation of the rules themselves.

This process is somewhat like our own mental processes for learning about the world around us. Long before we encounter any formal description of the “laws” of physics, we learn to operate within them by observing the outcomes of our interactions with the physical world. A child may have no awareness of Newton’s equations, but through repeated observation and experimentation, the child will come to recognize patterns in the relationships between the physical properties and behaviors of objects.

While this approach offers an extremely effective mechanism for learning to operate on complex systems, it does not yield a concrete or explicit set of rules governing that system. In the context of human intelligence, we often refer to this as “intuition,” or the ability to operate on complex systems without being able to formally articulate the procedure by which we achieved some desired outcome. Informed by experience, we come up with a set of approximate or provisional rules known as heuristics (or “rules of thumb”) and operate on that basis.

In a machine learning system, these implicitly defined rules look nothing like the explicitly defined logical expressions of a traditional programming language. Instead, they consist of distributed representations that implicitly describe the probabilistic connections between the set of interrelated components of a complex system.

Machine learning often requires a very large number of examples to produce a strong intuition for the behaviors of a complex system.

In a sense, this requirement is related to the problem of edge-cases, which present a different set of challenges in the context of machine learning. Just as it is hard to imagine every possible outcome of a set of rules, it is, conversely, difficult to extrapolate every possible rule from a set of example outcomes. To extrapolate a good approximation of the rules, the learner must observe many variations of their application. The learner must be exposed to the more extreme or unlikely behaviors of a system as well as the most likely ones. Or, as the educational philosopher Patricia Carini said, “To let meaning occur requires time and the possibility for the rich and varied relationships among things to become evident.”1

While intuitive learners may be slower at rote procedural tasks such as those performed by a calculator, they are able to perform much more complex tasks that do not lend themselves to exact procedures. Nevertheless, even with an immense amount of training, these intuitive approaches sometimes fail us. We may, for instance, find ourselves mistakenly identifying a human face in a cloud or a grilled cheese sandwich.

A Different Kind of Precision

A key principle in the design of conventional programming languages is that each feature should work in a predictable, repeatable manner provided that the feature is being used correctly by the programmer. No matter how many times we perform an arithmetic operation such as “2 + 2,” we should always get the same answer. If this is ever untrue, then a bug exists in the language or tool we are using. Though it is not inconceivable for a programming language to contain a bug, it is relatively rare and would almost never pertain to an operation as commonly used as an arithmetic operator. To be extra certain that conventional code will operate as expected, most large-scale codebases ship with a set of formal “unit tests” that can be run on the user’s machine at installation time to ensure that the functionality of the system is fully in line with the developer’s expectations.

So, putting rare bugs aside, conventional programming languages can be thought of as systems that are always correct about mundane things like concrete mathematical operations. Machine learning algorithms, on the other hand, can be thought of as systems that are often correct about more complicated things like identifying human faces in an image. Since a machine learning system is designed to probabilistically approximate a set of demonstrated behaviors, its very nature generally precludes it from behaving in an entirely predictable and reproducible manner, even if it has been properly trained on an extremely large number of examples. This is not to say, of course, that a well-trained machine learning system’s behavior must inherently be erratic to a detrimental degree. Rather, it should be understood and considered within the design of machine-learning-enhanced systems that their capacity for dealing with extraordinarily complex concepts and patterns also comes with a certain degree of imprecision and unpredictability beyond what can be expected from traditional computing platforms.

Later in the text, we will take a closer look at some design strategies for dealing with imprecision and unpredictable behaviors in machine learning systems.

A Different Kind of Problem

Machine learning can perform complex tasks that cannot be addressed by conventional computing platforms. However, the process of training and utilizing machine learning systems often comes with substantially greater overhead than the process of developing conventional systems. So while machine learning systems can be taught to perform simple tasks such as arithmetic operations, as a general rule of thumb, you should only take a machine learning approach to a given problem if no viable conventional approach exists.

Even for tasks that are well-suited to a machine learning solution, there are numerous considerations about which learning mechanisms to use and how to curate the training data so that it can be most comprehensible to the learning system.

In the sections that follow, we will look more closely at how to identify problems that are well-suited for machine learning solutions as well as the numerous factors that go into applying learning algorithms to specific problems. But for the time being, we should understand machine learning to be useful in solving problems that can be encapsulated by a set of examples, but not easily described in formal terms.

What Is Machine Learning?

The Mental Process of Recognizing Objects

Think about your own mental process of recognizing a human face. It’s such an innate, automatic behavior, it is difficult to think about in concrete terms. But this difficulty is not only a product of the fact that you have performed the task so many times. There are many other often-repeated procedures that we could express concretely, like how to brush your teeth or scramble an egg. Rather, it is nearly impossible to describe the process of recognizing a face because it involves the balancing of an extremely large and complex set of interrelated factors, and therefore defies any concrete description as a sequence of steps or set of rules.

To begin with, there is a great deal of variation in the facial features of people of different ethnicities, ages, and genders. Furthermore, every individual person can be viewed from an infinite number of vantage points in countless lighting scenarios and surrounding environments. In assessing whether the object we are looking at is a human face, we must consider each of these properties in relation to each other. As we change vantage points around the face, the proportion and relative placement of the nose changes in relation to the eyes. As the face moves closer to or further from other objects and light sources, its coloring and regions of contrast change too.

There are infinite combinations of properties that would yield the valid identification of a human face and an equally great number of combinations that would not. The set of rules separating these two groups is just too complex to describe through conditional logic. We are able to identify a face almost automatically because our great wealth of experience in observing and interacting with the visible world has allowed us to build up a set of heuristics that can be used to quickly, intuitively, and somewhat imprecisely gauge whether a particular expression of properties is in the correct balance to form a human face.

Learning by Example

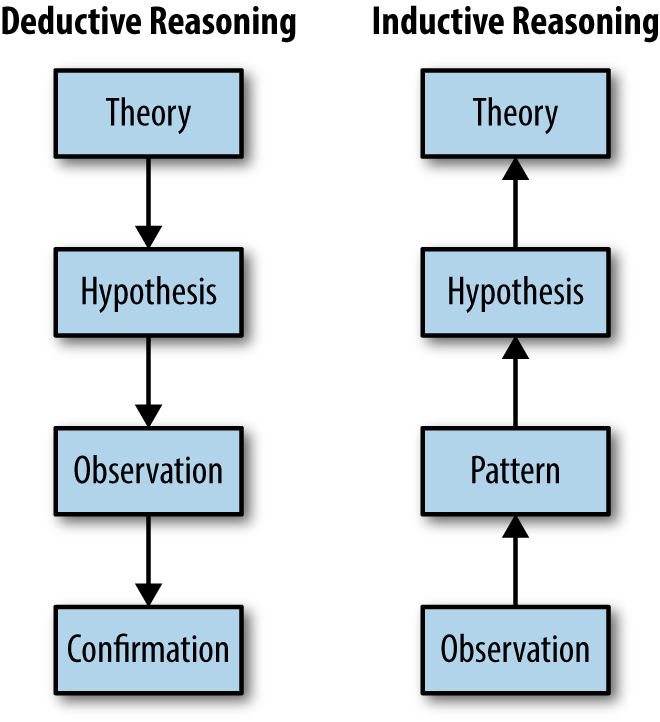

In logic, there are two main approaches to reasoning about how a set of specific observations and a set of general rules relate to one another. In deductive reasoning, we start with a broad theory about the rules governing a system, distill this theory into more specific hypotheses, gather specific observations and test them against our hypotheses in order to confirm whether the original theory was correct. In inductive reasoning, we start with a group of specific observations, look for patterns in those observations, formulate tentative hypotheses, and ultimately try to produce a general theory that encompasses our original observations. See Figure 1-1 for an illustration of the differences between these two forms of reasoning.

Each of these approaches plays an important role in scientific inquiry. In some cases, we have a general sense of the principles that govern a system, but need to confirm that our beliefs hold true across many specific instances. In other cases, we have made a set of observations and wish to develop a general theory that explains them.

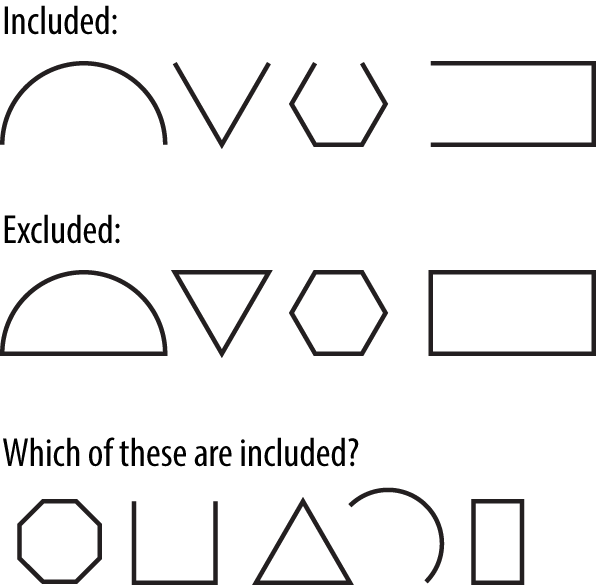

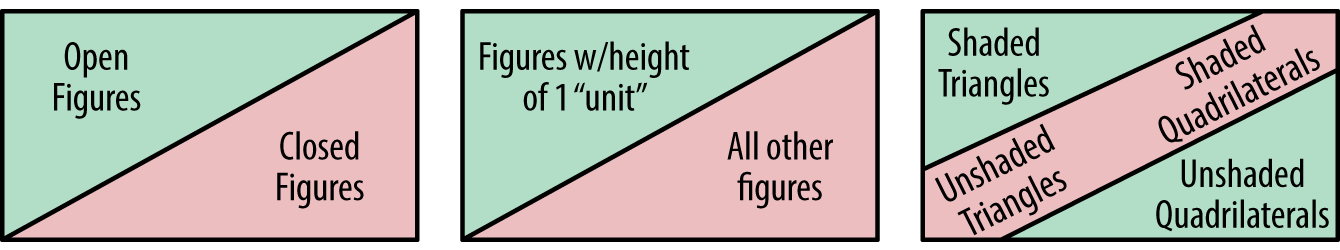

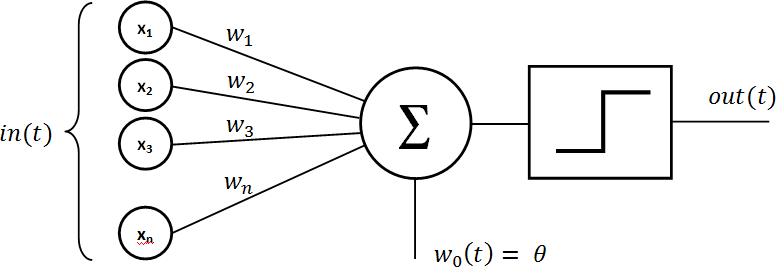

To a large extent, machine learning systems can be seen as tools that assist or automate inductive reasoning processes. In a simple system that is governed by a small number of rules, it is often quite easy to produce a general theory from a handful of specific examples. Consider Figure 1-2 as an example of such a system.2

In this system, you should have no trouble uncovering the singular rule that governs inclusion: open figures are included and closed figures are excluded. Once discovered, you can easily apply this rule to the uncategorized figures in the bottom row.

In Figure 1-3, you may have to look a bit harder.

Here, there seem to be more variables involved. You may have considered the shape and shading of each figure before discovering that in fact this system is also governed by a single attribute: the figure’s height. If it took you a moment to discover the rule, it is likely because you spent time considering attributes that seemed like they would be pertinent to the determination but were ultimately not. This kind of “noise” exists in many systems, making it more difficult to isolate the meaningful attributes.

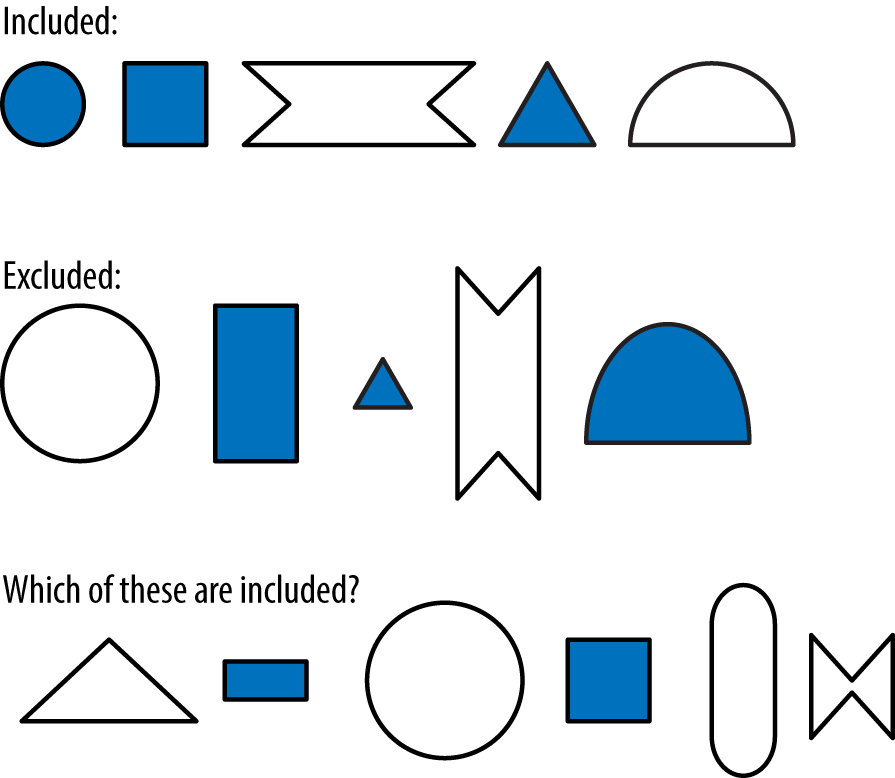

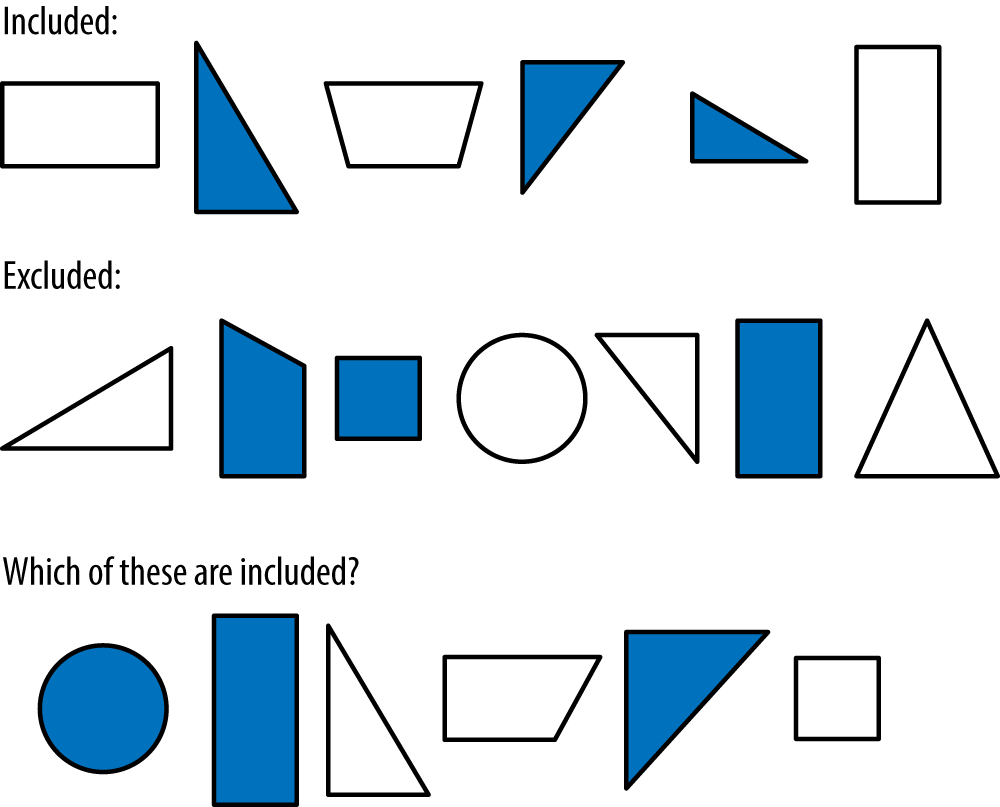

Let’s now consider Figure 1-4.

In this diagram, the rules have in fact gotten a bit more complicated. Here, shaded triangles and unshaded quadrilaterals are included and all other figures are excluded. This rule system is harder to uncover because it involves an interdependency between two attributes of the figures. Neither the shape nor the shading alone determines inclusion. A triangle’s inclusion depends upon its shading and a shaded figure’s inclusion depends upon its shape. In machine learning, this is called a linearly inseparable problem because it is not possible to separate the included and excluded figures using a single “line” or determining attribute. Linearly inseparable problems are more difficult for machine learning systems to solve, and it took several decades of research to discover robust techniques for handling them. See Figure 1-5.
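To make the interdependency concrete, the sketch below checks whether any single attribute, taken alone, determines inclusion. This is a simpler test than full linear separability, and the data is a simplified, hypothetical encoding of the rule described above in which every figure is either a triangle or a quadrilateral. The function name `single_attribute_rules` is likewise an assumption made for illustration.

```python
# Simplified encoding of the rule: shaded triangles and unshaded
# quadrilaterals are included; "triangle": False stands for a quadrilateral.
EXAMPLES = [
    ({"shaded": True, "triangle": True}, True),
    ({"shaded": True, "triangle": False}, False),
    ({"shaded": False, "triangle": True}, False),
    ({"shaded": False, "triangle": False}, True),
]


def single_attribute_rules(examples):
    """Return the attributes whose value alone determines inclusion."""
    found = []
    for name in examples[0][0]:
        # Collect the inclusion labels observed for each value of this attribute.
        labels_by_value = {}
        for attributes, included in examples:
            labels_by_value.setdefault(attributes[name], set()).add(included)
        # The attribute is decisive only if each value maps to a single label.
        if all(len(labels) == 1 for labels in labels_by_value.values()):
            found.append(name)
    return found


print(single_attribute_rules(EXAMPLES))
```

For this data the result is an empty list: neither shading nor shape decides inclusion by itself, so the rule can only be expressed in terms of both attributes together.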

In general, the difficulty of an inductive reasoning problem relates to the number of relevant and irrelevant attributes involved as well as the subtlety and interdependency of the relevant attributes. Many real-world problems, like recognizing a human face, involve an immense number of interrelated attributes and a great deal of noise. For the vast majority of human history, this kind of problem has been beyond the reach of mechanical automation. The advent of machine learning and the ability to automate the synthesis of general knowledge about complex systems from specific information has deeply significant and far-reaching implications. For designers, it means being able to understand users more holistically through their interactions with the interfaces and experiences we build. This understanding will allow us to better anticipate and meet users’ needs, elevate their capabilities and extend their reach.

Mechanical Induction

To get a better sense of how machine learning algorithms actually perform induction, let’s consider Figure 1-6.

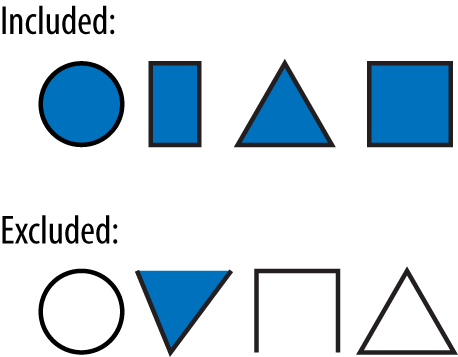

This system is equivalent to the boolean logical expression, “AND.” That is, only figures that are both shaded and closed are included. Before we turn our attention to induction, let’s first consider how we would implement this logic in an electrical system from a deductive point of view. In other words, if we already knew the rule governing this system, how could we implement an electrical device that determines whether a particular figure should be included or excluded? See Figure 1-7.

In this diagram, we have a wire leading from each input attribute to a “decision node.” If a given figure is shaded, then an electrical signal will be sent through the wire leading from Input A. If the figure is closed, then an electrical signal will be sent through the wire leading from Input B. The decision node will output an electrical signal indicating that the figure is included if the sum of its input signals is greater than or equal to 1 volt.

To implement the behavior of an AND gate, we need to set the voltage associated with each of the two input signals. Since the output threshold is 1 volt and we only want the output to be triggered if both inputs are active, we can set the voltage associated with each input to 0.5 volts. In this configuration, if only one or neither input is active, the output threshold will not be reached. With these signal voltages now set, we have implemented the mechanics of the general rule governing the system and can use this electronic device to deduce the correct output for any example input.
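The decision node described above can be sketched in Python. The 0.5 volt input signals and the 1 volt threshold come from the text; the function and variable names are assumptions chosen for illustration.

```python
THRESHOLD = 1.0  # volts, the output threshold of the decision node


def decision_node(inputs, voltages, threshold=THRESHOLD):
    """Return True when the summed voltage of the active inputs reaches the threshold.

    `inputs` holds 1 for an active attribute and 0 for an inactive one;
    `voltages` holds the voltage carried by each input wire when active.
    """
    total = sum(x * v for x, v in zip(inputs, voltages))
    return total >= threshold


AND_VOLTAGES = (0.5, 0.5)  # Input A (shaded) and Input B (closed)

for shaded in (0, 1):
    for closed in (0, 1):
        print(shaded, closed, decision_node((shaded, closed), AND_VOLTAGES))
```

Only the pair in which both inputs are active reaches the threshold, which matches the behavior of an AND gate.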

Now, let us consider the same problem from an inductive point of view. In this case, we have a set of example inputs and outputs that exemplify a rule but do not know what the rule is. We wish to determine the nature of the rule using these examples.

Let’s again assume that the decision node’s output threshold is 1 volt. To reproduce the behavior of the AND gate by induction, we need to find voltage levels for the input signals that will produce the expected output for each pair of example inputs, telling us whether those inputs are included in the rule. The process of discovering the right combination of voltages can be seen as a kind of search problem.

One approach we might take is to choose random voltages for the input signals, use these to predict the output of each example, and compare these predictions to the given outputs. If the predictions match the correct outputs, then we have found good voltage levels. If not, we could choose new random voltages and start the process over. This process could then be repeated until the voltages of each input were weighted so that the system could consistently predict whether each input pair fits the rule.

In a simple system like this one, a guess-and-check approach may allow us to arrive at suitable voltages within a reasonable amount of time. But for a system that involves many more attributes, the number of possible combinations of signal voltages would be immense and we would be unlikely to guess suitable values efficiently. With each additional attribute, we would need to search for a needle in an increasingly large haystack.
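The guess-and-check search can be sketched as follows. This is a minimal illustration, assuming that candidate voltages are drawn uniformly between 0 and 1 volt and that a seeded random generator is used so the run is repeatable; neither choice comes from the text, and the names are hypothetical.

```python
import random

# Example inputs (shaded, closed) and whether each figure is included.
EXAMPLES = [((0, 0), False), ((0, 1), False), ((1, 0), False), ((1, 1), True)]
THRESHOLD = 1.0  # volts


def predict(inputs, voltages):
    """Decision node: fire when the summed input voltage reaches the threshold."""
    return sum(x * v for x, v in zip(inputs, voltages)) >= THRESHOLD


def guess_and_check(examples, rng, max_tries=10_000):
    """Draw random voltages until every example is predicted correctly."""
    for attempt in range(1, max_tries + 1):
        voltages = (rng.uniform(0.0, 1.0), rng.uniform(0.0, 1.0))
        if all(predict(inputs, voltages) == expected for inputs, expected in examples):
            return voltages, attempt
    raise RuntimeError("no suitable voltages found")


voltages, attempts = guess_and_check(EXAMPLES, random.Random(0))
print(voltages, attempts)
```

With two inputs, a suitable pair tends to turn up after only a few guesses. Each extra attribute adds another dimension to the space being sampled, which is why this strategy scales so poorly.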

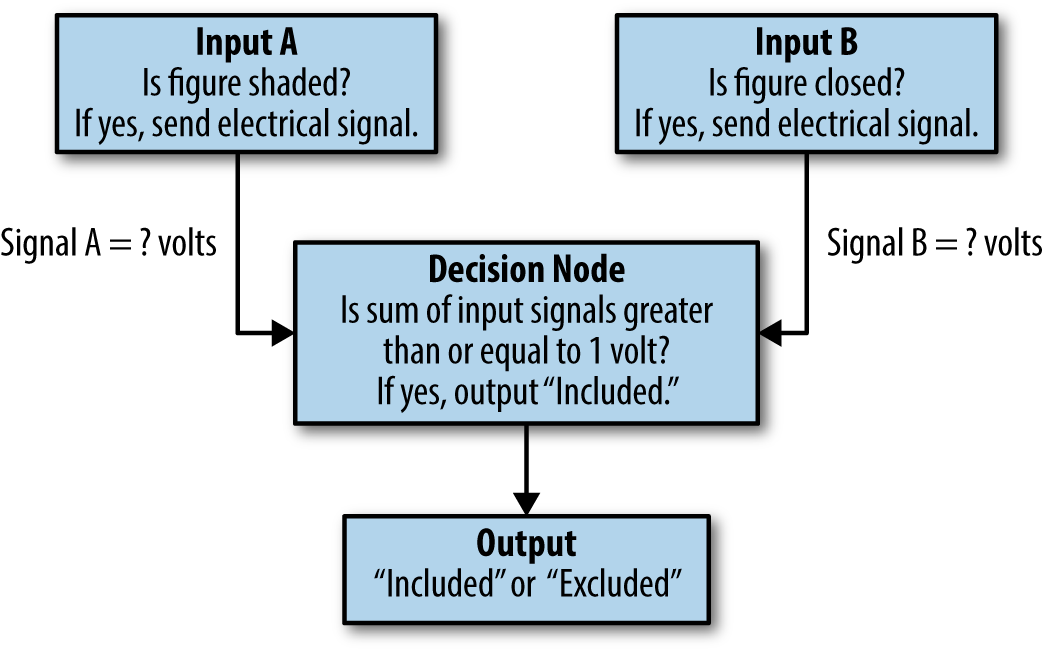

Rather than guessing randomly and starting over when the results are not suitable, we could instead take an iterative approach. We could start with random values and check the output predictions they yield. But rather than starting over if the results are inaccurate, we could instead look at the extent and direction of that inaccuracy and try to incrementally adjust the voltages to produce more accurate results. The process outlined above is a simplified description of the learning procedure used by one of the earliest machine learning systems, called a Perceptron (Figure 1-8), which was invented by Frank Rosenblatt in 1957.3
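The iterative approach can be sketched as a loop that nudges each voltage in the direction that reduces the error. This is a simplified illustration and not Rosenblatt's exact procedure: the threshold is held fixed at 1 volt, the starting voltages are set to zero rather than chosen randomly so that the run is repeatable, and the step size of 0.1 is an arbitrary assumption.

```python
# Example inputs (shaded, closed) and the expected output (1 = included).
EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
THRESHOLD = 1.0      # volts, held fixed in this sketch
LEARNING_RATE = 0.1  # assumed size of each incremental adjustment


def predict(inputs, voltages):
    """Decision node: output 1 when the summed input voltage reaches the threshold."""
    total = sum(x * v for x, v in zip(inputs, voltages))
    return 1 if total >= THRESHOLD else 0


def train(examples, voltages, max_epochs=100):
    """Adjust the voltages after each wrong prediction until all examples are correct."""
    voltages = list(voltages)
    for _ in range(max_epochs):
        mistakes = 0
        for inputs, expected in examples:
            error = expected - predict(inputs, voltages)  # +1, 0, or -1
            if error != 0:
                mistakes += 1
                # Raise or lower only the voltages of the inputs that were active.
                for i, x in enumerate(inputs):
                    voltages[i] += LEARNING_RATE * error * x
        if mistakes == 0:
            return voltages
    raise RuntimeError("did not converge")


learned = train(EXAMPLES, [0.0, 0.0])
print(learned)
```

The sign of the error gives the direction of each adjustment, so every pass moves the voltages toward values that satisfy the examples instead of discarding the previous guess.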

Once the Perceptron has completed the inductive learning process, we have a network of voltage levels that implicitly describes the rule system. We call this a distributed representation. It can produce the correct outputs, but it is hard to look at a distributed representation and understand the rules explicitly. As in our own neural networks, the rules are represented implicitly or impressionistically. Nonetheless, they serve the desired purpose.

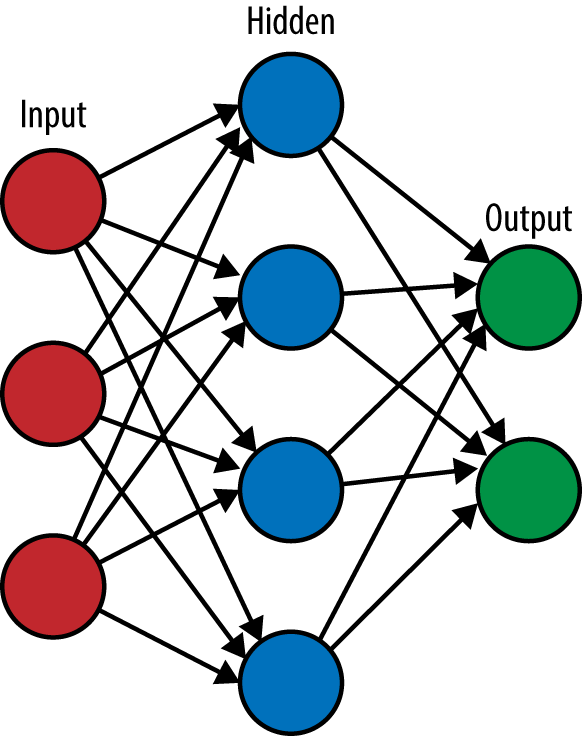

Though Perceptrons are capable of performing inductive learning on simple systems, they are not capable of solving linearly inseparable problems. To solve this kind of problem, we need to account for interdependent relationships between attributes. In a sense, we can think of an interdependency as being a kind of attribute in itself. Yet, in complex data, it is often very difficult to spot interdependencies simply by looking at the data. Therefore, we need some way of allowing the learning system to discover and account for these interdependencies on its own. This can be done by adding one or more layers of nodes between the inputs and outputs. The express purpose of these “hidden” nodes is to characterize the interdependencies that may be concealed within the relationships between the data’s concrete (or “visible”) attributes. The addition of these hidden nodes makes the inductive learning process significantly more complex.
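To illustrate what hidden nodes contribute, the sketch below hand-wires a small network for the earlier rule in which shaded triangles and unshaded quadrilaterals are included. The voltages and thresholds are chosen by hand rather than learned, each node is given its own threshold, and negative voltages are allowed; all of these are assumptions made for illustration.

```python
def node(inputs, voltages, threshold):
    """Threshold unit: output 1 when the weighted sum of inputs reaches the threshold."""
    total = sum(x * v for x, v in zip(inputs, voltages))
    return 1 if total >= threshold else 0


def included(shaded, triangle):
    """Two hidden nodes capture the interdependency; the output node combines them."""
    both = node((shaded, triangle), (0.5, 0.5), 1.0)       # shaded AND triangle
    neither = node((shaded, triangle), (-1.0, -1.0), 0.0)  # not shaded AND not triangle
    return node((both, neither), (1.0, 1.0), 1.0)          # either hidden node active


for shaded in (0, 1):
    for triangle in (0, 1):
        print(shaded, triangle, included(shaded, triangle))
```

Each hidden node responds to one combination of the visible attributes, so the output node only has to solve a simple problem expressed in terms of those combinations.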

The backpropagation algorithm, which was developed in the late 1960s but not fully utilized until a 1986 paper by David Rumelhart et al.,4 can perform inductive learning for linearly inseparable problems. Readers interested in learning more about these ideas should refer to the section Going Further.

Common Analogies for Machine Learning

Biological systems

When Leonardo da Vinci set out to design a flying machine, he naturally looked for inspiration in the only flying machines of his time: winged animals. He studied the stabilizing feathers of birds, observed how changes in wing shape could be used for steering, and produced numerous sketches for machines powered by human “wing flapping.”

Ultimately, it has proven more practical to design flying machines around the mechanism of a spinning turbine than to directly imitate the flapping wing motion of birds. Nevertheless, from da Vinci onward, human designers have pulled many key principles and mechanisms for flight from their observations of biological systems. Nature, after all, had a head start in working on the problem and we would be foolish to ignore its findings.

Similarly, since the only examples of intelligence we have had access to are the living things of this planet, it should come as no surprise that machine learning researchers have looked to biological systems for both the guiding principles and specific design mechanisms of learning and intelligence.

In a famous 1950 paper, “Computing Machinery and Intelligence,”5 the computer science luminary Alan Turing pondered the question of whether machines could be made to think. Realizing that “thought” was a difficult notion to define, Turing proposed what he believed to be a closely related and unambiguous way of reframing the question: “Are there imaginable digital computers which would do well in the imitation game?” In the proposed game, which is now generally referred to as a Turing Test, a human interrogator poses written questions to a human and a machine. If the interrogator is unable to determine which party is human based on the responses to these questions, then it may be reasoned that the machine is intelligent. In the framing of this approach, it is clear that a system’s similarity to a biologically produced intelligence has been a central metric in evaluating machine intelligence since the inception of the field.

In the early history of the field, numerous attempts were made at developing analog and digital systems that simulated the workings of the human brain. One such analog device was the Homeostat, developed by William Ross Ashby in 1948, which used an electro-mechanical process to detect and compensate for changes in a physical space in order to create stable environmental conditions. In 1959, Herbert Simon, J.C. Shaw, and Allen Newell developed a digital system called the General Problem Solver, which could automatically produce mathematical proofs for formal logic problems. This system was capable of solving simple test problems such as the Tower of Hanoi puzzle, but did not scale well because its search-based approach required the storage of an intractable number of combinations in solving more complex problems.

As the field has matured, one major category of machine learning algorithms in particular has focused on imitating biological learning systems: the appropriately named Artificial Neural Networks (ANNs). These machines, which include Perceptrons as well as the deep learning systems discussed later in this text, are modeled after but implemented differently from biological systems. See Figure 1-9.

Instead of the electrochemical processes performed by biological neurons, ANNs employ traditional computer circuitry and code to produce simplified mathematical models of neural architecture and activity. ANNs have a long way to go in approaching the advanced and generalized intelligence of humans. Like the relationship between birds and airplanes, we may continue to find practical reasons for deviating from the specific mechanisms of biological systems. Still, ANNs have borrowed a great many ideas from their biological counterparts and will continue to do so as the fields of neuroscience and machine learning evolve.

Thermodynamic systems

One indirect outcome of machine learning is that the effort to produce practical learning machines has also led to deeper philosophical understandings of what learning and intelligence really are as phenomena in nature. In science fiction, we tend to assume that all advanced intelligences would be something like ourselves, since we have no dramatically different examples of intelligence to draw upon.

For this reason, it might be surprising to learn that one of the primary inspirations for the mathematical models used in machine learning comes from the field of thermodynamics, a branch of physics concerned with heat and energy transfer. Though we would certainly call the behaviors of thermal systems complex, we have not generally thought of these systems as holding a strong relation to the fundamental principles of intelligence and life.

From our earlier discussion of inductive reasoning, we may see that learning has a great deal to do with the gradual or iterative process of finding a balance between many interrelated factors. The conceptual relationship between this process and the tendency of thermal systems to seek equilibrium has allowed machine learning researchers to adapt some of the ideas and equations established within thermodynamics to their efforts to model the characteristics of learning.

Of course, what we choose to call “intelligence” or “life” is a matter of language more than anything else. Nevertheless, it is interesting to see these phenomena in a broader context and understand that nature has a way of reusing certain principles across many disparate applications.

Electrical systems

By the start of the twentieth century, scientists had begun to understand that the brain’s ability to store memories and trigger actions in the body was produced by the transmission of electrical signals between neurons. By mid-century, several preliminary models for simulating the electrical behaviors of an individual neuron had been developed, including the Perceptron. As we saw in the Mechanical Induction section, these models have some important similarities to the logic gates that form the basic building blocks of electronic systems. In its most basic conception, an individual neuron collects electrical signals from the other neurons that lead into it and forwards the electrical signal to its connected output neurons when a sufficient number of its inputs have been electrically activated.

These early discoveries contributed to a dramatic overestimation of the ease with which we would be able to produce a true artificial intelligence. As the fields of neuroscience and machine learning have progressed, we have come to see that understanding the electrical behaviors and underlying mathematical properties of an individual neuron elucidates only a tiny aspect of the overall workings of a brain. In describing the mechanics of a simple learning machine somewhat like a Perceptron, Alan Turing remarked, “The behavior of a machine with so few units is naturally very trivial. However, machines of this character can behave in a very complicated manner when the number of units is large.”6

Despite some similarities in their basic building blocks, neural networks and conventional electronic systems use very different sets of principles in combining their basic building blocks to produce more complex behaviors. An electronic component helps to route electrical signals through explicit logical decision paths in much the same manner as conventional computer programs. Individual neurons, on the other hand, are used to store small pieces of the distributed representations of inductively approximated rule systems.

So, while there is in one sense a very real connection between neural networks and electrical systems, we should be careful not to think of brains or machine learning systems as mere extensions of the kinds of systems studied within the field of electrical engineering.

Ways of Learning

In machine learning, the terms supervised, unsupervised, semi-supervised, and reinforcement learning are used to describe some of the key differences in how various models and algorithms learn and what they learn about. There are many additional terms used within the field of machine learning to describe other important distinctions, but these four categories provide a basic vocabulary for discussing the main types of machine learning systems:

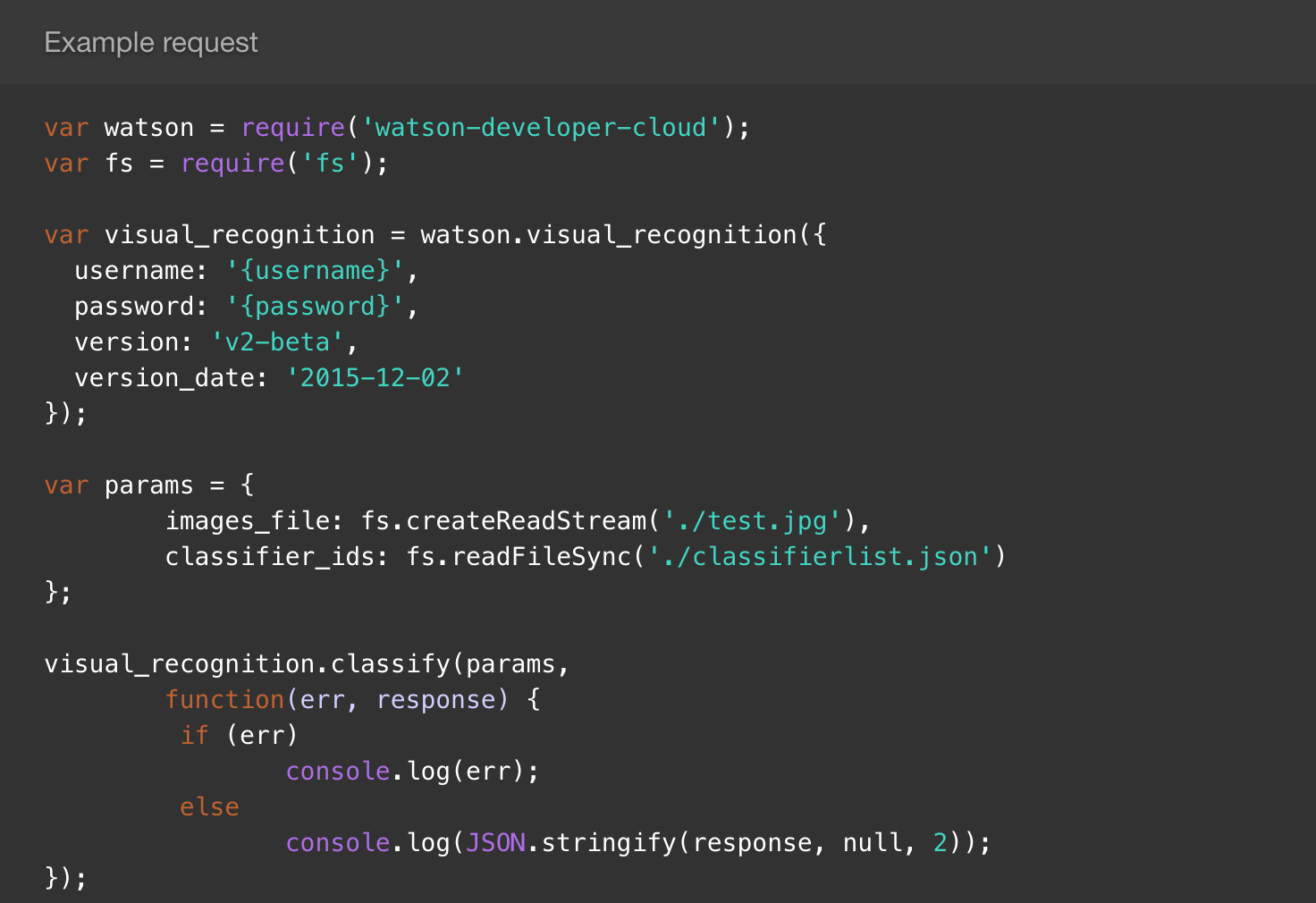

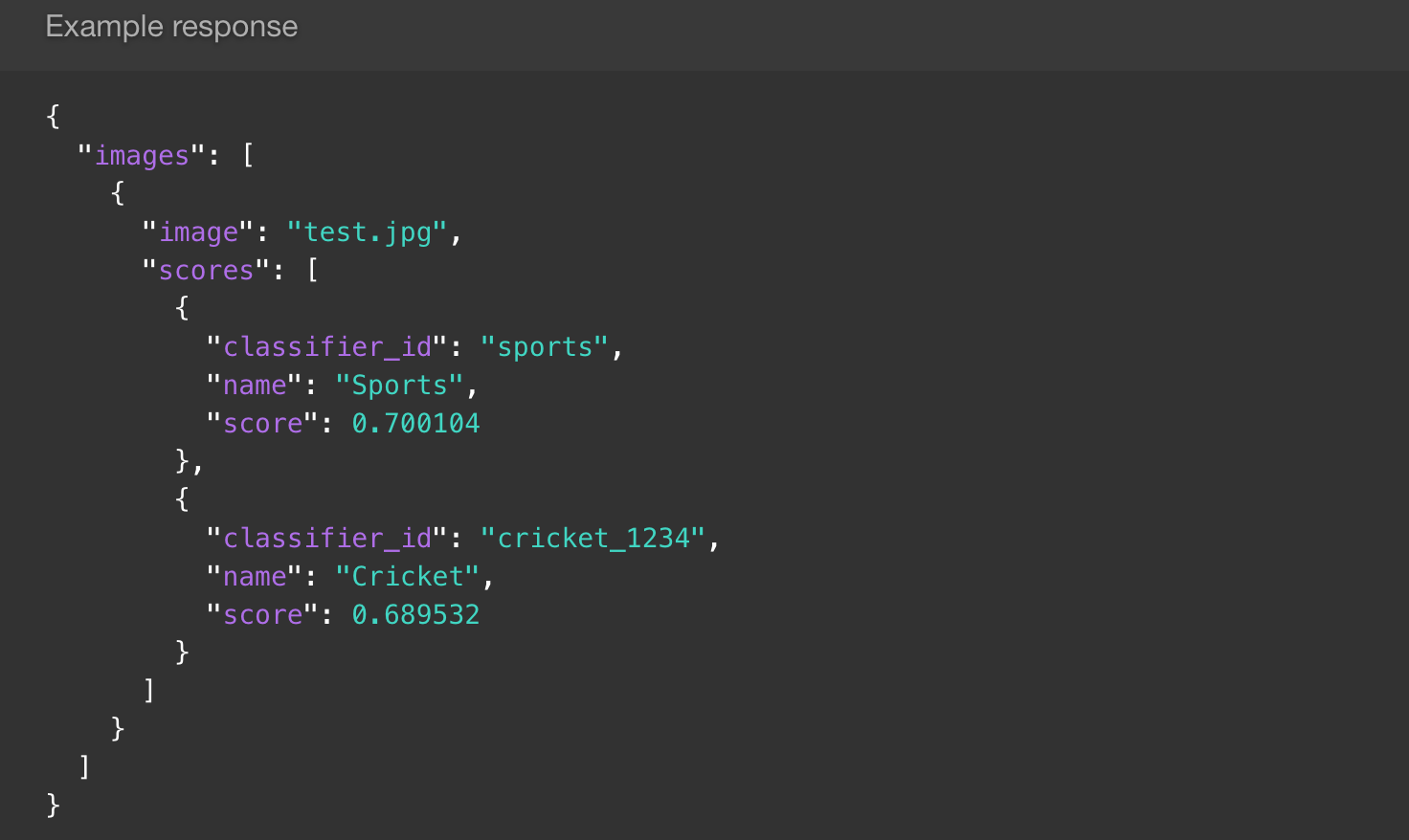

Supervised learning procedures are used in problems for which we can provide the system with example inputs as well as their corresponding outputs and wish to induce an implicit approximation of the rules or function that governs these correlations. Procedures of this kind are “supervised” in the sense that we explicitly indicate what correlations should be found and only ask the machine how to substantiate these correlations. Once trained, a supervised learning system should be able to predict the correct output for an input example that is similar in nature to the training examples, but not explicitly contained within them. The kinds of problems that can be addressed by supervised learning procedures are generally divided into two categories: classification and regression problems. In a classification problem, the outputs relate to a set of discrete categories. For example, we may have an image of a handwritten character and wish to determine which of 26 possible letters it represents. In a regression problem, the outputs relate to a real-valued number. For example, based on a set of financial metrics and past performance data, we may try to guess the future price of a particular stock.
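To make the distinction between classification and regression concrete, here is a minimal sketch in plain Python. The two techniques shown (nearest-neighbor classification and a least-squares line fit) are simple stand-ins chosen for brevity, and the feature values and labels are invented for illustration.

```python
def classify_nearest(examples, query):
    """Classification: return the label of the closest training example."""
    def squared_distance(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    _, label = min(examples, key=lambda ex: squared_distance(ex[0], query))
    return label


def fit_line(xs, ys):
    """Regression: least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x


# Hypothetical letter features: (number of strokes, number of closed loops).
letters = [((1.0, 0.0), "I"), ((3.0, 1.0), "A"), ((1.0, 1.0), "O")]
predicted_letter = classify_nearest(letters, (2.8, 0.9))  # discrete output

# Hypothetical (day, price) observations that happen to lie on a line.
slope, intercept = fit_line([1, 2, 3, 4], [3, 5, 7, 9])
predicted_price = slope * 5 + intercept  # real-valued output
```

In both cases the query (a slightly different letter shape, a fifth day) does not appear in the training examples; the system generalizes from inputs that are similar in nature.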

Unsupervised learning procedures do not require a set of known outputs. Instead, the machine is tasked with finding internal patterns within the training examples. Procedures of this kind are “unsupervised” in the sense that we do not explicitly indicate what the system should learn about. Instead, we provide a set of training examples that we believe contains internal patterns and leave it to the system to discover those patterns on its own. In general, unsupervised learning can provide assistance in our efforts to understand extremely complex systems whose internal patterns may be too complex for humans to discover on their own. Unsupervised learning can also be used to produce generative models, which can, for example, learn the stylistic patterns in a particular composer’s work and then generate new compositions in that style. Unsupervised learning has been a subject of increasing excitement and plays a key role in the deep learning renaissance, which is described in greater detail below. One of the main causes of this excitement has been the realization that unsupervised learning can be used to dramatically improve the quality of supervised learning processes, as discussed immediately below.
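As one small illustration of pattern discovery without known outputs, the sketch below groups one-dimensional values with k-means clustering. K-means is only one of many unsupervised techniques; the data and starting centers are assumptions made up for this example.

```python
def k_means_1d(values, centers, iterations=10):
    """Repeatedly assign values to the nearest center, then re-average."""
    centers = list(centers)
    groups = [[] for _ in centers]
    for _ in range(iterations):
        groups = [[] for _ in centers]
        for value in values:
            nearest = min(range(len(centers)),
                          key=lambda i: abs(value - centers[i]))
            groups[nearest].append(value)
        # Move each center to the mean of its group (keep it if the group is empty).
        centers = [sum(g) / len(g) if g else c for g, c in zip(groups, centers)]
    return centers, groups


# No labels are supplied; the two clumps are found from the values alone.
values = [1.0, 1.2, 0.8, 9.0, 9.5, 8.5]
centers, groups = k_means_1d(values, centers=[0.0, 5.0])
```

We never tell the procedure what the two groups mean. It only reports that the data falls into two clumps, leaving interpretation to the designer.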

Semi-supervised learning procedures use the automatic feature discovery capabilities of unsupervised learning systems to improve the quality of predictions in a supervised learning problem. Instead of trying to correlate raw input data with the known outputs, the raw inputs are first interpreted by an unsupervised system. The unsupervised system tries to discover internal patterns within the raw input data, removing some of the noise and helping to bring forward the most important or indicative features of the data. These distilled versions of the data are then handed over to a supervised learning model, which correlates the distilled inputs with their corresponding outputs in order to produce a predictive model whose accuracy is generally far greater than that of a purely supervised learning system. This approach can be particularly useful in cases where only a small portion of the available training examples have been associated with a known output. One such example is the task of correlating photographic images with the names of the objects they depict. An immense number of photographic images can be found on the Web, but only a small percentage of them come with reliable linguistic associations. Semi-supervised learning allows the system to discover internal patterns within the full set of images and associate these patterns with the descriptive labels that were provided for a limited number of examples. This approach bears some resemblance to our own learning process in the sense that we have many experiences interacting with a particular kind of object, but a much smaller number of experiences in which another person explicitly tells us the name of that object.
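One simple way to picture this combination is a "cluster-then-label" procedure: patterns are first discovered across all of the data, and the few available labels are then used to name those patterns. The sketch below substitutes this simpler technique for the feature-learning pipeline described above, and all values and labels are invented.

```python
from collections import Counter


def nearest(centers, value):
    """Index of the center closest to `value`."""
    return min(range(len(centers)), key=lambda i: abs(value - centers[i]))


def cluster_then_label(unlabeled, labeled, centers, iterations=10):
    """Cluster all values, then name each cluster using the labeled few."""
    centers = list(centers)
    everything = list(unlabeled) + [value for value, _ in labeled]
    # Unsupervised step: k-means over every value, ignoring the labels.
    for _ in range(iterations):
        groups = [[] for _ in centers]
        for value in everything:
            groups[nearest(centers, value)].append(value)
        centers = [sum(g) / len(g) if g else c for g, c in zip(groups, centers)]
    # Supervised step: each cluster takes the majority label of its labeled members.
    votes = [Counter() for _ in centers]
    for value, label in labeled:
        votes[nearest(centers, value)][label] += 1
    names = [v.most_common(1)[0][0] if v else None for v in votes]

    def classify(value):
        return names[nearest(centers, value)]

    return classify


# Six unlabeled measurements and only two labeled ones (hypothetical data).
unlabeled = [1.0, 1.3, 0.7, 8.9, 9.4, 8.6]
labeled = [(1.1, "cat"), (9.0, "dog")]
classify = cluster_then_label(unlabeled, labeled, centers=[0.0, 5.0])
small_result = classify(0.9)
large_result = classify(8.0)
```

Only two of the eight values carry a label, yet every new value can be named, because the unlabeled examples do most of the work of revealing where the groups lie.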

Reinforcement learning procedures use rewards and punishments to shape the behavior of a system with respect to one or several specific goals. Unlike supervised and unsupervised learning systems, reinforcement learning systems are not generally trained on an existent dataset and instead learn primarily from the feedback they gather through performing actions and observing the consequences. In systems of this kind, the machine is tasked with discovering behaviors that result in the greatest reward, an approach which is particularly applicable to robotics and tasks like learning to play a board game in which it is possible to explicitly define the characteristics of a successful action but not how and when to perform those actions in all possible scenarios.
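The idea of learning from rewards rather than from an existing dataset can be illustrated with a "multi-armed bandit," one of the simplest reinforcement learning settings. The sketch below uses an epsilon-greedy strategy; the reward probabilities, step count, and function name are assumptions chosen for the example.

```python
import random


def run_bandit(reward_probabilities, steps=2000, epsilon=0.1, seed=0):
    """Learn which action pays off most often purely from observed rewards."""
    rng = random.Random(seed)
    counts = [0] * len(reward_probabilities)
    estimates = [0.0] * len(reward_probabilities)
    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: occasionally try a random action.
            action = rng.randrange(len(estimates))
        else:
            # Exploit: otherwise take the action that looks best so far.
            action = max(range(len(estimates)), key=lambda a: estimates[a])
        reward = 1.0 if rng.random() < reward_probabilities[action] else 0.0
        counts[action] += 1
        # Update the running average reward for the chosen action.
        estimates[action] += (reward - estimates[action]) / counts[action]
    return estimates, counts


# The learner is never told these probabilities; it only sees rewards.
estimates, counts = run_bandit([0.2, 0.8])
```

The system is told what counts as success (a reward of 1) but not which action to take. It discovers the better action by trying both and observing the consequences.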

What Is Deep Learning?

From Alan Turing’s writings onwards, the history of machine learning has been marked by alternating periods of optimism and discouragement over the field’s prospects for applying its conceptual advancements to practical systems and, in particular, to the construction of a general-purpose artificial intelligence. These periods of discouragement, which are often called AI winters, have generally stemmed from the realization that a particular conceptual model could not be easily scaled from simple test problems to more complex learning tasks. This occurred in the 1960s when Marvin Minsky and Seymour Papert demonstrated that single-layer perceptrons could not solve linearly inseparable problems such as the exclusive-or (XOR) function. In the late 1980s, there was some initial excitement over the backpropagation algorithm’s ability to overcome this issue. But another AI winter occurred when it became clear that the algorithm’s theoretical capabilities were practically constrained by computationally intensive training processes and the limited hardware of the time.

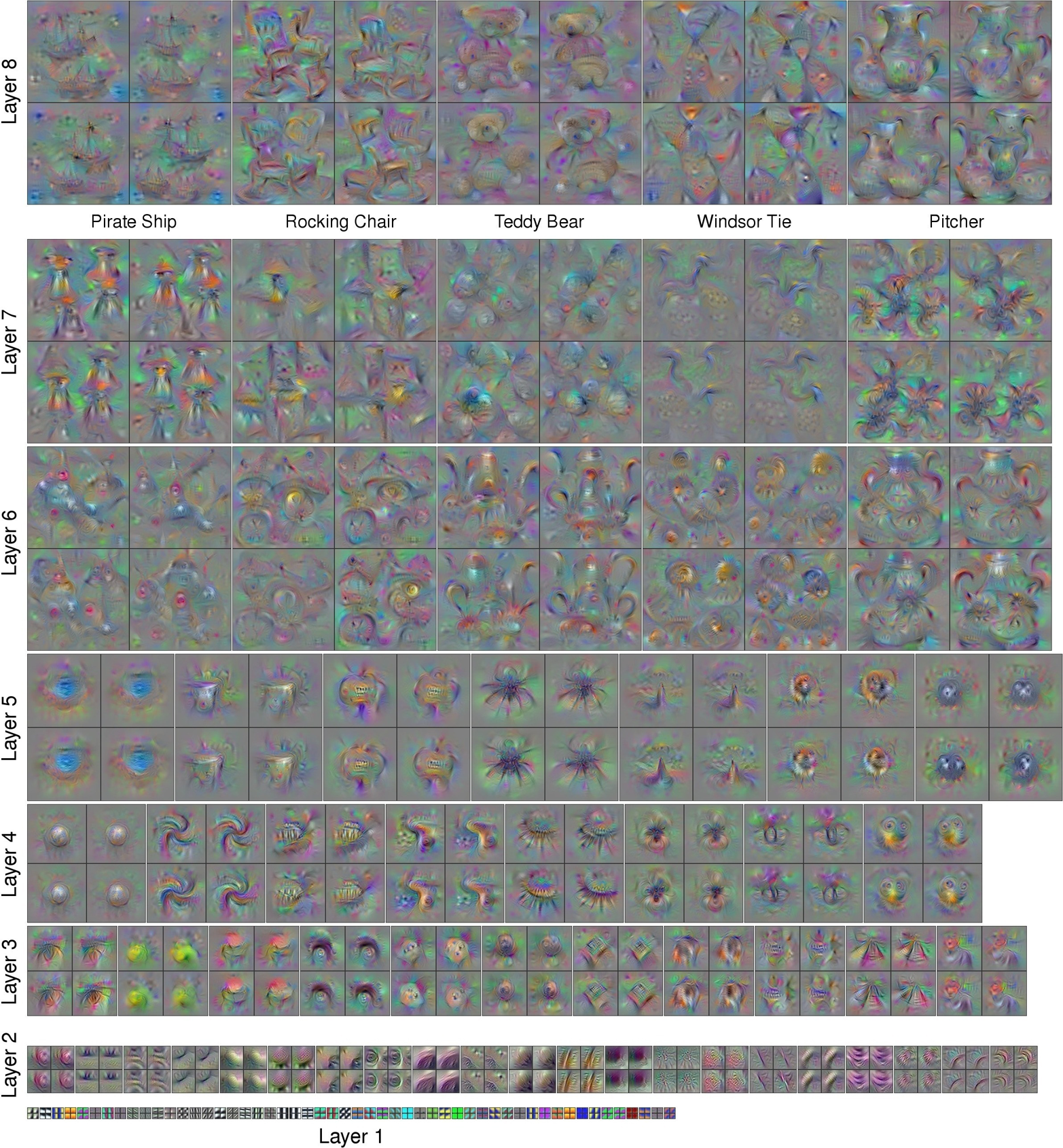

Over the last decade, a series of technical advances in the architecture and training procedures associated with artificial neural networks, along with rapid progress in computing hardware, have contributed to a renewed optimism for the prospects of machine learning. One of the central ideas driving these advances is the realization that complex patterns can be understood as hierarchical phenomena in which simple patterns are used to form the building blocks for the description of more complex ones, which can in turn be used to describe even more complex ones. The systems that have arisen from this research are referred to as “deep” because they generally involve multiple layers of learning systems which are tasked with discovering increasingly abstract or “high-level” patterns. This approach is often referred to as hierarchical feature learning.

As we saw in our earlier discussion of the process of recognizing a human face, learning about a complex idea from raw data is challenging because of the immense variability and noise that may exist within the data samples representing a particular concept or object.

Rather than trying to correlate raw pixel information with the notion of a human face, we can break the problem down into several successive stages of conceptual abstraction (see Figure 1-10). In the first layer, we might try to discover simple patterns in the relationships between individual pixels. These patterns would describe basic geometric components such as lines. In the next layer, these basic patterns could be used to represent the underlying components of more complex geometric features such as surfaces, which could be used by yet another layer to describe the complex set of shapes that compose an object like a human face.

As it turns out, the backpropagation algorithm and other earlier machine learning models are capable of achieving results comparable to those associated with more recent deep learning models, given sufficient training time and hardware resources. It should also be noted that many of the ideas driving the practical advances of deep learning have been mined from various components of earlier models. In many ways, the recent successes of deep learning have less to do with the discovery of radically new techniques than with a series of subtle yet important shifts in our understanding of various component ideas and how to combine them. Nevertheless, a well-timed shift in perspective, coupled with diminishing technical constraints, can make a world of difference in exposing a series of opportunities that may have been previously conceivable but were not practically achievable.

As a result of these changes, engineers and designers are poised to approach ever more complex problems through machine learning. They will be able to produce more accurate results and iterate upon machine-learning-enhanced systems more quickly. The improved performance of these systems will also enable designers to include machine learning functionality that would have once required the resources of a supercomputer in mobile and embedded devices, opening a wide range of new applications that will greatly impact users.

As these technologies continue to progress over the next few years, we will continue to see radical transformations in an astounding number of theoretical and real-world applications from art and design to medicine, business, and government.

Enhancing Design with Machine Learning

Parsing Complex Information

Computers have long offered peripheral input devices like microphones and cameras, but despite their ability to transmit and store the data produced by these devices, they have not been able to understand it. Machine learning enables the parsing of complex information from a wide assortment of sources that were once completely indecipherable to machines.

The ability to recognize spoken language, facial expressions, and the objects in a photograph enables designers to transcend the expressive limitations of traditional input devices such as the keyboard and mouse, opening an entirely new set of interaction paradigms that will allow users to communicate ideas in ever more natural and intuitive ways.

In the sections that follow, we will look more closely at some of these opportunities. But before doing so, it should be noted that some of the possibilities we will explore currently require special hardware or intensive computing resources that may not be practical or accessible in all design contexts at this time. For example, the Microsoft Kinect, which allows depth sensing and body tracking, is not easily paired with a web-based experience. The quickly evolving landscape of consumer electronics will progressively deliver these capabilities to an ever wider spectrum of devices and platforms. Nevertheless, designers must take these practical constraints into consideration as they plan the features of their systems.

Enabling Multimodal User Input

In our everyday interactions with other people, we use hand gestures and facial expressions, point to objects and draw simple diagrams. These auxiliary mechanisms allow us to clarify the meaning of ideas that do not easily lend themselves to verbal language. They provide subtle cues, enriching our descriptions and conveying further implications of meaning like sarcasm and tone. As Nicholas Negroponte said in The Architecture Machine, “it is gestures, smiles, and frowns that turn a conversation into a dialogue.”7

In our communications with computers, we have been limited to the mouse, keyboard, and a much smaller set of linguistic expressions. Machine learning enables significantly deeper forms of linguistic communication with computers, but there are still many ideas that would be best expressed through other means—visual, auditory, or otherwise. As machine learning continues to make a wider variety of media understandable to the computer, designers should begin to employ “multimodal” forms of human-computer interaction, allowing users to convey ideas through the optimal means of communication for a given task. As the saying goes, “a picture is worth a thousand words”—at least when the idea is an inherently visual one.

For example, let’s say the user needed a particular kind of screwdriver but didn’t know the term “Phillips head.” Previously, he might have tried Googling a variety of search terms or scouring through multiple Amazon listings. With multimodal input, the user could instead tell the computer, “I’m looking for a screwdriver that can turn this kind of screw” and then upload a photograph or draw a sketch of it. The computer would then be able to infer which tool was needed and point the user to possible places to purchase it.

Conducting each exchange in the most appropriate modality makes communication more efficient and precise. Interactions between user and machine become deeper and more varied, making the experience of working with a computer less monotonous and therefore more enjoyable. Complexity and nuance are preserved where they might otherwise have been lost to a translation between media.

This heightened expressivity, made possible by machine learning’s ability to extract meaning from complex and varied sources, will dramatically alter the nature of human–computer interactions and require designers to rethink some of the longstanding principles of user interface and user experience design.

New Modes of Input

Visual Inputs

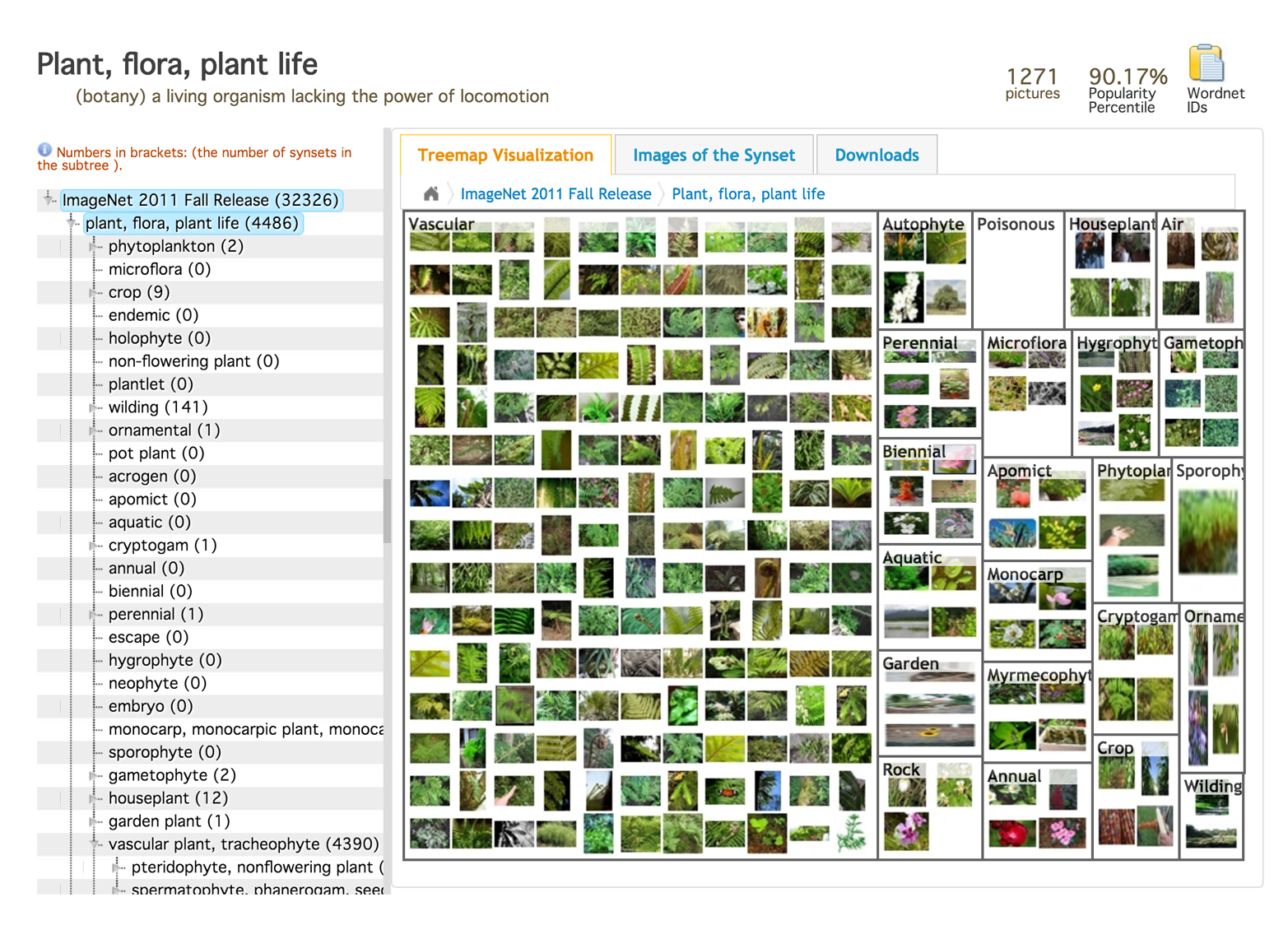

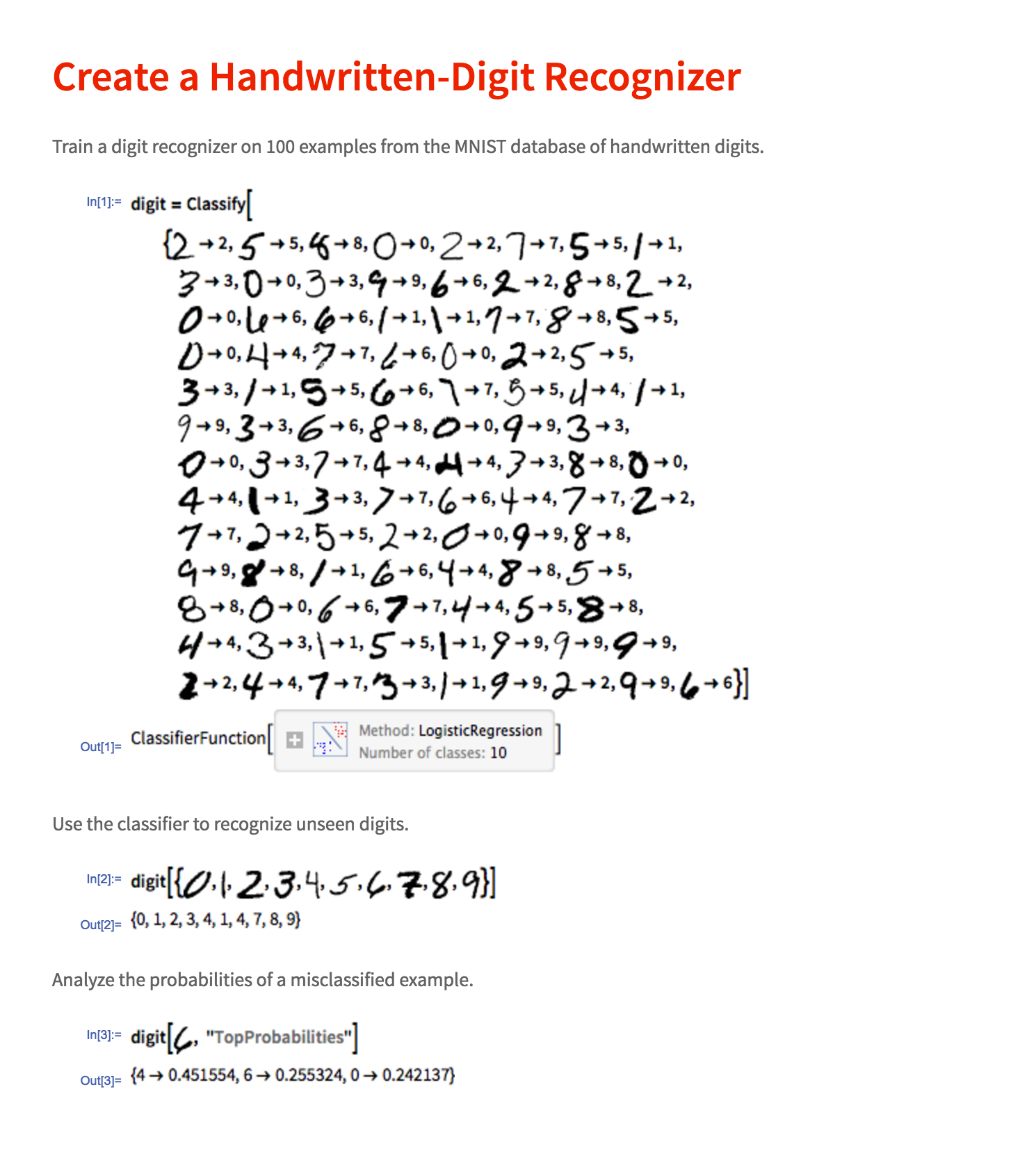

The visible world is full of nuanced information that is not easily conveyed through other means. For this reason, extracting information from images has been one of the primary applied goals throughout the history of machine learning. One of the earliest applications in this domain is optical character recognition: the task of decoding textual information, either handwritten or printed, from photographic sources (see Figure 1-11). This technology is used in a wide range of real-world applications, from the postal service’s need to quickly decipher address labels to Google’s effort to digitize and make searchable the world’s books and newspapers. The pursuit of optical character recognition systems has helped to drive machine learning research in general and has remained one of the key test problems for assessing the performance of newly invented machine learning algorithms.

More recently, researchers have turned their attention to more complex visual learning tasks, many of which center around the problem of identifying objects in images. The goals of these systems range in both purpose and complexity. On the simpler end of the spectrum, object recognition systems are used to determine whether a particular image contains an object of a specific category such as a human face, cat, or tree. These technologies extend to more specific and advanced functionality, such as the identification of a particular human face within an image. This functionality is used in photo-sharing applications as well as security systems used by governments to identify known criminals.

Going further, image tagging and image description systems are used to generate keywords or a sentence that describes the contents of an image (see Figure 1-12). These technologies can be used to aid image-based search processes as well as to help visually impaired users extract information from sources that would otherwise be inaccessible to them. Further still, image segmentation systems are used to associate each pixel of a given image with the category of object represented by that region of the image. In an image of a suburban home, for instance, all of the pixels associated with the patio floor would be painted one color while the grass, outdoor furniture, and trees depicted in the image would each be painted with their own unique colors, creating a kind of pixel-by-pixel annotation of the image’s contents.

Other applications of machine learning to visual tasks include depth estimation and three-dimensional object extraction. These technologies are applicable to tasks in robotics and the development of self-driving cars as well as the automated conversion of conventional two-dimensional movies into stereoscopic ones.

The range of visual applications for machine learning is too vast to enumerate fully here. In general, though, a great deal of machine learning research and applied work has been, and will continue to be, directed to the numerous component goals of turning once impenetrable pixel grids into high-level information that can be acted upon by machines and used to aid users in a wide assortment of complex tasks.

Aural Inputs

Like visual information, auditory information is highly complex and conveys a wide range of content, from human speech to music to bird calls, that is not easily transmitted through other media. The ability of machines to understand spoken language has immense implications for the development of more natural interaction paradigms. However, the diverse vocal characteristics and speech patterns of different speakers make this task difficult for machines and even, at times, for human listeners. Though a highly reliable speech-to-text system can greatly benefit human–computer interactions, even a slightly less reliable system can result in great frustration and lost productivity for the user. As with visual learning systems, immense progress has been made in the complex task of speech recognition in recent years, primarily as a result of breakthroughs in deep learning research. For most applications, these technologies have now matured to the point where their utility generally outweighs any residual imprecision in their capabilities.

Aside from speech recognition, the ability to recognize a piece of music aurally has been another popular area of focus for machine learning research. The Shazam app allows users to identify songs by letting the software capture a short snippet of the audio. This system, however, can only identify a song from its original recording rather than allowing users to sing or hum a melody they wish to identify. The SoundHound app offers this functionality, though it is generally less reliable than Shazam’s recognition of recordings. This is understandable because Shazam can utilize subtle patterns in the recorded audio information to produce accurate recognition results, whereas SoundHound must attempt to account for the potentially highly imprecise or out-of-tune approximation of the recording by the user’s own voice. Both systems, however, provide users with capabilities that would be hard to supplant through other means. Most readers will be able to recall a time in which they hummed a melody to friends, hoping someone might be able to identify the song. The underlying technologies used by these systems can also be directed towards other audio recognition tasks such as the identification of a bird from its call or the identification of a malfunctioning mechanical system from the noise it makes.

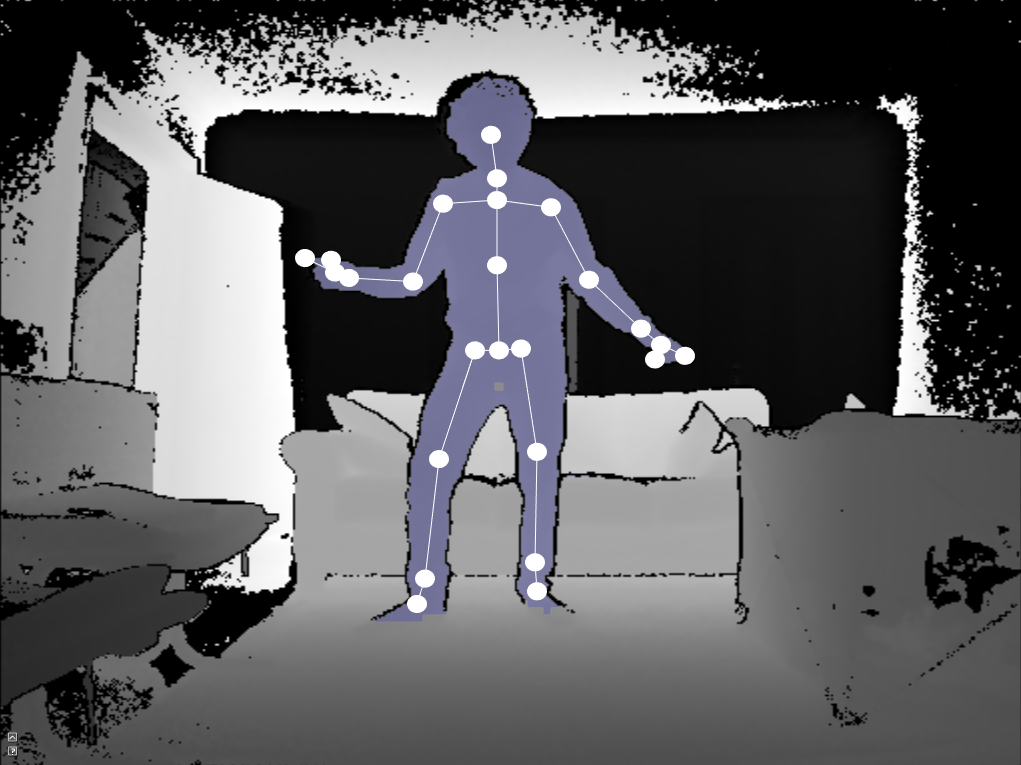

Corporeal Inputs

Body language can convey subtle information about a user’s emotional state, augment or clarify the tone of a verbal expression, or be used to specify what object is being discussed through the act of pointing. Machine learning systems, in conjunction with a range of new hardware devices, have enabled designers to provide users with mechanisms for communicating with machines through the endlessly expressive capabilities of the human body. See Figure 1-13.

Devices such as the Microsoft Kinect and Leap Motion use machine learning to extract information about the location of a user’s body from photographic data produced by specialized hardware. The Kinect 2 for Windows allows designers to extract 25 three-dimensional joint positions through its full-body skeleton tracking feature and more than a thousand three-dimensional points of information through its high-definition face tracking feature. The Leap Motion device provides high-resolution positioning information related to the user’s hands.

These forms of input data can be coupled with machine-learning-based gesture or facial expression recognition systems, allowing users to control software interfaces with the more expressive features of their bodies and enabling designers to extract information about the user’s mood.

To some extent, similar functionality can be produced using lower-cost and more widely available camera hardware. For the time being, these specialized hardware systems help to make up for the limited precision of their underlying machine learning systems. However, as the capabilities of these machine learning tools quickly advance, the need for specialized hardware in addressing these forms of corporeal input will be diminished or rendered unnecessary.

In addition to these input devices, health tracking devices like Fitbit and Apple Watch can also provide designers with important information about the user and her physical state. From detecting elevated stress levels to anticipating a possible cardiac event, these forms of user input will prove invaluable in better serving users and even saving lives.

Environmental Inputs

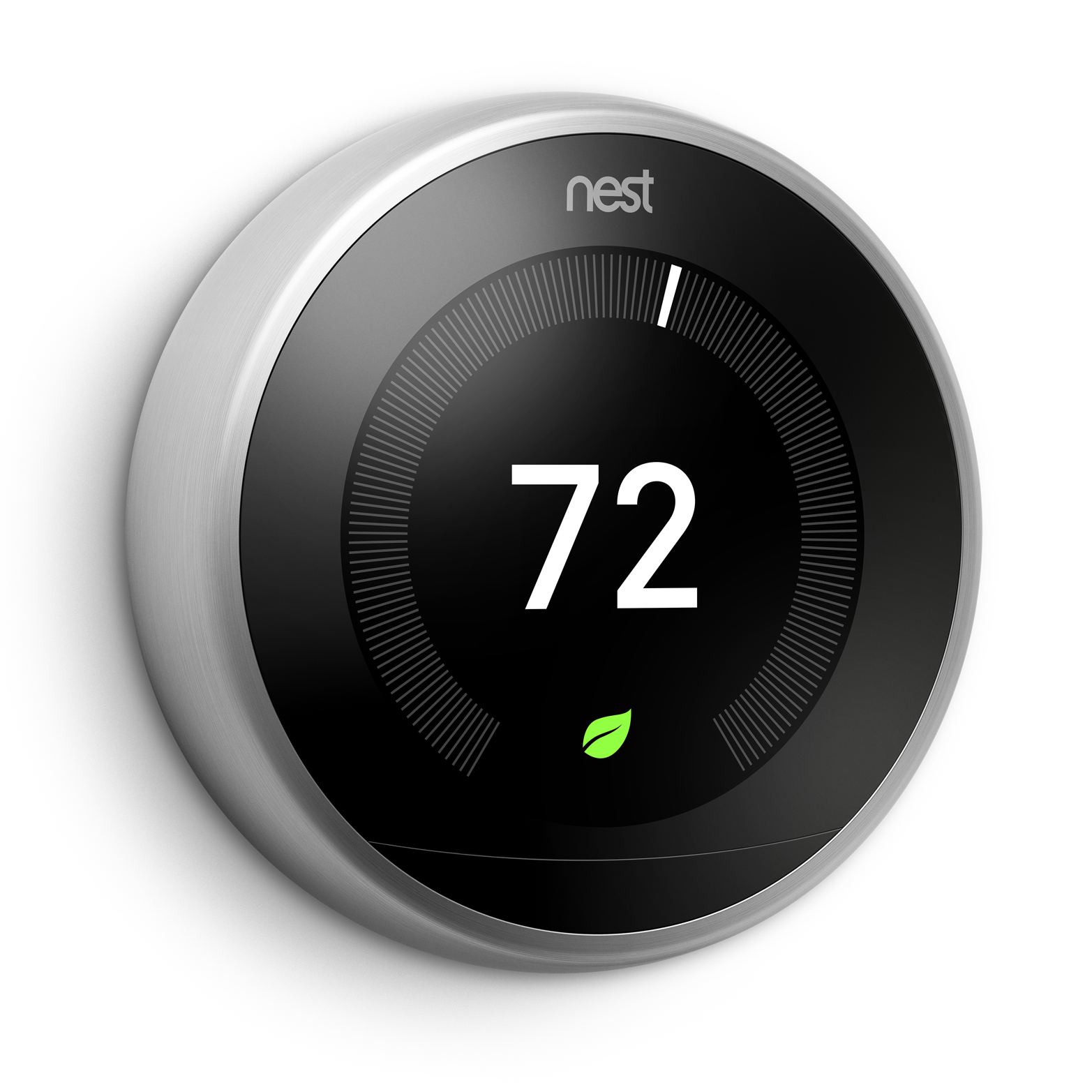

Environmental sensors and Internet-connected objects can provide designers with a great deal of information about users’ surroundings, and therefore about the users themselves. The Nest Learning Thermostat (Figure 1-14), for instance, tracks patterns in homeowners’ behaviors to determine when they are at home as well as their desired temperature settings at different times of day and during different seasons. These patterns are used to automatically tune the thermostat’s settings to meet user needs and make climate control systems more efficient and cost-effective.

As Internet-of-Things devices become more prevalent, these input devices will provide designers with new opportunities to assist users in a wide assortment of tasks from knowing when they are out of milk to when their basement has flooded.

Abstract Inputs

In addition to physical forms of input, machine learning allows designers to discover implicit patterns within numerous facets of a user’s behavior. These patterns carry inherent meanings, which can be learned from and acted upon, even if the user is not expressly aware of having communicated them. In this sense, these implicit patterns can be thought of as input modalities that, in practice, serve a very similar purpose to the more tangible input modes described above.

Mining behavioral patterns through machine learning can help designers to better understand users and serve their needs. At the same time, these patterns can also help designers to understand the products or services they offer as well as the implicit relationships between these offerings. Behavioral patterns can be mined in relation to an individual user or aggregated from the collective behaviors of numerous users.

One form of pattern mining that can be useful in serving an individual user as well as improving the overall system is the discovery of frequently coupled behaviors within the sequence of actions performed by the user. For example, a user may purchase milk whenever he buys breakfast cereal. Noticing this pattern gives designers the opportunity to construct interface mechanisms that will allow the user to address his shopping needs more easily and efficiently. When the user adds cereal to the shopping cart, a modal interface suggesting the purchase of milk could be presented to him. Alternatively, these two items could be shown in proximity to one another within the interface, despite the fact that these two products would generally be situated within two different areas of the store. Rather than presenting the user with separate interfaces for each item, the system could instead dynamically generate a single interface element that would allow the user to purchase these frequently coupled items with one click. In addition to benefiting the user’s shopping experience and enabling multiuser recommendation engines, the system’s knowledge of these correlated behaviors can be used to make the system itself more efficient, aiding business processes like inventory estimation.
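A rough sketch of how frequently coupled purchases might be detected is shown below. It simply counts how often each pair of items appears in the same basket; production systems typically use more scalable association-rule algorithms. The basket data and the `min_support` threshold are invented for illustration.

```python
from collections import Counter
from itertools import combinations


def frequent_pairs(baskets, min_support):
    """Return item pairs that co-occur in at least `min_support` of all baskets."""
    pair_counts = Counter()
    for basket in baskets:
        # Sort so that ("cereal", "milk") and ("milk", "cereal") count as one pair.
        for pair in combinations(sorted(set(basket)), 2):
            pair_counts[pair] += 1
    threshold = min_support * len(baskets)
    return {pair: n for pair, n in pair_counts.items() if n >= threshold}


# Hypothetical purchase history: one list per shopping trip.
baskets = [
    ["cereal", "milk"],
    ["cereal", "milk", "bananas"],
    ["bread", "butter"],
    ["cereal", "milk", "bread"],
]
pairs = frequent_pairs(baskets, min_support=0.5)
```

Here cereal and milk appear together in three of the four baskets, so they are the only pair that clears the 50 percent threshold. An interface could use such a result to trigger the milk suggestion or the combined one-click element described above.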

Mining user behavior patterns can also help businesses to better understand who their customers are. If a user frequently purchases diapers, for instance, it is likely that the user is a parent or caregiver. This kind of auxiliary knowledge of the user can help designers to produce interfaces that better address their target customer demographics and influence business and marketing decisions such as the determination of which advertising venues will yield the greatest influx of new customers. Designers should be careful, however, not to make assumptions about users that may embarrass or offend them by characterizing them in ways that conflict with the public persona they wish to convey.

In one famous incident, the retailer Target used purchasing patterns to determine whether a given user was pregnant so that the store could better target this much sought-after category of customer.8 Though this practice may be welcomed by some, in at least one case it created an uncomfortable situation when an angry father came to a Target store demanding to know why his high-school-aged daughter had received numerous coupons targeted at expectant mothers. The man had not been aware that his daughter was pregnant and upon learning the truth from his daughter, apologized to the retailer. Nevertheless, these kinds of customer insights can be unsettling and require great care in their treatment by designers. Issues of this kind will be discussed in greater detail in the section Mitigating Faulty Assumptions.

When handled with care, however, user insights can provide great value to customers and businesses alike. Understanding a user’s behavioral patterns can also aid security processes such as the detection of fraudulent use of a customer’s account information. Credit card companies and some retailers regularly use consumer purchasing patterns to assess whether a given purchase is fraudulent by checking whether the geographic location of the transaction and the items purchased are in line with the customer’s history. If an anomaly is detected, the transaction will be blocked and the customer will be notified that their financial information may be compromised.
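The kind of check described here can be approximated, very loosely, by comparing a new transaction against a customer’s history. The sketch below flags a purchase made in an unfamiliar city or for an unusually large or small amount. The field names, the `max_z` cutoff, and the data are all assumptions, and real fraud systems weigh far more signals than these two.

```python
from statistics import mean, stdev


def looks_anomalous(history, transaction, max_z=3.0):
    """Flag a purchase in an unfamiliar city or with an unusual amount."""
    known_cities = {t["city"] for t in history}
    amounts = [t["amount"] for t in history]  # needs at least two past purchases
    spread = stdev(amounts)
    # How many standard deviations the new amount lies from the usual amount.
    z_score = abs(transaction["amount"] - mean(amounts)) / spread if spread else 0.0
    return transaction["city"] not in known_cities or z_score > max_z


# Hypothetical purchase history for one customer.
history = [
    {"city": "Boston", "amount": 20.0},
    {"city": "Boston", "amount": 35.0},
    {"city": "Boston", "amount": 25.0},
    {"city": "Boston", "amount": 30.0},
]
typical = looks_anomalous(history, {"city": "Boston", "amount": 28.0})
huge_amount = looks_anomalous(history, {"city": "Boston", "amount": 900.0})
new_city = looks_anomalous(history, {"city": "Lagos", "amount": 30.0})
```

A flag of this kind is only a hint that something is out of line with the customer’s history. How the interface communicates it matters as much as the detection itself, since a legitimate purchase made while traveling would be flagged just the same.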

Creating Dialogue

Conventional user interfaces tend to rely upon menu systems as the primary means of organizing the set of features available to the user. One positive aspect of this approach is that it provides a clear and explicit mechanism for the user to explore the system and learn what is possible within it. Hierarchical menus enable users to quickly focus their search for a specific feature by curating the system’s functionality into neatly delineated, domain-specific groupings. If the user is unaware of a particular feature, its proximity to other more familiar functions may lead the user to experiment with it, expanding her knowledge of the system in an organic manner.

The downside to hierarchical menu systems, however, is that once users have learned the system’s capabilities, they still have to spend a great deal of time navigating menus in order to reach commonly used features. This tedious labor is sometimes mitigated by the software’s offering of keyboard shortcuts. But there is a limit to the number of shortcuts that can be plausibly utilized, and this approach is not easily embedded in web and mobile interfaces.

With each additional feature, conventional menu systems become increasingly complex and hard to navigate. For professional tools like 3D-modeling and video-editing software, it may be reasonable to expect the user to commit to a steep learning curve. But for intelligent assistants and other applications with broad feature sets aimed at the average user, it would be impractical or counterproductive to present the system’s functionality through a menu system. Imagine what Siri would look like if it were to take this approach!

Programmers may be familiar with a different mechanism for facilitating interaction: the “read-evaluate-print loop” (or REPL). In this kind of interface, the programmer issues a specific textual command, the machine performs it, prints the output, and waits for the user to issue another command. This back-and-forth dialogue takes the familiar form of a messaging app and allows programmers to work more quickly and dynamically by circumventing the need for the tedious and incessant navigation of menu hierarchies.
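For readers who have not used one, the loop can be sketched in a few lines of Python. The command names (`add`, `upper`, `quit`) are hypothetical, and the `output` parameter is our own addition so that the loop can be exercised without a keyboard.

```python
def evaluate(command, commands):
    """Look up the first word of a non-empty command and apply it to the rest."""
    name, *args = command.split()
    if name not in commands:
        return f"unknown command: {name}"
    return str(commands[name](*args))


def repl(lines, commands, output=print):
    """Read each line, evaluate it, print the result, and loop until 'quit'."""
    for line in lines:                          # read
        if not line.strip():
            continue                            # ignore blank lines
        if line.strip() == "quit":
            break
        output(evaluate(line, commands))        # evaluate, then print


# A tiny, hypothetical command set (no argument-count checking).
COMMANDS = {
    "add": lambda a, b: float(a) + float(b),
    "upper": lambda *words: " ".join(words).upper(),
}

# Drive the loop with a scripted session and record what it prints.
transcript = []
repl(["add 2 3", "upper hello world", "fly", "quit", "add 1 1"],
     COMMANDS, output=transcript.append)
```

Passing `sys.stdin` as `lines` would turn the same function into an interactive session. Note that nothing in the loop tells the user that `add` and `upper` exist, a limitation that becomes important for conversational interfaces.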

This conversational format also provides an ideal medium for facilitating the kinds of multimodal interactions described in the previous section. In this machine-learning-enhanced context, we can imagine many vibrant and varied exchanges between the user and machine. Much like a whiteboarding session held by human collaborators, the user and software would take turns addressing aspects of the task at hand, solving its component problems and clarifying their ideas through an assortment of verbal expressions, diagrams, and gestures.

Assisting Feature Discovery

Despite its many advantages, an open-ended dialogue does little to make the system’s capabilities known to the user. To make the most of a REPL programming interface, the user must hold prior knowledge of the available features or make frequent reference to the documentation, which is likely to negate any efficiency gains over menu-based workflows. For intelligent interfaces, offloading the process of feature discovery to auxiliary documentation would be even less practical.

The potential limitations of a conversational user interface can be seen in our interactions with first-generation intelligent assistants such as Siri, Cortana, and Echo (Figure 1-15). In this context, feature discovery often consists of the user scouring his or her mind for phrases that might lead to some amusing or useful response from the software.

One solution to the problem of feature discovery that is offered by many intelligent assistants is to provide the user with a set of example inputs that demonstrate a variety of features available within the system. The computational knowledge engine WolframAlpha embeds examples directly into its interface, helping new users to become acquainted with the system’s mode of interaction and the kinds of knowledge it is able to access. These well-curated examples continue to engage even seasoned users, inviting further exploration into the entertaining or informative pathways they reveal.

Demonstrating an application’s full range of features through a series of embedded examples can, however, begin to make the software look suspiciously like a reference manual. Rather than handpicking a static group of examples, machine learning or conventional programmatic logic can be used to dynamically select examples that are contextually relevant to the user’s activity. Going further, machine intelligence could be used to generate speculative extensions of the user’s trajectory, providing a series of possible next steps in a range of conceptual directions for the user to consider. This mechanism would not only extend the user’s understanding of the interface and its possibilities, but also enrich his or her fluency with the task or domain of interest at hand.

Another common mechanism for helping users to locate features within a conversational interface is to offer suggested meanings when their statements are not clear to the system. This can be helpful in correcting the improperly formed expression of a command that is already more-or-less known to the user. But if the user is completely unaware of some potentially advantageous feature, he will not think to ask for it in the first place, and this mechanism can provide no assistance.
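A very simple version of this “did you mean” mechanism can be built on string similarity, using `get_close_matches` from Python’s standard `difflib` module. The command list and the 0.6 similarity cutoff below are assumptions for illustration; real assistants interpret meaning rather than spelling.

```python
from difflib import get_close_matches

# Hypothetical commands the system understands.
KNOWN_COMMANDS = ["set alarm", "set timer", "play music", "send message"]


def interpret(utterance, known=KNOWN_COMMANDS):
    """Run an exact match, suggest near misses, or admit defeat."""
    text = utterance.lower().strip()
    if text in known:
        return ("run", text)
    # Offer up to two known commands that closely resemble the input.
    suggestions = get_close_matches(text, known, n=2, cutoff=0.6)
    if suggestions:
        return ("suggest", suggestions)
    return ("unknown", [])


exact = interpret("Play music")
near_miss = interpret("set alarn")
unrelated = interpret("order pizza")
```

The near miss is recovered because the user already knew roughly what to ask for. The unrelated request yields no suggestion at all, which illustrates the limit described above: the mechanism can repair a malformed command but cannot introduce a feature the user has never thought to request.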

We could, in theory, defer the problem of feature discovery to the march of progress in technology and assume that computers will eventually be intelligent enough to perform any task that could conceivably be asked of them. Yet, the machine’s ability to handle any request would not inherently translate to the user’s awareness of every possible request that might prove useful to his goals. Furthermore, the user may not be familiar enough with the problem domain to develop a set of goals in the first place.

Think for a moment about what question you might ask a Nobel laureate in chemistry. Without a strong working knowledge of the field, you would be unlikely to formulate a question that took full advantage of her expertise. To make the most of this opportunity, you would likely need some guidance in finding an entry point to a fruitful line of inquiry.

To assist users in performing complex or domain-specific tasks, designers should strive to build systems that not only respond to user requests but also enrich the user’s understanding of the domain itself. It is not enough for intelligent interfaces to offer an infinite variety of features; they must also find ways of connecting the user with the possibilities they contain.

Getting Acquainted

Perhaps the greatest challenge of any conversation is knowing where to begin. When you meet someone new, you cannot be sure that your personal experiences, professional background, or even the idioms and gestures you use will overlap with theirs. For this reason, we often begin with simple pleasantries and then attempt to establish common ground by posing basic questions about the other person’s interests, line of work, and family before diving into more specific subjects and inquiries.

Similarly, in designing conversational user interfaces, we must establish common ground with users and introduce them to the system’s capabilities and modes of interaction, since they are not explicitly detailed by a menu system.

A blank page can be stifling and may lead some users to lose interest immediately. For this reason, it is important to have the user get his feet wet as quickly as possible. Rather than simply showcasing example inputs that demonstrate a few basic features, the application could instead ask the user to perform a simple task that employs one of these features. This gives the user an initial confidence boost and helps to get the conversational juices flowing.

As the user gains traction through these initial exercises, the software can gradually become less proactive in soliciting specific actions and allow the user to explore the tool on his or her own terms. Over time, the system can continue to suggest new features to the user. But designers should be careful not to bombard users with too many new ideas as they become acquainted with the system.

Once the user is acclimated, her own explorations and activities can help the system to understand what features would be most useful to her. Subsequently, if the user is idle or appears to be at an impasse, the system can suggest contextually relevant new activities or features that the user has never explored. This form of assistance will be discussed further in the section Designing Building Blocks.

The user should, of course, always have the ability to opt out of these suggestions or redirect them toward more relevant functionality. Before leaving these introductory exercises, it is also important to make sure that users know how to get help from the system when they need it. Many new users will be eager to dive into the software as quickly as possible, leading them to rush through these important on-boarding exercises. In a conversational interface, an obvious and natural mechanism for seeking assistance from the system would be to use a keyword like “Help!” In some cases, however, the user’s confusion may come from a lack of familiarity or comfort with the open-ended nature of a conversational interface. For this reason, it may be valuable to provide a concrete and persistent interface element such as a help button that allows the user to quickly and unambiguously reach out for assistance.

Seeking Clarity

In an open-ended dialogue with an intelligent interface, it is only natural and perhaps even desirable for the user to develop the impression that anything is possible within the system. In most instances, however, this will not be the case. Though intelligent assistants give the appearance of broad conceptual versatility, their true capabilities are generally still limited to one or a handful of specific domains of functionality. Their ability to comprehend human language and map it to a particular function is also often somewhat brittle.

Designers can help to mitigate these technical limitations by providing conversational cues that lead the user away from broad or ambiguous statements, which cannot be easily parsed by the machine. A key component of this is to design interactions that set realistic expectations for the user and clearly indicate, through an example or a brief description, the kind of information that is being requested.

If an application prompted you to “say what’s on your mind,” it would be disappointing or embarrassing to dive into a longwinded and enthusiastic description of your ideas only to have the machine respond with a terse “I’m sorry, I don’t understand.”

Helping the user to focus on a specific task or to communicate a singular point of information is often most difficult at the beginning of a new interaction. The user may have several impressionistic or partially formed goals, but may not have sufficient clarity to articulate a clear point of entry to the system. The absence of accumulated contextual information that could otherwise be derived from the user’s most recent actions also makes it more difficult for the machine to infer the user’s intent at the outset of an interaction.

Rather than starting with an open-ended question, the system could instead pose a specific yet basic one that will help to establish context and get the conversation going so that further details can be solicited in subsequent exchanges. In many cases, it may be helpful to provide users with a few categorical options that will localize their goals to a specific subset of the system’s overall functionality. In the context of an e-commerce site, the system might open the conversation by asking whether the user is looking to purchase a specific item, browse for items in a particular section of the store, or make a return. Once the broad context has been established, the system can seek further details from the user by posing questions that extend organically from this point of entry. If the user indicated an interest in buying a specific item, the system might pose a series of questions about the desired size, materials, or optional accessories associated with that item.
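The e-commerce opening described above can be sketched as a small dialogue script in which each prompt offers a few categorical options and each option leads to a more specific follow-up question. This is only an illustrative sketch: the state names, the prompts, and the keyword matching in `next_step` are all assumptions made for the example, and a real system would likely rely on a natural language platform rather than substring checks.

```python
# Hypothetical dialogue script: each state has a prompt and a few options
# that map a keyword in the user's reply to the next state.
DIALOGUE = {
    "start": {
        "prompt": "Would you like to buy a specific item, browse a section, or make a return?",
        "options": {"buy": "item_size", "browse": "choose_section", "return": "order_number"},
    },
    "item_size": {"prompt": "What size do you need?", "options": {}},
    "choose_section": {"prompt": "Which section would you like to browse?", "options": {}},
    "order_number": {"prompt": "What is your order number?", "options": {}},
}


def next_step(state, reply):
    """Return the next state, or the same state if no option matched the reply."""
    for keyword, target in DIALOGUE[state]["options"].items():
        if keyword in reply.lower():
            return target
    # Nothing matched: stay put so the system can repeat or rephrase the prompt.
    return state
```

Staying in the same state when no option matches gives the interface a natural place to repeat the options or explain what it did not understand, rather than silently failing.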

There is nothing more discouraging than having your ideas fall flat with no indication of what was confusing or how to better communicate your intended meaning. Perhaps the user’s expression was completely outside the machine’s domain of understanding. Or perhaps a single word choice or change of phrasing would have made the statement clear. But without any feedback from the machine, the user will be left in frustration to guess at the problem.

When the user’s input has been understood, the system should restate what it has understood before moving on to subsequent exchanges. If the system has not understood the user, it should try to indicate the nature of the problem to the best of its ability.

Many machine learning and natural language processing platforms can be configured to return multiple speculative interpretations of the user’s expression alongside confidence scores that numerically indicate the machine’s certainty about the correctness of each interpretation it offers. Whenever possible, intelligent applications should try to correlate these speculative interpretations with broader contextual knowledge of the user’s activities in order to eliminate the least plausible options. If this approach does not uncover a single unambiguous meaning, designers should consider presenting a handful of the most highly ranked interpretations to the user directly. Exposing users to these speculative interpretations will help them to understand the nature of the problem and rephrase or redirect a statement.
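The decision process described above might look something like the following sketch. It assumes the language platform has already returned a list of interpretations with confidence scores; the `Interpretation` class, the intent labels, and the `margin` threshold are hypothetical names and values chosen for illustration, not part of any particular platform's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interpretation:
    intent: str        # hypothetical label for one possible meaning
    confidence: float  # score between 0 and 1 reported by the language platform


def resolve(interpretations, plausible_intents, margin=0.3, max_options=3):
    """Return ("accept" | "ask" | "unknown", ranked list of interpretations)."""
    # Use contextual knowledge to drop meanings that make no sense right now.
    candidates = sorted(
        (i for i in interpretations if i.intent in plausible_intents),
        key=lambda i: i.confidence,
        reverse=True,
    )
    if not candidates:
        return "unknown", []
    # Accept only when one meaning clearly stands out from the runner-up.
    if len(candidates) == 1 or candidates[0].confidence - candidates[1].confidence >= margin:
        return "accept", candidates[:1]
    # Otherwise show the user a handful of the highest-ranked meanings.
    return "ask", candidates[:max_options]


# Made-up scores for an ambiguous request about music.
guesses = [
    Interpretation("play_music", 0.48),
    Interpretation("buy_music", 0.41),
    Interpretation("set_alarm", 0.06),
]
```

If the user is working in a music player that has no store, calling `resolve(guesses, {"play_music", "set_alarm"})` leaves one clear winner. If all three intents are plausible, the two music readings are too close to call, and the function returns the ranked options so that the interface can present them to the user.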

Breaking Interactions into Granular Exchanges

One of the most important things designers can do to facilitate successful exchanges between users and intelligent interfaces is to help users break multistage workflows and their component interactions into small conceptual parcels. Simple expressions that communicate an individual command or point of information are more easily understood by machine learning and natural language processing systems than complex, multifaceted statements. Designers can help the user to deliver concise statements by creating interfaces and workflows that lead the user through a series of simple exercises or decision points that each address a single facet of a much larger and more complex task.

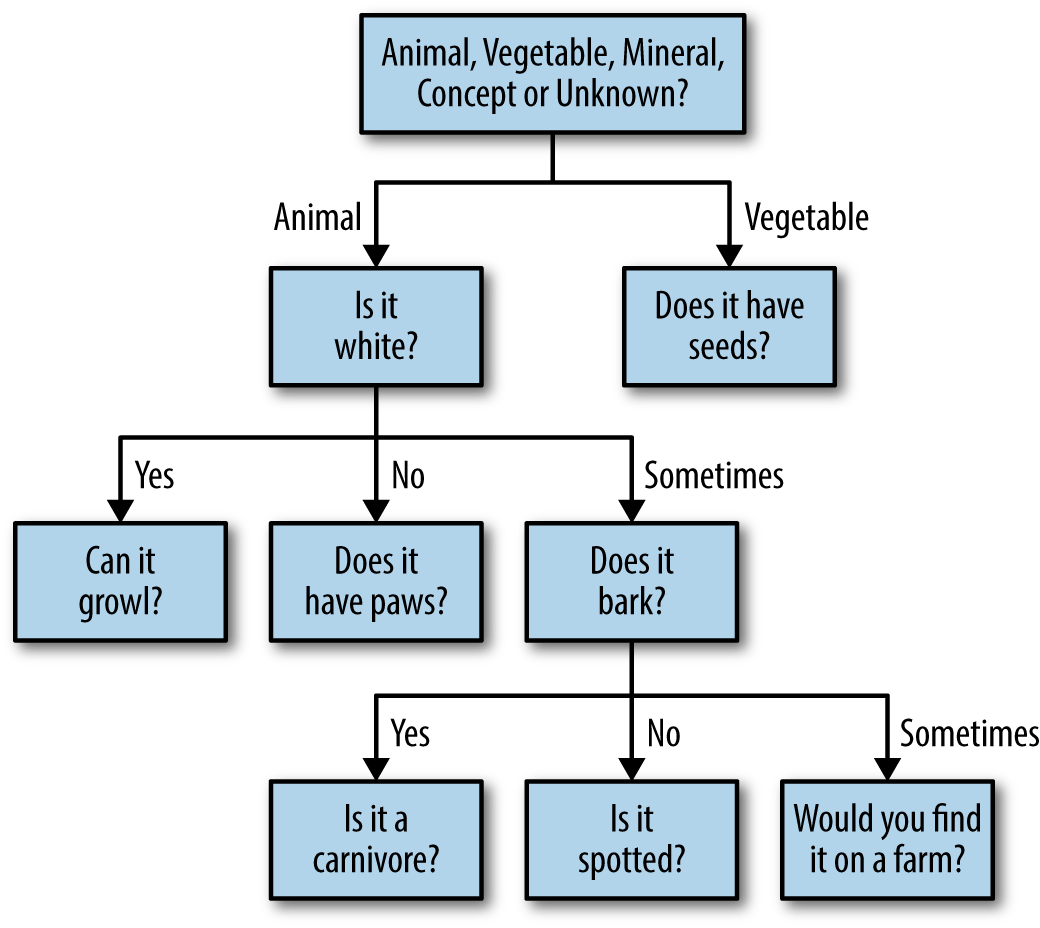

An excellent example of this approach is 20Q, an electronic version of the game Twenty Questions. Like the original road trip game, 20Q asks the user to think of an object or famous person and then poses a series of multiple choice questions in order to discover what the user has in mind (see Figure 1-16).

The first question posed in this process is always:

“Is it classified as Animal, Vegetable, Mineral, or Concept?”

Subsequent questions try to uncover further distinctions that extend from the information that has already been provided by the user. For example, if the answer to the first question were “Animal,” then the next question posed might be “Is it a mammal?” If instead the first answer were “Vegetable,” the next question might be “Is it usually green?” Each of these subsequent questions can be answered with one of the following options: Yes, No, Unknown, Irrelevant, Sometimes, Maybe, Probably, Doubtful, Usually, Depends, Rarely, or Partly.

20Q guesses the correct person, place, or thing 80% of the time after 20 questions and 98% of the time after 25 questions. This system uses a kind of machine learning algorithm called a learning decision tree (or classification tree) to determine the sequence of questions that will lead to the correct answer in the smallest number of steps possible. Using the data generated by previous users’ interactions with the system, the algorithm learns the relative value of each question in removing as many incorrect options as possible so that it can present the most important questions to the user first.

For example, if it were already known that the user had a famous person in mind, it would likely be more valuable for the next question to be whether the person is living than whether the person has written a book, because only a small portion of all historic figures are alive today but many famous people have authored a book of one kind or another.
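To make the idea of a question's "relative value" more concrete, here is a minimal sketch of one way to score yes/no questions. It is not 20Q's actual algorithm. It assumes that each candidate carries a weight reflecting how often past players chose it, and it prefers the question that leaves the smallest expected weight of candidates still in play. The toy data and the function names are invented for the example.

```python
def expected_remaining(candidates, question):
    """Expected weight of candidates left after a yes/no answer to `question`.

    `candidates` maps a name to (weight, attributes). The weight is a
    hypothetical count of how often past players chose that candidate, and
    attributes is the set of questions whose answer is "yes" for it.
    """
    total = sum(weight for weight, _ in candidates.values())
    yes = sum(weight for weight, attrs in candidates.values() if question in attrs)
    no = total - yes
    # With probability yes/total the answer is "yes" and `yes` weight remains;
    # with probability no/total the answer is "no" and `no` weight remains.
    return (yes * yes + no * no) / total


def best_question(candidates, questions):
    """Pick the question expected to eliminate the most candidates."""
    return min(questions, key=lambda q: expected_remaining(candidates, q))


# Toy data: (times chosen by past players, questions answered "yes").
animals = {
    "dog": (4, {"mammal", "pet"}),
    "cat": (3, {"mammal", "pet"}),
    "whale": (1, {"mammal", "lives in water"}),
    "goldfish": (2, {"pet", "lives in water"}),
    "shark": (2, {"lives in water"}),
}
questions = ["mammal", "pet", "lives in water"]
```

Because the weights come from what earlier players actually had in mind, the ranking of questions shifts as more people play, which is one way to read the idea of learning from previous users' interactions.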

Though none of these questions individually encapsulates the entirety of what the user has in mind, a relatively small number of well-chosen questions can uncover the correct answer with surprising speed. In addition to aiding the system’s comprehension of users’ expressions, this process can benefit the users directly in their ability to communicate ideas more clearly and purposefully. This process can also make the task of communicating an idea more playful and engaging for the user.

Aside from guessing games, designers may find this approach to be useful in a wide range of human-computer interactions. At its core, this process can be seen as a mechanism for discovering an optimal path through a large number of interrelated decisions. From this perspective, we can imagine applying this approach to processes like the creation of a financial portfolio or the discovery of new music.

In developing a financial portfolio, users would be presented with a series of questions that relate to their financial priorities and constraints in order to find a set of investments that are well-suited to their long-term goals, risk tolerance, and spending habits. In the context of music or movie discovery, this process may help to overcome some of the limiting factors associated with standard recommendation engines. For example, a user may give a particular film a high rating because it stars one of his favorite actors, even though the film is of a genre that is generally of no interest to the user. From this rating, a recommendation engine is likely to suggest similar films without giving the user the opportunity to point out that the actor rather than the genre was the key factor in his rating. Using an interactive decision tree process, this important distinction could be more easily uncovered by the system.

Designing Building Blocks

The kinds of machine-learning-enhanced workflows described above can provide designers with powerful new tools for making complex tasks more comprehensible, efficient, and enjoyable for the user. At the same time, enabling these modes of interaction may require designers to diverge from at least some of the longstanding conventions of user interface and user experience design.

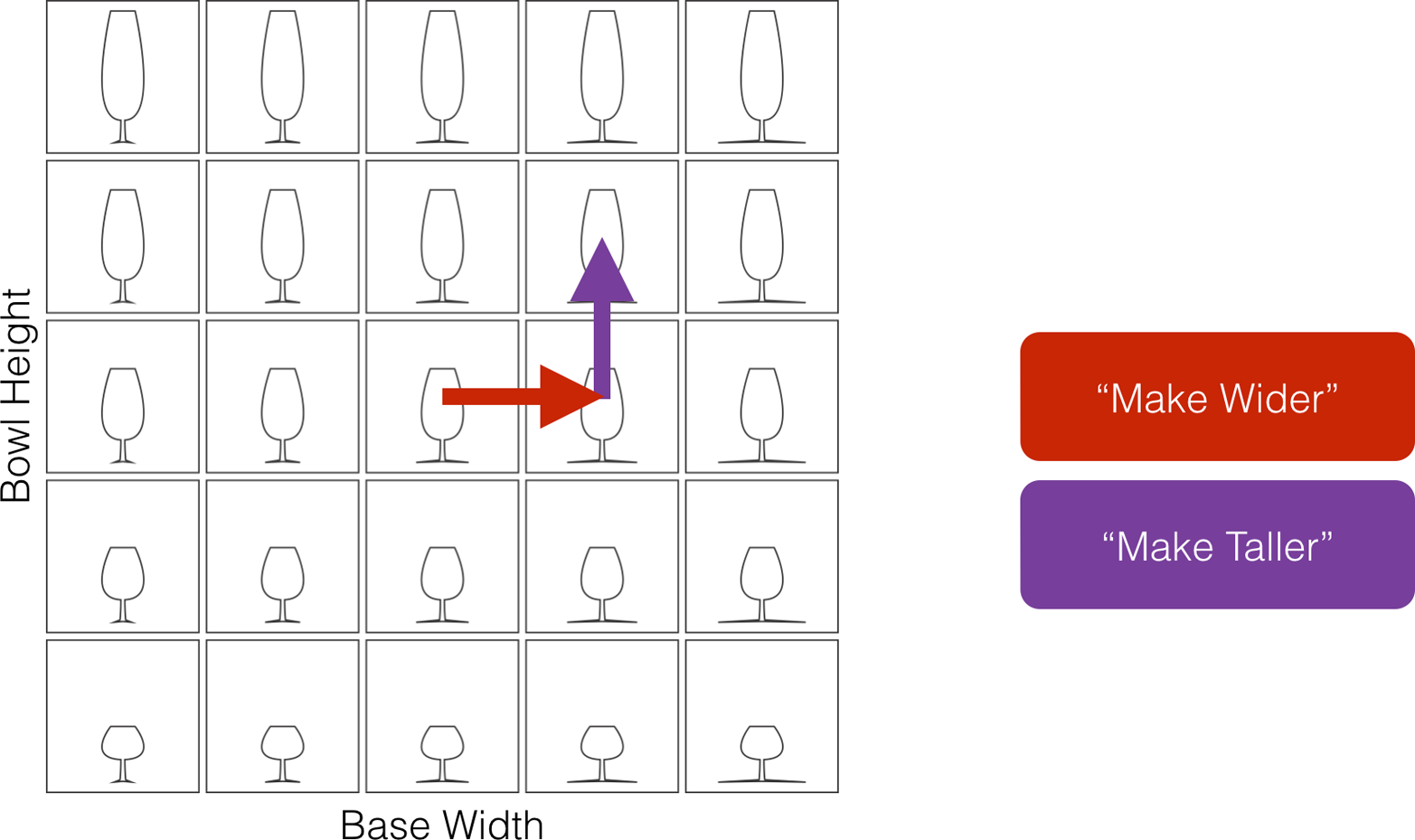

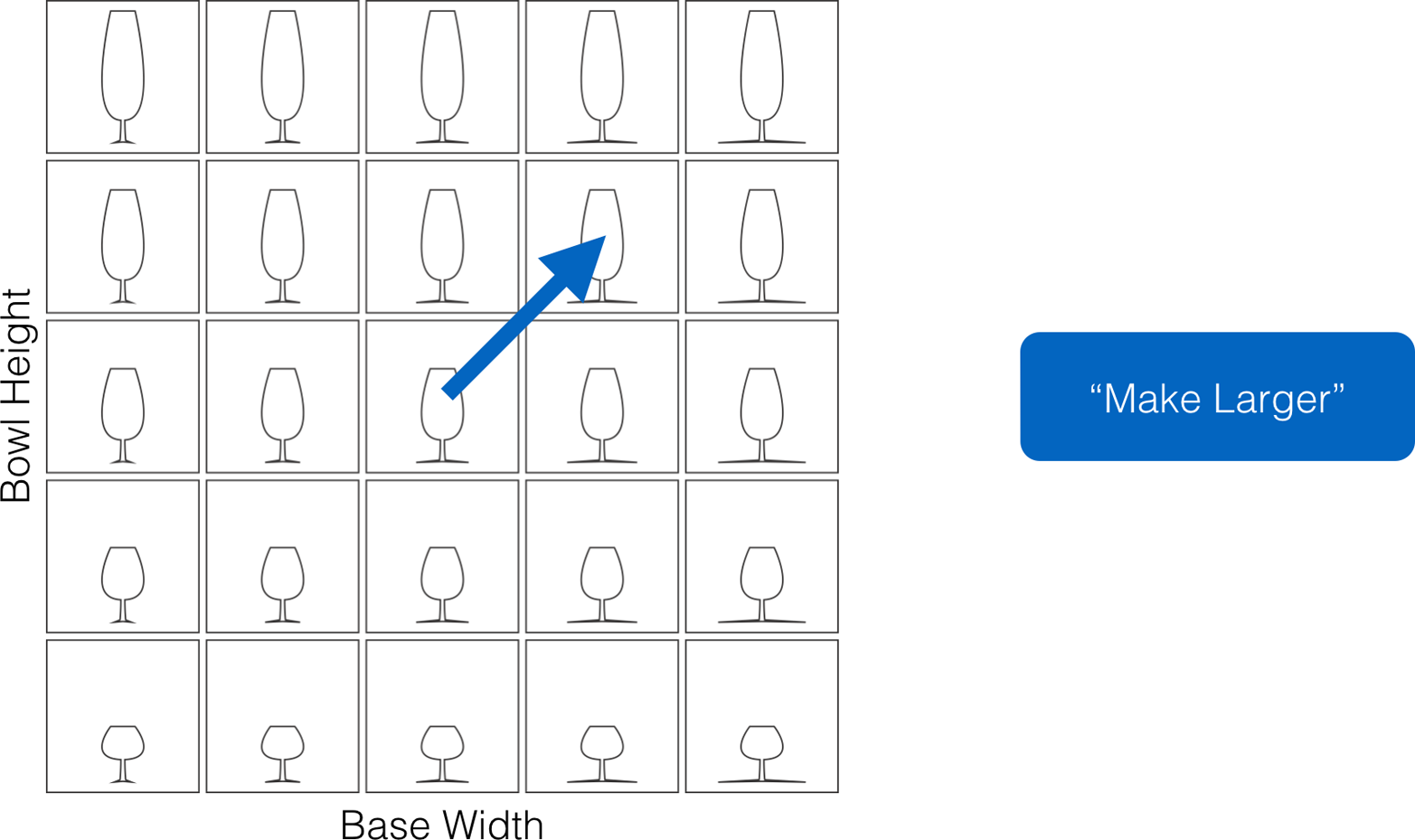

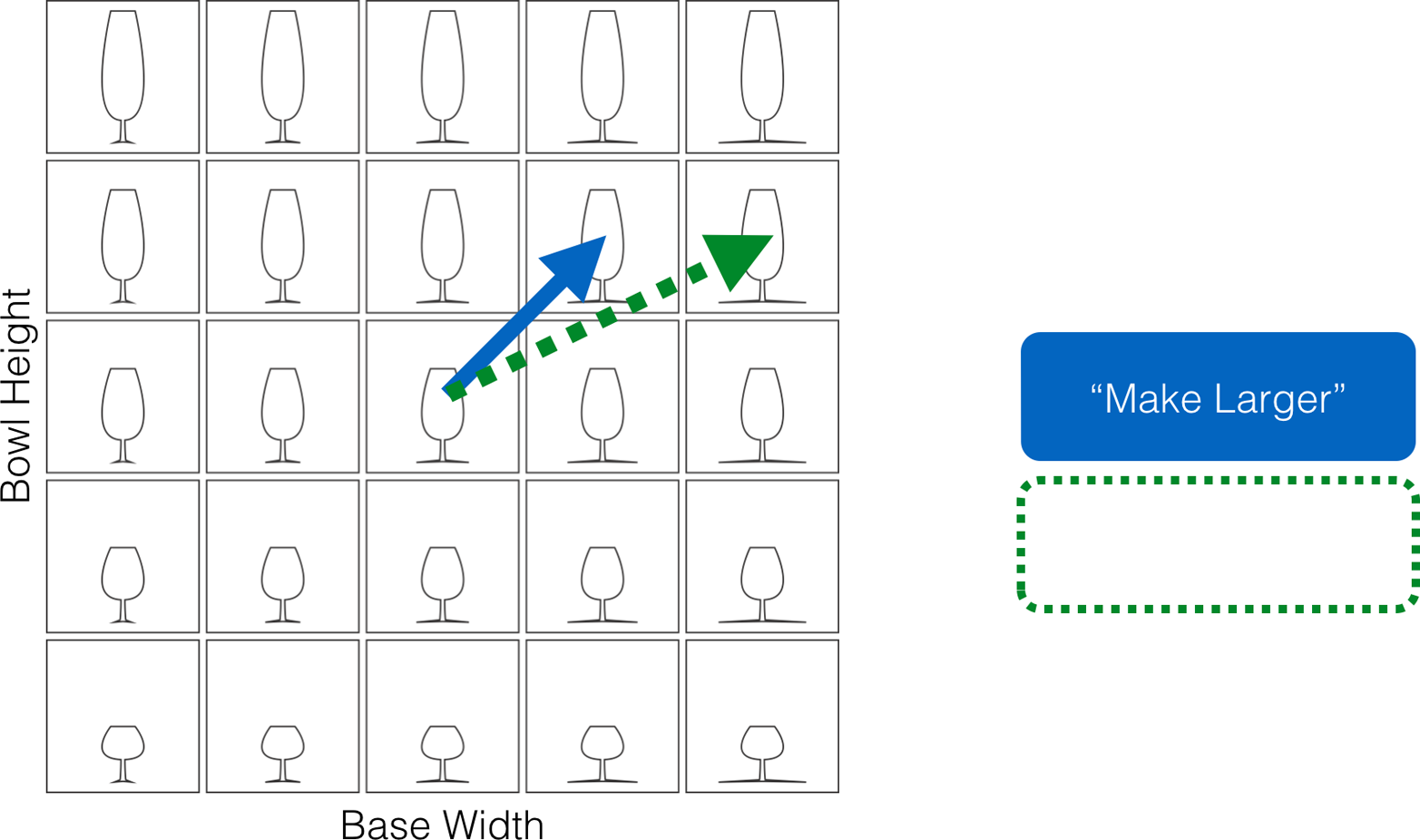

In the section Mechanical Induction, we discussed inductive learning as a search process. Similarly, any task for which the user has some desired end-state in mind and must discover a series of operations that will reach this goal can be seen as a kind of search. From this perspective, let us imagine a very large map in which each possible end-state as well as our starting point is represented by a unique set of coordinates.

In this map, each feature of the software can be seen as a road that takes us a certain distance in a particular direction. To navigate this space, we must find a sequence of actions or driving directions that lead from our starting position to the desired destination (see Figure 1-17). A low-level feature would be equivalent to a local road in the sense that it moves us only a short distance within the map, whereas a high-level feature would be more like a highway.

The nice thing about highways is that they take us great distances with a relatively small number of component actions (see Figure 1-18). The problem, however, is that highways only have exit ramps at commonly visited destinations. To reach more obscure destinations, the driver must take local roads, which requires a greater number of component actions. Ideally, a new highway would be constructed for us whenever we leave home so that we could arrive at any possible destination with a small number of actions (see Figure 1-19). But this is not possible for pre-built high-level interfaces.
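The road analogy can be illustrated with a short search sketch. To keep it small, the map is flattened to a single line of integer positions, local roads move one unit at a time, and a highway moves ten units at once. These simplifications, along with the feature names, are assumptions made for the example. A breadth-first search then finds the shortest sequence of features that reaches a destination.

```python
from collections import deque


def shortest_plan(start, goal, features, limit=100):
    """Breadth-first search for the shortest sequence of feature names."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        position, plan = queue.popleft()
        if position == goal:
            return plan
        for name, step in features.items():
            nxt = position + step
            # Stay within the bounds of the map and never revisit a position.
            if abs(nxt) <= limit and nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, plan + [name]))
    return None  # the goal cannot be reached with these features


# Low-level features move a short distance; the high-level one moves far.
LOCAL = {"local east": 1, "local west": -1}
WITH_HIGHWAY = {**LOCAL, "highway east": 10}
```

With only local roads, reaching position 20 takes twenty actions, while the highway gets there in two. A less convenient destination such as position 13 still requires a mix: one highway segment plus three local steps, which mirrors the trade-off between high-level and low-level features described above.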