Fast data is the key to next-gen capital and risk management

Responding to today’s regulations requires modern data architecture.

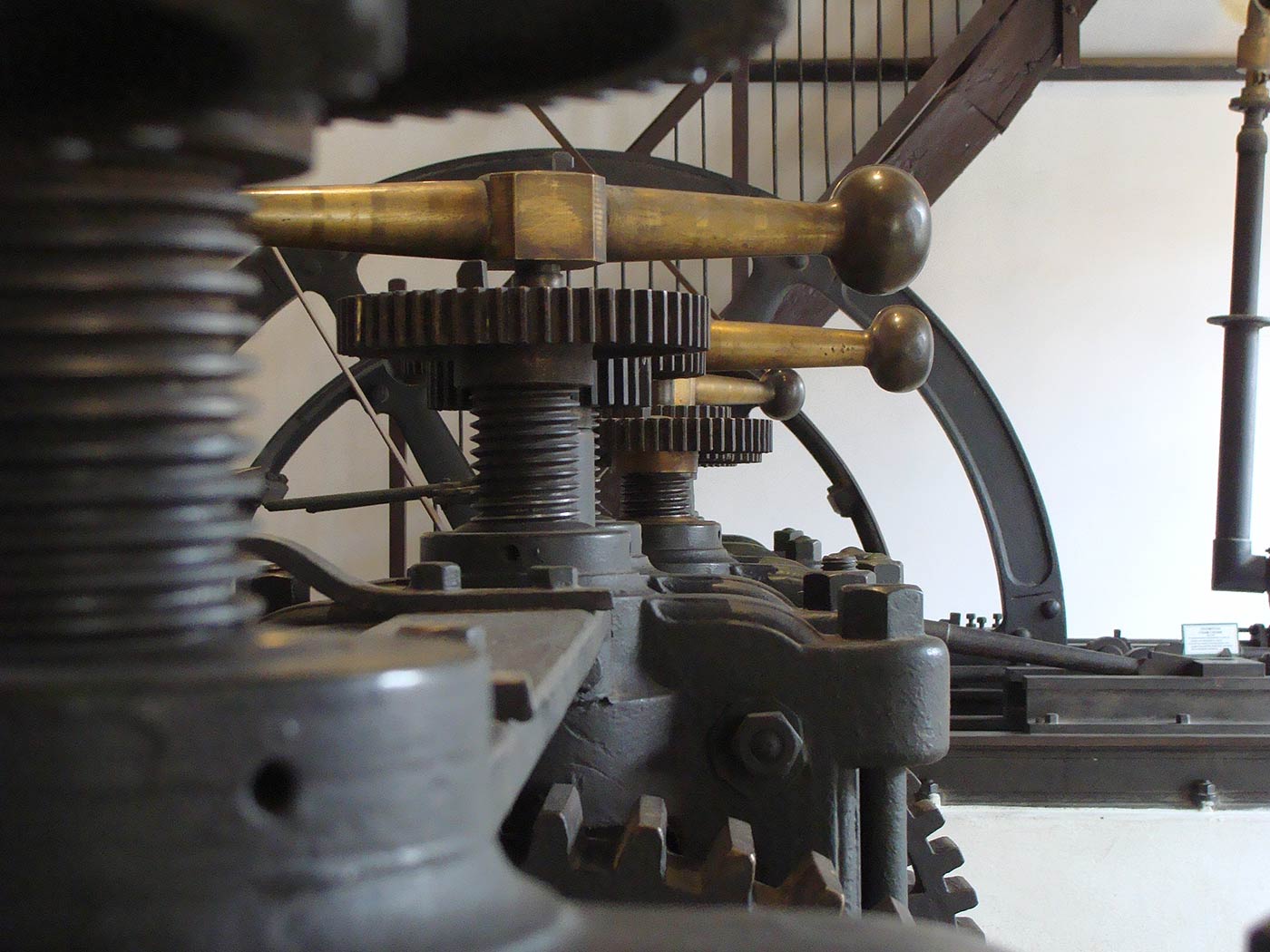

Money machine. (source: By Rodwy1328 on Wikimedia Commons.)

Andrew Carnegie, the story goes, once asked Frank Doubleday flat-out: “How much money did you make last month?” Doubleday hemmed and hawed about how difficult it is to calculate profits in the publishing industry, and Carnegie came back saying, “You know what I’d do if I were in a business where I couldn’t tell how much money I’d made? I’d get out of it.” The demands of business in the 21st century mean that calculation is up-to-the-minute, not by the month, and profit is only the first order of analytics: ROI, risk, volatility, and more are today’s metrics. But the point stands: if you’re not doing financial analysis as fast as your competitors, you’ll be out of the business soon enough.

Background

For most of the past century, risk management in banking was relegated to the back office. Risk analysis and calculations were an end-of-day operation, an afterthought done after market close and after execution. Crucially, risk was a distant second consideration, far behind profit and loss.

Since the global economic crisis of 2007-2008, the Basel Committee on Banking Supervision (BCBS), in conjunction with local regulators, has been focused on making banks safer and more resilient. A whole raft of new capital charges and constraints on liquidity and leverage has been introduced: Basel II.5, Basel III, Dodd-Frank, FRTB (“Basel IV”), etc. BCBS 239, the new regulation on risk aggregation and reporting, has significantly increased the risk data management capabilities banks must have, and these are capabilities that only big data tools can provide.

Business impact

As a result of these changes, regulatory capital costs are now a significant part of trading profit and loss. The emphasis on risk management is changing and growing. Risk functions are now an integral part of trading, and risk has moved from a back-office to a corner-office function. Exposures are monitored proactively, and risk measures are calculated continuously throughout the trading day. In addition, regulatory risk impact is now a critical part of pre-trade decision-making.

Operational/technology impact

In practical terms, each of the “four V’s” of financial risk data—Volume, Velocity, Variety, and Veracity—has seen a huge uptick over the past decade.

More Volume: The volume of data required for effective risk management has increased by several orders of magnitude. This is due to several factors:

- More regulatory measures need to be calculated.

- More market scenarios are required for each trade.

- The new regulatory measures are more complex and are required to be calculated at a more granular level: trade or sub-trade level. (Previously, position-level reporting was sufficient.) In addition, calculation of these measures requires more inputs.

- The regulatory metrics have to be calculated at a firm level to take into account diversification benefits and overall portfolio effect. Hence, to calculate the impact of a new trade on capital charges, the data for the entire portfolio of the bank is required, not just a particular trading desk.
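The last point can be made concrete with a small sketch. It assumes a simple historical-simulation VaR computed from scenario PnL vectors; the desk names, PnL figures, and the 90% confidence level are hypothetical and chosen only to keep the arithmetic easy to follow. It compares the impact of a new trade as seen by a single desk with its impact on the firm as a whole.

```python
import math

def historical_var(pnl, confidence):
    """Loss at the given confidence level from a vector of scenario PnLs.

    Simple empirical quantile: sort the losses and take the one at
    index ceil(confidence * n) - 1. Other quantile conventions exist.
    """
    losses = sorted(-x for x in pnl)  # a positive number is a loss
    index = math.ceil(confidence * len(losses)) - 1
    return losses[index]

def add_vectors(*vectors):
    """Element-wise sum of PnL vectors that share the same scenarios."""
    return [sum(values) for values in zip(*vectors)]

# Hypothetical PnLs under the same 10 scenarios for every book.
rates_desk = [-5.0, 3.0, -2.0, 4.0, -8.0, 1.0, 2.0, -1.0, 6.0, -3.0]
equity_desk = [4.0, -2.0, 3.0, -5.0, 7.0, -1.0, -2.0, 2.0, -6.0, 1.0]
new_trade = [2.0, -1.0, 1.0, -2.0, 3.0, 0.0, -1.0, 1.0, -2.0, 1.0]

CONFIDENCE = 0.9  # unrealistically low; used because the sample is tiny

rates_var = historical_var(rates_desk, CONFIDENCE)
equity_var = historical_var(equity_desk, CONFIDENCE)

# Firm-level VaR is computed on the summed vector, not by adding desk VaRs.
firm = add_vectors(rates_desk, equity_desk)
firm_var = historical_var(firm, CONFIDENCE)

# Impact of the new trade as seen by the equity desk alone...
incremental_desk = (
    historical_var(add_vectors(equity_desk, new_trade), CONFIDENCE) - equity_var
)
# ...and as seen at firm level, which needs the whole portfolio.
incremental_firm = (
    historical_var(add_vectors(firm, new_trade), CONFIDENCE) - firm_var
)

print("sum of desk VaRs:", rates_var + equity_var)
print("firm VaR:", firm_var)
print("incremental VaR (desk view):", incremental_desk)
print("incremental VaR (firm view):", incremental_firm)
```

In this toy data the two desks largely offset each other, so the firm-level figure is well below the sum of the desk figures, and the desk-only estimate of the new trade's impact differs from the firm-wide one. Real capital measures are far more involved, but the data dependency is the same.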

Increased Velocity: At the height of the last crisis, some firms took more than a month to work out their exposure to distressed counterparties. This is no longer acceptable. A near real-time view of positions and exposures is necessary for effective risk management, estimation of capital costs in pre-trade decisions, and more timely reporting to satisfy regulations.

Growing Variety of data inputs and calculations: The new measures are nonlinear and require complex and deep representations of trades as well as enormous amounts of reference and historic market data for the various assets held by the bank. The new risk measures introduced as part of Basel II.5/Basel III and proposed as part of FRTB (aka, Basel IV) are complex.

We have come a long way from the pre-2008-crisis requirement to calculate value-at-risk (VaR) at the position level: Under the Basel II.5 regulations, the risk of a trading portfolio must be broken down into five “buckets”—value at risk (VaR) (how much could be lost in an average trading day); stressed VaR (how much could be lost in extreme conditions); plus three types of credit risk, ranging from the risk of single credits to those of securitized loans. Basel III brought in more types of risk evaluation (CVA, Wrong-way risk, Stressed EPE, and more).

Veracity: Most of the capital charges are firm-wide measures. They are required to be calculated on all the bank’s positions. This requires gathering and integrating feeds from multiple data sources. BCBS 239 lays down general principles for management of risk data sets, such as completeness, traceability, accuracy, validation, reconciliation, and integrity. These have led to an increased need for veracity of data.

Challenge

Traditional tools, systems, and architectures (RDBMS, C++-based, client-server architectures, and the like) are ill-equipped to handle these requirements. These architectures use read/write relational databases and maintain the state in those databases incrementally as new data is seen. Systems built on relational databases with mutable data models are complex and hard to scale. In addition, the relational data model is ill-equipped to handle complex data structures such as the vectors and matrices commonly required for and generated by risk calculations.

Solution

The Web behemoths—Google, Yahoo, Amazon, and Facebook—have successfully solved similar big data challenges (Volume, Velocity, Variety, and Veracity) using new (and now open source) tools and ideas.

- Hadoop and NoSQL databases provide scalable, resilient storage.

- Tools like Avro, Thrift, or Protocol Buffers store data using rich, evolvable schemas.

- Kafka provides fast, scalable, durable, and distributed messaging infrastructure.

- Apache Spark is a fast and general engine for large-scale data processing that can run SQL, streaming, and complex analytics.

- The Lambda Architecture defines a consistent approach to choosing big data technologies and to wiring them together to build a generic, scalable, and fault-tolerant risk management platform.

These tools can be used by banks to build a position-aware risk management platform that properly aggregates all intra-day trading activity, monitors exposures and risk, and provides traders with the ability to quickly and cost-effectively model and price new trades. It can help banks create a robust foundation to achieve compliance and, ultimately, a significant competitive edge by making efficient use of capital. Financial institutions with a robust data infrastructure will be in a far stronger position to cope in the future because they’ll be able to identify and roll up exposures across any number of dimensions.
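As an illustration of the Lambda-style approach listed above, the following minimal sketch keeps trade events as immutable records, derives a batch view and a speed view from them, and merges the two at query time to roll up exposure by any dimension. Everything here is hypothetical: the record fields, the function names, and the use of signed notional as a stand-in for exposure. A real platform would use the distributed tools named above rather than in-memory lists.

```python
from collections import defaultdict

def build_view(trades, dimension):
    """Fold a list of immutable trade events into exposure per key."""
    view = defaultdict(float)
    for trade in trades:
        view[trade[dimension]] += trade["notional"]
    return dict(view)

def merge_views(batch_view, speed_view):
    """Serving-layer query: batch result plus not-yet-batched increments."""
    merged = dict(batch_view)
    for key, value in speed_view.items():
        merged[key] = merged.get(key, 0.0) + value
    return merged

# Hypothetical trade events. Records are appended, never updated in place.
batched_trades = [
    {"desk": "rates", "counterparty": "ACME", "notional": 10.0},
    {"desk": "fx", "counterparty": "ACME", "notional": 5.0},
    {"desk": "rates", "counterparty": "GLOBEX", "notional": -4.0},
]
intraday_trades = [
    {"desk": "fx", "counterparty": "GLOBEX", "notional": 7.0},
]

def exposure_by(dimension):
    """Roll up exposure along one dimension across both layers."""
    batch_view = build_view(batched_trades, dimension)   # batch layer
    speed_view = build_view(intraday_trades, dimension)  # speed layer
    return merge_views(batch_view, speed_view)

by_counterparty = exposure_by("counterparty")
by_desk = exposure_by("desk")
print(by_counterparty)
print(by_desk)
```

Because the views are always recomputed from the raw events, adding a new roll-up dimension means adding a field to the records, not migrating mutable state.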

Summary

Banks are faced with two options:

- Change the existing systems to satisfy the minimum regulatory requirements. Although it requires lower investment, this may not be the low-risk option, as legacy platforms are being stretched to their limits and breaking points. In addition, this limits the ability of traders to manage capital effectively, because legacy platforms lack the capacity and flexibility required to run additional scenarios for pre-trade analysis and capital optimization.

- Take a holistic view and recognize that risk infrastructure is not just required to meet regulatory requirements, but is essential for capital optimization and lasting competitive advantage. Switching the risk infrastructure to the big data stack not only allows banks to meet the minimum regulatory needs, but also gives them a platform to optimize capital use more effectively.

The banks that have taken the second path and migrated their risk platforms to purpose-built big data infrastructure have reaped lasting benefits. Here are a couple of case studies showing how two investment banks are successfully embracing big-data solutions.

Case study 1: Portfolio stress testing

A tier 1 investment bank built a new portfolio stress testing platform on the Hadoop stack to replace a system running on a relational database. The time taken to define a new scenario fell from weeks to a day, and the bank went from running one scenario a day to running one in minutes. The flexible scenario definition model and schema allowed the bank to use the same system to meet a variety of different stress testing requirements from different regulators and internal users. The following table outlines their chosen solutions and subsequent benefits:

| Solution Features | Benefit |
|---|---|
| Rich types supported by Hive allowed the definition of shocks and scenarios using a hierarchical model that was concise and expressive. | Business users can define a new scenario with 300 interconnected rules compared to 50,000 rules previously. The time taken to define a new scenario has been reduced from three weeks to less than a day. |
| Apache Spark was used to run scenario generation, aggregation, and reporting. | The bank can now run multiple stress scenarios in an hour. Users were limited to a single scenario per day on the legacy system. |
| HDFS for persistence, Hive for a SQL interface for reports and self-service analysis | An integrated portfolio stress testing platform handling ingest, calculation, storage, reporting, ad hoc query, and analysis features was built using the standard components in the Hadoop stack within months. The integrated platform makes stress testing results and analytics available immediately. It also provides the capability to drill down to the most granular trade-level details if required. Reporting capabilities allow breakdown and attribution of stress results by various dimensions. |
| Parquet columnar storage format and fast splittable compression | Storage requirements reduced by a 10th |
| Evolvable schemas and Hive rich types | Users can add more position and asset attributes to shock and scenario definitions on the fly without the need to change the system. |
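The source does not describe the bank's actual rule model, so the following is only a guess at why a hierarchical definition needs far fewer rules: a rule attached to a node of the asset hierarchy applies to everything beneath it unless a more specific rule overrides it. The hierarchy, the rule format, the shock sizes, and the function name `resolve_shock` are all hypothetical, and the sketch is plain Python rather than Hive.

```python
def resolve_shock(rules, asset_path):
    """Return the shock from the most specific rule matching asset_path.

    `rules` maps a hierarchy prefix (a tuple) to a relative shock. The
    empty tuple acts as the scenario-wide default.
    """
    for depth in range(len(asset_path), -1, -1):
        prefix = asset_path[:depth]
        if prefix in rules:
            return rules[prefix]
    raise KeyError(f"no rule covers {asset_path}")

# Four rules cover every asset; without a hierarchy, each asset would
# need its own rule.
scenario = {
    (): 0.0,                      # default: no shock
    ("equity",): -0.20,           # all equities fall 20%
    ("equity", "banks"): -0.35,   # bank stocks fall further
    ("fx", "EURUSD"): -0.10,
}

positions = [
    {"asset": ("equity", "banks", "BANK_A"), "value": 100.0},
    {"asset": ("equity", "tech", "TECH_B"), "value": 200.0},
    {"asset": ("fx", "EURUSD"), "value": 50.0},
    {"asset": ("rates", "UST10Y"), "value": 300.0},
]

# Stressed PnL of each position is its value times the resolved shock.
stressed_pnl = sum(
    p["value"] * resolve_shock(scenario, p["asset"]) for p in positions
)
print("scenario PnL:", stressed_pnl)
```

Adding a new asset under an existing node requires no new rule, which is one plausible reading of how the rule count could drop so sharply.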

Case study 2: VaR aggregation and reporting platform

A tier 1 investment bank built a strategic scenario valuations store using HDFS and Hive. VaR aggregation functions were built on top of the scenario store. This allowed fast and flexible VaR aggregations on the fly without the need to re-run new analytical jobs. The rich data model can store VaR Profit & Loss projections (PnLs) generated using different methodologies such as Monte Carlo and historical simulation. The ability to run custom analytics on Hive allowed the PnL store to be used for Fundamental Review of the Trading Book (FRTB) QIS reporting and other risk functions, such as backtesting. The following table outlines their chosen solutions and subsequent benefits:

| Solution Features | Benefit |
|---|---|
| High-definition data warehouse built using Hive stores the complete risk profile of all positions. | A high-definition warehouse allows users to run flexible and dynamic VaR aggregations and “what if” reports on the fly. |
| Ability to define and run custom logic on the high-definition warehouse with user-defined functions (UDFs). | Custom analytics can be run on the warehouse without the need to run new analytics jobs. The VaR warehouse was able to handle FRTB requirements of scaled expected shortfall in weeks by defining new user-defined functions. The backtesting process and analytics such as p-value and alpha also utilize the warehouse. |
| Evolvable schemas and Hive complex types | The warehouse can handle PnL vectors from different VaR methodologies like Monte Carlo and historical simulations using the same tables. In addition, the data model can support a varying number of scenarios at varying levels of aggregation. |
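The idea behind the scenario store can be sketched in a few lines: if each position keeps its full PnL vector, any aggregation is an element-wise sum over the selected positions followed by a tail statistic, with no need to re-run valuations. The sketch below is plain Python, not Hive, and uses a plain (unscaled) expected shortfall. The store layout, the figures, and the 75% confidence level are hypothetical; FRTB itself specifies expected shortfall at 97.5%, which needs far more scenarios than shown here.

```python
def aggregate_pnl(store, **filters):
    """Element-wise sum of the PnL vectors of all rows matching filters."""
    rows = [r for r in store if all(r[k] == v for k, v in filters.items())]
    if not rows:
        raise ValueError("no positions match the filters")
    return [sum(values) for values in zip(*(r["pnl"] for r in rows))]

def expected_shortfall(pnl, confidence):
    """Average of the worst (1 - confidence) share of scenario losses."""
    losses = sorted((-x for x in pnl), reverse=True)  # largest loss first
    # Number of tail scenarios, rounded to the nearest integer, at least 1.
    tail = max(1, round(len(losses) * (1 - confidence)))
    return sum(losses[:tail]) / tail

# Hypothetical store: one row per position, 8 shared scenarios.
store = [
    {"desk": "rates", "region": "EU",
     "pnl": [1.0, -4.0, 2.0, -1.0, 3.0, -6.0, 0.0, 2.0]},
    {"desk": "rates", "region": "US",
     "pnl": [-2.0, 1.0, -3.0, 2.0, -1.0, 2.0, 1.0, -1.0]},
    {"desk": "credit", "region": "EU",
     "pnl": [0.0, -2.0, 1.0, -3.0, 1.0, -1.0, 2.0, 1.0]},
]

CONFIDENCE = 0.75  # tail of 2 scenarios out of 8, for readability only

# The same store answers different questions without new valuation runs.
firm_es = expected_shortfall(aggregate_pnl(store), CONFIDENCE)
rates_es = expected_shortfall(aggregate_pnl(store, desk="rates"), CONFIDENCE)
eu_es = expected_shortfall(aggregate_pnl(store, region="EU"), CONFIDENCE)
print(firm_es, rates_es, eu_es)
```

In a Hive-based warehouse the tail statistic would typically live in a user-defined function, so a new measure means a new function over the same stored vectors.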