From binoculars to big data: Citizen scientists use emerging technology in the wild

Colin Kingen, software engineer for Wildbook, explains the technology driving data capture and wildlife research.

Whale shark. (source: Wild Me Director Simon Pierce, used with permission.)

Whale shark. (source: Wild Me Director Simon Pierce, used with permission.)

For years, citizen scientists have trekked through local fields, rivers, and forests to observe, measure, and report on species and habitats with notebooks, binoculars, butterfly nets, and cameras in hand. It’s a slow process, and the gathered data isn’t easily shared. It’s a system that has worked to some degree, but one that’s in need of a technology and methodology overhaul.

Thanks to the team behind Wildme.org and their Wildbook software, both citizen and professional scientists are becoming active participants in using AI, computer vision, and big data. Wildbook is working to transform the data collection process, and citizen scientists who use the software have more transparency into conservation research and the impact it’s making. As a result, engagement levels have increased; scientists can more easily share their work; and, most important, endangered species like the whale shark benefit.

In this interview, Colin Kingen, a software engineer for WildBook, (with assistance from his colleagues Jason Holmberg and Jon Van Oast) discusses Wildbook’s work, explains classic problems in field observation science, and shares how Wildbook is working to solve some of the big problems that have plagued wildlife research. He also addresses something I’ve wondered about: why isn’t there an “uberdatabase” to share the work of scientists across all global efforts? The work Kingen and his team are doing exemplifies what can be accomplished when computer scientists with big hearts apply their talents to saving wildlife.

Imagine looking through the same 5,000 images every time you get a new one, and looking closely enough to identify a matching pattern of spots in seven of them so you can tag an image as a certain animal.

One of the exciting aspects of your work is your mission, which focuses on putting technology into the hands of citizen scientists to collect data on wildlife. What are some of the challenges and opportunities that inspired the creation of Wildbook?

Wildlife biology is a field observation science that relies heavily on a technique called “mark-recapture,” in which animals in a population are individually marked (e.g., ear tags on deer or leg bands on birds) and their presences and absences are recorded manually by observers. On-site research teams are generally poorly funded and must focus limited resources on narrow windows of observation; the small resulting data sets run the risk of reflecting project limitations rather than species behavior. Arriving at a critical mass of data for population analysis (especially for rare or endangered species) can take years for small teams of researchers. Long required observation periods and manual data processing (e.g., matching photos “by eye”) can create multi-year lags between study initialization and scientific results, as well as create conclusions too coarse or slow for effective conservation action.

Now, imagine a more ideal solution: a wildlife research and conservation community continuously informed about animal population sizes and the interactions, movements, and behaviors of individual or small groups of animals. Integrate the cameras of tourists and citizen scientists, pouring the potential of big data into local conservation efforts, and augment researchers with computer vision and artificial intelligence to manage the volume of data and remove the burdens of curation, freeing them to focus on critical questions: What is the local wildlife population trajectory? Where do the animals go—and why? Are recent conservation measures reversing observed declines?

Wildbook is a multi-institution project that originally emerged out of the combination of two distinct efforts. In Western Australia, a biologist, a programmer, and a NASA scientist found a new way to “tag” whale sharks using only their spots and by collecting photographs from the dive industry. Separately, in Kenya, professors from Rensselaer Polytechnic Institute, the University of Illinois-Chicago, and Princeton University found a way to identify individual zebras based on photographs of their stripes. When the teams joined forces, Wildbook became a single open source project aimed at revolutionizing wildlife research, much of which is still entrenched in 1990s desktop software.

How is Wildbook’s interface designed, and what considerations helped to make it an easy-to-use, yet powerful, collecting tool for citizen scientists?

There are really two sides to the interface design: submissions and information available to citizen scientists, and the workflow for the researchers processing the submissions. The submission side can vary between Wildbooks created for different species. Some projects monitor a small population of critically endangered species, and the data is input and processed by a small number of researchers. These do not rely as much on citizen science. In situations like these, the submission process requires more specific information from researchers, and it requires less explanation. When citizen science input is used, however, the submission itself is pretty simple. You go to the site, upload some images, and provide some background information, if you can. The researchers in charge of the project can then categorize the contribution and run it through image analysis to see if any wildlife in the images is individually identifiable. We try to make the submission process easy. There are only a few fields we require, like date and location, and these can be approximate.

The projects that really try to engage citizen scientists (e.g., whale shark.org) are constantly evolving, and fascinating. You need to carefully consider to whom you are presenting. We work with many groups studying aquatic creatures—our first project and flagship is whaleshark.org. We think about what might motivate someone to give us their images and data. Did they hear about a particular Wildbook from a friend conducting research, or stumble upon it while searching the internet for information about an animal they saw on a scuba dive? The point of entry is important. We don’t just want to convince someone to give us something, either. We want people to be engaged.

This engagement is often creating a link between the individual animal and the citizen scientist, and other people like them. We offer email updates on whether we have identified the particular animal, and then when it has been sighted by anyone else. The submitter can then look at the profile of the animal they personally saw and find images taken by other people, perhaps from across the world. There is even the capability for someone to “adopt” an animal and give it a nickname that is visible on its profile page. This creates a sense of community and fosters interest. The citizen scientist is now part of this animal’s narrative, along with other people. I think this motivates them, and makes them more likely to continue participating.

One of the most exciting things we’ve been working on lately is Wildbook AI. This searches through YouTube videos looking for keywords and then goes through the video looking for animals to detect. If we identify an animal, we can comment on the video and let the user know, and then add it to the Wildbook database. We can look through metadata to find date and location to add to the entry. Right now, we are working on automatically creating a comment for the YouTube user to ask for some of this information if it is missing, which hopefully makes the data more useful and can draw the YouTube user (who may never have heard of us) into being a part of the project. This really adds value to Wildbook, in that we’re keeping it simple to use, drawing people in, and returning the gift of data with the gift of knowledge, even through an outside source like YouTube.

There is a vast amount of historical research that is stuck in the world of spreadsheets, obsolete databases, and filing cabinets.

Your site mentions two projects—MantaMatcher and Whale Sharks—that use Wildbook to track both populations in the wild. Can you share a couple of examples of the kinds of data being collected, how it’s making a difference, and why this data has been hard or impossible to collect prior to Wildbook?

The data starts with a single record of interaction with an animal. When someone goes on a dive and takes a picture of a whale shark, for example, we take that image or images with whatever background information that can be provided (e.g., where and when) and create an Encounter in Wildbook. The Encounter is a data record and visitable page that represents a single interaction with an animal at one point in time. We can then run the images through analysis and find out if it matches images in other encounters of the same individual. Individuals have a page that consists of all the Encounters in which they were identified. They have an ID, possibly a nickname, and potentially plenty of other information, like physical tagging, measurements, and age. We can also see co-occurrence—animals that have been sighted together and may be operating as a pack, pod, or other social group. We can then look at the collected encounters of a group or individual and see their movements, or look at the number of individuals over time to track birth and death. This population data can help contribute to the evaluation of a species’ threatened status. In 2016, the whale shark was moved up to “endangered” from “vulnerable” on the IUCN Redlist, based on data from whaleshark.org.

There are many other ways the data is useful. Some animals are notoriously very hard to tag, and therefore hard to track. Researchers simply cannot be in as many places as the public, and therefore only a citizen science-based approach can get enough data to make meaningful conclusions about population size and health. There is also the issue of trap response, which can occur during physical tagging, where an animal is understandably wary of an area where it was hurt or scared, and avoids it or creatures like humans that were present. Of course, we don’t want to hurt or scare animals, and this also makes data less useful because of the changed behavior.

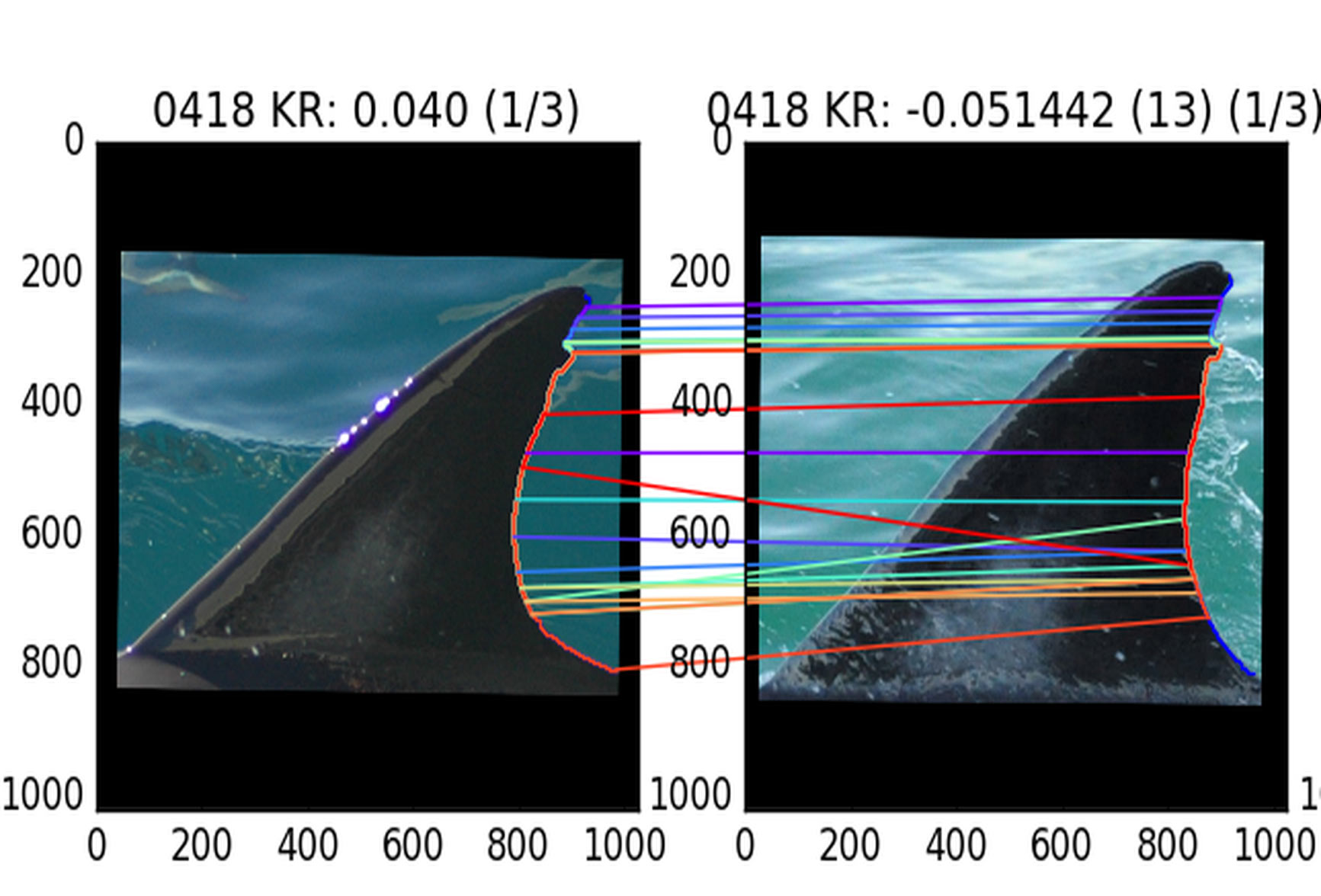

An answer is to take images and look for identifiable markings and build a record that way. But for a human, this is incredibly tedious. Imagine looking through the same 5,000 images every time you get a new one, and looking closely enough to identify a matching pattern of spots in seven of them so you can tag an image as a certain animal. Wildbook can create the data records for you; link them together in intuitive ways; and, coupled with AI image analysis, look through all those pictures in a matter of seconds to find a match, if there is one. The computer vision AI is also quite good at what it does. It can find matches that might not be so obvious to a human.

So, on the front end of the research, the benefit is reducing harm to animals and saving researchers countless hours that are then available to go back into the field, or to draw conclusions from the processed data. Another benefit is for collaborating researchers. People from all over the world can contribute to the same data set to improve predictions and conclusions instead of emailing differently formatted spreadsheets back and forth.

As you mention on your site, Wildbook is “the data management layer of the IBEIS project.” In other words, the IBEIS project is the computing/AI power that drives the functionality and cataloguing of Wildbook. How does IBEIS work, and why was it the right solution for Wildbook?

IBEIS was the original name of the computer vision aspect of our project. We currently have integrated it all into a single product that is now simply Wildbook. So, historically, we still have some references in documentation and our code to IBEIS. However, Wildbook can be used with or without the image analysis functionality, depending on the needs of the users, so this data management layer is at the fundamental core of Wildbook to this day.

Since what was originally IBEIS was developed specifically with Wildbook in mind, it was the right solution by design—it solved the problem of automating the processing of photographic data. It does so by two main steps: detection and identification. Detection finds the animals in the image. We train the software to know what a certain species looks like from multiple angles by giving the software a collection of images that contain the animal we want to identify and marking its position and the quality of the image. We then feed in this set of images and a second set of images that do not contain the chosen animal.

Once we have a body of images that have our animal in them, we can run them through identification. New images are loaded into the image analysis layer, which then checks them against each other and returns sets of potential matches, including how confident it is about those matches. These matches can then be checked and saved into Wildbook as encounters with detection, and possibly matched or created as distinct individuals by identification. The data that image analysis passes back is purely match-based. It doesn’t care about anything other than the image. That’s why Wildbook’s data management layer is important: it is used to combine computer vision results with all that pertinent metadata, such as date, location behavior, and the running record of matches.

The technology is progressing, but there is still a significant lack of computer science skills in the field of biology and wildlife research. We want to help with that.

As I’ve researched conservation and technology topics for this blog series, I’ve come across many conservation groups doing important work across the globe. There doesn’t appear to be an “uberdatabase” that connects the work or aggregates the research. Given the work you do, is this is a concern for you, too? How can the research of conservationists and citizen scientists be made more transparent and easily accessible?

There is certainly not an “uberdatabase” for wildlife and conservation research; though, you’ll see a few high-level biodiversity databases out there (iNaturalist.org, GBIF.org, iOBIS.org). One reason for this is the state of the data and a lack of standards. There is a vast amount of historical research that is stuck in the world of spreadsheets, obsolete databases, and filing cabinets. Between researchers, organizations, and the passage of time, this data can be formatted differently or rearranged inconsistently by changing research groups. The first barrier is getting that information standardized. That’s something we do all the time. We work directly with researchers, organize their data, process it faster, and get people outside of their group engaged and willing to contribute.

Sometimes there are issues of security, as well. Researchers can be concerned about who has access to certain information and who can change it. This can be due to someone writing an academic paper and wanting to keep their data close for a while, or wanting to protect the species. Location data can be very sensitive for animals that are endangered—or poached or sold as exotic pets. Sometimes the location where an image was taken can even be inferred from the image’s background, and for something like a critically endangered tortoise in a population of hundreds that can then be sold on the black market for tens of thousands of dollars, there is a real risk. So, security and separation of some information is very important, and it gets complicated quickly where competition among research groups emerges.

Another issue is the separation of different missions. Many groups are particularly concerned with the study of one animal, especially endangered ones. They use their particular Wildbook as a place to not only track and input data, but as a way to inform visitors about the situation and add a name or brand to the way they are advancing conservation for that species. For these groups, it might be more desirable to have a stand–alone site instead of being one amongst a vast number of others. We can have customized Wildbooks for two different groups to have different sites and branding, but they use the same database in an effort to not stand in the way of that kind of concern.

I don’t think the “uberdatabase” is near at hand. My hope is that we can start linking groups at a smaller level first. Most of our Wildbooks only track one species, but we are currently working with groups that track multiple species of whales and dolphins. Bringing citizen scientists and researchers together by a common interest in a certain group of animals or a geographical region is the goal for now, focusing especially on producing actionable information for local conservation action.

Is there anything else you feel is important that I’ve missed about your work, mission, and technology?

The technology is progressing, but there is still a significant lack of computer science skills in the fields of biology and wildlife research. We want to help with that. I think we have found a great role in conservation because we help researchers, for many species across the globe, be more effective with their data and we help free up time to study it. Engaging citizen scientists is going to be a really important part of research in the future, as well. When people don’t just read about something in a magazine but participate, they become that much more invested and aware of what is going on, not only with the animal they photographed, but with the future of the species and the planet. We are giving everyone the ability to play an active part of emerging knowledge and science, and I’m excited about that.