Painters on the Brooklyn Bridge. (source: Museum of Photographic Arts, public domain.)

Introduction

Finding Signals in the Noise

Popular data science publications tend to creep me out. I’ll read case studies where I’m led by deduction from the data collected to a very cool insight. Each step is fully justified, the interpretation is clear—and yet the whole thing feels weird. My problem with these stories is that everything you need to know is known, or at least present in some form. The challenge is finding the analytical approach that will get you safely to a prediction. This works when all transactions happen digitally, like ecommerce, or when the world is simple enough to fully quantify, like some sports. But the world I know is a lot different. In my world, I spend a lot of time dealing with real people and the problems they are trying to solve. Missing information is common. The things I really want to know are outside my observable universe and, many times, the best I can hope for are weak signals.

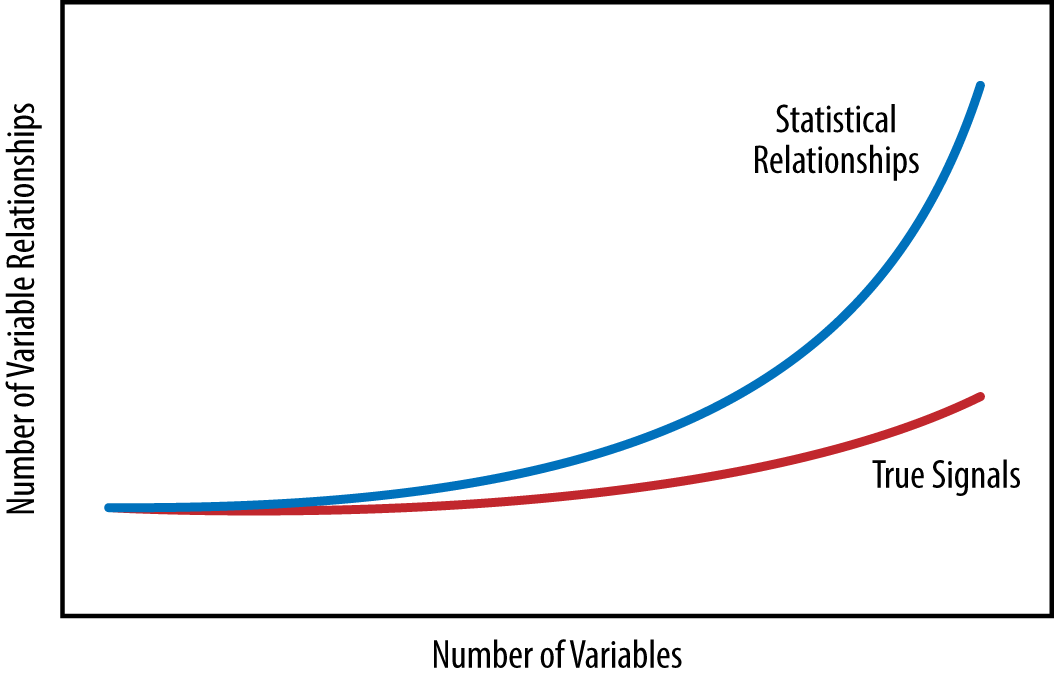

CSC (Computer Sciences Corporation) is a global IT leader, and every day we’re faced with the challenge of using IT to solve our customers’ business problems. I’m asked questions like: what are our clients’ biggest problems, what solutions should we build, and what skills do we need? These questions are complicated and messy, but often there are answers. Getting to answers requires a strategy and, so far, I’ve done quite well with basic, simple heuristics. It’s natural to think that complex environments require complex strategies, but often they don’t. Simple heuristics tend to be most resilient when trying to generate plausible scenarios about something as uncertain as the real world. And simple scales. As the volume and variety of data increase, the number of possible correlations grows a lot faster than the number of meaningful or useful ones. As data gets bigger, noise grows faster than signal (Figure 1-1).
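To make the point about correlations concrete, here is a minimal sketch in Python. It is not taken from any CSC project; the function names, the 20-row sample size, and the 0.5 threshold are all assumptions chosen for illustration. It counts how many column pairs exist for a given number of columns (n(n-1)/2), then fills those columns with pure random noise and counts how many pairs still look "correlated" by chance.

```python
import math
import random


def pair_count(num_columns: int) -> int:
    """Number of distinct column pairs that could be correlated: n(n-1)/2."""
    return math.comb(num_columns, 2)


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def spurious_pairs(num_columns, num_rows=20, threshold=0.5, seed=0):
    """Count pairs of pure-noise columns whose |correlation| exceeds threshold.

    Every column is independent Gaussian noise, so any pair counted here
    is a coincidence, not a signal. The defaults are illustrative only.
    """
    rng = random.Random(seed)
    columns = [
        [rng.gauss(0, 1) for _ in range(num_rows)]
        for _ in range(num_columns)
    ]
    return sum(
        1
        for i in range(num_columns)
        for j in range(i + 1, num_columns)
        if abs(pearson(columns[i], columns[j])) > threshold
    )


# Possible pairs grow quadratically while the columns grow linearly.
for n in (5, 10, 20, 40):
    print(n, "columns:", pair_count(n), "pairs,",
          spurious_pairs(n), "chance correlations in pure noise")
```

Doubling the number of columns roughly quadruples the number of pairs to check, and none of the "hits" in this sketch mean anything, since the data is noise by construction.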

Finding signals buried in the noise is tough, and not every data science technique is useful for finding the types of insights I need to discover. But there is a subset of practices that I’ve found fantastically useful. I call them “data science that works.” It’s the set of data science practices that I’ve found to be consistently useful in extracting simple heuristics for making good decisions in a messy and complicated world. Getting to a data science that works is a difficult process of trial and error.

But essentially it comes down to two factors:

- First, it’s important to value the right set of data science skills.

- Second, it’s critical to find practical methods of induction where I can infer general principles from observations and then reason about the credibility of those principles.

Data Science that Works

The common ask from a data scientist is the combination of subject matter expertise, mathematics, and computer science. However, I’ve found that the skill set that tends to be most effective in practice is agile experimentation, hypothesis testing, and professional data science programming. This more pragmatic view of data science skills shifts the focus from searching for a unicorn to relying on real flesh-and-blood humans. After you have data science skills that work, what remains for consistently finding actionable insights is a practical method of induction.

Induction is the go-to method of reasoning when you don’t have all the information. It takes you from observations to hypotheses to the credibility of each hypothesis. You start with a question and collect data you think can give answers. Take a guess at a hypothesis and use it to build a model that explains the data. Evaluate the credibility of the hypothesis based on how well the model explains the data observed so far. Ultimately the goal is to arrive at insights we can rely on to make high-quality decisions in the real world. The biggest challenge in judging a hypothesis is figuring out what available evidence is useful for the task. In practice, finding useful evidence and interpreting its significance is the key skill of the practicing data scientist—even more so than mastering the details of a machine learning algorithm.
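One way to picture the step from observations to credibility is a simple Bayesian update. This is only a sketch under stated assumptions: the text above does not prescribe Bayes' rule, and the two hypotheses, their names, and the hit rates below are hypothetical values invented for illustration.

```python
# Hypothetical hypotheses: each predicts how often an observation
# "supports the pattern". These rates are assumptions, not measured data.
HIT_RATE = {
    "signal_is_real": 0.8,
    "signal_is_noise": 0.5,
}


def likelihood(hypothesis: str, observation: bool) -> float:
    """Probability of one observation if the given hypothesis were true."""
    rate = HIT_RATE[hypothesis]
    return rate if observation else 1.0 - rate


def update_credibility(credibility: dict, observation: bool) -> dict:
    """Apply Bayes' rule once: reweight each hypothesis by how well it
    explains the new observation, then renormalize so the weights sum to 1."""
    unnormalized = {
        h: weight * likelihood(h, observation)
        for h, weight in credibility.items()
    }
    total = sum(unnormalized.values())
    return {h: value / total for h, value in unnormalized.items()}


# Start undecided, then judge the hypotheses as evidence arrives.
credibility = {"signal_is_real": 0.5, "signal_is_noise": 0.5}
for observation in (True, True, False, True):
    credibility = update_credibility(credibility, observation)
    print(observation, credibility)
```

The credibility values are never final. They are the best estimate given the evidence seen so far, and they shift again with the next observation.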

The goal of this book is to communicate what I’ve learned, so far, about data science that works:

- Start with a question.

- Guess at a pattern.

- Gather observations and use them to generate a hypothesis.

- Use real-world evidence to judge the hypothesis.

- Collaborate early and often with customers and subject matter experts along the way.

At any point in time, a hypothesis and our confidence in it are simply the best that we can know so far. Real-world data science results are abstractions: simple heuristic representations of the reality they come from. Going pro in data science is a matter of making a small upgrade to basic human judgment and common sense. This book is built from the kinds of thinking we’ve always relied on to make smart decisions in a complicated world.