TensorFlow on Android

Take deep learning mobile.

Smartphone. (source: Pixabay)

If you followed my previous post, you learned how to install GPU-accelerated TensorFlow and create your own image classifier on a Linux computer. Honestly, though, classifying individual images on a laptop is time-consuming: you have to download each image you want to classify and type a lot of code into the terminal just to classify it.

Thankfully (though it’s not well publicized), you can run the Inception classifier, or your own image classifier, live on your camera-equipped phone. Simply point your camera at what you’re trying to classify, and TensorFlow will tell you what it thinks it is. You can also use TensorFlow on iOS and Raspberry Pi, but for this tutorial I will be using an Android device.

I’ll also walk you through how I got my custom classifier working on my Android device. Getting the custom graph to work took a lot of effort and was not documented anywhere; it took a lot of searching through TensorFlow’s GitHub issues. I hope I can spare you some of that trouble.

Download Android SDK & NDK

You can download the Android SDK using the terminal and then extract it into your TensorFlow directory.

$ wget https://dl.google.com/android/android-sdk_r24.4.1-linux.tgz
$ tar xvzf android-sdk_r24.4.1-linux.tgz -C ~/tensorflow

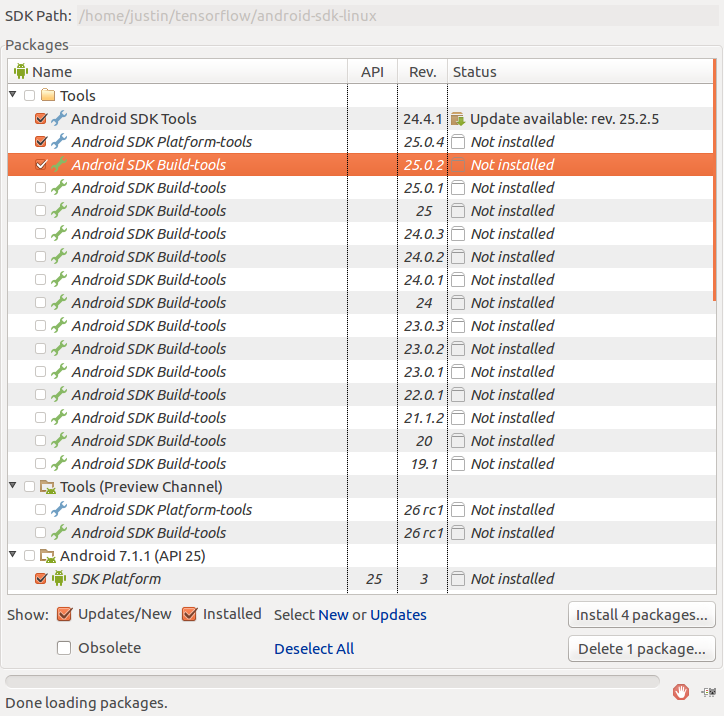

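Next, we need to download some additional build tools for the SDK. Launch the SDK manager with the commands below and make sure you select version 25.0.2 of the Android SDK Build-tools (the WORKSPACE settings later in this post also expect the API level 25 SDK platform).

$ cd ~/tensorflow/android-sdk-linux
$ tools/android sdk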

Next, download the Android NDK and extract it:

$ wget https://dl.google.com/android/repository/android-ndk-r12b-linux-x86_64.zip
$ unzip android-ndk-r12b-linux-x86_64.zip -d ~/tensorflow

Download Inception

$ cd ~/tensorflow
$ wget https://storage.googleapis.com/download.tensorflow.org/models/inception5h.zip -O /tmp/inception5h.zip
$ unzip /tmp/inception5h.zip -d tensorflow/examples/android/assets/
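If you want to confirm that the archive unpacked where the demo app looks for it, a quick Python check helps. This is a minimal sketch: it assumes inception5h.zip contains tensorflow_inception_graph.pb and imagenet_comp_graph_label_strings.txt (the file names the stock demo loads in TensorFlow 1.x, as far as I know; verify against your download), and the helper name missing_assets is hypothetical.

```python
from pathlib import Path

# Assumed contents of inception5h.zip; check these against your own download.
EXPECTED_ASSETS = (
    "tensorflow_inception_graph.pb",
    "imagenet_comp_graph_label_strings.txt",
)


def missing_assets(assets_dir, expected=EXPECTED_ASSETS):
    """Return the expected asset file names that are not present in assets_dir."""
    assets = Path(assets_dir).expanduser()
    return [name for name in expected if not (assets / name).is_file()]


if __name__ == "__main__":
    assets_dir = "~/tensorflow/tensorflow/examples/android/assets"
    missing = missing_assets(assets_dir)
    if missing:
        print("Missing from", assets_dir, ":", ", ".join(missing))
    else:
        print("Inception assets found.")
```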

Modify WORKSPACE File

To build the app with the Android tools, we need to tell Bazel where the SDK and NDK live by editing the WORKSPACE file.

$ gedit ~/tensorflow/WORKSPACE

Copy the code below over the corresponding android_sdk_repository and android_ndk_repository entries in your WORKSPACE file.

android_sdk_repository(
    name = "androidsdk",
    api_level = 25,
    build_tools_version = "25.0.2",
    path = "android-sdk-linux",
)

android_ndk_repository(
    name = "androidndk",
    path = "android-ndk-r12b",
    api_level = 21,
)
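A typo in one of these paths, or a missing build-tools version, only shows up later as a Bazel build error. The Python sketch below checks the directories up front. It assumes the relative paths in WORKSPACE resolve against the workspace root (~/tensorflow) and that the SDK uses its standard layout (build-tools/25.0.2 and platforms/android-25); the helper name check_android_dirs is hypothetical.

```python
from pathlib import Path

# Directories the WORKSPACE entries rely on, assumed relative to ~/tensorflow.
REQUIRED_DIRS = {
    "androidsdk": "android-sdk-linux",
    "build-tools 25.0.2": "android-sdk-linux/build-tools/25.0.2",
    "platform android-25": "android-sdk-linux/platforms/android-25",
    "androidndk": "android-ndk-r12b",
}


def check_android_dirs(root, required=REQUIRED_DIRS):
    """Return {label: bool} telling whether each required directory exists under root."""
    root = Path(root).expanduser()
    return {label: (root / rel).is_dir() for label, rel in required.items()}


if __name__ == "__main__":
    for label, ok in check_android_dirs("~/tensorflow").items():
        print(f"{label:22} {'ok' if ok else 'MISSING'}")
```

If anything prints MISSING, rerun the SDK manager or fix the path before building.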

Enable USB debugging and adb

In order to use adb, you have to put your phone into developer mode and enable USB debugging. To do this, make sure the phone isn’t connected to a computer via USB and:

- Go to Settings – General – About Phone

- Go to Software info and touch “Build number” seven times in a row

- This will start a counter and will tell you when you are in Developer Mode

- Go to Settings – General – Developer Options

- Enable USB Debugging

Every Android phone is different, but I found my LG G4 had to be in PTP mode to use adb. You will also have to confirm the debugging connection on the phone after you plug it into your computer. When a screen appears on your phone saying “Allow USB debugging,” make sure you check the box “Always allow from this computer” and press OK.

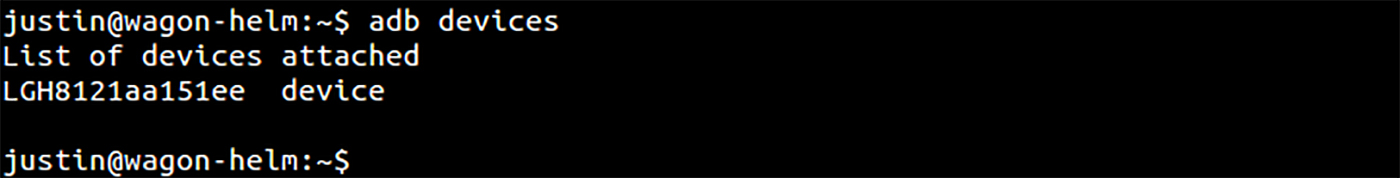

To test if this works, we can install adb, plug in our phone, and enter:

$ sudo apt-get install android-tools-adb
$ adb devices

You should get a readout listing your phone’s serial number followed by the word “device.”
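If you script these checks, it helps to parse that readout instead of eyeballing it. The Python sketch below turns `adb devices` output into a serial-to-state mapping: “device” means the phone is ready, while “unauthorized” means the “Allow USB debugging” prompt hasn’t been accepted yet. The helper names are hypothetical, and the parser assumes the usual format of a “List of devices attached” header followed by one serial and state per line.

```python
import shutil
import subprocess


def parse_adb_devices(output):
    """Parse `adb devices` output into a {serial: state} dict."""
    devices = {}
    for line in output.splitlines():
        line = line.strip()
        # Skip blank lines, the header, and "* daemon ... *" status messages.
        if not line or line.startswith("List of devices") or line.startswith("*"):
            continue
        parts = line.split()
        # Each device line is "<serial><whitespace><state>".
        devices[parts[0]] = parts[1] if len(parts) > 1 else ""
    return devices


def connected_devices():
    """Run `adb devices` and return the parsed {serial: state} dict."""
    result = subprocess.run(
        ["adb", "devices"],
        stdout=subprocess.PIPE,
        universal_newlines=True,
        check=True,
    )
    return parse_adb_devices(result.stdout)


if __name__ == "__main__":
    if shutil.which("adb") is None:
        print("adb not found; install android-tools-adb first")
    else:
        for serial, state in connected_devices().items():
            print(serial, state)
```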

Build the APK

$ cd ~/tensorflow
$ bazel build //tensorflow/examples/android:tensorflow_demo

Install the APK

This is the only step I could not test objectively. Every Android device is different; if you are having issues, I suggest upgrading your device to Android 6.0 or later. On my friend’s Moto G, I had to remove the -g flag from this command:

$ adb install -r -g bazel-bin/tensorflow/examples/android/tensorflow_demo.apk
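In this command, -r replaces an already-installed copy of the app, and -g grants all of its runtime permissions (such as camera access) at install time. Runtime permissions arrived with Android 6.0 (API level 23), so my guess is that older devices like that Moto G reject -g. The Python sketch below adds the flag only when the phone reports API level 23 or higher. The helper names are hypothetical; reading the level from the ro.build.version.sdk system property is an assumption about your device setup.

```python
import subprocess

APK = "bazel-bin/tensorflow/examples/android/tensorflow_demo.apk"


def device_api_level():
    """Read the connected device's API level from its ro.build.version.sdk property."""
    result = subprocess.run(
        ["adb", "shell", "getprop", "ro.build.version.sdk"],
        stdout=subprocess.PIPE,
        universal_newlines=True,
        check=True,
    )
    return int(result.stdout.strip())


def adb_install_command(apk_path, api_level):
    """Build the adb install command, adding -g only on API level 23 and up."""
    cmd = ["adb", "install", "-r"]  # -r: replace the installed app, keeping its data
    if api_level >= 23:
        # -g: grant all runtime permissions (e.g. camera) at install time.
        cmd.append("-g")
    cmd.append(apk_path)
    return cmd
```

Run from ~/tensorflow, `subprocess.run(adb_install_command(APK, device_api_level()), check=True)` would then install the APK with or without -g as appropriate.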

You can now have fun with TensorFlow and the Inception classifier on your Android device. I find the best part is the humorous misclassifications it sometimes makes. Keep in mind that the Inception classifier only recognizes the 1,000 categories from the ImageNet challenge.

Using a custom classifier

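To use the custom graph from our own classifier, we have to optimize the graph file for mobile use and put it in the assets directory. The optimize_for_inference.py tool strips out the parts of the graph that are only needed for training. Run the command from ~/tensorflow, since the paths below are relative to it.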

$ python ~/tensorflow/tensorflow/python/tools/optimize_for_inference.py \
    --input=tf_files/retrained_graph.pb \
    --output=tensorflow/examples/android/assets/retrained_graph.pb \
    --input_names=Mul \
    --output_names=final_result

Copy labels into assets folder

$ cp ~/tensorflow/tf_files/retrained_labels.txt ~/tensorflow/tensorflow/examples/android/assets/
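The app pairs each output of final_result with the label on the matching line of this file, so the file should contain exactly one label per line, in the order the retraining script wrote them. The Python sketch below flags blank or duplicated lines, which would likely misalign labels and scores. It is a hypothetical helper, not part of the TensorFlow demo, and the example labels in the test are only illustrative.

```python
from pathlib import Path


def label_problems(text):
    """Return human-readable problems found in the text of a labels file."""
    problems = []
    seen = set()
    for i, line in enumerate(text.splitlines()):
        label = line.strip()
        if not label:
            problems.append(f"line {i + 1} is blank")
        elif label in seen:
            problems.append(f"line {i + 1} duplicates {label!r}")
        seen.add(label)
    return problems


if __name__ == "__main__":
    path = Path(
        "~/tensorflow/tensorflow/examples/android/assets/retrained_labels.txt"
    ).expanduser()
    if path.is_file():
        text = path.read_text()
        print(len(text.splitlines()), "labels")
        for problem in label_problems(text):
            print("warning:", problem)
```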

Edit ClassifierActivity.java

$ gedit ~/tensorflow/tensorflow/examples/android/src/org/tensorflow/demo/ClassifierActivity.java

To use our custom graph, replace the corresponding constants in this file with the values below and save it. (If you want to revert to the original file, you can find a copy here.)

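private static final int INPUT_SIZE = 299;                 // retrained Inception v3 takes 299x299 images
private static final int IMAGE_MEAN = 128;                 // pixels are scaled as (value - IMAGE_MEAN) / IMAGE_STD
private static final float IMAGE_STD = 128;
private static final String INPUT_NAME = "Mul";            // matches --input_names passed to optimize_for_inference.py
private static final String OUTPUT_NAME = "final_result";  // matches --output_names
private static final String MODEL_FILE = "file:///android_asset/retrained_graph.pb";     // optimized graph in assets
private static final String LABEL_FILE = "file:///android_asset/retrained_labels.txt";   // labels copied into assets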
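Why 128 and 128? As I understand it, the Mul node sits right after the Inception v3 graph’s own resize-and-normalize step, so when we feed Mul directly the app has to do that scaling itself. The demo computes (channel - IMAGE_MEAN) / IMAGE_STD for each color channel, which maps 0 to 255 onto roughly -1 to 1. The Python sketch below reproduces that arithmetic; the function names are hypothetical, and the unpacking assumes Android’s packed 0xAARRGGBB pixel format.

```python
INPUT_SIZE = 299
IMAGE_MEAN = 128
IMAGE_STD = 128.0

# Floats fed to the "Mul" input for one camera frame: 299 * 299 pixels * 3 channels.
INPUT_FLOATS = INPUT_SIZE * INPUT_SIZE * 3


def normalize_channel(value, mean=IMAGE_MEAN, std=IMAGE_STD):
    """Scale one 0-255 channel value as (value - mean) / std."""
    return (value - mean) / std


def normalize_argb(argb):
    """Split a packed 0xAARRGGBB pixel into normalized (r, g, b) floats; alpha is dropped."""
    r = (argb >> 16) & 0xFF
    g = (argb >> 8) & 0xFF
    b = argb & 0xFF
    return tuple(normalize_channel(c) for c in (r, g, b))
```

For example, a channel value of 0 becomes -1.0, 128 becomes 0.0, and 255 becomes 0.9921875, and each frame contributes 268,203 floats.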

Rebuild the APK

$ cd ~/tensorflow
$ bazel build //tensorflow/examples/android:tensorflow_demo

Reinstall the APK

$ adb install -r -g bazel-bin/tensorflow/examples/android/tensorflow_demo.apk

And there we have it! You can now use your own custom classifier on your Android device. There are endless ideas that researchers and hobbyists alike could experiment with using this technology. One idea I had was to train a classifier to recognize nutrient deficiencies or other botanical ailments from images of unhealthy leaves. If you would like to learn how to further compress the graph file and how to use a classifier on an iPhone, you can follow Pete Warden’s tutorial.

I hope I’ve inspired you and can’t wait to see what you come up with! Feel free to tag me on Twitter @wagonhelm or #TensorFlow and share what you created.

Note: This post has been updated to be compatible with TensorFlow v1.1