Glass blocks (source: stux via Pixabay)

Earlier, we stated that “XenServer is a pre-packaged, Xen-based virtualization solution.” This implies that anyone with sufficient skill can re-create XenServer by starting with the Xen hypervisor. In reality, anyone embarking on this task must make a rather large number of decisions, and thankfully the team at Citrix has already made the bulk of them. In this chapter, we’ll cover the core components that make up a production XenServer deployment.

XenServer Isn’t Linux, but dom0 Is

The misconception that XenServer is Linux is easy to arrive at because, from installation to privileged user space access, everything looks, feels, and behaves much like a standard Linux environment. The boot loader used is extlinux, and the installer uses a familiar dialog for interactive setup and post installation. The administrator ends up within a Linux operating system, logged in as the privileged user named root.

Post installation, when the Xen hypervisor starts, it instantiates a privileged VM known as the control domain or, as it is commonly referred to, dom0. This control domain is a Linux VM with a custom kernel and a modified CentOS base with a very small footprint. From an administrative point of view, dom0 can be seen as a true, highly privileged VM that is responsible for core operations within Xen-based virtualization.

If you’re familiar with Linux-based systems running open source virtualization solutions, such as CentOS with KVM installed, your first instinct may be to log in and attempt to configure the XenServer installation from within the control domain. You might also expect that XenServer will automatically accept any Linux-style configuration changes you make to core items like storage and networking. And for those of you who have worked with VMware vSphere in the past, this Linux environment may appear similar to the service console.

The point we are making here is that while XenServer isn’t Linux, and dom0 is, being a Linux expert is not a requirement. Rarely will changes to Linux configuration files inside of dom0 be required, and we want to guide you away from making your administrative life any more complicated than it needs to be. The control domain for XenServer has a very rich command-line interface; and where changes may be needed, they will be discussed within this book.

For those of you who are familiar with Linux, you might encounter situations where commands and packages appear to be missing. As an example, if you try to execute yum update, you’ll quickly find that the repository yum points to is empty. It might then become tempting to point the yum package management utility at an upstream CentOS or EPEL (Extra Packages for Enterprise Linux) software repository.

Warning

Why yum Is Disabled

The XenServer control domain is highly customized to meet the needs of a virtualization platform. As a result, installing packages not explicitly designed or certified for XenServer could destabilize the XenServer host or reduce its scalability and performance. Even if host operations aren’t impacted, expect that when a XenServer update or upgrade is installed, any third-party or additional packages might break or be completely removed, and that configuration changes made to files XenServer isn’t explicitly aware of could be overwritten.

Now, with these warnings out of the way, here are the Linux operations that are perfectly safe in a XenServer environment:

- Issuing any XenServer xe command

  If the command is issued as part of a script, be sure to back up that script because a XenServer update might overwrite or remove it. Throughout this book, we’ll provide instructions based on xe commands.

- Interactively running any Linux command that queries the system for information

  It’s important to note that some commands might have richer XenServer equivalents. A perfect example of this is top. While this utility is available as part of the user space in XenServer, the information it provides only reflects the Linux processes running in dom0, not virtual machine or physical host statistics. The utility xentop is a XenServer equivalent that provides richer information on the running user VMs in the system, and is also executed from dom0. Throughout this book, you’ll see references to extended commands that, as an administrator, you’ll find solve specific tasks.

- Directly modifying configuration files that XenServer explicitly tracks

  A perfect example of such a configuration file is /etc/multipath.conf. Because dom0 is a CentOS distribution, it also contains support for the majority of the same devices an equivalent CentOS system would. If you wish to connect iSCSI storage in a multipath manner, you will likely need to modify /etc/multipath.conf and add in hardware-specific configuration information.
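To illustrate the backup advice in the first item above, here is a minimal sketch that copies a file to a timestamped sibling before it is edited. The function name backup_file and the .bak.<timestamp> suffix are assumptions made for this example; they are not XenServer conventions.

```shell
#!/bin/sh
# Hypothetical helper: copy a file to "<file>.bak.<timestamp>" before editing it.
# Prints the path of the copy on success; returns non-zero if the source is missing.
backup_file() {
    src=$1
    if [ ! -f "$src" ]; then
        echo "no such file: $src" >&2
        return 1
    fi
    dest="$src.bak.$(date +%Y%m%d%H%M%S)"
    # -p preserves mode and timestamps so the copy is a faithful snapshot.
    cp -p "$src" "$dest" && echo "$dest"
}

# Example, run inside dom0:
#   backup_file /etc/multipath.conf
```

Because an update may also remove files stored in dom0, keeping a second copy off the host is likely the safer habit.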

Architecture Interface

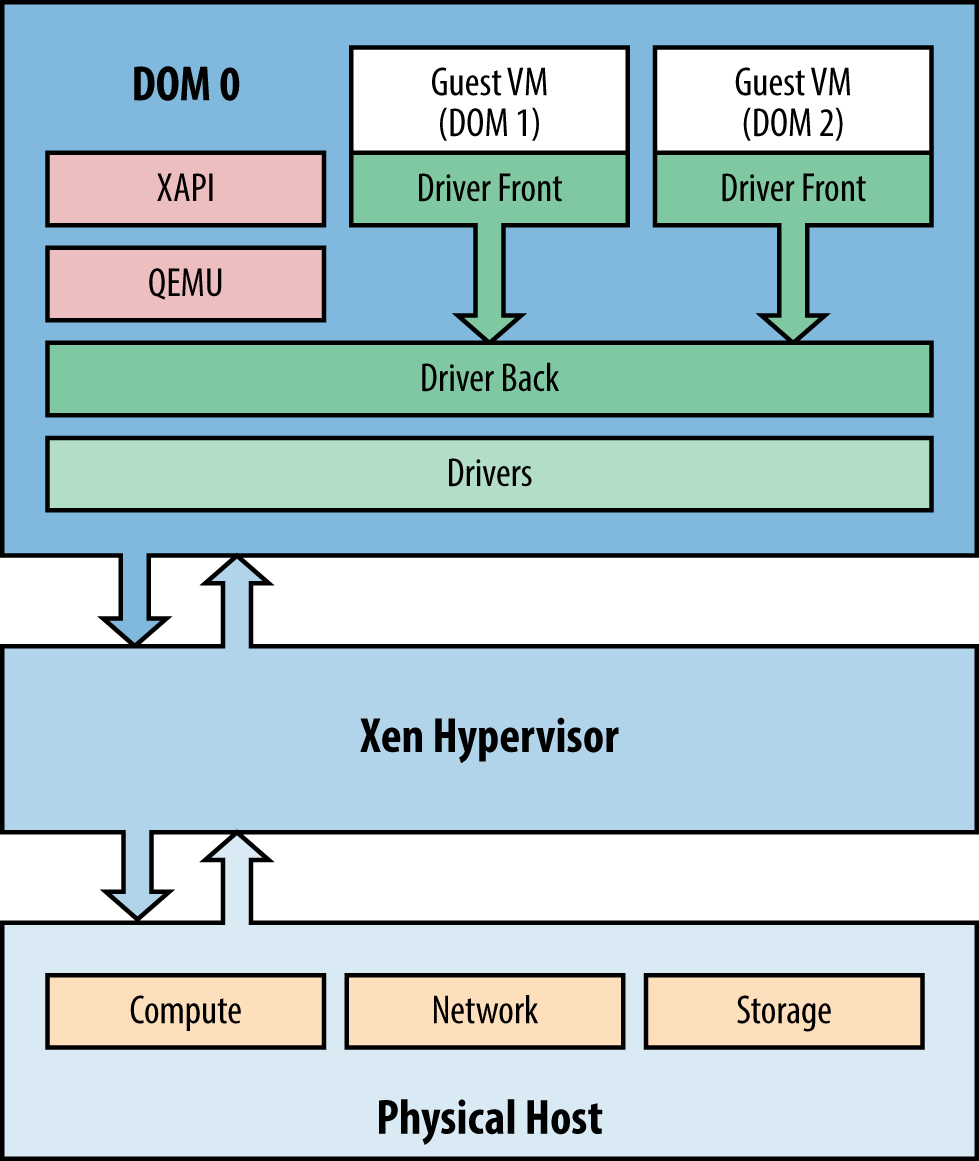

Because XenServer isn’t Linux, but dom0 is, what are the core interfaces within a XenServer environment? For that we need a diagram; see Figure 1-1. In this diagram, we see three main hardware elements: compute, network, and storage. Each of the lines represents how access is maintained. The first software element is the hypervisor. It is loaded from local storage and interfaces with compute to provide virtual machine services.

The first virtual machine is dom0, and as has already been discussed, dom0 is a privileged domain. Unprivileged domains are known as domU, or more simply, “Guest VMs,” “guests,” or just “VMs.” All domains are controlled by the hypervisor, which provides an interface to compute services. VMs, of course, need more than just compute, so dom0 provides access to the hardware via Linux device drivers. The device driver then interfaces with a XenServer process, thus providing a virtual device interface using a split-driver model. The interface is called a split-driver model because one portion of the driver exists within dom0 (the backend), while the remainder of the driver exists in the guest (the frontend).

This device model is supported by processes from projects including the Quick Emulator (QEMU). Because HVM and PVHVM guests (discussed elsewhere in this book) do not contain para-virtualization (PV) drivers, QEMU and QEMU-dm emulate aspects of hardware components, such as the BIOS, in addition to providing network and disk access.

Finally, tying everything together is a toolstack, which for XenServer is also from the Xen Project and is called XAPI.

XenCenter: A Graphical Xen Management Tool

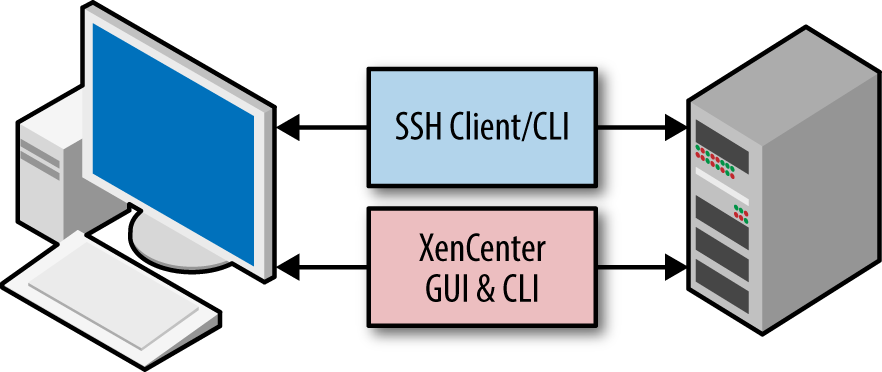

Once a XenServer host is installed, it can be managed immediately via Secure Shell (SSH) access to the command-line interface (CLI). XenServer can also be managed by using XenCenter, a Windows-based, graphical interface for visualization and video terminal access to one or more XenServer hosts (Figure 1-2).

XenCenter provides a rich user experience to manage multiple XenServer hosts, resource pools, and the entire virtual infrastructure associated with them. While other open source and commercial tools exist to manage XenServer, XenCenter is specifically designed in parallel with each XenServer release. XenCenter takes user credentials and then interacts with XenServer using the XenAPI.

XenCenter is designed to be backward compatible with legacy XenServer versions, which means best practice is to always use the most recent XenCenter available. If you require the specific version of XenCenter that shipped with the XenServer software installed on a given host, you can obtain it by opening a web browser and entering the IP address of your XenServer host.

Core Processes

The core processes of a XenServer host are what differentiate XenServer from other Xen-based distributions or from other implementations of dom0 that might exist. Each of the following core processes implements a key feature of XenServer, is responsible for its scalability, or is critical to its security.

XAPI

XAPI is the core toolstack interface and exposes the Xen management API. It is responsible for the coordination of all operations across a XenServer environment and interfaces directly with most critical components.

XAPI also responds to HTTP requests on port 80 to provide access to download the XenCenter installer.

Log data relevant to XAPI can be found in either /var/log/xensource.log or /var/log/audit.log. These logs are particularly relevant when administrative tools, such as XenCenter, use XAPI to manage XenServer infrastructure.

xhad

xhad is the XenServer High Availability (HA) daemon. It is automatically started if the XenServer host is in a pool that has HA enabled on it. This process is responsible for all heartbeat activities and exchanges heartbeat information with other member servers to determine which hosts have definitely failed due to watchdog timer failure and subsequent host fencing.

Warning

High Availability

It is strongly recommended that a XenServer deployment utilizing HA should have at least three XenServer hosts within a pooled configuration. While it is possible to enable HA with two hosts, heartbeat priority will be based on the XenServer host unique identifier.

Because XAPI is a critical component of operation, xhad serves as a XAPI watchdog when HA is enabled. If XAPI appears to have failed, then xhad will restart it. HA should always be configured via XAPI commands, and the resultant configuration will be stored on each host in /etc/xensource/xhad.conf.

Log data relevant for xhad can be found on dom0 in /var/log/xha.log.

xenopsd

The Xen Operations daemon, xenopsd, is responsible for managing the life of a Guest VM while providing separation between management (XAPI) and virtualization processes (Xen). As a Guest VM is created or started, a xenopsd process is spawned. That process manages low-level tasks for the Guest VM, such as resource manipulation and the collection of resource usage statistics for dom0.

Log data relevant to xenopsd can be found in /var/log/xensource.log.

xcp-rrdd

xcp-rrdd is a daemon that is designed to receive and submit data in a round-robin fashion, which is then stored in a round-robin database. This daemon receives metrics from xenopsd regarding resource usage of Guest VMs. Examples of such statistics are disk IOPS, CPU load, and network usage. Where XenServer’s NVIDIA GRID GPU or vGPU pass-through technology is used, metrics for these workloads are also collected. This information is then distributed back to the administrator—on request, such as with XenCenter—for viewing both current and historical performance for Guest VMs.

Log data relevant to xcp-rrdd can be found in both /var/log/xcp-rrdd-plugins.log and /var/log/xensource.log.

xcp-networkd

This daemon is responsible for the monitoring and reporting of XenServer network interfaces, such as the virtual bridged network.

SM

SM is the storage manager and is responsible for mapping supported storage solutions into XAPI as storage repositories, plugging virtual storage devices into storage repositories, and handling storage operations like storage migration and snapshots.

Log data relevant to the storage manager can be found in /var/log/SMlog.

perfmon

Perfmon is a daemon that tracks dom0 performance and statistics.

mpathalert

mpathalert sends notifications to XenCenter, or another management interface polling for XAPI messages, when storage issues related to multipathing occur. This is a useful tool to troubleshoot networking issues for specific storage types and to also prevent single points of failure, such as where one path is down and is not coming back online.

Log data relevant to mpathalert can be found in /var/log/daemon.log and /var/log/messages.

snapwatchd

The snapwatchd daemon is responsible for calling, monitoring, and logging for processes related to VM snapshots. Information such as the virtual disk state is kept in sync, allowing XAPI to track any changes in a Guest VM’s disk or associated snapshots.

Log data relevant to snapwatchd can be found in /var/log/SMlog.

stunnel

stunnel, or the secure tunnel, is used to encrypt various forms of traffic between real or virtual points by using OpenSSL. Client connections to Guest VMs, such as vncterm access through XenCenter, leverage stunnel to ensure these types of sessions are kept separate and secure.

Log data relevant to stunnel can be found in /var/log/secure and /var/log/xensource.log.

xenconsoled

The recording and logging of console-based activity, inclusive of guest and control domain consoles, is handled by xenconsoled.

Log data relevant to xenconsoled can be found in /var/log/xen/ or /var/log/daemon.log.

xenstored

The xenstored daemon is a database that resides in dom0. It supports low-level operations, such as virtual memory and shared memory, and interfaces with XenBus for general I/O operations. The XenBus provides a device abstraction bus similar to PCI that allows for communication between Guest VMs and dom0. Device drivers interact with the xenstored configuration database to process device actions such as “poweroff” or “reboot” resulting from VM operations.

Log data relevant to xenstored can be found in /var/log/xenstored-access.log, /var/log/messages, and /var/log/xensource.log.

squeezed

squeezed is the dom0 process responsible for dynamic memory management in XenServer. XAPI calls squeezed to determine if a Guest VM can start. In turn, squeezed is responsible for interfacing with each running Guest VM to ensure it has sufficient memory and can return host memory to XenServer should a potential overcommit of host memory be encountered.

Log data relevant to squeezed can be found in /var/log/xensource.log, /var/log/xenstored-access.log, and depending on your XenServer version, /var/log/squeezed.log.
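The log locations listed for each core process above can be gathered into a single lookup. The following is a minimal sketch: the function name logs_for is an assumption for this example, and the paths are simply those named in this chapter, so confirm them on your own XenServer version.

```shell
#!/bin/sh
# Hypothetical lookup: print the log locations this chapter lists for a core process.
logs_for() {
    case $1 in
        xapi)        echo "/var/log/xensource.log /var/log/audit.log" ;;
        xhad)        echo "/var/log/xha.log" ;;
        xenopsd)     echo "/var/log/xensource.log" ;;
        xcp-rrdd)    echo "/var/log/xcp-rrdd-plugins.log /var/log/xensource.log" ;;
        SM)          echo "/var/log/SMlog" ;;
        mpathalert)  echo "/var/log/daemon.log /var/log/messages" ;;
        snapwatchd)  echo "/var/log/SMlog" ;;
        stunnel)     echo "/var/log/secure /var/log/xensource.log" ;;
        xenconsoled) echo "/var/log/xen/ /var/log/daemon.log" ;;
        xenstored)   echo "/var/log/xenstored-access.log /var/log/messages /var/log/xensource.log" ;;
        # /var/log/squeezed.log exists only on some XenServer versions.
        squeezed)    echo "/var/log/xensource.log /var/log/xenstored-access.log /var/log/squeezed.log" ;;
        *)           echo "no log locations recorded for: $1" >&2; return 1 ;;
    esac
}

# Example, run inside dom0:
#   for f in $(logs_for xhad); do tail -n 20 "$f"; done
```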

Critical Configuration Files

During host startup, dom0 uses init scripts and XAPI to validate its configuration. This ensures that a XenServer can return to a known valid configuration state following a host outage, such as a restart or recovery from a loss of power. If Linux configuration files have been manually modified by an administrator, it’s not uncommon for XAPI to overwrite those changes from its configuration database.

Warning

Modification of Critical Configuration Files

In this section of the book, the files and directories listed are for administrative purposes only. Unless specified, do not modify or alter the directories and/or contents.

As a failsafe mechanism, if configuration fails the sanity checks, dom0 will boot into maintenance mode, and VMs will not start. Should this occur, administrative intervention will be required to correct the issue and bring the host back online. This model ensures a healthy system and validates the integrity of the physical host, virtual machine data, and associated storage.

/boot/extlinux.conf

The bootloader used by XenServer 6.5 is extlinux: a derivative of the Syslinux Project specifically geared toward booting kernels stored on EXT-based filesystems. Example 1-1 shows a default configuration of the kernel boot.

This configuration file provides instructions, based on a label convention, as to which kernel configuration should be loaded along with additional parameters once the physical hardware has been powered on. The default label (or kernel definition) used to load XenServer will always be flagged under the xe label.

Example 1-1. Example of the default XenServer kernel boot configuration

label xe
  # XenServer
  kernel mboot.c32
  append /boot/xen.gz mem=1024G dom0_max_vcpus=2 dom0_mem=2048M,max:2048M watchdog_timeout=300 lowmem_emergency_pool=1M crashkernel=64M@32M cpuid_mask_xsave_eax=0 console=vga vga=mode-0x0311 --- /boot/vmlinuz-2.6-xen root=LABEL=root-enhlwylk ro xencons=hvc console=hvc0 console=tty0 quiet vga=785 splash --- /boot/initrd-2.6-xen.img

Additionally, kernel configurations are also offered for serial console access, or xe-serial, as well as a safe kernel, or safe, as shown in Example 1-2. These can be accessed during the initial boot sequence by entering menu.c32 when prompted by the boot: prompt.

Example 1-2. Alternate kernels

label xe-serial
  # XenServer (Serial)
  kernel mboot.c32
  append /boot/xen.gz com1=115200,8n1 console=com1,vga mem=1024G dom0_max_vcpus=2 dom0_mem=2048M,max:2048M watchdog_timeout=300 lowmem_emergency_pool=1M crashkernel=64M@32M cpuid_mask_xsave_eax=0 --- /boot/vmlinuz-2.6-xen root=LABEL=root-enhlwylk ro console=tty0 xencons=hvc console=hvc0 --- /boot/initrd-2.6-xen.img
label safe
  # XenServer in Safe Mode
  kernel mboot.c32
  append /boot/xen.gz nosmp noreboot noirqbalance acpi=off noapic mem=1024G dom0_max_vcpus=2 dom0_mem=2048M,max:2048M com1=115200,8n1 console=com1,vga --- /boot/vmlinuz-2.6-xen nousb root=LABEL=root-enhlwylk ro console=tty0 xencons=hvc console=hvc0 --- /boot/initrd-2.6-xen.img
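To see which labels a given host actually offers without reading the whole file, a read-only query such as the following sketch may help. The function name list_boot_labels is an assumption for this example; it relies only on the label keyword shown in Examples 1-1 and 1-2.

```shell
#!/bin/sh
# Hypothetical helper: print each boot label defined in an extlinux-style file.
# awk splits on whitespace, so indented "label" lines are matched as well.
list_boot_labels() {
    awk '$1 == "label" { print $2 }' "${1:-/boot/extlinux.conf}"
}

# Example, run inside dom0:
#   list_boot_labels
```

On a host matching the examples above, this should list xe, xe-serial, and safe.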

/etc/hosts

The hosts file is populated so that dom0 processes can resolve any of the following entries:

- localhost

- localhost.localdomain

- 127.0.0.1

The following command allows you to view the contents of the hosts file:

# cat /etc/hosts
127.0.0.1 localhost localhost.localdomain

/etc/hostname

The hostname file contains the distinct name for the host that was provided during installation. This file is only relevant to the control domain unless it is on record within the infrastructure’s DNS (Domain Name System).

As an example, the hostname can be xenified01 and stored as such in /etc/hostname:

# cat /etc/hostname
xenified01

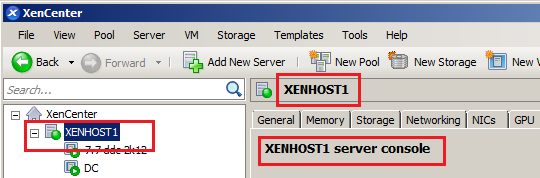

However, in XenCenter this host can be given a friendly name, such as XENHOST1 for visual purposes, as shown in Figure 1-3.

/etc/multipath.conf

Many storage devices offer the ability for a host, such as XenServer, to leverage multiple paths or I/O channels for storage purposes. XenServer supports storage configurations that use multiple paths. This support is provided via device mapper multipathing (DM-MP), and the configuration information is stored in /etc/multipath.conf. The default /etc/multipath.conf file covers a wide range of modern storage devices ensuring XenServer hosts may utilize storage redundancy, failover, and aggregation for supported storage solutions.

Warning

Do Not Alter multipath.conf Without Guidance

While dom0 may look like a standard CentOS distribution, modifying multipath.conf following Linux examples found on the Internet can easily have an adverse impact on system stability and performance. Always modify multipath.conf under the guidance of Citrix technical support or your hardware vendor.

/etc/resolv.conf

The resolv.conf file contains DNS entries for XenServer that were specified during installation (discussed elsewhere in this book) or changed via XenCenter or an XAPI command.

While this file can be manually modified for troubleshooting name resolution issues, on reboot, the XAPI configuration will overwrite any modifications made to this file in an effort to retain valid DNS server settings configured via XenCenter or through the xe command-line tool.
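When troubleshooting name resolution, it can be handy to see only the configured DNS servers. This sketch assumes the standard resolv.conf nameserver keyword; the function name list_nameservers is made up for this example.

```shell
#!/bin/sh
# Hypothetical helper: print the address on each "nameserver" line.
list_nameservers() {
    awk '$1 == "nameserver" { print $2 }' "${1:-/etc/resolv.conf}"
}

# Example, run inside dom0:
#   list_nameservers
```

This is a read-only query, so it is unaffected by XAPI rewriting the file on reboot.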

/etc/iscsi/initiatorname.iscsi

Open-iSCSI is installed to facilitate storage connections that use iSCSI-based technology. During an install, a unique iSCSI Qualified Name (IQN) is generated for each XenServer host and is stored, per XenServer host, in /etc/iscsi/initiatorname.iscsi.

As an administrator trying to lock down iSCSI storage for specific hosts, the IQN can be determined per XenServer host, as shown in Example 1-3.

Example 1-3. Finding the XenServer’s IQN

# cat /etc/iscsi/initiatorname.iscsi
InitiatorName=iqn.1994-05.com.redhat:dc785c10706

The output provides the IQN, and this can then be added to the initiator white list on the storage device. If a reinstall of XenServer is done, this value will change and all iSCSI hosts will need to have their white lists updated with the new, randomly generated IQN.
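If the IQN needs to be pasted into a storage array's white list or collected from several hosts, printing only the value is convenient. This is a minimal sketch that assumes the InitiatorName=<iqn> layout shown in Example 1-3; the function name get_iqn is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print only the IQN from an initiatorname.iscsi-style file.
get_iqn() {
    # -n suppresses default output; p prints only lines where the prefix was removed.
    sed -n 's/^InitiatorName=//p' "${1:-/etc/iscsi/initiatorname.iscsi}"
}

# Example, run inside dom0:
#   get_iqn
```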

/etc/xensource/

In line with standard Linux convention, many “config” files related to Xen, XenServer, and other core XAPI processes are stored here. The majority of these files are generated on installation, updated from Xen-based tools, and in some cases, created when a XenServer administrator enables a given feature.

boot_time_cpus

This is populated on each boot and contains information regarding the physical host’s socket and cores-per-socket information. For Linux users, if this seems familiar, it is a dump of cpuinfo that is found in the /proc/ runtime filesystem. Example 1-4 shows the command to view the contents of the boot_time_cpus file. This information is not only saved in this file but also known by dom0. In turn, the XAPI database is updated with this information and propagated to peers where the host is a member in a XenServer pool.

Example 1-4. View boot_time_cpus

# cat /etc/xensource/boot_time_cpus

To compare the accuracy of this file against dom0’s Linux-based /proc/ filesystem (populated at runtime), issue the command listed in Example 1-5.

Example 1-5. Checking for CPU information and its accuracy

# diff /etc/xensource/boot_time_cpus /proc/cpuinfo

Normally the command produces no output, meaning that no difference exists between /etc/xensource/boot_time_cpus and /proc/cpuinfo. Because /proc/cpuinfo is generated by dom0 after the host boots and the Xen kernel begins its boot processes, any difference between these files would be an indication of a serious misconfiguration.
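For scripted health checks, the same comparison can be reduced to a single status word. The following is a sketch only: the function name cpu_info_matches and its output strings are assumptions for this example, and it uses cmp rather than diff because only equality matters here.

```shell
#!/bin/sh
# Hypothetical check: report whether the boot-time CPU record matches the live one.
cpu_info_matches() {
    boot=${1:-/etc/xensource/boot_time_cpus}
    live=${2:-/proc/cpuinfo}
    # cmp -s is silent and returns 0 only when both files are byte-identical.
    if cmp -s "$boot" "$live"; then
        echo "match"
    else
        echo "MISMATCH"
        return 1
    fi
}

# Example, run inside dom0:
#   cpu_info_matches
```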

/etc/xensource/bugtool/

The bugtool directory has a few subdirectories and XML files, which define scope, attributes, and limits for data output as it relates to XenServer’s built-in bugtool. Such files dictate tools, maximum output size, and other criteria necessary for the crash dump kernel (or server status report) to access, exploit, and store data needed under erroneous conditions.

/etc/xensource/db.conf

This file, along with a slightly longer version named db.conf.rio, describes the following XenServer database configuration information:

- Where to find the XAPI database

- The format to use when reading the database

- Sanity information relating to session usage and validity

/etc/xensource/installed-repos/

The installed-repos directory contains subdirectories that, either from a fresh installation or upgrade, list the repositories used during installation of the host. If supplemental packs are installed, they will also be listed under installed-repos; and upon upgrade, you will be alerted to the presence of the supplemental pack. This ensures that if an installation is dependent upon a specific supplemental pack, during upgrade, an administrator can verify and obtain a suitable replacement.

/etc/xensource/master.d/

This directory can contain one or more init-level scripts that were used during host initialization. In addition, an example init-level script is provided and for XenServer administrators is a good reference illustrating the flow of run-level activities, initialization sequencing, and resources called. This example script should be named 01-example, but if one is not present on your system, this is OK.

/etc/xensource/network.conf

The network.conf file describes to the administrator what type of virtual network fabric is being used by a XenServer host. Example 1-6 shows the command to view this file. There are two syntactical options: bridge or openvswitch. bridge corresponds to the legacy Linux network bridge, while openvswitch corresponds to Open vSwitch, or ovs. The ovs is the default network backend for all installations starting with XenServer 6.0, but if an older system is upgraded, then the Linux bridge may still be in place. It’s important to note that new network functionality will only be developed for the ovs, and the Linux bridge may be retired at some point.

Example 1-6. View network.conf

# cat /etc/xensource/network.conf

The output will either state “bridge” or “openvswitch,” but this is a powerful piece of information to have—especially in diagnosing XenServer pool issues where the virtual network fabric, or “backend networking,” is in question. Furthermore, there are some third-party Citrix products that may require that the virtual networking fabric is of one type or another. If it is found that a particular host needs to have its virtual network fabric switched, ensure all Guest VMs are halted (or migrated to another XenServer host), and issue the command listed in Example 1-7.

Example 1-7. Change network backend

# xe-switch-network-backend {bridge | openvswitch}

The host should then be rebooted; upon reboot, it will conduct network operations via the mode specified.
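When comparing hosts in a pool, it can help to read the backend in a form a script can act on. This sketch assumes network.conf holds a single word, as described above; the function name network_backend is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print the configured backend, rejecting unexpected values.
network_backend() {
    conf=${1:-/etc/xensource/network.conf}
    # Strip whitespace and the trailing newline so the value compares cleanly.
    backend=$(tr -d '[:space:]' < "$conf") || return 1
    case $backend in
        bridge|openvswitch) echo "$backend" ;;
        *) echo "unexpected backend: $backend" >&2; return 1 ;;
    esac
}

# Example, run inside dom0 on each pool member:
#   network_backend
```

Running it on every pool member and comparing the results is one way to spot a host whose backend differs from its peers.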

/etc/xensource/pool.conf

Another key file for troubleshooting, pool.conf is used to determine if a host is a standalone server, pool master (primary), or slave (pool member reporting to a primary XenServer). The file can have one of two values: master or slave.

Using the cat command, as illustrated in Example 1-8, one can determine from the contents of pool.conf if the XenServer host thinks it is a pool master or a pool slave/member.

Example 1-8. Determine host role

# cat /etc/xensource/pool.conf

If the result of the command is master, then the host has the role of pool master. If the result of the command includes the word slave, the host is a member server and the IP address of the pool master will also be provided. When working with a standalone XenServer host (i.e., one not part of a pool), the standalone host will assume the role of master.
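The role check described above can be sketched as a small function. It assumes a member's file reads slave:<address of the pool master>; that exact layout is an assumption not spelled out in this chapter, so verify it on your own hosts. The function name pool_role is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: report a host's pool role from a pool.conf-style file.
# Assumes members store "slave:<master address>" (layout not confirmed by the text).
pool_role() {
    conf=${1:-/etc/xensource/pool.conf}
    role=$(tr -d '[:space:]' < "$conf") || return 1
    case $role in
        master)  echo "master" ;;
        # ${role#slave:} removes the "slave:" prefix, leaving the master's address.
        slave:*) echo "slave of ${role#slave:}" ;;
        *)       echo "unrecognized role: $role" >&2; return 1 ;;
    esac
}

# Example, run inside dom0:
#   pool_role
```

This only reads the file, which keeps it clear of the editing restrictions that follow.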

Only in the event of serious configuration corruption should this file be manually edited, and then only under guidance from the XenServer support team. Examples of such corruption include:

- High Availability being enabled when it should be disabled, such as during maintenance mode

- A network communication problem among pool members

- dom0 memory exhaustion

- Timeout induced by latency when electing a new pool master

- A full, read-only root filesystem

- Stale or read-only PID file

/etc/xensource/ptoken

The ptoken file is used as a secret, along with SSL, in XenServer pools for additional security in pool member communications.

This topic, along with XenServer’s use of SSL, is discussed elsewhere in this book.

/etc/xensource/scripts/

The scripts directory contains one or more init-level scripts, much like the master.d directory.

/etc/xensource/xapi-ssl.conf

The XenAPI is exposed over port 443, but proper authentication is required to invoke or query the API. In addition to authentication, a certificate private key has to be exchanged to a trusted source to secure further communications to and from a XenServer via SSL-based encryption. This is where xapi-ssl.conf is involved because it dictates the cipher, encryption method, and where to store the XenServer’s unique certificate.

/etc/xensource/xapi-ssl.pem

The xapi-ssl.pem is a private key that is generated on installation by /etc/init.d/xapissl, which references xapi-ssl.conf to produce a unique Privacy-Enhanced Mail (PEM) file for a XenServer host. This file is what, along with two-factor authentication, is exchanged amongst XenServer hosts, XenServer administration tools, etc., to secure communications back and forth to a unique, specific XenServer host.

Replacing the xapi-ssl.pem file with a self-signed or signed certificate is discussed elsewhere in this book.

/etc/ntp.conf

In our experience, this is probably the most important of all configuration files that a XenServer administrator has liberty to modify at any time. In part, this is due to the nature of system and clock synchronization for precision. But it’s also due to the fact that any virtualization solution must facilitate time synchronization across Guest VMs. The Network Time Protocol configuration file—leveraged by ntpd—should never reference a virtual machine and should always reference a local, physical machine with no more than four entries.

While any virtualization solution will do its best to accommodate time drift, if the bare metal host’s clock drifts too far off, this can affect scheduling and can eventually bring a Guest VM down. It is vital that /etc/ntp.conf is set up accordingly, per time zone, so the host can appropriately update Guest VMs and their internal clocks. Additionally, if XenServer hosts are pooled, time drift between hosts must be minimized, and NTP settings must be identical on all pool members.
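The four-entry guideline above is easy to check mechanically. This sketch assumes time sources are declared with the standard ntp.conf server keyword; the function names and the lower bound of one entry are assumptions for this example.

```shell
#!/bin/sh
# Hypothetical helper: count "server" directives in an ntp.conf-style file.
# Comment lines are skipped because their first field is not exactly "server".
count_ntp_servers() {
    awk '$1 == "server" { n++ } END { print n + 0 }' "${1:-/etc/ntp.conf}"
}

# Report whether the count falls between one and four entries.
check_ntp_servers() {
    n=$(count_ntp_servers "$1")
    if [ "$n" -ge 1 ] && [ "$n" -le 4 ]; then
        echo "ok: $n server entries"
    else
        echo "review: $n server entries"
        return 1
    fi
}

# Example, run inside dom0 on each pool member:
#   check_ntp_servers
```

The check cannot tell whether an entry points at a physical or a virtual machine; that still has to be confirmed by hand.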

/var/xapi/

This directory contains the complete XAPI database, which is a reference of hardware utilized within a standalone XenServer installation or pool installation.

Warning

System State Information

Files within the /var/xapi/ directory contain state information and should never be manually edited under any circumstance. If modified, virtualization performance can become degraded because the XAPI database is based off the collective hardware, objects, and dependencies for virtualizing Guest VMs.

/var/patch/ and /var/patch/applied/

These two directories—/var/patch/ and /var/patch/applied/—are extremely important because between the two, they contain the footprint of hotfixes, patches, and other maintenance metadata that is essential for XAPI. Whether you have a standalone XenServer or a pool of XenServers, XAPI has to have a means to ensure that the following sequence has been successfully performed:

- Patches have been applied to a standalone host.

- Patches have been applied to all hosts in pool.

- All post-patch actions, such as a reboot, have been accomplished.

Now, while both directories contain files with unique, UUID-based filenames, it is worth noting that the most important directory is that of /var/patch/applied/, because as the name implies, this contains a minimal footprint for patches that have been applied to a host.

This directory—/var/patch/applied/—should never be deleted because it is used by XAPI to check if a pool member needs a patch applied to it, if there are new hotfixes available for your XenServer host, and so on. As such, deletion of these files could lead to a host incorrectly seeing itself as requiring a patch.

XenServer Object Relationships

While dom0 is in charge of controlling a host’s resources, it is also responsible for creating objects that represent portions of host resources. These objects are stacked to ensure that a VM’s operating system perceives real hardware, which is in reality a virtual representation of physical hardware. The difference is that these objects are assigned to a specific domU, protected, queued, and handled by dom0 to facilitate the flow of I/O for seamless virtualization. The key hardware types used across all XenServer deployments are network devices and disk storage. If the VM is hosting graphics-intensive applications, the virtualization administrator may need to assign a virtual GPU to it as well.

All objects are assigned universally unique identifiers (UUIDs) along with a secondary UUID that is known as an opaque reference. While physical objects will have a static UUID for the life span of the object, virtual objects can change depending upon system state. For example, the UUID of a virtual device may change when the VM is migrated to a different host. These distinct identifiers aid in mapping resources to objects and give the XenServer administrator a means to track such resources with xe.
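Scripts that pass identifiers to xe often benefit from a sanity check first. The following sketch tests for the standard textual UUID layout (8-4-4-4-12 hexadecimal digits); the function name is_uuid is hypothetical, and the check says nothing about whether the object actually exists or about opaque references.

```shell
#!/bin/sh
# Hypothetical helper: succeed if the argument has the textual form of a UUID.
is_uuid() {
    printf '%s\n' "$1" |
        grep -Eq '^[0-9a-fA-F]{8}(-[0-9a-fA-F]{4}){3}-[0-9a-fA-F]{12}$'
}

# Example:
#   is_uuid "$candidate" || echo "not a UUID: $candidate" >&2
```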

Network Objects

From an architecture perspective, a XenServer deployment will consist of three network types: primary management, storage, and VM traffic. The default network in all XenServer installations will automatically be assigned to the first available network interface and that network interface will become the primary-management network. While all additional network interfaces can be used for storage or VM traffic, and can also be aggregated for redundancy and potentially increased throughput, the primary-management network is key because it is responsible for:

- Allowing administrator access through authenticating tools, such as XenCenter

- Sustaining a communication link amongst pooled XenServer hosts

- Negotiating live VM migrations

- Exposing XAPI to third-party tools

Understanding the relationship between XenServer network objects is critical to properly defining a network topology for a XenServer deployment.

Note

Planning for Multiple Pools

Although we’re focused on the design and management of a single XenServer pool in this book, if your requirements dictate multiple pools, you should account for that in your design. This is particularly true for infrastructure that can be shared between pools such as the network and storage systems. These subjects are covered in more detail elsewhere in this book.

pif

The pif object references the physical network hardware as viewed from within dom0. Because dom0 is CentOS based, the default network will be labeled “eth0” and will be assigned the IP address of the XenServer host. All additional physical networks will be represented as “ethX” but only physical interfaces with a “Management” or “Storage” role will have assigned IP addresses. The list of all physical network interfaces can be obtained from the command line as shown in Example 1-9.

Example 1-9. Which interfaces are configured on a host

# xe pif-list params=all
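Because the output of xe list commands is a series of blank-line-separated records of `name ( MODE): value` lines, it is straightforward to post-process. The following Python sketch parses such text into dictionaries and picks out the interfaces that have an IP address. The sample text is a hypothetical, heavily abbreviated stand-in for real `xe pif-list params=all` output, and `parse_xe_records` is an illustrative helper rather than part of any XenServer tooling.

```python
import re

# Hypothetical, abbreviated sample; real output contains many more fields.
SAMPLE = """\
uuid ( RO)            : 11111111-aaaa-bbbb-cccc-000000000001
          device ( RO): eth0
      management ( RO): true
              IP ( RO): 192.0.2.10

uuid ( RO)            : 11111111-aaaa-bbbb-cccc-000000000002
          device ( RO): eth1
      management ( RO): false
              IP ( RO):
"""

# Field name, access mode in parentheses, a colon, then the value.
FIELD = re.compile(r"^\s*([\w-]+)\s*\(\s*\w+\s*\)\s*:\s?(.*)$")


def parse_xe_records(text):
    """Split xe-style list output into one dict per record."""
    records, current = [], {}
    for line in text.splitlines():
        if not line.strip():
            # A blank line ends the current record.
            if current:
                records.append(current)
                current = {}
            continue
        match = FIELD.match(line)
        if match:
            current[match.group(1)] = match.group(2).strip()
    if current:
        records.append(current)
    return records


pifs = parse_xe_records(SAMPLE)
devices_with_ip = [p["device"] for p in pifs if p["IP"]]
print(devices_with_ip)
```

In this made-up sample only eth0 carries an address, which matches the rule that only interfaces with a management or storage role are assigned one.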

network

Because a XenServer host will typically run many virtual machines that need to be connected to a network, a virtual switching fabric is created. Each fabric bridges all hosts in a XenServer resource pool and is implemented using Open vSwitch (OVS). The number of virtual switches contained in a XenServer environment will vary and can often be dynamic. The list of all networks can be obtained from the command line as shown in Example 1-10.

Example 1-10. Which networks are configured for a host

# xe network-list params=all

The logical connection between network and pif is maintained by the PIF-uuids field.

vif

Each VM in a XenServer environment will typically have at least one virtual NIC assigned to it. Those virtual NICs are each called a vif and are plugged into the network fabric. All vifs are assigned a MAC address by XenServer. Often, network administrators look for virtual NICs to have a MAC vendor ID that they can then filter on. Because XenServer was designed to handle a large number of VMs, the vendor ID of a universally administered MAC address would artificially limit virtual networking, so XenServer uses locally administered MAC addresses to avoid the potential of MAC collision.
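A locally administered MAC address is distinguished from a universally administered one by a single bit: the second-least-significant bit of the first octet is set. The Python sketch below checks that bit and generates a random unicast address with it set. It illustrates the address format only and is not XenServer's actual generation algorithm; both function names are hypothetical.

```python
import random


def is_locally_administered(mac):
    """True if the second-least-significant bit of the first octet is set."""
    first_octet = int(mac.split(":")[0], 16)
    return bool(first_octet & 0x02)


def random_local_mac(rng=random):
    """Generate a random unicast, locally administered MAC address."""
    octets = [rng.randrange(256) for _ in range(6)]
    # Set the locally administered bit and clear the multicast bit.
    octets[0] = (octets[0] | 0x02) & 0xFE
    return ":".join(f"{octet:02x}" for octet in octets)


print(is_locally_administered("02:00:00:00:00:01"))  # local bit set
print(is_locally_administered("00:16:3e:00:00:01"))  # vendor-prefixed address
print(random_local_mac())
```

With the vendor prefix no longer fixed, 46 of the 48 bits are free to vary, which is why collisions become unlikely even with a large number of VMs.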

The IP address a vif receives is set by the guest and communicated to XenServer through an interface in the XenServer tools. Because the XenServer network fabric implements a virtual switch, each vif plugs into a network, which in turn plugs into a pif representing a physical NIC that is then connected to a physical switch. The list of all virtual network interfaces can be found using the command line shown in Example 1-11.

Example 1-11. Current virtual network configuration for all VMs in the pool

# xe vif-list params=all
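The path from a vif through a network to one or more pifs can be followed using the fields these objects expose. The Python sketch below walks that chain over hypothetical, abbreviated records: the keys such as `vif-1` are short placeholders standing in for real UUIDs, the field names mirror xe parameters, and the sketch assumes that multiple values in `PIF-uuids` are separated by semicolons.

```python
# Placeholder records keyed by identifier; real keys would be UUIDs.
PIFS = {
    "pif-1": {"host-name-label": "host-a", "device": "eth0"},
    "pif-2": {"host-name-label": "host-a", "device": "eth1"},
    "pif-3": {"host-name-label": "host-b", "device": "eth1"},
}
NETWORKS = {
    "net-1": {"name-label": "Management", "PIF-uuids": "pif-1"},
    "net-2": {"name-label": "VM traffic", "PIF-uuids": "pif-2; pif-3"},
}
VIFS = {
    "vif-1": {"vm-name-label": "web01", "network-uuid": "net-2"},
}


def physical_paths_for_vif(vif_id):
    """Follow vif -> network -> pif and return (host, device) pairs."""
    vif = VIFS[vif_id]
    network = NETWORKS[vif["network-uuid"]]
    pif_ids = [p.strip() for p in network["PIF-uuids"].split(";") if p.strip()]
    return [(PIFS[p]["host-name-label"], PIFS[p]["device"]) for p in pif_ids]


print(physical_paths_for_vif("vif-1"))
```

A network that spans a pool lists one pif per host, so the same vif can reach the physical network through whichever host the VM happens to be running on.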

bond

Optionally, administrators can configure redundant network elements known as “bonds.” NIC bonding, also referred to as “teaming,” is a method by which two or more NICs are joined to form a logical NIC. Bonding is covered in more detail elsewhere in this book. The list of all bonded networks can be found using the command line shown in Example 1-12.

Example 1-12. Determine current bond configuration

# xe bond-list params=all

If a bond is defined, the “master,” “slaves,” and “primary-slave” configuration items all refer to pif objects.

GPU Objects

XenServer supports the use of graphics cards with VMs. A physical graphics card can be directly assigned to a VM; and with certain NVIDIA and Intel chips, the physical card can also be virtualized. This allows the capacity of a single card to be shared through virtual GPUs.

pGPU

A physical graphics processing unit (pGPU) is an object that represents a physical video card, or graphics engine, that is installed as an onboard or PCI device on the physical host. Example 1-13 shows the command to get a list of all pGPUs installed for a host, as well as an example of results after running the command.

One example of a pGPU is an onboard VGA chipset, which is used by dom0 and its console. Example 1-14 shows the command to use to find the default GPU being used by dom0. Unless a dedicated graphics adapter is installed or the host has a CPU with embedded GPU capabilities, the primary pGPU detected on boot is the only one that can be utilized by XenServer. Certain graphics adapters can be used either in GPU pass-through mode, where the entire GPU is assigned to a VM, or in virtualized graphics mode, where a portion of the GPU is assigned to each of several VMs.

Example 1-13. Determine physical GPUs installed for a host. Result shows an NVIDIA Quadro present.

# xe pgpu-list
uuid ( RO)              : 47d8c17d-3ea0-8a76-c58a-cb1d4300a5ec
       vendor-name ( RO): NVIDIA Corporation
       device-name ( RO): G98 [Quadro NVS 295]
    gpu-group-uuid ( RW): 1ac5d1f6-d581-1b14-55f1-54ef6a1954b4

Example 1-14. Determine the default GPU used by dom0

# lspci | grep "VGA"

gpu-group

A GPU group is simply a collection of graphics engines that are contained within a single, physical graphics card. If the graphics card is able to partition its resources into multiple GPU objects, then a GPU group will contain a reference to each object, as shown in Example 1-15.

Example 1-15. List GPU resources associated with physical graphics adapter

# xe gpu-group-list

vgpu

A vgpu represents a virtual graphics adapter as defined by the graphics card. The vgpu-type-list command shown in Example 1-16 returns three possible vGPU options for the given host.

Example 1-16. View the GPU types within a XenServer host

# xe vgpu-type-list
uuid ( RO)                : ad32125b-e5b6-2894-9d16-1809f032c5bb
         vendor-name ( RO): NVIDIA Corporation
          model-name ( RO): GRID K100
    framebuffer-size ( RO): 268435456

uuid ( RO)                : ee22b661-4aa0-e6e6-5876-e316c3ea09fe
         vendor-name ( RO): NVIDIA Corporation
          model-name ( RO): GRID K140Q
    framebuffer-size ( RO): 1006632960

uuid ( RO)                : 2025cc3e-c869-ef44-2757-a1994cc77c8e
         vendor-name ( RO):
          model-name ( RO): passthrough
    framebuffer-size ( RO): 0
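The framebuffer-size values in Example 1-16 are easier to compare once converted to a friendlier unit. The short Python sketch below does so for the three vGPU types listed, assuming the values are expressed in bytes. The helper name `framebuffer_mib` is hypothetical.

```python
# model-name -> framebuffer-size, copied from Example 1-16 (assumed to be bytes).
VGPU_TYPES = {
    "GRID K100": 268435456,
    "GRID K140Q": 1006632960,
    "passthrough": 0,
}


def framebuffer_mib(size_bytes):
    """Convert a framebuffer size from bytes to whole mebibytes."""
    return size_bytes // (1024 * 1024)


for model, size in VGPU_TYPES.items():
    print(f"{model}: {framebuffer_mib(size)} MiB")
```

Under that assumption, the K100 profile exposes 256 MiB and the K140Q profile 960 MiB, while the passthrough type reports no framebuffer of its own because the entire card is handed to the VM.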

Storage Objects

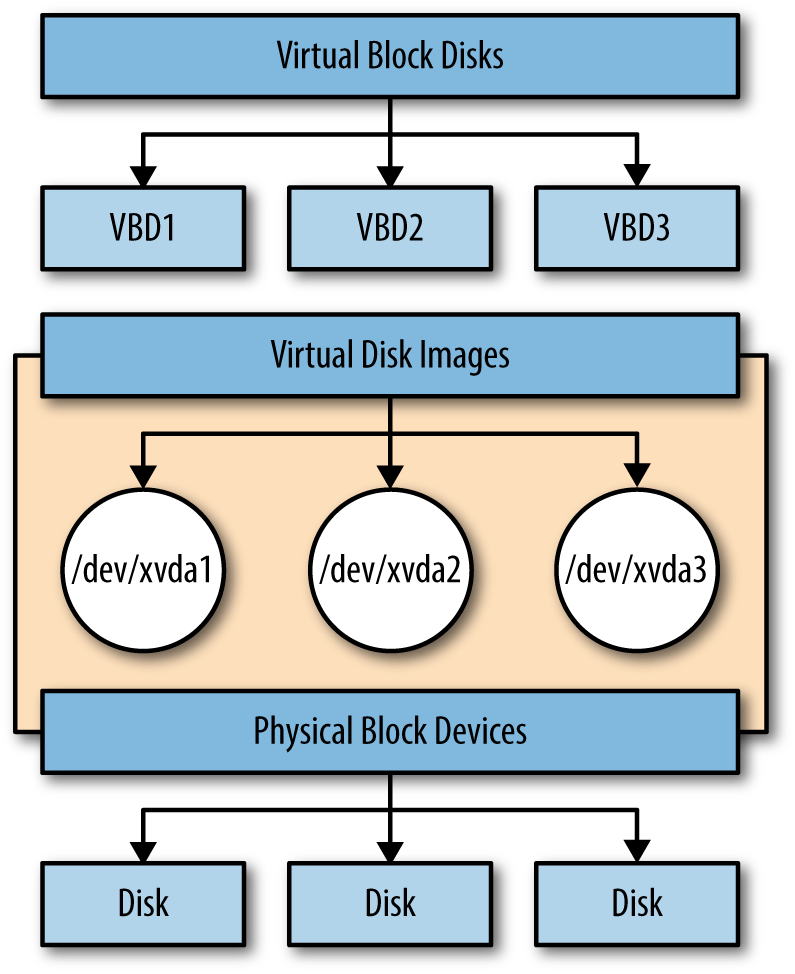

From an architecture perspective, storage will consist of two concepts represented by four distinct objects. Each object is uniquely identified and maintained within the XAPI database. The four objects are as follows:

- Storage repositories (SRs) are physical devices that will contain the virtual disks associated with a VM.

- Physical block devices (PBDs) map physical server storage to a storage repository.

- Virtual disk images (VDIs) are virtual hard drives that leverage a storage-management API, which keeps the disk type hidden from the VM while the hypervisor handles transactions accordingly.

- Virtual block devices (VBDs) map VDIs to virtual machines.

The relationship between these four objects is shown in Figure 1-4.
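The same relationships can be sketched as a small data model. The Python example below is an illustration under simplifying assumptions, not XAPI's actual schema: the class fields, the placeholder identifiers, and the helper `hosts_able_to_run` are all hypothetical. It shows how the four objects combine to answer a practical question, namely which hosts have a PBD for every SR holding one of a VM's disks.

```python
from dataclasses import dataclass


@dataclass
class SR:
    uuid: str
    name: str


@dataclass
class PBD:  # connects a host to an SR
    host: str
    sr_uuid: str


@dataclass
class VDI:  # a virtual disk stored in an SR
    uuid: str
    sr_uuid: str


@dataclass
class VBD:  # connects a VDI to a VM
    vm: str
    vdi_uuid: str


def hosts_able_to_run(vm, vbds, vdis, pbds):
    """Hosts with a PBD for every SR that holds one of the VM's disks."""
    vdi_by_uuid = {vdi.uuid: vdi for vdi in vdis}
    needed = {vdi_by_uuid[vbd.vdi_uuid].sr_uuid for vbd in vbds if vbd.vm == vm}
    hosts = {pbd.host for pbd in pbds}
    return sorted(
        host for host in hosts
        if needed <= {pbd.sr_uuid for pbd in pbds if pbd.host == host}
    )


# Placeholder data: one shared SR and one SR local to host-a.
srs = [SR("sr-shared", "Shared storage"), SR("sr-local", "Local storage")]
pbds = [PBD("host-a", "sr-shared"), PBD("host-a", "sr-local"),
        PBD("host-b", "sr-shared")]
vdis = [VDI("vdi-1", "sr-shared"), VDI("vdi-2", "sr-local")]
vbds = [VBD("vm1", "vdi-1"), VBD("vm2", "vdi-2")]

print(hosts_able_to_run("vm1", vbds, vdis, pbds))
print(hosts_able_to_run("vm2", vbds, vdis, pbds))
```

In this toy data, the VM whose disk lives on the shared SR can be placed on either host, while the VM backed by host-a's local SR is tied to that host.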