Bringing gaming to life with AI and deep learning

Machine learning opens the door for the use of training rather than programming in game development.

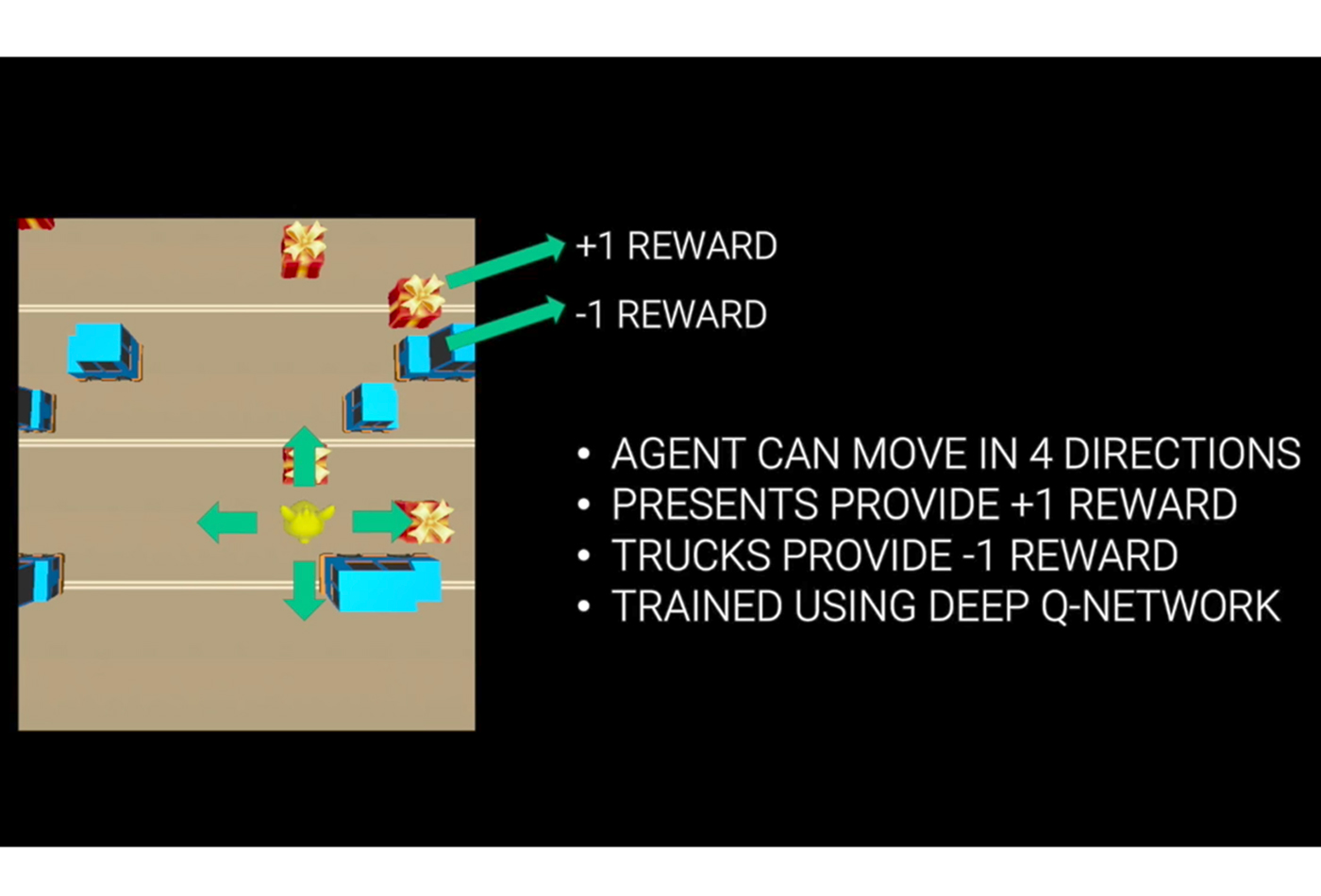

Screenshot from the Unity Reinforcement Learning Demo (source: Danny Lange, used with permission)

Game development is a complex and labor-intensive effort. Game environments, storylines, and character behaviors are carefully crafted, requiring graphics artists, storytellers, and software engineers to work in unison. Often, games end up with a delicate mix of hard-wired behavior in the form of traditional code and somewhat more responsive behavior in the form of large collections of rules. Over the last few years, data-intensive machine learning (ML) solutions have displaced many rule-based systems in the enterprise (think Amazon, Netflix, and Uber). At Unity, we have explored the use of some of these technologies, including deep learning in content creation and deep reinforcement learning in game development. We see great promise in the new wave of ML and AI.

To some data-driven large enterprises, ML is nothing new. When Netflix famously launched the Netflix Prize in 2006, an open competition for the best collaborative filtering algorithm to predict user ratings for films, it fired the opening shot in the wave of AI media coverage we experience today. But by the early 2000s, some large corporations had already dabbled with data-driven decision-making and ML to improve their businesses. Amazon had been hard at work on its own recommendation algorithms, trying to uncover user preferences and, in turn, convert those preferences into higher sales. Advertising technology was another early adopter, using ML to improve click-through rates (CTR). Over the years, ML technologies have matured and spread to many industries.

Recommendation algorithms, for instance, have evolved from simply recommending to probing for more information through a mixture of exploration and exploitation. The challenge is that when Amazon or Netflix use their recommendation systems to gather data, they get an incomplete view of user preferences if they only ever show users highly recommended items and never any of the many other items in their catalogs. The solution is a subtle shift from pure exploitation toward adding an element of exploration.
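To make the exploitation trap concrete, here is a minimal epsilon-greedy recommender in Python. The three-item catalog, its click probabilities, and the function names are hypothetical illustrations, not how Amazon or Netflix actually build their systems. With `epsilon=0.0` the recommender only ever shows the first item it happens to rank highest, so it never learns that another item is far more popular; a small `epsilon` buys that missing information.

```python
import random


def choose_item(estimates, epsilon, rng):
    """Epsilon-greedy: with probability epsilon show a random catalog item,
    otherwise show the item with the highest estimated reward."""
    if rng.random() < epsilon:
        return rng.randrange(len(estimates))  # explore
    # Exploit; ties go to the lowest index.
    return max(range(len(estimates)), key=lambda i: estimates[i])


def update(estimates, counts, item, reward):
    """Incrementally update the running mean reward of the item that was shown."""
    counts[item] += 1
    estimates[item] += (reward - estimates[item]) / counts[item]


def simulate(true_rates, epsilon, steps, seed=0):
    """Show items to simulated users whose click probabilities are true_rates."""
    rng = random.Random(seed)
    estimates = [0.0] * len(true_rates)
    counts = [0] * len(true_rates)
    for _ in range(steps):
        item = choose_item(estimates, epsilon, rng)
        reward = 1.0 if rng.random() < true_rates[item] else 0.0  # 1.0 = click
        update(estimates, counts, item, reward)
    return estimates, counts
```

For example, `simulate([0.05, 0.5, 0.2], epsilon=0.0, steps=500)[1]` returns `[500, 0, 0]`: the item that half of all users would click is never shown. Raising `epsilon` to 0.1 spreads some traffic across the whole catalog, which typically lets the best item take over.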

More recently, algorithms such as contextual bandits have gained popularity due to their inherent ability to both explore and exploit, and thus to learn what they do not yet know about their customers. Trust me, bandit algorithms are lurking behind many pages when you visit Amazon. We have written a piece on the power of contextual bandits on the Unity Blog; check it out for an interactive demo.
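A contextual bandit conditions each choice on side information (a context, such as the kind of device a visitor uses). The sketch below is one simple instance, assuming a small set of discrete contexts: it keeps separate UCB1 statistics (an estimated mean plus an exploration bonus that shrinks as an action is tried more) for each context. The contexts, click rates, and class name are hypothetical; production systems usually generalize across contexts with a model such as LinUCB rather than a lookup table.

```python
import math
import random
from collections import defaultdict


class TabularContextualUCB:
    """Contextual bandit that keeps separate UCB1 statistics for each discrete context."""

    def __init__(self, n_actions):
        self.n_actions = n_actions
        self.counts = defaultdict(lambda: [0] * n_actions)   # pulls per (context, action)
        self.means = defaultdict(lambda: [0.0] * n_actions)  # mean reward per (context, action)
        self.totals = defaultdict(int)                        # pulls per context

    def select(self, context):
        counts, means = self.counts[context], self.means[context]
        for action in range(self.n_actions):
            if counts[action] == 0:
                return action  # try every action once in each context
        t = self.totals[context]
        # Mean reward plus an exploration bonus that shrinks as an action is tried more often.
        scores = [means[a] + math.sqrt(2 * math.log(t) / counts[a]) for a in range(self.n_actions)]
        return max(range(self.n_actions), key=lambda a: scores[a])

    def update(self, context, action, reward):
        self.totals[context] += 1
        self.counts[context][action] += 1
        n = self.counts[context][action]
        self.means[context][action] += (reward - self.means[context][action]) / n


def simulate_contextual(click_rates, rounds, seed=0):
    """click_rates maps each context to the click probability of every action."""
    rng = random.Random(seed)
    contexts = list(click_rates)
    bandit = TabularContextualUCB(n_actions=len(click_rates[contexts[0]]))
    for _ in range(rounds):
        context = rng.choice(contexts)  # a visitor arrives with observable side information
        action = bandit.select(context)
        reward = 1.0 if rng.random() < click_rates[context][action] else 0.0
        bandit.update(context, action, reward)
    return bandit
```

With hypothetical rates such as `{"mobile": [0.6, 0.2], "desktop": [0.1, 0.5]}`, the bandit should end up favoring a different action in each context, which a single context-free estimate could not do.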

In early 2015, DeepMind took this idea a step further and published results from a system that combines deep neural networks with reinforcement learning at scale. The system learned to play a diverse range of Atari 2600 games, in many cases at a superhuman level, with only the raw pixels and score as inputs. The folks at DeepMind put the concept of exploration versus exploitation on steroids. While contextual bandits are shallow in their ability to learn behavior, deep reinforcement learning can learn the sequences of actions required to maximize a future cumulative reward. In other words, it can learn behavior optimized for long-term value (LTV). In several of the Atari games, LTV expressed itself in the form of strategy development normally reserved for human players. Watch this video of the Breakout game to see an example of strategy development:
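"Maximize a future cumulative reward" usually means maximizing the discounted return, G_t = r_t + γ·r_{t+1} + γ²·r_{t+2} + ..., where the discount factor γ (between 0 and 1) sets how much delayed rewards count. The hypothetical helper below computes G_t for every step of a reward sequence by working backwards with G_t = r_t + γ·G_{t+1}. The two reward streams are made up to show why an agent optimizing this quantity can prefer a strategy that pays off late.

```python
def discounted_returns(rewards, gamma=0.99):
    """Return G_t = r_t + gamma * G_{t+1} for every step t, computed backwards from the end."""
    returns = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        returns[t] = running
    return returns


# Two hypothetical reward streams over four steps.
late_payoff = discounted_returns([1, 0, 0, 10], gamma=0.9)
steady = discounted_returns([1, 1, 1, 1], gamma=0.9)
print(round(late_payoff[0], 3), round(steady[0], 3))  # 8.29 3.439
```

A learner that only looked one step ahead would see the same immediate reward (1) on both paths; the discounted return exposes that the late payoff is worth more than twice as much.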

At Unity, we asked ourselves what it would take for a chicken to learn to cross a very busy roadway without being killed by an oncoming truck while collecting gift packages. Using a generic reinforcement learning algorithm similar to the one in the DeepMind experiments, we gave the chicken a positive reward for picking up a gift package and a negative reward for being hit by a truck. We also gave the chicken four actions: move left, right, forward, and backward. With just the raw pixels and score as input, and these very simple instructions, the chicken achieved superhuman performance in just under six hours of training. Watch our video here:
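The reward design and action set can be illustrated with a deliberately tiny stand-in. The Unity demo used a deep network on raw pixels; this hypothetical sketch instead uses tabular Q-learning (the tabular ancestor of DeepMind's deep Q-network) on a grid with 4 columns and 3 rows: a near sidewalk, one road lane with a single truck that moves one cell per step and wraps around, and a far side where the gift package waits. The state is just (chicken column, chicken row, truck column), and the rewards follow the article: +1 for the gift, -1 for the truck. The grid, hyperparameters, and names are illustrative assumptions, not Unity's code, and the truck here is deterministic rather than random.

```python
import random

# Four actions, as in the article, as (dx, dy) offsets on the grid.
ACTIONS = {"left": (-1, 0), "right": (1, 0), "forward": (0, 1), "backward": (0, -1)}
WIDTH = 4     # columns
ROAD_ROW = 1  # row 0 is the near sidewalk, row 1 the road
GIFT_ROW = 2  # reaching row 2 collects the gift package


def step(state, action):
    """Move the chicken, then the truck; return (next_state, reward, done)."""
    x, y, truck_x = state
    dx, dy = ACTIONS[action]
    x = min(max(x + dx, 0), WIDTH - 1)  # the grid edges clamp the chicken in place
    y = min(max(y + dy, 0), GIFT_ROW)
    truck_x = (truck_x + 1) % WIDTH     # the truck drives one cell right and wraps around
    if y == GIFT_ROW:
        return (x, y, truck_x), 1.0, True   # positive reward: gift collected
    if y == ROAD_ROW and x == truck_x:
        return (x, y, truck_x), -1.0, True  # negative reward: hit by the truck
    return (x, y, truck_x), 0.0, False


def greedy(q, state, rng):
    """Pick the action with the highest Q-value, breaking ties at random."""
    values = [q.get((state, a), 0.0) for a in ACTIONS]
    best = max(values)
    return rng.choice([a for a, v in zip(ACTIONS, values) if v == best])


def train(episodes=5000, alpha=0.5, gamma=0.9, epsilon=0.2, max_steps=30, seed=0):
    """Tabular Q-learning with epsilon-greedy exploration."""
    rng = random.Random(seed)
    q = {}
    for _ in range(episodes):
        state = (0, 0, rng.randrange(WIDTH))  # chicken starts bottom-left; truck starts anywhere
        for _ in range(max_steps):
            if rng.random() < epsilon:
                action = rng.choice(list(ACTIONS))
            else:
                action = greedy(q, state, rng)
            nxt, reward, done = step(state, action)
            future = 0.0 if done else max(q.get((nxt, a), 0.0) for a in ACTIONS)
            old = q.get((state, action), 0.0)
            q[(state, action)] = old + alpha * (reward + gamma * future - old)
            state = nxt
            if done:
                break
    return q


def rollout(q, truck_x, max_steps=30, seed=1):
    """Run the learned greedy policy once; 1.0 means the gift was reached safely."""
    rng = random.Random(seed)
    state, total = (0, 0, truck_x), 0.0
    for _ in range(max_steps):
        state, reward, done = step(state, greedy(q, state, rng))
        total += reward
        if done:
            break
    return total
```

After `q = train()`, calling `rollout(q, truck_x)` for each starting truck position runs the learned policy. Reaching the gift safely sometimes requires waiting a step for the truck to pass, a behavior nobody coded explicitly; it emerges from the two rewards.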

So, how did we do this from a practical point of view? Actually, it was easy. A set of Python APIs allowed us to hook the Unity game up to a TensorFlow service running on Amazon Web Services (AWS). These APIs make it simple to read frames and internal state from a game and, conversely, to control the game using an ML model. TensorFlow is Google's framework for deep learning, first released in 2015. As you will notice from the video, early in the training process the chicken is primarily exploring, but as learning progresses, it gradually shifts to exploitation. An important capability of this learning system is that it successfully deals with never-before-seen situations. The combination of the trucks appearing and the placement of gift packages is entirely random, so even after hours of training, the chicken will statistically keep encountering scenarios it has never experienced before.
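The gradual shift from exploring to exploiting seen in the video is commonly produced by annealing the exploration rate. The article does not say which schedule Unity used, so the sketch below assumes a linear schedule of the kind DeepMind's DQN agent used (epsilon falling from 1.0 to 0.1, then held constant), paired with epsilon-greedy action selection. The function names and the one-million-step default are assumptions.

```python
import random


def linear_epsilon(step, start=1.0, end=0.1, decay_steps=1_000_000):
    """Exploration rate that falls linearly from start to end over decay_steps, then stays at end."""
    fraction = min(step / decay_steps, 1.0)
    return start + fraction * (end - start)


def choose_action(q_values, epsilon, rng):
    """Epsilon-greedy selection; returns (action_index, explored)."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values)), True  # explore: random action
    best = max(range(len(q_values)), key=lambda a: q_values[a])
    return best, False  # exploit: highest estimated value


# Early in training every action is exploratory; later only about 10% are.
for step in (0, 500_000, 2_000_000):
    print(step, round(linear_epsilon(step), 2))  # 0 1.0 / 500000 0.55 / 2000000 0.1
```

In a training loop, each call would look like `choose_action(q_values, linear_epsilon(step), rng)`, so the same code path explores heavily at first and exploits more as the value estimates improve.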

Now, let us reflect a bit on this chicken and its superhuman capabilities. The chicken serves as a reminder of how Amazon, Netflix, and Uber can use the same technologies to get better at serving their customers, whether that involves a frictionless Uber pickup experience or a Netflix show tailored to my particular taste. But it also opens the door for the use of training rather than programming in game development.

Just imagine training a non-player character (NPC) in a game rather than coding its behavior. The game developer would create a game scenario in which the NPC is trained using cloud-based reinforcement learning, connected via the aforementioned Python APIs. The scenario can be entirely synthetic, or it can involve a group of human players from whom the NPC learns. When the NPC performs satisfactorily, another set of Unity APIs allows the developer to embed the TensorFlow model directly in the game, eliminating the need for the game to remain connected to the TensorFlow cloud service.
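The article does not show the Unity APIs for embedding TensorFlow models, so this sketch does not use them. Instead, as a framework-free illustration of the train-remotely, run-locally split, it assumes training has produced the parameters of a tiny linear policy (a stand-in for a neural network). Those parameters are exported as JSON and loaded by an `EmbeddedLinearPolicy` that picks actions inside the game with no connection to a training service. All names and the JSON layout are hypothetical.

```python
import json
from typing import List, Sequence


def export_policy(weights: List[List[float]], bias: List[float]) -> str:
    """Serialize trained parameters so they can ship with the game build."""
    return json.dumps({"weights": weights, "bias": bias})


class EmbeddedLinearPolicy:
    """Runs a trained linear policy inside the game: action = argmax(W @ obs + b).
    Inference needs only the exported parameters, not the training service."""

    def __init__(self, weights: List[List[float]], bias: List[float]):
        self.weights = weights
        self.bias = bias

    @classmethod
    def from_json(cls, text: str) -> "EmbeddedLinearPolicy":
        data = json.loads(text)
        return cls(data["weights"], data["bias"])

    def act(self, observation: Sequence[float]) -> int:
        # One score per action: that action's weight row dotted with the observation, plus bias.
        scores = [sum(w * o for w, o in zip(row, observation)) + b
                  for row, b in zip(self.weights, self.bias)]
        return max(range(len(scores)), key=lambda a: scores[a])


# Hypothetical parameters a cloud training job might have produced (2 actions, 2 features).
artifact = export_policy([[1.0, -0.5], [-0.5, 1.0]], [0.0, 0.1])
npc = EmbeddedLinearPolicy.from_json(artifact)
```

In a real pipeline the shipped artifact would be a trained TensorFlow model rather than a small weight matrix, but the workflow has the same shape: train remotely, ship the parameters, and run inference in the game loop.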

Some game developers may say they have “been there and done that,” having last tried ML in gaming 10 to 15 years ago. But that was a different day and age. Though expressive recurrent neural networks (RNNs) such as long short-term memory (LSTM) networks for sequence learning and convolutional neural networks (CNNs) for spatial feature learning had already been invented, the lack of computing power, combined with the lack of scalable and sophisticated software frameworks, prevented their successful application in a practical and demanding domain such as game development.

While the use of deep reinforcement learning in game development is still in its early stages, it has become clear to us that it is potentially a disruptive game technology, as it has proven to be in some large enterprises. Mature, scalable ML frameworks such as TensorFlow running in the cloud, paired with integration APIs, are lowering the barrier to entry for game developers and ML researchers. Just as ML is moving to every corner of the enterprise, you should also expect to find ML in every corner of your next game.