Evolving infrastructures of the industrial IoT

The rise of smart machines in the new Internet economy.

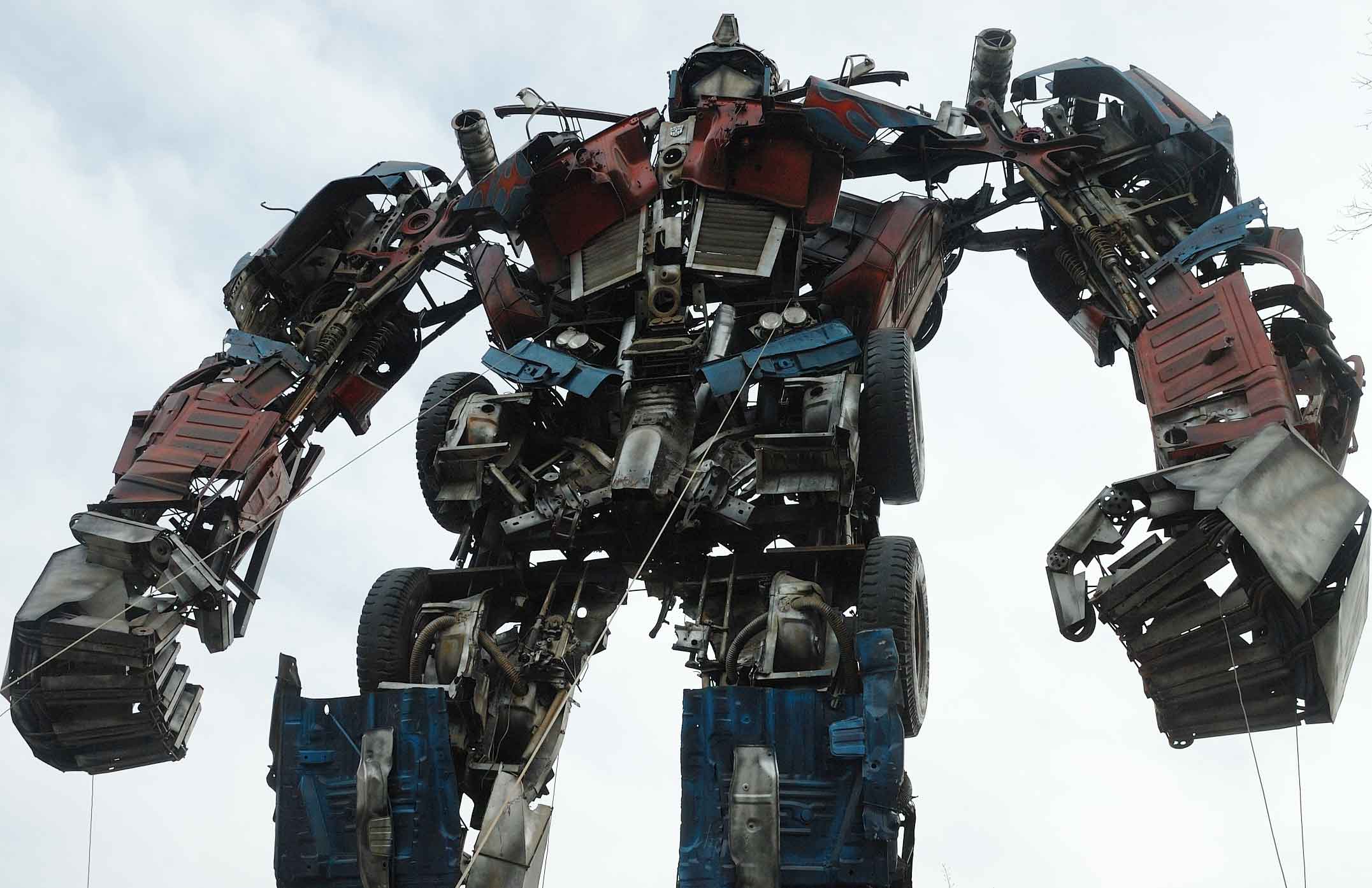

Robot sculpture (source: O'Reilly)

Imagine two worlds. In one world, people communicate by using abstract mathematical symbols. In the other world, people communicate by punching each other in the arm. Now imagine people from those two worlds meeting up and trying to communicate.

A similar kind of meet-up is occurring today, as the information technology (IT) world attempts to merge with the operational technology (OT) world. The goal of that merger is the Industrial Internet, variously known as the Industrial Internet of Things, Internet 4.0, Internet +, and other monikers.

Arranging a marriage between the Internet and traditional industry isn’t like slapping together a peanut butter and jelly sandwich. It’s more like trying to engineer a new and highly complex life form, using pre-existing parts that weren’t designed to work together.

Computers—of course!—are at the heart of the problem. We use symbols to communicate with our computers, but we use levers, switches, and knobs to communicate with our machines. We often communicate with our computational resources from far away, but we tend to be standing right next to our machines and devices when we flip the switch to turn them on.

Computers don’t care where we are, but machines typically require our physical presence. As a result, we almost never encounter latency issues with our machines. I turn a knob on the stove and it begins heating up. I touch the switch on the air conditioner and the room gets cooler. I push down on the accelerator pedal and the car goes faster. There are no intermediary networks between me and the machine.

Computers and machines are like cats and dogs—they just aren’t natural partners. The easy solution would be creating machines that have the capabilities of computers. But that would require scrapping millions of perfectly good machines or spending tons of money retrofitting them with chips and microprocessors.

Challenges of Merging Industry and the Internet

Combining the power and potential of the Internet with the world’s industrial platforms seems like a great idea. But there are fundamental challenges that resist easy solutions.

It’s important to remember that the Internet was a serendipitous one-of-a-kind event in human history. It might as well have dropped out of the sky, like Athena emerging full-grown from the head of Zeus. It was a largely unintended gift, wrapped up and delivered to us by an odd set of parents: Cold War paranoia coupled with the rise of computer networks at research facilities and universities across the United States. The Pentagon needed a communications framework that would survive a thermonuclear conflict; the researchers simply wanted to exchange messages between their computer networks.

That original Internet, increasingly referred to as the “consumer Internet,” was engineered primarily to convey messages. Twenty years ago, the messages we sent contained mostly text; today we’re sharing everything from classical music to cat videos, along with detailed blueprints for skyscrapers, live images from various space missions, and the latest episodes of “Silicon Valley” on HBO.

The Industrial Internet: A System of Systems

In the old days, we sometimes referred to the Internet as a “network of networks.” The Industrial Internet is more like a “system of systems.” The original Internet had relatively few moving parts. The Industrial Internet will have trillions of moving parts. Some of those parts will be moving slowly and predictably. Many of those parts will be spinning, tumbling, gyrating, flying, pumping, tunneling, and drilling.

“This is not a deterministic system,” says Joseph Salvo, founder and director of the Industrial Internet Consortium and director at GE Global Research. “We’ll need resiliency and the capabilities for reacting quickly because it will be harder to predict exactly what’s going to happen.”

Largely because of its origins as a government-funded science project, the consumer Internet and its various parts are held together by a kind of consensual centripetal force. No similar force binds the innumerable components of the Industrial Internet. Unlike the Internet we’ve grown accustomed to using, the Industrial Internet is the child of commercial interests.

“In a technical sense, the Internet is intrinsically convergent,” says Chris Greer, director of the Smart Grid and Cyber-Physical Systems Program Office, and national coordinator for Smart Grid Interoperability at the National Institute of Standards and Technology (NIST). “It’s focused on exchanging bits over networks between computers. That single focus is a driver for convergence.”

Spinning Toward Divergence

In sharp contrast, the Industrial Internet is intrinsically divergent. “It’s not arising from a common technology goal, but from many different commercial goals, such as advanced manufacturing, smart power grids and intelligent transportation,” Greer says. “The challenge is finding the right forces and levers for promoting a convergent and interoperable Industrial Internet. It’s a hard problem.”

It’s also an important problem. The Industrial Internet isn’t just another tech fad. It’s the beginning of a new chapter in human history. Will the Industrial Internet advance the dream of universal prosperity, or will it quickly mutate into a chaotic and dangerous “Internet of unsafe things,” as some are already calling it?

Taming the Industrial Frontier

In the absence of a natural binding force, a rough draft of rules and principles is gradually emerging. In 2014, several companies with deep roots in the digital and physical economies launched the Industrial Internet Consortium (IIC). In 2015, the IIC published the Industrial Internet Reference Architecture, a lengthy document that describes and defines the various systems and frameworks necessary to sustain a viable Industrial Internet.

The document is long, but worth reading. Here’s why: It’s not merely a laundry list of technical specifications; it also contains a rudimentary social contract with a built-in moral compass—something the original Internet still lacks. Reading the document feels like reading an imaginary but highly detailed first draft of Asimov’s Three Laws of Robotics.

Clearly, the authors of the reference architecture are aware of the risks posed by a fully operational Industrial Internet spanning oceans and continents. The authors note the inherent difficulties of combining two domains (IT and OT) with different purposes, standards, disciplines, and ontologies. They even mention the “symbol-grounding problem,” alluded to earlier in this report, which comes into play when we try to use abstract symbols and computational models to operate machines that are normally operated by physical means.

Safety, Security, and Resilience: Industrial IoT Brings New Concerns

The reference architecture lists basic expectations (e.g., privacy, reliability, usability, and scalability) and acknowledges the complex and evolutionary nature of the new system (large scale, multi-vendor, multi-national, public-private). It also says the system must have “end-to-end characteristics” and “emergent properties.” The three key characteristics are:

- Safety

- Security

- Resilience

Those three words—safety, security, and resilience—rarely surface in casual conversations about software development. On the other hand, they are commonly heard in conversations about construction, manufacturing, energy, pharmaceuticals, healthcare, transportation, law enforcement, military, and government.

The reason is simple: People are rarely physically injured or killed by software. It’s a different story with hardware, which is why we wear hardhats while touring factories and construction sites.

Safety, according to the authors, is when the system operates “without causing unacceptable risk of physical injury or damage to the health of people, either directly, or indirectly…”

Security is when the system operates “without allowing unintended or unauthorized access, change or destruction of the system or the data and information it encompasses.”

Resilience refers to the system’s ability to “avoid, absorb, and/or manage dynamic adversarial conditions…and to reconstitute operational capabilities after casualties.”

Together, those key characteristics signal clear intentions: Let’s try not to harm anyone. Let’s make sure that bad people can’t take over the system. Let’s make sure that when the system crashes, we can bring it up again quickly.

The document is a great starting point, and definitely feels like an improvement over the amorality of the original Internet. But will it prevent us from winding up like the Krell? In the 1956 science-fiction classic “Forbidden Planet,” the Krell create a perfect global manufacturing system that kills them when they turn it on.

Integration and Interoperability

Nobody wants a replay of the anarchic Wild West. But too many rules and regulations can stifle innovation. How much “law and order” is too much? Which are more likely to produce better outcomes, a top-down set of strictures or an organically grown body of commonly accepted behaviors?

The main difference between the Industrial Internet and the consumer Internet can’t be reduced to a simple case of “law and order vs. the Wild West.” The real difference is both substantive and strategic, and it raises a critical question: If you’re building a global digital system to run billions of machines, should the system emerge from careful planning or haphazard serendipity?

For Greer and his colleagues at NIST, the answer is careful planning. The Industrial Internet is a network of cyber-physical systems and applications that meet four basic criteria:

- Integrated

- Co-designed

- Adaptive

- Predictive

“It’s truly an integration of cyber and physical systems, not just bolting IT onto a physical system,” Greer says. “The systems aren’t accidental hybrids, but intentionally co-designed to integrate their cyber and physical components. Taken as a whole, the systems are adaptive and predictive to optimize function.”

Interoperability is crucial, since it encourages the development of horizontal systems over domain-specific vertical systems. “We’re interested in a globally interoperable system, not something that’s specific to any one domain,” Greer says. Horizontal platforms invite collaboration and knowledge sharing; vertical platforms generally veer toward secrecy and strict control of intellectual property.

Salvo and his colleagues at the IIC favor the development of horizontal systems built on platforms that are “secure, open, and standards-based,” such as IBM’s Bluemix, Microsoft’s Azure, and GE’s Predix.

Despite the presence of corporate sponsors such as AT&T, Cisco, IBM, GE, and Intel, meetings of the IIC feel less like buttoned-down business conferences and more like Grateful Dead concerts. There’s a strong sense of adventure and wide-eyed enthusiasm, enlivened by an interesting blend of demographics and disciplines.

The boldest strides so far have been made by players in the transportation and energy sectors. GE, for example, attributes huge reductions in fuel costs to its focus on gathering in-flight data from sensors on jet engines, analyzing the data in real time, and generating insight that can be used to optimize engine performance and minimize unplanned downtime. The company uses similar techniques to trim costs and improve energy production at wind farms and power plants in Asia and the Middle East.

Smart Cities Blaze a New Trail

Some of the most exciting examples of successful cyber-physical marriages have come from the Global City Teams Challenge (GCTC), a network of “action clusters” that apply networked technologies to create smarter cities and communities. GCTC is a partnership of NIST, US Ignite, the Department of Transportation (DoT), the National Science Foundation (NSF), the International Trade Administration (ITA), the Department of Health and Human Services (HHS), and the Department of Energy (DoE).

Smart cities and smart communities make great test beds for a variety of reasons, including real needs (everything from disaster management to improved parking), critical mass (lots of people, businesses, and existing infrastructure), and common goals (providing public services such as health, safety, education, mass transit, and sanitation).

“When we held the challenge in 2014, we had more than four dozen cities and communities participating,” Greer says. “We had cities large and small from across the U.S. and cities from the U.K., Spain, Italy, Israel, and Indonesia. It really demonstrated the interest and momentum of the smart city movement.”

GCTC projects included a system for automated detection, triage, and treatment of severe contagious disease outbreaks; a storm-resilient modular micro-grid; a next-generation emergency response dispatch system; sensor networks deployed on buildings to monitor allergens; and a system that lets citizens help track multimodal transportation patterns in order to develop green alternatives.

Greer sees the smart city movement as a driver for convergence, sort of a counter-force to purely commercial interests that would tend to produce closed-off, proprietary systems based primarily on the needs of separate industry verticals.

“Smart cities are a positive force for progress,” Greer says, noting differences between the ways smart city projects evolve in the U.S. and elsewhere. “In Europe and Asia, they tend to use top-down approaches, while in the U.S., we rely much more on a bottom-up, commerce-driven approach.”

Another force driving the Industrial Internet toward convergence is manufacturing, which accounts for 12% of the U.S. GDP, or roughly $2.09 trillion, according to the National Association of Manufacturers. Each dollar spent on manufacturing adds $1.37 to the U.S. economy, putting it ahead of other economic sectors in terms of its multiplier effect.

Manufacturing also generates more data than any other sector in the economy—but currently uses less than 10% of it, says William King, Chief Technology Officer at the Digital Manufacturing and Design Innovation Institute (DMDII) at UI LABS in Chicago, IL, where he directs a $100M portfolio of technology investments. King is a professor of engineering at the University of Illinois Urbana-Champaign, and an outspoken proponent of data-driven manufacturing.

“Data flows across the lifecycle of every product, and yet more than 90% of that data is thrown away,” says King. “There’s an enormous gap between what people actually use and the size of the opportunity.”

The DMDII and GE Global Research have partnered to create the Digital Manufacturing Commons (DMC), an open source community for sharing data, analytical models, simulations, and knowledge. “It’s a collaborative platform and marketplace for ideas,” King says. Ideally, the DMC will also serve as a virtual bridge between large manufacturers with deep pockets and thousands of smaller firms whose existence is essential to the vitality of the 21st century global manufacturing ecosystem.

The continuous interplay between large, small, and medium-size companies is critical to progress in the manufacturing sector. “The big companies have the resources to pursue new ideas, but many of those new ideas come from smaller companies,” King says. “There are all kinds of great opportunities out there.” The smaller companies are often more innovative, and the larger companies have the deep resources necessary for bringing new products and services to market.

Engendering a Collaborative Mindset

There is more than mere idealism at work here as players in the Industrial Internet attempt to draft basic guidelines and outline practical frameworks. The lack of a collaborative manufacturing ecosystem creates costs and reduces efficiency. The prime example is NASA, which operates spacecraft with life spans that are measured in decades. Many of the original parts in those spacecraft were invented and manufactured by small companies that no longer exist. When NASA needs a replacement part for an older spacecraft, it needs to design and manufacture the part from scratch.

King and his colleagues are aiming to avoid those kinds of scenarios as digital manufacturing evolves into a larger part of the global economy. “We’re seeing massive digitization of industry—not just in manufacturing, but also in energy and transportation. Companies need to become more information-savvy. Today, companies make products. Tomorrow, they will make products and sell data about those products,” King says.

The shift from purely physical manufacturing to cyber-physical manufacturing will open doors for millions of entrepreneurs and start-ups all over the world. It will also create serious economic threats and challenges for longstanding incumbents. “The barriers to entry are much lower for companies offering digital services than for companies manufacturing widgets,” says King.

Addressing Connectivity

Some believe the Industrial Internet will evolve quickly into the default “nervous system” of the growing cyber-physical economy. If that happens, are the world’s communication networks capable of supporting such a system?

Let’s look at the Internet of Things (IoT), which is the Industrial Internet’s consumer-centric cousin. The IoT is expected to connect 50 billion devices by 2020. That’s a large number of devices, but it pales in comparison to the predicted trillions of machines, parts, and sub-assemblies that will be part of the Industrial Internet.

“At a minimum, the IoT will probably double the amount of data on networks,” says Benjamin Beckmann, lead scientist in the Complex Systems Engineering Lab at GE Global Research. “But with the Industrial Internet, we foresee data volume increasing by 1,000X in the not too distant future. That will require us to rethink how we transfer data and how we do computation.”

If we continue pumping data into existing networks, we face the possibility of hitting the Shannon Limit. Named for information theorist Claude Shannon, the Shannon Limit is the theoretical maximum rate at which information can be sent reliably over a channel with a given bandwidth and noise level; push past that rate and errors become unavoidable. Some experts estimate we could hit the Shannon Limit for fiber-optical cable next year; others see us hitting the wall in 2021. Whichever estimate proves right, there’s no question that radically higher levels of data will challenge the capabilities of networks and their operators.
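The Shannon Limit is commonly expressed through the Shannon-Hartley formula, C = B × log2(1 + S/N), where C is capacity in bits per second, B is bandwidth in hertz, and S/N is the signal-to-noise ratio. The sketch below is only an illustration of that formula: the 50 GHz bandwidth and 20 dB signal-to-noise figures are assumed values chosen for the example, not measurements of any real fiber link, and the function names are hypothetical.

```python
import math


def db_to_linear(snr_db: float) -> float:
    """Convert a signal-to-noise ratio from decibels to a plain ratio."""
    return 10.0 ** (snr_db / 10.0)


def shannon_capacity_bps(bandwidth_hz: float, snr_linear: float) -> float:
    """Shannon-Hartley capacity, in bits per second, of a noisy channel."""
    if bandwidth_hz <= 0 or snr_linear < 0:
        raise ValueError("bandwidth must be positive and SNR non-negative")
    return bandwidth_hz * math.log2(1.0 + snr_linear)


# Assumed, illustrative channel: 50 GHz of bandwidth at 20 dB SNR.
capacity = shannon_capacity_bps(50e9, db_to_linear(20.0))
print(f"Capacity: {capacity / 1e9:.1f} Gbit/s")

# Doubling bandwidth doubles capacity, while doubling signal power adds
# only about one extra bit per second per hertz at high SNR.
print(shannon_capacity_bps(100e9, 100.0) / shannon_capacity_bps(50e9, 100.0))
print(math.log2(1 + 200.0) - math.log2(1 + 100.0))
```

The logarithm is why simply turning up the signal power yields diminishing returns, and why operators look to other dimensions, such as more bandwidth or more parallel paths, to add capacity.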

For example, there are only five “knobs” you can twist to push more information through a fiber-optical network, says Beckmann.

- Change the physical spacing of the fibers.

- Polarize the light going through the fibers.

- Increase the frequencies.

- Change the timing of the light pulses.

- Move the signal around in space by shifting its phase or amplitude.

After you’ve exhausted those five possibilities, the only choice left is building more fiber-optical infrastructure or moving to another medium. (For a more detailed discussion about scaling optical fiber networks, please read Peter J. Winzer’s excellent article in the March 2015 issue of Optics & Photonics News.)

Fortunately, the Industrial Internet will rely on a mix of media and supporting technologies for connectivity, including wired (coaxial, fiber-optical, Ethernet), wireless (Wi-Fi, Bluetooth, cellular), and satellite. Parsing connectivity into three broad categories makes the problem look simple, but it isn’t. Within the wireless category, for example, are WPAN (wireless personal area network), WLAN (wireless local area network), and WWAN (wireless wide area network), each of which contains several sub-categories, such as ANT+, Bluetooth Smart, ZigBee, Z-Wave, 802.11 a/b/g, LTE, 4G, and the greatly anticipated 5G. It’s a veritable alphabet soup of protocols and standards, with varying degrees of compatibility.

For established network connectivity providers like AT&T, sorting through tangles of competing and conflicting technologies goes with the territory. “The role of the network provider is ensuring that connections are secure, global, and multi-network,” says Mobeen Khan, executive director, advanced mobility solutions at AT&T Business Solutions. “That’s what we do, day in and day out.”

The machines and devices themselves are more likely to cause problems than the networks, he says. “The real challenge is making sure that industrial assets are connected, addressable, and can be updated, just like software. The assets should be network-ready from day one.” Ideally, says Khan, network providers collect, organize, and present data to their customers. The complex processes going on behind the scenes should be largely invisible.

“When we talk to customers about connectivity, we don’t just talk about cellular connectivity. If you’re connecting x-ray machines in a hospital, maybe the best way is to connect through Ethernet or Wi-Fi,” he explains. “We provide connectivity in a multi-network environment, and a single interface into multiple types of connectivity.”

At the edges of a network, most connectivity will be handled by wireless technologies. As you get closer to the “heart” of a network, connectivity will be handled by a mix of technologies. As always, timing will be everything. For non-critical devices, latencies measured in minutes or even hours will be acceptable. For critical machinery operating in hazardous conditions, latency will be measured in fractions of a second.

“Control over real-time processes can be tricky,” says Zoran Kostic, an associate professor of electrical engineering at Columbia University and a former research scientist at Bell Labs. “Today, our faster response times are on the order of several hundred milliseconds. But one millisecond is the magic number we’re striving toward. The problem is the speed of light. If I want to send a command to a machine and have it respond in one millisecond, it can’t be more than 150 kilometers away.”
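The 150-kilometer figure follows from simple arithmetic: in one millisecond, light in a vacuum travels roughly 300 kilometers, and a command plus its response must cover the distance twice. The sketch below works through that calculation. It assumes zero processing and switching delay, and the two-thirds-of-c figure for light in optical fiber is a common rule of thumb used here as an assumption, not a number from the quote.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458  # speed of light in a vacuum


def max_distance_km(round_trip_s: float, velocity_fraction: float = 1.0) -> float:
    """Farthest a machine can be if a command and its reply must fit
    within round_trip_s, ignoring all processing and queuing delays."""
    one_way_s = round_trip_s / 2.0
    return SPEED_OF_LIGHT_KM_PER_S * velocity_fraction * one_way_s


# One-millisecond round trip at the vacuum speed of light: just under 150 km.
print(f"{max_distance_km(0.001):.1f} km")

# Same budget in optical fiber, assuming signals travel at about 2/3 of c.
print(f"{max_distance_km(0.001, 2.0 / 3.0):.1f} km")
```

In practice the usable distance is shorter still, because routers, switches, and the machine itself all consume part of the millisecond.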

Kostic sees “ad hoc” networks as part of the solution. Let’s say you go to a conference and there’s no Wi-Fi. If everyone at the conference agreed to reconfigure the Wi-Fi modules on their laptops and tablets, they could create an ad hoc point-to-point network of conference attendees.

For the Industrial Internet, a more likely solution will be embedding more intelligence in machines or devices so they can make decisions on their own, without going on a network. Salvo sees this trend leading to another trend, the emergence of self-aware networks and groups of devices. “This is the end of the Information Age and the beginning of an international revolution,” says Salvo. From his point of view, the Industrial Internet is a live demonstration of Metcalfe’s law, which claims “the value of a telecommunications network is proportional to the square of the number of connected users of the system (n²).”
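To see what a square law implies, consider the small sketch below. The proportionality constant `k` is a hypothetical placeholder (Metcalfe’s law says nothing about its size), and the pairwise-link count is included only to show where the n² intuition comes from: every device can, in principle, talk to every other device.

```python
def metcalfe_value(n: int, k: float = 1.0) -> float:
    """Network value under Metcalfe's law: proportional to n squared.
    k is an arbitrary, assumed scaling constant."""
    return k * n * n


def pairwise_links(n: int) -> int:
    """Number of distinct device-to-device connections among n devices."""
    return n * (n - 1) // 2


for devices in (10, 20, 40):
    print(devices, metcalfe_value(devices), pairwise_links(devices))

# Doubling the number of connected devices quadruples the modeled value.
print(metcalfe_value(20) / metcalfe_value(10))
```

Under this model, growth in the number of connected machines matters far more than linear intuition suggests, which is the point Salvo is making.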

VHS, Betamax, or Netflix?

It’s safe to say that achieving seamless interoperability across a truly global Industrial Internet will require networks composed of multiple heterogeneous technologies and standards for sharing information efficiently. In the past, the inability to agree on standards constrained or slowed the growth of several industries, including broadcast television, which spent years arguing over the best way to transmit color images. In the late 1970s and early 1980s, VHS went toe-to-toe with Betamax for domination of the home videocassette recorder (VCR) market. VHS, which was considered the inferior standard, won the battle. But VHS was eventually eclipsed by DVD technology, which is rapidly losing ground to streaming video today.

When VCRs were introduced, the movie studios lobbied heavily for laws that would have severely curtailed their penetration into consumer markets. Today, it seems laughable that Hollywood was so easily frightened. As it turned out, VCRs generated hundreds of millions of dollars in additional revenue for the studios and created valuable new markets for Hollywood’s products.

Even though the studio moguls were proven wrong in the final analysis, you have to give credit to Hollywood for making its case. Who is making the case for—or against—the Industrial Internet? If the Industrial Internet has the potential its supporters claim it has, shouldn’t the public be clamoring for their governments and elected officials to fund more projects and support more experiments to determine the best ways to make it work?

If the Industrial Internet is destined to become a positive force for social good and economic growth, why aren’t more people talking about it and getting involved?