December 2019

Intermediate to advanced

468 pages

14h 28m

English

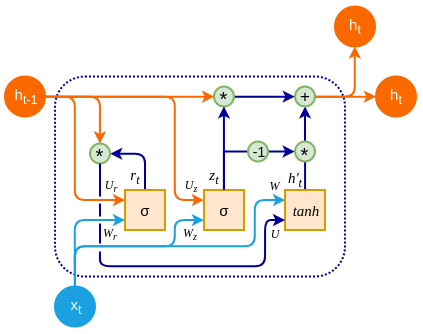

A Gated Recurrent Unit (GRU) is a type of recurrent block that was introduced in 2014 ("Learning Phrase Representations using RNN Encoder-Decoder for Statistical Machine Translation", https://arxiv.org/abs/1406.1078, and "Empirical Evaluation of Gated Recurrent Neural Networks on Sequence Modeling", https://arxiv.org/abs/1412.3555) as an improvement over LSTM. A GRU usually performs on par with or better than an LSTM, and it does so with fewer parameters and operations.
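To make the parameter saving concrete, here is a minimal sketch that counts the trainable parameters of a single recurrent layer. It assumes the standard formulations, where an LSTM has four weight blocks (input, forget, and output gates plus the candidate cell state) and a GRU has three (update and reset gates plus the candidate state), each with one bias vector. The function names and the layer sizes are illustrative, and some frameworks keep extra bias vectors, so their reported counts can differ slightly.

```python
def block_param_count(input_size: int, hidden_size: int) -> int:
    """Parameters of one gate/candidate block: input weights, recurrent weights, bias."""
    return hidden_size * (input_size + hidden_size) + hidden_size


def lstm_param_count(input_size: int, hidden_size: int) -> int:
    """LSTM layer: 3 gates + 1 candidate cell state = 4 blocks."""
    return 4 * block_param_count(input_size, hidden_size)


def gru_param_count(input_size: int, hidden_size: int) -> int:
    """GRU layer: 2 gates + 1 candidate hidden state = 3 blocks."""
    return 3 * block_param_count(input_size, hidden_size)


if __name__ == "__main__":
    # Illustrative sizes, not taken from the text
    n_in, n_hidden = 128, 256
    lstm = lstm_param_count(n_in, n_hidden)
    gru = gru_param_count(n_in, n_hidden)
    print(f"LSTM parameters: {lstm}")
    print(f"GRU parameters:  {gru}")
    print(f"GRU / LSTM ratio: {gru / lstm:.2f}")
```

Under these assumptions, a GRU layer needs three quarters of the parameters of an LSTM layer with the same input and hidden sizes, regardless of the sizes chosen.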

Similar to the classic RNN, a GRU cell has a single hidden state, h_t. You can think of it as a combination of the hidden and ...
