Chapter 7. The Simplest Way to Keep Artificial Intelligence Ethical

I wrote an AI application designed to discover cohorts for patients. If you are diagnosed with an illness (especially a chronic illness), one of the best things you can do to improve your long-term health is to actively manage your illness with other patients in a similar situation. Find people like you with a similar diagnosis and support each other. For patients who opt in to such a program, AI can automatically find patient cohorts and help those in similar situations find one another.

When I ran an early version of the model to find suitable partners for patients and checked the results, I saw a huge red flag: the algorithm segregated people mostly by race and gender. Using AI forensics, we caught the problem early and adjusted the algorithm’s structure and training to prevent racial and gender bias.
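To make the idea of checking the results concrete, here is a minimal sketch of one such check. It is not the forensics tooling described in this chapter. It assumes cohort assignments and a protected attribute (such as race or gender) are available as two parallel lists, and it compares each cohort's demographic mix with the overall population. The function names `cohort_skew` and `flag_segregated` and the 0.3 threshold are hypothetical choices made for illustration.

```python
from collections import Counter, defaultdict


def cohort_skew(assignments, attribute):
    """Per cohort, return the largest gap between a group's share
    inside the cohort and that group's share in the whole population.

    `assignments` and `attribute` are parallel lists (hypothetical layout):
    assignments[i] is the cohort of patient i, attribute[i] is that
    patient's value for one protected attribute.
    """
    overall = Counter(attribute)
    total = len(attribute)

    # Count how many members of each group landed in each cohort.
    by_cohort = defaultdict(Counter)
    for cohort, group in zip(assignments, attribute):
        by_cohort[cohort][group] += 1

    skew = {}
    for cohort, counts in by_cohort.items():
        size = sum(counts.values())
        # Counter returns 0 for groups absent from this cohort.
        skew[cohort] = max(
            abs(counts[g] / size - overall[g] / total) for g in overall
        )
    return skew


def flag_segregated(assignments, attribute, threshold=0.3):
    """Return the cohorts whose skew exceeds `threshold`.

    The threshold is an illustrative assumption, not a recommended value.
    """
    skew = cohort_skew(assignments, attribute)
    return sorted(c for c, s in skew.items() if s > threshold)
```

Calling `flag_segregated(cohorts, gender)` on the output of an early model version would list the cohorts whose makeup departs sharply from the population as a whole. A flagged cohort is a prompt for human review rather than proof of bias, since some conditions are legitimately more common in one group than another.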

Know Thine AI: Create a Profile of the AI Based on Its Recent Behavior

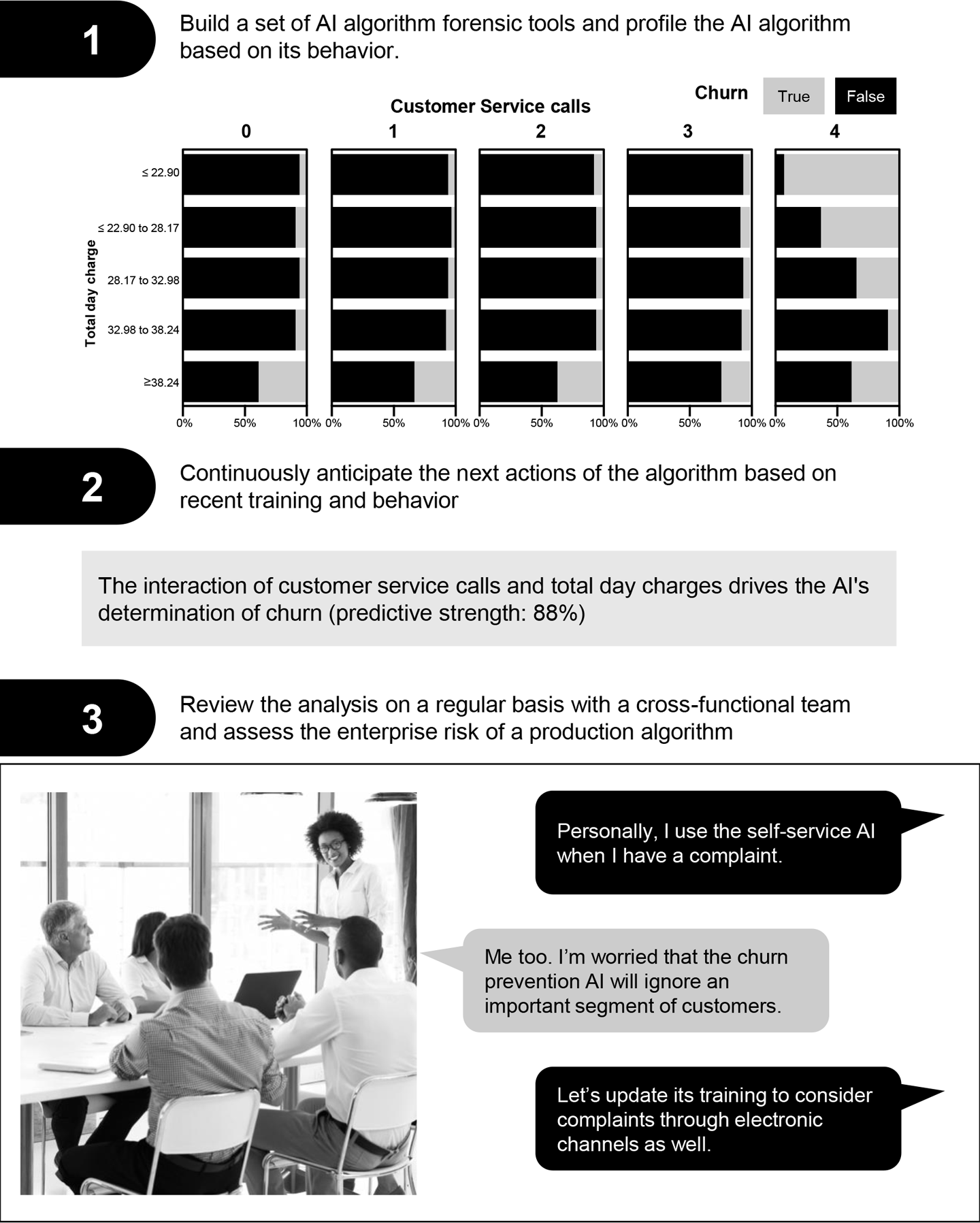

Bias, ethics, and fairness are major risk factors in AI. The simplest way to protect against these risks is to check the results. Part of a standard checklist for protecting against ethical violations with AI is to build AI forensics tools, use those tools to profile the algorithm, use the profile to anticipate behavior, and discuss the anticipated behavior with a diverse risk mitigation team (see Figure 7-1).

Figure 7-1. Performing AI ...
