So far in this book you have created your new instances and installed the necessary software on them by hand. There’s nothing wrong with that, and it’s probably the way you’ll wind up configuring your virtual machines as you go on. That said, there is another—and potentially easier—way to accomplish the same thing. You can actually import precreated instances of your software straight into your VPC using the Amazon EC2 API Command Line Tools.

Over the last several years, virtual machines have been growing in popularity as a way to quickly create and scale infrastructure resources. In fact, each of the EC2 instances you’ve been creating in this book is actually a virtual machine hosted in Amazon’s cloud. The two most common formats for virtual machines are the Virtual Machine Disk format (VMDK, created by VMware) and the Virtual Hard Disk (VHD) format, an invention of Microsoft. Each format has gained a significant following, and many vendors now package preconfigured versions of their software in one or both of these formats.

The advantage of using one of these preconfigured images is that you can directly import them into the Amazon EC2 infrastructure as a fully ready instance. You can even import them into your existing VPC—if you know the magic words, that is! The way to accomplish these feats of IT magic is with the Amazon EC2 API Command Line Tools.

Amazon is a very developer-friendly entity. For just about every service it offers, it also offers some kind of SDK or other developer tool, and the AWS services are no exception. For the purpose of automating common AWS functions (like creating VPCs, starting and stopping an EC2 instance, or just about anything else you can think of), Amazon has provided a wonderful set of command-line tools. The ones you care about in this section of the book are those having to do with EC2 instances and VPCs. These functions all live in one set of command-line tools known as the EC2 API Command Line Tools.

There are too many commands to list here, but they cover roughly these functional areas:

AMIs/images

Availability zones and regions

Customer gateways

DHCP options

Elastic block store

Elastic IP addresses

Elastic network interfaces

Instances

Internet gateways

Key pairs

Monitoring

Network ACLs

Placement groups

Reserved instances

Route tables

Security groups

Spot instances

Subnets

Tags

Virtual machine (VM) import and export

VPCs

Virtual private gateways

VPN connections

Windows

Yikes! That’s a lot of stuff!

For your purposes, you’re only going to be concerned with three specific functions from the VM import function group.

ec2-cancel-conversion-task

ec2-describe-conversion-tasks

ec2-import-instance

The ec2-import-instance command does exactly what it sounds like. It imports a virtual machine you have on a local computer and converts the VM to a valid EC2 instance. This process is called a conversion task. It therefore stands to reason that ec2-describe-conversion-tasks gets information about your currently running tasks and ec2-cancel-conversion-task cancels a task that’s in progress.

Before you can use these tools, you need to install them on your local machine.

In this chapter, I’m going to assume that you’re installing these tools on a Windows machine. I make this assumption because a) that’s the dominant desktop platform in IT and b) it’s the trickiest to get working.

The EC2 Command Line Tools can be found at the Amazon developer site. They come in a ZIP file, so be sure to unzip them someplace you can easily remember, like c:\ec2-tools.

The next thing you need is a current version of a Java Runtime Environment (JRE), which you can get from the Oracle Java site. Once you’ve downloaded and run the JRE installer, you can continue. For this chapter I’m going to use the installation path of my JRE: C:\Program Files (x86)\Java\jre7\.

Many of the EC2 command-line tools also require an X.509 client certificate and its matching private key to identify you. This is for your protection, I promise. Since you probably don’t have such a pair from Amazon yet, let’s get one now.

Go to the main Amazon developer portal.

Select the pull-down in the upper right titled My Account/Console, and select Security Credentials.

You might be prompted to sign in to your Amazon developer account, so do that. If not, just continue.

In the Access Credentials part of the page, click the tab marked X.509 Certificates.

If this book is your first experience with Amazon AWS, you will need to create a certificate pair. This pair consists of two parts: a private-key file that you must store locally and a certificate file that you can always redownload if you need to.

Warning

You will get one—and only one—opportunity to save the private-key file associated with your certificate. It will automatically download through your browser when you create a new pair.

Save this file someplace safe and memorable.

I cannot stress this warning enough: if you misplace this file (as I have) you will need to invalidate the certificate it corresponds to and create a new pair.

Click the Create a New Certificate link.

A new window will pop up with two buttons: one to download the private-key file and one to download the new certificate. Click each button in turn, and save each file someplace safe.

Click the Access Keys tab.

Copy the Access Key ID to a text file someplace safe and private.

Click Show and copy the Secret Access Key value to the same file.

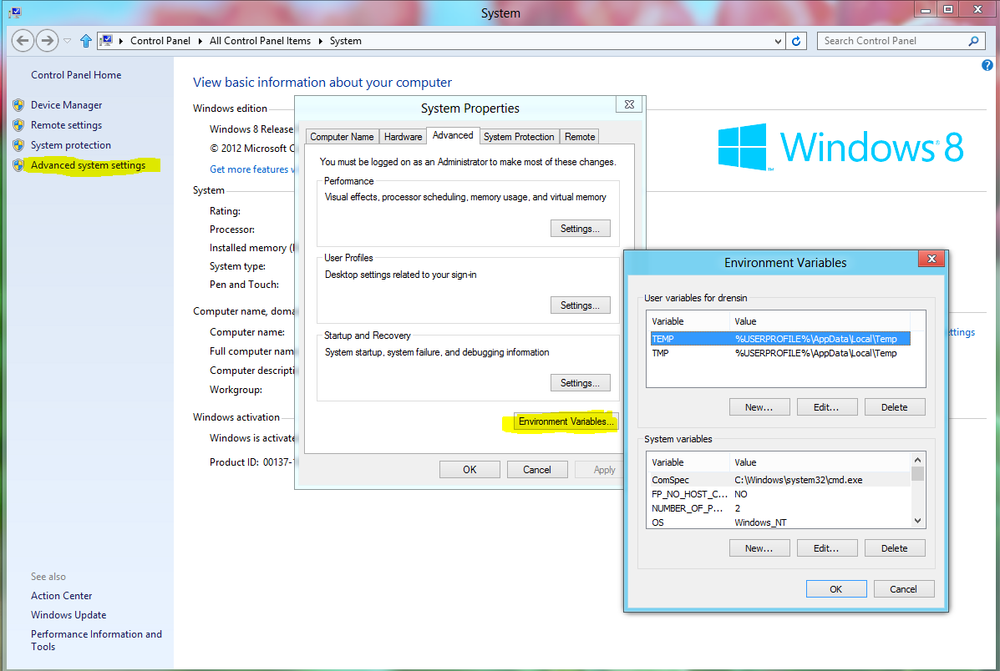

With the client certificate out of the way, you need to set some very handy environment variables. On your Windows machine, right-click My Computer and select Properties → Advanced Settings → Environment Variables.

You need to set the following system variables:

EC2_HOME: The location on disk where you unzipped the EC2 tools. You want the directory that contains the bin subdirectory.

JAVA_HOME: Where the current JRE is installed. In my case that’s C:\Program Files (x86)\Java\jre7.

EC2_PRIVATE_KEY: The full path to the private-key file you downloaded earlier.

EC2_CERT: The full path to the X.509 certificate you also saved.

ACCESS_KEY_ID: The value of the Access Key ID you saved earlier.

SECRET_ACCESS_KEY: The value of the Secret Access Key you saved earlier.

PATH: This is a preexisting variable that defines where Windows goes to look for software you want to run. The tools themselves live in the bin subdirectory, so you need to append %EC2_HOME%\bin to it so it looks something like: C:\Windows\system32;c:\someplace else;%EC2_HOME%\bin
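Before going further, it can save some head-scratching to confirm these settings. The following is a minimal Python sketch (not part of the EC2 tools) that checks the variables listed above. The function name check_ec2_env is hypothetical, and the sketch assumes, as described above, that the tools’ scripts live in a bin directory under EC2_HOME. It should run the same way on Windows, Mac OS X, or Linux.

```python
import os
from pathlib import Path
from typing import Mapping

# Variables this chapter asks you to define (names as used in the text).
REQUIRED_VARS = ["EC2_HOME", "JAVA_HOME", "EC2_PRIVATE_KEY", "EC2_CERT",
                 "ACCESS_KEY_ID", "SECRET_ACCESS_KEY"]


def _norm(path: str) -> str:
    """Normalise a directory path for comparison (case-insensitive on Windows)."""
    return os.path.normcase(os.path.normpath(path))


def check_ec2_env(env: Mapping[str, str]) -> list[str]:
    """Return a list of problems with the EC2 tool settings in env (empty if none)."""
    problems = [f"{name} is not set" for name in REQUIRED_VARS if not env.get(name)]

    home = env.get("EC2_HOME")
    if home:
        bin_dir = Path(home) / "bin"
        if not bin_dir.is_dir():
            problems.append("EC2_HOME should be the directory that contains bin")
        # PATH must include the bin directory so the ec2-* commands can be found.
        path_dirs = [_norm(p) for p in env.get("PATH", "").split(os.pathsep) if p]
        if _norm(str(bin_dir)) not in path_dirs:
            problems.append("PATH does not include the EC2 tools bin directory")

    # The private key and certificate must be real files on disk.
    for name in ("EC2_PRIVATE_KEY", "EC2_CERT"):
        value = env.get(name)
        if value and not Path(value).is_file():
            problems.append(f"{name} does not point to a file: {value}")
    return problems


if __name__ == "__main__":
    issues = check_ec2_env(os.environ)
    for issue in issues:
        print("Problem:", issue)
    if not issues:
        print("EC2 tool settings look OK")
```

Run it from a fresh command prompt after setting the variables, since already-open windows don’t see new system variables.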

Tip

If you’re running on Mac OS X (as I am) or on Linux, you probably just want to create a simple shell script that exports these variables, or you can define them in a well-known place for whichever shell you use. In my case that’s .bash_profile, because my native shell is Bash.

Now that your tools are downloaded and configured, it’s time to have some fun!

Since the point of this chapter is to teach you how to upload your own VMs as instances in your VPC, you should first start with a test image.

Note

Although the EC2 service supports both Linux and Windows Server instances, at the time of this writing you can import only images built on Windows Server 2008, 2008 R1, and 2008 R2 through the command-line tools. It’s a bummer, I know, but I’m sure Amazon will get around to rectifying it in the near future. They tend to be pretty good at that stuff.

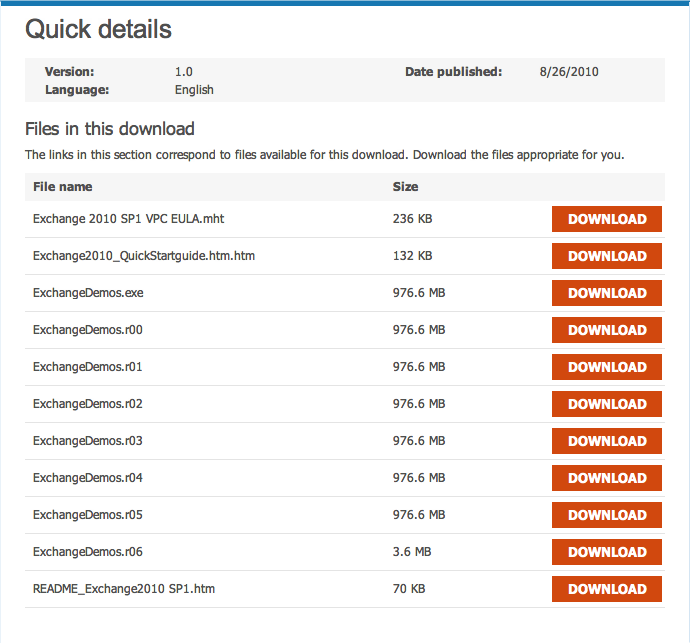

Not all of you will already have a handy VHD or VMDK to test with; if you don’t, you can go get one from our friends at Microsoft:

Go to the Microsoft Exchange Server Software Evaluation Page.

You may need to sign in with a valid Microsoft Live account. Get one if you don’t have one.

Download all the files from the page.

Run the executable once all the files are downloaded.

The VHD image is actually wrapped in a self-extracting RAR archive.

Tip

If you’re doing this on a Mac, don’t worry that one of the files is a Windows executable. Just grab a copy of the free version of the StuffIt Expander utility and select the .exe file. It will expand just fine from there. Alternatively, there’s always the great open source Rar Expander.

My machine extracted the VHD to ExchangeDemos\SLC-DC01\Virtual Hard Disks.

Change directory into the extraction directory.

The command you’re interested in is ec2-import-instance. A shortened version of its syntax is:

ec2-import-instance -t instance_type [-g group] -f file_format -a architecture -b s3_bucket_name [-o owner] -w secret_key [-s volume_size] [-z availability_zone] [-d description] [--user-data-file disk_image_filename] [--subnet subnet_id]

Table 4-1. ec2-import-instance options

| Option name | Description | Required |
|---|---|---|
| -t, --instance-type instance_type | Specifies the type of instance to be launched. Type: String. | Yes |
| -g, --group group | The security group within which the instances should be run. Determines the ingress firewall rules that are applied to the launched instances. Only one security group is supported for an instance. Type: String. Default: Your default security group. | No |
| -f, --format file_format | The file format of the disk image. Type: String. Default: None. | Yes |
| -a, --architecture architecture | The architecture of the image. Type: String. Default: i386. Condition: Required if instance type is specified; otherwise defaults to i386. | Yes |
| --bucket s3_bucket_name | The Amazon S3 destination bucket for the manifest. Type: String. Default: None. Condition: The … | Yes |
| -o, --owner-akid access_key_id | Access key ID of the bucket owner. Type: String. Default: None. | No |
| -w, --owner-sak secret_access_key | Secret access key of the bucket owner. Type: String. Default: None. | Yes |
| --prefix prefix | Prefix for the manifest file and disk image file parts within the Amazon S3 bucket. Type: String. Default: None. | No |
| --manifest-url url | The URL for an existing import manifest file already uploaded to Amazon S3. Type: String. Default: None. This option cannot be specified if the … | No |
| -s, --volume-size volume_size | The size of the Amazon Elastic Block Store volume, in GiB (2^30 bytes), that will hold the converted image. If not specified, EC2 calculates the value using the disk image file. Type: String. Default: None. | No |
| -z, --availability-zone availability_zone | The Availability Zone for the converted VM. Type: String. Default: None. Values: Use … | No |
| -d, --description description | An optional, free-form comment returned verbatim during subsequent calls to … Type: String. Default: None. Constraint: Maximum length of 255 characters. | No |
| --user-data user_data | User data to be made available to the imported instance. Type: String. Default: None. | No |
| --user-data-file disk_image_filename | The file containing user data made available to the imported instance. Type: String. Default: None. | No |
| --subnet subnet_id | If you’re using Amazon Virtual Private Cloud, this specifies the ID of the subnet into which you’re launching the instance. Type: String. Default: None. | No |
| --private-ip-address ip_address | If you’re using Amazon Virtual Private Cloud, this specifies the specific IP address within … Type: String. Default: None. | No |
| --monitor | Enables monitoring of the specified instance(s). Type: String. Default: None. | No |
| --instance-initiated-shutdown-behavior behavior | If an instance shutdown is initiated, this determines whether the instance stops or terminates. Type: String. Default: None. | No |
| -x, --expires days | Validity period for the signed Amazon S3 URLs that allow EC2 to access the manifest. Type: String. Default: 30 days. | No |
| --ignore-region-affinity | Ignore the verification check to determine that the bucket’s Amazon S3 Region matches the EC2 Region where the conversion task is created. Type: None. Default: None. | No |
| --dry-run | Does not create an import task, only validates that the disk image matches a known type. Type: None. Default: None. | No |
| --no-upload | Does not upload a disk image to Amazon S3, only creates an import task. To complete the import task and upload the disk image, use … Type: None. Default: None. | No |
| --dont-verify-format | Does not verify the file format. This option is not recommended because it can result in a failed conversion. Type: None. Default: None. | No |

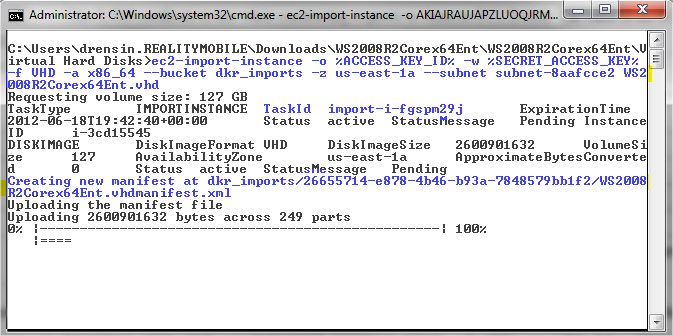

You don’t need all of these options, of course. Since you want to launch your new instance inside of your existing VPC, your command will take the form of:

ec2-import-instance -o %ACCESS_KEY_ID% -w %SECRET_ACCESS_KEY% -f VHD -a x86_64 --bucket S3_bucket_name -z zone_of_VPC --subnet subnet_ID_for_VPC path_to_VHD_or_VMDK

In my particular case, my VPC is in availability zone us-east-1a and my subnet ID is subnet-8aafcce2. I also created an S3 bucket named dkr_imports as temporary storage for my import jobs.

With all this in mind, my command will be:

ec2-import-instance -o %ACCESS_KEY_ID% -w %SECRET_ACCESS_KEY% -f VHD -a x86_64 --bucket dkr_imports -z us-east-1a --subnet subnet-8aafcce2 SLC-DC01.vhd

Tip

You need a valid S3 bucket for this process because that’s where Amazon stores your uploaded image while it converts it into an EC2 instance. No worries if you don’t already have one created. As long as you specify a name, the command will create a bucket with that name for you.
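If you run imports repeatedly, you might prefer to assemble the command in a script instead of retyping it. Here’s a minimal Python sketch that builds the argument list for the command shown above, using only options covered in this section. The function name import_instance_args is hypothetical, and its defaults (VHD, x86_64) are simply taken from the example. Because Table 4-1 marks -t as required even though the example command leaves it out, the sketch adds -t only when you pass an instance type.

```python
from typing import Optional


def import_instance_args(image_path: str, bucket: str, zone: str, subnet: str,
                         access_key: str, secret_key: str,
                         disk_format: str = "VHD", arch: str = "x86_64",
                         instance_type: Optional[str] = None) -> list[str]:
    """Build the ec2-import-instance argument list for an import into a VPC subnet."""
    args = ["ec2-import-instance",
            "-o", access_key, "-w", secret_key,  # bucket owner's credentials
            "-f", disk_format, "-a", arch,       # image format and architecture
            "--bucket", bucket,                  # S3 bucket used as temporary storage
            "-z", zone, "--subnet", subnet]      # zone should be the subnet's zone
    if instance_type:
        args += ["-t", instance_type]
    args.append(image_path)                      # the VHD or VMDK file goes last
    return args
```

To actually run it, you could pass the list to subprocess.run. On Windows the tools are .cmd scripts, so resolving the first element with shutil.which beforehand is a reasonable precaution.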

If the image upload process went well, you should receive a message like:

Done. Average speed was 4.852 MBps. The disk image for import-i-fgspm29j has been uploaded to Amazon S3 where it is being converted into an EC2 instance. You may monitor the progress of this task by running ec2-describe-conversion-tasks. When the task is completed, you may use ec2-delete-disk-image to remove the image from S3.

The important bits of this message are these. First, it has given you the name of the import task: import-i-fgspm29j. Second, it has told you that you can check on the status of your import by running ec2-describe-conversion-tasks. Finally, it tells you that you can delete your intermediate file from your S3 bucket using the ec2-delete-disk-image command.
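Since you’ll need that task ID later, both for checking progress and for cleanup, you may want to capture it programmatically rather than copy it by hand. This minimal Python sketch pulls the first import-i- identifier out of the upload message and builds the matching ec2-delete-disk-image argument list used for cleanup. The helper names are hypothetical, and the ID pattern (lowercase letters and digits after import-i-) is inferred from the task IDs shown in this chapter.

```python
import re
from typing import Optional

# Pattern inferred from IDs such as import-i-fgspm29j.
TASK_ID = re.compile(r"\bimport-i-[0-9a-z]+\b")


def find_task_id(message: str) -> Optional[str]:
    """Return the first import task ID in the tool's output, or None."""
    match = TASK_ID.search(message)
    return match.group(0) if match else None


def cleanup_args(task_id: str, access_key: str, secret_key: str) -> list[str]:
    """Build the ec2-delete-disk-image command that removes the task's S3 files."""
    return ["ec2-delete-disk-image", "-t", task_id, "-o", access_key, "-w", secret_key]
```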

As you may have inferred from these messages, the import process is actually three separate processes:

- Upload

This is the step you just completed where your VHD was uploaded to Amazon for conversion into a runnable instance in your VPC.

- Image conversion

Once the image is uploaded, Amazon needs to convert the image to an intermediate format it uses to populate an EBS volume that it will attach to your new instance.

- Instance creation

The final stage of the process is where Amazon creates an instance and attaches the new EBS volume to it as its root storage device.

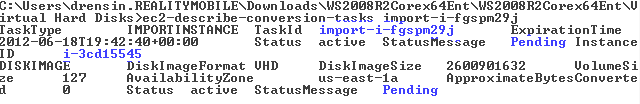

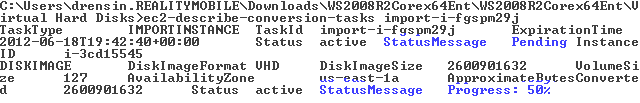

If you run ec2-describe-conversion-tasks on task import-i-fgspm29j, you might see something like:

I’ve highlighted some important parts. The first thing to notice is that you’re getting two statuses with this command. The higher one in the output is the instance creation status, while the lower one is the conversion status. Since I ran this command immediately after the upload, you can see that both tasks are still pending.

If I wait a little while and run the command again, I get the following:

Notice here that the creation status is still pending while the conversion status is at 50 percent.

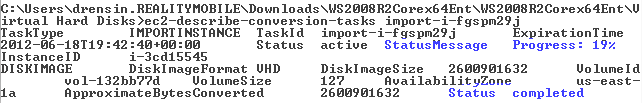

Next, I wait 30 more minutes and get the following:

The instance creation process is at 19 percent and conversion process is marked as completed.

In general, expect to wait at least an hour after your upload for the instance to be created and online.

Tip

For those of you running on either Linux or Mac OS X, you can check on the status of your import every 5 minutes by running this handy command:

while true; do ec2dct import-i-fgspm29j | grep StatusMessage | cut -f 7-10; sleep 300; clear; done

Of course you need to substitute your import task ID for mine, but you get the point.
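That shell loop won’t run in a stock Windows command prompt. As a cross-platform alternative, here’s a minimal Python sketch of the same idea. It assumes the EC2 tools are on your PATH and that their output contains StatusMessage lines, as the grep in the Tip implies. Because the exact wording of a finished task isn’t shown here, deciding when to stop is left to an is_done function you supply; describe_task and watch_task are hypothetical helper names.

```python
import shutil
import subprocess
import time
from typing import Callable


def describe_task(task_id: str) -> str:
    """Run ec2-describe-conversion-tasks for one task and return its raw output."""
    # shutil.which honours PATHEXT on Windows, so it also finds .cmd wrappers.
    exe = shutil.which("ec2-describe-conversion-tasks")
    if exe is None:
        raise FileNotFoundError("ec2-describe-conversion-tasks is not on PATH")
    result = subprocess.run([exe, task_id], capture_output=True, text=True, check=True)
    return result.stdout


def watch_task(fetch: Callable[[], str],
               is_done: Callable[[str], bool],
               interval: float = 300,
               max_polls: int = 48,
               sleep: Callable[[float], None] = time.sleep) -> list[str]:
    """Poll fetch() until is_done(output) is true or max_polls is reached.

    Prints and returns the StatusMessage lines from every poll, like the grep
    in the shell loop.
    """
    seen: list[str] = []
    for attempt in range(max_polls):
        output = fetch()
        status_lines = [line for line in output.splitlines() if "StatusMessage" in line]
        for line in status_lines:
            print(line)
        seen.extend(status_lines)
        if is_done(output):
            break
        if attempt < max_polls - 1:  # no point sleeping after the last poll
            sleep(interval)
    return seen
```

For example, watch_task(lambda: describe_task("import-i-fgspm29j"), is_done=your_check) polls every 5 minutes for up to 4 hours; write your_check after looking at the real output of a completed task.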

Eventually, the process will complete and you will be able to start your new instance from the EC2 instance list in the AWS Management Console.

Tip

This instance we’ve uploaded happens to be an entire IT infrastructure on one machine: domain controller, Active Directory, and Exchange server. If you really wanted to get up and running fast, you could just import this instance into a regular EC2 instance and call it a day, but you want more service than this provides. (And doing so would make this book wicked short!)

Please note that when this instance starts you will be able to RDP into it from your gateway machine, but you’ll need special credentials to get in. More on that in a minute.

While you’re waiting for your import to finish, you should go over some dos and don’ts for importing an instance into AWS.

Amazon has a very detailed guide on how to prepare your existing VMs (Citrix, VMware, and Microsoft Hyper-V). Read it twice. It will help you sidestep lots of potential problems. In the meantime, here are some highlights:

- Ensure that remote desktop is enabled.

If RDP is not already enabled on the image you are importing, you will have no way to connect to it once it’s an EC2 instance.

- Windows firewall must allow public RDP traffic.

There’s no point enabling RDP if the firewall won’t allow public IP addresses to use it.

- Autologon must be disabled.

You can’t RDP into a Windows Server machine if autologon is enabled, because there’s no username/password prompting.

- No Windows updates should be pending.

When AWS first boots the new instance, the very last thing you want to happen is for it to start into an update cycle.
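If you prepare several VMs for import, a checklist in code can catch the obvious misses. This is a minimal Python sketch that checks a snapshot of settings you’ve collected from the source VM against the four items above. Two keys are real registry values: fDenyTSConnections under HKLM\SYSTEM\CurrentControlSet\Control\Terminal Server (0 allows Remote Desktop connections) and AutoAdminLogon under HKLM\SOFTWARE\Microsoft\Windows NT\CurrentVersion\Winlogon ("1" means autologon is on). The firewall and pending-update keys are hypothetical names you would fill in from your own inspection.

```python
from typing import Any, Mapping


def preflight_problems(settings: Mapping[str, Any]) -> list[str]:
    """Check a snapshot of VM settings against the import checklist."""
    problems = []
    # HKLM\SYSTEM\CurrentControlSet\Control\Terminal Server\fDenyTSConnections:
    # 0 allows Remote Desktop connections. Treat a missing value as "denied".
    if settings.get("fDenyTSConnections", 1) != 0:
        problems.append("Remote Desktop is not enabled")
    # Hypothetical flag: True if a firewall rule allows inbound RDP from any address.
    if not settings.get("rdp_firewall_open_to_any", False):
        problems.append("Windows Firewall does not allow RDP from public addresses")
    # HKLM\SOFTWARE\Microsoft\Windows NT\CurrentVersion\Winlogon\AutoAdminLogon:
    # "1" means autologon is enabled.
    if str(settings.get("AutoAdminLogon", "0")) == "1":
        problems.append("Autologon is enabled")
    # Hypothetical count of updates still waiting to install or reboot.
    if int(settings.get("pending_update_count", 0)) > 0:
        problems.append("Windows updates are pending")
    return problems
```

On the source VM itself you could gather the two registry values with Python’s winreg module before shutting it down and exporting the disk.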

Note

While you’re waiting for your import and conversion task to complete, I’d like to specifically thank Peter Beckman from the EC2 Import/Export team at Amazon Web Services. My first couple of attempts to do this failed for some subtle reasons, and he was super helpful while I was troubleshooting. If you get a chance, drop him a shout-out at pbeck@amazon.com and tell him “thanks” for helping your humble author!

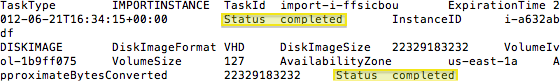

If everything goes according to plan, you should see this:

When you go back to your AWS console and look at your EC2 instances, you should see one like this:

The instance has no label because you didn’t give it one during the import. You could have, but you already had a bunch of command-line options to manage. Go ahead and click the field and give it a meaningful name, maybe something like The Instance I’m Going to Delete in 2 Minutes. Then, right-click and start it up.

Now you should verify that everything is working OK.

Find the internal IP address of the instance from its details page. In my case it’s 10.0.0.31.

Connect to the gateway via your VPN.

Using your RDP client, connect to the gateway machine you created.

Once there, open a command prompt and type ping 10.0.0.31. Of course, substitute your IP address. If you get the following, things are looking up.

C:\Users\Administrator>ping 10.0.0.31

Pinging 10.0.0.31 with 32 bytes of data:
Reply from 10.0.0.31: bytes=32 time=7ms TTL=128
Reply from 10.0.0.31: bytes=32 time<1ms TTL=128
Reply from 10.0.0.31: bytes=32 time<1ms TTL=128
Reply from 10.0.0.31: bytes=32 time=43ms TTL=128

Ping statistics for 10.0.0.31:
    Packets: Sent = 4, Received = 4, Lost = 0 (0% loss),
Approximate round trip times in milli-seconds:
    Minimum = 0ms, Maximum = 43ms, Average = 12ms

Now for the big test. RDP to the new instance by typing the command

mstsc /v:10.0.0.31 /console /admin

Again, use your IP address.
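If you’d like to script the ping-then-RDP check from the gateway, here’s a minimal Python sketch. It assumes Python is installed on the gateway and that ping prints English output in the format shown above; count_replies and check_and_connect are hypothetical names. It counts only replies that actually come from the target address, because Windows ping also counts “Destination host unreachable” answers from another host as received packets.

```python
import subprocess


def count_replies(ping_output: str, ip: str) -> int:
    """Count echo replies that really came from ip, in Windows ping's English format."""
    prefix = f"Reply from {ip}: bytes="
    return sum(1 for line in ping_output.splitlines() if line.strip().startswith(prefix))


def check_and_connect(ip: str, count: int = 4) -> bool:
    """Ping ip from the gateway; if it answers, open an RDP session to it."""
    # -n sets the number of echo requests in Windows ping.
    result = subprocess.run(["ping", "-n", str(count), ip], capture_output=True, text=True)
    if count_replies(result.stdout, ip) == 0:
        print(f"No replies from {ip}")
        return False
    # Same Remote Desktop invocation as in the text.
    subprocess.Popen(["mstsc", f"/v:{ip}", "/console", "/admin"])
    return True
```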

Tip

This particular image from Microsoft is already its own domain. You will need the following credentials to log in to it:

Username: contoso\Administrator

Password: pass@word1

Accept the certificate if asked.

If all goes well, you will have successfully connected to your newly imported instance. Congratulations!

Go ahead and poke around the instance for a bit if you like, but eventually you’re going to have to terminate it. You don’t need it and it’s not a good idea to have two different domains and controllers on the same subnet.

Once you’ve had your fun with your new instance, it’s time to clean up. You need to do two things:

Clean up the temporary import files created for you in S3.

Terminate the instance.

The first is accomplished with the following command:

ec2-delete-disk-image -t import-i-ffsicbou -o %ACCESS_KEY_ID% -w %SECRET_ACCESS_KEY%

0% |--------------------------------------------------| 100%
   |==================================================|
Done

This command deletes all temporary files associated with the import task specified after the -t flag.

The final thing to do is to terminate the instance by right-clicking it in the AWS console and selecting Terminate.

So ... what have you learned?

If you already have Windows Server-based virtual machines running in your existing infrastructure, you can easily and securely import them into the AWS cloud. This is very handy when migrating from a traditional on-site infrastructure to a cloud-based architecture.

As it happens, you can also do this process in reverse. You can create and configure an instance in the AWS cloud and then export it as a VM to use on a physical machine, such as a demo laptop.

Of course, we’ve only scratched the surface of what the EC2 command-line tools can do. You can also, for instance, upload a raw disk image and attach it to an already configured instance as another disk drive—and that’s just the beginning. But this is a book about IT virtualization, not scripting or programming, and that subject alone could go a solid hundred pages.
