Chapter 12: Neural Networks

The inspiration for neural networks was the recognition that complex learning systems in animal brains consist of closely interconnected sets of neurons. Although a particular neuron may be relatively simple in structure, dense networks of interconnected neurons can perform complex learning tasks such as classification and pattern recognition. The human brain, for example, contains approximately 10¹¹ neurons, each connected on average to 10,000 (10⁴) other neurons, making a total of 1,000,000,000,000,000 = 10¹⁵ synaptic connections. Artificial neural networks (hereafter, neural networks) represent an attempt, at a very basic level, to imitate the type of nonlinear learning that occurs in the networks of neurons found in nature.

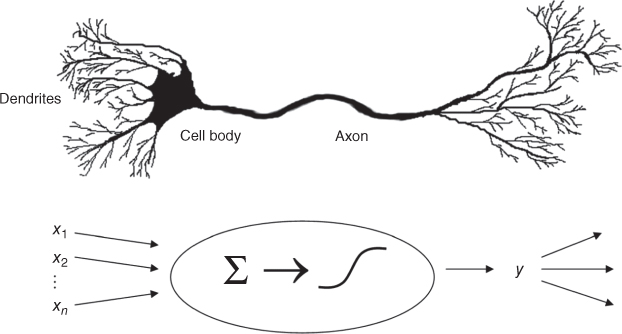

As shown in Figure 12.1, a real neuron uses dendrites to gather inputs from other neurons and combines the input information. When some threshold is reached, it generates a nonlinear response (“firing”), which it sends to other neurons along the axon. Figure 12.1 also shows the artificial neuron model used in most neural networks. The inputs (xᵢ) are collected from upstream neurons (or the data set) and combined through a combination function such as summation (∑). The combined value is then passed to a (usually nonlinear) activation function to produce an output response (y), which is channeled downstream to other neurons.
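The artificial neuron described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the book's implementation: the passage names summation as the combination function but does not specify weights, a bias term, or a particular activation function, so the `weights`, `bias`, and sigmoid activation used here are assumptions, and the function names are hypothetical.

```python
import math


def sigmoid(z):
    """Logistic activation (an assumed choice); maps any real z into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron_output(inputs, weights, bias=0.0, activation=sigmoid):
    """Combine the inputs x_i by weighted summation, then apply the activation.

    The weights and bias are assumptions added for illustration; the text
    only states that inputs are combined by a function such as summation.
    """
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    # Combination function: bias plus the weighted sum of the inputs.
    net = bias + sum(w * x for w, x in zip(weights, inputs))
    # Activation function: turns the combined value into the response y.
    return activation(net)


# Example: two upstream inputs feeding one neuron.
y = neuron_output([1.0, 2.0], [0.5, -0.25])
```

In this example the weighted sum is 0.5 · 1.0 + (−0.25) · 2.0 = 0, so the sigmoid returns 0.5. Passing a different `activation` callable, such as a step function, would model the threshold-style "firing" of the biological neuron more literally.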
