Chapter 17. Decision Trees

A tree is an incomprehensible mystery.

Jim Woodring

DataSciencester’s VP of Talent has interviewed a number of job candidates from the site, with varying degrees of success. He’s collected a dataset consisting of several (qualitative) attributes of each candidate, as well as whether that candidate interviewed well or poorly. Could you, he asks, use this data to build a model identifying which candidates will interview well, so that he doesn’t have to waste time conducting interviews?

This seems like a good fit for a decision tree, another predictive modeling tool in the data scientist’s kit.

What Is a Decision Tree?

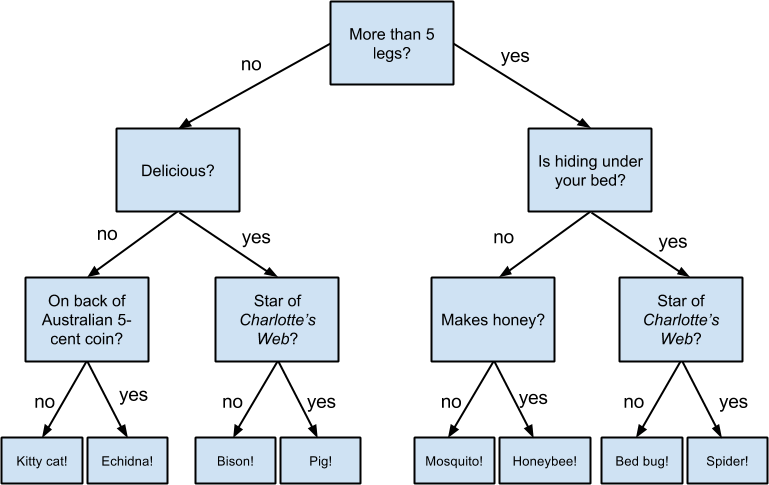

A decision tree uses a tree structure to represent a number of possible decision paths and an outcome for each path.

If you have ever played the game Twenty Questions, then you are familiar with decision trees. For example:

- “I am thinking of an animal.”
- “Does it have more than five legs?”
- “No.”
- “Is it delicious?”
- “No.”
- “Does it appear on the back of the Australian five-cent coin?”
- “Yes.”
- “Is it an echidna?”
- “Yes, it is!”

This corresponds to the path:

“Not more than 5 legs” → “Not delicious” → “On the 5-cent coin” → “Echidna!”

in an idiosyncratic (and not very comprehensive) “guess the animal” decision tree (Figure 17-1).

Figure 17-1. A “guess the animal” decision tree
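To make the idea of "decision paths with an outcome for each path" concrete, here is a minimal sketch of the one path from the dialogue above as a nested data structure. The names `Leaf`, `Question`, and `guess` are illustrative assumptions, not the representation this chapter goes on to build, and every branch the dialogue does not describe is left as `None` rather than invented.

```python
from typing import Dict, NamedTuple, Optional, Union


class Leaf(NamedTuple):
    """An outcome at the end of a decision path (hypothetical name)."""
    guess: str


class Question(NamedTuple):
    """An internal node: a yes/no question with one subtree per answer."""
    text: str
    yes: Optional["Node"]  # None marks a branch the dialogue does not describe
    no: Optional["Node"]


Node = Union[Leaf, Question]

# Only the single path from the Twenty Questions dialogue is filled in.
tree = Question(
    "Does it have more than five legs?",
    yes=None,
    no=Question(
        "Is it delicious?",
        yes=None,
        no=Question(
            "Does it appear on the back of the Australian five-cent coin?",
            yes=Leaf("Echidna"),
            no=None,
        ),
    ),
)


def guess(node: Optional[Node], answers: Dict[str, bool]) -> Optional[str]:
    """Follow yes/no answers down the tree until a leaf or a missing branch."""
    while isinstance(node, Question):
        node = node.yes if answers[node.text] else node.no
    return node.guess if node is not None else None


answers = {
    "Does it have more than five legs?": False,
    "Is it delicious?": False,
    "Does it appear on the back of the Australian five-cent coin?": True,
}
print(guess(tree, answers))
```

Each call to `guess` walks exactly one root-to-leaf path, which is all that using a decision tree to make a prediction amounts to. An animal whose answers lead into an unfilled branch returns `None`, reflecting how incomplete this particular tree is.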

Decision trees have a lot to recommend them. They’re ...
