Chapter 4. Memory Usage in Distributed Systems

Introduction

Memory is fundamentally different from other hardware resources (CPU, disk I/O, and network) because of its behavior over time: once a process has allocated a certain amount of memory, it generally holds onto that memory, even if the memory is no longer being used, until the process completes. In this sense, memory behaves like a ratchet (a process’s memory usage increases or stays constant over time but rarely decreases) and is not time-shiftable.

Also unlike other hardware resources (for which trying to use more than the node’s capacity merely slows things down), trying to use more than the physical memory on the node can cause it to misbehave badly, in some cases with arbitrary processes being killed or the entire node freezing up and requiring a physical reboot.

Physical Versus Virtual Memory

Modern operating systems use virtual memory to conceptually provide more memory to applications than is physically available on the node. The operating system (OS) gives each process a virtual address space whose addresses map to physical memory addresses, and divides that space into pages of 4 KB or more. If a process needs to access a virtual memory address whose page is not currently in physical memory, that page is swapped in from a swap file on disk. When physical memory is full and a process needs to access a page that is not currently in physical memory, the OS selects some pages to swap out to disk, freeing up physical memory.

Many modern languages such as Java perform memory management via garbage collection, but one side effect is that garbage collection itself touches the pages it scans, so those pages tend to stay in physical memory rather than being swapped out. Most multi-tenant distributed systems run applications written in a variety of languages, and some of those applications will likely be doing garbage collection at any given time, which reduces how much can be swapped out (and thus increases physical memory usage).

A node’s virtual memory space can be much larger than physical memory for two primary reasons:

- A process can request a large amount of (virtual) memory without using that much physical memory, because pages that it never accesses do not need to be backed by physical memory.

- Because of swapping, some virtual memory is in physical memory, but some might be on disk.
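The gap between virtual and physical memory can be observed per process. The following is a minimal sketch, assuming a Linux node, where `/proc/<pid>/status` reports `VmSize` (virtual size), `VmRSS` (resident physical memory), and `VmSwap` (swapped-out memory) in kB. The function names and the sample text are illustrative only.

```python
from pathlib import Path


def parse_memory_fields(status_text):
    """Return VmSize, VmRSS and VmSwap (in kB) from /proc/<pid>/status text."""
    wanted = {"VmSize", "VmRSS", "VmSwap"}
    fields = {}
    for line in status_text.splitlines():
        name, _, rest = line.partition(":")
        if name in wanted:
            # Lines look like "VmRSS:     10240 kB"
            fields[name] = int(rest.split()[0])
    return fields


def read_own_memory():
    """Linux only: read the memory fields of the current process."""
    return parse_memory_fields(Path("/proc/self/status").read_text())


# Made-up sample so the parser can be tried on any platform.
SAMPLE = "Name:\tpython3\nVmSize:\t  204800 kB\nVmRSS:\t   10240 kB\nVmSwap:\t    2048 kB\n"
sample_fields = parse_memory_fields(SAMPLE)
```

In the sample, the process has 200 MB of virtual memory but only 10 MB resident in physical memory, with 2 MB swapped out to disk.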

Node Thrashing

In most cases, allowing the applications running on a node to use significantly more virtual memory than physical memory is not problematic. However, if many pages are being swapped in and out frequently, the node can begin thrashing, with most of the time on the node spent simply swapping pages in and out. (Keep in mind that disk seeks are measured in milliseconds, whereas CPU cycles and RAM access times are measured in nanoseconds, roughly a million times faster.)
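To see why even a small rate of swapping dominates, consider a rough back-of-the-envelope calculation. The latencies below (100 ns for a RAM access, 10 ms to service a page from disk) are assumed round numbers for illustration, not measurements.

```python
def effective_access_ns(fault_rate, ram_ns=100.0, fault_service_ns=10_000_000.0):
    """Average cost of one memory access when a fraction of accesses hit disk.

    ram_ns and fault_service_ns are assumed, illustrative latencies.
    """
    return (1.0 - fault_rate) * ram_ns + fault_rate * fault_service_ns


no_faults = effective_access_ns(0.0)
one_in_thousand = effective_access_ns(0.001)
slowdown = one_in_thousand / no_faults
```

Under these assumptions, if just one access in a thousand has to wait for a page to be swapped in, the average memory access becomes roughly 100 times slower.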

Thrashing has two primary causes:

- Excessive oversubscription of memory

This results from running multiple processes, the sum of whose virtual memory is greater than the physical memory on the node. Generally, some oversubscription of memory is desired for maximum utilization, as described later in this chapter. However, if there is too much oversubscription, and the running processes use significantly more virtual memory than the physical memory on the node, the node can spend most of its time inefficiently swapping pages in and out as different processes are scheduled on the CPU. This is the most common cause of thrashing.

Note

Although in most cases excess oversubscription is caused by the scheduler underestimating how much memory the set of processes on a node will require, in some cases a badly behaved application can cause this problem. For example, in some earlier versions of Hadoop, tasks could start other processes running on the node (using “Hadoop streaming”) that could use up much more memory than the tasks were supposed to.

- An individual process might be too large for the machine

In some cases, the process’s working set (the amount of data that needs to be held in memory in order for the algorithm to work efficiently) is larger than the physical RAM on the node. In this case, either the application must be changed (to a different algorithm that uses memory differently, or by partitioning the application into more tasks to reduce the dataset size) or more physical memory must be added to the box.
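The cliff that appears when a working set no longer fits in physical memory can be illustrated with a small simulation. This is a sketch under simplifying assumptions: a single process, strict least-recently-used (LRU) page replacement (real kernels use approximations of it), and a process that repeatedly loops over its working set.

```python
from collections import OrderedDict


def count_page_faults(accesses, num_frames):
    """Count page faults for a sequence of page accesses under strict LRU."""
    frames = OrderedDict()  # pages currently in physical memory, oldest first
    faults = 0
    for page in accesses:
        if page in frames:
            frames.move_to_end(page)  # mark as most recently used
        else:
            faults += 1
            if len(frames) >= num_frames:
                frames.popitem(last=False)  # evict the least recently used page
            frames[page] = True
    return faults


def cyclic_accesses(working_set_pages, passes):
    """A process that loops over its whole working set `passes` times."""
    return [p for _ in range(passes) for p in range(working_set_pages)]


fits = count_page_faults(cyclic_accesses(8, 10), num_frames=8)
too_big = count_page_faults(cyclic_accesses(9, 10), num_frames=8)
```

With 8 frames, a working set of 8 pages faults only 8 times (once per page), but a working set of 9 pages faults on every one of its 90 accesses: a working set just one page too large turns every access into a swap.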

As with many other issues described in this book, these problems can be exacerbated on multi-tenant distributed systems, for which a single node typically runs many processes from different workloads with different behaviors, and no one developer has the incentive or ability to tune a particular application. (However, multi-tenant systems also provide possibilities for improved efficiency; for example, by running a memory-light but CPU-intensive application alongside an application that is a heavy user of memory.)

Detecting and Avoiding Thrashing

Because swapping prevents a node from running out of physical memory, it can be challenging to detect when a node is truly out of memory. Monitoring other metrics becomes necessary. For example, a node that is thrashing (as opposed to swapping in a healthy way) might exhibit the following symptoms:

- Kernel metrics related to swapping show a significant amount of swapping in and out at the same time.

- The amount of physical memory used is a very high fraction (perhaps 90 percent or more) of the memory on the node.

- The node appears unresponsive or quite slow; for example, logging into the node takes 10–60 seconds instead of half a second, and when typing in a terminal an operator might see a delay before each character appears. Ultimately, the node can crash.

Because the node is so slow and unresponsive, logging in to try to fix things can become impossible. Even the system’s kernel processes that are trying to fix the problem might not be able to get enough resources to run.
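The first two symptoms can be combined into a simple check. The following is a minimal sketch, assuming a Linux node, where `/proc/vmstat` exposes cumulative `pswpin` and `pswpout` page counters. The swap-rate threshold of 1,000 pages per second is a hypothetical value chosen for illustration; the 90 percent memory threshold comes from the text above. A real detector would need tuning for the hardware and workload.

```python
def parse_counters(text):
    """Parse 'name value' lines such as those found in /proc/vmstat."""
    counters = {}
    for line in text.splitlines():
        parts = line.split()
        if len(parts) == 2 and parts[1].isdigit():
            counters[parts[0]] = int(parts[1])
    return counters


def looks_like_thrashing(before, after, interval_s, used_fraction,
                         min_pages_per_s=1000.0, min_used_fraction=0.90):
    """Heuristic: heavy swapping in BOTH directions while memory is nearly full.

    min_pages_per_s is a hypothetical threshold, not a recommended value.
    """
    swap_in_rate = (after["pswpin"] - before["pswpin"]) / interval_s
    swap_out_rate = (after["pswpout"] - before["pswpout"]) / interval_s
    both_directions = (swap_in_rate >= min_pages_per_s
                       and swap_out_rate >= min_pages_per_s)
    return both_directions and used_fraction >= min_used_fraction


# Two made-up samples of the counters, taken 10 seconds apart.
VMSTAT_T0 = "pswpin 100000\npswpout 200000\n"
VMSTAT_T1 = "pswpin 160000\npswpout 250000\n"
suspect = looks_like_thrashing(parse_counters(VMSTAT_T0), parse_counters(VMSTAT_T1),
                               interval_s=10, used_fraction=0.95)
```

Because a thrashing node might be too slow to run even this check, such metrics are better collected continuously and evaluated before the node becomes unresponsive.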

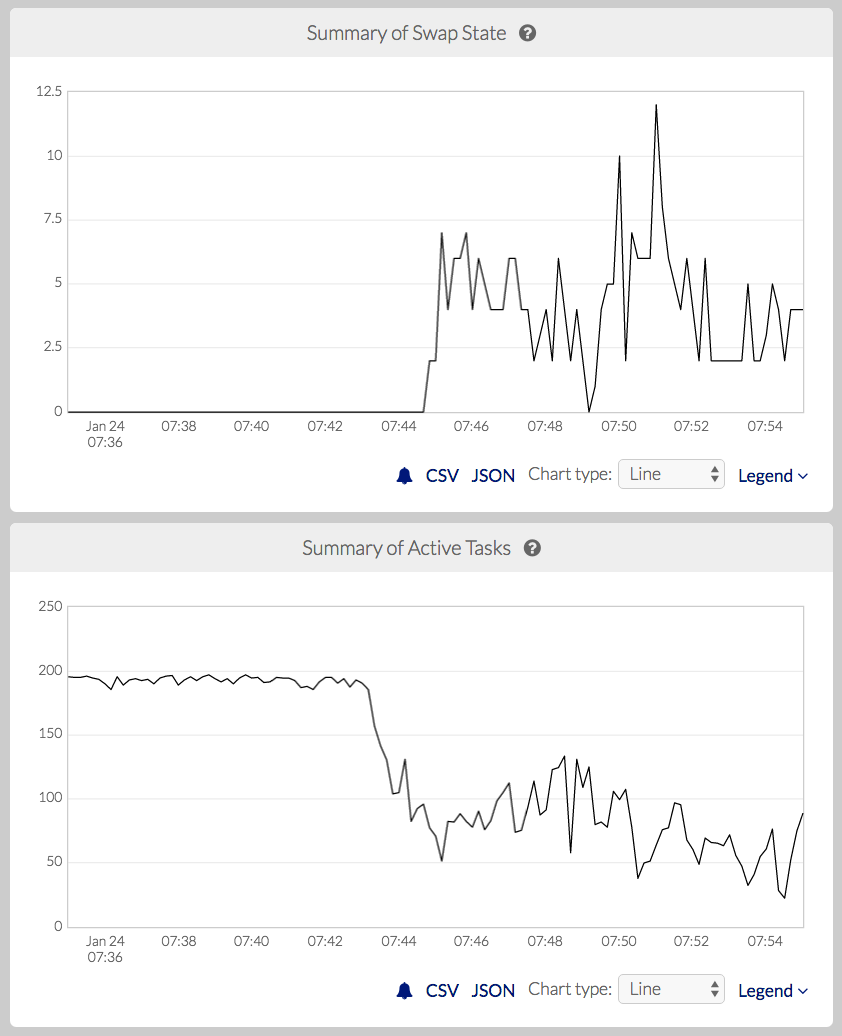

When a node begins thrashing badly, it is often too late to fix without physically power-cycling the node, so it is better to avoid thrashing altogether. A conservative approach would be to avoid overcommitting memory, but that leads to underutilization of the node and the distributed system as a whole. A better approach would be to adjust in real time to keep the node from ever going over the edge from healthy swapping into thrashing. For example, Pepperdata has developed a machine learning–based classifier that detects early signs of thrashing based on a combination of system metrics. When the classifier predicts that a node is about to start thrashing, Pepperdata reduces the memory usage on the node, either by preventing new Hadoop containers from being scheduled on the node, or in extreme cases by stopping containers (deciding which containers to stop based on the amount of overall work that would be lost by stopping them); see Figure 4-1.

Figure 4-1. Detection of sudden, extreme swapping based on machine learning (top), and an automated response of stopping containers to maintain cluster stability (bottom). (source: Pepperdata)

Kernel Out-Of-Memory Killer

The Linux kernel has an out-of-memory (OOM) killer that steps in to free up memory when all else fails. If the processes on a node have collectively accessed more pages than fit in physical memory plus on-disk swap space, there’s no memory left, and the kernel’s only choice is to kill something. This frequently happens when on-disk swap space is set to be small or zero.

Unfortunately, from the point of view of applications running on the node, the kernel’s algorithm can appear random. The kernel is unaware of any semantics of the applications running on the node, so it might choose to kill processes whose loss has unwanted consequences, such as the following:

- Processes that have been running for a very long time and that are nearly complete, instead of newly started processes for which little work would be wasted.

- Processes that parts of a job running on other nodes depend on, such as tasks that are part of a larger workflow.

- Underlying daemons of the distributed system (for example, the distributed file system process running on a node), whose killing can cause many jobs to stall, thus slowing down the entire distributed system.
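Linux does offer one way to give the kernel a hint about which processes matter: each process has an `oom_score_adj` value (from -1000 to 1000) under `/proc/<pid>/`, and a lower value makes the process less likely to be chosen, with -1000 exempting it entirely. The following sketch shows how an operator might protect a critical daemon. The function name and the `proc_root` parameter are illustrative, and lowering the value on a real node typically requires elevated privileges; the demonstration at the end writes to a temporary directory rather than to the real `/proc`.

```python
import tempfile
from pathlib import Path

OOM_SCORE_ADJ_MIN = -1000  # process is never selected by the OOM killer
OOM_SCORE_ADJ_MAX = 1000   # process is the preferred victim


def set_oom_score_adj(pid, adj, proc_root="/proc"):
    """Write an OOM score adjustment for `pid` and return the path written."""
    if not OOM_SCORE_ADJ_MIN <= adj <= OOM_SCORE_ADJ_MAX:
        raise ValueError(f"oom_score_adj must be in [-1000, 1000], got {adj}")
    path = Path(proc_root) / str(pid) / "oom_score_adj"
    path.write_text(f"{adj}\n")
    return path


# Dry run: a temporary directory stands in for /proc, and 4242 is a made-up pid.
with tempfile.TemporaryDirectory() as fake_proc:
    (Path(fake_proc) / "4242").mkdir()
    written = set_oom_score_adj(4242, -1000, proc_root=fake_proc)
    demo_contents = written.read_text()
```

Exempting a daemon does not create memory, of course; it only shifts the kernel's choice toward other processes on the node.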

Implications of Memory-Intensive Workloads for Multi-Tenant Distributed Systems

As with other hardware resources, the impact of memory usage on multi-tenant distributed systems is more complicated than on a single node. This is because memory-intensive workloads run on many nodes in the cluster simultaneously, and processes running on different nodes in the cluster are interdependent. Together, these two factors can cause problems to cascade across the entire cluster. This problem is particularly bad with memory because unlike CPU, disk I/O, and network, for which bottlenecks only slow down a node or the cluster, memory limits that lead to thrashing or OOM killing can cause nodes and processes to go completely offline.

An example in the case of Hadoop is HDFS, in which data is replicated on a subset (usually three) of the nodes in the cluster, and running applications can access any one of the three replicas. The scheduler can very easily cause memory-intensive workloads to run on many nodes of the cluster, resulting in thrashing or OOM killing occurring on many nodes at once. Those nodes might effectively freeze up due to thrashing, or the OOM killer might kill the daemon serving the HDFS data that is stored on the node. Losing access to just one replica of HDFS data can slow down processes running on other nodes on the cluster; for cases in which all three replicas of a certain file are on nodes where HDFS is unresponsive, jobs cannot run at all, and the entire cluster can grind to a halt.
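A rough estimate shows how quickly this risk grows with the number of affected nodes. The sketch below assumes that the replicas of each piece of data are placed on distinct nodes chosen uniformly at random, which is a simplification (actual HDFS placement also takes rack topology into account). The cluster sizes and data counts are made-up numbers.

```python
from math import comb


def prob_all_replicas_unavailable(total_nodes, down_nodes, replicas=3):
    """Chance that every replica of one piece of data sits on an unresponsive node,
    assuming uniformly random placement on distinct nodes (a simplification)."""
    return comb(down_nodes, replicas) / comb(total_nodes, replicas)


def expected_unavailable(total_items, total_nodes, down_nodes, replicas=3):
    """Expected number of replicated items with no reachable replica."""
    return total_items * prob_all_replicas_unavailable(total_nodes, down_nodes, replicas)


p_10_down = prob_all_replicas_unavailable(total_nodes=100, down_nodes=10)
p_30_down = prob_all_replicas_unavailable(total_nodes=100, down_nodes=30)
lost_at_10 = expected_unavailable(1_000_000, 100, 10)
```

Under these assumptions, with 10 of 100 nodes unresponsive, any single item is unlikely to be affected (about 0.07 percent), yet across a million replicated items roughly 740 would have no reachable replica. Tripling the number of unresponsive nodes raises the per-item probability by a factor of about 34, not 3.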

Solutions

For a special-purpose distributed system, the developer can design the system to use memory more effectively and safely. For example, monolithic systems like databases might have better handling of memory (e.g., a database query planner can calculate how much memory a query will take, and the database can wait to run that query until sufficient memory is available). Similarly, in a high-performance computing (HPC) system dedicated to a specific workload, developers generally tune the workload’s memory usage carefully to match the physical memory of the hardware on which it will run. (In multi-tenant HPC, when applications start running, the state of the cluster cannot be known in advance, so such systems have memory usage challenges similar to systems like Hadoop.)
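The database example amounts to admission control on estimated memory. The following is a minimal, single-threaded sketch of that idea; the class and method names are hypothetical, and a real database would also have to deal with concurrency, estimation errors, and queries that wait too long.

```python
from collections import deque


class MemoryAdmissionGate:
    """Start queries only while their estimated memory fits within a fixed budget."""

    def __init__(self, capacity_mb):
        self.capacity_mb = capacity_mb
        self.reserved_mb = 0
        self.waiting = deque()  # (query_id, estimated_mb), in arrival order
        self.running = {}       # query_id -> estimated_mb

    def submit(self, query_id, estimated_mb):
        """Queue a query; return the ids of any queries that can start now."""
        if estimated_mb > self.capacity_mb:
            raise ValueError("query can never fit in the memory budget")
        self.waiting.append((query_id, estimated_mb))
        return self._admit()

    def finish(self, query_id):
        """Release a finished query's memory; return ids that can start now."""
        self.reserved_mb -= self.running.pop(query_id)
        return self._admit()

    def _admit(self):
        started = []
        # Strict FIFO: a large query at the head is not overtaken by smaller ones.
        while self.waiting and self.reserved_mb + self.waiting[0][1] <= self.capacity_mb:
            query_id, mb = self.waiting.popleft()
            self.running[query_id] = mb
            self.reserved_mb += mb
            started.append(query_id)
        return started


gate = MemoryAdmissionGate(capacity_mb=100)
first = gate.submit("a", 60)       # fits, starts immediately
second = gate.submit("b", 50)      # must wait: 60 + 50 > 100
third = gate.submit("c", 30)       # waits behind "b"
after_finish = gate.finish("a")    # frees 60 MB, so "b" and "c" can start
```

This kind of gate is practical only when the system can estimate memory needs ahead of time, which is exactly what a general-purpose multi-tenant scheduler usually cannot do.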

In the general case, developers and system designers/operators can take steps to use memory more efficiently, such as the following:

- For long-running applications (such as a distributed database or key-value store), a process might be holding onto memory that is no longer needed. In such a case, restarting the process on the node can free up memory immediately, because the process will come back up in a “clean” state. This kind of approach can work when the operator knows that it’s safe to restart the process; for example, if the application is running behind a load balancer, one process can be safely restarted (on one node) at a time.

- Some distributed computing fabrics like VMware’s vSphere allow operators to move virtual machines from one physical machine to another while running, without losing work. Operators can use this functionality to improve the efficiency and utilization of the distributed system as a whole by moving work to nodes that can better fit that work.

- An application can accept external input into how much memory it should use and change its behavior as a result. For example, the application can use less cache or compress the data stored in memory, both of which trade more CPU time for less memory usage. This is similar to how the Linux kernel adjusts to use less disk cache space if running processes need more memory. An example of a specific application with this behavior is Oracle’s database, which compresses data in memory.
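As an illustration of the last point, an application-level cache can be written so that its size can be changed while the application runs. The following is a minimal sketch with hypothetical names; it bounds the cache by entry count rather than by bytes, and it leaves open how the external signal (for example, from a cluster manager) would reach the application.

```python
from collections import OrderedDict


class ResizableLRUCache:
    """An LRU cache whose capacity can be lowered or raised at run time."""

    def __init__(self, max_entries):
        self.max_entries = max_entries
        self._data = OrderedDict()  # least recently used entries come first

    def get(self, key, default=None):
        if key in self._data:
            self._data.move_to_end(key)  # mark as most recently used
            return self._data[key]
        return default

    def put(self, key, value):
        self._data[key] = value
        self._data.move_to_end(key)
        self._evict()

    def resize(self, max_entries):
        """Apply a new budget; shrinking evicts the least recently used entries."""
        self.max_entries = max_entries
        self._evict()

    def _evict(self):
        while len(self._data) > self.max_entries:
            self._data.popitem(last=False)

    def __len__(self):
        return len(self._data)


cache = ResizableLRUCache(max_entries=4)
for key in ["a", "b", "c", "d"]:
    cache.put(key, key.upper())
cache.get("a")    # "a" becomes the most recently used entry
cache.resize(2)   # external request to use less memory: keeps "d" and "a"
```

After shrinking, lookups for the evicted keys miss and must be recomputed or reloaded, which is the CPU-for-memory trade described above.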

Hadoop tends to be very conservative in memory allocations. It generally assumes the worst case: that every process will require its full requested virtual memory, and that it will require that memory for the entire run time of the process. This conservatism generally results in the cluster being dramatically underutilized because these two assumptions are usually untrue. As a result, even if a developer has tried to carefully tune the memory settings of a given job, most nodes will still have unused memory, due to several factors:

- For a given process, the memory usage changes during its run time. (The usage pattern depends on the type of work the process is doing; for example, in the case of Hadoop, mappers tend to rapidly plateau, whereas reducers sometimes plateau and sometimes vary over time. Spark generally varies over time.)

- Different parts of a job often vary in memory usage because they are working on different parts of the input data on different nodes.

- Memory usage of a job can change from one run to the next due to changes in the total size of the input data.
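A small calculation shows how these factors add up to unused memory. All the numbers below are made up for illustration: a node with 32 GB of physical memory runs four containers that each request 8 GB, and each container's actual usage is sampled at four points in time.

```python
def reserved_and_peak_fraction(physical_gb, request_gb, usage_over_time_gb):
    """Compare what the scheduler reserved with what was actually used.

    usage_over_time_gb holds one list of usage samples per container,
    all sampled at the same points in time.
    """
    reserved_gb = len(usage_over_time_gb) * request_gb
    # Total usage on the node at each point in time, across all containers.
    totals = [sum(samples) for samples in zip(*usage_over_time_gb)]
    return reserved_gb / physical_gb, max(totals) / physical_gb


# Made-up usage samples (GB) for four containers that each requested 8 GB.
usage = [
    [2, 4, 6, 3],
    [1, 5, 5, 2],
    [3, 3, 4, 4],
    [2, 2, 7, 1],
]
reserved_fraction, peak_fraction = reserved_and_peak_fraction(32, 8, usage)
```

In this example the node is fully reserved, so the scheduler will place nothing more on it, yet actual usage never exceeds 22 GB (about 69 percent of physical memory) because the containers do not reach their peaks at the same time.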

A naive approach to improving cluster utilization by simply increasing memory oversubscription can often be counterproductive because the processes running on a node do sometimes use more memory than the physical memory available on the node. When that happens, the throughput of the node can decrease due to the inefficiency of excessive swapping, and if the node begins thrashing, it can have side effects on other nodes as well, as described earlier.

Software like Pepperdata provides a way to increase utilization for distributed systems such as Hadoop by monitoring actual physical memory usage and dynamically allowing more or fewer processes to be scheduled on a given node, based on the current and projected future memory usage on that node. These solutions can dramatically increase throughput while avoiding the negative consequences of overusing physical memory and the cascading failures that can result.
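The general idea of scheduling against measured and projected usage, rather than against requested memory, can be sketched as follows. This is not Pepperdata's algorithm, which is not described here; it is a deliberately naive illustration in which the projection is a straight-line extrapolation and the function names, the 90 percent headroom, and the three-step horizon are all hypothetical.

```python
def projected_usage_mb(samples_mb, horizon_steps):
    """Naive projection: extend the average trend of recent samples forward.

    Never projects below the latest observed usage.
    """
    latest = samples_mb[-1]
    if len(samples_mb) < 2:
        return latest
    slope = (latest - samples_mb[0]) / (len(samples_mb) - 1)
    return max(latest, latest + slope * horizon_steps)


def can_schedule(samples_mb, new_container_mb, physical_mb,
                 horizon_steps=3, headroom=0.90):
    """Allow a new container only if projected usage plus its expected usage
    stays under a fraction (headroom) of the node's physical memory."""
    projected = projected_usage_mb(samples_mb, horizon_steps)
    return projected + new_container_mb <= headroom * physical_mb


# Made-up recent physical memory usage on a node with 64,000 MB of RAM.
recent = [40_000, 42_000, 44_000]
small_ok = can_schedule(recent, new_container_mb=4_000, physical_mb=64_000)
large_ok = can_schedule(recent, new_container_mb=8_000, physical_mb=64_000)
```

Here usage is projected to reach 50,000 MB, so a container expected to use 4,000 MB is admitted while one expected to use 8,000 MB is held back, regardless of how much memory either one formally requested.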

Summary

Memory is often the primary resource limiting the overall processing capacity of a node or an entire distributed system; it is common to see a system’s CPU, disk, and network running below capacity because of either actually or artificially limited memory use. At the opposite extreme, overcommitment of memory can cause instability of individual nodes or the cluster as a whole. To ensure predictable and reliable performance and maximize throughput in a multi-tenant distributed system, effective memory handling is critical. Operators can use existing software solutions to increase cluster utilization while maintaining reliability.
