Chapter 4. The Cloud

The cloud is the central station of any IoT solution, and a critical component. While it may be tempting to think about the IoT device cloud as the equivalent of a web or mobile application, the Internet of Things introduces its own unique characteristics and subsystems. In this chapter, we’ll examine some of those characteristics, including cloud-device connectivity and security, device ingress and egress, and data normalization and protocol translation.

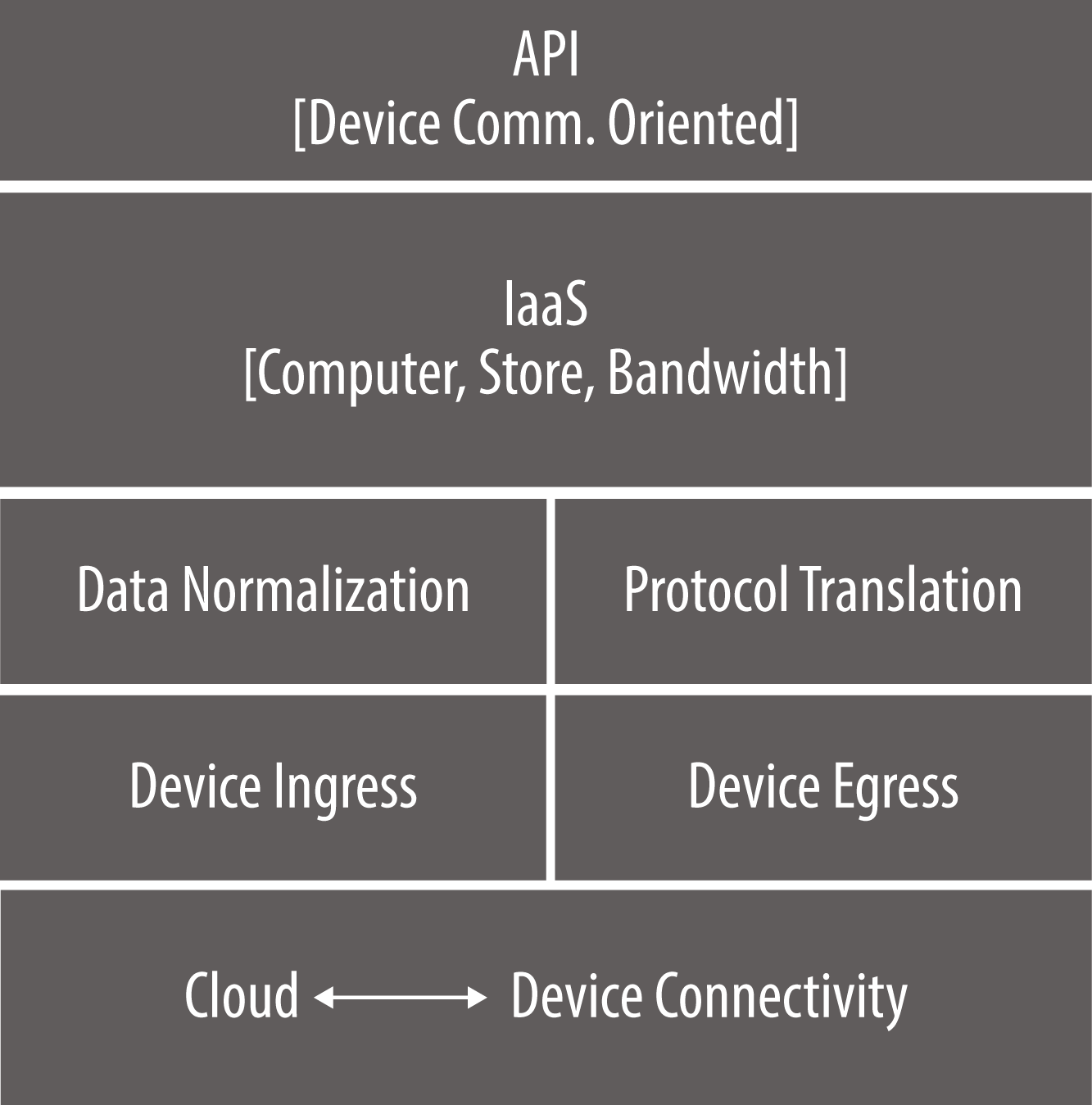

In IoT technical diagrams such as Figure 4-1, it’s common to structure visualizations of the architecture from the bottom up, reflecting the mental model of edge devices being “on the ground” relative to the cloud. Using this diagram, let’s follow the journey of a message as it flows from the edge to the cloud.

Figure 4-1. The device cloud is the central station of an IoT solution, processing and analyzing data received from the edge

Cloud-to-Device Connectivity

Making sure we get the right message from the edge device to the cloud is paramount. When it comes to cloud-to-device connectivity, security is increasingly a major area of concern, and rightly so. Even a modest-sized IoT installation can provide numerous potential intrusion points via edge devices that, unlike modern PCs or smartphones, have limited computing resources. This combination of traffic quantity, device constraints, and the wide variety of IoT solution configurations makes for a bevy of challenges.

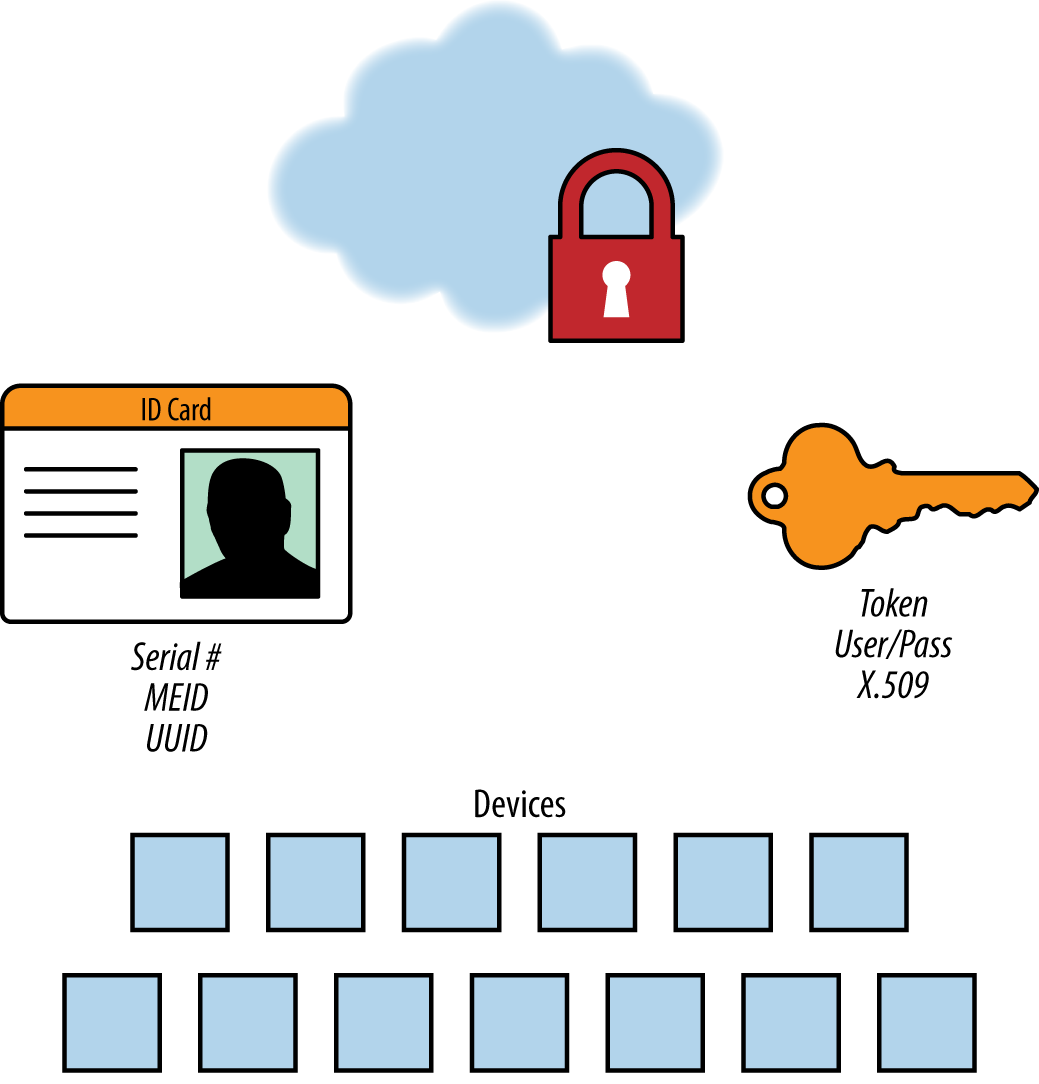

Device authentication prior to any data transmission is a critical security measure (Figure 4-2). In a web or enterprise application there are authenticated users, systems the users are authorized to access, and roles that designate the users’ privileges. In the IoT, however, we see a new kind of actor in the system: the device itself, which must supply its own credentials and identity to the cloud. Device authentication prevents data spoofing and junk data, and guards against cloud-to-device information flow that could get into the wrong hands. Since this access point represents a new attack surface, a device identity and authorization layer is a requirement for any system. IoT-connected devices also need the benefit of encryption standards like Transport Layer Security (TLS) v1.2 and banking-grade certificate exchange and validation.

Figure 4-2. Device authentication is a critical security measure

In an IoT solution, every product, sensor, gateway, and communication device at the edge requires a unique identifier. As you might expect, there are a variety of techniques for achieving this, which is why it’s important for the cloud software to be flexible in the way it identifies a device. Often, you can use the serial number of a product (stamped-in by the manufacturer) as a unique identifier. However, it’s not unusual to encounter products that lack a serial number altogether. If the device has a mobile radio, you can use the mobile equipment identifier (MEID) or another form of cellular identifier.

Once you’ve chosen an identifier, you’ll need to authenticate to the cloud. But common techniques in consumer and enterprise systems to solve this problem fall short when it comes to the IoT. For instance, in the case of username/password combinations, how do you update the password of an IoT device at the edge? And what if there are millions of them? X.509 certificates that identify a client securely to a communicating server are the best approach. However, a public key infrastructure (PKI) is needed to produce these certificates and sign, distribute, and revoke them when they’ve been challenged.

Messaging and the IoT

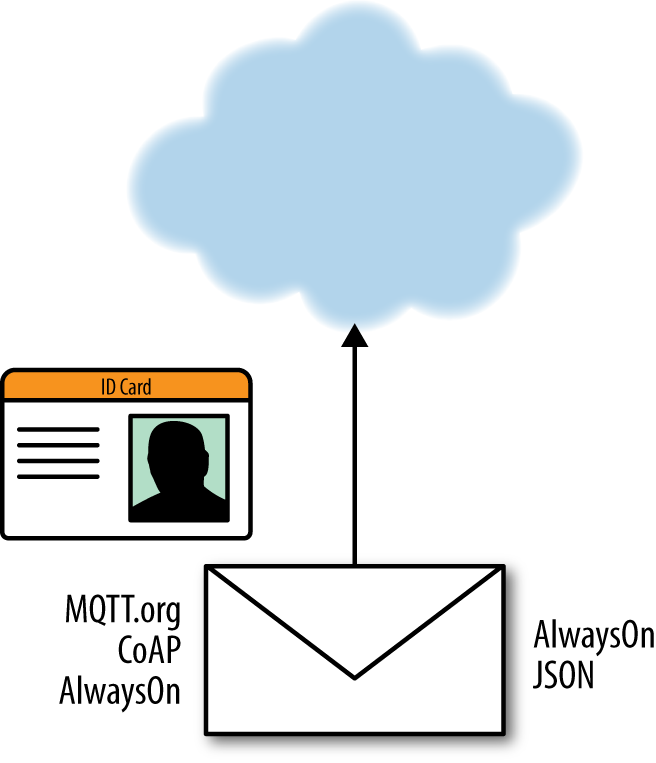

How do you represent a message in an IoT system? To begin with, we need our credentials—our device ID card—to be part of the message envelope. The envelope protocol tells the system, “Here’s who I am. Here’s where I want this message to go. And here are some characteristics of how I want the message to be delivered.”

When it comes to envelope protocols, there are many emerging standards, some of them more pertinent for certain industries or certain types of IoT solutions. MQTT (Message Queuing Telemetry Transport) and CoAP (Constrained Application Protocol) are strictly envelope protocols. They do not care about the contents—to them, the message inside is just a bucket of bytes. When describing the message contents, engineers may use JSON, HTML, CSV, or even a proprietary binary format. Other protocols such as AlwaysOn are designed to incorporate both envelope and message information (Figure 4-3).

Figure 4-3. While messaging protocols contain identity information for authentication purposes, most leave the content to another format

Back to the Future: How Reliable Is Your Network?

At the beginning of the Internet revolution in the late ’90s, network reliability was not a given, and outages were not unusual. Software engineers could not assume they would have continuous connectivity. Over time, improved connectivity and fault-tolerance made these scenarios less of a concern. However, when it comes to the Internet of Things, the relatively rudimentary nature of device connectivity requires adjusting the engineer’s outlook yet again—perhaps back to the cautious mindset of the early Internet days.

IoT networks, as you might expect, are generally not as reliable as today’s mobile or business Internet connections. The IoT does not, as yet, have the same fault-tolerance built into the client and server layers that you might expect with consumer-oriented modern web browsers and smartphone applications. In comparison, IoT edge devices run on relatively rudimentary operating systems and are subject to a variety of real-world factors that can limit connectivity.

For example, in a smart, connected operation for commercial vehicle fleet-deployment and monitoring, a truck might be outfitted with telematics device, communicating over a cellular network to provide regular updates regarding location, status, and route. However, at any moment, data flow from the vehicle could be compromised by something as simple as driving into a parking garage or tunnel. Suddenly, the truck disappears from the network and the system receives a fragment of a message. The software waits on those bytes, eating up resources in our cloud networking layer. The truck may be gone for minutes, hours, or days. We have no way of knowing. And then, as suddenly as it left, it returns, and the system receives the rest of the message. This all-too-common scenario needs to inform the design of IoT systems. Importantly, our software layer needs to understand and compensate for network unreliability, and not assume that we’ll receive complete messages or have a well-behaved network client from connected devices.

Device Ingress/Egress

Next, as we move up our IoT cloud stack, is the device ingress and egress layer. IoT solutions, the majority of the time, are concerned with device ingress—receiving incoming messages from the edge to the cloud. The sheer volume of data can challenge the scale of cloud systems. A system might have hundreds of thousands of connected devices, each sending multiple messages every minute. For example, if every device in a 250,000-device system sent just 3 messages per minute, it would result in over a billion messages per day. By way of comparison, Twitter, as of this writing, has about 320 million monthly active users who send about 900 million messages per day. It’s feasible that the data ingress of just one IoT installation can easily match or even surpass the total traffic of Twitter on a daily basis.

In contrast, once in a while, an IoT system needs to communicate with one of the many connected devices. Cloud-to-edge messaging may include sending notifications about new configurations, firmware, or commands to trigger actuation. While it’s far more infrequent than device ingress, when you need device egress, it’s important to have the right communication protocol and communication infrastructure in place. Particularly when triggering actuation, it’s important that users see timely responses to their cloud-based commands. Perhaps a smartphone user is asking their garage door to close because they realize they left it open when they exited the house. From a user experience perspective, if the command is successful, the end result can seem almost magical—the ability to instantly and remotely command one of many connected devices.

Of course, some classes of edge devices are not always powered on or do not maintain a persistent network connection. Duty-cycled devices simply connect on their own schedule and receive updates when reconnection occurs, much like retrieving email from a POP3 email client. The downside with this setup is that you don’t get your messages exactly when they’re sent. Some IoT frameworks allow for an active connection from the cloud to the edge, pushing messages as soon as they’re available. This gives a much crisper interaction for end users who want to control and update IoT devices. Even though device egress happens rarely, when it does happen, it needs to work well.

Data Normalization and Protocol Translation

While industry coalitions are hard at work defining open standards for interoperability with the IoT, there are many legacy M2M systems with thousands of connected devices that currently speak different proprietary protocols. For this reason, a well-designed IoT cloud system includes a dedicated protocol translation layer. This layer translates the “over the wire/air” protocol to the native, or canonical, protocol that’s understood by the upper layers of the system.

Once a message is received, the data needs to be normalized, which involves extracting information from the device message and putting it into a data storage schema. As the message comes through the device ingress layer, you’ll need to parse the JSON, CSV file, or proprietary format. IoT data is often time series data—discreet values with identifiers, like sensor type or status reading, and a timestamp of when that value was ascertained. However, just having time series-data storage doesn’t completely solve the problem, as there are also many forms of unstructured data such as log files, diagnostics, images, and video.

Data Consistency

Message delivery consistency has a significant impact on your IoT applications. The order in which messages are received and processed makes all the difference.

When scaling the messaging infrastructure for an IoT application, be wary of dumping messages into a queue and processing them later. When you start to distribute those queues and distribute your architecture, it’s very easy to forget to connect the temporal locality of a single device that sent us a series of messages being processed in order. For instance, if a device has a status of “door open” and then a second later “door closed,” what happens if the messages are received out of order? Now there’s a temporal ordering issue. The system needs to assert that the first message the device sent is received and processed by the application before the second message.

Infrastructure

The next layer in our cloud stack is the Infrastructure layer. For many IoT solution creators, the elastic compute resources of an Infrastrucure-as-a-Service (IaaS) provider are a sensible alternative to the substantial initial investment and ongoing maintenance cost of constructing their own data centers. Infrastructure-as-a-Service, whether public or a managed private cloud from an IaaS vendor, can give solution creators the ability to automatically provision new compute, storage, and network resources on demand. This is particularly useful for IoT solutions that may have significantly large but temporary workloads. For example, within the annual lifecycle of an IoT system, there are certain events that demand more compute and bandwidth resources than others—for instance, a firmware update deployed to a million devices, or the seasonal launch of a new product. IaaS resources are convenient and scalable: the third-party vendor owns all compute resources, storage, and networking capabilities, and handles all system maintenance.

How Much Data Is Too Much Data?

Collecting trillions of data points demands a well-thought-out strategy in order to set automated policies for data retention and “data governance.” IoT data can be big, fast, unstructured, and write-heavy. When the data is pouring in, it might seem like you’re collecting and storing information that’s just meaningless noise. And it’s true that some classes of IoT applications rarely read data values a second time once they’ve been written. In cases like this, some would argue the data isn’t going to do any good, because no one will ever look at it. In those situations, it’s a question whether to store the data at all.

But we do, indeed, want to store it. The useless data “noise” of today might well be the most impactful information of tomorrow. With the help of a machine learning algorithm, you may find that some of your data is in fact a signal that something is about to go wrong, giving you non-intuitive insight into your IoT system.

Even if you don’t have the machine learning implementation and algorithms to process it today, don’t throw these data points away, because training those future machine learning models with historical data can be absolutely critical.

The Value of Small Data

With all this discussion of big data, it’s worth keeping in mind the great value that you can get from small data generated by an IoT installation. There are many use cases where simply knowing the location and status of a piece of equipment on a daily basis can provide tremendous value. For instance, if you’ve leased a piece of construction equipment to a customer who’s required to keep it in Massachusetts, perhaps once a day you’ll want to know that your equipment remains in the right area. You don’t need to hear from it every second, or need big data from it. You just need to know where it is. In scenarios such as this, small data can be very useful.

APIs

The final layer in our IoT cloud device stack is the API, the lingua franca of modern systems. The majority of API usage occurs at the application layer, which is discussed in more detail in Chapter 5; however, there are a few notable elements we should discuss here. In particular, the device cloud stack should provide lower-level access to insulate the application layer from the devices. Common API functions may include:

Provisioning a new device

Querying data elements supplied by a device

Sending commands to devices

The Topology of the Cloud

The tremendous power and potential of the Internet of Things comes from the fact that it binds digital resources to the physical world and vice versa. However, while the cloud is an excellent metaphor that helps us to discuss abstract and complex concepts about servers, networks, and data centers, we must always be cognizant of the physical nature of the Internet of Things and the resulting challenges and limitations that brings.

For example, the physical location of cloud assets such as data centers can be critical in an IoT system. It’s an all-too-common scenario to have data generated by devices in one region that cannot leave a certain geographic area for reasons of network latency, governmental controls, or even industry regulations. As a case in point, it’s not acceptable for medical device data generated in Central Europe to be transmitted just anywhere in the cloud. The European authorities will tell you the data cannot transgress European boundaries. In situations like these, your abstraction of the cloud needs to be both regionally and locally aware.

Importantly, as we’ve discussed in previous chapters, digital messages are not immune from the inconvenient realities of the physical world. Cloud processing is far from instantaneous, subject to unforeseen delays and network unreliability.

The concept of fog or edge computing attempts to transcend some of these physical limitations. According to Cisco’s “Fog Computing and the Internet of Things: Extend the Cloud to Where the Things Are”:

The Internet of Things (IoT) is generating an unprecedented volume and variety of data. But by the time the data makes its way to the cloud for analysis, the opportunity to act on it might be gone.

For example, when you have an edge device that needs to communicate with another entity—an application, business process, or even the device right next to it—it must first send that message to the cloud, which processes the information and transmits a response back down to the edge. Communication with the cloud could very well mean sending a data transmission thousands of miles away over a network with questionable reliability. In contrast, with fog computing, that processing happens on nodes physically closer to where the data is originally collected.

According to Cisco, fog computing provides intelligent infrastructure at or closer to the edge that:

Analyzes the most time-sensitive data at the network edge, close to where it is generated instead of sending vast amounts of IoT data to the cloud.

Acts on IoT data in milliseconds, based on policy.

Sends selected data to the cloud for historical analysis and longer-term storage.

Flexible cloud topology like this can be a requirement in environments like hospitals, where there’s a mission-critical need to quickly analyze real-time data from connected devices and initiate immediate action. In this kind of scenario, you’ll want a multi-tiered approach to data collection and analysis, starting with a smart gateway or an IoT server to apply localized intelligence, aggregation, and business rules before communicating up to the next level. At the second tier, you might have a regional instance to aggregate big data, applying business rules and logic that spans local regions. Lastly, you’ll have a fully distributed master system capable of seeing the complete picture, while collecting some trimmed down data for the purposes of analytics. The variety of IoT systems and the need for flexible solutions that respond to real-time events quickly make fog computing a compelling option.

Get Foundational Elements of an IoT Solution now with the O’Reilly learning platform.

O’Reilly members experience books, live events, courses curated by job role, and more from O’Reilly and nearly 200 top publishers.