6

NONLINEAR REGRESSION

6.1 INTRINSIC LINEARITY/NONLINEARITY

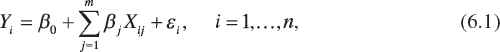

In the classical linear regression model, the multivariate regression hyperplane has the form

where we have m predetermined explanatory variables or regressors X1, ..., Xm and ε is a random error term. Here Equation 6.1 depicts a linear regression model since it is linear in the parameters β0, β1, ..., βm. It is assumed that n > p = m + 1 and no exact linear relationship exists between the Xj's, j = 1,..., m.
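The two assumptions stated above (more observations than parameters, and no exact linear relationship among the regressors) can be checked numerically. The following is a minimal sketch, assuming NumPy and simulated data; the helper `check_design`, the seed, and the data are hypothetical and serve only as illustration. It treats "no exact linear relationship" as the design matrix, including the column of ones for the intercept, having full column rank.

```python
import numpy as np

def check_design(X):
    """Return (n, p, rank) for a regressor matrix X of shape (n, m).

    A column of ones is prepended for the intercept, so p = m + 1.
    """
    X = np.asarray(X, dtype=float)
    n, m = X.shape
    design = np.column_stack([np.ones(n), X])  # columns: 1, X1, ..., Xm
    p = m + 1
    rank = np.linalg.matrix_rank(design)
    return n, p, rank

rng = np.random.default_rng(0)   # arbitrary seed, for reproducibility only
X_ok = rng.normal(size=(20, 3))  # hypothetical data: n = 20, m = 3

# Append a fourth regressor that is an exact linear combination of X1 and X2.
X_bad = np.column_stack([X_ok, X_ok[:, 0] + 2.0 * X_ok[:, 1]])

n, p, rank = check_design(X_ok)
ok_conditions = (n > p) and (rank == p)            # both assumptions hold

n_b, p_b, rank_b = check_design(X_bad)
bad_conditions = (n_b > p_b) and (rank_b == p_b)   # rank falls short of p
```

In the second case the rank is one less than p, so the parameters cannot be uniquely determined from the data even though n > p.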

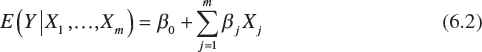

For fixed Xj's, j = 1,..., m, the population regression hyperplane is specified as the conditional mean of Y given the Xj's or

given that E(ε) = 0. Given Equation 6.2,

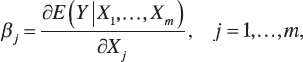

![]()

that is, the population intercept is the mean of Y given that all of the Xj's are set equal to zero. Also,

is termed the jth partial regression coefficient; that is, as Xj increases by one unit, the average value of Y changes by βj units, given that all remaining explanatory variables are held constant. Once the βk's, k = 0, 1, ..., m, are estimated from the sample information, we obtain the sample regression hyperplane

$$\hat{Y} = \hat{\beta}_0 + \hat{\beta}_1 X_1 + \cdots + \hat{\beta}_m X_m,$$

where Ŷ is the estimated value of ...
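To make the interpretation of the intercept and the partial regression coefficients concrete, here is a minimal sketch that estimates a sample regression hyperplane from simulated data. It assumes ordinary least squares as the estimator (this excerpt does not say which estimator is used) and uses NumPy's `np.linalg.lstsq`. The parameter values, sample size, seed, and the helper `predict` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)           # arbitrary seed, for reproducibility only
n, m = 200, 2                            # hypothetical sample size and regressor count
beta_true = np.array([1.0, 2.0, -0.5])   # hypothetical beta0, beta1, beta2

X = rng.normal(size=(n, m))
eps = rng.normal(scale=0.1, size=n)      # random error term with mean zero
y = beta_true[0] + X @ beta_true[1:] + eps

# Least-squares estimates of beta0, beta1, ..., betam (assumed estimator).
design = np.column_stack([np.ones(n), X])
beta_hat, *_ = np.linalg.lstsq(design, y, rcond=None)

def predict(x):
    """Evaluate the sample regression hyperplane at one point x of length m."""
    return beta_hat[0] + np.dot(beta_hat[1:], x)

x0 = np.array([0.3, -1.2])
x1 = x0 + np.array([1.0, 0.0])            # raise X1 by one unit, hold X2 fixed
delta = predict(x1) - predict(x0)         # equals beta_hat[1] up to rounding
intercept_at_zero = predict(np.zeros(m))  # equals beta_hat[0]
```

Raising X1 by one unit with X2 held constant changes the fitted value by exactly the estimated coefficient on X1, and evaluating the fitted hyperplane with every regressor at zero returns the estimated intercept, mirroring the population-level statements above.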
