Chapter 1. Primer on Latency and Bandwidth

Speed Is a Feature

The emergence and rapid growth of the web performance optimization (WPO) industry over the past few years is a telltale sign of the growing demand for speed and faster user experiences. This is not simply a psychological need for speed in our ever-accelerating, connected world, but a requirement driven by empirical results, as measured against the bottom-line performance of many online businesses:

- Faster sites lead to better user engagement.

- Faster sites lead to better user retention.

- Faster sites lead to higher conversions.

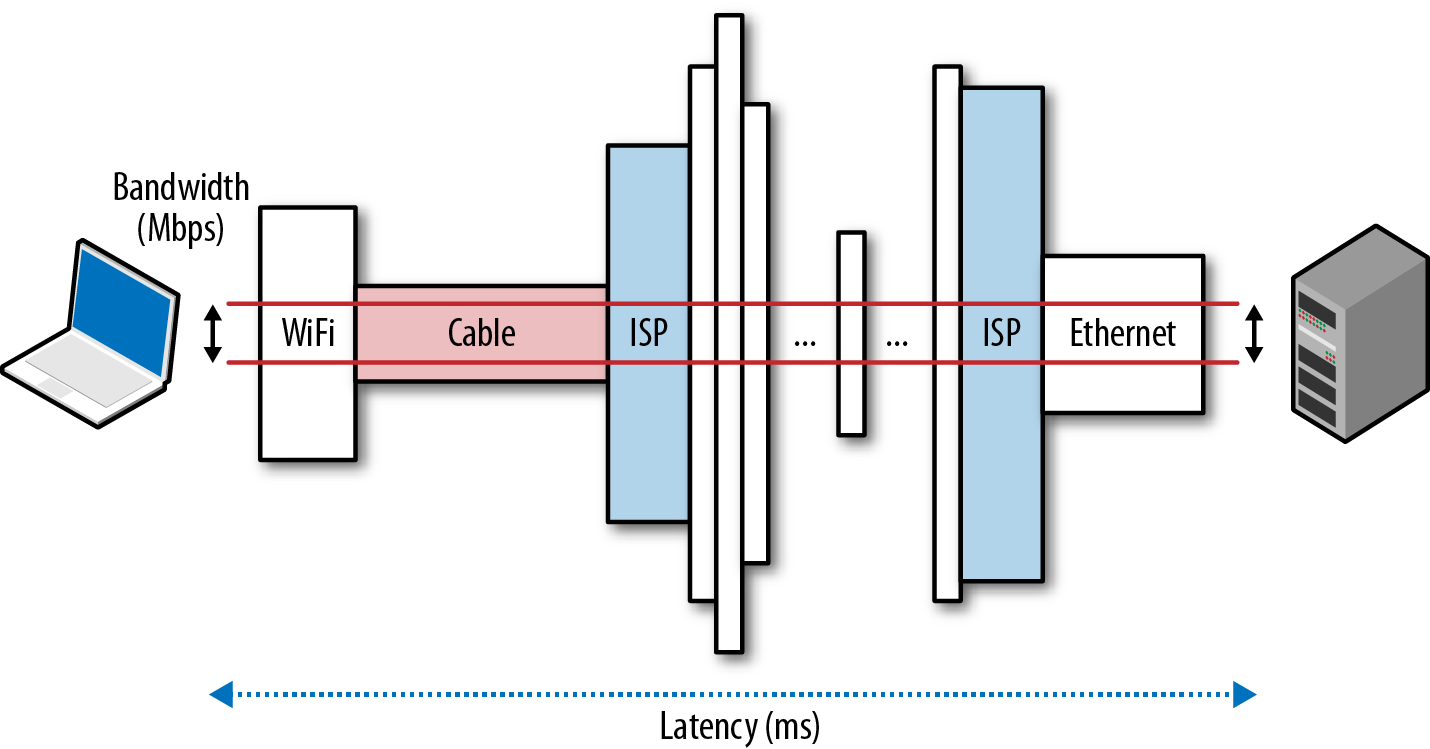

Simply put, speed is a feature. And to deliver it, we need to understand the many factors and fundamental limitations that are at play. In this chapter, we will focus on the two critical components that dictate the performance of all network traffic: latency and bandwidth (Figure 1-1).

- Latency: The time from the source sending a packet to the destination receiving it

- Bandwidth: Maximum throughput of a logical or physical communication path

Figure 1-1. Latency and bandwidth

Armed with a better understanding of how bandwidth and latency work together, we will then have the tools to dive deeper into the internals and performance characteristics of TCP, UDP, and all application protocols above them.
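To make the interplay concrete, here is a minimal sketch of an idealized delivery-time estimate built directly from the two definitions above. The function name `transfer_time_seconds` and its parameters are hypothetical, chosen for illustration only. The model is a deliberate simplification: it assumes a single one-way transfer and ignores protocol handshakes, congestion control, packet loss, and per-packet overhead.

```python
def transfer_time_seconds(size_bytes: float, latency_s: float, bandwidth_bps: float) -> float:
    """Idealized one-way delivery time for a payload.

    Assumption (not from the text): total time is the one-way latency
    plus the time needed to push every bit onto the link at the
    maximum throughput (bandwidth, in bits per second).
    """
    if bandwidth_bps <= 0:
        raise ValueError("bandwidth_bps must be positive")
    transmission_s = (size_bytes * 8) / bandwidth_bps  # bytes -> bits
    return latency_s + transmission_s


if __name__ == "__main__":
    # Illustrative numbers only: a 50 ms one-way latency on a 100 Mbps path.
    small = transfer_time_seconds(1_000, 0.05, 100e6)        # 1 KB payload
    large = transfer_time_seconds(100_000_000, 0.05, 100e6)  # 100 MB payload
    print(f"1 KB:   {small:.5f} s")
    print(f"100 MB: {large:.5f} s")
```

Under these assumptions, the small payload takes about 0.05 seconds, almost all of it latency, while the large payload takes about 8.05 seconds, almost all of it limited by bandwidth. Which of the two factors dominates depends on how much data is being moved.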
