Chapter 3. DataFrames, Datasets, and Spark SQL

Spark SQL and its DataFrames and Datasets interfaces are the future of Spark performance, with more efficient storage options, an advanced optimizer, and direct operations on serialized data.

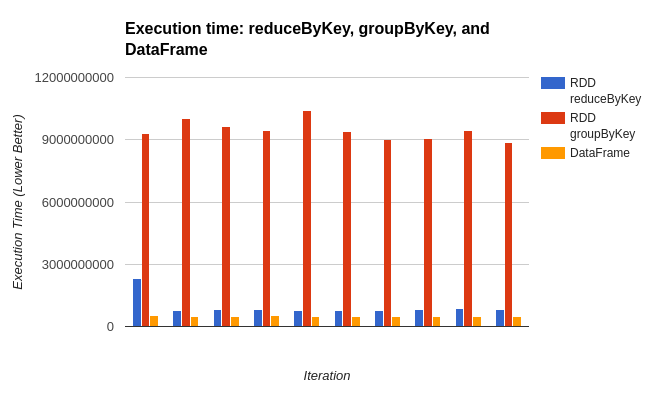

These components are essential for getting the best performance out of Spark (see Figure 3-1).

Figure 3-1. Relative performance for RDD versus DataFrames based on SimplePerfTest computing aggregate average fuzziness of pandas

These are relatively new components; Datasets were introduced in Spark 1.6, DataFrames in Spark 1.3, and the SQL engine in Spark 1.0.

This chapter is focused on helping you learn how to best use Spark SQL’s tools and how to intermix Spark SQL with traditional Spark operations.

Warning

Spark’s DataFrames have very different functionality compared to traditional DataFrames like those in pandas and R. While these all deal with structured data, it is important not to depend on your existing intuition surrounding DataFrames.

Like RDDs, DataFrames and Datasets represent distributed collections, with additional schema information not found in RDDs.

This additional schema information is used both to provide a more efficient storage layer (Tungsten) and to let the optimizer (Catalyst) perform additional optimizations.
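To get a feel for why a known schema helps storage, here is a toy sketch in plain Python. It is only an analogy and does not reproduce Tungsten's actual binary format. The row fields (`id`, `zip`, `fuzziness`), the `ROW_LAYOUT` constant, and the `read_fuzziness` helper are hypothetical names made up for illustration.

```python
import json
import struct

# Hypothetical rows for illustration: (id: int, zip: int, fuzziness: float).
rows = [(i, 94110 + i % 5, 0.5 * i) for i in range(1000)]

# Without a shared schema, every record has to describe itself,
# so the field names are repeated in each serialized row.
self_describing_bytes = sum(
    len(json.dumps({"id": r[0], "zip": r[1], "fuzziness": r[2]}).encode("utf-8"))
    for r in rows
)

# With a schema, the field types are known up front, so every row can share
# one fixed binary layout: two 8-byte integers followed by one 8-byte double.
ROW_LAYOUT = struct.Struct("<qqd")
packed = b"".join(ROW_LAYOUT.pack(*r) for r in rows)


def read_fuzziness(buf, row_index):
    """Read one field straight from the packed bytes, without rebuilding the row."""
    offset = row_index * ROW_LAYOUT.size + 16  # skip the two 8-byte integers
    return struct.unpack_from("<d", buf, offset)[0]


print(self_describing_bytes, len(packed))
```

The fixed layout is smaller because nothing about the structure is stored per row, and a single field can be read by offset from the serialized bytes. That is the same general idea behind operating directly on serialized data, although Spark's real implementation is considerably more involved.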

Beyond schema information, the operations performed on Datasets and DataFrames are such that the optimizer can inspect the ...
