Chapter 4. Find

“Be very, very quiet; we are hunting wabbits.”

Elmer J. Fudd

The first half of the F3EAD cycle (Find, Fix, and Finish) comprises the primary operations components, which for us means incident-response operations. In these first three phases, the adversaries are targeted, identified, and eradicated. We use intelligence to inform these operational actions, but that’s not the end of our use of intelligence. Later in the process, we will use the data from the operations phase in the second half of F3EAD, the intelligence phase: Exploit, Analyze, Disseminate.

This chapter focuses on the Find phase, which identifies the starting point for both intelligence and operational activities. In the traditional F3EAD cycle, the Find phase often identifies high-value targets for special operations teams to target. In intelligence-driven incident response, the Find phase identifies relevant adversaries for incident response.

In the case of an ongoing incident, you may have identified or been given some initial indicators and need to dig for more; or in the case of threat hunting, you may be searching for anomalous activity in your networks. Regardless of the situation, before you can find anything, you need to have an idea of what it is you are looking for.

Various approaches can be taken in the Find phase. The method should be determined by the nature of the situation or incident as well as the goal of the investigation. Different methods may be combined as well to ensure that you have identified all possible pieces of information.

Actor-Centric Targeting

When there is credible information on the actor behind an attack, or you are being asked to provide information on a particular attack group, it is possible to conduct actor-centric targeting.

Actor-centric investigations are like unravelling a sweater: you find a few little pieces of information and begin to pull on each one. These threads can give you insight into the tactics and techniques that the actor used against you, which then give you a better idea of what else to look for. The result is powerful, but it can be frustrating. You never know which thread will be the key to unravelling the whole thing. You just have to keep trying. Then suddenly you may dig into one aspect that opens up the entire investigation. Persistence, and luck, are key aspects of actor-centric investigations.

In some cases, incident responders will go into an investigation with an idea of who the actor behind the incident may be. This information can be gleaned from a variety of sources; for example, when stolen information is offered for sale on underground forums, or when a third party makes the initial notification and provides some information on the attacker. Identifying at least some details of an attacker makes it possible to carry out actor-centric targeting in the Find phase.

When conducting actor-centric targeting, the first step is to validate the information that has been provided on the attacker. It is important to understand if and why the attacker in question would target your organization. The development of a threat model, a process that identifies potential threats by taking an attacker’s view of a target, can speed this process and can help identify the types of data or access that may have been targeted. This information can also feed into the Find phase, where incident responders search for signs of attacker activity.

A threat model can allow you to use actor-centric targeting even if you do not have concrete information on the actor by determining potential or likely attackers. Of the hundreds of tracked criminal, activist, and espionage groups, only a small handful will be generally interested in your organization. Assessing which of these groups are truly threats to you is not a perfect science, but you have to make your best guess and keep in mind that the list you come up with will not be an authoritative list but will still be a good place to start. After some time, experience will be the best guide to who you should be concerned about.

Once you validate the initial information, the next step is to identify as much information as possible on the actor. This information will help to build the target package on the attacker, which will enable operations to fix and finish the attack. Information on the actor can include details of previous attacks, both internal and external.

Starting with Known Information

In almost every situation, some information will be available on threat actors, whether that comes from previous incidents or attack attempts within your own environment (internal information) or intelligence reports produced by researchers, vendors, or other third parties (external information). Ideally, a combination of both types will be available in order to provide the best overall picture of the threat.

Strategic and tactical intelligence are both useful at this stage. Strategic intelligence on actors can provide information on the actor’s potential motivation or goals, where they ultimately want to get to, and what they ultimately want to do when they get there. Tactical intelligence can provide details on how an actor typically operates, including their typical tactics and methods, preferred tools, previous infrastructure used, and other pieces of information that can be searched for during the Fix stage and that can contextualize the information that is found.

It is also useful, though difficult, to understand whether the actors tend to operate alone, or whether they work with other actor groups. Some espionage groups have been known to divide tasks between several groups, with one group focusing on initial access, another focusing on accomplishing the goals of the attack, another for maintaining access for future activities, and so forth. If this is the case, there may be signs of multiple actors and multiple activities in a network, but further analysis should be conducted to see whether the activities fit the pattern of multiple actor groups working together or whether it is possible that several actors are operating independently.

Useful Find Information

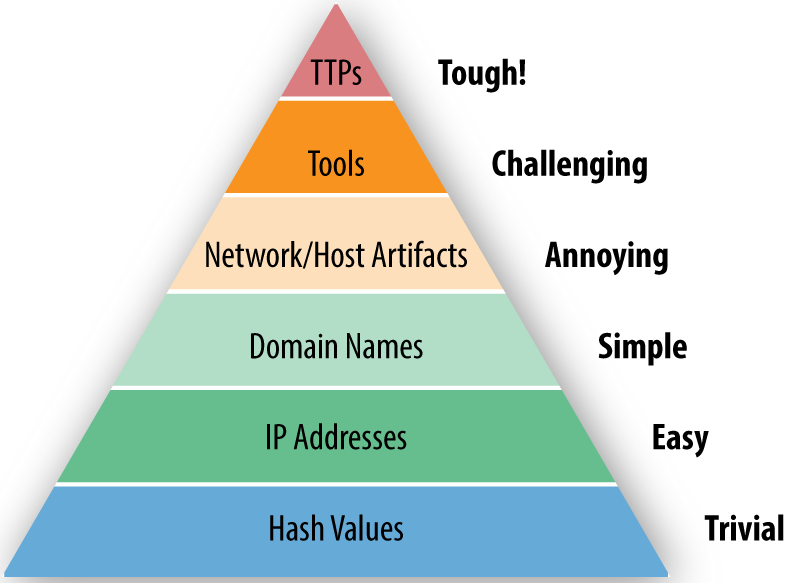

During the Find phase, our biggest goal is developing information that will be useful during the Fix portion of the F3EAD cycle. The most useful information is information that’s hard for the actor to change. Incident responder David J. Bianco captured this concept, and its impact on the adversary, in his Pyramid of Pain, shown in Figure 4-1.

Figure 4-1. David J. Bianco’s Pyramid of Pain

The Pyramid of Pain is a model depicting how central various types of information are to an actor’s tool chain and objectives, which corresponds to how hard they are to change. At the bottom, you have basic characteristics that attackers can change regularly by tweaking small details of malware or network configurations, such as recompiling malware (to generate a new hash) or pointing a domain name at a new IP address for command and control. At the top, you have core capabilities that are central to who an actor is, such as core techniques and methodologies.

So how do we use this model? The Pyramid of Pain is all about understanding the relative value and temporal nature of different types of indicators of compromise (more on those in the next section!) and actor information. Are hashes useless? Not at all; they’re incredibly useful in many contexts and provide a great starting place for an investigation, but they change often and easily (often just by recompiling a piece of malware). On the opposite end of the spectrum, an actor that specializes in compromising websites with SQL injection would have a relatively difficult time switching tactics to spear phishing with zero-day exploits. The result is that, when it comes to threat information, we prefer and get longer use out of information toward the top of the pyramid. Our goal in both incident response and intelligence analysis is to move higher up the pyramid, thus making it more difficult for the adversary to evade us.
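As a rough illustration of preferring information toward the top of the pyramid, the sketch below orders a few observations by how hard they are for an actor to change. The level names follow Bianco's published pyramid; the numeric ranks, the `rank_by_durability` helper, and the sample observations are assumptions made up for this example.

```python
from enum import IntEnum


class PyramidLevel(IntEnum):
    """Pyramid of Pain levels, from easiest (1) to hardest (6) for an actor to change."""
    HASH_VALUE = 1
    IP_ADDRESS = 2
    DOMAIN_NAME = 3
    NETWORK_OR_HOST_ARTIFACT = 4
    TOOL = 5
    TTP = 6


def rank_by_durability(observations):
    """Return (level, description) pairs with the hardest-to-change items first."""
    return sorted(observations, key=lambda item: item[0], reverse=True)


# Hypothetical observations, invented for illustration only.
observations = [
    (PyramidLevel.HASH_VALUE, "hash of a recompiled dropper"),
    (PyramidLevel.TTP, "SQL injection against public web applications"),
    (PyramidLevel.DOMAIN_NAME, "command-and-control domain"),
]

for level, description in rank_by_durability(observations):
    print(f"{level.name:<26} {description}")
```

Low-ranked items are still worth recording; the ordering only suggests which information is likely to stay useful the longest.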

Indicators of compromise

The simplest data (and thus lower on the Pyramid of Pain) to gather are commonly referred to as indicators of compromise (IOCs). The earliest definition of IOCs comes from Mandiant’s OpenIOC website (OpenIOC is the Mandiant proprietary definition for IOCs compatible with its MIR products). While IOCs can come in a variety of formats (we’ll discuss this more in the next chapter), they’re all defined the same way: “a description of technical characteristics that identify a known threat, an attacker’s methodology, or other evidence of compromise.”

IOCs typically focus on atomic pieces of information at the bottom of the Pyramid of Pain. These can be subdivided based on where the information is found:

- Filesystem indicators: File hashes, filenames, strings, paths, sizes, types, signing certificates

- Memory indicators

- Network indicators: IP addresses, host names, domain names, HTML paths, ports, SSL certificates

Each type of indicator has unique uses, is visible in different positions (whether monitoring a single system or a network), and depending on the format it’s in, may be useful with different tools.
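Because each indicator type is handled differently, it can help to sort raw values into categories as soon as they are collected. The following is a minimal sketch, not a complete parser: the `classify_indicator` function and its category labels are hypothetical, and it recognizes only common hash lengths, IP addresses, and simple domain names.

```python
import ipaddress
import re

# Hex digest lengths for common hash algorithms.
HASH_LENGTHS = {32: "md5", 40: "sha1", 64: "sha256"}
HEX_RE = re.compile(r"^[0-9a-fA-F]+$")
# Simplified domain pattern: dot-separated labels ending in an alphabetic TLD.
DOMAIN_RE = re.compile(
    r"^(?=.{1,253}$)([a-z0-9]([a-z0-9-]{0,61}[a-z0-9])?\.)+[a-z]{2,63}$",
    re.IGNORECASE,
)


def classify_indicator(value):
    """Return a (category, kind) tuple for a raw indicator string."""
    value = value.strip()
    if HEX_RE.match(value) and len(value) in HASH_LENGTHS:
        return ("filesystem", HASH_LENGTHS[len(value)])
    try:
        ipaddress.ip_address(value)  # accepts IPv4 and IPv6
        return ("network", "ip_address")
    except ValueError:
        pass
    if DOMAIN_RE.match(value):
        return ("network", "domain_name")
    return ("unknown", "unclassified")


# Example values use documentation-reserved addresses and domains.
for raw in ["d41d8cd98f00b204e9800998ecf8427e", "192.0.2.10", "update.example.com"]:
    print(raw, classify_indicator(raw))
```

Anything that falls through to `unknown` still deserves a manual look; filenames, paths, and memory strings need context that a simple pattern match cannot supply.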

Behavior

Far more complex for attackers to change are behaviors, captured in the top level of the Pyramid of Pain as TTPs. This is a loose group that goes beyond tools and instead focuses on how they’re used to achieve an attacker’s goals. Behavior is more abstract than TTPs and can’t be easily described the way IOCs can.

Behaviors can often be best understood in terms of the kill chain from Chapter 3, as in how does an attacker achieve each piece? Here are a few hypothetical examples:

- Reconnaissance: (Usually based on inference.) The attacker generally profiles potential victims based on conference proceedings documents found online.

- Weaponization: The attacker uses a Visual Basic for Applications (VBA) macro sent inside a Microsoft Word document.

- Delivery: The attacker sends a phishing email from a fake industry group based on information from the proceedings of the conference identified during reconnaissance.

- Exploitation: The attacker’s VBA macro executes when the victim opens the attached Word document and downloads the second-stage payload.

- Installation: The attacker uses a privilege escalation exploit to install a second-stage payload, a remote-access Trojan, so it starts up at login and achieves persistence.

- Command and control: The RAT uses connections to a micro blogging site to exchange encoded communication for command and control.

- Actions on Objective: The attacker attempts to steal technical schematics and email by compressing them and uploading them through a file-sharing service.

Make sure that you document any information that you find in a way that will help you remember it for use in future steps of the intelligence-driven incident-response process.

Using the kill chain

Actor-centric targeting is often a good place to start, partially because it has the most straightforward model when combined with the kill chain. Any information that you are given or find at the start of your investigation will most likely come from one, or, if you are lucky, two phases of the kill chain. A good strategy is to use the surrounding phases of the kill chain to determine what other information to look for, so identifying where your existing information sits within the kill chain can determine where else you should look. In the previous example, if the only information you knew about the attack is that the attackers used macros in a Word document during the exploitation phase, you could research that behavior and identify that you should look for artifacts related to privilege escalation to determine whether the exploitation was successful. Another option would be to move in the other direction on the kill chain and to look for the delivery method, searching for email senders or subjects related to the original information that you received. Even if the attackers did not keep things 100% identical across attacks, similarities can be identified, especially when you know what you are looking for.
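The idea of working outward from a known phase can be sketched in a few lines. The phase names below follow the kill chain as used in this chapter; the `surrounding_phases` helper is a hypothetical illustration rather than part of any tool.

```python
KILL_CHAIN = [
    "Reconnaissance",
    "Weaponization",
    "Delivery",
    "Exploitation",
    "Installation",
    "Command and control",
    "Actions on objective",
]


def surrounding_phases(known_phase):
    """Return the (earlier, later) phases adjacent to a known phase, or None at either end."""
    index = KILL_CHAIN.index(known_phase)
    earlier = KILL_CHAIN[index - 1] if index > 0 else None
    later = KILL_CHAIN[index + 1] if index < len(KILL_CHAIN) - 1 else None
    return earlier, later


# Starting from the Word macro observed during exploitation:
earlier, later = surrounding_phases("Exploitation")
print(f"Look backward at {earlier} and forward at {later}")
```

For the Word macro example, this points back to Delivery (email senders and subjects) and forward to Installation (privilege escalation artifacts), matching the two directions described above.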

Scenario: building a kill chain

Building a kill chain for a new attacker is a great place to start building your understanding, even if there aren’t a lot of things to fill in at the beginning. One of the great things about the kill chain, even one filled with question marks, is that it provides a structure for knowing what to look for next.

In our case, we’re starting with a private report being passed around a variety of organizations similar to ours. (In reality, this report was released in 2014 as the Operation SMN report.) Another security team thought the report could be useful. Using this report, we’re going to start building a kill chain for the actor we’re calling GLASS WIZARD, and will document what we know and what gaps we have.

GLASS WIZARD kill chain

- Goals
  - Actor targets a wide variety of victims, including economic, environmental, and energy policy organizations as well as high-tech manufacturers and service providers.
  - Actor targets a variety of domestic targets, indicating possible domestic security focus.

- Reconnaissance
  - Unknown.

- Weaponization
  - Use of stolen certificates to avoid OS-level code-signing protection.

- Delivery
  - Spear phishing.
  - Strategic website compromises.
  - Direct attacks against publicly facing services.

- Exploitation
  - Unknown.

- Installation: Host
  - Wide variety of malware families used: Poison Ivy, Gh0st Rat, PlugX, ZXShell, Hydraq, DeputyDog, Derusbi, Hikit, ZoxFamily (ZoxPNG, ZoxSMB).
  - Escalates privileges using local exploits, remote exploits (for example, the ZoxRPC tool), and compromised credentials from other systems.

- Installation: Network
  - Uses compromised credentials to move around the network using standard network administration tools such as RDP.
  - May install secondary malware on other hosts.

- Communication
  - Highly divided (minimally reused) infrastructure for each set of targets and campaigns.
  - Preference for DNSPOD and DtDNS DNS providers.
  - <target>.<holderdomain>.<tld> domain name pattern.
  - Use of compromised infrastructure to hide in legitimate traffic.

- Actions on target
  - Compromises large groups of machines and identifies useful material quickly, possibly indicating dynamic goals based on what’s available.
  - Custom scripting for on-target actions.
  - Exfiltration?

Note

Sometimes individual items could go in multiple categories. In our kill chain, the element direct attacks against publicly facing services is sort of Delivery, but could also be described as Exploitation. In some cases, this is important, but the really important thing is capturing the information. It can always be edited, so when creating a kill chain, don’t get too stuck arguing one phase versus another. Again, this is just a model, so it’s not perfect; it just needs to be useful.

Though not a part of the kill chain, another thing this report tells us is related actors, campaigns, and operations including the following:

- APT1

- DeputyDog

- Elderwood

- Ephemeral Hydra

- Operation Aurora

- Operation Snowman

- PLA Third Department Unit 61486 and Unit 61398

- Shell Crew

- VOHO Campaign

How relevant these related actors are to our investigation depends on a lot of things, but for now we just want to keep them around. In addition, a wide variety of links make up the sources for the reports, and we’ll want to keep those as well to reference and possibly analyze later.

Now we have an initial kill chain based on our first pieces of information about GLASS WIZARD. While it’s obvious we have huge gaps, our understanding of this actor is starting to come together. We know some of the techniques this actor might use to get into our organization, and we have considerable information about what they might do once they get in. Throughout the rest of the F3 operations phase, we’re going to use the information we have to try to track and respond to the adversary and fill in some of these blanks as we go.
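One lightweight way to keep those blanks visible is to hold the kill chain in a simple structure and ask it which phases are still empty. This is only a sketch: the entries are abbreviated from the GLASS WIZARD kill chain, an empty list stands in for “Unknown,” and the `find_gaps` and `open_questions` helpers are hypothetical names.

```python
glass_wizard = {
    "Goals": [
        "Wide variety of victims, including policy organizations and high-tech firms",
        "Domestic targets, indicating possible domestic security focus",
    ],
    "Reconnaissance": [],  # recorded as "Unknown." in the kill chain
    "Weaponization": ["Stolen certificates to avoid OS-level code-signing protection"],
    "Delivery": [
        "Spear phishing",
        "Strategic website compromises",
        "Direct attacks against publicly facing services",
    ],
    "Exploitation": [],  # recorded as "Unknown." in the kill chain
    "Installation: Host": ["Wide variety of malware families", "Privilege escalation"],
    "Installation: Network": ["Compromised credentials with tools such as RDP"],
    "Communication": ["Minimally reused infrastructure", "DNSPOD and DtDNS DNS providers"],
    "Actions on target": ["Custom scripting for on-target actions", "Exfiltration?"],
}


def find_gaps(kill_chain):
    """Return the phases that have no recorded information."""
    return [phase for phase, notes in kill_chain.items() if not notes]


def open_questions(kill_chain):
    """Return (phase, note) pairs for notes recorded as questions."""
    return [
        (phase, note)
        for phase, notes in kill_chain.items()
        for note in notes
        if note.endswith("?")
    ]


print("Gaps:", find_gaps(glass_wizard))
print("Open questions:", open_questions(glass_wizard))
```

The gaps and open questions become a ready-made list of what to look for as the investigation continues.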

Goals

The attacker’s goals are the most abstract of all of the information you will gather in the Find stage, because in many cases they have to be inferred from the attacker’s actions rather than being clearly spelled out. However, an attacker’s goal is one of the things that will rarely change, even if it is identified by a defender. An attacker focused on a particular goal cannot simply change goals to avoid detection, even if they may change TTPs, tools, or indicators. No matter what technologies the attacker chooses to use or how they choose to use them, they still have to go where their target is. As a result, goals are the least changeable aspect of an attacker’s behavior and should be a core attacker attribute to track.

Seeing an attacker change goals is a key data point and should always be watched closely. It may signal a shift in interest or could be the setup for a new type of attack. Regardless, it gives considerable insight into attribution and strategic interests.

The goals for GLASS WIZARD are abstract:

- GLASS WIZARD targets a wide variety of victims, including economic, environmental, and energy policy organizations as well as high-tech manufacturers and service providers.

- GLASS WIZARD targets a variety of domestic targets, indicating possible domestic security focus.

It may even be worth referring back to the original report to understand these better, and possibly update the kill chain.

Asset-Centric Targeting

Asset-centric targeting is all about what you’re protecting, and focuses on the specific technologies that enable operations. It can be incredibly useful for instances when you do not have specific information on an attack against your network and want to understand where and how you would look for indications of an attack or intrusion.

One of the most notable examples of this form of targeting is industrial control systems (ICS). Industrial control systems, which control things like dams, factories, and power grids, are specialized systems that require specific domain knowledge to use and thus attack. A threat intelligence team can rule out entire classes of attackers based on their ability to understand, have access to, and test attacks against ICS.

In a case involving specialized systems, we are talking about not only massively complex systems, but in many cases massively expensive ones as well. During an attacker’s pre-kill chain phase, they have to invest incredible amounts of time and effort into getting the right software to find vulnerabilities and then environments to test exploits.

Understanding who is capable of attacking the types of systems you’re protecting is key to asset-centric targeting, because it allows you to focus on the kinds of indicators and tools that are useful for attacking your technology. Every extra system an attacker invests in being able to attack is an opportunity cost, meaning they can’t spend the same time and resources working on another type of technology needing the same level of resources. For example, a team putting effort into attacking ICS would not have the resources to also put effort into attacking automotive technology.

Third-party research can help and hurt technology-centric attacks, either by aiding the attackers with basic research (thus saving them time and resources) or by helping defenders understand how the attackers might approach it and thus how to defend it. Most defenders have a limited need to dig into these topic-specific issues, but they provide a focused view of the attacker/defender paradigm.

Using Asset-Centric Targeting

Because asset-centric targeting focuses on the assets that an attacker would target, in most cases the organizations that will get the most out of this method are those based around a unique class of technology such as industrial control, power generation, self-driving cars, flying drones, or even Internet of Things devices. Obviously, each has its own specific considerations, but should be approached with a similar but customized kill-chain-style approach. Robert Lee, a noted industrial control systems expert, demonstrated building a custom asset-centric kill chain in his paper “The Industrial Control System Cyber Kill Chain.”

What about the GLASS WIZARD team? So far, we have no information on them that would help in asset-centric targeting. The GW team, like the vast majority of actors, is targeting the widest range of systems, which means Microsoft Windows-based systems on a series of interconnected networks probably managed by Active Directory. This gives an adversary the widest attack opportunities. Asset-centric targeting is all about narrow specifics. In many cases, the actors themselves are known for focusing because of the complications of targeting hard-to-find systems.

News-Centric Targeting

This is a little bit tongue in cheek, but one of the most common targeting methodologies that occurs in less-disciplined organizations is what often gets called CNN-centric targeting or news-centric targeting. This usually starts with an executive seeing something on public news or hearing an offhanded comment from someone else that trickles down to the threat intelligence team, who are now tasked with analyzing the implications of the threat.

Let’s set the record straight: these kinds of investigations are not an entirely bad thing. There is a reason even the largest traditional intelligence providers monitor news sources: journalism and intelligence are closely related. Current events can often have a dramatic impact on the intelligence needs of an organization. The key is often to distill what may seem an unfocused query into something more cogent and closely defined.

For example, suppose a stakeholder comes to you having seen the news clip “Chinese hackers infiltrated U.S. companies, attorney general says” and wants to know whether this is relevant to your organization. There are a few key points to think about to answer this question:

- First, take the time to read the article and watch the video and associated media. Who are the groups and people referenced? Don’t just focus on attackers, but focus on victims and third parties as well.

- This article is discussing a specific group of attackers. Do you know who those attackers are?

- What is the question being asked? Start big and then get small. It’s easy at first to look into the names mentioned in the article or video and say you’re not any of those companies, or even related, but go deeper. The true question is likely, “Are we at risk of intellectual property theft from state-sponsored actors?”

- If possible, identify any information that will help you determine whether you have been compromised or will help you put defenses into place that would identify similar attack attempts. This is the beauty of the Find phase: you can identify any pieces of information that may be useful moving forward, regardless of what prompted the request, by making it part of the formal process.

It is useful to view this type of targeting as an informal request for information, rather than as an offhanded (and sometimes annoying) request. The request for information is the process of taking investigation cycle direction from the outside. We will discuss this concept more later in the chapter.

Targeting Based on Third-Party Notification

One of the worst experiences a team can have is when a third party, whether a peer company, law enforcement, or worst of all, Brian Krebs’ blog, reports a breach at your organization. When a third party notifies you of a breach, in most cases the targeting is done for you. The notifier gives you an actor (or at least some pointers to an actor) and hopefully some indicators. From there, the incident-response phase begins: figuring out how to best use the information given (something we’ll talk more about in the next chapter).

The active targeting portion of a third-party notification focuses primarily on what else you can get from the notifier. Getting as much information as possible is about establishing that you (the communicator) and your organization have a few key traits:

- Actionability

- Confidentiality

- Operational security

Sharing intelligence in a third-party notification is largely a risk to the sharing party. Protecting sources and methods is tough work, and harder when it’s outside your control, such as giving the information to someone unknown. As a result, it is up to the receiver to demonstrate that information will be handled appropriately, both in protecting it (operational security and confidentiality) and in using it as well (actionability).

The result is that the first time a third party shares information, they may be reluctant to share very much, perhaps nothing more than an IP address of attacker infrastructure and a time frame. As the receiver is vetted and shown to be a trustworthy and effective user of shared intelligence, more context might be shared. These types of interactions are the base idea behind information-sharing groups, be they formal groups like ISACs or informal groups like mailing lists or shared chats. Mature and immature organizations both gain from being members of these types of sharing groups. Just be sure your organization is in a position to both share what you can and act on what’s shared with you. The more context that an organization can share around a particular piece of information, the more easily and effectively other organizations will be able to act on that piece of information.

Note

One thing that can be a struggle in many organizations is getting the authority to share information. Although most organizations are happy to get information from other security teams or researchers, many are reluctant to share information back, either to individuals or groups. This is a natural concern, but to be effective, teams must surmount it. This goes back to the childhood adage that if you don’t share, no one will share with you. In many cases, this means engaging your legal team and developing a set of rules around sharing information.

Prioritizing Targeting

At this point in the Find phase, it is likely that you have gathered and analyzed a lot of information. To move onto the next phase, Fix, you need to prioritize this information so that it can be acted on.

Immediate Needs

One of the simplest ways to prioritize targeting a request from stakeholders is based on immediate needs. Did an organization just release a threat report about a particular group, and now your CISO is asking questions? Is the company about to make a decision that may impact a country with aggressive threat groups and they have asked for an assessment of the situation? If there are immediate needs, those should be prioritized.

Judging the immediacy of a Find action is a tough thing. It’s easy to get caught up in new, shiny leads. Experience will lead to a slower, often more skeptical approach. It’s easy to chase a hunch or random piece of information, and it’s important to develop a sensitivity to how immediately a lead needs to be addressed. The key is often to slow down and not get caught up in the emergency nature of potentially malicious activity. Many an experienced incident responder has a story of getting too caught up in a target that looked important, only to realize later it was something minor.

Past Incidents

In the absence of an immediate need, it’s worth taking time to establish your collection priorities. It’s easy to get caught up in the newest threat or the latest vendor report, but in most cases the first place to start is with your own past incidents.

Many attackers are opportunistic, attacking once due to a one-time occurrence such as a vulnerable system or misconfiguration. This is particularly common with hacktivist or low-sophistication attackers. Other actors will attack continuously, often reusing the same tools against different targets. Tracking these groups is one of the most useful implementations of threat-intelligence processes. In many cases, analyzing these past incidents can lead to insights for detecting future attacks.

Another advantage of starting your targeting with past incidents is you’ll already have considerable amounts of data in the form of incident reports, firsthand observations, and raw data (such as malware and drives) to continue to pull information from. Details or missed pieces of past incidents may be re-explored in the Find phase.

Criticality

Some information that you may have identified in this phase will have a much more significant impact on operations than other pieces of information that you have gathered. For example, if, during the Find phase you uncover indications of lateral movement in a sensitive network, that information is of a much higher priority than information indicating that someone is conducting scans against an external web server. Both issues should be looked into, but one clearly has a higher potential impact than the other: the higher-priority issues should be addressed first. Criticality is something that will vary from organization to organization based on what is important to that particular organization.
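The three considerations in this section (immediate needs, past incidents, and criticality) can be combined into a simple sort. The sketch below is one possible ordering, not a prescribed one: the `Target` fields, the 1-to-5 criticality scale, and the tie-breaking order are all assumptions that each organization would tune for itself.

```python
from dataclasses import dataclass


@dataclass
class Target:
    description: str
    immediate_need: bool = False      # a stakeholder is waiting on an answer
    criticality: int = 1              # hypothetical scale: 1 (low) to 5 (high)
    from_past_incident: bool = False  # ties back to one of our own incidents


def prioritize(targets):
    """Order targets by immediate need, then criticality, then past-incident ties."""
    return sorted(
        targets,
        key=lambda t: (t.immediate_need, t.criticality, t.from_past_incident),
        reverse=True,
    )


# Hypothetical targets for illustration.
targets = [
    Target("Scans against an external web server", criticality=1),
    Target("Lateral movement in a sensitive network", criticality=5),
    Target("Tool reused from last year's intrusion", criticality=3, from_past_incident=True),
    Target("CISO question about a new vendor report", immediate_need=True, criticality=2),
]

for target in prioritize(targets):
    print(target.description)
```

A fixed sort like this is only a starting point; an analyst should still be free to override it when a lead looks more urgent than its score suggests.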

Organizing Targeting Activities

It is important to understand how to organize and vet the major outputs of our Find phase. Taking time, whether it’s 10 minutes or 10 hours, to really dig into what information is available and understand what you are potentially up against will put you in a good position to move forward. You have to organize all of the information you have just collected and analyzed into a manageable format.

Hard Leads

Hard leads include information you have identified that has a concrete link to the investigation. Intelligence that is in the hard lead category provides context to things that have been identified and that you know is relevant. These leads have been seen in some part of the network, and during the Find phase you will be searching for things such as related activity in other parts of the network. It is important to understand what pieces of intelligence are directly related to the incident and which pieces of intelligence are only potentially related. Similar to the data sources we discussed in Chapter 3, the different types of leads are all useful; they are just used in different ways.

Soft Leads

Much of the information that you have discovered in the Find phase will fall into the category of soft leads. Soft leads may be additional indicators or behaviors that you have identified that are related to some of the hard leads, but at this point you have not looked to see whether the indicators are present in your environment or what the implications of that are; that will be done in the Fix phase. Soft leads also include things such as information from the news on attacks that target organizations similar to yours, or things that have been shared by an information-sharing group that you know are legitimate threats but do not yet know whether they are impacting you. Soft leads can also include things such as behavioral heuristics, where you are looking for patterns of activity that stand out rather than a concrete piece of information. These types of searches, though often technically more difficult to carry out, can produce significant results and generate a great deal of intelligence.

Grouping Related Leads

In addition to identifying which leads are hard and which are soft, it is also a good idea to keep track of which leads are related to each other. The presence of hard leads, either from an active incident or a past incident, will often lead you to identify multiple soft leads that you will be looking for in the Fix phase. This is known as pivoting, where one piece of information leads you to the identification of multiple other pieces of information that may or may not be relevant to you. In many cases, your initial lead may have limited benefit, but a pivot could be extremely important. Keeping track of which soft leads are related to hard leads, or which soft leads are related to each other, will help you interpret and analyze the results of your investigation. In this Find phase, you are taking the time and effort to identify information related to the threats against your environment. You don’t want to have to spend time reanalyzing the information because you do not remember where you got it from or why you cared about it in the first place.
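Relationships between leads form a small graph, and pivoting amounts to walking it. The sketch below is a minimal in-memory version; the `LeadGraph` class, its method names, and the sample leads are hypothetical, and a real team would more likely keep this in a spreadsheet or threat-intelligence platform.

```python
from collections import defaultdict, deque


class LeadGraph:
    """Track hard and soft leads and the relationships between them."""

    def __init__(self):
        self.kind = {}                 # lead name -> "hard" or "soft"
        self.links = defaultdict(set)  # lead name -> related lead names

    def add_lead(self, name, kind):
        if kind not in ("hard", "soft"):
            raise ValueError("kind must be 'hard' or 'soft'")
        self.kind[name] = kind

    def relate(self, first, second):
        """Record an undirected relationship between two leads."""
        self.links[first].add(second)
        self.links[second].add(first)

    def pivots_from(self, start):
        """Breadth-first walk returning every soft lead reachable from start."""
        seen = {start}
        queue = deque([start])
        found = []
        while queue:
            current = queue.popleft()
            for neighbor in sorted(self.links[current]):
                if neighbor not in seen:
                    seen.add(neighbor)
                    queue.append(neighbor)
                    if self.kind.get(neighbor) == "soft":
                        found.append(neighbor)
        return found


# Hypothetical leads using documentation-reserved values.
graph = LeadGraph()
graph.add_lead("203.0.113.7 seen in proxy logs", "hard")
graph.add_lead("cdn.example.net resolved to 203.0.113.7", "soft")
graph.add_lead("vendor report ties cdn.example.net to a RAT family", "soft")
graph.relate("203.0.113.7 seen in proxy logs", "cdn.example.net resolved to 203.0.113.7")
graph.relate(
    "cdn.example.net resolved to 203.0.113.7",
    "vendor report ties cdn.example.net to a RAT family",
)

print(graph.pivots_from("203.0.113.7 seen in proxy logs"))
```

Recording the links as you go means that when a soft lead turns up in the environment during the Fix phase, you can trace it back to the hard lead that prompted it.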

All of these leads should also be stored and documented in a way that will allow you to easily move into the subsequent phases and add information. There are a variety of ways that this information can be documented. Many teams still use good old Excel spreadsheets. Others have transitioned to tools such as threat-intelligence platforms (there are open source and commercial versions of these), which allow you to store indicators, add notes and tags, and in some cases link indicators together. The most important thing about documenting this stage of the incident-response process is that you find something that is compatible with your workflow and that gives the team visibility into what has been identified and what still needs to be vetted or investigated. We have seen many teams spend far more time than they need to in the Find phase because of duplication of effort or a lack of good coordination. Don't fall into this trap! Once you have identified information about the threat you are dealing with and documented it properly, you are ready to move into the next phase.

Lead Storage

Although we won't start talking about tracking incident-response activity and incident management until Chapter 7, it's important to take a second to discuss lead tracking. Every incident responder has stumbled across a piece of information in a lead that they've seen before only to fail to contextualize it. Taking the time to note your leads, even just solo in a notebook, is essential for success. Here's a solid format for saving your leads:

- Lead: The core observation or idea.
- Datetime: When it was submitted (important for context or SLAs).
- Context: How was this lead found (often useful for investigation).
- Analyst: Who found it.

This approach is simple and easy, but effective. Having these leads available will give you a starting point for reactive and proactive security efforts and also contextualize ongoing incidents in many cases.
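
As a rough illustration, the four-field format above could be captured in a small Python record. This is a sketch under stated assumptions: the `Lead` class, the `submitted` field name, the UTC default, and the `find_leads` helper are all hypothetical, chosen only to show the shape of the data.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class Lead:
    """One saved lead, using the four fields described above (names are assumed)."""
    lead: str      # the core observation or idea
    context: str   # how the lead was found
    analyst: str   # who found it
    # When it was submitted; defaults to the current time in UTC (an assumption).
    submitted: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def to_record(self):
        """Return a plain dict suitable for a spreadsheet row or a JSON line."""
        record = asdict(self)
        record["submitted"] = self.submitted.isoformat()
        return record


def find_leads(leads, term):
    """Return leads whose observation or context mentions `term` (case-insensitive)."""
    needle = term.lower()
    return [
        item for item in leads
        if needle in item.lead.lower() or needle in item.context.lower()
    ]
```

A search helper like `find_leads` is what lets you recognize an indicator you have seen before and recover the context it arrived with.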

The Request for Information Process

Similar to leads, requests for information (sometimes called requests for intelligence) are a way of bringing direction from external stakeholders into a team's incident-response or intelligence cycle. The process is meant to make requests uniform so that they can be prioritized and easily directed to the right analyst.

Requests for information (we'll call them RFIs for short) may be simple (only a sentence and a link to a document), or complex (involving hypothetical scenarios and multiple caveats). All good RFIs should include the following information:

- The request: This should be a summary of the question being asked.
- The requestor: So you know who to send the information back to.
- An output: This can take many forms. Is the expected output IOCs? A briefing document? A presentation?
- References: If the question involves or was inspired by a document, this should be shared.
- A priority or due date: This is necessary for determining when something gets accomplished.

Beyond that, the RFI process needs to be relevant and workable inside your organization. Integration is key. Stakeholders need to have an easy time submitting requests and receiving information back, whether that is through a portal or by email. If you or your team are frequently overrun by a high volume of informal RFIs, putting a formal system into place is one of the best ways to manage the workload. We'll discuss RFIs, specifically as intelligence products, more in Chapter 9.
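
To make the idea of a uniform, prioritizable request concrete, here is a minimal Python sketch of an RFI record and a simple work queue. Everything in it is an assumption made for illustration: the `RFI` class, the numeric priority scale (1 is most urgent), the default priority of 3, and the `work_order` function are hypothetical and do not come from any particular ticketing system.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class RFI:
    """A request for information with the fields a good RFI should carry."""
    request: str                  # summary of the question being asked
    requestor: str                # who to send the information back to
    output: str                   # expected form, e.g. "IOCs" or "briefing document"
    priority: int = 3             # assumed scale: 1 = most urgent
    due: Optional[date] = None    # optional due date
    references: List[str] = field(default_factory=list)  # related documents

    def missing_fields(self):
        """Return the names of required text fields that were left blank."""
        required = ("request", "requestor", "output")
        return [name for name in required if not getattr(self, name).strip()]


def work_order(rfis):
    """Sort RFIs by priority, then by due date (undated requests go last)."""
    return sorted(rfis, key=lambda r: (r.priority, r.due or date.max))
```

Rejecting or returning a submission when `missing_fields` is non-empty is one simple way to keep requests uniform before they reach an analyst.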

Conclusion

The Find phase is a critical step in the F3EAD process that allows you to clearly identify what it is that you are looking for. Find often equates to targeting, and is closely related to the Requirements and Direction phase of the intelligence cycle. If you do not know what your task is or what threat you are addressing, it is hard to address it properly. Find sets the stage for the other operations-focused phases in the cycle.

You will not spend the same amount of time in the Find phase for each project. At times the Find phase is done for you; other times it involves only a small amount of digging; and at still other times the Find phase is a lengthy undertaking that involves multiple people within a team focusing on different aspects of the same threat. When faced with the latter, make sure to stay organized and to document and prioritize leads so that you can move into the Fix phase with a comprehensive targeting package that includes exactly what you will be looking for.

Now that we have some idea about who and what we're looking for, it's time to dig into the technical investigation phase of incident response. We call this the Fix.
