Components of Hadoop

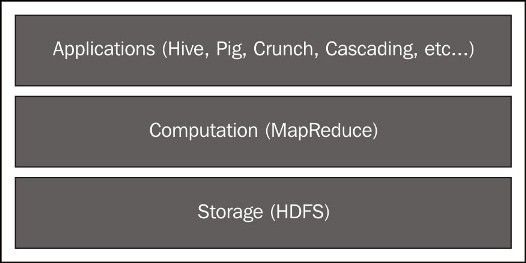

The broad Hadoop umbrella project has many component subprojects, and we'll discuss several of them in this book. At its core, Hadoop provides two services: storage and computation. A typical Hadoop workflow consists of loading data into the Hadoop Distributed File System (HDFS) and then processing it, either directly through the MapReduce API or through one of several tools that rely on MapReduce as an execution framework.
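To make the computation half of that workflow concrete, here is a minimal sketch of the MapReduce programming model as a word count. It runs locally in a single Python process and does not use the Hadoop API; the function names (`map_phase`, `shuffle`, `reduce_phase`, `run_job`) and the sample input are assumptions made for illustration, with a small in-memory list standing in for lines of a file stored in HDFS.

```python
from collections import defaultdict


def map_phase(line):
    """Emit a (word, 1) pair for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs):
    """Group all values by key, the step a framework performs between map and reduce."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Collapse all values for one key into a single total."""
    return key, sum(values)


def run_job(lines):
    """Run map, shuffle, and reduce in sequence over an iterable of lines."""
    mapped = (pair for line in lines for pair in map_phase(line))
    grouped = shuffle(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


# Hypothetical input: in a real cluster these lines would be read from HDFS.
sample_lines = ["hadoop stores data", "hadoop processes data"]
word_counts = run_job(sample_lines)
print(word_counts)
```

In a real deployment the map and reduce functions would run in parallel across many machines, with the framework handling the grouping step and the movement of data; the sketch only illustrates the shape of the computation.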

Hadoop 1: HDFS and MapReduce

Both layers, HDFS for storage and MapReduce for computation, are direct implementations of Google's GFS and MapReduce technologies, respectively.

Common building blocks

Both HDFS and MapReduce exhibit several of the architectural principles described in the previous section. In particular, ...
