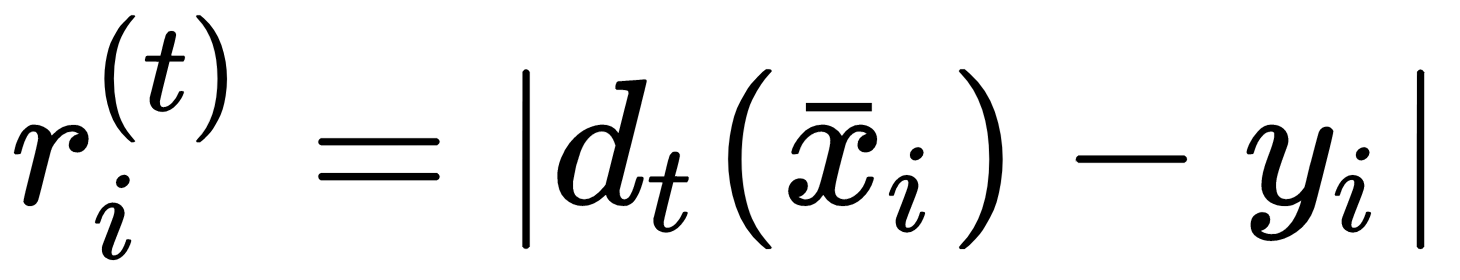

A slightly more complex variant was proposed by Drucker (in Improving Regressors using Boosting Techniques, Drucker H., ICML 1997) to handle regression problems. The weak learners are commonly decision trees, and the main concepts are very similar to those of the other variants (in particular, the re-weighting process applied to the training dataset). The real difference lies in the strategy adopted to choose the final prediction yi given the input sample xi. Assuming that there are Nc estimators, each represented as a function dt(x), we can compute the absolute residual ri(t) for every input sample:
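As a minimal sketch of this step, assuming the absolute residual takes the usual form ri(t) = |dt(xi) - yi|, with yi read here as the true target of sample xi, the computation could look as follows in Python. The `Estimator` type, the `absolute_residuals` helper, and the toy estimators are hypothetical stand-ins for fitted weak learners, not part of any library.

```python
from typing import Callable, List, Sequence

# Hypothetical stand-in for a fitted weak learner d_t(x)
# (for example, a decision tree's predict function on a single sample).
Estimator = Callable[[float], float]


def absolute_residuals(
    estimators: Sequence[Estimator],
    X: Sequence[float],
    y: Sequence[float],
) -> List[List[float]]:
    """Return R where R[t][i] = |d_t(x_i) - y_i| (assumed residual form)."""
    return [
        [abs(d(x_i) - y_i) for x_i, y_i in zip(X, y)]
        for d in estimators
    ]


# Toy data: two hand-written "estimators" and three samples.
estimators = [lambda x: 2.0 * x, lambda x: x + 1.0]
X = [0.0, 1.0, 2.0]
y = [0.0, 2.0, 5.0]

R = absolute_residuals(estimators, X, y)
for t, row in enumerate(R):
    print(f"estimator {t}: residuals = {row}")
```

Each row of `R` holds the residuals of one estimator over all samples, so the residuals of a single sample across estimators can be read column by column.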

Once the set Ri ...