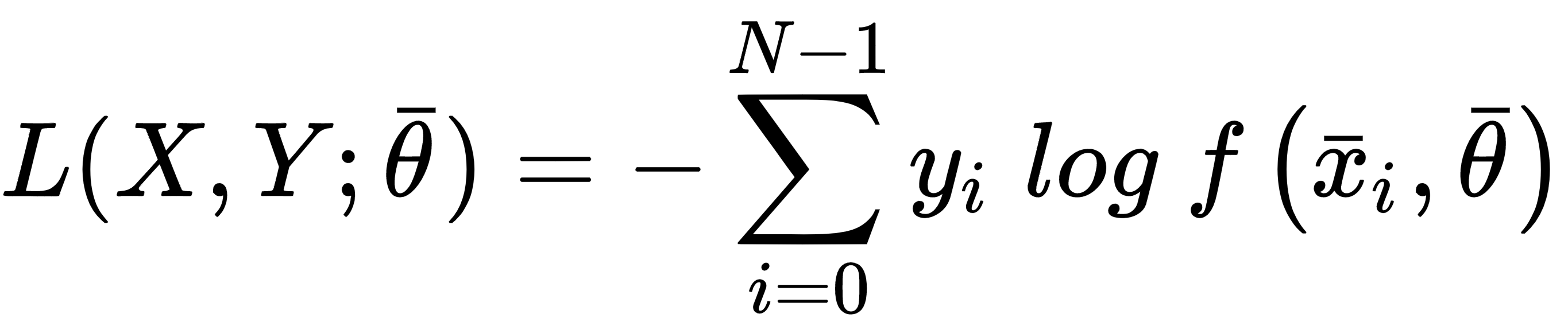

Categorical cross-entropy is the most widely used classification cost function, adopted by logistic regression and the majority of neural architectures. The generic analytical expression is:
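As a minimal sketch, assuming one-hot encoded labels and predicted class probabilities, the commonly used form $L = -\frac{1}{N}\sum_{i}\sum_{k} y_{ik} \log p_{ik}$ can be computed with NumPy. The function name, the argument names, and the clipping constant `eps` are illustrative assumptions, not part of the original text:

```python
import numpy as np


def categorical_cross_entropy(y_true, y_pred, eps=1e-12):
    """Mean categorical cross-entropy over a batch (natural logarithm).

    y_true: array of shape (N, K) with one-hot labels (assumed encoding).
    y_pred: array of shape (N, K) with predicted probabilities per class.
    eps:    hypothetical lower bound used to avoid log(0).
    """
    y_true = np.asarray(y_true, dtype=float)
    # Clip the predictions so that the logarithm is always finite
    y_pred = np.clip(np.asarray(y_pred, dtype=float), eps, 1.0)
    # Sum over the classes, then average over the samples
    per_sample = -np.sum(y_true * np.log(y_pred), axis=1)
    return float(np.mean(per_sample))


if __name__ == "__main__":
    Y = [[1, 0, 0], [0, 1, 0]]
    P = [[0.7, 0.2, 0.1], [0.1, 0.8, 0.1]]
    print(categorical_cross_entropy(Y, P))
```

Only the probability assigned to the true class contributes to each sample's loss, so a confident wrong prediction is penalized much more heavily than an uncertain one.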

This cost function is convex for linear models such as logistic regression (for deep neural networks it is generally non-convex in the parameters) and can be optimized efficiently using stochastic gradient descent techniques; moreover, it has another important interpretation. If we are training a classifier, our goal is to create a model whose distribution is as similar as possible to $p_{data}$. This condition can be achieved by minimizing the Kullback-Leibler divergence between the two distributions:
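For discrete distributions, the standard definition of the divergence can be written as follows, where the sum over the outcomes $x$ and the symbols $H(\cdot)$ for entropy and $H(\cdot, \cdot)$ for cross-entropy are assumed notation:

$$
D_{KL}\left(p_{data} \,\|\, p_M\right) = \sum_{x} p_{data}(x) \log \frac{p_{data}(x)}{p_M(x)} = H\left(p_{data}, p_M\right) - H\left(p_{data}\right)
$$

Since $H(p_{data})$ does not depend on the model, minimizing the divergence with respect to the model parameters is equivalent to minimizing the cross-entropy $H(p_{data}, p_M) = -\sum_{x} p_{data}(x) \log p_M(x)$.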

In the previous expression, $p_M$ is the distribution generated ...