Chapter 1. Getting Started with PyTorch

In this chapter, we set up all we need for working with PyTorch. Once we’ve done that, every subsequent chapter will build on this initial foundation, so it’s important that we get it right. This leads to our first fundamental question: should you build a custom deep learning computer or just use one of the many cloud-based resources available?

Building a Custom Deep Learning Machine

There is an urge, when diving into deep learning, to build yourself a monster for all your compute needs. You can spend days looking over different types of graphics cards, learning which memory lanes each possible CPU selection will offer you, the best sort of memory to buy, and just how big an SSD you can purchase to make your disk access as fast as possible. I am not claiming any immunity from this; I spent a month a couple of years ago making a list of parts and building a new computer on my dining room table.

My advice, especially if you’re new to deep learning, is this: don’t do it. You can easily spend several thousand dollars on a machine that you may not use all that much. Instead, I recommend that you work through this book by using cloud resources (on Amazon Web Services, Google Cloud, or Microsoft Azure). Only then should you start thinking about building your own machine, and only if you feel that you need a single machine for 24/7 operation. You do not need to make a massive investment in hardware to run any of the code in this book.

You might not ever need to build a custom machine for yourself. There’s something of a sweet spot, where it can be cheaper to build a custom rig if you know your calculations are always going to be restricted to a single machine (with at most a handful of GPUs). However, if your compute starts to require spanning multiple machines and GPUs, the cloud becomes appealing again. Given the cost of putting a custom machine together, I’d think long and hard before diving in.

If I haven’t managed to put you off from building your own, the following sections provide suggestions for what you would need to do so.

GPU

The heart of every deep learning box, the GPU, is what is going to power the majority of PyTorch’s calculations, and it’s likely going to be the most expensive component in your machine. In recent years, GPU prices have increased and supplies have dwindled because GPUs are used to mine cryptocurrencies such as Ethereum. Thankfully, that bubble seems to be receding, and supplies of GPUs are back to being a little more plentiful.

At the time of this writing, I recommend obtaining the NVIDIA GeForce RTX 2080 Ti. For a cheaper option, feel free to go for the 1080 Ti (though if you are weighing the decision to get the 1080 Ti for budgetary reasons, I again suggest that you look at cloud options instead). Although AMD-manufactured GPU cards do exist, their support in PyTorch is currently not good enough to recommend anything other than an NVIDIA card. But keep a lookout for their ROCm technology, which should eventually make them a credible alternative in the GPU space.

CPU/Motherboard

You’ll probably want to spring for a Z370 series motherboard. Many people will tell you that the CPU doesn’t matter for deep learning and that you can get by with a lower-speed CPU as long as you have a powerful GPU. In my experience, you’ll be surprised at how often the CPU can become a bottleneck, especially when working with augmented data.

Storage

Storage for a custom rig should be installed in two classes. First, install an M.2-interface solid-state drive (SSD), as big as you can afford, for your hot data, to keep access as fast as possible when you’re actively working on a project. For the second class of storage, add a 4TB Serial ATA (SATA) drive for data that you’re not actively working on, and move data between hot and cold storage as required.

I recommend that you take a look at PCPartPicker to glance at other people’s deep learning machines (you can see all the weird and wild case ideas, too!). You’ll get a feel for lists of machine parts and associated prices, which can fluctuate wildly, especially for GPU cards.

Now that you’ve looked at your local, physical machine options, it’s time to head to the clouds.

Deep Learning in the Cloud

OK, so why is the cloud option better, you might ask? Especially if you’ve looked at the Amazon Web Services (AWS) pricing scheme and worked out that building a deep learning machine will pay for itself within six months? Think about it: if you’re just starting out, you are not going to be using that machine 24/7 for those six months. You’re just not. Which means that you can shut off the cloud machine and pay pennies for the data being stored in the meantime.

And if you’re starting out, you don’t need to go all out and use one of NVIDIA’s leviathan Tesla V100 cards attached to your cloud instance straightaway. You can start out with one of the much cheaper (sometimes even free) K80-based instances and move up to the more powerful card when you’re ready. That is a trifle less expensive than buying a basic GPU card and upgrading to a 2080 Ti on your custom box. Plus, if you want to add eight V100 cards to a single instance, you can do it with just a few clicks. Try doing that with your own hardware.

The other issue is maintenance. If you get yourself into the good habit of re-creating your cloud instances on a regular basis (ideally starting anew every time you come back to work on your experiments), you’ll almost always have a machine that is up to date. If you have your own machine, updating is up to you. This is where I confess that I do have my own custom deep learning machine, and I ignored the Ubuntu installation on it for so long that it fell out of supported updates, resulting in an eventual day spent trying to get the system back to a place where it was receiving updates again. Embarrassing.

Anyway, you’ve made the decision to go to the cloud. Hurrah! Next: which provider?

Google Colaboratory

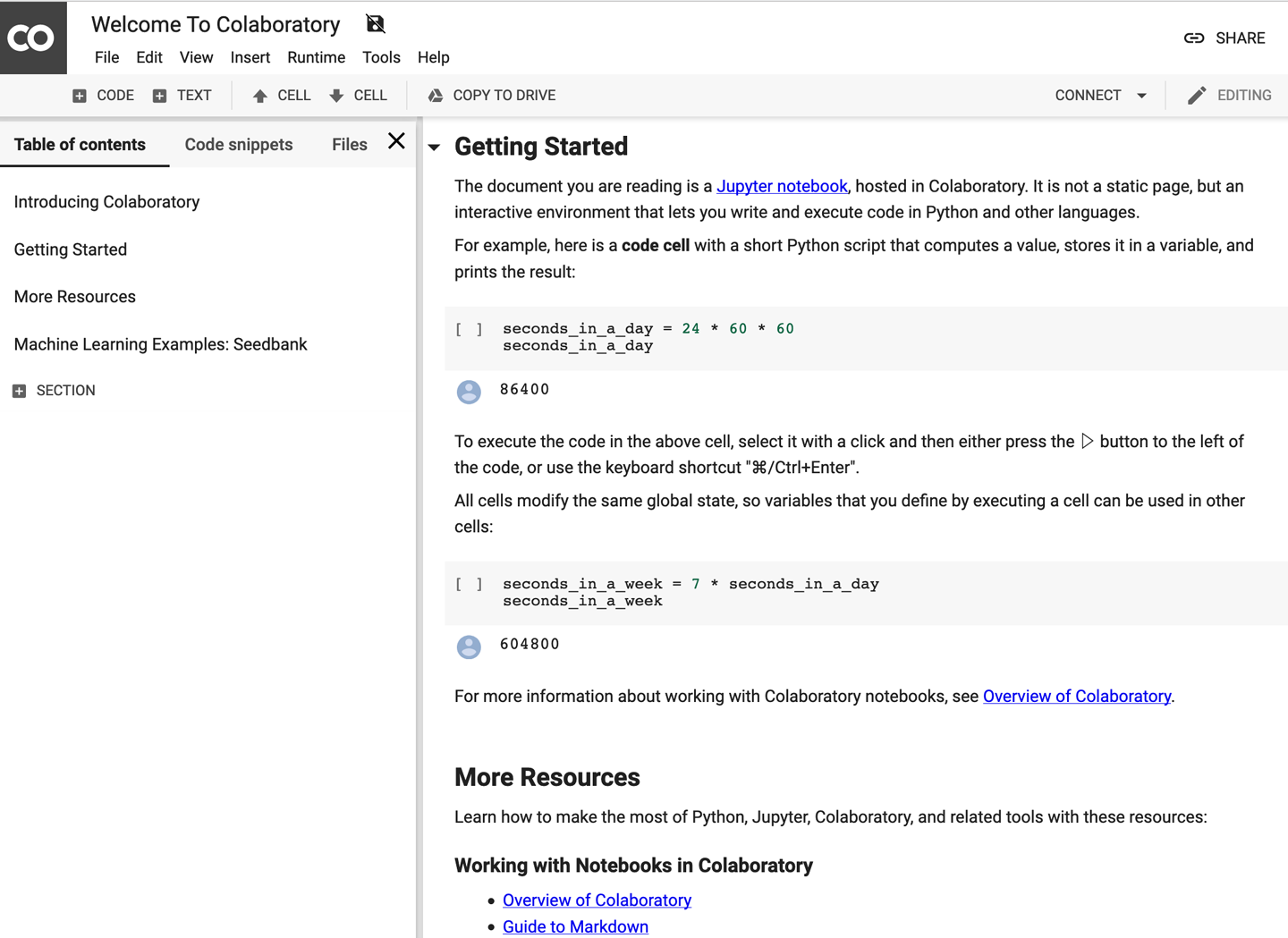

But wait—before we look at providers, what if you don’t want to do any work at all? None of that pesky building a machine or having to go through all the trouble of setting up instances in the cloud? Where’s the really lazy option? Google has the right thing for you. Colaboratory (or Colab) is a mostly free, zero-installation-required custom Jupyter Notebook environment. You’ll need a Google account to set up your own notebooks. Figure 1-1 shows a screenshot of a notebook created in Colab.

What makes Colab a great way to dive into deep learning is that it includes preinstalled versions of TensorFlow and PyTorch, so you don’t have to do any setup beyond typing import torch, and every user can get free access to an NVIDIA T4 GPU for up to 12 hours of continuous runtime. For free. To put that in context, empirical research suggests that you get about half the speed of a 1080 Ti for training, but with an extra 5GB of memory so you can store larger models. It also offers the ability to connect to more recent GPUs and Google’s custom TPU hardware in a paid option, but you can pretty much do every example in this book for nothing with Colab. For that reason, I recommend using Colab alongside this book to begin with, and then you can decide to branch out to dedicated cloud instances and/or your own personal deep learning server if needed.

Figure 1-1. Google Colab(oratory)

Colab is the zero-effort approach, but you may want to have a little more control over how things are installed or get Secure Shell (SSH) access to your instance on the cloud, so let’s have a look at what the main cloud providers offer.

Cloud Providers

Each of the big three cloud providers (Amazon Web Services, Google Cloud Platform, and Microsoft’s Azure) offers GPU-based instances (also referred to as virtual machines or VMs) and official images to deploy on those instances. They have all you need to get up and running without having to install drivers and Python libraries yourself. Let’s have a run-through of what each provider offers.

Amazon Web Services

AWS, the 800-pound gorilla of the cloud market, is more than happy to fulfill your GPU needs and offers the P2 and P3 instance types to help you out. (The G3 instance type tends to be used more in actual graphics-based applications like video encoding, so we won’t cover it here.) The P2 instances use the older NVIDIA K80 cards (a maximum of 16 can be connected to one instance), and the P3 instances use the blazing-fast NVIDIA V100 cards (and you can strap eight of those onto one instance if you dare).

If you’re going to use AWS, my recommendation for this book is to go with the p2.xlarge class. This will cost you just 90 cents an hour at the time of this writing and provides plenty of power for working through the examples. You may want to bump up to the P3 classes when you start working on some meaty Kaggle competitions.

Creating a running deep learning box on AWS is incredibly easy:

- Sign in to the AWS console.
- Select EC2 and click Launch Instance.
- Search for the Deep Learning AMI (Ubuntu) option and select it.
- Choose p2.xlarge as your instance type.
- Launch the instance, either by creating a new key pair or reusing an existing key pair.
- Connect to the instance by using SSH and redirecting port 8888 on your local machine to the instance:

  ssh -L localhost:8888:localhost:8888 -i <your .pem filename> ubuntu@<your instance DNS>

- Start Jupyter Notebook by entering jupyter notebook. Copy the URL that gets generated and paste it into your browser to access Jupyter.

Remember to shut down your instance when you’re not using it! You can do this by right-clicking the instance in the web interface and selecting the Stop option. This stops the instance, and you won’t be charged for compute time while it’s not running. However, you will still be charged for the storage space that you have allocated for it, so be aware of that. To delete the instance and storage entirely, select the Terminate option instead.

Azure

Like AWS, Azure offers a mixture of cheaper K80-based instances and more expensive Tesla V100 instances. Azure also offers instances based on the older P100 hardware as a halfway point between the other two. Again, for this book I recommend the instance type that uses a single K80 (NC6), which also costs 90 cents per hour; you can move on to other NC, NCv2 (P100), or NCv3 (V100) types as you need them.

Here’s how you set up the VM in Azure:

- Log in to the Azure portal and find the Data Science Virtual Machine image in the Azure Marketplace.
- Click the Get It Now button.
- Fill in the details of the VM: give it a name, choose an SSD disk rather than an HDD, set an SSH username/password, pick the subscription you’ll be billing the instance to, and set the location to the one nearest you that offers the NC instance type.
- Click the Create option. The instance should be provisioned in about five minutes.
- Connect via SSH to the instance’s public Domain Name System (DNS) name, using the username/password that you specified.
- Jupyter Notebook should run when the instance is provisioned; navigate to http://<dns name of instance>:8000 and log in with the username/password combination that you used for SSH.

Google Cloud Platform

In addition to offering K80, P100, and V100-backed instances like Amazon and Azure, Google Cloud Platform (GCP) offers the aforementioned TPUs for those who have tremendous data and compute requirements. You don’t need TPUs for this book, and they are pricey, but they will work with PyTorch 1.0, so don’t think that you have to use TensorFlow in order to take advantage of them if you have a project that requires their use.

Getting started with Google Cloud is also pretty easy:

- Click Launch on Compute Engine.
- Give the instance a name and assign it to the region closest to you.
- Set the machine type to 8 vCPUs.
- Set GPU to 1 K80.
- Ensure that PyTorch 1.0 is selected in the Framework section.
- Select the “Install NVIDIA GPU driver automatically on first startup?” checkbox.
- Set Boot disk to SSD Persistent Disk.
- Click the Deploy option. The VM will take about five minutes to fully deploy.
- To connect to Jupyter on the instance, make sure you’re logged in to the correct project in gcloud and issue this command, then open http://localhost:8080 in your browser:

  gcloud compute ssh <INSTANCE_NAME> -- -L 8080:localhost:8080

The charges for Google Cloud should work out to about 70 cents an hour, making it the cheapest of the three major cloud providers.

Which Cloud Provider Should I Use?

If you have nothing pulling you in any direction, I recommend Google Cloud Platform (GCP); it’s the cheapest option, and you can scale all the way up to using TPUs if required, with a lot more flexibility than either the AWS or Azure offerings. But if you have resources on one of the other two platforms already, you’ll be absolutely fine running in those environments.

Once you have your cloud instance running, you’ll be able to log in to its copy of Jupyter Notebook, so let’s take a look at that next.

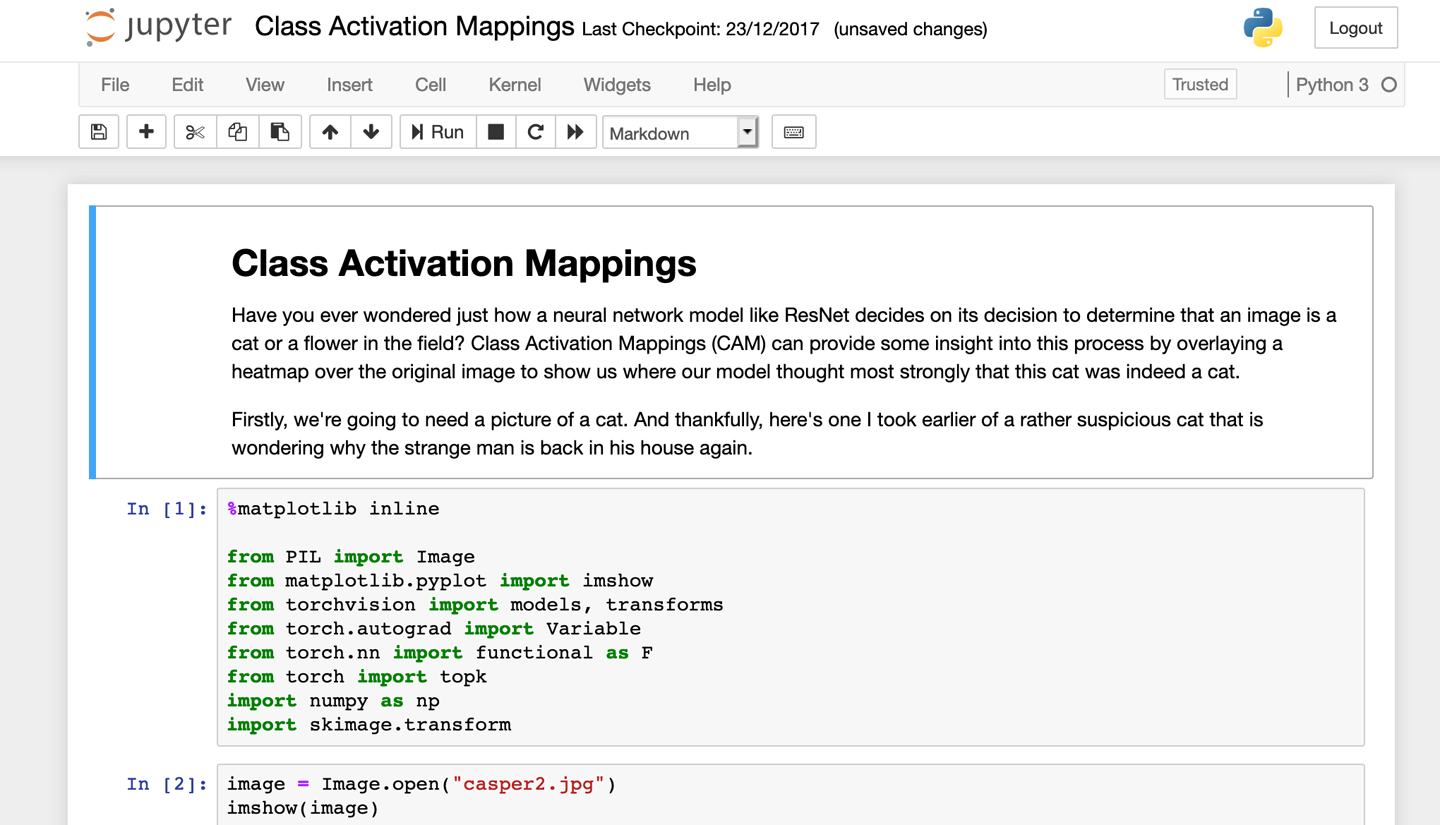

Using Jupyter Notebook

If you haven’t come across it before, here’s the lowdown on Jupyter Notebook: this browser-based environment allows you to mix live code with text, images, and visualizations and has become one of the de facto tools of data scientists all over the world. Notebooks created in Jupyter can be easily shared; indeed, you’ll find all the notebooks in this book. You can see a screenshot of Jupyter Notebook in action in Figure 1-2.

We won’t be using any advanced features of Jupyter in this book; all you need to know is how to create a new notebook and that Shift-Enter runs the contents of a cell. But if you’ve never used it before, I suggest browsing the Jupyter documentation before you get to Chapter 2.

Figure 1-2. Jupyter Notebook

Before we get into using PyTorch, we’ll cover one last thing: how to install everything manually.

Installing PyTorch from Scratch

Perhaps you want a little more control over your software than using one of the preceding cloud-provided images. Or you need a particular version of PyTorch for your code. Or, despite all my cautionary warnings, you really want that rig in your basement. Let’s look at how to install PyTorch on a Linux server in general.

Warning

You can use PyTorch with Python 2.x, but I strongly recommend against doing so. The Python 2.x to 3.x upgrade saga has been running for over a decade now, and more and more packages are dropping Python 2.x support. So unless you have a good reason, make sure your system is running Python 3.

Download CUDA

Although PyTorch can be run entirely in CPU mode, in most cases, GPU-powered PyTorch is required for practical usage, so we’re going to need GPU support. This is fairly straightforward; assuming you have an NVIDIA card, this is provided by their Compute Unified Device Architecture (CUDA) API. Download the appropriate package format for your flavor of Linux and install the package.

For Red Hat Enterprise Linux (RHEL) 7:

sudo rpm -i cuda-repo-rhel7-10-0local-10.0.130-410.48-1.0-1.x86_64.rpm
sudo yum clean all
sudo yum install cuda

For Ubuntu 18.04:

sudo dpkg -i cuda-repo-ubuntu1804-10-0-local-10.0.130-410.48_1.0-1_amd64.deb
sudo apt-key add /var/cuda-repo-<version>/7fa2af80.pub
sudo apt-get update
sudo apt-get install cuda

Anaconda

Python has a variety of packaging systems, all of which have good and not-so-good points. Like the developers of PyTorch, I recommend that you install Anaconda, a packaging system dedicated to producing the best distribution of packages for data scientists. Like CUDA, it’s fairly easy to install.

Head to Anaconda and pick out the installation file for your machine. Because it’s a massive archive that executes via a shell script on your system, I encourage you to run md5sum on the file you’ve downloaded and check the result against the list of published hashes on the web page before you execute it with bash Anaconda3-VERSION-Linux-x86_64.sh. A match gives you confidence that the download hasn’t been corrupted or tampered with. The script will present several prompts about locations it’ll be installing into; unless there’s a good reason, just accept the defaults.
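If you’d rather script the check, or md5sum isn’t available on your machine, Python’s standard-library hashlib module can compute the same digest. This is a minimal sketch: compute_file_hash is a hypothetical helper name, it defaults to MD5 to match the md5sum step, and you should pass whichever algorithm (for example, "sha256") the download page actually lists.

```python
import hashlib


def compute_file_hash(path, algorithm="md5", chunk_size=1 << 20):
    """Return the hex digest of a file, reading it in chunks to keep memory use low."""
    hasher = hashlib.new(algorithm)  # e.g. "md5" or "sha256"
    with open(path, "rb") as f:
        # iter() keeps calling f.read() until it returns b"" at end of file
        for chunk in iter(lambda: f.read(chunk_size), b""):
            hasher.update(chunk)
    return hasher.hexdigest()


# Example usage (hypothetical file name; use the installer you downloaded):
# print(compute_file_hash("Anaconda3-VERSION-Linux-x86_64.sh"))
```

Reading in chunks matters here because the installer is large; hashing it in one read() call would load the whole file into memory.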

Finally, PyTorch! (and Jupyter Notebook)

Now that you have Anaconda installed, getting set up with PyTorch is simple:

conda install pytorch torchvision -c pytorch

This installs PyTorch and the torchvision library that we use in the next couple of chapters to create deep learning architectures that work with images. Anaconda has also installed Jupyter Notebook for us, so we can begin by starting it:

jupyter notebook

Head to http://YOUR-IP-ADDRESS:8888 in your browser, create a new notebook, and enter the following:

import torch
print(torch.cuda.is_available())
print(torch.rand(2,2))

This should produce output similar to this:

True
tensor([[0.6040, 0.6647],
        [0.9286, 0.4210]])

If cuda.is_available() returns False, you need to debug your CUDA installation so PyTorch can see your graphics card. The values of the tensor will be different on your instance.
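The original example doesn’t need this, but a common refinement is to choose the device once and fall back to the CPU when CUDA isn’t available. That way the same notebook runs on a GPU instance or a CPU-only machine. Here is a minimal sketch of that pattern. It calls torch.cuda.get_device_name() only when a GPU is present, which is a handy way to confirm which card your cloud instance gave you.

```python
import torch

# Use the first CUDA GPU if PyTorch can see one; otherwise fall back to the CPU
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Create a tensor directly on the chosen device
x = torch.rand(2, 2, device=device)
print(device, x.device)

if torch.cuda.is_available():
    # Report which GPU PyTorch is using
    print(torch.cuda.get_device_name(0))
```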

But what is this tensor? Tensors are at the heart of almost everything in PyTorch, so you need to know what they are and what they can do for you.

Tensors

A tensor is both a container for numbers and a set of rules that define transformations between tensors that produce new tensors. It’s probably easiest for us to think about tensors as multidimensional arrays. Every tensor has a rank that corresponds to its number of dimensions. A simple scalar (e.g., 1) can be represented as a tensor of rank 0, a vector is rank 1, an n × n matrix is rank 2, and so on. In the previous example, we created a rank 2 tensor with random values by using torch.rand(). We can also create them from lists:

x = torch.tensor([[0,0,1],[1,1,1],[0,0,0]])
x
> tensor([[0, 0, 1],
          [1, 1, 1],
          [0, 0, 0]])
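To tie these examples back to rank, you can ask a tensor directly: dim() returns its number of dimensions (its rank, in the sense used above), and shape gives the size of each one. Here is a quick sketch. The variable names are only for illustration.

```python
import torch

scalar = torch.tensor(1)               # rank 0: a single number
vector = torch.tensor([1, 2, 3])       # rank 1
matrix = torch.tensor([[0, 0, 1],
                       [1, 1, 1],
                       [0, 0, 0]])     # rank 2

for t in (scalar, vector, matrix):
    # dim() gives the rank; shape gives the size along each dimension
    print(t.dim(), t.shape)
```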

We can change an element in a tensor by using standard Python indexing:

x[0][0] = 5
x
> tensor([[5, 0, 1],
          [1, 1, 1],
          [0, 0, 0]])

You can use special creation functions to generate particular types of tensors. In particular, ones() and zeros() will generate tensors filled with 1s and 0s, respectively:

torch.zeros(2,2)
> tensor([[0., 0.],
          [0., 0.]])
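Both ones() and zeros() take the desired shape as their arguments, and several related creation functions follow the same pattern. This sketch shows ones(), full(), zeros_like(), and arange(), which are all standard functions in the torch namespace. The shapes and values are arbitrary.

```python
import torch

ones = torch.ones(2, 3)             # 2 x 3 tensor filled with 1.0
sevens = torch.full((2, 2), 7.0)    # every element set to 7.0
zeros = torch.zeros_like(ones)      # same shape and dtype as `ones`, filled with 0.0
steps = torch.arange(0, 10, 2)      # 1D tensor counting from 0 up to (not including) 10 in steps of 2

print(ones)
print(sevens)
print(zeros.shape, steps)
```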

You can perform standard mathematical operations with tensors (e.g., adding two tensors together):

torch.ones(1,2) + torch.ones(1,2)
> tensor([[2., 2.]])

And if you have a tensor with just one element, such as a rank 0 tensor, you can pull out its value as a standard Python number with item():

torch.rand(1).item()
> 0.34106671810150146

Tensors can live on the CPU or on the GPU and can be copied between devices by using the to() function:

cpu_tensor = torch.rand(2)
cpu_tensor.device
> device(type='cpu')

gpu_tensor = cpu_tensor.to("cuda")
gpu_tensor.device
> device(type='cuda', index=0)

Tensor Operations

If you look at the PyTorch documentation, you’ll see that there are a lot of functions that you can apply to tensors, everything from finding the maximum element to applying a Fourier transform. You don’t need to know all of those to turn images, text, and audio into tensors and manipulate them for the work in this book, but you will need some. I definitely recommend that you give the documentation a glance, especially after finishing this book. Now we’re going to go through the functions you’ll use most often in the upcoming chapters.

First, we often need to find the maximum item in a tensor as well as the index that contains the maximum value (as this often corresponds to the class that the neural network has decided upon in its final prediction). These can be done with the max() and argmax() functions. We can also use item() to extract a standard Python value from a single-element tensor, such as the result of max().

torch.rand(2,2).max()
> tensor(0.4726)

torch.rand(2,2).max().item()
> 0.8649941086769104
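The example above shows max() but not argmax(). For a classifier’s output, you usually want the index of the largest score in each row, and argmax() gives you that when you pass dim. Here is a small sketch with made-up scores for two samples and three classes. The numbers stand in for real network output.

```python
import torch

# Made-up network output: 2 samples (rows) x 3 class scores (columns)
scores = torch.tensor([[0.1, 2.5, 0.3],
                       [1.7, 0.2, 0.9]])

print(scores.max())           # largest value in the whole tensor
print(scores.argmax())        # index of that value in the flattened tensor
print(scores.argmax(dim=1))   # predicted class for each row

# max() with dim returns both the maximum values and their indices
values, indices = scores.max(dim=1)
print(values, indices)

# item() turns a single-element tensor into a plain Python int
first_prediction = scores.argmax(dim=1)[0].item()
print(first_prediction)
```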

Sometimes, we’d like to change the type of a tensor; for example, from a LongTensor to a FloatTensor. We can do this with to():

long_tensor = torch.tensor([[0,0,1],[1,1,1],[0,0,0]])
long_tensor.type()
> 'torch.LongTensor'

float_tensor = torch.tensor([[0,0,1],[1,1,1],[0,0,0]]).to(dtype=torch.float32)
float_tensor.type()
> 'torch.FloatTensor'
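Besides to(dtype=...), tensors have shorthand conversion methods such as float() and long(), and the dtype attribute shows the type directly. Here is a short sketch. One detail worth knowing is that converting floats to integers truncates toward zero rather than rounding.

```python
import torch

long_tensor = torch.tensor([[0, 0, 1], [1, 1, 1], [0, 0, 0]])
print(long_tensor.dtype)   # torch.int64, which is what a LongTensor holds

# Two equivalent ways to convert to 32-bit floating point
a = long_tensor.to(dtype=torch.float32)
b = long_tensor.float()
print(a.dtype, b.dtype, torch.equal(a, b))

# Converting back to integers drops the fractional part (truncation toward zero)
c = torch.tensor([1.7, -1.7]).long()
print(c)
```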

Most functions that operate on a tensor and return a tensor create a new tensor to store the result. However, if you want to save memory, check whether an in-place version of the function is defined. It should have the same name as the original function, but with an appended underscore (_).

random_tensor = torch.rand(2,2)
random_tensor.log2()
> tensor([[-1.9001, -1.5013],
          [-1.8836, -0.5320]])

random_tensor.log2_()
> tensor([[-1.9001, -1.5013],
          [-1.8836, -0.5320]])
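The two calls above print the same values. They differ in what happens to random_tensor afterward: log2() leaves it untouched, while log2_() overwrites it. This sketch makes the difference visible by keeping a copy of the original values with clone().

```python
import torch

random_tensor = torch.rand(2, 2)
original = random_tensor.clone()   # independent copy for comparison

result = random_tensor.log2()      # out-of-place: returns a new tensor
print(torch.equal(random_tensor, original))      # input is unchanged

random_tensor.log2_()              # in-place: overwrites random_tensor's storage
print(torch.allclose(random_tensor, result))     # it now holds the log2 values
```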

Another common operation is reshaping a tensor. This can often occur because your neural network layer may require a slightly different input shape than what you currently have to feed into it. For example, the Modified National Institute of Standards and Technology (MNIST) dataset of handwritten digits is a collection of 28 × 28 images, but the way it’s packaged is in arrays of length 784. To use the networks we are constructing, we need to turn those back into 1 × 28 × 28 tensors (the leading 1 is the number of channels—normally red, green, and blue—but as MNIST digits are just grayscale, we have only one channel). We can do this with either view() or reshape():

flat_tensor = torch.rand(784)
viewed_tensor = flat_tensor.view(1,28,28)
viewed_tensor.shape
> torch.Size([1, 28, 28])

reshaped_tensor = flat_tensor.reshape(1,28,28)
reshaped_tensor.shape
> torch.Size([1, 28, 28])

Note that the reshaped tensor’s shape has to have the same number of total elements as the original. If you try flat_tensor.reshape(3,28,28), you’ll see an error like this:

RuntimeError                              Traceback (most recent call last)
<ipython-input-26-774c70ba5c08> in <module>()
----> 1 flat_tensor.reshape(3,28,28)

RuntimeError: shape '[3, 28, 28]' is invalid for input of size 784

Now you might wonder what the difference is between view() and reshape(). The answer is that view() operates as a view on the original tensor, so if the underlying data is changed, the view will change too (and vice versa). However, view() can throw errors if the original tensor is not contiguous. That is, its elements are not laid out in memory in the order they would be if a new tensor of that shape had been created from scratch. This typically happens after transposing or permuting dimensions. If this happens, you have to call tensor.contiguous() before you can use view(). reshape() does all that behind the scenes, returning a view when it can and a copy when it must, so in general, I recommend using reshape() rather than view().
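Here is a small sketch of both points. A view shares storage with its source, and a transposed tensor is typically not contiguous, so view() raises an error where reshape() succeeds. The use of .t() (transpose) is just a convenient way to produce a non-contiguous tensor; permute() has the same effect.

```python
import torch

flat = torch.arange(6)
viewed = flat.view(2, 3)
viewed[0, 0] = 100          # writing through the view...
print(flat[0].item())       # ...changes the original tensor too

transposed = viewed.t()     # shape 3 x 2, but memory is still laid out as 2 x 3
print(transposed.is_contiguous())

try:
    transposed.view(6)
except RuntimeError as err:
    print("view() failed:", err)

# Either make a contiguous copy first, or let reshape() decide whether to copy
a = transposed.contiguous().view(6)
b = transposed.reshape(6)
print(torch.equal(a, b))
```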

Finally, you might need to rearrange the dimensions of a tensor. You will likely come across this with images, which often are stored as [height, width, channel] tensors, but PyTorch prefers to deal with these in [channel, height, width] order. You can use permute() to deal with these in a fairly straightforward manner:

hwc_tensor = torch.rand(640,480,3)
chw_tensor = hwc_tensor.permute(2,0,1)
chw_tensor.shape
> torch.Size([3, 640, 480])

Here, we’ve just applied permute to a [640,480,3] tensor, with the arguments being the indexes of the tensor’s dimensions, so we want the final dimension (2, due to zero indexing) to be at the front of our tensor, followed by the remaining two dimensions in their original order.
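The same idea extends to a batch of images. Suppose a hypothetical loader gives you images as [batch, height, width, channel]. Moving the channel dimension to position 1 produces the [batch, channel, height, width] layout that PyTorch’s convolution layers expect. Because permute() returns a non-contiguous view, this sketch uses reshape() rather than view() for the follow-up reshape. The batch size of 2 is arbitrary.

```python
import torch

# Hypothetical batch of 2 RGB images stored as [batch, height, width, channel]
nhwc_batch = torch.rand(2, 640, 480, 3)

# Keep batch (0) first, then channel (3), then height (1) and width (2)
nchw_batch = nhwc_batch.permute(0, 3, 1, 2)
print(nchw_batch.shape)            # torch.Size([2, 3, 640, 480])

# permute() changes how the data is indexed, not how it is stored
print(nchw_batch.is_contiguous())

# Flatten each image; reshape() copies here because the tensor isn't contiguous
flattened = nchw_batch.reshape(2, -1)
print(flattened.shape)
```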

Tensor Broadcasting

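Borrowed from NumPy, broadcasting allows you to perform operations between a tensor and a smaller tensor. You can broadcast across two tensors if you compare their dimensions pair by pair, starting backward from the trailing dimensions, and each pair satisfies one of these conditions:

- The two dimensions are equal.
- One of the dimensions is 1.
- One of the tensors has no dimension left at that position (missing dimensions are treated as 1).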

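The simplest case is adding a single number, such as 1, to a tensor. The number has no dimensions of its own, so it is treated as size 1 along every dimension and can be expanded to cover the other tensor. If we tried to add a [2,2] tensor to a [3,3] tensor, however, the trailing dimensions (2 and 3) would be neither equal nor 1, and we’d get this error message: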

The size of tensor a (2) must match the size of tensor b (3) at non-singleton dimension 1

But we could add a [1,3] tensor to the [3,3] tensor without any trouble. The trailing dimensions match (3 and 3), and the leading 1 can be expanded to 3. Broadcasting is a handy little feature that makes code more concise, and it is often faster than manually expanding the tensor yourself.
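These rules can be checked directly. This sketch adds a scalar, a [1,3] tensor, and a [3,1] tensor to a [3,3] tensor, and then shows the [2,2] + [3,3] case failing. The values are arbitrary and chosen to make the expansion easy to see in the printed output.

```python
import torch

base = torch.zeros(3, 3)
row = torch.tensor([[1.0, 2.0, 3.0]])          # shape [1, 3]
col = torch.tensor([[10.0], [20.0], [30.0]])   # shape [3, 1]

print(base + 1)     # the scalar 1 is expanded to every element
print(base + row)   # the row is repeated down all 3 rows
print(base + col)   # the column is repeated across all 3 columns
print(row + col)    # both expand, giving a [3, 3] result

try:
    torch.ones(2, 2) + torch.ones(3, 3)
except RuntimeError as err:
    # Trailing dimensions 2 and 3 are neither equal nor 1
    print(err)
```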

That wraps up everything concerning tensors that you need to get started! We’ll cover a few other operations as we come across them later in the book, but this is enough for you to dive into Chapter 2.

Conclusion

Whether it’s in the cloud or on your local machine, you should now have PyTorch installed. I’ve introduced the fundamental building block of the library, the tensor, and you’ve had a brief look at Jupyter Notebook. This is all you need to get started! In the next chapter, you’ll use everything you’ve seen so far to start building neural networks and classifying images, so make sure you’re comfortable with tensors and Jupyter before moving on.
