Chapter 1. From Hypertext to Hyperdata

In this chapter, we will start exploring the basics of REST and HTTP, beginning with some concepts of REST architectures and the role of hypermedia.

This will be an introductory chapter on the architectural foundation blocks of REST and how these map to the HTTP protocol.

REST and HTTP

The Hypertext Transfer Protocol (HTTP) has a predominant role on the Web. It is the only protocol designed specifically to transfer resource representations, to abstract over lower-layer transport protocols such as TCP or UDP, and to act as the primary application-level protocol for web components.

Everything Started with Hypertext

In the Appendix of this book you can find an introductory section on the HTTP protocol and hypertext. Although web programmers tend to be very familiar with the protocol, recalling some deep concepts can be useful when reasoning about RESTful programming.

Representational State Transfer (REST) architectures are a generalization of the WWW architecture based on the HTTP protocol, where the World Wide Web represents an Internet-scale implementation of the RESTful architectural style. In RESTful architectures, agents implement uniform interface semantics instead of application-specific components, implementations, and protocol syntax.

REST therefore represents an abstraction of the actual architecture of the Web, in a similar way to how the Web itself represents an abstraction of HTTP. REST is mostly a model of how the Web should work: a framework for ensuring that any development of the protocols, or more generally of any components that make the Web possible, respects the core constraints that make the Web as a whole a successful application.

Architectural Abstractions

Abstraction is an important concept in software architectures, and more generally in programming paradigms. A software system is designed to solve a problem by abstracting the problem scenario, reducing the problem into smaller subproblems, and computing accurate results.

The process of abstracting over a problem scenario is actually extremely common in engineering.

My university professor for a course in complex system analysis used to tell his class that every model is just an approximation of reality, able to produce an outcome (i.e., a solution to a problem) that can be considered somewhat satisfactory.

A software architecture contains different levels of abstraction, and each level probably contains its own architectures with its own levels of abstraction. Think of the ISO/OSI model or the TCP/IP protocol stack. These are conceptual models that try to standardize a problem (the internal functions of a communications system) by dividing it into abstraction layers. Each layer contains its own specifications, protocols, and interfaces to exchange information with the other layers.

Each system will also have many different phases of operations that will define its behavior in any possible situation presented by the problem scenario.

Architectures define how the various modular components are linked through connectors, how data is exchanged between these components using a set of defined interfaces, and how these are configured within a system.

There are also different possible configurations of components and connectors; these are called architectural styles.

Architectural styles can be viewed like design patterns, or in other words as general and reusable approaches to solving recurring problems in a certain context.

It is also possible to break down a system architecture from different viewpoints. The idea of an architectural viewpoint is to represent a system from a certain perspective, to permit its comprehension.

The Web is a particularly interesting example of a complex distributed system whose architecture needs to be analyzed via different viewpoints, or by considering its different layers and components, from the actual physical connections that make each communication possible to the various protocols involved in every HTTP message sent or received by every server, computer, and device connected to the Internet.

Note

In 2003, the W3C defined the “Architecture of the World Wide Web,” describing the properties of the Web and the design choices that had been made to apply those properties to protocols, standards, and interfaces developed up to that moment.

Introducing REST

The RESTful architectural style (or just REST) was used to guide the design of both the HTTP/1.1 protocol and the so-called modern web architecture constraints.

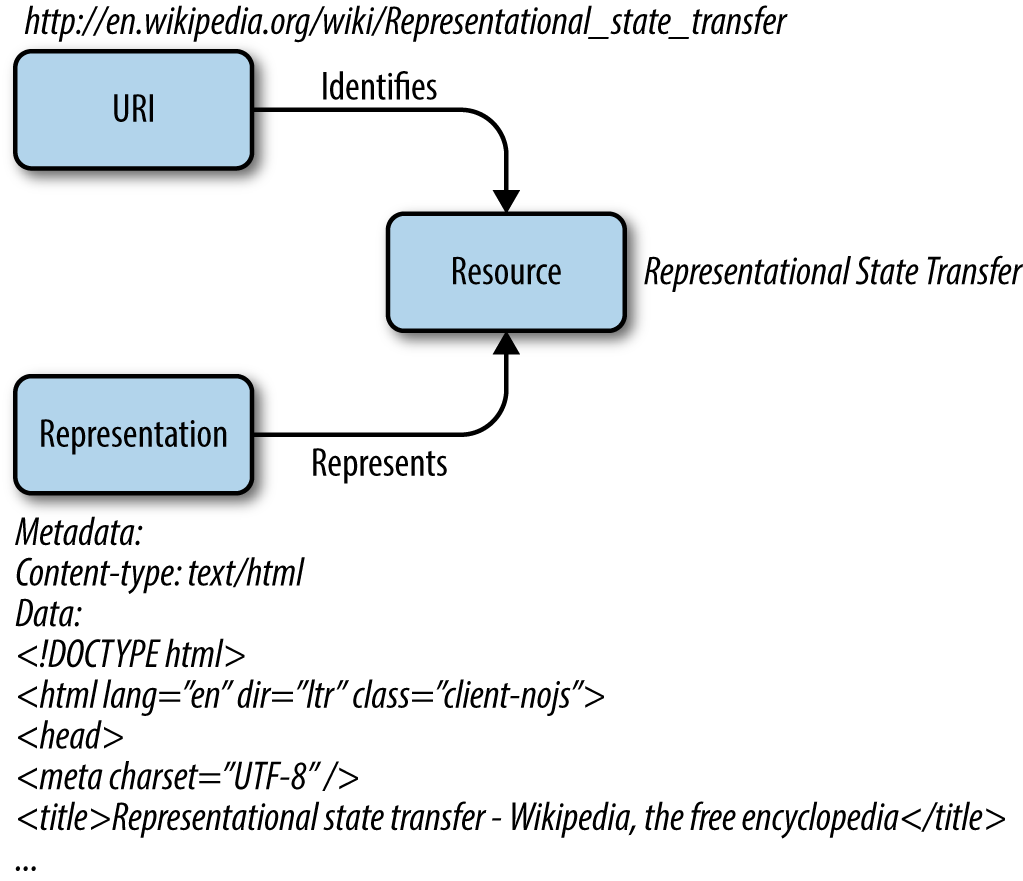

The W3C Technical Architecture Group identifies three architectural foundations of the Web. These are:

- The identification mechanism, where each resource is identified by a uniform resource identifier (URI)

- The actual communication process between agents, where representations about resources are exchanged

- The representations of data being exchanged, and how these are built from a predetermined set of data formats

These are also the three bases of REST architectures (Figure 1-1).

Figure 1-1. REST architectures interact with resources identified by URIs by exchanging data representations (HTML, XML, JSON, etc.)

An important aspect of the Web’s architecture is that identification, interaction, and representation are independent concepts. A URI can identify a resource without knowing what formats the resource uses to exchange representations. Likewise, the protocols and representations used by the resource to communicate can be modified independently of the URI identifying the resource.

This property of orthogonality within the Web’s architectural foundations makes systems easy to modify, extend, and scale. Communication interfaces can be defined only in terms of the communication protocols used, the specified syntax and semantics, and the sequences of interchanged messages.

The principles and architectural details of REST are discussed in practical terms in Chapter 3, but in this chapter we will introduce some theoretical concepts regarding the architecture of the Web and RESTful programming in general. Also note that some more detailed aspects of the HTTP protocol are covered in the Appendix.

RESTful Programming and Hypermedia

The W3C defines the World Wide Web as a network-spanning information space of resources interconnected by links. Within this information space, shared by several information systems around the world, other systems and agents create, retrieve, interpret, parse, and reason about resources.

The architecture of the Web is therefore that of a networked, distributed hypermedia information system, where clients access resources by “calling” them through their URIs. Resource states are communicated through some representation of the resource itself via some widely understood data format (HTML, XML, CSS, PNG, scripts).

Distributed systems is a vibrant field of study in computer science. In fact, everything hip and new seems to involve distributed systems, from the proliferation of web apps, to the newest mobile computing trends, to the Internet of Things (IoT).

A distributed system can be roughly defined as a collection of independent computers that appears to its users as a single coherent system.

Note

This definition is from Andrew S. Tanenbaum and Maarten Van Steen’s Distributed Systems: Principles and Paradigms, 2nd ed. (Upper Saddle River, NJ: Prentice-Hall, 2006). Their book is a milestone in the literature of distributed systems, enabling readers to understand past, present and future development.

A very important aspect of distributed systems is that differences between the individual components and the ways in which they communicate are almost completely transparent to the final user. The same principle applies for the internal organization of each component, as long as the external communication protocols and interfaces are implemented per specifications.

Single components are hence able to operate autonomously and cooperate with one another by exchanging information, so that a hypothetical user can interact with the system in a consistent and uniform way, regardless of where and when the interactions take place.

This aspect makes distributed systems easy to scale, in principle.

Design Concepts

REST is an architectural style crafted for and upon a particular distributed system, the Web. The primary goal of any distributed system is to facilitate access to remote resources.

REST was therefore designed with low entry barriers and with simplicity in mind, to enable quick user adoption and speed up development.

All protocols designed for the Web were conceived as text protocols. Communications between web components could be tested using existing network tools and underlying protocols.

REST was also designed to be easily extended if needed. The Web was built as an Internet-scale application. This means that no central control was considered in the initial design. Anarchic scalability and independent development were (and still are) two core elements of the Web’s architecture.

REST carries the basis for hypermedia applications. Application control information is embedded within representations, or one level above.

RESTful Architectures

RESTful architectures carry some architectural constraints that were inherited from various networked distributed architectural styles.

Client and server in RESTful architectures are orthogonal, meaning there is a clear separation of concerns and functions between them. User logic is moved completely to the client side and server components are kept simple to improve scalability.

This separation also allows the two components to be developed and evolve independently, so long as they respect the communication protocols and defined interfaces.

In a RESTful architecture, each request is treated independently. This means the server is stateless: a request cannot take advantage of any stored context and must carry all the information necessary for it to be understood and processed.
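The statelessness constraint can be illustrated with a minimal Ruby sketch. Everything here is an assumption made for illustration (the `handle_request` method, the in-memory `MESSAGES` store, and the token table); the point is only that the handler reads everything it needs from the request and remembers nothing about the client between calls.

```ruby
# Hypothetical in-memory store; it holds resources, not client sessions.
MESSAGES = {
  "Dfvsafaf1479" => { "title" => "example message" }
}.freeze

# Hypothetical credential table, standing in for a real authentication check.
VALID_TOKENS = { "secret-token" => "kathy" }.freeze

# A stateless handler: the request hash carries the method, the path, and the
# credentials. No per-client context is stored between calls.
def handle_request(request)
  user = VALID_TOKENS[request[:authorization]]
  return { status: 401, body: nil } unless user

  id = request[:path].split("/").last
  message = MESSAGES[id]
  return { status: 404, body: nil } unless message

  { status: 200, body: message }
end

request = { method: "GET", path: "/messages/Dfvsafaf1479", authorization: "secret-token" }
puts handle_request(request)[:status]
# The same request without credentials is rejected, because the server keeps
# no memory of an earlier, authenticated call.
puts handle_request(request.merge(authorization: nil))[:status]
```

Because each request is self-contained, any server instance could process it, which is what makes this constraint attractive for scalability.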

Each response also needs to be labeled as cacheable or noncacheable. If a response is cacheable, the client can store and reuse the data for network efficiency (i.e., cache the data to reduce round-trips).
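As a rough sketch of how a client might honor these labels, the Ruby class below stores a response only when its `Cache-Control` header allows it. The `ResponseCache` class and its method names are hypothetical, and the handling is deliberately simplified: only the `no-store` and `max-age` directives are considered.

```ruby
# A tiny client-side cache keyed by URI. Each entry remembers when the
# response stops being fresh, based on the lifetime the server assigned.
class ResponseCache
  def initialize
    @store = {}
  end

  # Store the response only if the server labeled it cacheable.
  def store(uri, response, now: Time.now)
    cache_control = response[:headers].fetch("Cache-Control", "")
    return false if cache_control.include?("no-store")

    max_age = cache_control[/max-age=(\d+)/, 1]
    return false unless max_age

    @store[uri] = { response: response, expires_at: now + max_age.to_i }
    true
  end

  # Return the stored response while it is still fresh, otherwise nil.
  def fetch(uri, now: Time.now)
    entry = @store[uri]
    return nil unless entry && now < entry[:expires_at]

    entry[:response]
  end
end
```

A client would call `fetch` before issuing a request and fall back to the network only when it returns `nil`, which is where the saved round-trips come from.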

REST is built on the idea of uniform interfaces between components. This way, the architecture and design choices are simplified in favor of simple interactions. The internal implementations of the components are separated from the services provided, which also encourages independent evolution and development.

REST architectures are layered. This idea of hierarchical systems is deeply rooted in networked architectures and software development in general.

There are a few advantages to developing a hierarchical architecture. The primary benefit is that it keeps the overall system design simple, since each component “sees” and operates only with the layer with which it is immediately interacting.

A last constraint introduced by REST is that of code on demand. Clients in a RESTful architecture can download and execute code in the form of scripts. This allows for system extensibility and simplification of the client architecture by reducing the number of features that must be preimplemented and allowing features to be added later, when needed.

Central elements of REST architectures are data, components, and connectors.

The data elements described in the definition of REST are the actual resources and the exchanged representations. The components, instead, are the clients, origin servers, and any intermediate parties. Components communicate and exchange messages through connectors. Connectors are specifically used to communicate between RESTful interfaces and any other type of interface. For example, a RESTful service can communicate with a database that doesn’t possess a REST interface through a REST connector.

Each of these elements can be used to define an architectural view, an abstraction that will help us understand how REST components interact and communicate.

A view describes a layer within an architecture. The need to define layers or views comes from the old controversy around the concepts of client and server. In the HTTP protocol, a component acting as server for a specific call can act as client for another call (for further details on this, see the Appendix). Describing architectures in terms of clients and servers is therefore not always ideal.

Sometimes, architectural views are described by assuming that the ultimate goal of an application or client software is to access some data in a database. In this case, three main levels are considered:

- The interface level

- The process level

- The data level

While these levels can certainly also be identified in REST architectures, it is more convenient to use REST core elements to describe REST architectural views.

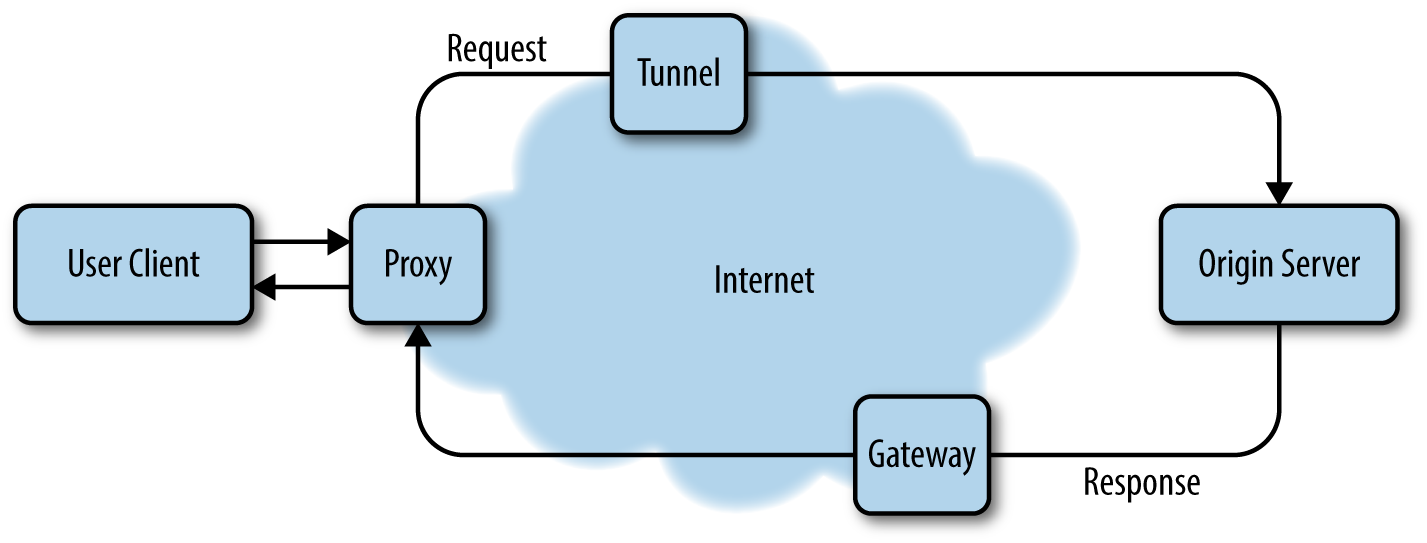

Process view

The process view of a REST architecture describes the data flow between client and server for a certain communication. The user agent makes a request to the server, and the message travels through the network and is processed by a series of intermediaries. Intermediary blocks are used for different reasons, from latency reduction to encapsulation of legacy services and from security enforcement to traffic balancing. Intermediaries in REST architectures can be added at any point in the communication without having to modify any interfaces between components.
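A small Ruby sketch may help to see why intermediaries can be added freely. It assumes that every component, origin server included, exposes the same hypothetical interface, `call(request)` returning a response hash. The `Recorder` and `CachingProxy` classes are invented for illustration and do not correspond to any particular library.

```ruby
# Every component exposes the same interface: call(request) -> response.
# Because of that, intermediaries can be stacked in any number and order.

# Hypothetical origin server.
ORIGIN = lambda do |request|
  { status: 200, headers: {}, body: "representation of #{request[:path]}" }
end

# Intermediary that records each request path it sees (a stand-in for
# security enforcement or traffic balancing).
class Recorder
  attr_reader :seen

  def initialize(upstream)
    @upstream = upstream
    @seen = []
  end

  def call(request)
    @seen << request[:path]
    @upstream.call(request)
  end
end

# Intermediary that answers repeated requests itself to reduce latency.
class CachingProxy
  def initialize(upstream)
    @upstream = upstream
    @cache = {}
  end

  def call(request)
    @cache[request[:path]] ||= @upstream.call(request)
  end
end

# Wire the chain: client -> CachingProxy -> Recorder -> origin.
def build_chain
  recorder = Recorder.new(ORIGIN)
  [CachingProxy.new(recorder), recorder]
end

proxy, recorder = build_chain
2.times { proxy.call({ path: "/messages/1" }) }
# The second request is answered by the proxy and never reaches the recorder.
puts recorder.seen.length
```

Neither the client nor the origin has to change when a layer is inserted or removed, since each one only sees the component it talks to directly.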

Recall that the REST architectural style advocates for independent deployment, scalability of components and interactions, and generality of interfaces. The message flow between client and server is also completely dynamic. This has two major implications. The first, and most obvious, is that the path followed by the request message can be different from the path followed by the response message (Figure 1-2). The second implication is that it is not necessary for each component to be aware of the whole network topology to route messages.

Figure 1-2. Request and response messages can go through an arbitrary number of intermediary blocks

Connector view

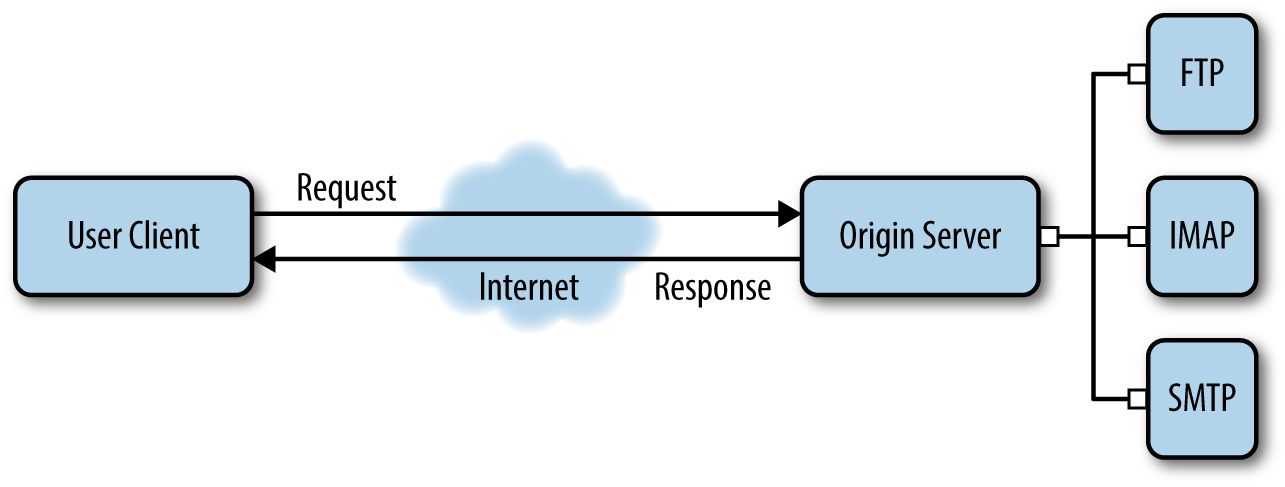

The connector view of a REST architecture is somewhat equivalent to the interface level, describing the actual communications between components.

Because REST represents an abstraction of the Web’s architecture, communication is not restricted to a particular protocol. REST instead specifies the scope of interaction and implementation assumptions that need to be made between components.

For example, although the Web uses HTTP as its transfer protocol, its architecture can also include services using other protocols, like FTP. REST delegates interaction with those services to a REST connector. The REST connector is responsible for invoking connections to other (non-REST) architectures in parallel.

This aspect of REST preserves its principle of generality of interfaces, allowing for heterogeneous components to communicate seamlessly (Figure 1-3).

Figure 1-3. A REST connector is able to interface with non-REST services, allowing for different components to easily communicate.
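The role of a connector can be sketched in Ruby as an adapter. Both classes below are hypothetical: `LegacyFileStore` stands in for a non-REST service with its own application-specific interface, and `FileStoreConnector` translates uniform requests into calls to it.

```ruby
# Hypothetical legacy service with an application-specific interface.
class LegacyFileStore
  FILES = { "report.txt" => "quarterly numbers" }.freeze

  def retrieve_file(name)
    FILES[name]
  end
end

# Hypothetical connector: it exposes a uniform call(request) -> response
# interface and translates it into the legacy calls behind the scenes.
class FileStoreConnector
  def initialize(store)
    @store = store
  end

  def call(request)
    return { status: 405, body: nil } unless request[:method] == "GET"

    name = request[:path].delete_prefix("/files/")
    content = @store.retrieve_file(name)
    content ? { status: 200, body: content } : { status: 404, body: nil }
  end
end

connector = FileStoreConnector.new(LegacyFileStore.new)
puts connector.call({ method: "GET", path: "/files/report.txt" })[:body]
```

Components on the REST side only ever see the uniform interface; the details of the legacy service stay hidden behind the connector.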

Data view

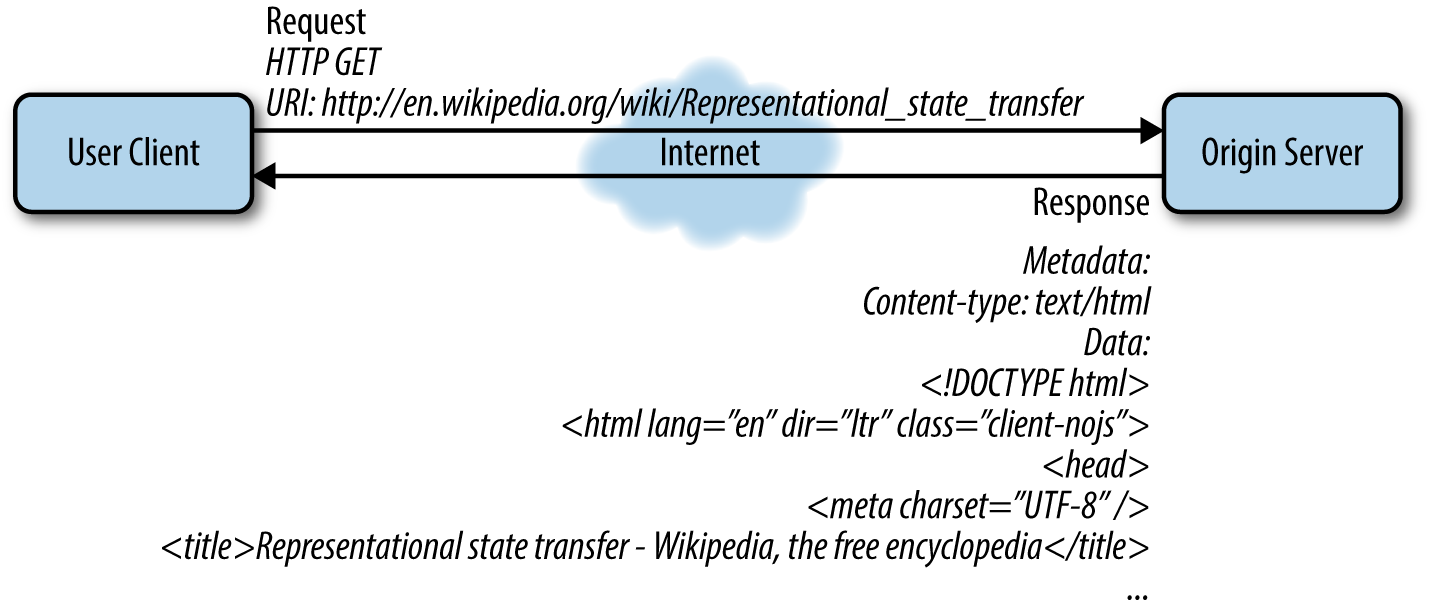

The data view of an architecture concerns the application state. REST is an architectural style designed for distributed information systems, so a RESTful application can be loosely defined as a structure of information and control alternatives performing some specific tasks under some user input.

This definition certainly includes a wide range of different applications, from an online dictionary to a ticket purchasing service to a social networking site to a video streaming service. These applications each define a set of goals to be performed by the underlying systems.

Components within applications interact to accomplish such goals. Interaction happens by exchanging messages used for control semantics, or exchanging complete resource representations. Messages are dynamically sized.

A frequent form of interaction between components is a request for retrieving a representation of a resource—i.e., the GET method in HTTP (Figure 1-4). The representation received in response contains all the control state of the RESTful resource.

One of the goals of REST is to eliminate the need for the server to maintain any awareness of the client state beyond the current request. This improves server scalability, but also makes every request independent from the previous or the next one.

The application state will therefore be defined by its pending requests, the topology of any connected components, the active requests on any connectors, and finally the data flow of resource representations in response to all its requests.

Figure 1-4. The client makes an HTTP GET request and receives an HTML document in response from the server

Part of the application state is also any possible processing of the resource representations as these are received by the user agent.

When an application has no outstanding requests, it is said that the application is in the steady state. It is also said that the user-perceived performance is a measure of the latency between consecutive steady states of the application.

Because the control state of an application resides in the requested resource representation, obtaining the first representation is a priority. Once the first representation is obtained the user will be able to jump to another resource representation via a link and manipulate the state directly.

RESTful Interfaces: Hypermedia and Action Controls

The concept of uniformity of interfaces is central to REST architectures. As long as each component implements this simple general principle, each module within the architecture can be developed and deployed independently; the overall architectural complexity is reduced, while server technology can be more easily scaled. Simple interfaces also allow for an arbitrary number of intermediary blocks to be placed between server and client within a communication.

RESTful interfaces

The uniformity of REST interfaces is built upon four guiding principles. These are:

- The identification of resources through the URI mechanism

- The manipulation of resources through their representations

- The use of self-descriptive messages

- The use of Hypermedia as the Engine of Application State (HATEOAS)

The use of the URI mechanism to identify resources is particularly central to the Web, and more generally to REST architectures. When the Web was being designed, it was proposed that each document would be identified by a Universal Document Identifier (UDI), to stress that, although the UDI could eventually change, it should nevertheless be considered the unique identifier for that particular document.

The acronym UDI was later replaced by the more general URL (Uniform Resource Locator) and then URI (Uniform Resource Identifier). The URI still provides a means of identifying a resource that is simple, defined, and extensible.

The uniformity principle ensures that different types of identifiers can be used in the same context, allowing a uniform semantic interpretation, even though the actual mechanisms used to access those resources may be different. At the same time, it permits us to introduce new types of resource identifiers without having to modify the way existing identifiers are used, while also permitting reuse of identifiers in different contexts.

It is important to note that not all URIs are URLs. The terms URI and URL actually refer to two different things, although they are often used interchangeably. Strictly speaking, a URI is just an identifier of a resource; it can be a locator or a name (URN, or Uniform Resource Name), or both. A URL therefore refers to a URI that, in addition to identifying a resource, also provides its network location—i.e., a means of locating the resource by providing its access mechanism.

URNs are location-independent resource identifiers that are intended to be persistent. The term URN has now been included in the URI definition: “URN” is used to refer both to URIs under the urn scheme and to any other URI with the properties of a resource name.

We have introduced the term scheme, referring to the URN scheme. A scheme can introduce a protocol or data format that makes use of a specific URI syntax and semantics. The URI specifications provide a definition of the range of syntax permitted for all possible URIs, including schemes that haven’t been defined yet. The scheme makes the URI syntax easily extensible, since each scheme can modify and restrict syntax and semantics according to its particular set of rules.
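To make the distinction concrete, here is a minimal Ruby sketch using the standard `uri` library. The `describe` helper is hypothetical and the classification is deliberately rough: it looks only at the scheme, treating `urn` identifiers as names and the other schemes used here as locators. The FTP host and the ISBN are illustrative values.

```ruby
require "uri"

# Rough classification by scheme: identifiers in the "urn" scheme are names;
# the other schemes used below also say how to reach the resource.
def describe(identifier)
  uri = URI.parse(identifier)
  kind = uri.scheme == "urn" ? "URN (name)" : "URL (locator)"
  "#{uri.scheme} -> #{kind}"
end

puts describe("http://api.ouremailclient.com/v1/client/list/unread")
puts describe("ftp://ftp.example.com/pub/readme.txt")
puts describe("urn:isbn:0451450523")
```

All three strings are URIs and are parsed by the same call; only the first two also tell a client where to find the resource.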

Each URI starts with the scheme name. The scheme name refers to a specification for assigning identifiers within the same scheme. Some scheme examples are:

- http

- ftp

- mailto

- telnet

- news

- urn

Once again, the principle of uniformity allows for independent evolution and development of protocols and data formats from identification schemes and the implementations that make use of the URIs.

The URI parsing mechanism is scheme independent. This means that the URI is first parsed according to the general syntax and semantic specification, while scheme-dependent handling of the URI is postponed until the scheme-dependent semantics are actually needed.
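This two-step process can be sketched in Ruby. The regular expression is adapted from the one given in Appendix B of RFC 3986 and splits any URI into generic components without looking at what the scheme means. The `split_generic` and `interpret` helpers and the `SCHEME_HANDLERS` table are hypothetical names used only for this illustration.

```ruby
# Generic URI syntax, adapted from the regular expression in RFC 3986,
# Appendix B. It splits a URI into components without knowing the scheme.
GENERIC_URI = %r{\A(([^:/?#]+):)?(//([^/?#]*))?([^?#]*)(\?([^#]*))?(#(.*))?\z}

def split_generic(identifier)
  m = GENERIC_URI.match(identifier)
  { scheme: m[2], authority: m[4], path: m[5], query: m[7], fragment: m[9] }
end

# Hypothetical scheme-dependent handling, applied only when it is needed.
SCHEME_HANDLERS = {
  "http" => ->(parts) { "fetch #{parts[:path]} from host #{parts[:authority]}" },
  "mailto" => ->(parts) { "compose a message to #{parts[:path]}" }
}.freeze

def interpret(identifier)
  parts = split_generic(identifier)
  handler = SCHEME_HANDLERS[parts[:scheme]]
  handler ? handler.call(parts) : "no handler for #{parts[:scheme]}"
end

puts split_generic("urn:isbn:0451450523")
puts interpret("mailto:kathy@wikipedia.org")
```

The generic step succeeds even for a scheme the program has no handler for, which is what lets new schemes be introduced without changing existing parsers.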

Although URIs are used to identify resources, it is not guaranteed that the URI itself provides a means to access the resource. This is in fact a common misunderstanding of URIs. URIs provide identification. Any other operation associated with a URI reference is associated with the protocol definition or data format that makes use of the URI.

The resource identified by the URI is a general object that is not necessarily accessible either. A resource can be either an electronic document or a service, an abstract concept or a relationship.

A resource is modified by manipulating its representation. The representation in REST contains the actual resource state, and this is what is exchanged between client and server. This means that users interact with a particular resource through representations of its state.

A RESTful application may support more than one representation of the same resource at a certain URI, allowing clients to indicate the preferred representation type.
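A simplified Ruby sketch of this idea follows. The `represent` method, the `RENDERERS` table, and the sample message are assumptions made for illustration, and the negotiation is intentionally naive: it picks the first supported media type listed in the `Accept` header and ignores quality values and wildcards.

```ruby
require "json"

# One resource, two hypothetical representations of the same state.
SAMPLE_MESSAGE = { "id" => "Dfvsafaf1479", "title" => "example message" }.freeze

RENDERERS = {
  "application/json" => ->(m) { JSON.generate(m) },
  "text/plain" => ->(m) { "#{m['id']}: #{m['title']}" }
}.freeze

# Pick the first media type in the Accept header that the server supports.
# Simplified: quality values (q=...) and wildcards are ignored.
def represent(accept_header)
  requested = accept_header.split(",").map { |t| t.split(";").first.strip }
  media_type = requested.find { |t| RENDERERS.key?(t) }
  return { status: 406, body: nil } unless media_type

  { status: 200, content_type: media_type, body: RENDERERS[media_type].call(SAMPLE_MESSAGE) }
end

puts represent("text/plain")[:body]
puts represent("application/xml, application/json;q=0.9")[:body]
```

The URI never changes between the two calls; only the representation sent back does.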

Resource representations are requested through messages between client and server. Messages in REST architectures are said to be self-descriptive. This means that all the information needed to complete the task is actually contained in the message.

How to include control

Hypermedia as the Engine of Application State (HATEOAS) is a distinguishing element of REST architecture, describing how hypermedia controls can be used to interact with an application to modify its state.

Literally, it means that hyperlinks included within resource representations can be used to control how the user interacts with an application, in a similar way to how a user surfs through the content of a website.

This concept of designing the way a user explores resource states as we would a web page can be compared to the task of designing an application flow or a user interface. A designer would implement a certain number of tasks that can be completed by the user and would present them in a certain way. The designer’s goal, in this case, would be to make the use of the application as intuitive as possible for the end user.

In a similar way, HATEOAS provides the possibility to create semantically rich interfaces for machines and humans alike.

Links are flow control

When designing a simple application, the developer (or architect, if it is particularly complex) will make a number of choices to define the different tasks that a user can accomplish by using the application. Let us think for example about a web email client. The user of our client needs to be able to receive messages, read messages, write messages, and send messages. These are four simple actions that an email client needs to accomplish.

Because our email client application is web-based, the user will probably access it through a certain URL. The user will be presented with a login window to authenticate herself. After the user has authenticated, she will be presented with her list of unread messages. Each message can be opened through a link requesting the full text of the email.

Sound familiar? Each link in the application defines a task that the user can accomplish. Now let us think of the same application as an API.

An API of our web email client will contain all the actions of our application. The API itself will only respond to certain actions—for example, a given call will return the list of unread messages and a different call will return the latest unread message.

We can make this clearer with a few concrete examples. An HTTP GET request to api.ouremailclient.com/v1/client/list/unread returns a list of unread messages:

    {"inbox": {"messages": [
      {"id": "Dfvsafaf1479", "title": "example message", "sender": "john@rubyonrails.org", "received_on": "2014-08-19 03:14:07"},
      {"id": "8gryyfav13gT", "title": "hello from kathy", "sender": "kathy@wikipedia.org", "received_on": "2014-08-19 04:23:31"},
      {"id": "FrT3gqewQ2Ry", "title": "let's go surfing", "sender": "info@surfingvacation.net", "received_on": "2014-08-19 08:45:13"}
    ]}}

An HTTP GET request to api.ouremailclient.com/v1/client/messages/Dfvsafaf1479 returns the full text of a certain message identified with its ID:

    {"message": {
      "id": "Dfvsafaf1479",
      "title": "example message",
      "sender": "john@rubyonrails.org",
      "received_on": "2014-08-19 03:14:07",
      "text": "Hello, this is a sample message to test your new email. We hope it works!"
    }}

Although the API itself performs the actions, the logic that decides which call to make next, and in what order, would still have to live in some other software component, such as the client. Together, the two would allow us to perform all the functionality of a web application through a set of API calls.

What if a link to the next message was included in the resource representation? Following a simple next field would lead the user to the next unread message without having to go back to the list.
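Here is a minimal Ruby sketch of that idea. The `RESPONSES` table is a stand-in for the API, so no network call is made, and the `next` field, the `fetch_representation` helper, and the `unread_titles` method are all assumptions made for illustration.

```ruby
require "json"

BASE = "/v1/client/messages"

# Hypothetical message representations, keyed by the URI that identifies
# them. Each one carries a "next" link to the following unread message.
RESPONSES = {
  "#{BASE}/Dfvsafaf1479" => JSON.generate(
    { "message" => { "id" => "Dfvsafaf1479", "title" => "example message",
                     "next" => "#{BASE}/8gryyfav13gT" } }
  ),
  "#{BASE}/8gryyfav13gT" => JSON.generate(
    { "message" => { "id" => "8gryyfav13gT", "title" => "hello from kathy",
                     "next" => "#{BASE}/FrT3gqewQ2Ry" } }
  ),
  "#{BASE}/FrT3gqewQ2Ry" => JSON.generate(
    { "message" => { "id" => "FrT3gqewQ2Ry", "title" => "let's go surfing" } }
  )
}.freeze

# Stand-in for an HTTP GET; a real client would issue a request here.
def fetch_representation(uri)
  JSON.parse(RESPONSES.fetch(uri))
end

# Follow "next" links from a starting URI and collect the titles on the way.
# The client never builds a message URI itself; it only follows links.
def unread_titles(start_uri)
  titles = []
  uri = start_uri
  while uri
    message = fetch_representation(uri).fetch("message")
    titles << message["title"]
    uri = message["next"]
  end
  titles
end

puts unread_titles("#{BASE}/Dfvsafaf1479")
```

The only URI the client has to know in advance is the starting one; the rest of the path through the inbox is dictated by the representations it receives.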

Actions, or operation control

Now imagine that you would like to reply to a single message. You will have to send another request to another endpoint, specifying at least sender and title.

Although the action seems quite intuitive, especially if you are thinking about it from a programmer’s perspective, having to code the logic to create and send the message means that if something in the API changes, you will have to update your code so that your application can continue to function.

This is certainly not ideal. In a perfect world, you would only have to send a request specifying the text you would like to include in your reply message.

To accomplish this simple task, we could create a different endpoint called reply that takes the message ID and the text that the user would like to include and just sends the email. In this case the user, or the application using our API, would need to know the right endpoint in order to use it. However, this again means that if something changes in the endpoint, all the clients using it will need to be rewritten, even if only partially, in order for them to continue to work.

Another possibility is to include a link to the reply action in the message representation. This way a client would only need to send the text in the call to the reply endpoint. The client would not have to include any logic that specifies the endpoint for the reply call; it would just find it in the resource representation and call it as it calls any other resource in the API.
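A short Ruby sketch of this approach follows. The `links` key, the shape of the `reply` entry, and the `action_request` helper are hypothetical; they only illustrate a client that knows the name of an action but reads the endpoint and method from the representation.

```ruby
# Hypothetical message representation embedding its available actions.
REPLYABLE_MESSAGE = {
  "id" => "Dfvsafaf1479",
  "title" => "example message",
  "links" => {
    "reply" => { "href" => "/v1/client/messages/Dfvsafaf1479/reply", "method" => "POST" }
  }
}.freeze

# Build the request for a named action using only what the representation
# advertises. The client hardcodes the action name, never the endpoint.
def action_request(representation, action, payload)
  link = representation.fetch("links").fetch(action) do
    raise ArgumentError, "action not available: #{action}"
  end
  { method: link["method"], path: link["href"], body: payload }
end

puts action_request(REPLYABLE_MESSAGE, "reply", { "text" => "Thanks!" })
```

If the server later moves the reply endpoint, it only has to change the `href` it advertises; a client written this way keeps working without modification.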

Beyond HTTP

The goal of REST architecture, and hypermedia, is to go beyond the limitations of the Web as we have known it. When the Web was being developed, HTTP and web pages were said to bring semantics to a world of standalone documents. The experience of a user surfing the Web was described as that of our brain seeing an image and linking that image to something else we have known or done in the past, like a sound or a smell or a specific place on Earth.

Hypermedia builds on the definition of hypertext as interlinked information and on the HTTP protocol as the primary transfer protocol for the Web.

A RESTful application, or a REST API, should in fact be protocol independent. Rather than describing protocol details, the API’s descriptive efforts should be invested in the definition of the media types used to represent resources.

Hypermedia applications do not simply link objects and make HTTP calls, but also allow clients to interact with those objects through actions.

Ideally, a user would access a hypermedia API only through its main URL. It would then be the role of the server to present the user with the accessible resources and their possible operations.

Information is presented together with controls, allowing the user to act on it by selecting actions. Links, therefore, are only a tiny aspect of HATEOAS and REST.

Wrapping Up

This chapter explored the HTTP protocol, the architecture of the Web as a distributed hypermedia system, and the bases of RESTful architectures and hypermedia interfaces. In the next chapter, we will start building a Rails application: an API with actual data and some hypermedia flow control.
