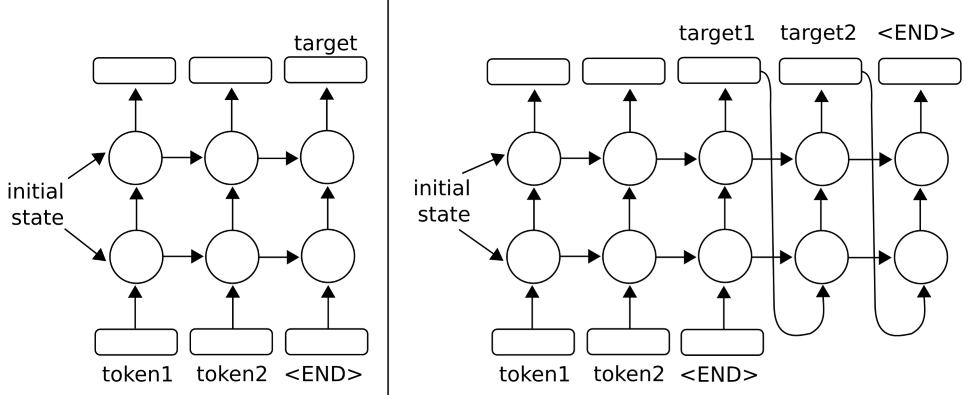

We can increase the depth of a recurrent neural network by stacking recurrent layers on top of each other. Essentially, the sequence of outputs (hidden states) that one recurrent layer produces at each time step becomes the input sequence of the next layer.
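A minimal NumPy sketch of this idea, assuming a simple tanh (Elman-style) recurrent cell, $h_t = \tanh(W_x x_t + W_h h_{t-1} + b)$. The helpers `rnn_layer`, `stacked_rnn`, and `init_layer` are hypothetical names for illustration, not a library API. Each layer's hidden-state sequence is passed as the input sequence of the next layer, so the second layer's input size must equal the first layer's hidden size.

```python
import numpy as np

def rnn_layer(inputs, W_x, W_h, b):
    """Run a simple tanh RNN (assumed cell type) over a sequence.

    inputs: array of shape (T, input_dim)
    returns: hidden states of shape (T, hidden_dim), one per time step
    """
    hidden_dim = W_h.shape[0]
    h = np.zeros(hidden_dim)  # initial hidden state h_0 = 0
    outputs = []
    for x_t in inputs:
        # New hidden state depends on the current input and the previous state
        h = np.tanh(W_x @ x_t + W_h @ h + b)
        outputs.append(h)
    return np.stack(outputs)

def stacked_rnn(inputs, layers):
    """Feed the hidden-state sequence of each layer into the next layer."""
    seq = inputs
    for W_x, W_h, b in layers:
        seq = rnn_layer(seq, W_x, W_h, b)
    return seq

def init_layer(rng, input_dim, hidden_dim):
    """Hypothetical initializer: small random weights, zero bias."""
    W_x = rng.normal(scale=0.1, size=(hidden_dim, input_dim))
    W_h = rng.normal(scale=0.1, size=(hidden_dim, hidden_dim))
    b = np.zeros(hidden_dim)
    return W_x, W_h, b

rng = np.random.default_rng(0)
T, input_dim, hidden1, hidden2 = 5, 3, 4, 2
x = rng.normal(size=(T, input_dim))

# Layer 2 takes layer 1's hidden states (size hidden1) as its inputs
layers = [init_layer(rng, input_dim, hidden1), init_layer(rng, hidden1, hidden2)]
out = stacked_rnn(x, layers)
print(out.shape)  # (5, 2)
```

The top layer still emits one vector per time step, so a prediction head can be attached to it exactly as it would be to a single-layer RNN.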

To get an idea of how this might work for just two layers, see the following diagram: