Chapter 1. Setup

Mise-en-place is the religion of all good line cooks…The universe is in order when your station is set up the way you like it: you know where to find everything with your eyes closed, everything you need during the course of the shift is at the ready at arm’s reach, your defenses are deployed.

Anthony Bourdain

There are a couple of ways I’ve gotten off on the wrong foot by not starting a project with the right tooling, resulting in lost time and plenty of frustration. In particular, I’ve made a proper hash of several computers by installing packages willy-nilly, rendering my system Python environment a toxic wasteland, and I’ve continued to use the default Python shell even though better alternatives are available. Modest up-front investments of time and effort to avoid these issues will pay huge dividends over your career as a Pythonista.

Polluting the System Python

One of Python’s great strengths is the vibrant community of developers producing useful third-party packages that you can quickly and easily install. But it’s not a good idea to just go wild installing everything that looks interesting, because you can quickly end up with a tangled mess where nothing works right.

By default, when you pip install (or in days of yore, easy_install) a package, it goes into your computer’s system-wide site-packages directory. Any time you fire up a Python shell or a Python program, you’ll be able to import and use that package.
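To see where packages actually land for a given interpreter, a minimal sketch like the following may help. It relies only on the standard library; the variable names purelib and package_dirs are illustrative, and the paths printed will differ from machine to machine.

```python
import sys
import sysconfig

# Directory where pure-Python third-party packages are installed
# for the interpreter that is running this script.
purelib = sysconfig.get_paths()["purelib"]

print("Interpreter:  ", sys.executable)
print("site-packages:", purelib)

# Entries on the import path that look like package install directories.
# (Some Linux distributions use "dist-packages" instead of "site-packages".)
package_dirs = [
    path for path in sys.path
    if path.endswith(("site-packages", "dist-packages"))
]
print("On sys.path:  ", package_dirs)
```

Running this with the system Python and again inside a virtual environment should show two different locations, which is the whole point of the isolation described below.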

That may feel okay at first, but once you start developing or working with multiple projects on that computer, you’re going to eventually have conflicts over package dependencies. Suppose project P1 depends on version 1.0 of library L, and project P2 uses version 4.2 of library L. If both projects have to be developed or deployed on the same machine, you’re practically guaranteed to have a bad day due to changes to the library’s interface or behavior; if both projects use the same site-packages, they cannot coexist! Even worse, on many Linux distributions, important system tooling is written in Python, so getting into this dependency management hell means you can break critical pieces of your OS.
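The P1 versus P2 conflict can be sketched in a few lines. This is only an illustration under stated assumptions: the distribution name and the helpers installed_version and satisfies_pin are hypothetical, and importlib.metadata requires Python 3.8 or later. The point is that one environment reports at most one installed version of a distribution, so two different exact pins cannot both be satisfied.

```python
from importlib import metadata

# Hypothetical distribution name standing in for "library L" from the text.
LIBRARY = "hypothetical-library-l"


def installed_version(dist_name):
    """Return the installed version of a distribution, or None if it is absent."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


def satisfies_pin(dist_name, pinned_version):
    """True only if exactly the pinned version is installed in this environment."""
    return installed_version(dist_name) == pinned_version


# Hypothetical pins: P1 wants 1.0, P2 wants 4.2.
p1_ok = satisfies_pin(LIBRARY, "1.0")
p2_ok = satisfies_pin(LIBRARY, "4.2")

print("P1 satisfied:", p1_ok)
print("P2 satisfied:", p2_ok)
```

Whatever is installed, p1_ok and p2_ok can never both be true in a single shared site-packages.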

The solution for this is to use so-called virtual environments. When you create a virtual environment (or “virtual env”), you have a separate Python environment outside of the system Python: the virtual environment has its own site-packages directory, but shares the standard library and whatever Python binary you pointed it at during creation. (You can even have some virtual environments using Python 2 and others using Python 3, if that’s what you need!)

For Python 2, you’ll need to install virtualenv by running pip install virtualenv, while Python 3 now includes the same functionality out-of-the-box.

To create a virtual environment in a new directory, all you need to do is run one command, though it will vary slightly based on your choice of OS (Unix-like versus Windows) and Python version (2 or 3). For Python 2, you’ll use:

virtualenv <directory_name>

while for Python 3, on Unix-like systems it’s:

pyvenv <directory_name>

and for Python 3 on Windows:

pyvenv.py <directory_name>

Note

Windows users will also need to adjust their PATH to include the location of their system Python and its scripts; this procedure varies slightly between versions of Windows, and the exact setting depends on the version of Python. For a standard installation of Python 3.4, for example, the PATH should include:

C:\Python34\;C:\Python34\Scripts\;C:\Python34\Tools\Scripts

This creates a new directory with everything the virtual environment needs: lib (Lib on Windows) and include subdirectories for supporting library files, and a bin subdirectory (Scripts on Windows) with scripts to manage the virtual environment and a symbolic link to the appropriate Python binary. It also installs the pip and setuptools modules in the virtual environment so that you can easily install additional packages.
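Python 3’s built-in functionality lives in the standard-library venv module, which can also be driven from code. The sketch below creates a throwaway environment in a temporary directory and lists what ends up inside it; the directory name demo-env is arbitrary, and with_pip=False is used only to keep the example fast (pass with_pip=True to bootstrap pip as described above). Note that the pyvenv script was deprecated in Python 3.6 and later removed, so on recent versions the command-line equivalent is python3 -m venv <directory_name>.

```python
import tempfile
import venv
from pathlib import Path

# Build the environment in a temporary directory so nothing is left behind.
with tempfile.TemporaryDirectory() as tmp:
    env_dir = Path(tmp) / "demo-env"  # arbitrary name for this sketch

    # with_pip=False skips bootstrapping pip, which keeps the demo quick.
    venv.create(env_dir, with_pip=False)

    # Collect the top-level layout before the temporary directory is removed.
    top_level = sorted(entry.name for entry in env_dir.iterdir())
    has_config = (env_dir / "pyvenv.cfg").is_file()

print("Top-level entries:", top_level)
print("pyvenv.cfg present:", has_config)
```

The listing should include the bin (or Scripts) directory mentioned above, along with a pyvenv.cfg file that records which base Python the environment was created from.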

Once the virtual environment has been created, you’ll need to navigate into that directory and “activate” the virtual environment by running a small shell script. This script tweaks the environment variables necessary to use the virtual environment’s Python and site-packages. If you use the Bash shell, you’ll run:

source bin/activate

Windows users will run:

Scripts\activate.bat

Equivalents are also provided for the Csh and Fish shells on Unix-like systems, as well as PowerShell on Windows. Once activated, the virtual environment is isolated from your system Python—any packages you install are independent from the system Python as well as from other virtual environments.
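If you are ever unsure whether a virtual environment is in effect, you can ask the interpreter. The following is a minimal sketch, not an official API: the function name in_virtual_environment is made up for illustration. It relies on sys.prefix differing from sys.base_prefix inside an environment created by Python 3’s venv, on the sys.real_prefix attribute that older virtualenv releases set, and on the VIRTUAL_ENV variable that the activate scripts export.

```python
import os
import sys


def in_virtual_environment():
    """Best-effort check for whether this interpreter runs inside a virtual env."""
    # Older virtualenv releases record the original prefix in sys.real_prefix.
    if hasattr(sys, "real_prefix"):
        return True
    # Python 3's venv leaves base_prefix pointing at the base installation.
    base_prefix = getattr(sys, "base_prefix", sys.prefix)
    return sys.prefix != base_prefix


print("Inside a virtual environment:", in_virtual_environment())
print("sys.prefix:", sys.prefix)
# Set by the activate script; absent if the environment was never activated.
print("VIRTUAL_ENV:", os.environ.get("VIRTUAL_ENV"))
```

The interpreter check works even when the environment’s Python is invoked directly without activation, whereas VIRTUAL_ENV only reflects what the current shell has activated.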

When you are done working in that virtual environment, the deactivate command will revert to using the default Python again.

As you might guess, I used to think that all this virtual environment stuff was too many moving parts, way too complicated, and I would never need to use it. After causing myself significant amounts of pain, I’ve changed my tune. Installing virtualenv for working with Python 2 code is now one of the first things I do on a new computer.

Tip

If you have more advanced needs and find that pip and virtualenv don’t quite cut it for you, you may want to consider Conda as an alternative for managing packages and environments. (I haven’t needed it; your mileage may vary.)

Using the Default REPL

When I started with Python, one of the first features I fell in love with was the interactive shell, or REPL (short for Read Evaluate Print Loop). By just firing up an interactive shell, I could explore APIs, test ideas, and sketch out solutions, without the overhead of having a larger program in progress. Its immediacy reminded me fondly of my first programming experiences on the Apple II. Nearly 16 years later, I still reach for that same Python shell when I want to try something out…which is a shame, because there are far better alternatives that I should be using instead.

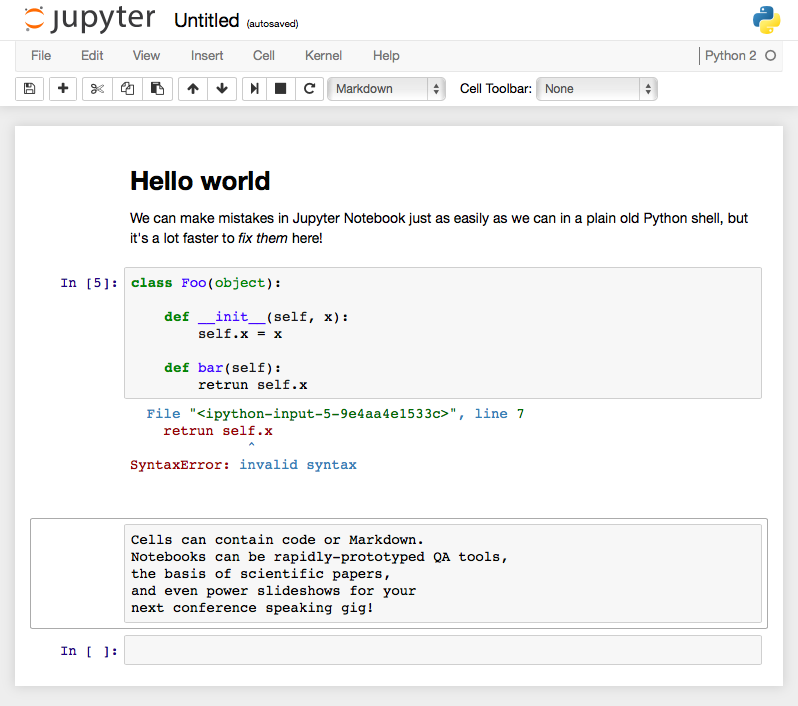

The most notable of these are IPython and the browser-based Jupyter Notebook (formerly known as IPython Notebook), which have spurred a revolution in the scientific computing community. The powerful IPython shell offers features like tab completion, easy and humane ways to explore objects, an integrated debugger, and the ability to easily review and edit the history you’ve executed. The Notebook takes the shell even further, providing a compelling web browser experience that can easily combine code, prose, and diagrams, and which enables low-friction distribution and sharing of code and data.

The plain old Python shell is an okay starting place, and you can get a lot done with it, as long as you don’t make any mistakes. My experiences tend to look something like this:

    >>> class Foo(object):
    ...     def __init__(self, x):
    ...         self.x = x
    ...     def bar(self):
    ...         retrun self.x
      File "<stdin>", line 5
        retrun self.x
                  ^
    SyntaxError: invalid syntax

Okay, I can fix that without retyping everything; I just need to go back into history with the up arrow, so that’s…

Up arrow. Up. Up. Up. Up. Enter.

Up. Up. Up. Up. Up. Enter. Up. Up. Up. Up. Up. Enter. Up. Up. Up. Up. Up. Enter.

Up. Up. Up. Up. Up. Enter.

Then I get the same SyntaxError because I got into a rhythm and pressed Enter without fixing the error first. Whoops!

Then I repeat this cycle several times, each iteration punctuated with increasingly sour cursing.

Eventually I’ll get it right, then realize I need to add some more things to the __init__, and have to re-create the entire class again, and then again, and again, and oh, the regrets I will feel for having reached for the wrong tool out of my old, hard-to-shed habits.

If I’d been working with the Jupyter Notebook, I’d just change the error directly in the cell containing the code, without any up-arrow shenanigans, and be on my way in seconds (see Figure 1-1).

Figure 1-1. The Jupyter Notebook gives your browser super powers!
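For reference, here is what the class from the failed shell session looks like once the retrun typo is corrected. The only fix is the keyword; the value 42 and the variable name foo in the usage lines are arbitrary choices for illustration.

```python
class Foo(object):
    def __init__(self, x):
        self.x = x

    def bar(self):
        # The shell session above misspelled this keyword as "retrun".
        return self.x


foo = Foo(42)
print(foo.bar())
```

In a notebook cell or an IPython session, making that one-word change and re-running the definition takes a single edit rather than a retyped class.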

It takes just a little bit of extra effort and forethought to install and learn your way around one of these more sophisticated REPLs, but the sooner you do, the happier you’ll be.
