Chapter 4. Creating the Fibonacci Sequence: Writing, Testing, and Benchmarking Algorithms

Writing an implementation of the Fibonacci sequence is another step in the hero’s journey to becoming a coder. The Rosalind Fibonacci description notes that the genesis for the sequence was a mathematical simulation of breeding rabbits that relies on some important (and unrealistic) assumptions:

-

The first month starts with a pair of newborn rabbits.

-

Rabbits can reproduce after one month.

-

Every month, every rabbit of reproductive age mates with another rabbit of reproductive age.

-

Exactly one month after two rabbits mate, they produce a litter of the same size.

-

Rabbits are immortal and never stop mating.

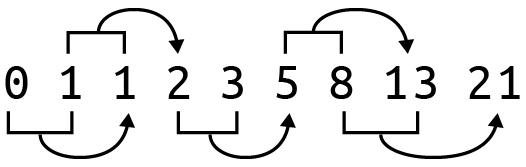

The sequence always begins with the numbers 0 and 1. The subsequent numbers can be generated ad infinitum by adding the two immediately previous values in the list, as shown in Figure 4-1.

Figure 4-1. The first eight numbers of the Fibonacci sequence—after the initial 0 and 1, subsequent numbers are created by adding the two previous numbers

If you search the internet for solutions, you’ll find dozens of different ways to generate the sequence. I want to focus on three fairly different approaches. The first solution uses an imperative approach where the algorithm strictly defines every step. The next solution uses a generator function, and the last will focus on a recursive solution. ...

Become an O’Reilly member and get unlimited access to this title plus top books and audiobooks from O’Reilly and nearly 200 top publishers, thousands of courses curated by job role, 150+ live events each month,

and much more.

Read now

Unlock full access