Chapter 14. Singular Value Decomposition

The previous chapter was really dense! I tried my best to make it comprehensible and rigorous, without getting too bogged down in details that have less relevance for data science.

Fortunately, most of what you learned about eigendecomposition applies to the SVD. That means that this chapter will be easier and shorter.

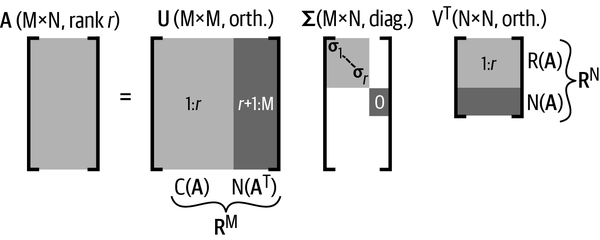

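The purpose of the SVD is to decompose a matrix into the product of three matrices, called the left singular vectors ($\mathbf{U}$), the singular values ($\mathbf{\Sigma}$), and the right singular vectors ($\mathbf{V}$):

$$\mathbf{A} = \mathbf{U}\mathbf{\Sigma}\mathbf{V}^{\mathrm{T}}$$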

This decomposition should look similar to eigendecomposition. In fact, you can think of the SVD as a generalization of eigendecomposition to nonsquare matrices—or you can think of eigendecomposition as a special case of the SVD for square matrices.1
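To make the nonsquare case concrete, here is a minimal sketch in NumPy, assuming the conventional notation $\mathbf{A} = \mathbf{U}\mathbf{\Sigma}\mathbf{V}^{\mathrm{T}}$. The matrix `A`, its size, and the random seed are made up for illustration; the only library call relied on is `np.linalg.svd`.

```python
import numpy as np

# A hypothetical 4x6 (nonsquare) matrix; the values are arbitrary
rng = np.random.default_rng(0)
A = rng.standard_normal((4, 6))

# np.linalg.svd returns U, the singular values as a 1D vector,
# and the right singular vectors already transposed (V^T)
U, s, Vt = np.linalg.svd(A)

# Put the singular values on the diagonal of a matrix the same shape as A
S = np.zeros(A.shape)
S[:len(s), :len(s)] = np.diag(s)

print(U.shape, S.shape, Vt.shape)  # (4, 4) (4, 6) (6, 6)
print(np.allclose(A, U @ S @ Vt))  # True: the three matrices multiply back to A
```

Note that NumPy returns the singular values as a vector rather than as a matrix, so the sketch rebuilds the rectangular diagonal matrix by hand before checking the product.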

The singular values are comparable to eigenvalues, while the singular vector matrices are comparable to eigenvectors (these two sets of quantities are the same under some circumstances that I will explain later).
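As a quick numerical illustration, one circumstance in which the singular values are known to equal the eigenvalues is a symmetric positive semidefinite matrix. The sketch below assumes that case only and is not meant to cover every circumstance; the matrix `B` is a hypothetical example built as a matrix times its own transpose.

```python
import numpy as np

# Build a hypothetical symmetric positive semidefinite matrix
rng = np.random.default_rng(0)
M = rng.standard_normal((4, 6))
B = M @ M.T  # 4x4, symmetric by construction

# Singular values come back sorted in descending order
s = np.linalg.svd(B, compute_uv=False)

# eigh is the eigendecomposition routine for symmetric matrices;
# it returns eigenvalues in ascending order, so reverse them
evals = np.linalg.eigh(B)[0][::-1]

print(np.allclose(s, evals))  # True for this symmetric PSD matrix
```

For a general square matrix the two sets of numbers usually differ, which is why the comparison here is restricted to this special case.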

The Big Picture of the SVD

I want to introduce you to the idea and interpretation of the matrices, and then later in the chapter I will explain how to compute the SVD.

Figure 14-1 shows the overview of the SVD.

Figure 14-1. The big picture of the SVD

Many important features of the SVD are visible in this diagram; I will go into these features in more detail throughout ...
