Chapter 1. Introduction to Data Wrangling and Data Quality

These days it seems like data is the answer to everything: we use the data in product and restaurant reviews to decide what to buy and where to eat; companies use the data about what we read, click, and watch to decide what content to produce and which advertisements to show; recruiters use data to decide which applicants get job interviews; the government uses data to decide everything from how to allocate highway funding to where your child goes to school. Data—whether it’s a basic table of numbers or the foundation of an “artificial intelligence” system—permeates our lives. The pervasive impact that data has on our experiences and opportunities every day is precisely why data wrangling is—and will continue to be—an essential skill for anyone interested in understanding and influencing how data-driven systems operate. Likewise, the ability to assess—and even improve—data quality is indispensable for anyone interested in making these sometimes (deeply) flawed systems work better.

Yet because the terms data wrangling and data quality both mean different things to different people, we’ll begin this chapter with a brief overview of the three main topics addressed in this book: data wrangling, data quality, and the Python programming language. The goal of this overview is to give you a sense of my approach to these topics, partly so you can determine if this book is right for you. After that, we’ll spend some time on the necessary logistics of how to access and configure the software tools and other resources you’ll need to follow along with and complete the exercises in this book. Though all of the resources that this book will reference are free to use, many programming books and tutorials take for granted that readers will be coding on (often quite expensive) computers that they own. Since I really believe that anyone who wants to can learn to wrangle data with Python, however, I wanted to make sure that the material in this book can work for you even if you don’t have access to a full-featured computer of your own. To help ensure this, all of the solutions you’ll find here and in the following chapters were written and tested on a Chromebook; they can also be run with free, online-only tools on either your own device or a shared computer, for example, at school or a public library. I hope that illustrating how accessible not just the knowledge but also the tools of data wrangling can be will encourage you to explore this exciting and empowering practice.

What Is “Data Wrangling”?

Data wrangling is the process of taking “raw” or “found” data and transforming it into something that can be used to generate insight and meaning. Driving every substantive data wrangling effort is a question: something about the world you want to investigate or learn more about. Of course, if you came to this book because you’re really excited about learning to program, then data wrangling can be a great way to get started, but let me urge you now not to try to skip straight to the programming without engaging the data quality processes in the chapters ahead. As much as data wrangling may benefit from programming skills, it is about much more than simply learning how to access and manipulate data; it’s about making judgments, inferences, and selections. As this book will illustrate, most data that is readily available is not especially good quality, so there’s no way to do data wrangling without making choices that will influence the substance of the resulting data. To attempt data wrangling without considering data quality is like trying to drive a car without steering: you may get somewhere (and fast!), but it’s probably nowhere you want to be. If you’re going to spend time wrangling and analyzing data, you want to try to make sure it’s at least likely to be worth the effort.

Just as importantly, though, there’s no better way to learn a new skill than to connect it to something you genuinely want to get “right,” because that personal interest is what will carry you through the inevitable moments of frustration. This doesn’t mean that the question you choose has to be something of global importance. It can be a question about your favorite video games, bands, or types of tea. It can be a question about your school, your neighborhood, or your social media life. It can be a question about economics, politics, faith, or money. It just has to be something that you genuinely care about.

Once you have your question in hand, you’re ready to begin the data wrangling process. While the specific steps may need adjusting (or repeating) depending on your particular project, in principle data wrangling involves some or all of the following steps:

1. Locating or collecting data

2. Reviewing the data

3. “Cleaning,” standardizing, transforming, and/or augmenting the data

4. Analyzing the data

5. Visualizing the data

6. Communicating the data

The time and effort required for each of these steps, of course, can vary considerably: if you’re looking to speed up a data wrangling task you already do for work, you may already have a dataset in hand and know basically what it contains. Then again, if you’re trying to answer a question about city spending in your community, collecting the data may be the most challenging part of your project.
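
To make the first few steps a little more concrete, here is a minimal sketch of collecting, reviewing, cleaning, and analyzing a tiny dataset using only Python’s built-in tools. Everything in it is an assumption made for illustration: the tea ratings are invented, the function names are my own, and the decision to drop rows with a missing rating is just one of several judgment calls you could reasonably make.

```python
import csv
import io
from statistics import mean

# Hypothetical "found" data: made-up tea ratings with the kinds of
# inconsistencies (stray spaces, mixed case, a missing value) real data has.
RAW_DATA = """tea,rating
Oolong,4
 oolong ,5
Assam,3
assam,
Sencha,5
"""


def collect(raw_text):
    """Locate/collect: read the raw text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(raw_text)))


def review(rows):
    """Review: count the rows and the rows that have no rating."""
    missing = [row for row in rows if not row["rating"].strip()]
    return {"row_count": len(rows), "missing_ratings": len(missing)}


def clean(rows):
    """Clean/standardize: tidy the tea names and drop rows without a rating."""
    cleaned = []
    for row in rows:
        rating = row["rating"].strip()
        if not rating:
            continue  # a judgment call: incomplete rows are skipped here
        cleaned.append({"tea": row["tea"].strip().title(), "rating": int(rating)})
    return cleaned


def analyze(rows):
    """Analyze: compute the average rating for each tea."""
    ratings = {}
    for row in rows:
        ratings.setdefault(row["tea"], []).append(row["rating"])
    return {tea: mean(values) for tea, values in ratings.items()}


rows = collect(RAW_DATA)
summary = review(rows)
averages = analyze(clean(rows))
print(summary)
print(averages)
```

Notice that the cleaning step silently changes the answer: dropping the incomplete “assam” row and merging the two spellings of “oolong” are both choices that shape the result.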

Also, know that, despite my having numbered the preceding list, the data wrangling process is really more of a cycle than it is a linear set of steps. More often than not, you’ll need to revisit earlier steps as you learn more about the meaning and context of the data you’re working with. For example, as you analyze a large dataset, you may come across surprising patterns or values that cause you to question assumptions you may have made about it during the “review” step. This will almost always mean seeking out more information, either from the original data source or from completely new ones, in order to understand what is really happening before you can move on with your analysis or visualization. Finally, while I haven’t explicitly included it in the list, it would be a little more accurate to start each of the steps with “Researching and.” While the “wrangling” parts of our work will focus largely on the dataset(s) we have in front of us, the “quality” part is almost all about research and context, and both of these are integral to every stage of the data wrangling process.

If this all seems a little overwhelming right now—don’t worry! The examples in this book are built around real datasets, and as you follow along with coding and quality-assessment processes, this will all begin to feel much more organic. And if you’re working through your own data wrangling project and start to feel a little lost, just keep reminding yourself of the question you are trying to answer. Not only will that remind you why you’re bothering to learn about all the minutiae of data formats and API access keys,1 it will also almost always lead you intuitively to the next “step” in the wrangling process—whether that means visualizing your data or doing just a little more research in order to improve its context and quality.

What Is “Data Quality”?

There is plenty of data out in the world and plenty of ways to access and collect it. But not all data is created equal. Understanding data quality is an essential part of data wrangling because any data-driven insight can only be as good as the data it was built upon.2 So if you’re trying to use data to understand something meaningful about the world, you have to first make sure that the data you have accurately reflects that world. As we’ll see in later chapters (Chapters 3 and 6, in particular), the work of improving data quality is almost never as clear-cut as the often tidy-looking, neatly labeled rows and columns of data you’ll be working with.

That’s because—despite the use of terms like machine learning and artificial intelligence—the only thing that computational tools can do is follow the directions given to them, using the data provided. And even the most complex, sophisticated, and abstract data is irrevocably human in its substance, because it is the result of human decisions about what to measure and how. Moreover, even today’s most advanced computer technologies make “predictions” and “decisions” via what amounts to large-scale pattern matching—patterns that exist in the particular selections of data that the humans “training” them provide. Computers do not have original ideas or make creative leaps; they are fundamentally bad at many tasks (like explaining the “gist” of an argument or the plot of a story) that humans find intuitive. On the other hand, computers excel at performing repetitive calculations, very very fast, without getting bored, tired, or distracted. In other words, while computers are a fantastic complement to human judgment and intelligence, they can only amplify them—not substitute for them.

What this means is that it is up to the humans involved in data collection, acquisition, and analysis to ensure its quality so that the outputs of our data work actually mean something. While we will go into significant detail around data quality in Chapter 3, I do want to introduce two distinct (though equally important) axes for evaluating data quality: (1) the integrity of the data itself, and (2) the “fit” or appropriateness of the data with respect to a particular question or problem.

Data Integrity

For our purposes, the integrity of a dataset is evaluated using the data values and descriptors that make it up. If our dataset includes measurements over time, for example, have they been recorded at consistent intervals or sporadically? Do the values represent direct individual readings, or are only averages available? Is there a data dictionary that provides details about how the data was collected, recorded, or should be interpreted—for example, by providing relevant units? In general, data that is complete, atomic, and well-annotated—among other things—is considered to be of higher integrity because these characteristics make it possible to do a wider range of more conclusive analyses. In most cases, however, you’ll find that a given dataset is lacking on any number of data integrity dimensions, meaning that it’s up to you to try to understand its limitations and improve it where you can. While this often means augmenting a given dataset by finding others that can complement, contextualize, or extend it, it almost always means looking beyond “data” of any kind and reaching out to experts: the people who designed the data, collected it, have worked with it previously, or know a lot about the subject area your data is supposed to address.
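
As a small illustration of the first of those questions, the sketch below checks whether a series of measurements was recorded at consistent intervals and counts how many values are missing. The readings, the timestamp format, and the `interval_report` function are all hypothetical; a real dataset would need its own parsing rules, ideally guided by its data dictionary.

```python
from datetime import datetime

# Hypothetical readings: (timestamp, value) pairs invented for illustration.
readings = [
    ("2021-03-01 09:00", 12.1),
    ("2021-03-01 10:00", 12.4),
    ("2021-03-01 11:00", None),  # a reading was scheduled, but no value recorded
    ("2021-03-01 13:00", 13.0),  # the 12:00 reading is absent entirely
]


def interval_report(rows):
    """Summarize the gaps between readings and count the missing values."""
    times = [datetime.strptime(stamp, "%Y-%m-%d %H:%M") for stamp, _ in rows]
    # Pair each timestamp with the one that follows it to measure the gap.
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    gap_minutes = sorted({int(gap.total_seconds() // 60) for gap in gaps})
    return {
        "distinct_intervals_minutes": gap_minutes,
        "missing_values": sum(1 for _, value in rows if value is None),
    }


report = interval_report(readings)
print(report)
```

More than one distinct interval, or any missing values, doesn’t make the data unusable; it simply tells you where to start asking questions.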

Data “Fit”

Even a dataset that has excellent integrity, however, cannot be considered high quality unless it is also appropriate for your particular purpose. Let’s say, for example, that you were interested in knowing which Citi Bike station has had the most bikes rented and returned in a given 24-hour period. Although the real-time Citi Bike API contains high-integrity data, it’s poorly suited to answering the particular question of which Citi Bike station has seen the greatest turnover on a given date. In this case, you would be much better off trying to answer this question using the Citi Bike “trip history” data.
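
To see why trip-level records fit this question, consider the rough sketch below, which counts how many trips started or ended at each station. The trip records, station names, and the `start` and `end` field names are invented for illustration and are not the actual layout of the Citi Bike data.

```python
from collections import Counter

# Made-up trip records for a single day; the station names and the
# "start"/"end" field names are illustrative assumptions only.
trips = [
    {"start": "Station A", "end": "Station B"},
    {"start": "Station B", "end": "Station A"},
    {"start": "Station A", "end": "Station C"},
    {"start": "Station C", "end": "Station A"},
]


def station_turnover(trip_rows):
    """Count rentals plus returns for each station."""
    turnover = Counter()
    for trip in trip_rows:
        turnover[trip["start"]] += 1  # a bike was rented here
        turnover[trip["end"]] += 1    # a bike was returned here
    return turnover


turnover = station_turnover(trips)
busiest_station, busiest_count = turnover.most_common(1)[0]
print(busiest_station, busiest_count)
```

A snapshot of how many bikes are docked at each station right now could not support this count, no matter how accurate it is, because it records states rather than events.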

Of course, it’s rare that a data fit problem can be solved so simply; often we have to do a significant amount of integrity work before we can know with confidence that our dataset is actually fit for our selected question or project. There’s no way to bypass this time investment, however: shortcuts when it comes to either data integrity or data fit will inevitably compromise the quality and relevance of your data wrangling work overall. In fact, many of the harms caused by today’s computational systems are related to problems of data fit. For example, using data that describes one phenomenon (such as income) to try to answer questions about a potentially related (but fundamentally different) phenomenon (like educational attainment) can lead to distorted conclusions about what is happening in the world, with sometimes devastating consequences. In some instances, of course, using such proxy measures is unavoidable. An initial medical diagnosis based on a patient’s observable symptoms may be required to provide emergency treatment until the results of a more definitive test are available. While such substitutions are sometimes acceptable at the individual level, however, the gap between any proxy measure and the real phenomenon multiplies with the scale of the data and the system it is used to power. When this happens, we end up with a massively distorted view of the very reality our data wrangling and analysis hoped to illuminate. Fortunately, there are a number of ways to protect against these types of errors, as we’ll explore further in Chapter 3.

Why Python?

If you’re reading this book, chances are you’ve already heard of the Python programming language, and you may even be pretty certain that it’s the right tool for starting—or expanding—your work on data wrangling. Even if that’s the case, I think it’s worth briefly reviewing what makes Python especially suited to the type of data wrangling and quality work that we’ll do in this book. Of course if you haven’t heard of Python before, consider this an introduction to what makes it one of the most popular and powerful programming languages in use today.

Versatility

Perhaps one of the greatest strengths of Python as a general programming language is its versatility: it can be easily used to access APIs, scrape data from the web, perform statistical analyses, and generate meaningful visualizations. While many other programming languages do some of these things, few do all of them as well as Python.

Accessibility

One of Python creator Guido van Rossum’s goals in designing the language was to make “code that is as understandable as plain English”. Python uses English keywords where many other scripting languages (like R and JavaScript) use punctuation. For English-language readers, then, Python may be both easier and more intuitive to learn than other scripting languages.
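
Here is a short sketch of what those English keywords look like in practice. The tea list and the `describe` function are made up for illustration; the point is only that the conditions use words like `in`, `and`, `or`, and `not` where a language like JavaScript would use symbols such as `&&`, `||`, and `!`.

```python
favorite_teas = ["oolong", "assam", "sencha"]


def describe(tea, rating):
    """Describe a tea using conditions that read almost like sentences."""
    if tea in favorite_teas and rating >= 4:
        return "an old favorite"
    elif tea not in favorite_teas and rating >= 4:
        return "a new discovery"
    else:
        return "not for me"


def is_weekend(day):
    """Return True if the day is Saturday or Sunday."""
    return day == "Saturday" or day == "Sunday"


print(describe("oolong", 5))
print(describe("chai", 4))
print(is_weekend("Tuesday"))
```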

Readability

One of the core tenets of the Python programming language is that “readability counts”. In most programming languages, the visual layout of the code is irrelevant to how it functions—as long as the “punctuation” is correct, the computer will understand it. Python, by contrast, is what’s known as “whitespace dependent”: without proper tab and/or space characters indenting the code, it actually won’t do anything except produce a bunch of errors. While this can take some getting used to, it enforces a level of readability in Python programs that can make reading other people’s code (or, more likely, your own code after a little time has passed) much less difficult. Another aspect of readability is commenting and otherwise documenting your work, which I’ll address in more detail in “Documenting, Saving, and Versioning Your Work”.
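
To see whitespace dependence in action, the sketch below asks Python to check two nearly identical snippets of code, one indented properly and one not. The snippets and the `check_layout` helper are my own illustration; the exact wording of the error message varies between Python versions, so the example reports it rather than assuming it.

```python
# Two versions of the same loop, stored as text so we can check them safely.
well_indented = """
for number in range(3):
    print(number)
"""

badly_indented = """
for number in range(3):
print(number)
"""


def check_layout(source):
    """Try to compile the source text and report any indentation problem."""
    try:
        compile(source, "<example>", "exec")
        return "ok"
    except IndentationError as error:
        return f"IndentationError: {error.msg}"


print(check_layout(well_indented))
print(check_layout(badly_indented))
```

The only difference between the two snippets is four space characters, yet only the first one is valid Python.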

Community

Python has a very large and active community of users, many of whom help create and maintain “libraries” of code that enormously expand what you can quickly accomplish with your own Python code. For example, Python has popular and well-developed code libraries like NumPy and Pandas that can help you clean and analyze data, as well as others like Matplotlib and Seaborn to create visualizations. There are even powerful libraries like Scikit-Learn and NLTK that can do the heavy lifting of machine learning and natural language processing. Once you have a handle on the essentials of data wrangling with Python that we’ll cover in this book (in which we will use many of the libraries just mentioned), you’ll probably find yourself eager to explore what’s possible with many of these libraries and just a few lines of code. Fortunately, the same folks who write the code for these libraries often write blog posts, make video tutorials, and share code samples that you can use to expand your Python work.

Similarly, the size and enthusiasm of the Python community means that finding answers to both common (and even not-so-common) problems and errors that you may encounter is easy—detailed solutions are often posted online. As a result, troubleshooting Python code can be easier than for more specialized languages with a smaller community of users.

Python Alternatives

Although Python has much to recommend it, you may also be considering other tools for your data-wrangling needs. The following is a brief overview of some tools you may have heard of, along with why I chose Python for this work instead:

- R

The R programming language is probably Python’s nearest competitor for data work, and many teams and organizations rely on R for its combination of data wrangling, advanced statistical modeling, and visualization capabilities. At the same time, R lacks some of the accessibility and readability of Python.

- SQL

Structured Query Language (SQL) is just that: a language designed to “slice and dice” database data. While SQL can be powerful and useful, it requires data to exist in a particular format to be useful and is therefore of limited use for “wrangling” data in the first place.

- Scala

Although Scala is well suited for dealing with large datasets, it has a much steeper learning curve than Python, and a much smaller user community. The same is true of Julia.

- Java, C/C++

While these have large user communities and are very versatile, they lack the natural language and readability bent of Python and are oriented more toward building software than doing data wrangling and analysis.

- JavaScript

In a web-based environment, JavaScript is invaluable, and many popular visualization tools (e.g., D3) are built using variations of JavaScript. At the same time, JavaScript does not have the same breadth of data analysis features as Python and is generally slower.

Writing and “Running” Python

To follow along with the exercises in this book, you’ll need to get familiar with the tools that will help you write and run your Python code; you’ll also want a system for backing up and documenting your code so you don’t lose valuable work to an errant keystroke,5 and so that you can easily remind yourself what all that great code can do, even when you haven’t looked at it for a while. Because there are multiple toolsets for solving these problems, I recommend that you start by reading through the following sections and then choosing the approach (or combination of approaches) that works best for your preferences and resources. At a high level, the key decisions will be whether you want to work “online only”—that is, with tools and services you access via the internet—or whether you can and want to be able to do Python work without an internet connection, which requires installing these tools on a device that you control.

We all write differently depending on context: you probably use a different style and structure when writing an email than when sending a text message; for a job application cover letter you may use a whole different tone entirely. I know I also use different tools to write depending on what I need to accomplish: I use online documents when I need to write and edit collaboratively with coworkers and colleagues, but I prefer to write books and essays in a super-plain text editor that lives on my device. More particular document formats, like PDFs, are typically used for contracts and other important documents that we don’t want others to be able to easily change.

Just like natural human languages, Python can be written in different types of documents, each of which supports slightly different styles of writing, testing, and running your code. The primary types of Python documents are notebooks and standalone files. While either type of document can be used for data wrangling, analysis, and visualization, they have slightly different strengths and requirements. Since it takes some tweaking to convert one format to the other, I’ve made the exercises in this book available in both formats. I did this not only to give you the flexibility of choosing the document type that you find easiest or most useful but also so that you can compare them and see for yourself how the translation process affects the code. Here’s a brief overview of these document types to help you make an initial choice:

- Notebooks

A Python notebook is an interactive document used to run chunks of code, using a web browser window as an interface. In this book, we’ll be using a tool called “Jupyter” to create, edit, and execute our Python notebooks.6 A key advantage of using notebooks for Python programming is that they offer a simple way to write, run, and document your Python code all in one place. You may prefer notebooks if you’re looking for a more “point and click” programming experience or if working entirely online is important to you. In fact, the same Python notebooks can be used on your local device or in an online coding environment with minimal changes, meaning that this option may be right for you if you (1) don’t have access to a device where you’re able to install software, or (2) you can install software but you also want to be able to work on your code when you don’t have your machine with you.

- Standalone files

A standalone Python file is really any plain-text file that contains Python code. You can create such standalone Python files using any basic text editor, though I strongly recommend that you use one specifically designed for working with code, like Atom (I’ll walk through setting this up in “Installing Python, Jupyter Notebook, and a Code Editor”). While the software you choose for writing and editing your code is up to you, in general the only place you’ll be able to run these standalone Python files is on a physical device (like a computer or phone) that has the Python programming language installed. You (and your computer) will be able to recognize standalone Python files by their .py file extension. Although they might seem more restrictive at first, standalone Python files can have some advantages. You don’t need an internet connection to run standalone files, and they don’t require you to upload your data to the cloud. While both of those things are also true of locally run notebooks, you also don’t have to wait for any software to start up when running standalone files. Once you have Python installed, you can run standalone Python files instantly from the command line (more on this shortly)—this is especially useful if you have a Python script that you need to run on a regular basis. And while notebooks’ ability to run bits of code independently of one another can make them feel a bit more approachable, the fact that standalone Python files also always run your code “from scratch” can help you avoid the errors or unpredictable results that can occur if you run bits of notebook code out of order.

Of course, you don’t have to choose just one or the other; many people find that notebooks are especially useful for exploring or explaining data (thanks to their interactive and reader-friendly format), while standalone files are better suited for accessing, transforming, and cleaning data (since standalone files can more quickly and easily run the same code on different datasets, for example). Perhaps the bigger question is whether you want to work online or locally. If you don’t have a device where you can install Python, you’ll need to work in cloud-based notebooks; otherwise you can choose to use either (or both!) notebooks or standalone files on your device. As noted previously, notebooks that can be used either online or locally, as well as standalone Python files, are available for all the exercises in this book to give you as much flexibility as possible, and also so you can compare how the same tasks get done in each case!
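
If you’ve never seen one, a standalone Python file can be as small as the sketch below. The filename `hello_wrangling.py` and the functions in it are hypothetical, chosen only to show the general shape of a script that runs “from scratch,” top to bottom, every time.

```python
# hello_wrangling.py (a hypothetical filename; any name ending in .py works)
import sys


def greeting(name):
    """Build the message that this script prints."""
    return f"Hello, {name}! Ready to wrangle some data?"


def main(arguments):
    """Use the first command-line argument as a name, if one was given."""
    name = arguments[0] if arguments else "world"
    print(greeting(name))


# This check is True when the file is run directly as a script,
# and False when another Python file imports it.
if __name__ == "__main__":
    main(sys.argv[1:])
```

Saved in a file with that name, this could be run from the command line with `python hello_wrangling.py`, optionally followed by a name.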

Working with Python on Your Own Device

For your device to understand and run Python code, you’ll need to install Python on it. Depending on your device, there may be a downloadable installation file available, or you may need to use a text-based interface called the command line (which you’ll need to use at some point anyway if you’re using Python on your device). Either way, the goal is to get you up and running with at least Python 3.9. Once you’ve got Python up and running, you can move on to installing Jupyter Notebook and/or a code editor (instructions included here are for Atom). If you’re planning to work only in the cloud, you can skip right to “Working with Python Online” for information on how to get started.
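
Once Python is installed, one way to confirm which version you have is to ask Python itself. The sketch below assumes that the minimum you’re aiming for is the 3.9 release line; the `meets_minimum` helper is my own name, not part of Python.

```python
import sys

# Assumption for this sketch: we want Python 3.9 or newer.
MINIMUM_VERSION = (3, 9)


def meets_minimum(version_info, minimum=MINIMUM_VERSION):
    """Compare the (major, minor) parts of a version against the minimum."""
    return tuple(version_info[:2]) >= minimum


current = ".".join(str(part) for part in sys.version_info[:3])
print("Running Python", current)
print("New enough:", meets_minimum(sys.version_info))
```

Comparing the version as a pair of numbers rather than as text matters here: as numbers, 3.10 is correctly treated as newer than 3.9, whereas the text "3.10" would sort before "3.9".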

Getting Started with the Command Line

If you plan to use Python locally on your device, you’ll need to learn to use the command line (also sometimes referred to as the terminal or command prompt), which is a text-based way of providing instruction to your computer. While in principle you can do anything in the command line that you can do with a mouse, it’s particularly efficient for installing code and software (especially the Python libraries that we’ll be using throughout the book) and backing up and running code. While it may take a little getting used to, the command line is often faster and more straightforward for many programming-related tasks than using a mouse. That said, I’ll provide instructions for using both the command line and your mouse where both are possible, and you should feel free to use whichever you find more convenient for a particular task.

To get started, let’s open up a command line (sometimes also called the terminal) interface and use it to create a folder for our data wrangling work. If you’re on a Chromebook, macOS, or Linux machine, search for “terminal” and select the application called Terminal; on a Windows PC, search for “powershell” and choose the program called Windows PowerShell.

Tip

To enable Linux on your Chromebook, just go to your Chrome OS settings (click the gear icon in the Start menu, or search for “settings” in the Launcher). Toward the bottom of the lefthand menu, you’ll see a small penguin icon labeled “Linux (Beta).” Click this and follow the directions to enable Linux on your machine. You may need to restart before you can continue.

Once you have a terminal open, it’s time to make a new folder! To help you get started, here is a quick glossary of useful command-line terms:

ls

The “list” command shows files and folders in the current location. This is a text-based version of what you would see in a finder window.

cd foldername

The “change directory” command moves you from the current location into foldername, as long as foldername is shown when you use the ls command. This is equivalent to “double-clicking” on a folder within a finder window using your mouse.

cd ../

“Change directory” once again, but the ../ moves your current position to the containing folder or location.

cd ~/

“Change directory,” but the ~/ returns you to your “home” folder.

mkdir foldername

“Make directory” with name foldername. This is equivalent to choosing New → Folder in the context menu with your mouse and then naming the folder once its icon appears.

Tip

When using the command line, you never actually have to type out the full name of a file or folder; think of it more like search, and just start by typing the first few characters of the (admittedly case-sensitive) name. Once you’ve done that, hit the Tab key, and the name will autocomplete as much as possible.

For example, if you have two files in a folder, one called xls_parsing.py and one called xlsx_parsing.py (as you will when you’re finished with Chapter 4), and you wanted to run the latter, you can type python xl and then hit Tab, which will cause the command line to autocomplete to python xls. At this point, since the two possible filenames diverge, you’ll need to supply either an x or an _, after which hitting Tab one more time will complete the rest of the filename, and you’re good to go!
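
If you’re curious why the autocomplete stops where it does, the following sketch imitates the idea in Python: find every name that starts with what you’ve typed, then fill in as many characters as those names share. This is a simplified model with a made-up `autocomplete` function, not how any particular terminal actually implements the feature.

```python
import os


def autocomplete(typed, names):
    """Extend what was typed as far as all matching names agree."""
    matches = [name for name in names if name.startswith(typed)]
    if not matches:
        return typed  # nothing matches, so there is nothing to complete
    # commonprefix returns the longest run of leading characters shared by all.
    return os.path.commonprefix(matches)


files = ["xls_parsing.py", "xlsx_parsing.py"]

print(autocomplete("xl", files))
print(autocomplete("xlsx", files))
```

With both filenames in play, the shared characters run out after xls, which is exactly where you have to supply the next character yourself.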

Any time you open a new terminal window on your device, you’ll be in what’s known as your “home” folder. On macOS, Windows, and Linux machines, this is often the “User” folder, which is not the same as the “desktop” area you see when you first log in. This can be a little disorienting at first, since the files and folders you’ll see when you first run ls in a terminal window will probably be unfamiliar. Don’t worry; just point your terminal at your regular desktop by typing:

cd ~/Desktop

into the terminal and hitting Enter or Return (for efficiency’s sake, I’ll just refer to this as the Enter key from here on out).

On Chromebooks, Python (and the other programs we’ll need) can only be run from inside the Linux files folder, so you can’t actually navigate to the desktop and will have to open a terminal window.

Next, type the following command into your terminal window and hit Enter:

mkdir data_wrangling

Did you see the folder appear? If so, congratulations on making your first folder in the command line! If not, double-check the text at the left of the command line prompt ($ on Chromebook, % on macOS, or > on Windows). If you don’t see the word Desktop in there, run cd ~/Desktop and then try again.
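
Later on, you may want to do this kind of file and folder housekeeping from inside your Python code rather than by typing commands. As a rough preview, the sketch below shows Python equivalents of the commands from the glossary. To avoid touching your real files, it assumes a temporary scratch folder that is deleted when the example finishes.

```python
import os
import tempfile
from pathlib import Path

starting_folder = Path.cwd()

# Work inside a temporary scratch folder so no real files are affected.
with tempfile.TemporaryDirectory() as scratch:
    try:
        os.chdir(scratch)                  # like: cd foldername
        Path("data_wrangling").mkdir()     # like: mkdir data_wrangling
        listing = sorted(os.listdir("."))  # like: ls
        os.chdir("data_wrangling")         # like: cd data_wrangling
        inside = Path.cwd().name           # the folder we are now "in"
        os.chdir("..")                     # like: cd ../
    finally:
        # Step back out before the scratch folder is cleaned up.
        os.chdir(starting_folder)

home_folder = Path.home()                  # the folder that ~/ refers to

print(listing)
print(inside)
print(home_folder)
```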

Now that you’ve gotten a little bit of practice with the command line, let’s see how it can help when installing and testing Python on your machine.

Installing Python, Jupyter Notebook, and a Code Editor

To keep things simple, we’re going to use a software distribution manager called Miniconda, which will automatically install both Python and Jupyter Notebook. Even if you don’t plan to use notebooks for your own coding, they’re popular enough that being able to view and run other people’s notebooks is useful, and it doesn’t take up that much additional space on your device. In addition to getting your Python and Jupyter Notebook tools up and running, installing Miniconda will also create a new command-line function called conda, which will give you a quick and easy way to keep both your Python and Jupyter Notebook installations up to date.8 You can find more information about how to do these updates in Appendix A.

Even if you’re planning to do most of your Python programming in a notebook, I still recommend installing a code editor. Even if you never use them to write a single line of Python, code editors are indispensable for viewing, editing, and even creating your own data files more effectively and efficiently than most devices’ built-in text-editing software. Most importantly, code editors do something called syntax highlighting, which is basically built-in grammar checking for code and data. While that may not sound like much, the reality is that it will make your coding and debugging processes much faster and more reliable, because you’ll know (literally) where to look when there’s a problem. This combination of features makes a solid code editor one of the most important tools for both Python programming and general data wrangling.

In this book I’ll be using and referencing the Atom (https://atom.io) code editor, which is free, multiplatform, and open source. If you play around with the settings, you’ll find many ways to customize your coding environment to suit your needs. Where I reference the color of certain characters or bits of code in this book, they reflect the default “One Dark” theme in Atom, but use whatever settings work best for you.

Note

You’ll need a strong, stable internet connection and about 30–60 minutes to complete the following setup and installation processes. I also strongly recommend that you have your device plugged into a power source.

Chromebook

To install your suite of data wrangling tools on a Chromebook, the first thing you’ll need to know is whether your version of the Chrome OS operating system is 32-bit or 64-bit.

To find this information, open up your Chrome settings (click the gear icon in the Start menu, or search “settings” in the Launcher) and then click on “About Chrome OS” at the lower left. Toward the top of the window, you’ll see the version number followed by either (32-bit) or (64-bit), as shown in Figure 1-1.

Figure 1-1. Chrome OS version detail

Make a note of this information before continuing with your setup.

Installing Python and Jupyter Notebook

To get started, download the Linux installer that matches the bit format of your Chrome OS version. Then, open your Downloads folder and drag the installer file (it will end in .sh) into your Linux files folder.

Next, open up a terminal window, run the ls command, and make sure that you see the Miniconda .sh file. If you do, run the following command (remember, you can just type the beginning of the filename and then hit the Tab key, and it will autocomplete!):

bash _Miniconda_installation_filename_.sh

Follow the directions that appear in your terminal window (accept the license and the conda init prompt), then close and reopen your terminal window. Next, you’ll need to run the following:

conda init

Then close and reopen your terminal window again so that you can install Jupyter Notebook with the following command:

conda install jupyter

Answer yes to the subsequent prompts, close your terminal one last time, and you’re all set!

Installing Atom

To install Atom on your Chromebook, you’ll need to download the .deb package from https://atom.io and save it in (or move it to) your Linux files folder.

To install the software using the terminal, open a terminal window and type:

sudo dpkg -i atom-amd64.deb

Hit Enter.9 Once the text has finished scrolling and the command prompt (which ends with a $) is back, the installation is complete.

Alternatively, you can context-click on the .deb file in your Linux files folder and choose the “Install with Linux” option from the top of the context menu, then click “Install” and “OK.” You should see a progress bar on the bottom right of your screen and get a notification when the installation is complete.

Whichever method you use, once the installation is finished, you should see the green Atom icon appear in your “Linux apps” bubble in the Launcher.

macOS

You have two options when installing Miniconda on macOS: you can use the terminal to install it using a .sh file, or you can install it by downloading and double-clicking the .pkg installer.

Installing Python and Jupyter Notebook

To get started, go to the Miniconda installer links page. If you want to do your installation with the terminal, download the Python 3.9 “bash” file that ends in .sh; if you prefer to use your mouse, download the .pkg file (you may see a notification from the operating system during the download process warning, “This type of file can harm your computer”; choose “Keep”).

Whichever method you select, open your Downloads folder and drag the file onto your desktop.

If you want to try installing Miniconda using the terminal, start by opening a terminal window and using the cd command to point it to your desktop:

cd ~/Desktop

Next, run the ls command, and make sure that you see the Miniconda .sh file in the resulting list. If you do, run the following command (remember, you can just type the beginning of the filename and then hit the Tab key, and it will autocomplete!):

bash _Miniconda_installation_filename_.sh

Follow the directions that appear in your terminal window:

- Use the space bar to move through the license agreement a full page at a time, and when you see (END), hit Return.

- Type yes followed by Return to accept the license agreement.

- Hit Return to confirm the installation location, and type yes followed by Return to accept the conda init prompt.

Finally, close your terminal window.

If you would prefer to do the installation using your mouse, just double-click the .pkg file and follow the installation instructions.

Now that you have Miniconda installed, you need to open a new terminal window and type:

conda init

Then hit Return. Next, close and reopen your terminal window, and use the following command (followed by Return) to install Jupyter Notebook:

conda install jupyter

Installing Atom

To install Atom on a Mac, visit https://atom.io and click the large yellow Download button to download the installer.

Click on the atom-mac.zip file in your Downloads folder and then drag the Atom application (which will have a green icon next to it) into your Applications folder (this may prompt you for your password).

Windows 10+

To install your suite of data wrangling tools on Windows 10+, the first thing you’ll need to know is whether your version of the Windows 10 operating system is 32-bit or 64-bit.

To find this information, open up your Start menu, then select the gear icon to go to the Settings menu. In the resulting window, choose System → About in the lefthand menu. In the section titled “Device specifications,” you’ll see “System type,” which will specify whether you have a 32-bit or 64-bit system. For the official instructions, see Microsoft’s related FAQ.

Make a note of this information before continuing with your setup.

Installing Python and Jupyter Notebook

To get started, go to the Miniconda installer links page and download the Python 3.9 installer appropriate for your system (either 32-bit or 64-bit). Once the .exe file has downloaded, click through the installer menus, leaving the preselected options in place (you can skip the recommended tutorials and the “Anaconda Nucleus” sign-up at the end).

Once the installation is complete, you should see two new items in your Start menu in the “Recently added” list at the top: “Anaconda Prompt (miniconda3)” and “Anaconda Powershell Prompt (miniconda3),” as shown in Figure 1-2. While both will work for our purposes, I recommend you use Powershell as your “terminal” interface throughout this book.

Figure 1-2. Anaconda options in the Start menu

Now that you have Miniconda installed, you need to open a new terminal (Powershell) window and type:

conda init

Then hit Return. Next, close and reopen your terminal window as instructed, and use the following command (followed by Return) to install Jupyter Notebook:

conda install jupyter

Answer yes (by typing y and then hitting the Enter key) to the subsequent prompts.

Installing Atom

To install Atom on a Windows 10+ machine, visit https://atom.io and click the large yellow “Download” button to download the installer.

Click on the Atom-Setup-x64.exe file,10 and wait for the installation to finish; Atom should launch automatically. You can answer Yes to the blue pop-up that asks about registering as the default atom:// URI handler.

Testing your setup

To make sure that both Python and Jupyter Notebook are working as expected, start by opening a terminal window and pointing it to the data_wrangling folder you created in “Getting Started with the Command Line” by running the following command:11

cd ~/Desktop/data_wrangling

Then, run:

python --version

If you see something like:

Python 3.9.4

this means that Python was installed successfully.
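Once you know how to run Python code (see “Hello World!” later in this chapter), you can also ask Python to describe itself from the inside. The sketch below is only an illustration: the function name describe_python is my own invention, and the 32-bit/64-bit check is a common rule of thumb rather than anything Miniconda requires.

```python
import platform
import sys


def describe_python():
    """Return a short description of the Python that is running this code."""
    version = platform.python_version()        # a string such as "3.9.4"
    bits = 64 if sys.maxsize > 2**32 else 32   # how this Python build was compiled
    return f"Python {version} ({bits}-bit)"


print(describe_python())
```

The version number it reports should match what python --version printed in your terminal.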

Next, test out Jupyter Notebook by running:

jupyter notebook

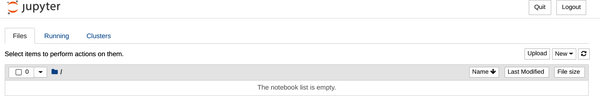

If a browser window opens12 that looks something like the image in Figure 1-3, you’re all set and ready to go!

Figure 1-3. Jupyter Notebook running in an empty folder

Working with Python Online

If you want to skip the hassle of installing Python and a code editor on your machine (and you plan to only use Python when you have a strong, consistent internet connection), working with Jupyter notebooks online through Google Colab is a great option. All you’ll need to get started is an unrestricted Google account (you can create a new one if you prefer; make sure you know your password!). If you have those elements in place, you’re ready to get wrangling with “Hello World!”

Hello World!

Now that you’ve got your data wrangling tools in place, you’re ready to get started writing and running your first Python program. For this, we’ll bow to programming tradition and create a simple “Hello World” program; all it’s designed to do is print out the words “Hello World!” To get started, you’ll need a new file where you can write and save your code.

Using Atom to Create a Standalone Python File

Atom works just like any other text-editing program; you can launch it using your mouse or even using your terminal.

To launch it with your mouse, locate the program icon on your device:

- Chromebook: Inside the “Linux apps” applications bubble.

- Mac: In Applications or in the Launchpad.

- Windows: In the Start menu or via search. If Atom doesn’t appear in your Start menu or in search after installing it for the first time on Windows 10, you can find troubleshooting videos on YouTube.

Alternatively, you can open Atom from the terminal by simply running:

atom

The first time you open Atom on a Chromebook, you’ll see the “Choose password for new keyring” prompt. Since we’ll just be using Atom for code and data editing, you can click Cancel to close this prompt. On macOS, you’ll see a warning that Atom was downloaded from the internet—you can also click past this prompt.

You should now see a screen similar to the one shown in Figure 1-4.

By default, when Atom launches, it shows one or more “Welcome” tabs; you can just close these by clicking the x close button that appears to the right of the text when you hover over it with your mouse. This will move the untitled file toward the center of your screen (if you like, you can also collapse the Project panel on the left by hovering over its right edge until the < appears and then clicking on that).

Figure 1-4. Atom welcome screen

Before we start writing any code, let’s go ahead and save our file where we’ll know where to find it—in our data_wrangling folder! In the File menu, select “Save As…” and save the file in your data_wrangling folder with the name HelloWorld.py.

Tip

When saving standalone Python files, it’s essential to make sure you add the .py extension. While your Python code will still work properly without it, having the correct extension will let Atom do the super-useful syntax highlighting I mentioned in “Installing Python, Jupyter Notebook, and a Code Editor”. This feature will make it much easier to write your code correctly the first time!

Using Jupyter to Create a New Python Notebook

As you may have noticed when you tested Jupyter Notebook, in “Testing your setup”, the interface you’re using is actually just a regular browser window. Believe it or not, when you run the jupyter notebook command, your regular computer is actually creating a tiny web server on your device!13 Once that main Jupyter window is up and running, you can use your mouse to create new Python files and run other commands right in your web browser!

To get started, open a terminal window and use the command:

cd ~/Desktop/data_wrangling/

to move into the data_wrangling folder on your Desktop. Next, run:

jupyter notebook

You’ll see a lot of code run past on the terminal window, and your computer should automatically open a browser window that will show you an empty directory. Under New in the upper-righthand corner, choose “Python 3” to open a new notebook. Double-click the word Untitled in the upper-lefthand corner next to the Jupyter logo to name your file HelloWorld.

Warning

Because Jupyter Notebook is actually running a web server (yes, the same kind that runs regular websites) on your local computer, it’s essential that you leave that terminal window open and running for as long as you are interacting with your notebooks. If you close that particular terminal window, your notebooks will “crash.”

Fortunately, Jupyter notebooks autosave every two minutes, so even if something does crash, you probably won’t lose much work. That being said, you may want to minimize the terminal window you use to launch Jupyter, just to avoid accidentally closing it while you’re working.

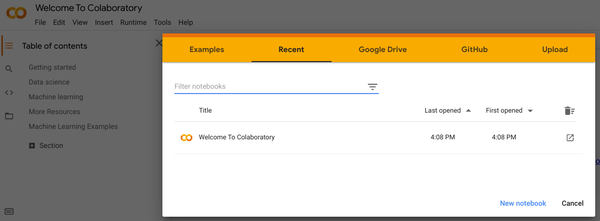

Using Google Colab to Create a New Python Notebook

First, sign in to the Google account you want to use for your data wrangling work, then visit the Colab website. You’ll see something similar to the overlay shown in Figure 1-5.

Figure 1-5. Google Colab landing page (signed in)

In the bottom-right corner, choose New notebook, then double-click at the top left to replace Untitled0.ipynb with HelloWorld.ipynb.14

Adding the Code

Now, we’ll write our first bit of code, which is designed to print out the words “Hello World.” No matter which type of Python file you’re using, the code shown in Example 1-1 is the same.

Example 1-1. hello_world.py

# the code below should print "Hello World!"
print("Hello World!")

In a Standalone File

All you need to do is copy (or type) the code in Example 1-1 into your file and save it!

In a Notebook

When you create a new file, there is one empty “code cell” in it by default (in Jupyter Notebook, you’ll see In [ ] to the left of it; in Google Colab, there’s a little “play” button). Copy (or type) the code in Example 1-1 into that cell.

Running the Code

Now that we’ve added and saved our Python code in our file, we need to run it.

In a Standalone File

Open a terminal window and move it into your data_wrangling folder using:

cd ~/Desktop/data_wrangling

Run the ls command and make sure you see your HelloWorld.py file listed in response. Finally, run:

python HelloWorld.py

You should see the words Hello World! print out on their own line, before the command prompt returns (signaling that the program has finished running).

Documenting, Saving, and Versioning Your Work

Before we really dive into Python in Chapter 2, there are a few more bits of preparation to do. I know these may seem tedious, but making sure you’ve laid the groundwork for properly documenting your work will save you dozens of hours of effort and frustration. What’s more, carefully commenting, saving, and versioning your code is a crucial part of “bulletproofing” your data wrangling work. And while it’s not exactly enticing right now, pretty soon all of these steps will be second nature (I promise!), and you’ll see how much speed and efficiency it adds to your data work.

Documenting

You may have noticed that the first line you wrote in your code cell or Python file in “Hello World!” didn’t show up in the output; the only thing that printed was Hello World!. That first line in our file was a comment, which provides a plain-language description of what the code on the following line(s) will do. Almost all programming languages (and some data types!) provide a way to include comments, precisely because they are an excellent way to provide anyone reading your code15 with the context and explanation necessary to understand what the specific program or section of code is doing.

Though many individual programmers tend to overlook (read: skip) the commenting process, it is probably the single most valuable programming habit you can develop. Not only will it save you—and anyone you collaborate with—an enormous amount of time and effort when you are looking through a Python program, but commenting is also the single best way to really internalize what you’re learning about programming more generally. So even though the code samples provided with this book will already have comments, I strongly encourage you to rewrite them in your own words. This will help ensure that when future you returns to these files, they’ll contain a clear walk-through of how you understood each particular coding challenge the first time.
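To give you a feel for what “rewriting comments in your own words” might look like, here is a small sketch that stretches our one-line program into a few commented steps. The variable names (greeting, audience, message) are just illustrative choices, not something the exercises depend on.

```python
# A comment starts with a hash mark; Python ignores the rest of that line.

# Build the greeting from two parts so each step can be explained on its own.
greeting = "Hello"   # the word we want to say
audience = "World"   # who we are saying it to

# Join the two parts with a space, add an exclamation point, and print the result.
message = greeting + " " + audience + "!"
print(message)
```

Only the result of the print line shows up in the output; the comments are there purely for whoever reads the file next, including future you.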

The other essential documentation process for data wrangling is keeping what I call a “data diary.” Like a personal diary, your data diary can be written and organized however you like; the key thing is to capture what you are doing as you are doing it. Whether you’re clicking around the web looking for data, emailing experts, or designing a program, you need somewhere to keep track of everything, because you will forget.

The first entry in your “diary” for any data wrangling project should be the question you are trying to answer. Though it may be a challenge, try to write your question as a single sentence, and put it at the top of your data wrangling project diary. Why is it important that your question be a single sentence? Because the process of real data wrangling will inevitably lead you down enough “rabbit holes”—to answer a question about your data’s origin, for example, or to solve some programming problem—that it’s very easy to lose track of what you were originally trying to accomplish (and why). Once you have that question at the top of your data diary, though, you can always come back to it for a reminder.

Your data diary question will also be invaluable for helping you make decisions about how to spend your time when data wrangling. For example, your dataset may contain terms that are unfamiliar to you—should you try to track down the meaning of every single one? Yes, if doing so will help answer your question. If not, it may be time to move on to another task.

Of course, once you succeed in answering your question (and you will! at least in part), you’ll almost certainly find you have more questions you want to answer, or that you want to answer the same question again, but a week, a month, or a year later. Having your data diary on hand as a guide will help you do it much faster and more easily the next time. That’s not to say that it doesn’t take effort: in my experience, keeping a thorough data diary makes a project take about 40% longer to complete the first time around, but it makes doing it again (with a new version of the dataset, for example) at least twice as fast. Having a data diary is also a valuable proof of work: if you’re ever looking for the process by which you got your data wrangling results, your data diary will have all the information that you (or anyone else) might need.

When it comes to how you keep your data diary, however, it’s really up to you. Some folks like to do a lot of fancy formatting; others just use a plain old text file. You may even want to use a real gosh-for-sure paper notebook! Whatever works for you is fine. While your data diary will be an invaluable reference when it comes time to communicate with others about your data (and the wrangling process), you should organize it however suits you best.
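If you go the plain-text route, a few lines of Python can take care of the timestamps for you. This is only a sketch of one possible approach: the function names, the filename data_diary.txt, and the sample question are all assumptions I’ve made up for illustration.

```python
from datetime import datetime


def format_entry(note, when=None):
    """Return one diary line: a timestamp followed by the note."""
    when = when or datetime.now()
    return f"[{when:%Y-%m-%d %H:%M}] {note}"


def add_entry(diary_path, note):
    """Append a timestamped note to the end of a plain-text diary file."""
    with open(diary_path, "a", encoding="utf-8") as diary_file:
        diary_file.write(format_entry(note) + "\n")


# Preview what an entry looks like without writing anything to disk.
print(format_entry("QUESTION: How many Citi Bike rides happen on a typical day?"))
```

Calling add_entry("data_diary.txt", "Emailed the data provider about column names") would then add a new line to the bottom of the file each time, leaving earlier entries untouched.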

Saving

In addition to documenting your work carefully through comments and data diaries, you’ll want to make sure you save it regularly. Fortunately, the “saving” process is essentially built in to our workflow: notebooks autosave regularly, and to run the code in our standalone file, we have to save our changes first. Whether you rely on keyboard shortcuts (for me, hitting Ctrl+S is something of a nervous habit) or use mouse-driven menus, you’ll probably want to save your work every 10 minutes or so at least.

Tip

If you are using standalone files, one thing to get familiar with is how your code editor indicates that a file has unsaved changes. In Atom, for example, a small colored dot appears in the document tab just to the right of the filename when there are unsaved changes to the file. If the code you’re running isn’t behaving as you expect, double-check that you have it saved first and then try again.

Versioning

Programming—like most writing—is an iterative process. My preferred approach has always been to write a little bit of code, test it out, and if it works, write a little more and test again. One goal of this approach is to make it easier to backtrack in case I add something that accidentally “breaks” the code.16

At the same time, it’s not always possible to guarantee that your code will be “working” when you have to step away from it—whether because the kids just got home, the study break is over, or it’s time for bed. You always want to have a “safe” copy of your code that you can come back to. This is where version control comes in.

Getting started with GitHub

Version control is basically just a system for backing up your code, both on your computer and in the cloud. In this book, we’ll be using GitHub for version control; it’s a hugely popular website where you can back up your code for free. Although there are many different ways to interact with GitHub, we’ll use the command line because it just takes a few quick commands to get your code safely tucked away until you’re ready to work on it again. To get started, you’ll need to create an account on GitHub, install Git on your computer, and then connect the accounts to one another:

-

Visit the GitHub website at https://github.com and click “Sign Up.” Enter your preferred username (you may need to try a few to find one that’s available), your email address, and your chosen password (make sure to write this down or save it to your password manager—you’ll need it soon!).

-

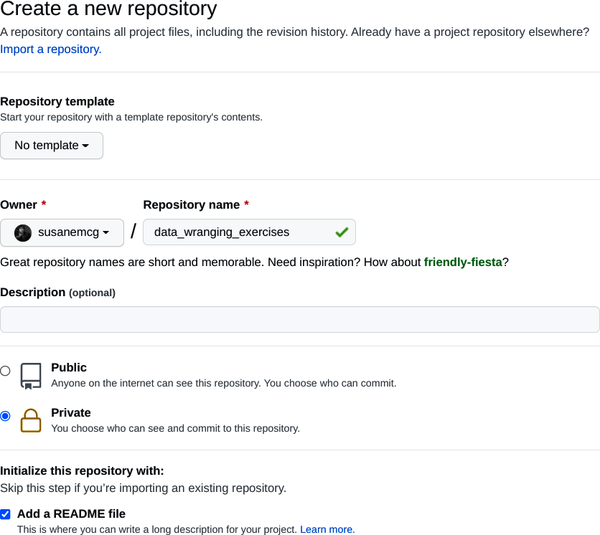

Once you’ve logged in, click the New button on the left. This will open the “Create a new repository” page shown in Figure 1-6.

-

Give your repository a name. This can be anything you like, but I suggest you make it something descriptive, like data_wrangling_exercises.

-

Select the Private radio button, and select the checkbox next to the option that says “Add a README file.”

-

Click the"Create repository” button.

Figure 1-6. Creating a new repository (or “repo”) on GitHub.com

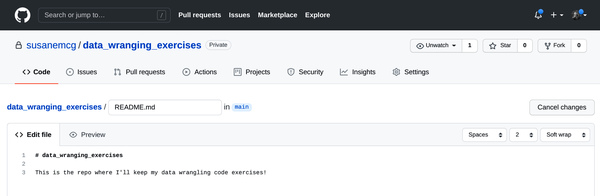

You’ll now see a page that shows data_wrangling_exercises in large type, with a small pencil icon just above and to the right. Click on the pencil and you’ll see an editing interface where you can add text. This is your README file, which you can use to describe your repository. Since we’ll be using this repository (or “repo” for short) to store exercises from this book, you can just add a sentence to that effect, as shown in Figure 1-7.

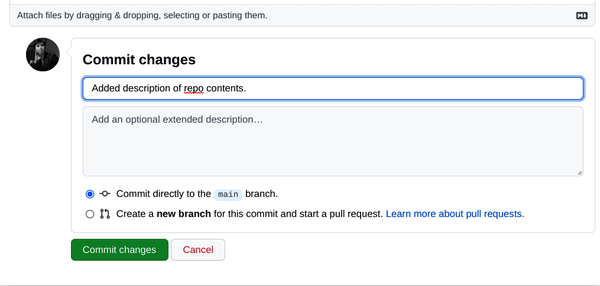

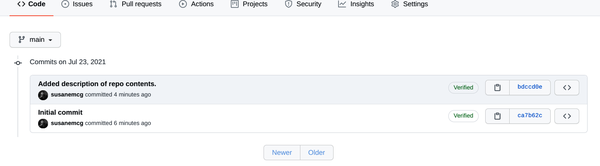

Scroll to the bottom of the page and you’ll see your profile icon with an editable area to the right that says “Commit changes,” and below that some default text that says “Update README.md.” Replace that default text with a brief description of what you did; this is your “commit message.” For example, I wrote: “Added description of repo contents,” as shown in Figure 1-8. Then click the “Commit changes” button.

Figure 1-7. Updating the README file on GitHub

Figure 1-8. Adding a commit message to the README file changes

When the screen refreshes, you’ll now see the text you added to the main file underneath the original data_wrangling_exercises title. Just above that, you should be able to see the text of your commit message, along with the approximate amount of time that’s passed since you clicked “Commit changes.” If you click on the text that says “2 commits” to the right of that, you’ll see the “commit history,” which will show you all the changes (so far just two) that have been made to that repo, as shown in Figure 1-9. If you want to see how a commit changed a particular file, just click on the short alphanumeric code to the right (an abbreviated ID for that commit), and you’ll see what’s known as a diff (for “difference”) view of the file. On the left is the file as it existed before the commit, and on the right is the version of the file in this commit.

Figure 1-9. A brief commit history for our new repo
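If the idea of a diff feels abstract, you can produce a tiny one yourself with Python’s built-in difflib module. This sketch uses made-up “before” and “after” README contents, and it prints a top-to-bottom “unified” diff rather than the side-by-side view GitHub shows, so treat it as an illustration of the concept and not a reproduction of GitHub’s display.

```python
import difflib

# Hypothetical README contents before and after an edit.
before = ["# data_wrangling_exercises"]
after = [
    "# data_wrangling_exercises",
    "Exercises from a book about data wrangling with Python.",
]

# unified_diff marks removed lines with "-" and added lines with "+".
diff_lines = list(
    difflib.unified_diff(
        before,
        after,
        fromfile="README.md (before)",
        tofile="README.md (after)",
        lineterm="",
    )
)
print("\n".join(diff_lines))
```

The line that begins with a single + is the sentence that was added; unchanged lines appear with a leading space.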

By this point, you may be wondering how this relates to backing up code, since all we’ve done is click some buttons and edit some text. Now that we’ve got a “repo” started on GitHub, we can create a copy of it on our local machine and use the command line to make “commits” of working code and back them up to this website with just a few commands.

For backing up local files: installing and configuring Git

Like Python itself, Git is software that you install on your computer and run via the command line. Because version control is such an integral part of most coding processes, Git comes built in on macOS and Linux; instructions for Windows machines can be found on GitHub, and for Chromebooks, you can install Git using the Termux app. Once you’ve completed the necessary steps, open up a terminal window and type:

git --version

Then hit Enter. If anything prints, you’ve already got Git! You’ll still, however, want to set your username and email (you can use any name and email you like) by running the following commands:

git config --global user.email your_email@domain.com
git config --global user.name your_username
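If you’re curious, the same “is Git installed?” check can be done from Python. The sketch below is just one way to do it, using the standard library’s shutil and subprocess modules; the function name find_git is my own.

```python
import shutil
import subprocess


def find_git():
    """Return the path to the git program, or None if it is not on the PATH."""
    return shutil.which("git")


git_path = find_git()
if git_path is None:
    print("Git was not found; follow the installation steps for your device.")
else:
    # Equivalent to typing `git --version` in the terminal.
    result = subprocess.run([git_path, "--version"], capture_output=True, text=True)
    print(result.stdout.strip())
```

Either way, the terminal commands above remain the simplest route; this just shows that Python can run command-line tools on your behalf.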

Now that you have Git installed and have added your name and email of choice to your local Git account, you need to create an authentication key on your device so that when you back up your code, GitHub knows that it really came from you (and not just someone on the other side of the world who figured out your username and password).

To do this, you’ll need to create what’s known as an SSH key—which is a long, unique string of characters stored on your device that GitHub can use to identify it. Creating these keys with the command line is easy: just open up a terminal window and type:

ssh-keygen -t rsa -b 4096 -C "your_email@domain.com"

When you see the “Enter a file in which to save the key” prompt, just press the Enter or Return key, so it saves to the default location (this will make it easier to find in a minute, when we want to add it to GitHub). When you see the following prompt:

Enter passphrase (empty for no passphrase):

definitely add a passphrase! And don’t make it the password to your GitHub (or any other) account. However, since you’ll need to supply this passphrase every time you want to back your code up to GitHub,17 it needs to be memorable—try something like the first three words of the second verse of your favorite song or poem, for example. As long as it’s at least 8–12 characters long, you’re set!

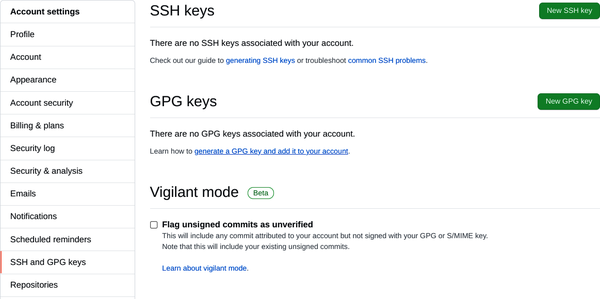

Once you’ve reentered your passphrase for confirmation, you can copy your key to your GitHub account; this will let GitHub match the key on your account to the one on your device. To do this, start by clicking on your profile icon in the upper-righthand corner of GitHub and choosing Settings from the drop-down menu. Then, on the lefthand navigation bar, click on the “SSH and GPG Keys” option. Toward the upper right, click the “New SSH key” button, as shown in Figure 1-10.

Figure 1-10. SSH key landing page on GitHub.com

To access the SSH key you just generated, you’ll need to navigate to the main user folder on your device (this is the folder that a new terminal window will open in) and set it (temporarily) to show hidden files:

- Chromebook: Your main user folder is just the one called Linux files. To show hidden files, just click the three stacked dots at the top right of any Files window and choose “Show hidden files.”

- macOS: Use the Command-Shift-. keyboard shortcut to show/hide hidden files.

- Windows: Open File Explorer on the taskbar, then choose View → Options → “Change folder and search options.” On the View tab in “Advanced settings,” select “Show hidden files, folders, and drives,” then click OK.

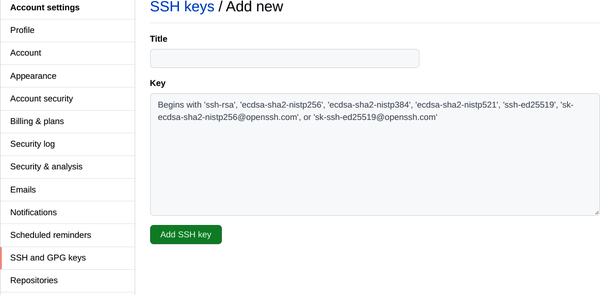

Look for the folder (it actually is a folder!) called .ssh and click into it, then using a basic text editor (like Atom), open the file called id_rsa.pub. Use your keyboard to select and copy everything in the file, then paste it into the empty text area labeled Key, as shown in Figure 1-11.

Figure 1-11. Uploading your SSH key to your GitHub.com account

Finally, give this key a name so you know what device it’s associated with, and click the “Add new SSH key” button; you will probably have to reenter your main GitHub password. That’s it! Now you can go back to leaving hidden files hidden and finish connecting your GitHub account to your device and/or Colab account.

Tip

I recommend using keyboard shortcuts to copy/paste your SSH key because the exact string of characters (including spaces) actually matters; if you use a mouse, something might get dragged around. If you paste in your key and GitHub throws an error, however, there are a couple of things to try:

-

Make sure you’re uploading the contents of the .pub file (you never really want to do anything with the other one).

-

Close the file (without saving) and try again.

If you still have trouble, you can always just delete your whole .ssh folder and generate new keys—since they haven’t been added to anything yet, there’s no loss in just starting over!
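If you want a quick sanity check that you’ve opened the right file before pasting, a short Python sketch like the one below can help. It assumes you accepted the default location and the rsa key type used earlier; the function name looks_like_public_key is hypothetical, and the check is deliberately rough (it only looks at how the text begins).

```python
from pathlib import Path


def looks_like_public_key(text):
    """Rough check: an RSA public key line starts with "ssh-rsa", then the key itself."""
    parts = text.strip().split()
    return len(parts) >= 2 and parts[0] == "ssh-rsa"


# Default location used when you press Enter at the "save the key" prompt.
public_key_path = Path.home() / ".ssh" / "id_rsa.pub"

if public_key_path.exists():
    key_text = public_key_path.read_text()
    print("Looks like a public key:", looks_like_public_key(key_text))
else:
    print("No file found at", public_key_path)
```

Note that the sketch never opens the other (private) key file, which should stay on your device and out of any web form.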

Tying it all together

Our final step is to create a linked copy of our GitHub repo on our local computer. This is easily done via the git clone command:

-

Open a terminal window, and navigate to your data_wrangling folder.

-

On GitHub, go to

your_github_username/data_wrangling_exercises. -

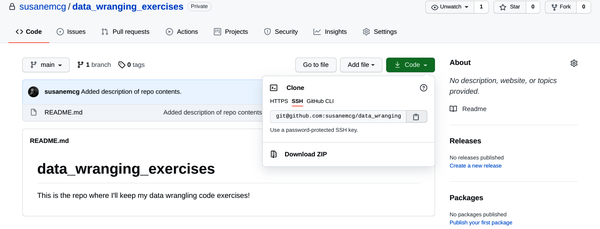

Still on GitHub, click theCode button toward the top of the page.

-

In the “Clone with SSH” pop-up, click the small clipboard icon next to the URL, as shown in Figure 1-12.

Figure 1-12. Retrieving the repo’s SSH location

-

Back in your terminal window, type

git cloneand then paste the URL from your clipboard (or type it directly if needed). It will look something like:git clone git@github.com:susanemcg/data_wrangling_exercises.git

-

You may get a prompt asking if you would like to add the destination to the list of known hosts. Type

yesand hit Return. If prompted, provide your SSH password. -

When you see the “done” message, type

ls. You should now see data_wrangling_exercises in your data_wrangling folder. -

Finally, type

cd data_wrangling_exercisesand hit Enter to move your terminal into the copied repo. Use thelscommand to have the terminal show the README.md file.

Whew! That probably seems like a lot, but keep in mind that you only ever need to create an SSH key once, and you’ll only have to go through the cloning process once per repo (and all the exercises in this book can be done in the same repo).

Now let’s see how this all works in action by adding our Python file to our repo. In a file browser window (Finder, on a Mac), navigate to your data_wrangling folder. Save and close your HelloWorld.py or HelloWorld.ipynb file, and drag it into the data_wrangling_exercises folder. Back in the terminal, use the ls command to confirm that you see your Python file.

Our final step is to use the add command to let Git know that we want our Python file to be part of what gets backed up to GitHub. We’ll then use a commit to save the current version, followed by the push command to actually upload it to GitHub.

To do this, we’re going to start by running git status in the terminal window. This should generate a message that mentions “untracked files” and shows the name of your Python file. This is what we expected (but running git status is a nice way to confirm it). Now we’ll do the adding, committing, and pushing process described previously. Note that the add command normally produces no output message in the terminal, while the commit and push commands do:

- In the terminal, run git add your_python_filename.

- Then run git commit -m "Adding my Hello World Python file." your_python_filename. The -m flag indicates that the quoted text should be used as the commit message: the command-line equivalent of what we entered on GitHub for our README update a few minutes ago.

- Finally, run git push.

The final command is what uploads your files to GitHub (note that this clearly will not work if you don’t have an available internet connection, but you can make commits anytime you like and run the push command whenever you have internet again). To confirm that everything worked correctly, reload your GitHub repo page, and you’ll see that your Python file and commit message have been added!
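Once the add, commit, and push routine starts to feel familiar, you might wonder whether Python could run those three commands for you. It can; the sketch below is one hedged way to do it with the standard subprocess module. The function names backup_commands and run_backup are invented for this example, and run_backup assumes your terminal is already inside the cloned repo folder.

```python
import subprocess


def backup_commands(filename, message):
    """Return the three Git commands from the steps above, in order."""
    return [
        ["git", "add", filename],
        ["git", "commit", "-m", message, filename],
        ["git", "push"],
    ]


def run_backup(filename, message):
    """Run each command in turn, stopping if one of them fails."""
    for command in backup_commands(filename, message):
        print("Running:", " ".join(command))
        subprocess.run(command, check=True)


# Show the commands without actually running them.
for command in backup_commands("HelloWorld.py", "Adding my Hello World Python file."):
    print(command)
```

Calling run_backup("HelloWorld.py", "Adding my Hello World Python file.") would carry out the same three steps you just typed by hand. For now, I’d suggest sticking with typing them yourself until the sequence is second nature.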

For backing up online Python files: connecting Google Colab to GitHub

If you’re doing all of your data wrangling online, you can connect Google Colab directly to your GitHub account. Make sure you’re logged in to your data wrangling Google account and then visit https://colab.research.google.com/github. In the pop-up window, it will ask you to sign in to your GitHub account, and then to “Authorize Colaboratory.” Once you do so, you can select a GitHub repo from the drop-down menu on the left, and any Jupyter notebooks that are in that repo will appear below.

Note

The Google Colab view of your GitHub repos will only show you Jupyter notebooks (files that end in .ipynb). To see all files in a repo, you’ll need to visit it on the GitHub website.

Tying it all together

If you’re working on Google Colab, all you have to do to add a new file to your GitHub repo is to choose File → Save a copy in GitHub. A few pop-ups will open and close automatically (this is Colab logging in to your GitHub account in the background), and then you’ll once again be able to choose the GitHub repo where you want to save your file from the drop-down menu at the top left. You can then choose to keep (or change) the notebook name and add a commit message. If you leave “Include a link to Colaboratory” checked in this window, then the file in GitHub will include a little “Open in Colab” label, which you’ll be able to click to automatically open the notebook in Colab from GitHub. Any notebooks that you don’t explicitly back up in GitHub this way will be in your Google Drive, inside a folder called Colab Notebooks. You can also find them by visiting the Colab website and selecting the Google Drive tab at the top.

Conclusion

The goal of this chapter was to provide you with a general overview of what you can expect to learn in this book: what I mean by data wrangling and data quality, and why I think the Python programming language is the right tool for this work.

In addition, we covered all the setup you’ll need to get started (and keep going!) with Python for data wrangling, by offering instructions for setting up your choice of programming environment: working with “standalone” Python files or Jupyter notebooks on your own device, or using Google Colab to use Jupyter notebooks online. Finally, we covered how you can use version control (no matter which setup you have) to back up, share, and document your work.

In the next chapter, we’ll move far beyond our “Hello World” program as we work through the foundations of the Python programming language and even tackle our first data wrangling project: a day in the life of New York’s Citi Bike system.

1 We’ll cover these in detail in Chapters 4 and 5, respectively.

2 In the world of computing, this is often expressed as “garbage in/garbage out.”

3 Disclosure: many ProPublica staffers, including the lead reporter on this series, are former colleagues of mine.

4 The “Machine Bias” series generated substantial debate in the academic community, where some took issue with ProPublica’s definition of bias. Much more importantly, however, the controversy spawned an entirely new area of academic research: fairness and transparency in machine learning and intelligence.

5 Remember that even a misplaced space character can cause problems in Python.

6 This same software can also be used to create notebooks in R and other scripting languages.

7 The numbers here are called version numbers, and they increase sequentially as the Python language is changed and upgraded over time. The first number (3) indicates the “major” version, and the second number (9) indicates the “minor” version. Unlike regular decimals, it’s possible for the minor version to be higher than 9, so in the future you might encounter a Python 3.12.

8 Miniconda is a smaller version of the popular “Anaconda” software, but since the latter installs the R programming language and a number of other items we won’t need, we’ll use Miniconda to save space on our device.

9 If you have a 32-bit Chromebook, the filename might be slightly different.

10 The installer filename may have a different number if you are on a 32-bit system.

11 Unless otherwise noted, all terminal commands should be followed by hitting Enter or Return.

12 If you get a prompt asking about how you want to “open this file,” I recommend selecting Google Chrome.

13 Don’t worry, it’s not visible on the internet!

14 Early versions of Jupyter Notebook were known as “IPython Notebook,” which is where the .ipynb file extension comes from.

15 Especially “future you”!

16 Meaning that I no longer get the output I expect, or that I get errors and no output at all!

17 Depending on your device, you can save this password to your “keychain.” For more information, see the docs on GitHub.
