Chapter 4. Loose Coupling

SOA applies to large distributed systems. Scalability and fault tolerance are key to the maintainability of such systems. Another important goal is to minimize the impact of modifications and failures on the system landscape as a whole. Thus, loose coupling is a key concept of SOA.

This chapter will discuss the motivations for this concept (for large distributed systems), exploring variations of loose coupling from the technical and organizational points of view. This topic will demonstrate that SOA is a paradigm that leads to special priorities when designing large systems. However, again, there is no rule prescribing which kind or level of loose coupling you should employ. This decision must be made based on your specific circumstances.

The Need for Fault Tolerance

We live in crazy times. The market rules, which means you won’t usually have enough time to create well-elaborated, robust system designs. If you’re not fast enough, flexible enough, and cheap enough, you’ll soon find yourself out of the market. Thus, you need fast, flexible, and cheap solutions.

Fast and cheap solutions, however, can’t be well designed and robust. Consequently, you will have to deal with errors and problems. The important point here is fault tolerance. The most important thing is that your systems run. According to [ITSecCity02], a flight-booking system failure may cost $100,000 an hour, a credit card system breakdown may cost $300,000 an hour, and a stock-trading malfunction may cost $8 million an hour. As these figures show, fault tolerance is key for large distributed systems. When problems occur, it is important to minimize their effects and consequences.

Forms of Loose Coupling

Loose coupling is the concept typically employed to deal with the requirements of scalability, flexibility, and fault tolerance. The aim of loose coupling is to minimize dependencies. When there are fewer dependencies, modifications to or faults in one system will have fewer consequences on other systems.

Loose coupling is a principle; it is neither a tool, nor a checklist. When you design your SOA, it is up to you to define which kinds and amount of loose coupling you introduce. However, there are some typical topics you might want to consider when you think about loose coupling in your system. Table 4-1 lists them (this list is an extension of a list published in [KrafzigBankeSlama04], p. 47).

| | Tight coupling | Loose coupling |
|---|---|---|
| Physical connections | Point-to-point | Via mediator |
| Communication style | Synchronous | Asynchronous |
| Data model | Common complex types | Simple common types only |
| Type system | Strong | Weak |
| Interaction pattern | Navigate through complex object trees | Data-centric, self-contained message |
| Control of process logic | Central control | Distributed control |
| Binding | Statically | Dynamically |
| Platform | Strong platform dependencies | Platform independent |
| Transactionality | 2PC (two-phase commit) | Compensation |
| Deployment | Simultaneous | At different times |
| Versioning | Explicit upgrades | Implicit upgrades |

This table is far from being complete, but it’s pretty typical for large distributed systems. Note again that this is not a checklist. There is no SOA certification saying you conform when all or at least 50 percent of the forms of loose coupling are in use. However, it would be very strange if none of these forms of loose coupling were used in your SOA. If this were possible, your system would appear not to have the common requirements of large distributed systems with different owners. That’s OK, but you shouldn’t call your solution SOA. (Well, it may help you get money and resources for it, but beware of false impressions.)

If there is such a fine list of aspects of loose coupling, and minimizing dependencies is good, you might be wondering why you don’t simply use all these forms of loose coupling in each SOA. The answer is that there is a price to pay for loose coupling: it makes systems more complex. That means more development and/or maintenance effort.

To explore how the forms of loose coupling listed in Table 4-1 can help and the costs they can incur, let’s examine some more closely. Most of these topics will be discussed in more detail later in the book, so I’ve included references to future chapters where appropriate.

Asynchronous Communication

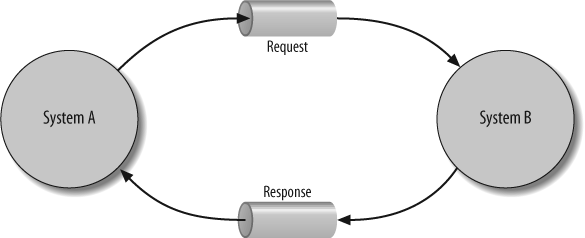

Probably the most well-known example of loose coupling is asynchronous communication (see Figure 4-1). Asynchronous communication usually means that the sender of a message and its receiver are not synchronized. Think of it like sending an email. After you send a message, you can continue your work while waiting for an answer. The recipient might not be available (online) when you send the message. When she comes online, the message gets delivered, and she can process it and send a response if necessary (again, with no requirement that you be available/online when she sends it).

One problem with asynchronous communication occurs when the sender needs a reply. With asynchronous communication, you don’t get replies to your messages immediately. Because you don’t know when (or whether) a reply will arrive, you continue your work and start to perform different tasks. Then, when the response arrives, you have to deal with it in an appropriate way. This means that you have to associate the answer with the original request (e.g., by processing something like a “correlation ID”). In addition, you have to process the reply, which usually requires knowledge of some of the initial state and context when the request was sent. Both correlating the response to the request, and transferring the state from the request to the response, require some effort.

Note

See Chapter 10 for a discussion of different message exchange patterns (MEPs), and “Correlation IDs” for details about correlation IDs.

The situation gets worse when you send a lot of asynchronous messages. The order in which you receive responses might be different from the order in which you sent the messages, and some of the awaited responses might not arrive (or arrive in time). Programming, testing, and debugging all the possible eventualities can be very complicated and time consuming.
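The bookkeeping described above can be illustrated with a minimal sketch. It assumes a hypothetical `PendingRequests` helper that is not part of any particular ESB or messaging product: the consumer stores the context of each request under a generated correlation ID, looks it up when a reply arrives (in whatever order), and periodically clears out requests whose replies never came. Times are passed in explicitly to keep the example deterministic.

```python
import uuid


class PendingRequests:
    """Tracks outstanding asynchronous requests by correlation ID (hypothetical helper)."""

    def __init__(self):
        self._pending = {}  # correlation ID -> (context, deadline)

    def register(self, context, now, timeout):
        """Store the state needed to process the reply later; return the new ID."""
        correlation_id = str(uuid.uuid4())
        self._pending[correlation_id] = (context, now + timeout)
        return correlation_id

    def resolve(self, correlation_id):
        """Return the stored context for a reply, or None if the ID is unknown."""
        entry = self._pending.pop(correlation_id, None)
        return entry[0] if entry else None

    def expire(self, now):
        """Drop requests whose reply did not arrive in time; return their contexts."""
        expired = [cid for cid, (_, deadline) in self._pending.items() if deadline <= now]
        return [self._pending.pop(cid)[0] for cid in expired]


pending = PendingRequests()
first = pending.register({"order": "A-1"}, now=0.0, timeout=5.0)
second = pending.register({"order": "B-2"}, now=1.0, timeout=5.0)
third = pending.register({"order": "C-3"}, now=2.0, timeout=5.0)

# Replies may arrive in a different order than the requests were sent.
context_b = pending.resolve(second)
context_a = pending.resolve(first)
# A reply with an unknown (or already expired) ID cannot be correlated.
unknown = pending.resolve("no-such-id")
# The third reply never arrives; by time 10.0 its request has timed out.
timed_out = pending.expire(now=10.0)
```

Even in this small form, the consumer has three extra cases to program and test: out-of-order replies, replies that cannot be correlated, and replies that never arrive.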

For this reason, one of my customers who has hundreds of services in production has the policy of avoiding asynchronicity whenever a request needs a reply. Having found that debugging race conditions (situations caused by different unexpected response times) was a nightmare, and knowing that maintainability was key in large distributed systems, the customer decided to minimize the risk of getting into these situations. This decision involved a tradeoff because performance was not as good as it might have been with more asynchronous communication.

As this discussion demonstrates, there are two sides to introducing asynchronous communication in SOA (or distributed systems in general):

The advantage is that the systems exchanging service messages do not have to be online at the same time. In addition, if a reply is required, long answering times don’t block the service consumer.

The drawback is that the logic of the service consumer gets (much) more complicated.

Note that when discussing asynchronicity, people do not always mean the same thing. For example, asynchronous communication from a consumer’s point of view might mean that the consumer doesn’t block to wait for an answer, while from an infrastructure’s (ESB’s) point of view, it might mean that a message queue is used to decouple the consumer and provider. Often, both concepts apply in practice, but this is not always the case.

Heterogeneous Data Types

Now, let’s discuss my favorite example of loose coupling: the harmonization of data types over distributed systems. This topic always leads to much discussion, and understanding it is key to understanding large systems.

There is no doubt that life is a lot easier if data types are shared across all systems. For this reason, harmonizing data types is a “natural” approach. In fact, when object-orientation became mainstream, having a common business object model (BOM) became a general goal. But, it turned out that this approach was a recipe for disaster for large systems.

The first reason for the disaster was an organizational one: it was simply not possible to come to an agreement for harmonized types. The views and interests of the different systems were too varied. Because large distributed systems typically have different owners, it was tough to reach agreements. Either you didn’t fulfill all interests, or your model became far too complicated, or it simply was never finished. This is a perfect example of “analysis paralysis”: if you try to achieve perfection when analyzing all requirements, you’ll never finish the job.

You might claim that the solution is to introduce a central role (a systems architect or a “model master”) that resolves all open questions, so that one common BOM with harmonized data types becomes a reality. But then, you’ll run into another fundamental problem: different systems enhance differently. Say you create a harmonized data type for customers. Later, a billing system might need two new customer attributes to deal with different tax rates, while a CRM system might introduce new forms of electronic addresses, and an offering system might need attributes to deal with privacy protection. If a customer data type is shared among all your systems (including systems not interested in any of these extensions), all the systems will have to be updated accordingly to reflect each change, and the customer data type will become more and more complicated.

Sooner or later, the price of harmonization becomes too high. Keeping all the systems in sync is simply too expensive in terms of time and money. And even if you manage to succeed, your next company merger will introduce heterogeneity again!

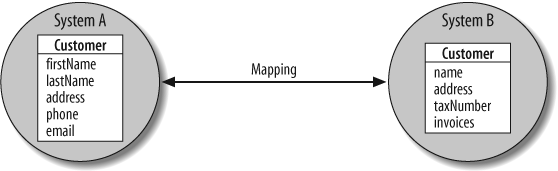

Common BOMs do not scale because they lead to a coupling of systems that is too tight. As a consequence, you have to accept the fact that data types on large distributed systems will not be harmonized. In decoupled large systems, data types differ (see Figure 4-2).

Again, there is a price to pay for this decision: if data types are not harmonized, you need data type mappings (which include technical and semantic aspects). Although mapping adds complexity, it is a good sign in large systems because it demonstrates that components are decoupled.

The usual approach is that a service provider defines the data types used by the services it provides (which might be ruled by some general conventions and constraints). The service consumers have to accept these types. Note that a service consumer should avoid using the provider’s data types in its own source code. Instead, a consumer should have a thin mapping layer to map the provider’s data types to its own data types. See “Using Different Types for Different Versions of a Data Type” for a detailed explanation of why this is important.
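As a rough illustration of such a thin mapping layer, consider the following sketch. All type and field names (`ProviderCustomer`, `Customer`, `to_customer`, and their attributes) are hypothetical and chosen only for this example; the point is that the provider's type is touched in exactly one function.

```python
from dataclasses import dataclass


@dataclass
class ProviderCustomer:
    """Shape of the provider's type (hypothetical; defined by the provider)."""
    cust_no: str
    first_name: str
    last_name: str
    country_code: str
    area_code: str
    local_number: str


@dataclass
class Customer:
    """The consumer's own type, holding only what this consumer needs."""
    customer_id: str
    display_name: str
    phone: str


def to_customer(src: ProviderCustomer) -> Customer:
    """Thin mapping layer: the only place that knows the provider's type."""
    return Customer(
        customer_id=src.cust_no,
        display_name=f"{src.first_name} {src.last_name}",
        phone=f"+{src.country_code} {src.area_code} {src.local_number}",
    )


received = ProviderCustomer("4711", "Ada", "Lovelace", "44", "20", "5550100")
customer = to_customer(received)
```

If the provider later renames or restructures an attribute, only `to_customer` has to change; the rest of the consumer's code keeps working with `Customer`.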

Again, there are two sides to introducing this form of loose coupling in SOA (or distributed systems in general). Having no common business data model has pros and cons:

The advantage is that systems can modify their data types without directly affecting other systems (modified service interfaces affect only corresponding consumers).

The drawback is that you have to map data types from one system to another.

Note that you will need some fundamental data types to be shared between all applications. But to promote loose coupling, fundamental data types harmonized for all services should usually be very basic. The most complicated common data type I’ve seen a phone company introduce in a SOA landscape was a data type for a phone number (a structure/record of country code, area code, and local number). The attempt to harmonize a common type for addresses (customer addresses, invoice addresses, etc.) failed. One reason was an inability to agree on how to deal with titles of nobility. Another reason was that different systems and tools had different constraints on how to process and print addresses on letters and parcels.

Note

If you are surprised about this low level of harmonization, think about what it means to modify a basic type and roll out the modifications across all systems at the same time (see “Deployment and Robustness” for details). In practice, fundamental service data types must be stable.

Does this mean that you can’t have harmonized address data types in a SOA? Not necessarily. If you are able to harmonize, do it. Harmonization helps. However, don’t fall into the trap of requiring that data types be harmonized. This approach doesn’t scale.

If you can’t harmonize an address type, does this mean that all consumers have to deal with the differences between multiple address types? No. The usual approach in SOA is to introduce a composed service that allows you to query and modify addresses (composed services are discussed in Chapter 6). This service then deals with differences between the backend systems by mapping the data appropriately.

Note that with this approach, there’s still no need to have one common view of addresses. If you get new requirements, you can simply introduce a second address service mapping the additional attributes to the different backends. Existing consumers that don’t share the additional requirements will not be affected.
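A composed address service of this kind might look roughly like the sketch below. The two backend lookup functions, their field names, and the sample records are invented for illustration; real backends would be called through their own service interfaces.

```python
def crm_backend_lookup(customer_id):
    """Hypothetical CRM backend: keeps the street as one free-text line."""
    data = {"42": {"street_line": "12 Main Street", "zip": "10004", "town": "New York"}}
    return data.get(customer_id)


def billing_backend_lookup(customer_id):
    """Hypothetical billing backend: separate street and house number fields."""
    data = {
        "77": {"street": "Hauptstrasse", "house_no": "5",
               "postcode": "38100", "city": "Braunschweig"}
    }
    return data.get(customer_id)


def get_address(customer_id):
    """Composed service: hides the backend differences from its consumers."""
    record = crm_backend_lookup(customer_id)
    if record is not None:
        return {
            "street": record["street_line"],
            "postal_code": record["zip"],
            "city": record["town"],
        }
    record = billing_backend_lookup(customer_id)
    if record is not None:
        return {
            "street": f'{record["street"]} {record["house_no"]}',
            "postal_code": record["postcode"],
            "city": record["city"],
        }
    return None
```

Consumers of `get_address` see a single address shape. A second composed service with additional attributes could be added next to it without touching this one.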

Mediators

A third form of loose coupling has to do with how a service call performed by a consumer finds the provider that has to process this request. With a “point-to-point” approach, the sender sends the request to one specific physical system using its physical address. This is like sending a letter to a specific postal address, such as 42 Broadway in New York, NY.

This is a tightly coupled approach. What happens if the receiver moves house? What happens if the receiver is out of order, or is getting flooded with too many messages? Mechanisms for failover and load balancing are required. That is, you need some kind of intermediary to switch between different physical receivers.

In principle, there are two kinds of mediators:

The first type tells you the correct endpoint for your service call before you send it. That is, you still have point-to-point connections, but with the help of these mediators, you send your service calls to different addresses. Such a mediator is often called a broker or name server. You ask for a service using a symbolic name, and the broker or name server tells you which physical system to send your request to. This form of mediation requires some additional intelligence at the consumer site.

The second type chooses the right endpoint for a request after the consumer sends it. In this case, the consumer sends the request to a symbolic name, and the infrastructure (network, middleware, ESB) routes the call to the appropriate system according to intelligent routing rules.
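The difference between the two kinds of mediators can be sketched as follows. The class names, the registry contents, and the round-robin rule are assumptions made for this example; real brokers and ESBs offer far richer lookup and routing rules.

```python
import itertools


class NameServer:
    """First kind: the consumer asks for an endpoint before sending."""

    def __init__(self, registry):
        self._registry = registry  # symbolic name -> list of physical addresses

    def lookup(self, service_name):
        # The consumer receives a physical address and connects to it itself.
        return self._registry[service_name][0]


class Router:
    """Second kind: the infrastructure picks the endpoint after the consumer sends."""

    def __init__(self, registry):
        # Round-robin is just one possible routing rule.
        self._cycles = {name: itertools.cycle(addrs) for name, addrs in registry.items()}

    def send(self, service_name, request):
        endpoint = next(self._cycles[service_name])
        return f"{endpoint} handled {request}"


registry = {"CustomerService": ["host-a:8080", "host-b:8080"]}

# Kind 1: the consumer needs extra logic to look up and then call the endpoint.
endpoint = NameServer(registry).lookup("CustomerService")

# Kind 2: the consumer only knows the symbolic name.
router = Router(registry)
replies = [router.send("CustomerService", f"request-{n}") for n in range(3)]
```

With the router, the consumer never learns which physical system handled a request, so providers can be added, moved, or removed without any change on the consumer side.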

In practice, very different flavors of both forms of mediation occur. For example, there are service buses that send messages using a broadcasting approach, so that the sender sends a request to a logical receiver, and any of the different providers providing the requested service can process the call. On the other hand, Web Services are technically point-to-point connections, where you typically use a broker to find the physical endpoint of a request, and/or you insert some so-called interceptors that route your request at runtime to an available provider.

Chapter 5 will discuss details of how to deal with mediation in an ESB.

Weak Type Checking

Another good example of the complexity of loose coupling has to do with the question of whether and when to check types. Most of us have probably learned that the earlier errors are detected, the better. For this reason, programming languages with type checking seem to be better than those without (because they detect possible errors at compile time rather than runtime).

However, as systems grow, things change. The problem is that type checking takes time and requires information. In order for the SOA infrastructure (ESB) to check types, it needs to have some information about those types. If, for example, types are described using XML, the ESB will need the corresponding XML schema file(s). As a consequence, any modifications of the interface will not only affect both the provider and the consumer(s), but also the ESB. This means that mechanisms and processes will be required to synchronize updates with the ESB. And if the ESB uses adapters for each provider and consumer, you might have to organize the deployment of these updates to all adapters. This is possible, but it leads to tighter dependencies than a policy where interface modifications affect only providers and consumers. For this reason, it might be a good idea to make the ESB generic. In general, interface changes should only affect those who use the interface as a contract, not those who transfer the corresponding data. If the Internet had had to validate the correctness of interfaces, it would never have been able to scale.

Now, you might come to the conclusion that you should always prefer generic interfaces to strict type checking in large systems. The extreme approach would be to introduce just one generic service for each system, so that the service interfaces never change: all you change are implementations against the interface. Note, however, that at some stage, your system will have to process data, and whether you can rely on that data being to some extent syntactically correct will affect your code. So, in places where business data gets processed, strong type checking helps. The only question is how stable the data is.

Say, for example, that your services exchange some string attributes. If the attributes are pretty stable, a typed service API that specifies each attribute explicitly is recommended. When a new attribute comes into play, you can introduce a new (version of the) service with the additional attribute. On the other hand, if the attributes change frequently, a key/value list might make sense. The right decision to make here depends on so many factors that a general rule is not useful. For these kinds of scenarios, you have to find the right amount of coupling as the system evolves. Note, however, that explicit modeling of attributes makes it easier to write code that processes the data (for example, you can easily map data in different formats when composing services in so-called orchestration engines). On the other hand, having no generic approach would be a very bad mistake when binary compatibility is a must. For example, a technical header provided for all service messages should always have some ability to be extended over the years without breaking binary compatibility (see “Dealing with Technical Data (Header Data)”).
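The tradeoff between a typed API and a key/value list can be made concrete with a small sketch. The request type, attribute names, and handler functions below are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ContractRequest:
    """Typed variant: each attribute is part of the service interface."""
    customer_id: str
    tariff: str


def handle_typed(request: ContractRequest) -> str:
    # The attributes are guaranteed to exist; adding one means a new service version.
    return f"{request.customer_id}:{request.tariff}"


def handle_generic(attributes: dict) -> str:
    # Key/value variant: the interface never changes, so the checks move into the code.
    missing = [key for key in ("customer_id", "tariff") if key not in attributes]
    if missing:
        raise ValueError(f"missing attributes: {missing}")
    return f"{attributes['customer_id']}:{attributes['tariff']}"


typed_result = handle_typed(ContractRequest("4711", "flat"))
# Extra attributes pass through the generic interface without an interface change.
generic_result = handle_generic({"customer_id": "4711", "tariff": "flat", "promo": "x"})
```

The generic handler accepts new attributes without a new interface version, but every consumer of the data has to validate what it receives at runtime.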

Binding

Binding (the task of correlating symbols of different modules or translation units) allows a form of loose coupling that is similar to weak type checking. Again, you have to decide between early binding, which allows verification at compile or start time, and late binding, which happens at runtime.

Platform Dependencies

In Table 4-1, there are two forms of coupling that deal with the question of whether or not certain platform constraints apply. Making a decision about the general one, platform dependencies, is easy. Of course, you have more freedom of choice if platform-independent solutions are preferred over strong platform dependencies. The second form is discussed next.

Interaction Patterns

A special form of platform dependencies has to do with which interaction patterns are used in service signatures (i.e., which programming paradigms are provided to design service interfaces). Which are the fundamental data types, and how can you combine them? A wide range of questions must be considered:

Are only strings supported, or can other fundamental data types (integers, floats, Booleans, date/time types, etc.) be used?

Are enumerations (limited sets of named integer values) supported?

Can you constrain possible values (e.g., define certain string formats)?

Can you build higher types (structures, records)?

Can you build sequence types (arrays, lists)?

Can you design relations between types (inheritance, extensions, polymorphism)?

The more complicated the programming constructs are, the more abstractly you can program. However, you will also have more problems mapping data to platforms that have no native support for a particular construct.

Based on my own experience (and that of others), I recommend that you have a basic set of fundamental types that you can compose to other types (structures, records) and sequences (arrays). Be careful with enumerations (an anachronism from the time when every byte counted; use strings instead), inheritance, and polymorphism (even when XML supports it).

In general, be conservative with types, because once you have to support some language construct, you can’t stop doing so, even if the effort it requires (including the ability to log and debug) is very high. For more on data types, see “Data Types.”

Compensation

Compensation is an interesting form of loose coupling. It has to do with the question of transaction safety. If you have to update two different backends to be consistent, how can you avoid problems that occur when only one update is successful, resulting in an inconsistency? The usual approach to solving this problem is to create a common transaction context using a technique such as two-phase commit (2PC). With this approach, you first perform all the modifications on both backends, except for the final “switch to the updated data”; then, if no system signals a problem, the final commit performs the update on both systems.

2PC is one of the most overhyped attributes of middleware. Whenever there is an evaluation of middleware, the question of whether 2PC is supported arises. However, in practice, 2PC is rarely used in large systems because all the backends have to support it, it requires some programming effort, and it binds resources. The main problem is that all systems have to be online, and have to provide resources until the modifications are complete on the last system. Especially when there is concurrent data access, this can lead to delays and deadlocks.

A more loosely coupled way to ensure overall consistency is compensation. In this approach, you modify the first backend, and then modify the second backend; if only one modification is successful, you then “compensate” for the problem appropriately. For example, you might revert the successful modification to restore the consistent situation that existed before the modifications began, or send a problem report to an error desktop where somebody can look into the details and deal with it manually.

The advantage of compensation is that system updates don’t have to be performed synchronously (some backends might even be offline while they are being updated). The drawback is that you have to explicitly provide and call services that revert previous services or programs for manual error handling.
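A minimal sketch of compensation, assuming two hypothetical backends that each offer an update service and a reverting service. Here the failure handling both reverts the first update and files a report for manual follow-up; in practice you might choose only one of the two.

```python
class Backend:
    """Hypothetical backend offering an update service and a reverting service."""

    def __init__(self, name, fail=False):
        self.name = name
        self.fail = fail
        self.data = {}

    def update(self, key, value):
        if self.fail:
            raise RuntimeError(f"{self.name} unavailable")
        previous = self.data.get(key)
        self.data[key] = value
        return previous

    def revert(self, key, previous):
        # Simplification: None means "no previous value existed".
        if previous is None:
            self.data.pop(key, None)
        else:
            self.data[key] = previous


def update_both(first, second, key, value, error_desk):
    """Update two backends in sequence; compensate if the second update fails."""
    previous = first.update(key, value)
    try:
        second.update(key, value)
    except RuntimeError as error:
        first.revert(key, previous)           # compensating service call
        error_desk.append((key, str(error)))  # report for manual follow-up
        return False
    return True


crm = Backend("crm")
crm.data["42"] = "old address"
billing = Backend("billing", fail=True)
errors = []
succeeded = update_both(crm, billing, "42", "new address", errors)
```

Unlike 2PC, neither backend holds locks while waiting for the other, but between the first update and its reversal other readers may briefly see the new value.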

BPEL, the process execution language of Web Services, directly supports compensation (see “BPEL”).

Control of Process Logic

Process-control decisions can also lead to different forms of coupling. Having one central component controlling the whole process logic creates a bottleneck because each involved system must connect with it. Failure of such a central component will stop the whole process.

On the other hand, if you have decentralized or distributed control (where each component does its job and knows which component will continue after it), you avoid bottlenecks, and if some systems fail, others can still continue to work. See “Orchestration Versus Choreography” for details.

Deployment

Whether you require that system updates be deployed simultaneously, or at different times, is related to coupling. Of course, systems are bound more tightly to each other if it is required that they update synchronously. The more loosely coupled approach of updating at different times, however, leads to a very important drawback: the need for migration, which leads to versioning (see Chapter 12).

Versioning

Your versioning policy also has something to do with tight or loose coupling. If a system provides certain data types that are used by a consumer, you’ll have problems when the provider adds new attributes. If the provider introduces a new type version, the consumer will have to upgrade explicitly to this new type; otherwise, the provider will have to support both types. If, on the other hand, the provider just adds the attribute to the existing type, this might cause binary compatibility issues, and require the consumer to recompile its code or use another library.

With a more loosely coupled form of data type versioning, the consumer won’t have to do anything as long as the modifications are backward compatible.
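One way to picture this is a consumer that reads only the attributes it knows and ignores everything else. This is a sketch under the assumption that messages arrive as key/value structures; the field names are hypothetical.

```python
KNOWN_FIELDS = ("customer_id", "name")


def read_customer(message: dict) -> dict:
    """Consumer-side reader: picks the attributes it knows and ignores the rest."""
    return {field: message[field] for field in KNOWN_FIELDS}


old_message = {"customer_id": "4711", "name": "Ada Lovelace"}
# The provider later adds an attribute without introducing a new type version.
new_message = {"customer_id": "4711", "name": "Ada Lovelace", "tax_region": "EU"}

before_upgrade = read_customer(old_message)
after_upgrade = read_customer(new_message)
```

The consumer behaves identically before and after the provider's change. This only holds for backward-compatible modifications: removing or renaming `name` would still break it.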

However, as discussed in “Versioning of Data Types,” achieving loose coupling here can be very complicated. Again, it’s up to you to decide on your policy by discussing the pros and cons.

Dealing with Loose Coupling

The forms of loose coupling discussed in this chapter are only some (more or less typical) examples. Again, note that there are no hard and fast rules: you will have to decide on the appropriate amount of loose coupling for your specific context and architecture.

I have seen very different decisions made with regard to different types of coupling. As I mentioned earlier, the policy of one of my customers was to avoid asynchronous communication whenever possible, based on the experience that it led to race conditions at runtime that were very hard, or even impossible, to reproduce in a development environment, and therefore almost impossible to fix. Another customer in the same domain had a policy that synchronous calls were allowed only for reading service calls because the performance was not good enough for writing service calls.

Note that you might also have to decide about combinations of forms of loose coupling. For example, one important decision you’ll have to make is whether an ESB should be separated from a backend via a protocol, or via an API (see “Protocol-Driven Versus API-Driven ESB”). Separating via an API usually means that the ESB provides libraries each backend or backend adapter has to use. So, deployment and binding become issues. On the other hand, using a common API, you can hide some aspects of synchronous or asynchronous communications inside the ESB.

You might ask which forms of loose coupling are typical. To the best of my knowledge, there is no answer. All I can say is that the larger systems are, the more loosely they should be coupled.

Summary

Loose coupling is a fundamental concept of SOA (and large distributed systems in general) aimed at reducing dependencies between different systems.

There are different forms of loose coupling, and you will have to find the mixture of tight and loose coupling that’s appropriate for your specific context and project.

Any form of loose coupling has drawbacks. For this reason, loose coupling should never be an end in itself.

The need to map data is usually a good property of large systems.
