Chapter 4. Qualitative Research Methods

Quantitative research is the foundation of research. The stories from Chapter 1 illustrate how numbers affect efficiency and how worker flow creates an opportunity to innovate in product design. As efficiencies in technology reach a predictable flow, designers seek to do more than streamline tasks. They ask what drives people to do the work they do and what makes it enjoyable. Enter qualitative research.

Qualitative Research: Can You Feel It?

Qualitative research is the study of anything that is subjective, notably the personal stories and challenges of our customers. Where quantitative research focuses on what can be measured, such as the time to complete a task, qualitative research looks at why customers are completing the task in the first place. Qualitative research seeks to understand customers’ motivations and desires by focusing on comprehension and accessibility that might not be numerically measured, but can nevertheless impact usability and desirability of systems.

Some common measures of qualitative research include:

Pleasures or challenges of a task

Preferences for different tools

Comprehension of content

Comfort with system or task

Workarounds, hacks, and “MacGyvered” solutions

This list differs dramatically from the one in Chapter 3. These factors cannot be statistically measured, yet qualitative research can still provide insight into them, often with smaller data sets.

Where Do I Find Qualitative Research?

Qualitative research has roots in ethnography, anthropology, and psychology. The study of how humans behave is, at its core, qualitative research. Compared to the large pools of users researchers rely on to collect quantitative data, qualitative research often relies on smaller participant groups. Projects may have as few as 5–10 participants or, for projects with a broad scope and range of user profiles, upward of 20–40 people.

Qualitative research provides insight into individuals’ motivations as well as how they feel toward a product or service. This research provides contextual clues otherwise obscured by data or technology. Take the example of the good old VCR (Figure 4-1). As many readers might recall, VCRs came with a clock display that often flashed 12:00, especially after a power outage. A product designer might quantitatively measure the time required to set up the clock, and would be very disappointed to learn that the time on task is very high and the task is often left incomplete. While informative, this quantitative data set does not reflect the time spent reading the VCR manual or arguing with family members about how to set up this device. Only qualitative research can provide this degree of context and situational awareness. This information, in conjunction with quantitative data, can inform unique design solutions.

What Qualitative Research Is Not

Qualitative research provides the why and the how of human behavior that quantitative methods do not provide. Unlike quantitative findings, these are softer details that a spreadsheet does not capture. That isn’t to say that qualitative research should stand apart from quantitative research. In fact, the best projects often succeed by integrating practices from both sides of the research spectrum.

Three Focuses of Research, Revisited

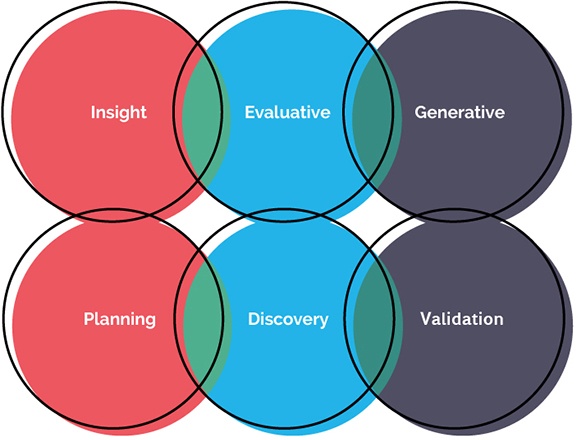

In the previous chapter we defined quantitative research methods as being insight-driven, evaluative, and generative. While this is true for qualitative research methods as well, we want to provide equal time to both ways of defining research—by phase and by type. This chapter will explore research techniques primarily by phase: initial planning, discovery and exploration, and product testing and validation (Figure 4-2).

Initial Planning

Initial planning often goes hand in hand with scoping. For those working in-house on internal products or at a startup, this might be the phase where the market needs for a product are evaluated.

Due to the limited scope of this phase, quantitative research is often too expensive or time-consuming to conduct. After all, in a consulting environment scoping exists before a client agrees to a project cost, and it’s in everyone’s best interest to be sensitive to timelines and to use existing knowledge to form educated guesses. Fortunately, there are low-cost methods for quickly evaluating a project’s domain.

Landscape analysis

Landscape analysis, a form of insight-driven research, is one of the most common forms of qualitative research at your disposal. Essential to initial discovery and planning, it requires a minimum amount of time and is crucial to understanding the market you’re designing for.

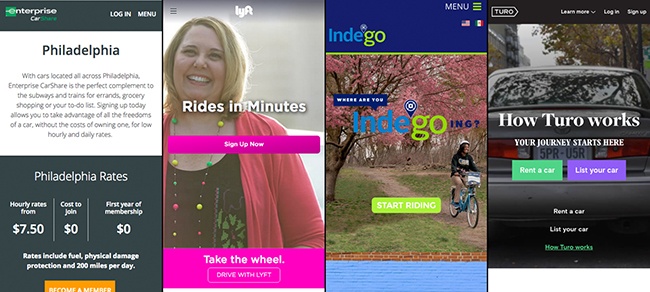

For a landscape analysis, designers identify existing products or services that reflect a portion of the new product’s functions or customer segmentation. For instance, if you are designing the newest transportation service, reviewing how Uber, Lyft, and various car-share services work is essential. It might also be important to evaluate nontraditional services, such as bike-share and carpooling services, and even public transit. By conducting this low-level analysis, the team can identify broad gaps or opportunities for their product (Figure 4-3).

Landscape analysis is not without its limitations. Often done in isolation from business stakeholders and customers, landscape analysis provides a biased view of what the product team identifies as important. It is also limited to what is publicly visible.

Heuristic reviews

Heuristic reviews are the next level of insight-driven research. Performed either at the end of presales or immediately after the project starts, a heuristic review evaluates an existing product or service based on an established set of heuristics, or best practices.

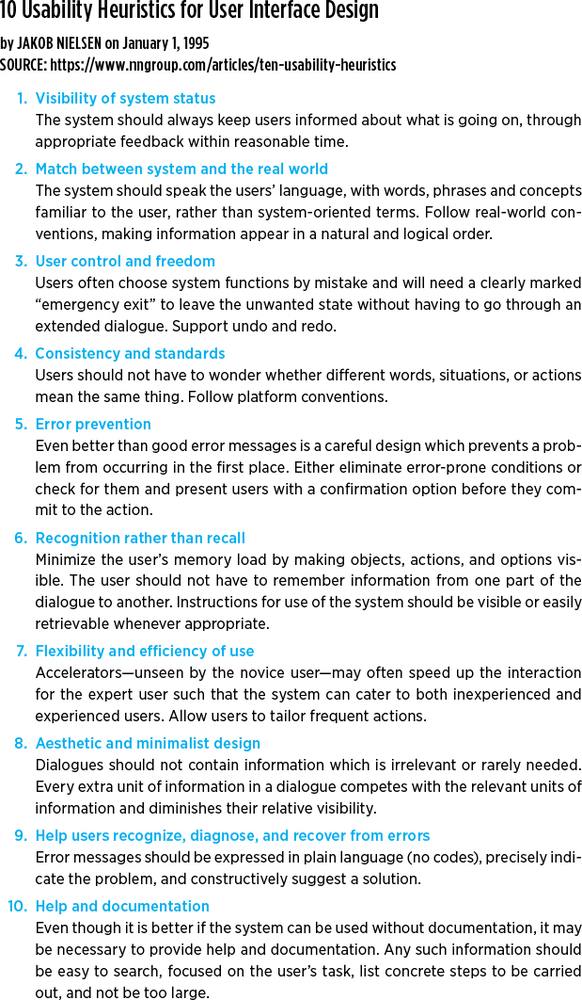

While there are many flavors of heuristics, we recommend choosing from the following list, which offers a wide variety of web and human behaviors to measure against as well as a balance of technical and emotive needs (Figure 4-4):

Jakob Nielsen’s 10 Usability Heuristics (https://www.nngroup.com/articles/ten-usability-heuristics)

Abby Covert’s IA Heuristics (http://abbytheia.com/2012/02/04/ia_heuristics)

Weinschenk and Barker Classification of Heuristics (https://en.wikipedia.org/wiki/Heuristic_evaluation#Weinschenk_and_Barker_classification)

Gerhardt-Powals’ Cognitive Engineering Principles (https://en.wikipedia.org/wiki/Heuristic_evaluation#Gerhardt-Powals.E2.80.99_cognitive_engineering_principles)

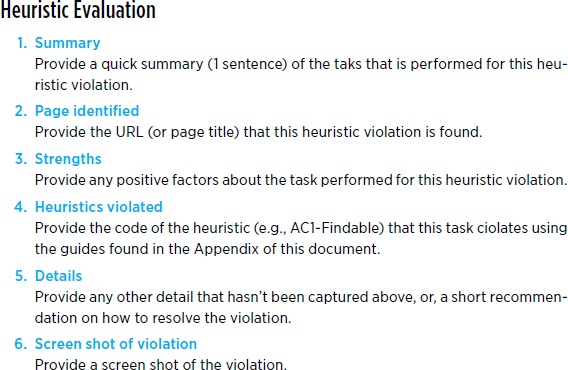

Heuristic evaluations seek to identify opportunities and gaps in an existing workflow. While they can be performed with competitor products, it is best to evaluate the product you are looking to improve. While there is no single way to craft a heuristic review, Figure 4-5 illustrates one approach.

Discovery and Exploration

The discovery and exploration phase is often the focus of product teams. Determining the idea and exploring various avenues and solutions seems to be the most exciting part of our work. Often in line with generative stages of research, discovery offers insights that were previously unobtainable.

Contextual inquiry

Contextual inquiries—also known by names like think-aloud studies and ride-along studies—are easily the most common version of discovery and exploration.

At their core, contextual inquiries are the study of how people perform one or more tasks in context. Redesigning a risk assessment tool for financial traders? Go to the trading floor and sit with the investors. Designing a new method to handle electronic health records? Visit a physician’s office to see how nurses, doctors, and office managers handle data. The goal of contextual inquiries is to be a fly on the wall and observe tasks with as little interference or bias as possible. Where quantitative research methods are conducted passively, contextual inquiries require one-on-one attention to participants and a larger time investment, and must be conducted in person (Figure 4-6).

While the goal of contextual inquiries is to be as unobtrusive as possible, questions are highly encouraged. Contextual inquiries start with a prompt to participants to “think aloud” about what they are doing. This provides context of actions as well as immediate, observable feedback on their tasks. Researchers often probe, asking questions of the participants to gain deeper understanding (with the caveat that if something is time-sensitive or important, questions can be revisited later).

Contextual inquiries can be conducted with as few as 5 or as many as 50 participants, depending on the project scope. This is a major distinction from quantitative research, where large data pools are the only way to guarantee good data. Contextual inquiries instead rely on trends and the researcher’s experience and ability to make judgment calls about what is important. A good rule of thumb: as you start to hear the same information again and again, you have conducted enough research.
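The rule of thumb above can be made a little more concrete. What follows is a minimal sketch rather than a method from this chapter: it assumes each session has already been coded into a set of theme labels, and it treats "hearing the same information again and again" as a run of sessions that add no new theme. The function name `sessions_until_saturation`, the `patience` threshold, and the theme labels are all hypothetical.

```python
from typing import Iterable, Optional, Set


def sessions_until_saturation(
    sessions: Iterable[Set[str]], patience: int = 2
) -> Optional[int]:
    """Return the 1-based session number at which saturation is reached.

    Saturation is an assumed working definition here: `patience`
    consecutive sessions that add no theme not already heard.
    Returns None if that point is never reached.
    """
    seen: Set[str] = set()
    quiet_streak = 0  # consecutive sessions with nothing new
    for number, themes in enumerate(sessions, start=1):
        new_themes = themes - seen
        seen |= themes
        quiet_streak = 0 if new_themes else quiet_streak + 1
        if quiet_streak >= patience:
            return number
    return None


# Hypothetical theme labels coded from five contextual inquiries.
coded_sessions = [
    {"manual is confusing", "asks family for help"},
    {"manual is confusing", "ignores the clock"},
    {"asks family for help"},
    {"ignores the clock", "manual is confusing"},
    {"new remote is easier"},
]
print(sessions_until_saturation(coded_sessions))  # prints 4
```

A check like this only supports the researcher's judgment. In the sample data a fifth session still surfaces a new theme, which is why the threshold should be treated as a prompt to review the findings, not as a stopping rule.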

Product Testing and Validation

The most important stage of any product design is testing and validation. In common product development cycles, teams move between validation and discovery in a fluid manner, constantly improving concepts. Product testing is most closely tied to evaluative research and also informs generative research.

Moderated product validation

In an ideal world, product validation is conducted in a moderated setting where a researcher is able to meet directly with users of the system. This allows moderators to adjust testing criteria on the fly and ask probing questions as needed.

Moderated product validation often involves inviting participants to a lab, or meeting them in their place of work. They will be instructed to follow a series of specific tasks and, as with contextual inquiries, will be prompted to speak their thought process aloud.

In this way, teams observe in real time how their product behaves with customers and can identify opportunities for improvements. Combining these sessions with quantitative tests such as A/B tests gives the team a holistic view of the system.

Unmoderated product validation

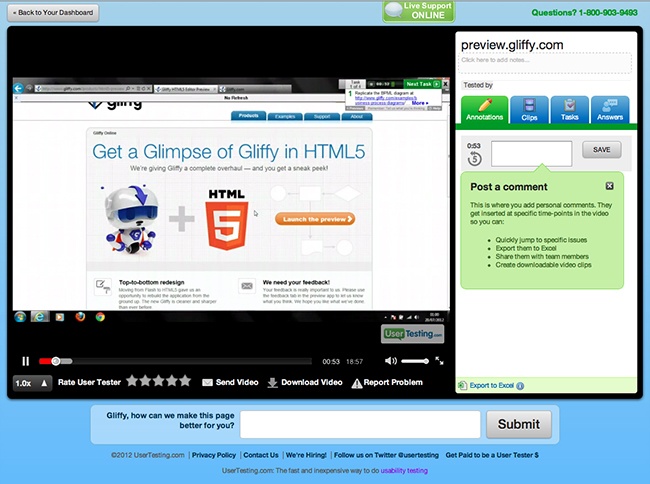

It is not always affordable or practical to invite participants to your office. In the case of global products, traveling to different locations may be cost-prohibitive. Tools like User Testing (https://www.usertesting.com) provide a great way to quickly and affordably set up remote, unmoderated product testing. While limited to services that can be hosted on the Web, User Testing and similar tools allow moderators to upload a script of tasks. Participants then log in and, using their device’s microphone, can think aloud as they walk through predetermined tasks. Screen recordings in conjunction with the audio make a good alternative to moderated testing (Figure 4-7).

While lower cost and often quicker to deliver feedback, unmoderated validation is limited in that the script is predefined and a moderator cannot adjust a question based on a unique perspective or lessons from previous tests. Unmoderated testing requires additional diligence in crafting questions in order to ensure they are not leading or too prescriptive. A third option for product validation is remote moderated testing, which combines real-time customer interaction and screen-sharing tools such as Skype, Join.Me, or GoToMeeting.

Participatory Design

Participatory design takes on many shapes and flavors. This may be as simple as a workshop brainstorming ideas and opportunities, or a more formal sketching exercise. Participants may be asked to sketch actual interfaces or adapt their mental model in visual ways.

Participatory design often takes place with business stakeholders, though it can be used at any phase of research. Collaborative exercises may occur at the end of a contextual inquiry, the closing question of a validation session, or anywhere else the product team has opportunities to collaborate with stakeholders and customers.

Additional Methods

Just as there were more quantitative research methods than could be covered in a single chapter, the breadth of qualitative research spans beyond these pages. Table 4-1 summarizes the methods just covered plus a few additional methods we find particularly helpful.

| Method name | Description | Planning | Discovery | Validation |
| --- | --- | --- | --- | --- |
| Card sorting | A similar method to quantitative card sorting with smaller pools of available customers. | X | X | |
| Contextual inquiry | An ethnographic approach of following customers in their environment and observing their use of a product or system. | X | X | |
| Diary study | A method, either digital or analog, where customers track their own use and behaviors with a system. | X | X | |
| Heuristic evaluation | An expert review of a system based on established or accepted benchmarks. | X | X | |
| Landscape analysis | A review of similar or competing products within an industry. | X | X | |
| Moderated product validation | Similar to quantitative product validation with smaller data sets and a more ethnographic approach. | | | X |
| Participatory design | Methods of eliciting design and strategic understanding from customers and stakeholders. | X | X | |
| Stakeholder workshop | Methods of guiding conversations with business stakeholders on goals and opportunities of a system. | X | X | |
| Surveys | More informal than quantitative surveys. | X | X | |
| Taxonomy review | Differs from quantitative reviews in that it may be conducted by system experts as an initial evaluation. | X | X | |
| Unmoderated product validation | Similar to quantitative product validation but with smaller data sets and a more ethnographic approach. | | | X |

Qualitative Methods: When and Where

There is no decision tree for when qualitative methods should be used in place of quantitative methods. In fact, both should be used in parallel. Qualitative methods prove effective when there is a small, identifiable population of customers. For new and novel products where quantitative research might be sparse, qualitative research provides exceptional insights and opportunities. Lastly, qualitative research is a great way to show business stakeholders firsthand what customers are feeling.

Qualitative Methods: When to Avoid

One of the major hurdles qualitative research has is that it is a “soft science.” Because it’s not based in numbers like its sibling, quantitative research, many business stakeholders don’t want to rely on qualitative research alone. To address this, invite stakeholders to observe and participate in qualitative research so they might experience the “aha” moments directly. This turns them into allies and supporters rather than blockers when you’re discussing findings with the broader client team. It is important to know your audience and what type of results they are looking for when choosing between quantitative and qualitative research.

Qualitative and Quantitative: A Match Made in Heaven

Quantitative and qualitative research methods are equals, not opposites. You cannot have one without the other. The most successful projects balance the two and use both to inform product designs.

Just as life imitates art, qualitative and quantitative research inform and imitate each other. There is not a single artifact in product design that you won’t improve by integrating both of these techniques.

Data-Driven Personas

Personas are fictional customers you create to represent various user types. These may include the call center representative, the tech native, or the Luddite. Traditional market segments are typically focused on the numbers (age, gender, geography) of customers. Classical personas, by contrast, may be generated from a handful of contextual inquiries with no hard data grounding them.

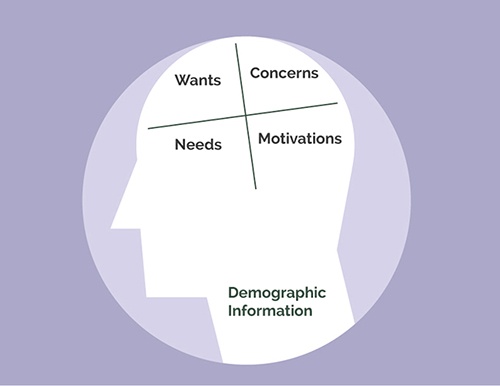

Data-driven personas balance this. By combining the analytical data about who’s using a system with the data on users’ wants, needs, and motivations you’ve uncovered through contextual inquiries, you can establish well-rounded data-driven personas.

Figure 4-8 illustrates a common structure for developing data-driven personas.
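For teams that keep their research notes in a structured form, the combination described above can be sketched in a few lines of code. This is an illustrative sketch only, not the structure shown in the figure: the `Persona` class, the `build_personas` function, the segment counts, and the interview notes are all hypothetical. It pairs each analytics segment (the quantitative side) with the motivations heard in contextual inquiries (the qualitative side).

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Persona:
    name: str
    share_of_users: float  # quantitative: fraction of users in analytics
    motivations: List[str] = field(default_factory=list)  # qualitative


def build_personas(
    segment_counts: Dict[str, int],
    interview_notes: Dict[str, List[str]],
) -> List[Persona]:
    """Pair each analytics segment with motivations from interviews."""
    total = sum(segment_counts.values())
    if total == 0:
        return []
    personas = [
        Persona(
            name=segment,
            share_of_users=round(count / total, 2),
            motivations=interview_notes.get(segment, []),
        )
        for segment, count in segment_counts.items()
    ]
    # Largest segments first, so the team sees who dominates usage.
    return sorted(personas, key=lambda p: p.share_of_users, reverse=True)


# Hypothetical inputs: user counts from analytics, notes from inquiries.
segment_counts = {"Luddite": 100, "call center representative": 600, "tech native": 300}
interview_notes = {
    "call center representative": ["wants fewer clicks per call"],
    "tech native": ["expects keyboard shortcuts"],
}
personas = build_personas(segment_counts, interview_notes)
for persona in personas:
    print(persona.name, persona.share_of_users, persona.motivations)
```

A segment with usage data but no interview notes, like the Luddite here, shows up with an empty list of motivations. That gap is a useful signal of where more qualitative research is needed.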

Data-Driven Customer Journeys

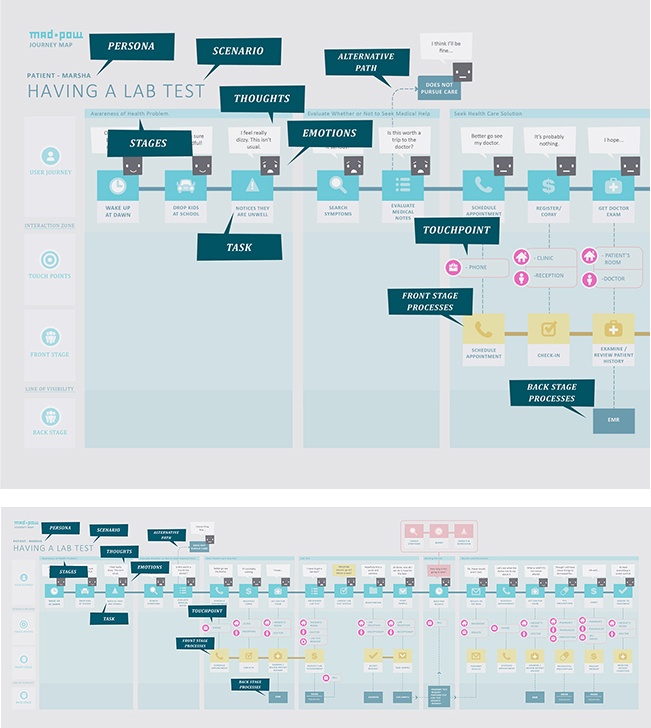

Customer journeys are often created to illustrate the touchpoints a customer has throughout a process. This may be inclusive of an entire ecosystem, from researching cars online to entering an auto dealership to purchasing the car and making payments. It may also be more specific, such as onboarding for a new piece of technology.

Quantitative customer journeys provide only the steps a customer takes. A qualitative customer journey focuses primarily on emotions. Combining data from both sources allows you to create data-driven customer journeys that account for real task time and latency with awareness of human needs. These can be used as baselines for KPIs and establishing longer-term roadmaps (Figure 4-9).
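One way to picture the combination is a small sketch that places observed task times next to researcher-coded sentiment for each step. Everything here is an assumption made for illustration: the `JourneyStep` and `build_journey` names, the -1/0/+1 sentiment coding, the rule that a negative average marks a pain point, and the sample numbers.

```python
from statistics import mean, median
from typing import Dict, List, NamedTuple


class JourneyStep(NamedTuple):
    name: str
    median_minutes: float  # quantitative: observed time on the step
    mean_sentiment: float  # qualitative: sessions coded -1, 0, or +1
    pain_point: bool       # assumed rule: average sentiment below zero


def build_journey(
    times: Dict[str, List[float]],
    sentiments: Dict[str, List[int]],
) -> List[JourneyStep]:
    """Combine per-step timings with per-step sentiment codes.

    Steps keep the order in which they appear in `times`, and each
    step is assumed to have at least one timing.
    """
    journey = []
    for name, minutes in times.items():
        scores = sentiments.get(name, [])
        average = mean(scores) if scores else 0.0
        journey.append(JourneyStep(name, median(minutes), average, average < 0))
    return journey


# Hypothetical data for three steps of a car-buying journey.
times = {
    "research cars online": [30, 45, 60],
    "visit dealership": [90, 120, 150],
    "purchase and financing": [60, 75, 200],
}
sentiments = {
    "research cars online": [1, 1, 0],
    "visit dealership": [-1, -1, 0],
    "purchase and financing": [-1, 0, 1],
}
journey = build_journey(times, sentiments)
print([step.name for step in journey if step.pain_point])
# prints ['visit dealership']
```

The median is used for timing so that a single unusually long session does not distort the baseline. A real project would choose the summary statistic and the sentiment scale to suit its own data.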

Data-Driven Design

Data-driven design is the term ascribed to making design decisions with actual data. Rather than basing decisions on customer feedback or design intuition alone, with data-driven design you use system analytics and other data from quantitative research to inform design. There is no single way to integrate quantitative research into design, though we recommend befriending your analytics team and discussing where opportunities to collaborate may exist.

Exercise: Getting the Feel for Qualitative Research

Qualitative research can be a time-consuming process. In order to best understand the opportunities for qualitative research methods in your work, follow this simple exercise to identify the type of research and goals for an existing project.

1. Choose a method.

From the list of methods illustrated in this chapter, select one that you are particularly interested in. Try to identify a method you might not have encountered in a past project.

2. Write down why you chose this method.

Think of a project you are currently working on. On a sheet of paper, list out what you hope to gain from this method. What questions do you have about a project that would be best answered by direct interaction with users?

3. Bring in data.

For many teams, quantitative data is available that can help inform the questions you are asking. If this is true for you, on the same sheet of paper, list the quantitative data sources that might be available to support the qualitative method you selected in step 1.

Parting Thoughts

Qualitative research is often seen as the bread and butter of product design. It doesn’t require statistical analysis and employs softer skills that many professionals pride themselves on having. While soft skills and qualitative studies are important, it is equally important to understand when and how to use them. All too often a designer knows only one method and tries to fit that approach into every project. Rather than fit the square peg in the round hole, familiarize yourself with as many methods as possible, and only then focus on a few to become truly proficient in.

With this balance in mind, the next chapter will describe an approach for choosing between quantitative and qualitative research methods. The book will then focus on how research is actually conducted, starting with logistics.
