RISELab’s AutoPandas hints at automation tech that will change the nature of software development

Neural-backed generators are a promising step toward practical program synthesis.

Drum of a washing machine (source: Petar Milošević on Wikimedia Commons)

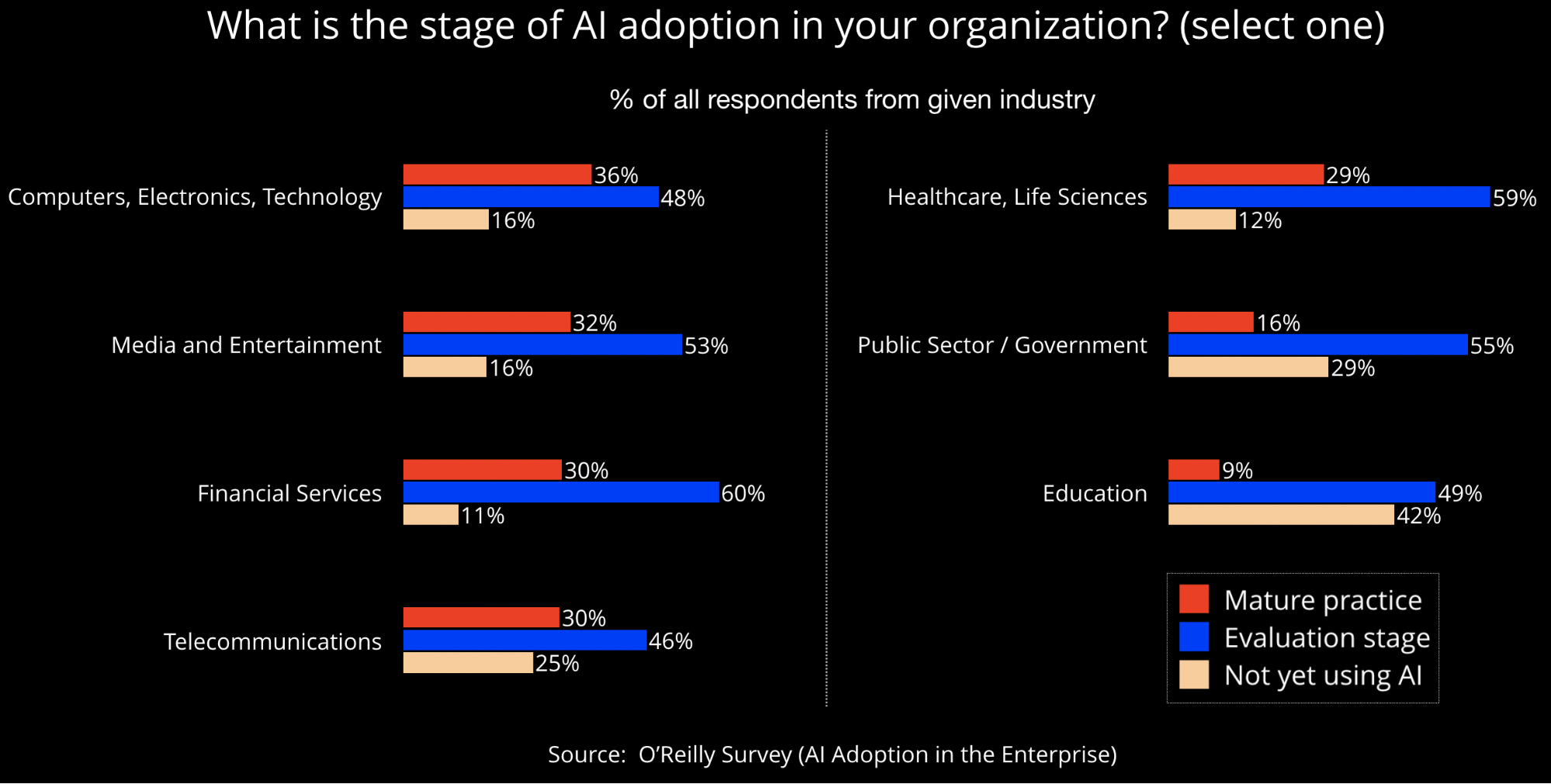

There’s a lot of hype surrounding AI, but are companies actually beginning to use AI technologies? In a survey we released earlier this year, we found that more than 60% of respondents worked in organizations that planned to invest some of their IT budgets in AI. We also found that the level of investment depended on how much experience a company already had with AI technologies, with companies further along the maturity curve planning substantially higher investments. As for current levels of adoption, the answer depended on the industry sector. We found that in several industries, 30% or more of respondents described their organizations as having a mature AI practice:

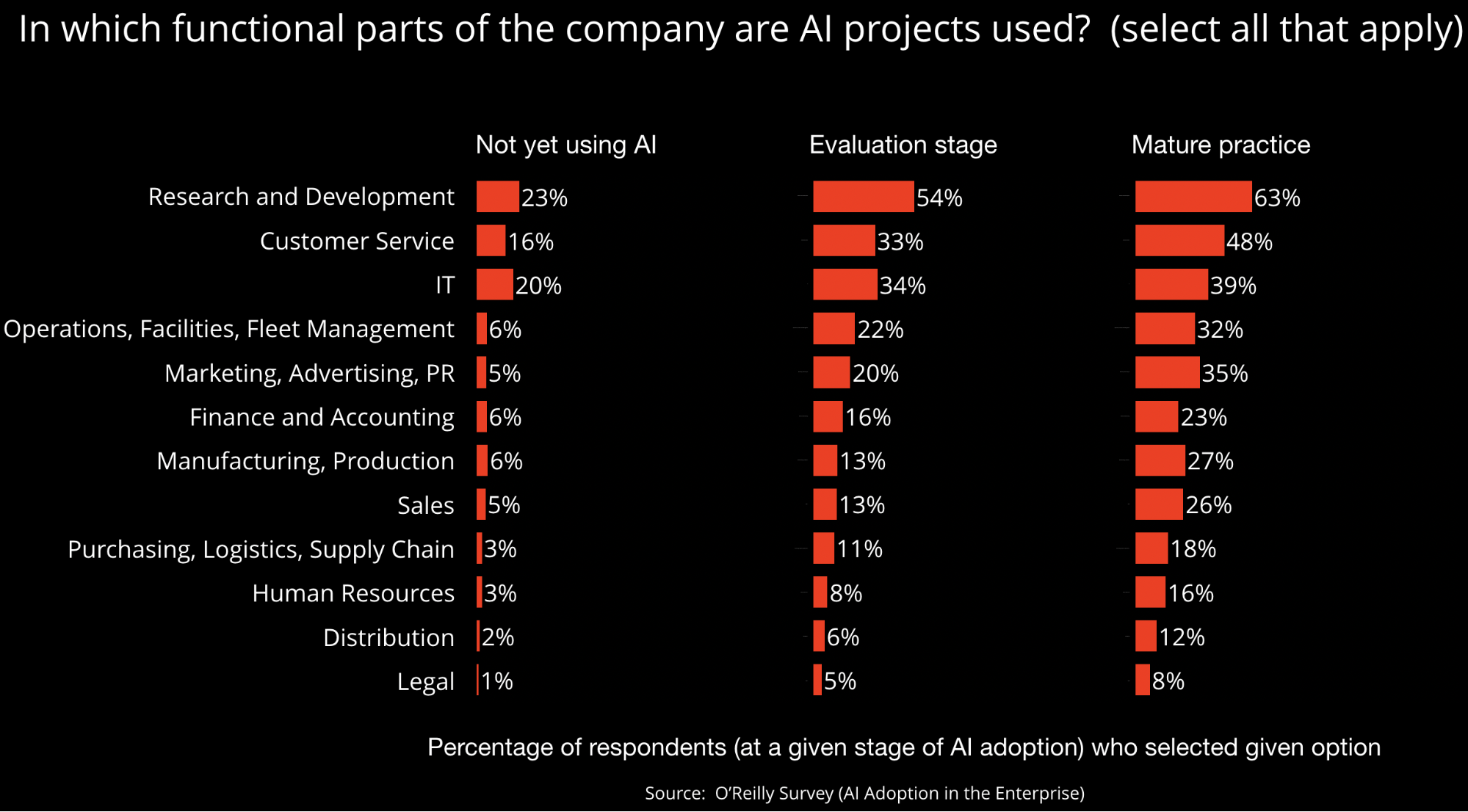

In which areas or domains are AI technologies being applied? As with any new technology, AI is used for a lot of R&D-related activity. But we are also beginning to see AI and machine learning gain traction in areas like customer service and IT. In a recent post, we outlined the many areas of customer experience where AI-related technologies are beginning to make an impact. These include data quality, personalization, customer service, and many other factors that affect customer experience.

One area I’m particularly interested in is the application of AI and automation technologies in data science, data engineering, and software development. We’ve sketched some initial manifestations of “human in the loop” technologies in software development, where initial applications of machine learning are beginning to change how people build and manage software systems. Automation has also emerged as one of the hottest topics in data science and machine learning (AutoML), and teams of researchers and practitioners are actively building tools that can automate every stage of a machine learning pipeline.

For a typical data scientist, data engineer, or developer, there is an explosion of tools and APIs they now need to work with and “master.” A data scientist might need to know Python, pandas, numpy, scikit-learn, one or more deep learning frameworks, Apache Spark, and more. According to a recent blog post by Khaliq Gant, a web developer is typically expected to demonstrate competence in things like “navigating the terminal, HTML, CSS, JavaScript, cloud infrastructure, deployment strategies, databases, HTTP protocols, and that’s just the beginning.” Data engineers additionally need to master several pieces of infrastructure.

How do data scientists, data engineers, and developers cope with this explosion of tools and APIs? They typically use search (Google) or post in forums (Stack Overflow, Slack, mailing lists). In both instances, it takes some baseline knowledge to frame a question and to discern which answer is the “best one” to choose. In the case of forums, there might be a significant delay before one obtains an adequate response. Those with more resources and more time to spare can turn to free or paid learning resources such as books, videos, or training courses.

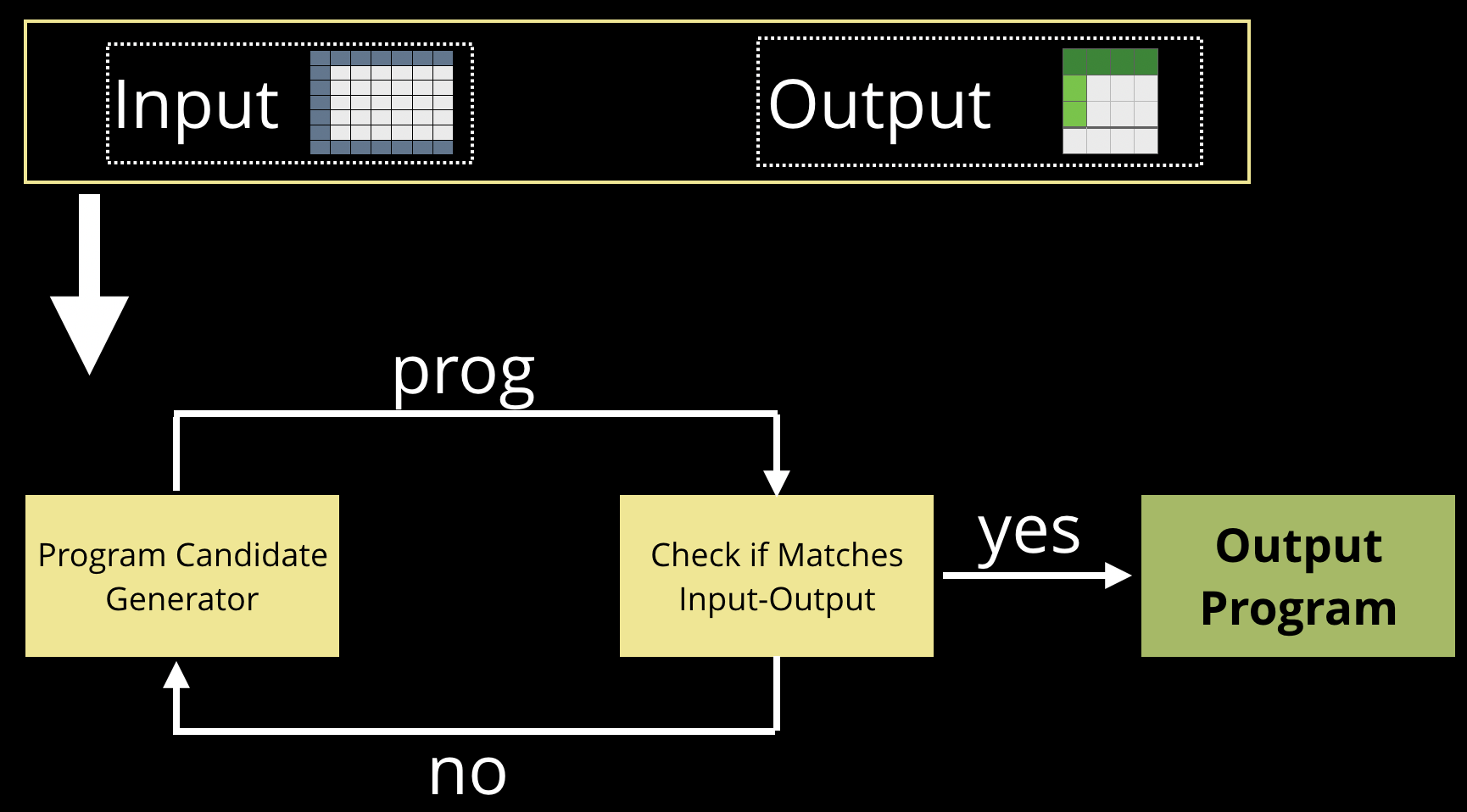

There are emerging automation tools that can drastically increase the efficiency and productivity of software developers. In his recent keynote at the Artificial Intelligence conference in Beijing, professor Ion Stoica, director of UC Berkeley’s RISELab, unveiled a new research project that hints at a path forward for software developers. Its initial output is AutoPandas, a program synthesis engine for pandas, one of the most widely used data science libraries today. As described in a paper from Microsoft and the University of Washington, program synthesis is a longstanding research area in computer science:

“Program synthesis is the task of automatically finding a program in the underlying programming language that satisfies the user intent expressed in the form of some specification. Since the inception of AI in the 1950s, this problem has been considered the holy grail of computer science.”

An AutoPandas user simply specifies an input and an output data structure (i.e., dataframes), and AutoPandas automatically synthesizes an optimal program that produces the desired output from the given input. Because the space of possible programs is immense, AutoPandas relies on three pieces: “program generators” that capture the API constraints and so reduce the search space, neural network models that predict the arguments of the API calls, and the distributed computing framework Ray to scale up the search.
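To make the “specify an input and an output” idea concrete, here is a toy sketch of programming by example over pandas. It is not AutoPandas and does not use its API: the helper names `candidate_programs` and `synthesize` are hypothetical, the search is plain brute-force enumeration over a handful of hand-picked pandas calls, and there are no neural models or Ray involved. It only illustrates what it means to search for a program that maps a given input dataframe to a given output dataframe.

```python
import itertools

import pandas as pd


def candidate_programs(df):
    """Yield (source_text, function) pairs for a tiny, hand-picked slice of pandas.

    Hypothetical helper for illustration only; a real synthesizer would
    cover far more of the API and prune the search much more cleverly.
    """
    cols = list(df.columns)
    yield "df.T", lambda d: d.T
    for c in cols:
        yield f"df.sort_values({c!r})", lambda d, c=c: d.sort_values(c)
        yield f"df.drop(columns=[{c!r}])", lambda d, c=c: d.drop(columns=[c])
        yield f"df.set_index({c!r})", lambda d, c=c: d.set_index(c)
    # Try every ordered choice of three distinct columns as pivot arguments.
    for i, c, v in itertools.permutations(cols, 3):
        src = f"df.pivot(index={i!r}, columns={c!r}, values={v!r})"
        yield src, lambda d, i=i, c=c, v=v: d.pivot(index=i, columns=c, values=v)


def synthesize(inp, out):
    """Return the source of the first candidate that maps `inp` to `out`, else None."""
    for src, fn in candidate_programs(inp):
        try:
            result = fn(inp)
        except Exception:
            # Invalid argument combinations are simply skipped.
            continue
        if isinstance(result, pd.DataFrame) and result.equals(out):
            return src
    return None


# The "specification": an example input and the output we want from it.
inp = pd.DataFrame({
    "city": ["A", "A", "B", "B"],
    "year": [2018, 2019, 2018, 2019],
    "sales": [10, 12, 7, 9],
})
out = pd.DataFrame({2018: [10, 7], 2019: [12, 9]}, index=["A", "B"])

program = synthesize(inp, out)
print(program)
```

Even in this toy, the number of candidates grows quickly with the number of columns and API calls considered, which is the search-space problem that the program generators and neural models described above are meant to address.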

While we are still very much in the early days, neural-backed generators are an extremely promising step toward practical program synthesis. Note that while researchers at RISELab have initially focused on pandas, the techniques and tools behind AutoPandas can be applied to other APIs (e.g., numpy and TensorFlow). So, any number of popular tools used by developers, data scientists, or data engineers can benefit from some level of automation via program synthesis.

Programming tools have always changed over time (I no longer use Perl, for example), and there has always been an expectation that technologists should be able to adapt to the latest tools and methods. Continued progress in tools for program synthesis means that automation will change how data scientists, data engineers, and developers do their work. One can imagine a future where mastery of individual tools and APIs will matter less, and technologists can focus on architecture and on building end-to-end systems and applications. As tools and APIs get easier to use, your employer won’t care as much about which tools you know coming into a job, but will expect you to possess “soft skills” (including skills that cannot be easily automated), domain knowledge and expertise, and the ability to think holistically.

Related content:

- Building reinforcement learning models and AI applications with Ray: a tutorial at the Artificial Intelligence Conference in San Jose, September 9-12, 2019.

- “AI Adoption in the Enterprise”

- “Deep automation in machine learning”

- “How AI and machine learning are improving customer experience”

- “What machine learning means for software development”

- “The evolution and expanding utility of Ray”

- “AI and machine learning will require retraining your entire organization”