Chapter 5. Embeddings: How Machines “Understand” Words

In the first stage of our journey through lower-level NLP, we figured out how to use tokenizers to massage our text data into a format that’s more convenient for a neural net to read. The next piece of the puzzle is the embedding layer. If tokenizers are what our models will use to read text, embeddings are what they use to understand it.

Understanding Versus Reading Text

For a long time, machines have been able to represent characters (and by extension, words, sentences, etc.) digitally. The idea of using a binary encoding scheme for language and communication dates back to at least the invention of the telegraph in the 19th century.

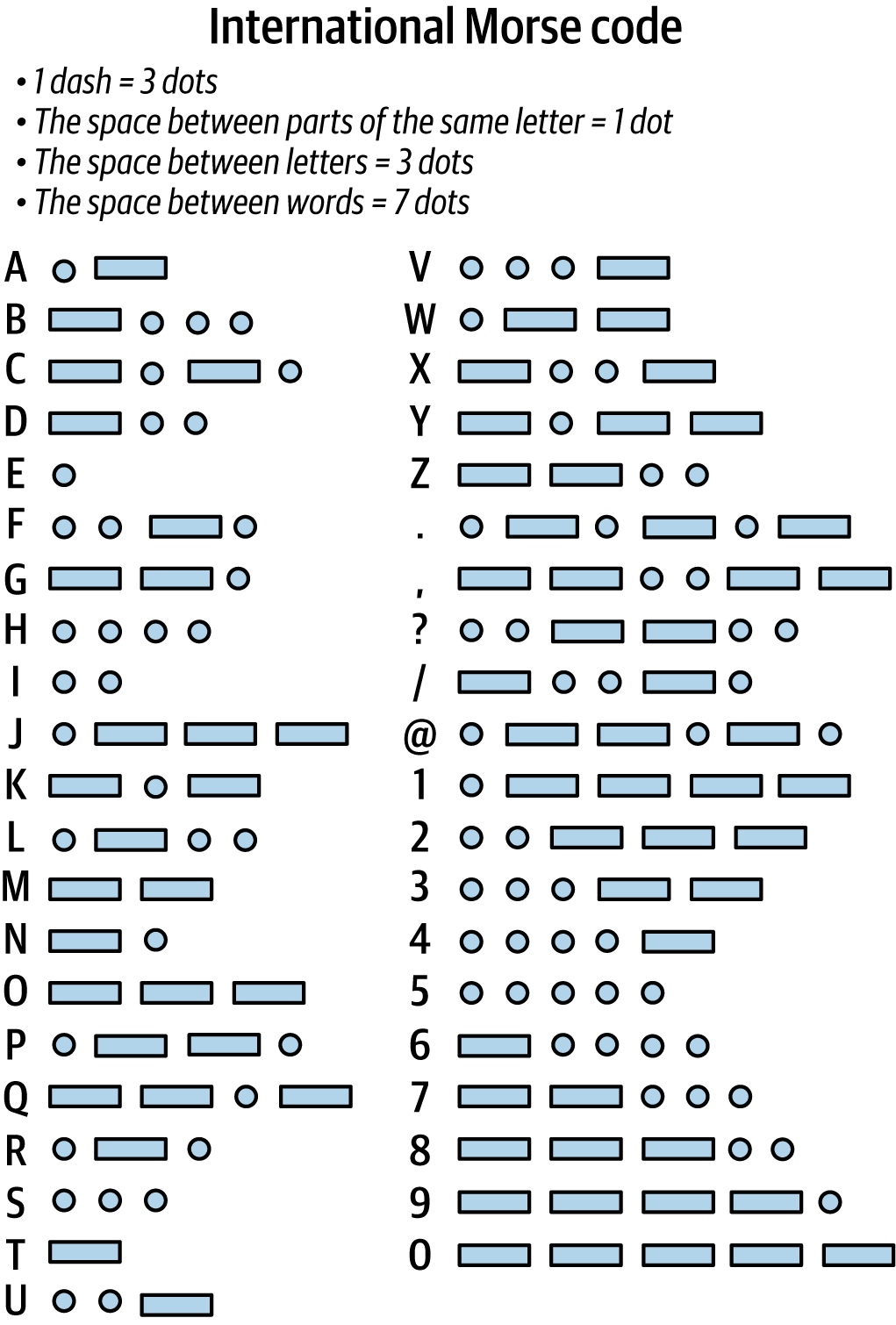

One of the earliest forms of language encoding was Morse code. In this system, binary signals, such as switching a light on and off or sending a sequence of long and short audio pulses, represent different characters. If two people had a channel for binary communication and agreed on a standard for what each binary sequence meant, they could reliably communicate in Morse code. This was one of the earliest and simplest methods of encoding natural human language in a binary format that machines could transmit and reproduce. Notice how Morse code, illustrated in Figure 5-1, uses only dots and dashes, analogous to the 1s and 0s used in modern digital communication.

Figure 5-1. Morse ...
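To make the idea of an agreed-upon binary standard concrete, here is a minimal Python sketch of a Morse encoder and decoder. The function names (`encode`, `decode`, `to_bits`) are hypothetical and chosen only for illustration, the table covers just the 26 Latin letters, and the on/off timing in `to_bits` (a dot lasts one unit, a dash three, with gaps of one, three, and seven units) follows the conventional International Morse timing rather than anything specified in this chapter.

```python
# Shared "standard": both sender and receiver must agree on this table.
MORSE = {
    "A": ".-",   "B": "-...", "C": "-.-.", "D": "-..",  "E": ".",
    "F": "..-.", "G": "--.",  "H": "....", "I": "..",   "J": ".---",
    "K": "-.-",  "L": ".-..", "M": "--",   "N": "-.",   "O": "---",
    "P": ".--.", "Q": "--.-", "R": ".-.",  "S": "...",  "T": "-",
    "U": "..-",  "V": "...-", "W": ".--",  "X": "-..-", "Y": "-.--",
    "Z": "--..",
}
# Inverted table used by the receiver to map codes back to letters.
REVERSE = {code: letter for letter, code in MORSE.items()}


def encode(text):
    """Turn letters into dots and dashes; letters are separated by a
    space and words by ' / '. Only A-Z are supported in this sketch."""
    words = text.upper().split()
    return " / ".join(" ".join(MORSE[ch] for ch in word) for word in words)


def decode(morse):
    """Invert encode(): map each dot/dash group back to its letter."""
    words = morse.split(" / ")
    return " ".join(
        "".join(REVERSE[code] for code in word.split()) for word in words
    )


def to_bits(text):
    """Render the message as an on/off signal (1 = on, 0 = off).
    Assumed timing: dot = 1 unit on, dash = 3 units on, 1 unit off
    between symbols, 3 between letters, 7 between words."""
    symbol_bits = {".": "1", "-": "111"}
    signal_words = []
    for word in text.upper().split():
        letters = ["0".join(symbol_bits[s] for s in MORSE[ch]) for ch in word]
        signal_words.append("000".join(letters))
    return "0000000".join(signal_words)
```

For example, `encode("SOS")` yields `... --- ...`, and `to_bits("SOS")` yields `101010001110111011100010101`. The encoding is lossless for the supported letters, but the codes say nothing about what a word means, which is the gap that embeddings are meant to fill.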
