Chapter 4. Web Performance

Each Tuesday morning, when Facebook employees log in to the application, they are given the option to try out the app on a slower connection for an hour. This is part of an initiative the company calls 2G Tuesdays, an attempt to recognize the importance and challenges of designing and developing applications that are served over a variety of network conditions.

As developers, we often have access to good hardware and quick web connections, but this may not always be the case for our users. Even those of us who live in major cities may experience variable network conditions, clogged or throttled by overuse. When we build our sites and applications with a performance mindset, we benefit all of our users.

File Size

80% of the end-user response time is spent on the front-end

Steve Souders, author of High Performance Web Sites

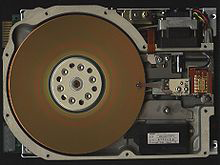

At the time of writing, the average web page requires a user to download roughly 2.3 MB worth of data. By this metric, the first 5.25-inch hard drive, the 1980 Seagate ST–506 (Figure 4-1), with its capacity of about 5 MB, would be able to hold just two modern web pages.

Figure 4-1. The Seagate ST–506 hard drive (image courtesy of Wikimedia Commons)
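The hard drive comparison is simple arithmetic, and the same numbers give a rough feel for what page weight means on a slow connection. The following is a minimal sketch, not a measurement: the 5 MB drive capacity is an assumption (the commonly cited formatted capacity of the ST–506), the link speeds are purely illustrative values, and the function names are hypothetical. The transfer time is idealized and ignores latency, protocol overhead, and caching.

```python
# Rough page-weight arithmetic. Only PAGE_SIZE_MB comes from the text;
# every other value is an assumption chosen for illustration.

PAGE_SIZE_MB = 2.3        # average page weight quoted in the text
DRIVE_CAPACITY_MB = 5.0   # assumption: formatted capacity of the ST-506

# Assumption: illustrative link speeds in kilobits per second,
# not measurements of any real network.
ILLUSTRATIVE_LINKS_KBPS = {
    "slow link": 250,
    "medium link": 1_000,
    "fast link": 10_000,
}


def whole_pages_that_fit(capacity_mb: float, page_mb: float) -> int:
    """Number of complete pages of size page_mb that fit in capacity_mb."""
    return int(capacity_mb // page_mb)


def transfer_seconds(size_mb: float, link_kbps: float) -> float:
    """Idealized time to move size_mb (decimal megabytes) over a link.

    Ignores latency, protocol overhead, and compression.
    """
    size_kilobits = size_mb * 1000 * 8  # MB -> kB -> kilobits
    return size_kilobits / link_kbps


if __name__ == "__main__":
    pages = whole_pages_that_fit(DRIVE_CAPACITY_MB, PAGE_SIZE_MB)
    print(f"Pages that fit on the drive: {pages}")
    for name, kbps in ILLUSTRATIVE_LINKS_KBPS.items():
        seconds = transfer_seconds(PAGE_SIZE_MB, kbps)
        print(f"{name} ({kbps} kbit/s): about {seconds:.1f} s per page")
```

Swapping in our own page weight and the connection speeds our users actually see turns this into a quick sanity check before reaching for more detailed tooling.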

With varying connection speeds around the world, the cost of accessing our sites can differ. The website What Does My Site Cost seeks to provide insight into the real-world data costs of sites accessed over a mobile connection. ...
