Chapter 1. Design Follows Technology

In every design discipline, as technology progresses, the restrictions become fewer and fewer until a wave crashes and nothing holds you back from making anything you can dream of. Architecture became bolder when steel frames made it possible to support nearly any structure architects could imagine, free of the constraints gravity imposed when building with stone alone. Furniture evolved alongside advances in the materials available to designers, such as fiberglass and bent plywood, as well as advances in mass-manufacturing processes. In graphic design, typefaces became increasingly complex and detailed as printing technologies evolved to reproduce those details accurately, and then design went completely wild when computers removed nearly all graphical restrictions.

Digital Maturity

Wearable technology has been around in one form or another since 17th-century China, when someone came up with an abacus that you could wear on your finger. So, what’s so special about wearable technology at this point in time? Wearable technology is making a resurgence because we’ve finally reached the digital maturity tipping point: the point at which advances in computing and the supporting infrastructure have made the restrictions on the design of technology nearly nonexistent.

Considering that most people use their computers for simple internet-based information recording and retrieval, the gap in capabilities between a laptop and a computer the size of a watch is not very significant, at least from a technical standpoint. Though the physical size of the technology is a big part of this advancement, the cost of computing has also fallen to such a degree that this type of technology can proliferate on a major scale. Add to this the open source hardware movement, which is making the tools to create this technology available to a wider range of people. In this section, we go over some of the specific developments that advanced wearable technology to where it is today.

Integrated Circuits

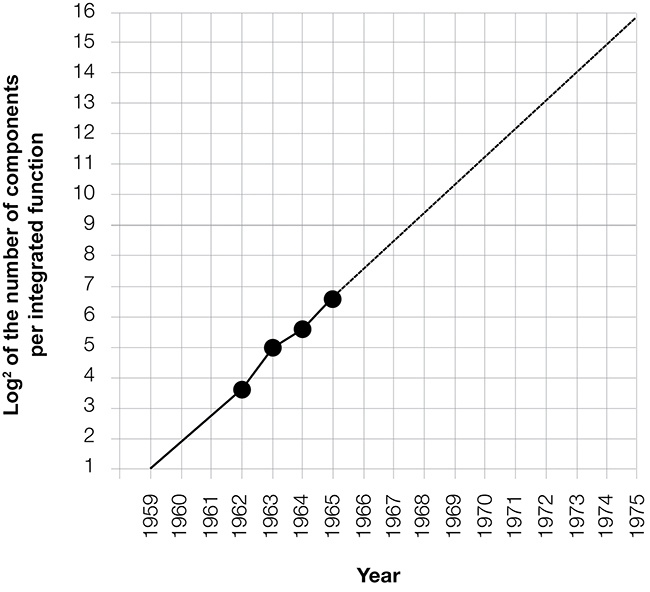

At a high level, processors work in much the same way they did in the late 1950s, when the first silicon integrated circuit was invented: a series of switches called transistors, each with an on state and an off state, controls the flow of electrons. These on and off states are the 1s and 0s that, combined by the billions, produce everything we see on our computer screens. In 1965, Gordon Moore wrote an article for Electronics magazine in which he predicted that the number of transistors that could fit onto a chip would double every year.[3] This prediction became known as Moore’s law (Figure 1-1); in 1975, Moore revised the doubling period to every two years, the rate most often quoted today. At the time of writing, the most advanced chips were manufactured on a 14-nanometer (14 billionths of a meter) process. Beyond shrinking in size, integrated circuits have also grown more energy efficient at a pace comparable to Moore’s law, which matters just as much for computers that can’t be plugged in to a power source all the time.
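Moore’s law is a simple exponential: if a chip holds N0 transistors and the count doubles every T years, after t years it holds N0 × 2^(t/T). The Python sketch below just evaluates that formula. The starting count of 1,000 transistors is purely illustrative, and the doubling period is a parameter so that the commonly quoted two-year rate can be compared with a faster one-year rate.

```python
def projected_transistors(initial_count: float, years: float,
                          doubling_period: float = 2.0) -> float:
    """Project a transistor count that doubles every `doubling_period` years."""
    if doubling_period <= 0:
        raise ValueError("doubling_period must be positive")
    return initial_count * 2 ** (years / doubling_period)


# Illustrative starting point: 1,000 transistors, projected 10 years ahead.
print(projected_transistors(1_000, 10))                     # 32000.0
print(projected_transistors(1_000, 10, doubling_period=1))  # 1024000.0
```

Over the same decade, halving the doubling period turns a 32-fold increase into a roughly thousand-fold one, which is why small differences in the rate compound into very different devices.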

The Internet

The internet is an obvious major contributor to the advancement of wearable technology: the data that’s retrieved and recorded on a wearable device doesn’t need to be stored on the device if it can be stored on a server. The internet also makes Moore’s law slightly less relevant as a limit on computational speed, because computation doesn’t need to happen entirely on the local device. Image recognition on Google Glass is one example: the device takes a photograph and immediately uploads it to a server for processing instead of trying to do the computation on the device itself.
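The trade-off behind offloading can be sketched as a simple latency comparison: send the work to a server when the round trip is expected to finish sooner than running it locally. This is a hypothetical model for illustration, not how Google Glass actually decides; the `OffloadEstimate` fields and the numbers in the example are assumptions, and a real system would also weigh battery cost and connectivity.

```python
from dataclasses import dataclass


@dataclass
class OffloadEstimate:
    """Hypothetical latency estimates (in seconds) for one recognition task."""
    local_seconds: float     # time to run the task on the wearable itself
    upload_seconds: float    # time to send the input (e.g., a photo) to a server
    server_seconds: float    # time the server spends processing it
    download_seconds: float  # time to receive the result back on the device


def should_offload(est: OffloadEstimate) -> bool:
    """Return True if the round trip to the server is expected to beat local processing."""
    remote_total = est.upload_seconds + est.server_seconds + est.download_seconds
    return remote_total < est.local_seconds


# Example: a slow on-device model versus a fast server round trip.
slow_local = OffloadEstimate(local_seconds=4.0, upload_seconds=0.5,
                             server_seconds=0.2, download_seconds=0.05)
print(should_offload(slow_local))  # True
```

The same comparison flips when the network is slow or the task is trivial, which is why a weak connection can make an offloading design feel sluggish.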

Cellular Data Networks

Cellular data is the primary access point for untethered wearable devices, whether through a phone or the device itself. The original 4G LTE specification calls for peak rates of 100 megabits per second (Mbps) for downloads and 50 Mbps for uploads; real-world speeds are usually lower, but still fast enough for most wearable applications.
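Network rates are quoted in megabits, while file sizes are usually in megabytes, and the factor of eight between them matters. The sketch below computes an idealized transfer time using the 50 megabits per second LTE peak upload rate quoted above; the 2 MB photo is a hypothetical example, and the calculation ignores latency, protocol overhead, and signal quality.

```python
def transfer_seconds(size_megabytes: float, rate_megabits_per_second: float) -> float:
    """Ideal transfer time, ignoring latency, protocol overhead, and signal quality."""
    bits_per_byte = 8
    size_megabits = size_megabytes * bits_per_byte
    return size_megabits / rate_megabits_per_second


# Hypothetical 2 MB photo sent at the 50 Mbps LTE peak upload rate.
print(transfer_seconds(2, 50))  # 0.32
```

Even if real-world throughput were a tenth of the peak, the same photo would upload in a few seconds, which is consistent with the claim that these rates suffice for most wearable applications.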

Open Source

The last major contributor to the perfect storm of wearable computing is the increased level of access to the tools used to create the technology itself. This is primarily due to the open source movement, wherein the source code of software and the original designs of devices are made available to the public to reproduce and modify freely. In addition to the designs and technology themselves, an incredibly supportive educational community has grown around the open source movement.

The Human Problem

So, we’re now largely free of limitations on the size of the device, the communication infrastructure, and even access to the production of the technology, but wearable devices are still limited by one major thing: humans. Now that we’re free to do whatever we want with technology, we need to figure out how we actually want to move forward. The two main human-related areas are input (how we put information into the computer) and output (how the computer gives information to us).

Input

There are two high-level types of input for wearable devices. First, there’s detailed input, such as text entry or the manipulation of objects and menu systems: basically the type of input you’d need to operate a normal desktop computer. Then there’s passive input, which refers to data such as steps, heart rate, GPS location, and ambient noise that can be collected without the wearer doing anything deliberate.

Detailed input

Detailed input on wearable devices is difficult, primarily because the keyboard and mouse are so prevalent. People don’t necessarily want to keep using the keyboard and mouse as primary input mechanisms; those devices are just so dominant that the way we design and think about digital interactions is inevitably stuck in that paradigm. It’s similar to the earliest days of television, when there wasn’t yet an established content paradigm for the new medium, so many early television shows were just people reading radio scripts. Eventually we’ll get to something more appropriate, but it will take a while to turn the ship.

Today, a few techniques for wearable text input are being tried out. One-handed keyboards (Figure 1-2) were popular in academic circles but are generally considered clunky and difficult to learn. Voice recognition is getting much more accurate, but voice input inevitably comes with issues such as other people overhearing what you’re saying and interference from background noise. The cleanest method of text input that I’ve seen is actually instructing users to pull out their phones and use them as a temporary keyboard.

Beyond text input, there has been some interesting progress in gestural interfaces, particularly with the Myo Armband (Figure 1-3) from Thalmic Labs, which reads electromyography (EMG) signals from the muscles in your forearm. Microsoft’s HoloLens (Figure 1-4) also uses gestures as a primary input mechanism: you target an object by looking at it and then gesture to act on it. In addition to gestures, Microsoft released a “clicker,” essentially a handheld mouse button, to use with the HoloLens.

Passive input

Passive input is a lot easier to figure out from a design perspective. It’s basically a matter of finding indicators of certain behaviors; for example, if the accelerometer in my device registers a specific repeating pattern, I can safely assume that I’m walking and that each cycle of that pattern is a step.
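The walking example can be made concrete with a minimal step counter, assuming a 3-axis accelerometer sampled at a fixed rate and reporting in m/s². It counts a step each time the acceleration magnitude rises above a threshold. The `threshold` and `min_gap` values are illustrative assumptions, not figures from any product; real pedometers add filtering and adaptive thresholds, and this is not any vendor’s algorithm.

```python
import math
from typing import Sequence, Tuple


def count_steps(samples: Sequence[Tuple[float, float, float]],
                threshold: float = 11.0, min_gap: int = 5) -> int:
    """Count steps in 3-axis accelerometer samples (m/s^2).

    A step is counted each time the acceleration magnitude rises above
    `threshold`, as long as at least `min_gap` samples have passed since the
    previous counted step (this ignores jitter around the threshold).
    """
    steps = 0
    last_step = -min_gap  # lets the very first crossing count
    above = False         # is the signal currently above the threshold?
    for i, (x, y, z) in enumerate(samples):
        magnitude = math.sqrt(x * x + y * y + z * z)
        if magnitude > threshold:
            if not above and i - last_step >= min_gap:
                steps += 1
                last_step = i
            above = True
        else:
            above = False
    return steps


# Synthetic walk: gravity (about 9.81 m/s^2) plus a bounce every 20 samples.
walk = [(0.0, 0.0, 9.81 + 3.0 * math.sin(2 * math.pi * i / 20)) for i in range(100)]
print(count_steps(walk))  # 5
```

Using the magnitude rather than a single axis means the count does not depend on how the device happens to be oriented on the wrist.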

The most basic example of passive input is the fitness tracker. These devices are worn all the time, and you don’t really interact with them much beyond swinging your arms around. A more interesting example comes from MIT Media Lab spinoff Humanyze, which distributes sensor-laden lanyards to the employees of the companies it works with. By tracking employees’ locations and recording who is speaking during conversations, it analyzes where and how social interactions happen within a company’s building.

Output

Output is a much easier problem to tackle with wearable devices. It divides into active and passive categories. Active output includes heads-up displays, screen-based smartwatches, and audio feedback: things that get your attention and inform you of something. Passive output presents information more like a dashboard: you typically check it on your own initiative, or it communicates without commanding your active attention.

Active output

We see a lot of examples of active output in wearable devices today. These are interactions that require conscious engagement, such as an email notification on your smartwatch or a calendar reminder popping up on your Google Glass. It’s fair to say that most notifications are active outputs, but some non-notification interfaces, such as the display on a Fitbit, are also active in the sense that you must actively engage with the device to use them.

Passive output

Passive output is very different from active output. Passive outputs are interfaces that might be always on and that provide information without commanding our full attention. A great example is the Lumo Lift (Figure 1-5), a “digital posture coach” that vibrates every time you slouch. After a couple of hours using the Lift, you get used to it to the point where it’s almost like another sense: you’re out and about, working on things, and when you feel that little vibration, you react to it without diverting your attention. Another example is the Withings Activité watches (Figure 1-6), which have a completely passive, always-on interface that you can glimpse out of the corner of your eye without actively thinking about it.

The “Us” Part of Maturity

The other side of the digital maturity coin is that now, with few if any technological restrictions on design, we must decide how we, as a society, want to live with technology. Now that we can do whatever we want, we must determine what it is that we want. What’s healthy? What actually improves our lives? This is where human-centered design matters most. We’ve made it through the Wild West days, when we had no idea whether something was going to be useful or awful. Now, we need to take a firm look at what exactly is going on, whether we’re actually benefiting from technology, and why we’re using it.

Moving forward in the design of wearable technologies requires us to be fully aware of the behavior these devices encourage, good and bad, so that we can provide truly useful services that empower people with more control and awareness in their day-to-day lives. We now have enough information to step back and take an honest look at how people actually use this technology so we can be critical of what we’re putting into the world and be fully aware of the ramifications of introducing these products into people’s lives.

[3] Gordon E. Moore, “Cramming More Components onto Integrated Circuits,” Electronics, April 19, 1965.
