Chapter 1. Where We’ve Been, Where We’re Going

Introduction

I see it, but I don’t believe it.

— Georg Cantor

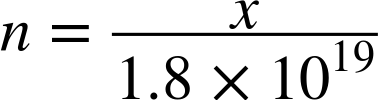

The standard LAN interface assignment in IPv6 is a /64. Or, to be more explicit, a subnet with 64 bits set aside for network identification and 64 bits reserved for host addresses. (If this is unfamiliar to you, don’t fret. We’ll review the basic rules of IPv6 addressing in the second chapter.) As it happens, 64 bits of host addressing makes for a pretty large decimal value:

$$2^{64} = 18{,}446{,}744{,}073{,}709{,}551{,}616$$

It’s cumbersome to represent such large values, so let’s use scientific notation instead (and round the value down, too):

$$2^{64} \approx 1.8 \times 10^{19}$$

That’s a pretty big number (around 18 quintillion) and in IPv6, if we’re following the rules, we stick it on a single LAN interface. Here’s what that might look like in common router configuration syntax (Cisco IOS, in this case):

    !
    interface FastEthernet0/0
     ipv6 address 2001:db8:a:1::1/64
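As a quick sanity check on those numbers, here is a minimal sketch using Python's standard `ipaddress` module. The variable names are illustrative only; the address is the one from the router configuration.

```python
import ipaddress

# The interface address from the router configuration.
iface = ipaddress.ip_interface("2001:db8:a:1::1/64")

# Bits left over for host addressing: 128 total minus the 64-bit prefix.
host_bits = iface.max_prefixlen - iface.network.prefixlen

# Total number of addresses in the subnet the interface belongs to.
subnet_size = iface.network.num_addresses

print(iface.network)         # 2001:db8:a:1::/64
print(host_bits)             # 64
print(f"{subnet_size:,}")    # 18,446,744,073,709,551,616
print(f"{subnet_size:.1e}")  # 1.8e+19
```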

Now if someone were to ask you to configure the same interface for IPv4, what is the first question you’d ask? Most likely some variation of the following:

“How many hosts are on the LAN segment I’m configuring the interface for?”

The reason we ask this question is that we don’t want to use more IPv4 addresses than we need to. In most situations, the answer would help determine the size of the IPv4 subnet we would configure for the interface. Variable Length Subnet Masking (VLSM) in IPv4 provides the ability to tailor the subnet size accordingly.
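To make the IPv4 habit concrete, the following sketch shows the kind of calculation that question leads to. The function name `smallest_ipv4_prefix` is hypothetical, and the sketch assumes the conventional rule that two addresses per subnet (network and broadcast) are not assignable to hosts.

```python
def smallest_ipv4_prefix(host_count: int) -> int:
    """Return the longest IPv4 prefix whose subnet still holds host_count hosts.

    Assumes two addresses per subnet (network and broadcast) are unusable.
    """
    if host_count < 1:
        raise ValueError("host_count must be at least 1")
    needed = host_count + 2
    # Smallest number of host bits b such that 2**b >= needed.
    host_bits = (needed - 1).bit_length()
    if host_bits > 32:
        raise ValueError("too many hosts for a single IPv4 subnet")
    return 32 - host_bits


for hosts in (2, 50, 254, 255):
    prefix = smallest_ipv4_prefix(hosts)
    usable = 2 ** (32 - prefix) - 2
    print(f"{hosts:>3} hosts -> /{prefix} ({usable} usable addresses)")
```

Note how one extra host (255 instead of 254) forces a jump from a /24 to a /23. That sensitivity to host count is exactly what the rest of this chapter argues does not carry over to IPv6.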

This practice was adopted in the early days of the Internet to conserve public (or routable) IPv4 space. But chances are the LAN we’re configuring is using private address space[1] and Network Address Translation (NAT). And while that doesn’t give us anywhere close to the number of addresses we have at our disposal in IPv6, we still have three respectably large blocks from which to allocate interface assignments:

- 10.0.0.0/8

- 172.16.0.0/12

- 192.168.0.0/16

Since we can’t have overlapping IP space in the same site (at least not without VRFs or some beastly NAT configurations), we’d need to carve up one or more of those private IPv4 blocks further to provide subnets for all the interfaces in our network and, more specifically, host addresses.
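A rough illustration of that carving, again with the standard `ipaddress` module. The choice of /24 as the per-interface size and the second pair of networks used for the overlap check are assumptions made for the example, not recommendations.

```python
import ipaddress
from itertools import islice

site_block = ipaddress.ip_network("10.0.0.0/8")

# Carve the private block into /24 interface subnets (an assumed size).
first_subnets = list(islice(site_block.subnets(new_prefix=24), 4))
total_subnets = 2 ** (24 - site_block.prefixlen)

for subnet in first_subnets:
    print(subnet)
print(f"{total_subnets:,} /24 subnets available in {site_block}")

# Overlapping assignments are what we have to avoid within a site.
a = ipaddress.ip_network("10.0.1.0/24")
b = ipaddress.ip_network("10.0.0.0/23")
print(a.overlaps(b))  # True: 10.0.1.0/24 sits inside 10.0.0.0/23
```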

But in IPv6, the question of “How many hosts are on the network segment I’m configuring the interface for?”, which is so commonplace in IPv4 address planning, has no relevance.

To see why, let’s assume x is equal to the answer. Then we’ll define n as the ratio of the addresses used to the total available addresses:

$$n = \frac{x}{2^{64}} \approx \frac{x}{1.8 \times 10^{19}}$$

Now we can quantify how much of the available IPv6 address space we’ll consume. But the value for x can’t ever be larger than the maximum number of hosts we can have on an interface. What is that maximum?

Recall that a router or switch has to keep track of the mappings between layer 2 and layer 3 addresses. This is done through Address Resolution Protocol (ARP) in IPv4 and Neighbor Discovery (ND) in IPv6.[2] Keeping track of these mappings requires router memory and processor cycles, which are necessarily limited. Thus, the maximum of x is typically in the range of a few thousand.

This upper limit of x ensures that n will only ever be microscopically small. For example, let’s assume a router or switch could support a whopping 10,000 hosts on a single LAN segment:

$$n = \frac{10{,}000}{2^{64}} \approx 5.4 \times 10^{-16}$$

So unlike IPv4, it doesn’t matter whether we have 2, 200, or 2,000,000 hosts on a single LAN segment. How could it when the total number of addresses available in the subnet of a standard interface assignment is more than 4.3 billion IPv4 Internets?
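The arithmetic behind that claim can be sketched in a few lines. The constant and function names here are illustrative; the host counts are the ones mentioned in the text.

```python
SUBNET_SIZE = 2 ** 64    # addresses in one standard /64 interface assignment
IPV4_INTERNET = 2 ** 32  # addresses in the entire IPv4 space


def utilization(hosts: int) -> float:
    """Ratio n of addresses used to addresses available in a /64."""
    return hosts / SUBNET_SIZE


for hosts in (2, 200, 10_000, 2_000_000):
    print(f"{hosts:>9,} hosts -> n = {utilization(hosts):.1e}")

# How many complete IPv4 address spaces fit inside a single /64.
ipv4_internets_per_subnet = SUBNET_SIZE // IPV4_INTERNET
print(f"{ipv4_internets_per_subnet:,}")  # 4,294,967,296
```

Even at two million hosts, n remains many orders of magnitude below one percent.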

In other words, the concepts of scarcity, waste, and conservation as we understand them in relation to IPv4 host addressing have no equivalent in IPv6.

Tip

In IPv6 addressing, the primary concern isn’t how many hosts are in a subnet, but rather how many subnets are needed to build a logical and scalable address plan (and more efficiently operate our network).

IPv4 only ever offered a theoretical maximum of around 4.3 billion host addresses.[3] By comparison, IPv6 provides $3.4 \times 10^{38}$ (or 340 trillion trillion trillion). Because the scale of numerical values we’re used to dealing with is relatively small, the second number is so large its significance can be elusive. As a result, those working with IPv6 have developed many comparisons over the years to help illustrate its difference in size from IPv4.

All the Stars in the Universe…

Astronomers estimate that there are around 400 billion stars in the Milky Way. Galaxies, of course, come in different sizes, and ours is perhaps on the smallish side (given that there are elliptical galaxies with an estimated 100 trillion stars). But let’s assume for the sake of discussion that 400 billion stars per galaxy is a good average. With 170 trillion galaxies estimated in the known universe, that results in a total of $6.8 \times 10^{25}$ stars.

Yet that’s still 5 trillion times fewer than the number of available IPv6 addresses! It’s essentially impossible to visualize a quantity that large, and rational people have difficulty believing what they can’t see.
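For readers who like to check the figures, this short sketch reproduces the comparison using the estimates quoted in the text (the star and galaxy counts are the chapter's working assumptions, not measured values).

```python
stars_per_galaxy = 400 * 10**9  # 400 billion, the assumed average
galaxies = 170 * 10**12         # 170 trillion, the estimate used in the text
total_stars = stars_per_galaxy * galaxies

ipv6_addresses = 2 ** 128

# How many IPv6 addresses exist for every star.
addresses_per_star = ipv6_addresses // total_stars

print(f"{total_stars:.1e}")         # 6.8e+25
print(f"{ipv6_addresses:.1e}")      # 3.4e+38
print(f"{addresses_per_star:.1e}")  # 5.0e+12
```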

Cognitive dissonance is a term from psychology that means, roughly, the emotionally unpleasant impact of holding in one’s mind two or more ideas that are entirely inconsistent with each other. For rational people (like some network architects!), it’s generally an unpleasant state to be in. To eliminate or lessen cognitive dissonance, we will usually favor one idea over the other, sometimes quite unconsciously. Often, the favored idea is the one we’re the most familiar with. Here are the two contradictory ideas relevant to our discussion:

- Idea 1: We must conserve IP addresses.

- Idea 2: We have a virtually limitless supply of IP addresses.

- Result: Contradiction and cognitive dissonance, favoring the first (and in this case more familiar) idea.

IPv4 Thinking

Idea 1 is the essence of IPv4 Thinking, a disability affecting network architects new to IPv6 and something that we’ll often observe, and illustrate the potential impact of, as we make our way through the book.

We’ll encounter a couple more of these comparisons as we proceed. I think they’re fun—they can tickle the imagination a bit. But perhaps more importantly, they help chip away at our ingrained prejudice favoring Idea 1.

From my own experience, the sooner we shed this obsolete idea, the sooner we’ll make the best use of the new principles guiding our IPv6 address planning efforts.

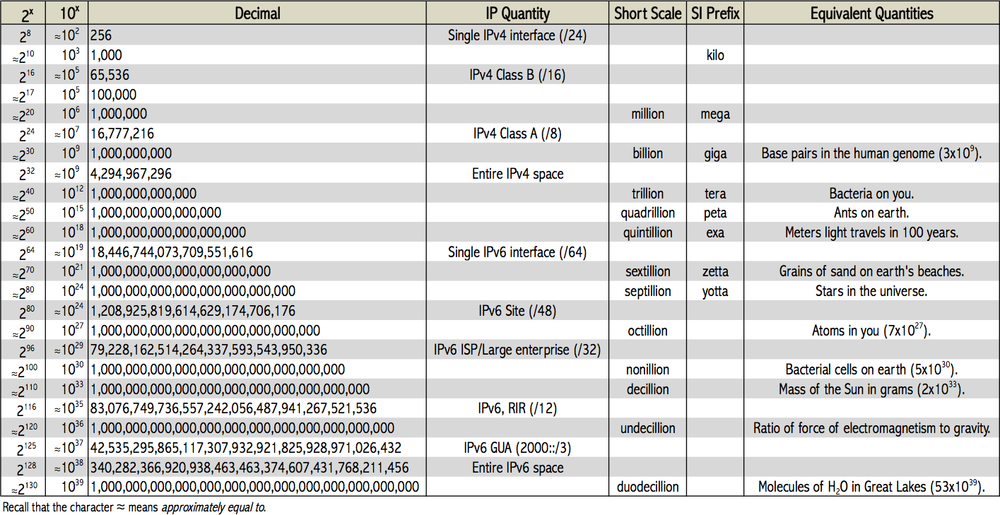

Figure 1-1 offers a comparison of scale based on values for powers of 2 (binary) and 10 (decimal).

In the Beginning

And finally, because nobody could make up their minds and I’m sitting there in the Defense Department trying to get this program to move ahead, we haven’t built anything, I said, it’s 32 bits. That’s it. Let’s go do something. Here we are. My fault.

— Dr. Vinton G. Cerf

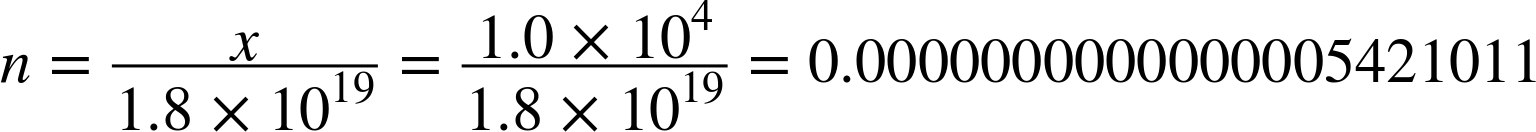

Back in 1977, when he chose 32 bits to use for Internet Protocol addressing, Vint Cerf might have had trouble believing that in a few decades, a global network with billions of hosts (and still using the protocol he co-invented with Bob Kahn) would have already permanently revolutionized human communication. After all, at that time, host count on the military-funded, academic research network ARPANET was just north of 100.

By January 1, 1983, host counts were still modest enough by today’s standards that the entire ARPANET could switch over from the legacy routed Network Control Protocol to TCP/IP in one day (though the transition took many years to plan). By 1990, the ARPANET had been decommissioned at the ripe old age of 20. Its host count at the time took up slightly more than half of one /13, approximately 300,000 IPv4 addresses (or about 0.007% of the overall IPv4 address space).

Yet, by then, the question was already at least a couple of years old: how long until 32-bit IP addresses run out?

A Dilemma of Scale

Concern about IP address exhaustion had been rising, due to the combination of the protocol’s remarkable success and a legacy allocation method based on classful addressing that was proving tremendously wasteful and inefficient.[6] It was not uncommon in the early days of the ARPANET for any large requesting organization to receive a Class A network,[7] or 16,777,214 usable addresses. With only 128 Class A networks in the entire IP space, a smaller allocation was obviously more appropriate to avoid quickly exhausting all remaining IP address blocks. With 65,536 addresses, a Class B network[8] met the host count needs of most organizations. Each Class B also provided 256 Class C subnetworks[9] (each with 256 addresses), which could be used by the organization to establish or preserve network hierarchy. (This allowed for more efficient network management, as well as processor and router memory utilization.)
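The class sizes quoted above follow directly from the prefix lengths given in the footnotes (/8, /16, and /24). As a small sketch, with the three private ranges standing in as example networks of each size:

```python
import ipaddress

# Example networks of each classful size; the specific ranges are
# chosen only for illustration.
classful_examples = {
    "Class A": ipaddress.ip_network("10.0.0.0/8"),
    "Class B": ipaddress.ip_network("172.16.0.0/16"),
    "Class C": ipaddress.ip_network("192.168.0.0/24"),
}

sizes = {name: net.num_addresses for name, net in classful_examples.items()}

for name, total in sizes.items():
    # Subtract the network and broadcast addresses to get usable hosts.
    print(f"{name}: {total:,} addresses, {total - 2:,} usable")

# Number of Class C-sized (/24) subnets inside one Class B (/16).
class_c_per_class_b = sizes["Class B"] // sizes["Class C"]
print(class_c_per_class_b)  # 256
```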

But allocating Class B networks in place of Class A ones was merely slicing the same undersized pie in thinner slices. The problem of eventual IP exhaustion remained.[10] In 1992, the IETF published RFC 1338 titled “Supernetting: an Address Assignment and Aggregation Strategy.” It identified three problems:

- Exhaustion of Class B address space

- Growth of the routing tables beyond manageability

- Exhaustion of all IP address space

The first problem was predicted to occur within one to three years. What was needed to slow its arrival were “right-sized” allocations that provided sufficient, but not an excessive number of, host addresses. With only 254 usable addresses, Class C networks were not large enough to provide host addresses for most organizations. By the same token, the 65,534 addresses available in a Class B were often overkill.

The second problem followed from the suggested solution for the first. If IP allocations became ever more granular to conserve remaining IP space, the routing table could grow too quickly, overwhelming hardware resources (this during a period when memory and processing power were far more expensive) and leading to global routing instability.

The subsequent recommendation and adoption of Classless Inter-Domain Routing (CIDR) helped balance these requirements. Subnetting beyond the bit boundaries of the classful networks provided right-sized allocations.[11] It also allowed for the aggregation of Class C (and other) subnets, permitting a much more controlled growth of the global routing table. The adoption of CIDR helped provide sufficient host addressing and slow the growth of the global routing table, at least in the short term.
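Both halves of that balance can be seen in a small sketch: four contiguous Class C-sized networks give an organization a right-sized 1,024 addresses, and CIDR lets them be advertised as a single route. The address range is an arbitrary example.

```python
import ipaddress

# Four contiguous /24 ("Class C") networks assigned to one organization.
class_c_blocks = [ipaddress.ip_network(f"192.168.{i}.0/24") for i in range(4)]

# CIDR aggregation: summarize them as the fewest possible prefixes.
aggregated = list(ipaddress.collapse_addresses(class_c_blocks))

print([str(net) for net in aggregated])  # ['192.168.0.0/22']
print(aggregated[0].num_addresses)       # 1024
print(f"{len(class_c_blocks)} routes reduced to {len(aggregated)}")
```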

But the third problem would require nothing less than the development of a new protocol; ideally, one with enough addresses to eliminate the problem of exhaustion indefinitely. But that left the enormous challenge of how to transition to the new protocol from 32-bit IP. At the time, one of the protocols being considered appeared to recognize the need to keep existing production IP networks up and running with a minimum of disruption. It recommended:[12]

- An ability to upgrade routers and hosts to the new protocol and add them to the network independently of each other

- The persistence of existing IPv4 addressing

- Keeping deployment costs manageable

The one protocol meeting these requirements was known as SIPP-16 (or Simple IP Plus) and was most noteworthy for offering a 128-bit address. It was also known for being version 6 of the candidates for the next-generation Internet Protocol.[15]

IPv4 Exhaustion and NAT

While work proceeded on IPv4’s eventual replacement, other projects examined ways to slow IPv4 exhaustion.

In the early 1990s, there were various predictions for when IPv4 exhaustion would occur. The Address Lifetime Expectations (ALE)[16] working group, formed in 1993, estimated that depletion would occur sometime between 2005 and 2011. As it happened, IANA (the Internet Assigned Numbers Authority) handed out the last five routable /8s to the various RIRs (Regional Internet Registries) in February 2011.[17] In all likelihood, IPv4 exhaustion would have occurred much sooner without the adoption of NAT.

The original NAT proposal suggested that stub networks (as most corporate or enterprise networks were at the time) could share and reuse the same address range provided that these potentially duplicate host addresses were translated to unique, globally routable addresses at the organization’s edge. Initially, only one reusable, or private, Class A network was defined, but that was later expanded to the three listed at the beginning of the chapter.

The NAT proposal was attractive for many reasons. The obvious general appeal was that it would help slow the overall rate of IPv4 exhaustion. Early enterprise and corporate networks would have liked the benefit of a centralized, locally-administered solution that would be relatively easy to own and operate. From an address planning standpoint, they would also get additional architectural flexibility. Where a stub network might only qualify for a small range of routable space from an ISP, with NAT an entire /8 could be used. This would make it possible to group internal subnets according to location or function in a way that maximized operational efficiency (and as we’ll see in Chapters 4 and 5, something IPv6 universally allows for). Privately addressed networks could also maintain their addressing schemes without having to renumber if they switched providers.

But NAT also had major drawbacks, many of which persist to this day. The biggest liability was (and is) that it broke the end-to-end model of the Internet. The translation of addresses anywhere in the session path meant that hosts were no longer communicating directly. This had negative implications for both security and performance. Applications that had been written based on the assumption that hosts would communicate directly with each other could break. For instance, a NAT-enabled router would have to exchange local for global addresses in application flows that included IPv4 address literals (like FTP). Even rudimentary TCP/IP functions like packet checksums had to be manipulated (in fact, the original RFC contained sample C code to suggest how to accomplish this).[18]
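To give a feel for the checksum problem, here is a simplified sketch in Python. It is not the code from the original RFC. It recomputes the IPv4 header checksum from scratch after the source address is rewritten, and it ignores the TCP or UDP checksum, which would also need adjusting. The function names and the addresses are hypothetical.

```python
import ipaddress
import struct


def internet_checksum(data: bytes) -> int:
    """One's-complement sum of 16-bit words, complemented."""
    if len(data) % 2:
        data += b"\x00"
    total = sum(struct.unpack(f"!{len(data) // 2}H", data))
    while total >> 16:  # fold carries back into the low 16 bits
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


def build_header(src: str, dst: str) -> bytes:
    """Build a minimal 20-byte IPv4 header with a valid checksum."""
    fields = struct.pack(
        "!BBHHHBBH4s4s",
        0x45, 0, 40,  # version/IHL, DSCP/ECN, total length
        0, 0,         # identification, flags/fragment offset
        64, 6, 0,     # TTL, protocol (TCP), checksum placeholder
        ipaddress.IPv4Address(src).packed,
        ipaddress.IPv4Address(dst).packed,
    )
    checksum = internet_checksum(fields)
    return fields[:10] + struct.pack("!H", checksum) + fields[12:]


def translate_source(header: bytes, new_src: str) -> bytes:
    """Rewrite the source address as a NAT device would, then fix the checksum."""
    new_packed = ipaddress.IPv4Address(new_src).packed
    zeroed = header[:10] + b"\x00\x00" + new_packed + header[16:]
    checksum = internet_checksum(zeroed)
    return zeroed[:10] + struct.pack("!H", checksum) + zeroed[12:]


inside = build_header("10.1.1.10", "203.0.113.5")
outside = translate_source(inside, "198.51.100.7")

# A header with a correct checksum sums to zero when verified.
print(internet_checksum(inside) == 0, internet_checksum(outside) == 0)
print(inside[10:12].hex(), "->", outside[10:12].hex())
```

The point of the sketch is only that changing an address invalidates a value computed over it, so every translated packet needs extra work that an untranslated packet does not.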

Whatever NAT’s ultimate shortcomings, there’s no question that it has been enormously successful in terms of its adoption. And to be fair, it has helped extend the life of IPv4 and provide for a more manageable deployment of IPv6 following its formal arrival in 1998.

IPv6 Arrives

The first formal specification for IPv6 was published late in 1998, nearly 25 years after the first network tests using IPv4.[21]

Adoption of IPv6 outside of government and academia was slow going in the early years.

In the United States, the federal government has used mandates (with moderate success) to drive IPv6 adoption among government agencies. A 2005 mandate included requirements that all IT gear should support IPv6 to the “maximum extent practicable” and that agency backbones must be using IPv6 by 2008.[22] These requirements almost certainly helped accelerate the maturation of IPv6 features and support across many vendors’ network infrastructure products. IPv6 feature parity[23] with IPv4 was also greatly enhanced by the work of the IETF during this period: nearly 200 IPv6 RFCs were published (as well as hundreds of IETF drafts).

IPv6 adoption among enterprise networks was negligible during this same time period. With so few hosts on the Internet IPv6-enabled, it made little sense to make one’s website and online resources available over it. Meanwhile, IPv4 private addressing in combination with NAT at the edge of the IT network reduced or eliminated any need to deploy IPv6 internally.

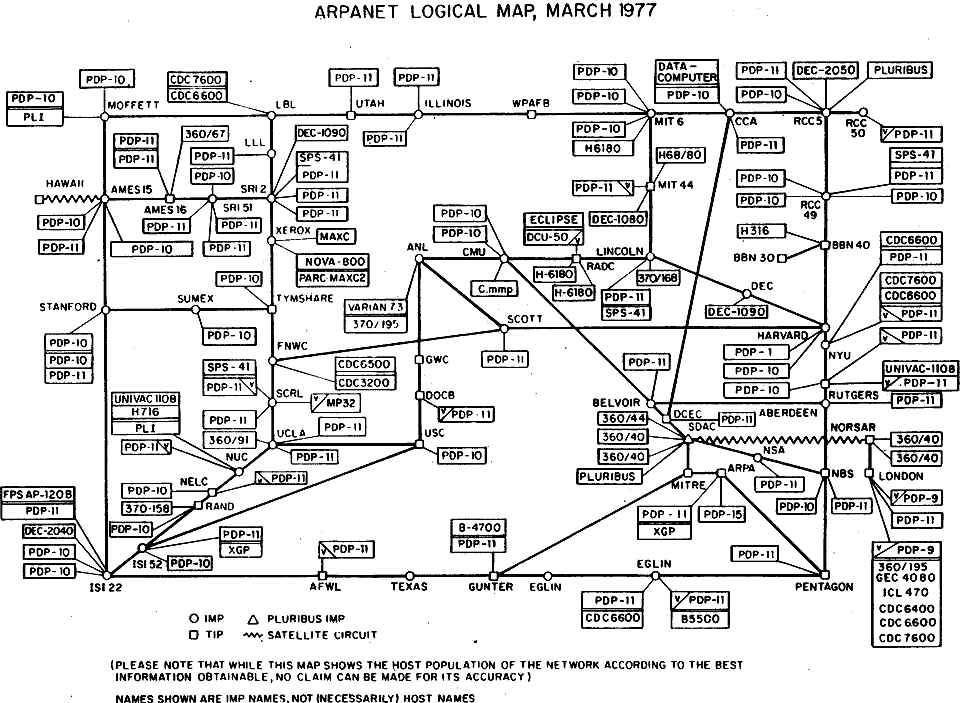

Why Not a “Flag Day” for IPv6?

Why not just set a day and switch all hosts and networks over from IPv4 to IPv6? After all, it worked for the transition from NCP to IP back in 1983. It should be obvious that even by 1998, the Internet had grown to such an extent that a “flag day” was no longer technically feasible. Even in relatively closed environments, flag days are difficult to pull off successfully (see Figure 1-2 for proof).

Much of the discussion around IPv6 adoption in this period focused on the possible appearance of a “killer app,” one that would take advantage of some technical aspect of IPv6 not available in IPv4.[24] Such an application would incentivize broad IPv6 adoption and create an incontrovertible business case for those parties waiting on the sidelines. But without widespread deployment of IPv6, there was little hope that such an application would emerge. Thus, a classic Catch-22 situation.[25]

To try to interrupt this vicious cycle, the Internet Society in 2008 began an effort to encourage large content providers to make their popular websites available over IPv6. This effort culminated in a plan for a June 8, 2011 “World IPv6 Day” during which Google, Yahoo, Facebook, and any others that wished to participate would make their primary domains (e.g., www.google.com) and related content available over IPv6 for 24 hours. This would give the Internet a day to kick the tires of IPv6 and take it out for a test drive, and in the process, gain operational wisdom from a day-long surge of IPv6 traffic.

The event was successful enough to double IPv6 traffic on the Internet (from 0.75% to 1.5%!) and inspired the World IPv6 Launch event the following year, which encouraged anyone with publicly available content to make it available over IPv6 permanently.

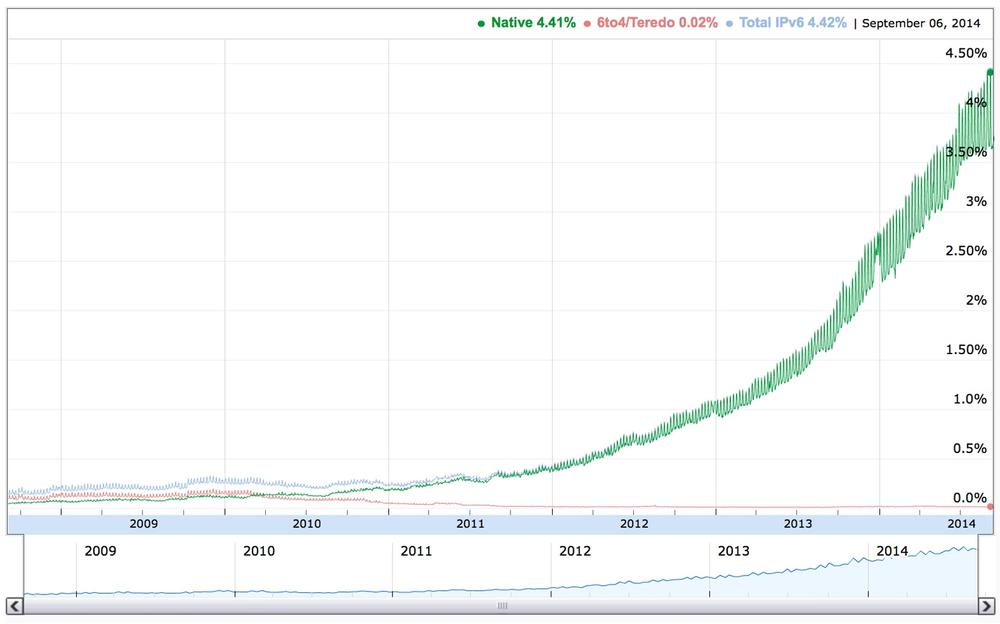

More recently, large broadband subscriber and mobile companies like Comcast and Verizon are reporting that a third to more than half of their traffic now runs over IPv6, and global IPv6 penetration as measured by Google has jumped from 2% to 4% in the last nine months (Figure 1-3).[26]

Conclusion

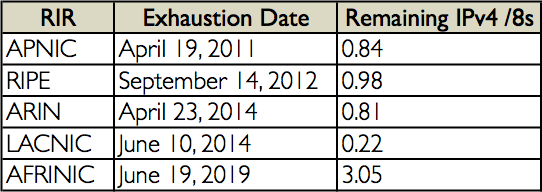

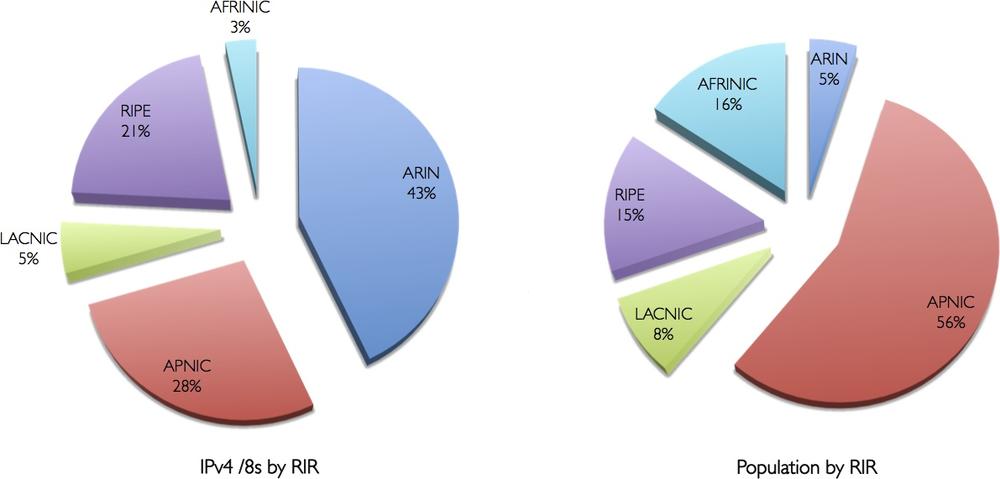

As mentioned previously, IANA had already allocated the last of the routable IPv4 address blocks in 2011. By April of that year, APNIC, the Asia-Pacific RIR (not to be confused with the Union-Pacific RR), had exhausted its supply of IPv4 (technically defined as running down to its last /8 of IPv4 addresses). And in September of 2012, RIPE, in Europe, had reached the same status. ARIN announced that it was entering the IPv4 exhaustion phase in April 2014, and Latin America’s LACNIC followed in June, just two months later (Figure 1-4). (Africa is good for now, with AFRINIC ostensibly having enough IPv4 to last through 2019.)

To put it bluntly, by the time you’re reading this book, IPv4 will most likely be exhausted everywhere but in Africa. And as we’ve learned, IPv6 adoption is accelerating, by some metrics, exponentially. To stay connected to the whole Internet, you’re going to need IPv6 addresses and an address plan to guide their deployment.

[2] RFC 4861, Neighbor Discovery for IP version 6 (IPv6). Keep in mind that RFCs are often updated (or even obsoleted) by new ones. When referring to them, check the updated by and obsoletes cross-references at the top of the RFC to make sure you have the most up-to-date information you might need.

[3] After reserved addresses (such as experimental, multicast, etc.) are deducted, IPv4 offers closer to 3.7 billion globally unique addresses.

[4] Trends such as mobile device proliferation and the Internet of Things (or Everything) have produced wildly divergent estimates for Internet-connected devices by 2020. They range from 20 billion on the low end all the way up to 200 billion.

[5] And in case it isn’t obvious, the entire 50 billion devices would fit quite neatly into one /64 (360 million times, actually).

[6] The success of IPv4 was (and is) at least partly a function of its remarkable simplicity. The formal protocol specification contained in RFC 791 is less than 50 pages long and notorious for its modest scope: providing for addressing and fragmentation.

[7] A /8 in Classless Inter-Domain Routing (CIDR) notation.

[8] A /16 in CIDR notation.

[9] A /24 in CIDR notation.

[10] From the remaining allocatable IP space to be carved up for Class B networks, you had to subtract the Class A networks already allocated.

[11] This method of “right-sizing” subnets for host counts using CIDR would become an entrenched architectural method that, as we’ll learn, can be a serious impediment to properly designing your IPv6 addressing plan.

[13] Enabling the forward march of human progress, as well as the reliable delivery of cat videos.

[14] It’s worth noting that some organizations running a dual-stack architecture today are taking steps to make their networks IPv6-only. For example, Facebook is planning on removing IPv4 completely from its internal network within the next year. Reasons for such a move may vary among organizations, but the biggest motivator for running a single stack is likely the anticipated reductions to the complexity and cost of network operations (e.g., less time to troubleshoot network issues, perform maintenance and configuration, etc.).

[15] The also-rans were ST2 and ST2+, aka IPv5 and IPv7, which offered a 64-bit address. Other next-generation candidate replacements for IP included TUBA (or TCP/UDP Over CLNP-Addressed Networks) and CATNIP (or Common Architecture for the Internet).

[16] Based on the acronym, and their affinity for hotel bars as the best setting for getting things done at meetings, I shouldn’t need to explicitly mention that this was an IETF effort.

[19] In IPv6, we extend this requirement to the interface.

[20] As we’ll explore in Chapter 3 when we discuss what we mean by IPv6 adoption, the path to realizing this principle isn’t necessarily a direct one.

[21] RFC 2460, Internet Protocol, Version 6 (IPv6) Specification. Also, formal in this case refers to the IETF draft standard stage as described in RFC 2026, The Internet Standards Process -- Revision 3. It’s perhaps important to keep in mind that earlier IPv6 RFCs (especially proposed standard RFC 1883 from December of 1995) provided specifications critical to facilitating the development of the “rough consensus and running code” (that famous founding tenet of the IETF) for IPv6.

[22] See the official Transition Planning for Internet Protocol Version 6 (IPv6) memorandum.

[23] Feature parity, as you’ve probably inferred, refers to the equivalent support and performance for a given feature in both IPv4 and IPv6. We’ll discuss this in more detail in Chapter 3.

[24] As we have come to recognize, the “killer app” turned out to be the Internet itself. Without IPv6, the Internet cannot continue to grow in the economical and manageable way required for its potential scale.

[25] “There was only one catch and that was Catch-22, which specified that a concern for one’s own safety in the face of dangers that were real and immediate was the process of a rational mind…Orr would be crazy to fly more missions and sane if he didn’t, but if he was sane he had to fly them. If he flew them he was crazy and didn’t have to; but if he didn’t want to he was sane and had to. Yossarian was moved very deeply by the absolute simplicity of this clause of Catch-22 and let out a respectful whistle. ‘That’s some catch, that Catch-22,’ he observed. ‘It’s the best there is,’ Doc Daneeka agreed.” -Joseph Heller, Catch-22.

[26] Google IPv6 Statistics. In the US, this figure is approaching 10%—or around 22.3 million users. IPv6 expert and O’Reilly author Silvia Hagen recently calculated that global user adoption of IPv6 will reach 50% by 2017.

[27] Source: Internet World Stats.
