This chapter delves into the area of Linux memory management, with an emphasis on techniques that are useful to the device driver writer. Many types of driver programming require some understanding of how the virtual memory subsystem works; the material we cover in this chapter comes in handy more than once as we get into some of the more complex and performance-critical subsystems. The virtual memory subsystem is also a highly interesting part of the core Linux kernel and, therefore, it merits a look.

The material in this chapter is divided into three sections:

The first covers the implementation of the mmap system call, which allows the mapping of device memory directly into a user process's address space. Not all devices require mmap support, but, for some, mapping device memory can yield significant performance improvements.

We then look at crossing the boundary from the other direction with a discussion of direct access to user-space pages. Relatively few drivers need this capability; in many cases, the kernel performs this sort of mapping without the driver even being aware of it. But an awareness of how to map user-space memory into the kernel (with get_user_pages) can be useful.

The final section covers direct memory access (DMA) I/O operations, which provide peripherals with direct access to system memory.

Of course, all of these techniques require an understanding of how Linux memory management works, so we start with an overview of that subsystem.

Rather than describing the theory of memory management in operating systems, this section tries to pinpoint the main features of the Linux implementation. Although you do not need to be a Linux virtual memory guru to implement mmap, a basic overview of how things work is useful. What follows is a fairly lengthy description of the data structures used by the kernel to manage memory. Once the necessary background has been covered, we can get into working with these structures.

Linux is, of course, a virtual memory system, meaning that the addresses seen by user programs do not directly correspond to the physical addresses used by the hardware. Virtual memory introduces a layer of indirection that allows a number of nice things. With virtual memory, programs running on the system can allocate far more memory than is physically available; indeed, even a single process can have a virtual address space larger than the system's physical memory. Virtual memory also allows the program to play a number of tricks with the process's address space, including mapping the program's memory to device memory.

Thus far, we have talked about virtual and physical addresses, but a number of the details have been glossed over. The Linux system deals with several types of addresses, each with its own semantics. Unfortunately, the kernel code is not always very clear on exactly which type of address is being used in each situation, so the programmer must be careful.

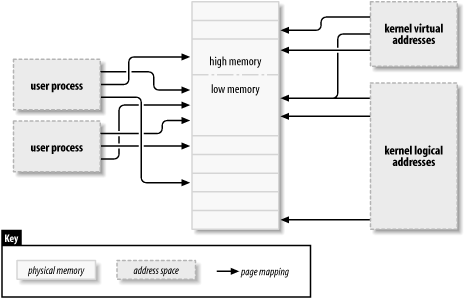

The following is a list of address types used in Linux. Figure 15-1 shows how these address types relate to physical memory.

- User virtual addresses

These are the regular addresses seen by user-space programs. User addresses are either 32 or 64 bits in length, depending on the underlying hardware architecture, and each process has its own virtual address space.

- Physical addresses

The addresses used between the processor and the system's memory. Physical addresses are 32- or 64-bit quantities; even 32-bit systems can use larger physical addresses in some situations.

- Bus addresses

The addresses used between peripheral buses and memory. Often, they are the same as the physical addresses used by the processor, but that is not necessarily the case. Some architectures can provide an I/O memory management unit (IOMMU) that remaps addresses between a bus and main memory. An IOMMU can make life easier in a number of ways (making a buffer scattered in memory appear contiguous to the device, for example), but programming the IOMMU is an extra step that must be performed when setting up DMA operations. Bus addresses are highly architecture dependent, of course.

- Kernel logical addresses

These make up the normal address space of the kernel. These addresses map some portion (perhaps all) of main memory and are often treated as if they were physical addresses. On most architectures, logical addresses and their associated physical addresses differ only by a constant offset. Logical addresses use the hardware's native pointer size and, therefore, may be unable to address all of physical memory on heavily equipped 32-bit systems. Logical addresses are usually stored in variables of type unsigned long or void *. Memory returned from kmalloc has a kernel logical address.

- Kernel virtual addresses

Kernel virtual addresses are similar to logical addresses in that they are a mapping from a kernel-space address to a physical address. Kernel virtual addresses do not necessarily have the linear, one-to-one mapping to physical addresses that characterizes the logical address space, however. All logical addresses are kernel virtual addresses, but many kernel virtual addresses are not logical addresses. For example, memory allocated by vmalloc has a virtual address but no logical address, because the underlying physical pages need not be contiguous and are reached only through page-table mappings. The kmap function (described later in this chapter) also returns virtual addresses. Virtual addresses are usually stored in pointer variables.

If you have a logical address, the macro __pa() (defined in <asm/page.h>) returns its associated physical address. Physical addresses can be mapped back to logical addresses with __va(), but only for low-memory pages.
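On architectures where the two kinds of address differ by a constant offset, the conversion amounts to a subtraction in one direction and an addition in the other. The user-space model below illustrates this. The constants are assumptions chosen for the example: TOY_PAGE_OFFSET (0xC0000000, where kernel space begins in the default i386 3-GB/1-GB split) and an 896-MB low-memory limit. toy_pa and toy_va are hypothetical stand-ins, not the kernel's macros.

```c
#include <stdint.h>

/* Assumption: default i386 layout, kernel space starts at 3 GB. */
#define TOY_PAGE_OFFSET  0xC0000000UL
/* Assumption: physical memory below 896 MB is low memory. */
#define TOY_LOWMEM_LIMIT (896UL << 20)

/* Hypothetical stand-in for __pa(): logical -> physical. */
uint32_t toy_pa(uint32_t logical)
{
    return (uint32_t)(logical - TOY_PAGE_OFFSET);
}

/* Hypothetical stand-in for __va(): physical -> logical. Only low
 * memory has a logical address, so high memory yields 0 here. */
uint32_t toy_va(uint32_t phys)
{
    if (phys >= TOY_LOWMEM_LIMIT)
        return 0;
    return (uint32_t)(phys + TOY_PAGE_OFFSET);
}
```

In the real kernel, __pa and __va are architecture-specific macros. The model is meant only to show that the logical mapping is a fixed offset and that it runs out at the low-memory boundary.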

Different kernel functions require different types of addresses. It would be nice if there were different C types defined, so that the required address types were explicit, but we have no such luck. In this chapter, we try to be clear on which types of addresses are used where.

Physical memory is divided into discrete units called pages. Much of the system's internal handling of memory is done on a per-page basis. Page size varies from one architecture to the next, although most systems currently use 4096-byte pages. The constant PAGE_SIZE (defined in <asm/page.h>) gives the page size on any given architecture.

If you look at a memory address, virtual or physical, it is divisible into a page number and an offset within the page. If 4096-byte pages are being used, for example, the 12 least-significant bits are the offset, and the remaining, higher bits indicate the page number. If you discard the offset and shift the rest of the address to the right, the result is called a page frame number (PFN). Shifting bits to convert between page frame numbers and addresses is a fairly common operation; the macro PAGE_SHIFT tells how many bits must be shifted to make this conversion.
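The conversions described above are nothing more than shifts and masks. Here is a minimal user-space sketch. It assumes 4096-byte pages, which gives a shift of 12 as on x86. TOY_PAGE_SHIFT and the toy_ functions are hypothetical names, not the kernel's PAGE_SHIFT or its helper macros.

```c
/* Assumption: 4096-byte pages, so the shift is 12 (as on x86). */
#define TOY_PAGE_SHIFT 12
#define TOY_PAGE_SIZE  (1UL << TOY_PAGE_SHIFT)

/* Page frame number: discard the offset bits. */
unsigned long toy_addr_to_pfn(unsigned long addr)
{
    return addr >> TOY_PAGE_SHIFT;
}

/* Offset within the page: keep only the low TOY_PAGE_SHIFT bits. */
unsigned long toy_addr_offset(unsigned long addr)
{
    return addr & (TOY_PAGE_SIZE - 1);
}

/* Address of the first byte of a page frame. */
unsigned long toy_pfn_to_addr(unsigned long pfn)
{
    return pfn << TOY_PAGE_SHIFT;
}
```

For the address 0x12345678, the PFN is 0x12345 and the offset is 0x678. Shifting the PFN back and adding the offset reconstructs the original address.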

The difference between logical and kernel virtual addresses is highlighted on 32-bit systems that are equipped with large amounts of memory. With 32 bits, it is possible to address 4 GB of memory. Linux on 32-bit systems has, until recently, been limited to substantially less memory than that, however, because of the way it sets up the virtual address space.

The kernel (on the x86 architecture, in the default configuration) splits the 4-GB virtual address space between user space and the kernel; the same set of mappings is used in both contexts. A typical split dedicates 3 GB to user space and 1 GB to kernel space.[1] The kernel's code and data structures must fit into that space, but the biggest consumer of kernel address space is virtual mappings for physical memory. The kernel cannot directly manipulate memory that is not mapped into the kernel's address space. The kernel, in other words, needs its own virtual address for any memory it must touch directly. Thus, for many years, the maximum amount of physical memory that could be handled by the kernel was the amount that could be mapped into the kernel's portion of the virtual address space, minus the space needed for the kernel code itself. As a result, x86-based Linux systems could work with a maximum of a little under 1 GB of physical memory.

In response to commercial pressure to support more memory without breaking compatibility with 32-bit applications and systems, the processor manufacturers have added "address extension" features to their products. The result is that, in many cases, even 32-bit processors can address more than 4 GB of physical memory. The limitation on how much memory can be directly mapped with logical addresses remains, however. Only the lowest portion of memory (up to 1 or 2 GB, depending on the hardware and the kernel configuration) has logical addresses;[2] the rest (high memory) does not. Before accessing a specific high-memory page, the kernel must set up an explicit virtual mapping to make that page available in the kernel's address space. Thus, many kernel data structures must be placed in low memory; high memory tends to be reserved for user-space process pages.

The term "high memory" can be confusing to some, especially since it has other meanings in the PC world. So, to make things clear, we'll define the terms here:

- Low memory

Memory for which logical addresses exist in kernel space. On almost every system you will likely encounter, all memory is low memory.

- High memory

Memory for which logical addresses do not exist, because it is beyond the address range set aside for kernel virtual addresses.

On i386 systems, the boundary between low and high memory is usually set at just under 1 GB, although that boundary can be changed at kernel configuration time. This boundary is not related in any way to the old 640 KB limit found on the original PC, and its placement is not dictated by the hardware. It is, instead, a limit set by the kernel itself as it splits the 32-bit address space between kernel and user space.
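The "just under 1 GB" figure follows from arithmetic on the split. The sketch below works through that arithmetic under two stated assumptions: a 3-GB/1-GB split, and 128 MB of the kernel's 1 GB held back for vmalloc and kmap mappings. Those are the figures a default i386 kernel of this era uses to arrive at a 896-MB low-memory limit. The function names are hypothetical.

```c
#include <stdint.h>

#define TOY_GB (1ULL << 30)
#define TOY_MB (1ULL << 20)

/* Assumptions: 4-GB address space split 3 GB user / 1 GB kernel, with
 * 128 MB of the kernel's part reserved for vmalloc and kmap mappings. */
#define TOY_USER_SPACE      (3 * TOY_GB)
#define TOY_KERNEL_SPACE    (4 * TOY_GB - TOY_USER_SPACE)
#define TOY_VMALLOC_RESERVE (128 * TOY_MB)

/* Amount of physical memory that can have permanent logical addresses. */
uint64_t toy_lowmem_limit(void)
{
    return TOY_KERNEL_SPACE - TOY_VMALLOC_RESERVE;
}

/* A physical address at or above the limit is high memory. */
int toy_is_highmem(uint64_t phys)
{
    return phys >= toy_lowmem_limit();
}
```

Moving the split (for example, giving the kernel 2 GB) raises the limit accordingly. This is why the boundary is a kernel configuration matter rather than a hardware one.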

We will point out limitations on the use of high memory as we come to them in this chapter.

Historically, the kernel has used logical addresses to refer to pages of physical memory. The addition of high-memory support, however, has exposed an obvious problem with that approach: logical addresses are not available for high memory. Therefore, kernel functions that deal with memory are increasingly using pointers to struct page (defined in <linux/mm.h>) instead. This data structure is used to keep track of just about everything the kernel needs to know about physical memory; there is one struct page for each physical page on the system.

Some of the fields of this structure include the following:

- atomic_t count;

The number of references there are to this page. When the count drops to 0, the page is returned to the free list.

- void *virtual;

The kernel virtual address of the page, if it is mapped; NULL, otherwise. Low-memory pages are always mapped; high-memory pages usually are not. This field does not appear on all architectures; it generally is compiled only where the kernel virtual address of a page cannot be easily calculated. If you want to look at this field, the proper method is to use the page_address macro, described below.

- unsigned long flags;

A set of bit flags describing the status of the page. These include PG_locked, which indicates that the page has been locked in memory, and PG_reserved, which prevents the memory management system from working with the page at all.

There is much more information within struct page, but it is part of the deeper black magic of memory management and is not of concern to driver writers.

The kernel maintains one or more arrays of struct page entries that track all of the physical memory on the system. On some systems, there is a single array called mem_map. On some systems, however, the situation is more complicated. Nonuniform memory access (NUMA) systems and those with widely discontiguous physical memory may have more than one memory map array, so code that is meant to be portable should avoid direct access to the array whenever possible. Fortunately, it is usually quite easy to just work with struct page pointers without worrying about where they come from.
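On a system with a single flat mem_map array, converting between page frame numbers and struct page pointers is plain array indexing. The user-space sketch below models only that simplest case. toy_page, toy_mem_map, and the conversion functions are hypothetical stand-ins. The model does not cover the NUMA and discontiguous layouts just described, and those layouts are why portable drivers should use the kernel's helpers instead of indexing the array.

```c
#include <stddef.h>

/* Hypothetical, much-reduced stand-in for struct page. */
struct toy_page {
    int count;              /* reference count */
    unsigned long flags;    /* status bits */
};

/* Assumption: a flat system with 16 pages and one map array. */
#define TOY_NR_PAGES 16
struct toy_page toy_mem_map[TOY_NR_PAGES];

/* Flat-memory case: the PFN is simply an index into the array.
 * The range check stands in for what kernel callers do with pfn_valid. */
struct toy_page *toy_pfn_to_page(unsigned long pfn)
{
    if (pfn >= TOY_NR_PAGES)
        return NULL;
    return &toy_mem_map[pfn];
}

/* Inverse: pointer subtraction recovers the PFN. */
unsigned long toy_page_to_pfn(const struct toy_page *page)
{
    return (unsigned long)(page - toy_mem_map);
}
```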

Some functions and macros are defined for translating between struct page pointers and virtual addresses:

- struct page *virt_to_page(void *kaddr);

This macro, defined in <asm/page.h>, takes a kernel logical address and returns its associated struct page pointer. Since it requires a logical address, it does not work with memory from vmalloc or high memory.

- struct page *pfn_to_page(int pfn);

Returns the struct page pointer for the given page frame number. pfn_to_page does not validate its argument; if necessary, check the page frame number with pfn_valid before passing it to pfn_to_page.

- void *page_address(struct page *page);

Returns the kernel virtual address of this page, if such an address exists. For high memory, that address exists only if the page has been mapped. This function is defined in <linux/mm.h>. In most situations, you want to use a version of kmap rather than page_address.

- #include <linux/highmem.h>
void *kmap(struct page *page);
void kunmap(struct page *page);

kmap returns a kernel virtual address for any page in the system. For low-memory pages, it just returns the logical address of the page; for high-memory pages, kmap creates a special mapping in a dedicated part of the kernel address space. Mappings created with kmap should always be freed with kunmap; a limited number of such mappings is available, so it is better not to hold on to them for too long. kmap calls maintain a counter, so if two or more functions both call kmap on the same page, the right thing happens. Note also that kmap can sleep if no mappings are available.

- #include <linux/highmem.h>
#include <asm/kmap_types.h>
void *kmap_atomic(struct page *page, enum km_type type);
void kunmap_atomic(void *addr, enum km_type type);

kmap_atomic is a high-performance form of kmap. Each architecture maintains a small list of slots (dedicated page table entries) for atomic kmaps; a caller of kmap_atomic must tell the system which of those slots to use in the type argument. The only slots that make sense for drivers are KM_USER0 and KM_USER1 (for code running directly from a call from user space), and KM_IRQ0 and KM_IRQ1 (for interrupt handlers). Note that atomic kmaps must be handled atomically; your code cannot sleep while holding one. Note also that nothing in the kernel keeps two functions from trying to use the same slot and interfering with each other (although there is a unique set of slots for each CPU). In practice, contention for atomic kmap slots seems to not be a problem.

We see some uses of these functions when we get into the example code, later in this chapter and in subsequent chapters.

On any modern system, the processor must have a mechanism for translating virtual addresses into their corresponding physical addresses. This mechanism is called a page table; it is essentially a multilevel tree-structured array containing virtual-to-physical mappings and a few associated flags. The Linux kernel maintains a set of page tables even on architectures that do not use such tables directly.
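To make the "multilevel tree" concrete, consider 32-bit x86 without address extensions. There the hardware uses two levels, and a virtual address splits into a 10-bit page-directory index, a 10-bit page-table index, and a 12-bit offset. The sketch below performs only that split. The struct and function names are hypothetical. Other architectures, and x86 with PAE or in 64-bit mode, use more levels and different field widths.

```c
#include <stdint.h>

/* Assumption: classic i386 two-level paging with 4-KB pages. */
struct toy_va_parts {
    uint32_t dir;     /* page-directory index, bits 31-22 */
    uint32_t table;   /* page-table index, bits 21-12 */
    uint32_t offset;  /* offset within the page, bits 11-0 */
};

struct toy_va_parts toy_split_va(uint32_t va)
{
    struct toy_va_parts p;

    p.dir    = va >> 22;
    p.table  = (va >> 12) & 0x3FF;
    p.offset = va & 0xFFF;
    return p;
}
```

Each 10-bit index selects one of 1024 entries. The two levels together therefore cover 1024 * 1024 pages of 4 KB each, or the full 4-GB space. For 0xC0101234, the split is directory entry 0x300, table entry 0x101, and offset 0x234.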

A number of operations commonly performed by device drivers can involve manipulating page tables. Fortunately for the driver author, the 2.6 kernel has eliminated any need to work with page tables directly. As a result, we do not describe them in any detail; curious readers may want to have a look at Understanding The Linux Kernel by Daniel P. Bovet and Marco Cesati (O'Reilly) for the full story.

The virtual memory area (VMA) is the kernel data structure used to manage distinct regions of a process's address space. A VMA represents a homogeneous region in the virtual memory of a process: a contiguous range of virtual addresses that have the same permission flags and are backed up by the same object (a file, say, or swap space). It corresponds loosely to the concept of a "segment," although it is better described as "a memory object with its own properties." The memory map of a process is made up of (at least) the following areas:

- An area for the program's executable code (often called text)

- Multiple areas for data, including initialized data (that which has an explicitly assigned value at the beginning of execution), uninitialized data (BSS),[3] and the program stack

- One area for each active memory mapping

The memory areas of a process can be seen by looking in /proc/<pid>/maps (in which pid, of course, is replaced by a process ID). /proc/self is a special case of /proc/<pid>, because it always refers to the current process. As an example, here are a couple of memory maps (to which we have added short comments):

# cat /proc/1/maps    look at init
08048000-0804e000 r-xp 00000000 03:01 64652 /sbin/init text
0804e000-0804f000 rw-p 00006000 03:01 64652 /sbin/init data
0804f000-08053000 rwxp 00000000 00:00 0 zero-mapped BSS
40000000-40015000 r-xp 00000000 03:01 96278 /lib/ld-2.3.2.so text
40015000-40016000 rw-p 00014000 03:01 96278 /lib/ld-2.3.2.so data
40016000-40017000 rw-p 00000000 00:00 0 BSS for ld.so
42000000-4212e000 r-xp 00000000 03:01 80290 /lib/tls/libc-2.3.2.so text
4212e000-42131000 rw-p 0012e000 03:01 80290 /lib/tls/libc-2.3.2.so data
42131000-42133000 rw-p 00000000 00:00 0 BSS for libc
bffff000-c0000000 rwxp 00000000 00:00 0 Stack segment
ffffe000-fffff000 ---p 00000000 00:00 0 vsyscall page

# rsh wolf cat /proc/self/maps #### x86-64 (trimmed)
00400000-00405000 r-xp 00000000 03:01 1596291 /bin/cat text
00504000-00505000 rw-p 00004000 03:01 1596291 /bin/cat data
00505000-00526000 rwxp 00505000 00:00 0 bss
3252200000-3252214000 r-xp 00000000 03:01 1237890 /lib64/ld-2.3.3.so
3252300000-3252301000 r--p 00100000 03:01 1237890 /lib64/ld-2.3.3.so
3252301000-3252302000 rw-p 00101000 03:01 1237890 /lib64/ld-2.3.3.so
7fbfffe000-7fc0000000 rw-p 7fbfffe000 00:00 0 stack
ffffffffff600000-ffffffffffe00000 ---p 00000000 00:00 0 vsyscall

The fields in each line are:

start-end perm offset major:minor inode image

Each field in /proc/*/maps (except the image name) corresponds to a field in struct vm_area_struct:

- start, end

The beginning and ending virtual addresses for this memory area.

- perm

A bit mask with the memory area's read, write, and execute permissions. This field describes what the process is allowed to do with pages belonging to the area. The last character in the field is either p for "private" or s for "shared."

- offset

Where the memory area begins in the file that it is mapped to. An offset of 0 means that the beginning of the memory area corresponds to the beginning of the file.

- major, minor

The major and minor numbers of the device holding the file that has been mapped. Confusingly, for device mappings, the major and minor numbers refer to the disk partition holding the device special file that was opened by the user, and not the device itself.

- inode

The inode number of the mapped file.

- image

The name of the file (usually an executable image) that has been mapped.
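Because the line format is fixed, a maps entry can be decoded with a single sscanf. The sketch below is a user-space illustration, not kernel code. struct toy_map_entry and the function names are hypothetical. The sketch assumes that image names contain no spaces, since a name with spaces would be cut off at the first one. It also assumes that addresses fit in an unsigned long, which holds when the listing comes from a machine with the same word size.

```c
#include <stdio.h>
#include <string.h>

/* Fields of one /proc/<pid>/maps line (hypothetical layout). */
struct toy_map_entry {
    unsigned long start, end, offset, inode;
    unsigned int major, minor;
    char perm[5];        /* e.g. "r-xp" */
    char image[256];     /* left empty for anonymous mappings */
};

/* Parses "start-end perm offset major:minor inode [image]".
 * Returns 0 on success, -1 if fewer than seven fields matched. */
int toy_parse_maps_line(const char *line, struct toy_map_entry *e)
{
    int n;

    memset(e, 0, sizeof *e);
    n = sscanf(line, "%lx-%lx %4s %lx %x:%x %lu %255s",
               &e->start, &e->end, e->perm, &e->offset,
               &e->major, &e->minor, &e->inode, e->image);
    return n >= 7 ? 0 : -1;
}

/* The last perm character distinguishes shared ('s') from private ('p'). */
int toy_is_shared(const struct toy_map_entry *e)
{
    return e->perm[3] == 's';
}
```

Applied to the first line of the init listing, the parser yields start 0x08048000, device 3:1, inode 64652, and image /sbin/init, in a private mapping. Applied to the stack line, it leaves the image empty.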

When a user-space process calls mmap to map device memory into its address space, the system responds by creating a new VMA to represent that mapping. A driver that supports mmap (and, thus, that implements the mmap method) needs to help that process by completing the initialization of that VMA. The driver writer should, therefore, have at least a minimal understanding of VMAs in order to support mmap.

Let's look at the most important fields in struct vm_area_struct (defined in <linux/mm.h>). These fields may be used by device drivers in their mmap implementation. Note that the kernel maintains lists and trees of VMAs to optimize area lookup, and several fields of vm_area_struct are used to maintain this organization. Therefore, VMAs can't be created at will by a driver, or the structures break. The main fields of VMAs are as follows (note the similarity between these fields and the /proc output we just saw):

- unsigned long vm_start;
unsigned long vm_end;

The virtual address range covered by this VMA. These fields are the first two fields shown in /proc/*/maps.

- struct file *vm_file;

A pointer to the struct file structure associated with this area (if any).

- unsigned long vm_pgoff;

The offset of the area in the file, in pages. When a file or device is mapped, this is the file position of the first page mapped in this area.

- unsigned long vm_flags;

A set of flags describing this area. The flags of the most interest to device driver writers are VM_IO and VM_RESERVED. VM_IO marks a VMA as being a memory-mapped I/O region. Among other things, the VM_IO flag prevents the region from being included in process core dumps. VM_RESERVED tells the memory management system not to attempt to swap out this VMA; it should be set in most device mappings.

- struct vm_operations_struct *vm_ops;

A set of functions that the kernel may invoke to operate on this memory area. Its presence indicates that the memory area is a kernel "object," like the struct file we have been using throughout the book.

- void *vm_private_data;

A field that may be used by the driver to store its own information.

Like struct vm_area_struct, the vm_operations_struct is defined in <linux/mm.h>; it includes the operations listed below. These operations are the only ones needed to handle the process's memory needs, and they are listed in the order they are declared. Later in this chapter, some of these functions are implemented.

- void (*open)(struct vm_area_struct *vma);

The open method is called by the kernel to allow the subsystem implementing the VMA to initialize the area. This method is invoked any time a new reference to the VMA is made (when a process forks, for example). The one exception happens when the VMA is first created by mmap; in this case, the driver's mmap method is called instead.

- void (*close)(struct vm_area_struct *vma);

When an area is destroyed, the kernel calls its close operation. Note that there's no usage count associated with VMAs; the area is opened and closed exactly once by each process that uses it.

- struct page *(*nopage)(struct vm_area_struct *vma, unsigned long address, int *type);

When a process tries to access a page that belongs to a valid VMA, but that is currently not in memory, the nopage method is called (if it is defined) for the related area. The method returns the struct page pointer for the physical page after, perhaps, having read it in from secondary storage. If the nopage method isn't defined for the area, an empty page is allocated by the kernel.

- int (*populate)(struct vm_area_struct *vm, unsigned long address, unsigned long len, pgprot_t prot, unsigned long pgoff, int nonblock);

This method allows the kernel to "prefault" pages into memory before they are accessed by user space. There is generally no need for drivers to implement the populate method.

The final piece of the memory management puzzle is the process memory map structure, which holds all of the other data structures together. Each process in the system (with the exception of a few kernel-space helper threads) has a struct mm_struct (defined in <linux/sched.h>) that contains the process's list of virtual memory areas, page tables, and various other bits of memory management housekeeping information, along with a semaphore (mmap_sem) and a spinlock (page_table_lock). The pointer to this structure is found in the task structure; in the rare cases where a driver needs to access it, the usual way is to use current->mm. Note that the memory management structure can be shared between processes; the Linux implementation of threads works in this way, for example.

That concludes our overview of Linux memory management data structures. With that out of the way, we can now proceed to the implementation of the mmap system call.

Memory mapping is one of the most interesting features of modern Unix systems. As far as drivers are concerned, memory mapping can be implemented to provide user programs with direct access to device memory.

A definitive example of mmap usage can be seen by looking at a subset of the virtual memory areas for the X Window System server:

cat /proc/731/maps
000a0000-000c0000 rwxs 000a0000 03:01 282652 /dev/mem
000f0000-00100000 r-xs 000f0000 03:01 282652 /dev/mem
00400000-005c0000 r-xp 00000000 03:01 1366927 /usr/X11R6/bin/Xorg
006bf000-006f7000 rw-p 001bf000 03:01 1366927 /usr/X11R6/bin/Xorg
2a95828000-2a958a8000 rw-s fcc00000 03:01 282652 /dev/mem
2a958a8000-2a9d8a8000 rw-s e8000000 03:01 282652 /dev/mem
...

The full list of the X server's VMAs is lengthy, but most of the entries are not of interest here. We do see, however, four separate mappings of /dev/mem, which give some insight into how the X server works with the video card. The first mapping is at a0000, which is the standard location for video RAM in the 640-KB ISA hole. Further down, we see a large mapping at e8000000, an address which is above the highest RAM address on the system. This is a direct mapping of the video memory on the adapter.

These regions can also be seen in /proc/iomem:

000a0000-000bffff : Video RAM area
000c0000-000ccfff : Video ROM
000d1000-000d1fff : Adapter ROM
000f0000-000fffff : System ROM
d7f00000-f7efffff : PCI Bus #01
  e8000000-efffffff : 0000:01:00.0
fc700000-fccfffff : PCI Bus #01
  fcc00000-fcc0ffff : 0000:01:00.0

Mapping a device means associating a range of user-space addresses to device memory. Whenever the program reads or writes in the assigned address range, it is actually accessing the device. In the X server example, using mmap allows quick and easy access to the video card's memory. For a performance-critical application like this, direct access makes a large difference.

As you might suspect, not every device lends itself to the mmap abstraction; it makes no sense, for instance, for serial ports and other stream-oriented devices. Another limitation of mmap is that mapping is PAGE_SIZE grained. The kernel can manage virtual addresses only at the level of page tables; therefore, the mapped area must be a multiple of PAGE_SIZE and must live in physical memory starting at an address that is a multiple of PAGE_SIZE. The kernel forces size granularity by making a region slightly bigger if its size isn't a multiple of the page size.
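The two granularity rules come down to rounding the size up and checking the start for alignment. The sketch below assumes 4096-byte pages, and its function names are hypothetical. The rounding uses the usual power-of-two mask trick.

```c
/* Assumption: 4096-byte pages. */
#define TOY_PAGE_SIZE 4096UL

/* Round a requested length up to a whole number of pages, the way a
 * mapping of a non-multiple size is silently enlarged. */
unsigned long toy_page_align(unsigned long len)
{
    return (len + TOY_PAGE_SIZE - 1) & ~(TOY_PAGE_SIZE - 1);
}

/* A mapping can begin only at a page-aligned address or offset. */
int toy_is_page_aligned(unsigned long addr)
{
    return (addr & (TOY_PAGE_SIZE - 1)) == 0;
}
```

A 5000-byte request therefore occupies two pages (8192 bytes). From user space, mmap(2) rejects an offset that is not page aligned with EINVAL instead of rounding it.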

These limits are not a big constraint for drivers, because the program accessing the device is device dependent anyway. Since the program must know about how the device works, the programmer is not unduly bothered by the need to see to details like page alignment. A bigger constraint exists when ISA devices are used on some non-x86 platforms, because their hardware view of ISA may not be contiguous. For example, some Alpha computers see ISA memory as a scattered set of 8-bit, 16-bit, or 32-bit items, with no direct mapping. In such cases, you can't use mmap at all. The inability to perform direct mapping of ISA addresses to Alpha addresses is due to the incompatible data transfer specifications of the two systems. Whereas early Alpha processors could issue only 32-bit and 64-bit memory accesses, ISA can do only 8-bit and 16-bit transfers, and there's no way to transparently map one protocol onto the other.

There are sound advantages to using mmap when it's feasible to do so. For instance, we have already looked at the X server, which transfers a lot of data to and from video memory; mapping the graphic display to user space dramatically improves the throughput, as opposed to an lseek/write implementation. Another typical example is a program controlling a PCI device. Most PCI peripherals map their control registers to a memory address, and a high-performance application might prefer to have direct access to the registers instead of repeatedly having to call ioctl to get its work done.
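From the user-space side, the gain is that data moves through ordinary loads and stores, not through one system call per transfer. The sketch below demonstrates this on a regular file, because mapping a device such as /dev/mem typically requires root privileges. toy_write_via_mmap is a hypothetical helper. It assumes a POSIX system and a file that is already at least one page long.

```c
#define _DEFAULT_SOURCE
#include <stdlib.h>
#include <string.h>
#include <sys/mman.h>
#include <unistd.h>

/* Stores msg at byte 0 of the file behind fd through a shared mapping
 * instead of lseek/write. Assumes the file is at least one page long
 * and msg is shorter than a page. Returns 0 on success, -1 on error. */
int toy_write_via_mmap(int fd, const char *msg)
{
    size_t page = (size_t)sysconf(_SC_PAGESIZE);
    char *p = mmap(NULL, page, PROT_READ | PROT_WRITE,
                   MAP_SHARED, fd, 0);      /* offset 0 is page aligned */

    if (p == MAP_FAILED)
        return -1;
    memcpy(p, msg, strlen(msg));            /* a plain memory store */
    if (msync(p, page, MS_SYNC) != 0) {     /* push the page to the file */
        munmap(p, page);
        return -1;
    }
    return munmap(p, page);
}
```

Once the mapping exists, every further store costs no system call. This is the property the X server relies on for video memory.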

The mmap method is part of the file_operations structure and is invoked when the mmap system call is issued. With mmap, the kernel performs a good deal of work before the actual method is invoked, and, therefore, the prototype of the method is quite different from that of the system call. This is unlike calls such as ioctl and poll, where the kernel does not do much before calling the method.

The system call is declared as follows (as described in the mmap(2) manual page):

mmap (caddr_t addr, size_t len, int prot, int flags, int fd, off_t offset)

On the other hand, the file operation is declared as:

int (*mmap) (struct file *filp, struct vm_area_struct *vma);

The filp argument in the method is the same as that introduced in Chapter 3, while vma contains the information about the virtual address range that is used to access the device. Therefore, much of the work has been done by the kernel; to implement mmap, the driver only has to build suitable page tables for the address range and, if necessary, replace vma->vm_ops with a new set of operations.

There are two ways of building the page tables: doing it all at once with a function called remap_pfn_range or doing it a page at a time via the nopage VMA method. Each method has its advantages and limitations. We start with the "all at once" approach, which is simpler. From there, we add the complications needed for a real-world implementation.

The job of building new page tables to map a range of physical addresses is handled by remap_pfn_range and io_remap_page_range, which have the following prototypes:

int remap_pfn_range(struct vm_area_struct *vma,
                    unsigned long virt_addr, unsigned long pfn,
                    unsigned long size, pgprot_t prot);
int io_remap_page_range(struct vm_area_struct *vma,
                        unsigned long virt_addr, unsigned long phys_addr,
                        unsigned long size, pgprot_t prot);

The value returned by the function is the usual 0 or a negative error code. Let's look at the exact meaning of the function's arguments:

- vma

The virtual memory area into which the page range is being mapped.

- virt_addr

The user virtual address where remapping should begin. The function builds page tables for the virtual address range between virt_addr and virt_addr+size.

- pfn

The page frame number corresponding to the physical address to which the virtual address should be mapped. The page frame number is simply the physical address right-shifted by PAGE_SHIFT bits. For most uses, the vm_pgoff field of the VMA structure contains exactly the value you need. The function affects physical addresses from (pfn<<PAGE_SHIFT) to (pfn<<PAGE_SHIFT)+size.

- size

The dimension, in bytes, of the area being remapped.

- prot

The "protection" requested for the new VMA. The driver can (and should) use the value found in vma->vm_page_prot.
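The pfn usually comes straight from the offset that user space passed to mmap, because the kernel stores that offset, divided by the page size, in vm_pgoff. The sketch below walks through the arithmetic in user space for a driver that treats the file offset as a physical address, as /dev/mem does. It uses the 128-MB video-memory mapping at e8000000 from the X server example. The function names are hypothetical, and 4096-byte pages are assumed.

```c
/* Assumption: 4096-byte pages. */
#define TOY_PAGE_SHIFT 12

/* The value the kernel places in vma->vm_pgoff for an mmap offset. */
unsigned long toy_vm_pgoff(unsigned long mmap_offset)
{
    return mmap_offset >> TOY_PAGE_SHIFT;
}

/* First physical address covered when this value is used as the pfn. */
unsigned long toy_phys_start(unsigned long pfn)
{
    return pfn << TOY_PAGE_SHIFT;
}

/* One past the last physical address covered for a given size. */
unsigned long toy_phys_end(unsigned long pfn, unsigned long size)
{
    return (pfn << TOY_PAGE_SHIFT) + size;
}
```

With an offset of 0xe8000000, vm_pgoff is 0xe8000. A 128-MB (0x8000000-byte) mapping then spans physical addresses 0xe8000000 up to 0xf0000000, matching the e8000000-efffffff range in /proc/iomem.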

The arguments to remap_pfn_range are fairly straightforward, and most of them are already provided to you in the VMA when your mmap method is called. You may be wondering why there are two functions, however. The first (remap_pfn_range) is intended for situations where pfn refers to actual system RAM, while io_remap_page_range should be used when phys_addr points to I/O memory. In practice, the two functions are identical on every architecture except the SPARC, and you see remap_pfn_range used in most situations. In the interest of writing portable drivers, however, you should use the variant of remap_pfn_range that is suited to your particular situation.

One other complication has to do with caching: usually, references to device memory should not be cached by the processor. Often the system BIOS sets things up properly, but it is also possible to disable caching of specific VMAs via the protection field. Unfortunately, disabling caching at this level is highly processor dependent. The curious reader may wish to look at the pgprot_noncached function from drivers/char/mem.c to see what's involved. We won't discuss the topic further here.

If your driver needs to do a simple, linear mapping of device memory into a user address space, remap_pfn_range is almost all you really need to do the job. The following code is derived from drivers/char/mem.c and shows how this task is performed in a typical module called simple (Simple Implementation Mapping Pages with Little Enthusiasm):

    static int simple_remap_mmap(struct file *filp, struct vm_area_struct *vma)
    {
        if (remap_pfn_range(vma, vma->vm_start, vma->vm_pgoff,
                            vma->vm_end - vma->vm_start,
                            vma->vm_page_prot))
            return -EAGAIN;

        vma->vm_ops = &simple_remap_vm_ops;
        simple_vma_open(vma);
        return 0;
    }

As you can see, remapping memory is just a matter of calling remap_pfn_range to create the necessary page tables.

As we have seen, the vm_area_struct structure contains a set of operations that may be applied to the VMA. Now we look at providing those operations in a simple way. In particular, we provide open and close operations for our VMA. These operations are called whenever a process opens or closes the VMA; in particular, the open method is invoked anytime a process forks and creates a new reference to the VMA. The open and close VMA methods are called in addition to the processing performed by the kernel, so they need not reimplement any of the work done there. They exist as a way for drivers to do any additional processing that they may require.

As it turns out, a simple driver such as simple need not do any extra processing in particular. So we have created open and close methods, which print a message to the system log informing the world that they have been called. Not particularly useful, but it does allow us to show how these methods can be provided, and see when they are invoked.

To this end, we override the default vma->vm_ops with operations that call printk:

    void simple_vma_open(struct vm_area_struct *vma)
    {
        printk(KERN_NOTICE "Simple VMA open, virt %lx, phys %lx\n",
               vma->vm_start, vma->vm_pgoff << PAGE_SHIFT);
    }

    void simple_vma_close(struct vm_area_struct *vma)
    {
        printk(KERN_NOTICE "Simple VMA close.\n");
    }

    static struct vm_operations_struct simple_remap_vm_ops = {
        .open =  simple_vma_open,
        .close = simple_vma_close,
    };

To make these operations active for a specific mapping, it is necessary to store a pointer to simple_remap_vm_ops in the vm_ops field of the relevant VMA. This is usually done in the mmap method. If you turn back to the simple_remap_mmap example, you see these lines of code:

    vma->vm_ops = &simple_remap_vm_ops;
    simple_vma_open(vma);

Note the explicit call to simple_vma_open. Since the open method is not invoked on the initial mmap, we must call it explicitly if we want it to run.
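The fork case that triggers the open method can be observed from user space. The sketch below uses an anonymous shared mapping instead of a device (an assumption made so that it runs anywhere), and the function name is hypothetical. When the process forks, the child receives its own copy of the parent's VMA, which is exactly the moment a driver's open method would be invoked, and both VMAs refer to the same pages.

```c
#define _DEFAULT_SOURCE
#include <sys/mman.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Returns the value the parent reads after the child writes 42 into a
 * shared mapping created before fork(), or -1 on error. */
int shared_mapping_survives_fork(void)
{
    int *shared = mmap(NULL, sizeof(int), PROT_READ | PROT_WRITE,
                       MAP_SHARED | MAP_ANONYMOUS, -1, 0);
    if (shared == MAP_FAILED)
        return -1;
    *shared = 0;

    pid_t pid = fork();            /* the child gets its own copy of this VMA */
    if (pid < 0) {
        munmap(shared, sizeof(int));
        return -1;
    }
    if (pid == 0) {                /* child: write through its own VMA */
        *shared = 42;
        _exit(0);                  /* exit tears down (closes) the child's VMA */
    }

    int status;
    if (waitpid(pid, &status, 0) != pid) {
        munmap(shared, sizeof(int));
        return -1;
    }
    int seen = *shared;            /* same pages, so the parent sees the write */
    munmap(shared, sizeof(int));   /* the parent's VMA is closed here */
    return seen;
}
```

For a device mapping, the same sequence would produce one open call from the explicit call in mmap, one from the fork, and one close call for each VMA as it goes away.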

Although remap_pfn_range works well for many, if not most, driver mmap implementations, sometimes it is necessary to be a little more flexible. In such situations, an implementation using the nopage VMA method may be called for.

One situation in which the nopage approach is useful can be brought about by the mremap system call, which is used by applications to change the bounding addresses of a mapped region. As it happens, the kernel does not notify drivers directly when a mapped VMA is changed by mremap. If the VMA is reduced in size, the kernel can quietly flush out the unwanted pages without telling the driver. If, instead, the VMA is expanded, the driver eventually finds out by way of calls to nopage when mappings must be set up for the new pages, so there is no need to perform a separate notification. The nopage method, therefore, must be implemented if you want to support the mremap system call. Here, we show a simple implementation of nopage for the simple device.

The nopage method, remember, has the following prototype:

    struct page *(*nopage)(struct vm_area_struct *vma,
                           unsigned long address, int *type);

When a user process attempts to access a page in a VMA that is not present in memory, the associated nopage function is called. The address parameter contains the virtual address that caused the fault, rounded down to the beginning of the page. The nopage function must locate and return the struct page pointer that refers to the page the user wanted. This function must also take care to increment the usage count for the page it returns by calling the get_page macro:

    get_page(struct page *pageptr);

This step is necessary to keep the reference counts correct on the mapped pages. The kernel maintains this count for every page; when the count goes to 0, the kernel knows that the page may be placed on the free list. When a VMA is unmapped, the kernel decrements the usage count for every page in the area. If your driver does not increment the count when adding a page to the area, the usage count becomes 0 prematurely, and the integrity of the system is compromised.
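The bookkeeping behind get_page can be modeled in a few lines of ordinary C. This is a toy model, not kernel code: struct toy_page, toy_get_page, and toy_put_page are hypothetical stand-ins for struct page, get_page, and the decrement the kernel performs for each page when a VMA is unmapped.

```c
#include <stdbool.h>

/* Hypothetical stand-in for struct page: only the usage count matters here. */
struct toy_page {
    int count;      /* references held on this page */
    bool freed;     /* set once the count drops to zero */
};

/* What get_page does: take one more reference. */
void toy_get_page(struct toy_page *pg)
{
    pg->count++;
}

/* What unmapping does for each page in the VMA: drop a reference and
 * "free" the page when the last one goes away. */
void toy_put_page(struct toy_page *pg)
{
    if (--pg->count == 0)
        pg->freed = true;
}

/* Map a page the driver already owns (count 1), then unmap it.
 * Returns true if the driver's page is still alive afterward. */
bool toy_map_then_unmap(bool driver_calls_get_page)
{
    struct toy_page pg = { .count = 1, .freed = false }; /* driver's own reference */

    if (driver_calls_get_page)
        toy_get_page(&pg);   /* nopage handing the page to the fault handler */
    toy_put_page(&pg);       /* kernel tearing down the VMA */
    return !pg.freed;
}
```

Without the get_page step, the unmap drops the driver's own reference and the page is "freed" while the driver still believes it owns it.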

The nopage method should also store the type of fault in the location pointed to by the type argument, but only if that argument is not NULL. In device drivers, the proper value for type will invariably be VM_FAULT_MINOR.

If you are using nopage, there is usually very little work to be done when mmap is called; our version looks like this:

    static int simple_nopage_mmap(struct file *filp, struct vm_area_struct *vma)
    {
        unsigned long offset = vma->vm_pgoff << PAGE_SHIFT;

        if (offset >= __pa(high_memory) || (filp->f_flags & O_SYNC))
            vma->vm_flags |= VM_IO;
        vma->vm_flags |= VM_RESERVED;

        vma->vm_ops = &simple_nopage_vm_ops;
        simple_vma_open(vma);
        return 0;
    }

The main thing mmap has to do is to replace the default (NULL) vm_ops pointer with our own operations. The nopage method then takes care of "remapping" one page at a time and returning the address of its struct page structure. Because we are just implementing a window onto physical memory here, the remapping step is simple: we only need to locate and return a pointer to the struct page for the desired address. Our nopage method looks like the following:

    struct page *simple_vma_nopage(struct vm_area_struct *vma,
                                   unsigned long address, int *type)
    {
        struct page *pageptr;
        unsigned long offset = vma->vm_pgoff << PAGE_SHIFT;
        unsigned long physaddr = address - vma->vm_start + offset;
        unsigned long pageframe = physaddr >> PAGE_SHIFT;

        if (!pfn_valid(pageframe))
            return NOPAGE_SIGBUS;
        pageptr = pfn_to_page(pageframe);
        get_page(pageptr);
        if (type)
            *type = VM_FAULT_MINOR;
        return pageptr;
    }

Since, once again, we are simply mapping main memory here, the nopage function need only find the correct struct page for the faulting address and increment its reference count. Therefore, the required sequence of events is to calculate the desired physical address and turn it into a page frame number by right-shifting it PAGE_SHIFT bits. Since user space can give us any address it likes, we must ensure that we have a valid page frame; the pfn_valid function does that for us. If the address is out of range, we return NOPAGE_SIGBUS, which causes a bus signal to be delivered to the calling process. Otherwise, pfn_to_page gets the necessary struct page pointer; we can increment its reference count (with a call to get_page) and return it.
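The address arithmetic in simple_vma_nopage can be checked in user space. The sketch below is a hypothetical helper, assuming 4 KB pages; it replaces pfn_valid with a simple "below max_pfn" test, which is a simplification (the real function consults the kernel's memory map and also catches holes in it).

```c
/* Assumption: 4 KB pages; pfn_valid() is approximated by a range check. */
#define EXAMPLE_PAGE_SHIFT 12

/* Mirror of the arithmetic in simple_vma_nopage. Returns 0 and stores the
 * page frame number, or -1 where the driver would return NOPAGE_SIGBUS. */
int fault_to_pfn(unsigned long address, unsigned long vm_start,
                 unsigned long vm_pgoff, unsigned long max_pfn,
                 unsigned long *pfn)
{
    unsigned long offset = vm_pgoff << EXAMPLE_PAGE_SHIFT;  /* mmap offset in bytes */
    unsigned long physaddr = address - vm_start + offset;   /* physical address wanted */
    unsigned long pageframe = physaddr >> EXAMPLE_PAGE_SHIFT;

    if (pageframe >= max_pfn)   /* stand-in for !pfn_valid(pageframe) */
        return -1;
    *pfn = pageframe;
    return 0;
}
```

A fault 8 KB into a mapping created with an offset of 64 KB resolves to page frame 0x12 (physical address 72 KB), while a fault that lands on a frame at or above max_pfn is rejected, as the driver's NOPAGE_SIGBUS path would be.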

The nopage method normally returns a pointer to a struct page. If, for some reason, a normal page cannot be returned (e.g., the requested address is beyond the device's memory region), NOPAGE_SIGBUS can be returned to signal the error; that is what the simple code above does. nopage can also return NOPAGE_OOM to indicate failures caused by resource limitations.

Note that this implementation works for ISA memory regions but not for those on the PCI bus. PCI memory is mapped above the highest system memory, and there are no entries in the system memory map for those addresses. Because there is no struct page to return a pointer to, nopage cannot be used in these situations; you must use remap_pfn_range instead.

If the nopage method is left NULL, kernel code that handles page faults maps the zero page to the faulting virtual address. The zero page is a copy-on-write page that reads as 0 and that is used, for example, to map the BSS segment. Any process referencing the zero page sees exactly that: a page filled with zeroes. If the process writes to the page, it ends up modifying a private copy. Therefore, if a process extends a mapped region by calling mremap, and the driver hasn't implemented nopage, the process ends up with zero-filled memory instead of a segmentation fault.

All the examples we've seen so far are reimplementations of /dev/mem; they remap physical addresses into user space. The typical driver, however, wants to map only the small address range that applies to its peripheral device, not all memory. In order to map to user space only a subset of the whole memory range, the driver needs only to play with the offsets. The following does the trick for a driver mapping a region of simple_region_size bytes, beginning at physical address simple_region_start (which should be page-aligned):

    unsigned long off = vma->vm_pgoff << PAGE_SHIFT;
    unsigned long physical = simple_region_start + off;
    unsigned long vsize = vma->vm_end - vma->vm_start;
    unsigned long psize;

    if (off >= simple_region_size)
        return -EINVAL;  /* starts past the end of the region */
    psize = simple_region_size - off;
    if (vsize > psize)
        return -EINVAL;  /* spans too high */
    /* remap_pfn_range takes a page frame number, not a physical address */
    if (remap_pfn_range(vma, vma->vm_start, physical >> PAGE_SHIFT,
                        vsize, vma->vm_page_prot))
        return -EAGAIN;

In addition to calculating the offsets, this code introduces a check that reports an error when the program tries to map more memory than is available in the I/O region of the target device, or to start the mapping past its end. In this code, psize is the physical I/O size that is left after the offset has been specified, and vsize is the requested size of virtual memory; the function refuses to map addresses that extend beyond the allowed memory range.
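One subtlety in this kind of bounds test is that psize is computed with unsigned subtraction. If the only test is vsize > psize, an offset beyond the end of the region makes psize wrap around to a huge value, and the bogus request is accepted. The user-space sketch below (hypothetical helper names, plain unsigned long arithmetic) shows the failure and the guarded form.

```c
#include <errno.h>

/* Only the vsize > psize comparison: psize wraps around when
 * off > region_size, so the bad request slips through. */
int naive_region_check(unsigned long off, unsigned long vsize,
                       unsigned long region_size)
{
    unsigned long psize = region_size - off;
    return vsize > psize ? -EINVAL : 0;
}

/* Validate the offset first, so the subtraction can never wrap. */
int safe_region_check(unsigned long off, unsigned long vsize,
                      unsigned long region_size)
{
    if (off >= region_size)
        return -EINVAL;              /* mapping starts past the region */
    if (vsize > region_size - off)
        return -EINVAL;              /* mapping spans too high */
    return 0;
}
```

With an 8 KB region, a one-page request at offset 12 KB is rejected by the guarded check but accepted by the naive one.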

Note that the user process can always use mremap to extend its mapping, possibly past the end of the physical device area. If your driver fails to define a nopage method, it is never notified of this extension, and the additional area maps to the zero page. As a driver writer, you may well want to prevent this sort of behavior; mapping the zero page onto the end of your region is not an explicitly bad thing to do, but it is highly unlikely that the programmer wanted that to happen.

The simplest way to prevent extension of the mapping is to implement a simple nopage method that always causes a bus signal to be sent to the faulting process. Such a method would look like this:

    struct page *simple_nopage(struct vm_area_struct *vma,
                               unsigned long address, int *type)
    {
        return NOPAGE_SIGBUS; /* send a SIGBUS */
    }

As we have seen, the nopage method is called only when the process dereferences an address that is within a known VMA but for which there is currently no valid page table entry. If we have used remap_pfn_range to map the entire device region, the nopage method shown here is called only for references outside of that region. Thus, it can safely return NOPAGE_SIGBUS to signal an error. Of course, a more thorough implementation of nopage could check to see whether the faulting address is within the device area, and perform the remapping if that is the case. Once again, however, nopage does not work with PCI memory areas, so extension of PCI mappings is not possible.
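From user space, NOPAGE_SIGBUS shows up as an ordinary SIGBUS. The kernel's own file-mapping code raises the same signal on Linux when a process touches a page of a mapped file that lies entirely beyond end-of-file, which makes it easy to watch the effect without writing a driver. In the sketch below, that page-cache behavior stands in for a driver's nopage method (an analogy, not the same code path), and the function name is hypothetical; the signal is caught with sigsetjmp/siglongjmp so the program can report it.

```c
#define _DEFAULT_SOURCE
#include <setjmp.h>
#include <signal.h>
#include <stdio.h>
#include <string.h>
#include <sys/mman.h>
#include <unistd.h>

static sigjmp_buf bus_env;

static void on_sigbus(int sig)
{
    (void)sig;
    siglongjmp(bus_env, 1);   /* escape from the faulting access */
}

/* Map two pages of a file that is only one page long and touch the second
 * page. Returns 1 if SIGBUS was raised, 0 if not, -1 on setup failure. */
int touch_past_eof_raises_sigbus(void)
{
    long page = sysconf(_SC_PAGESIZE);
    FILE *f = tmpfile();
    if (f == NULL)
        return -1;
    if (ftruncate(fileno(f), page) != 0) {
        fclose(f);
        return -1;
    }

    void *map = mmap(NULL, 2 * page, PROT_READ, MAP_SHARED, fileno(f), 0);
    if (map == MAP_FAILED) {
        fclose(f);
        return -1;
    }
    volatile char *p = map;

    struct sigaction sa, old;
    memset(&sa, 0, sizeof sa);
    sa.sa_handler = on_sigbus;
    sigemptyset(&sa.sa_mask);
    sigaction(SIGBUS, &sa, &old);

    int raised = 0;
    if (sigsetjmp(bus_env, 1) == 0)
        (void)p[page];        /* first byte of the page beyond end-of-file */
    else
        raised = 1;

    sigaction(SIGBUS, &old, NULL);   /* restore the previous handler */
    munmap(map, 2 * page);
    fclose(f);
    return raised;
}
```

Without a handler, the process would simply be killed by the signal, which is what happens to an application that strays past the end of a device region guarded by the nopage method shown above.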

An interesting limitation of remap_pfn_range is that it gives access only to reserved pages and physical addresses above the top of physical memory. In Linux, a page of physical addresses is marked as âreservedâ in the memory map to indicate that it is not available for memory management. On the PC, for example, the range between 640 KB and 1 MB is marked as reserved, as are the pages that host the kernel code itself. Reserved pages are locked in memory and are the only ones that can be safely mapped to user space; this limitation is a basic requirement for system stability.

Therefore, remap_pfn_range wonât allow you to remap conventional addresses, which include the ones you obtain by calling get_free_page. Instead, it maps in the zero page. Everything appears to work, with the exception that the process sees private, zero-filled pages rather than the remapped RAM that it was hoping for. Nonetheless, the function does everything that most hardware drivers need it to do, because it can remap high PCI buffers and ISA memory.

The limitations of remap_pfn_range can be seen by running mapper, one of the sample programs in misc-progs in the files provided on O'Reilly's FTP site. mapper is a simple tool that can be used to quickly test the mmap system call; it maps read-only parts of a file specified by command-line options and dumps the mapped region to standard output. The following session, for instance, shows that /dev/mem doesn't map the physical page located at address 64 KB; instead, we see a page full of zeros (the host computer in this example is a PC, but the result would be the same on other platforms):

    morgana.root# ./mapper /dev/mem 0x10000 0x1000 | od -Ax -t x1
    mapped "/dev/mem" from 65536 to 69632
    000000 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00
    *
    001000
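The source of mapper is not reproduced here, but its core can be sketched in a few lines of user-space C. The functions below are a hypothetical reimplementation, not the O'Reilly program: map_and_copy maps part of a file read-only and copies it out, rounding the requested offset down to a page boundary because mmap accepts only page-aligned offsets (the rounded offset, shifted right by PAGE_SHIFT, is what a driver later sees in vm_pgoff), and hex_dump prints bytes in a layout loosely similar to od.

```c
#define _DEFAULT_SOURCE
#include <stdio.h>
#include <string.h>
#include <sys/mman.h>
#include <sys/types.h>
#include <unistd.h>

/* Copy `len` bytes starting at byte `offset` of the file behind `fd` into
 * `out` through a read-only shared mapping. Returns 0 on success, -1 on error. */
int map_and_copy(int fd, off_t offset, size_t len, unsigned char *out)
{
    long page = sysconf(_SC_PAGESIZE);
    off_t aligned = offset - offset % page;   /* mmap needs a page-aligned offset */
    size_t delta = (size_t)(offset - aligned);

    void *map = mmap(NULL, len + delta, PROT_READ, MAP_SHARED, fd, aligned);
    if (map == MAP_FAILED)
        return -1;
    memcpy(out, (unsigned char *)map + delta, len);
    munmap(map, len + delta);
    return 0;
}

/* Print bytes 16 per line, each line prefixed by its hex offset. */
void hex_dump(FILE *stream, const unsigned char *buf, size_t len,
              unsigned long base)
{
    for (size_t i = 0; i < len; i++) {
        if (i % 16 == 0)
            fprintf(stream, "%s%06lx", i ? "\n" : "", base + (unsigned long)i);
        fprintf(stream, " %02x", buf[i]);
    }
    fprintf(stream, "\n");
}
```

Calling map_and_copy on a descriptor for /dev/mem with offset 0x10000 and length 0x1000, then hex_dump on the result, is roughly what the session above does (reading /dev/mem requires root privileges).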

The inability of remap_pfn_range to deal with RAM suggests that memory-based devices like scull can't easily implement mmap, because its device memory is conventional RAM, not I/O memory. Fortunately, a relatively easy workaround is available to any driver that needs to map RAM into user space; it uses the nopage method that we have seen earlier.

The way to map real RAM to user space is to use vm_ops->nopage to deal with page faults one at a time. A sample implementation is part of the scullp module, introduced in Chapter 8.

scullp is a page-oriented char device. Because it is page oriented, it can implement mmap on its memory. The code implementing memory mapping uses some of the concepts introduced in Section 15.1.

Before examining the code, let's look at the design choices that affect the mmap implementation in scullp:

scullp doesn't release device memory as long as the device is mapped. This is a matter of policy rather than a requirement, and it is different from the behavior of scull and similar devices, which are truncated to a length of 0 when opened for writing. Refusing to free a mapped scullp device allows a process to overwrite regions actively mapped by another process, so you can test and see how processes and device memory interact. To avoid releasing a mapped device, the driver must keep a count of active mappings; the vmas field in the device structure is used for this purpose.

Memory mapping is performed only when the scullp order parameter (set at module load time) is 0. The parameter controls how __get_free_pages is invoked (see Section 8.3). The zero-order limitation (which forces pages to be allocated one at a time, rather than in larger groups) is dictated by the internals of __get_free_pages, the allocation function used by scullp. To maximize allocation performance, the Linux kernel maintains a list of free pages for each allocation order, and only the reference count of the first page in a cluster is incremented by get_free_pages and decremented by free_pages. The mmap method is disabled for a scullp device if the allocation order is greater than zero, because nopage deals with single pages rather than clusters of pages. scullp simply does not know how to properly manage reference counts for pages that are part of higher-order allocations. (Return to Section 8.3.1 if you need a refresher on scullp and the memory allocation order value.)

The zero-order limitation is mostly intended to keep the code simple. It is possible to correctly implement mmap for multipage allocations by playing with the usage count of the pages, but it would only add to the complexity of the example without introducing any interesting information.

Code that is intended to map RAM according to the rules just outlined needs to implement the open, close, and nopage VMA methods; it also needs to access the memory map to adjust the page usage counts.

This implementation of scullp_mmap is very short, because it relies on the nopage function to do all the interesting work:

    int scullp_mmap(struct file *filp, struct vm_area_struct *vma)
    {
        struct inode *inode = filp->f_dentry->d_inode;

        /* refuse to map if order is not 0 */
        if (scullp_devices[iminor(inode)].order)
            return -ENODEV;

        /* don't do anything here: "nopage" will fill the holes */
        vma->vm_ops = &scullp_vm_ops;
        vma->vm_flags |= VM_RESERVED;
        vma->vm_private_data = filp->private_data;
        scullp_vma_open(vma);
        return 0;
    }

The purpose of the if statement is to avoid mapping devices whose allocation order is not 0. scullp's operations are stored in the vm_ops field, and a pointer to the device structure is stashed in the vm_private_data field. At the end, vm_ops->open is called to update the count of active mappings for the device.

open and close simply keep track of the mapping count and are defined as follows:

    void scullp_vma_open(struct vm_area_struct *vma)
    {
        struct scullp_dev *dev = vma->vm_private_data;

        dev->vmas++;
    }

    void scullp_vma_close(struct vm_area_struct *vma)
    {
        struct scullp_dev *dev = vma->vm_private_data;

        dev->vmas--;
    }

Most of the work is then performed by nopage. In the scullp implementation, the address parameter to nopage is used to calculate an offset into the device; the offset is then used to look up the correct page in the scullp memory tree:

    struct page *scullp_vma_nopage(struct vm_area_struct *vma,
                                   unsigned long address, int *type)
    {
        unsigned long offset;
        struct scullp_dev *ptr, *dev = vma->vm_private_data;
        struct page *page = NOPAGE_SIGBUS;
        void *pageptr = NULL; /* default to "missing" */

        down(&dev->sem);
        offset = (address - vma->vm_start) + (vma->vm_pgoff << PAGE_SHIFT);
        if (offset >= dev->size) goto out; /* out of range */

        /*
         * Now retrieve the scullp device from the list, then the page.
         * If the device has holes, the process receives a SIGBUS when
         * accessing the hole.
         */
        offset >>= PAGE_SHIFT; /* offset is a number of pages */
        for (ptr = dev; ptr && offset >= dev->qset;) {
            ptr = ptr->next;
            offset -= dev->qset;
        }
        if (ptr && ptr->data) pageptr = ptr->data[offset];
        if (!pageptr) goto out; /* hole or end-of-file */
        page = virt_to_page(pageptr);

        /* got it, now increment the count */
        get_page(page);
        if (type)
            *type = VM_FAULT_MINOR;
      out:
        up(&dev->sem);
        return page;
    }

scullp uses memory obtained with get_free_pages. That memory is addressed using logical addresses, so all scullp_vma_nopage has to do to get a struct page pointer is to call virt_to_page.
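The page lookup in scullp_vma_nopage is a plain linked-list walk, which can be tried out in user space. In this sketch, struct toy_qset and toy_lookup_page are hypothetical simplifications of the scullp device list: each node holds qset page pointers, a NULL entry stands for a hole, and the page shift is passed in rather than taken from the kernel.

```c
#include <stddef.h>

/* Hypothetical stand-in for one node of the scullp device list. */
struct toy_qset {
    void **data;             /* qset page pointers; NULL marks a hole */
    struct toy_qset *next;   /* next node, or NULL at the end */
};

/* Return the page holding byte `offset`, or NULL for a hole or an
 * out-of-range offset (the cases in which the driver sends SIGBUS). */
void *toy_lookup_page(struct toy_qset *dev, unsigned long qset,
                      unsigned long offset, unsigned long size,
                      unsigned int page_shift)
{
    struct toy_qset *ptr = dev;

    if (offset >= size)
        return NULL;                 /* past the end of the device */
    offset >>= page_shift;           /* now a page index */
    while (ptr && offset >= qset) {  /* skip whole nodes */
        ptr = ptr->next;
        offset -= qset;
    }
    if (ptr && ptr->data)
        return ptr->data[offset];
    return NULL;
}
```

With two nodes of two pages each, page index 3 lands in the second slot of the second node, so a NULL entry there behaves exactly like a hole in the device.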

The scullp device now works as expected, as you can see in this sample output from the mapper utility. Here, we send a directory listing of /dev (which is long) to the scullp device and then use the mapper utility to look at pieces of that listing with mmap:

    morgana% ls -l /dev > /dev/scullp
    morgana% ./mapper /dev/scullp 0 140
    mapped "/dev/scullp" from 0 (0x00000000) to 140 (0x0000008c)
    total 232
    crw------- 1 root root 10, 10 Sep 15 07:40 adbmouse
    crw-r--r-- 1 root root 10, 175 Sep 15 07:40 agpgart
    morgana% ./mapper /dev/scullp 8192 200
    mapped "/dev/scullp" from 8192 (0x00002000) to 8392 (0x000020c8)
    d0h1494
    brw-rw---- 1 root floppy 2, 92 Sep 15 07:40 fd0h1660
    brw-rw---- 1 root floppy 2, 20 Sep 15 07:40 fd0h360
    brw-rw---- 1 root floppy 2, 12 Sep 15 07:40 fd0H360

Although it's rarely necessary, it's interesting to see how a driver can map a kernel virtual address to user space using mmap. A true kernel virtual address, remember, is an address returned by a function such as vmalloc (that is, a virtual address mapped in the kernel page tables). The code in this section is taken from scullv, which is the module that works like scullp but allocates its storage through vmalloc.

Most of the scullv implementation is like the one we've just seen for scullp, except that there is no need to check the order parameter that controls memory allocation. The reason for this is that vmalloc allocates its pages one at a time, because single-page allocations are far more likely to succeed than multipage allocations. Therefore, the allocation order problem doesn't apply to vmalloced space.

Beyond that, there is only one difference between the nopage implementations used by scullp and scullv. Remember that scullp, once it found the page of interest, would obtain the corresponding struct page pointer with virt_to_page. That function does not work with kernel virtual addresses, however. Instead, you must use vmalloc_to_page. So the final part of the scullv version of nopage looks like:

    /*
     * After scullv lookup, "pageptr" is now the address of the page
     * needed by the current process. Since it's a vmalloc address,
     * turn it into a struct page.
     */
    page = vmalloc_to_page(pageptr);

    /* got it, now increment the count */
    get_page(page);
    if (type)
        *type = VM_FAULT_MINOR;
  out:
    up(&dev->sem);
    return page;

Based on this discussion, you might also want to map addresses returned by ioremap to user space. That would be a mistake, however; addresses from ioremap are special and cannot be treated like normal kernel virtual addresses. Instead, you should use remap_pfn_range to remap I/O memory areas into user space.

Most I/O operations are buffered through the kernel. The use of a kernel-space buffer allows a degree of separation between user space and the actual device; this separation can make programming easier and can also yield performance benefits in many situations. There are cases, however, where it can be beneficial to perform I/O directly to or from a user-space buffer. If the amount of data being transferred is large, transferring data directly without an extra copy through kernel space can speed things up.

One example of direct I/O use in the 2.6 kernel is the SCSI tape driver. Streaming tapes can pass a lot of data through the system, and tape transfers are usually record-oriented, so there is little benefit to buffering data in the kernel. So, when the conditions are right (the user-space buffer is page-aligned, for example), the SCSI tape driver performs its I/O without copying the data.

That said, it is important to recognize that direct I/O does not always provide the performance boost that one might expect. The overhead of setting up direct I/O (which involves faulting in and pinning down the relevant user pages) can be significant, and the benefits of buffered I/O are lost. For example, the use of direct I/O requires that the write system call operate synchronously; otherwise the application does not know when it can reuse its I/O buffer. Stopping the application until each write completes can slow things down, which is why applications that use direct I/O often use asynchronous I/O operations as well.

The real moral of the story, in any case, is that implementing direct I/O in a char driver is usually unnecessary and can be hurtful. You should take that step only if you are sure that the overhead of buffered I/O is truly slowing things down. Note also that block and network drivers need not worry about implementing direct I/O at all; in both cases, higher-level code in the kernel sets up and makes use of direct I/O when it is indicated, and driver-level code need not even know that direct I/O is being performed.

The key to implementing direct I/O in the 2.6 kernel is a function called get_user_pages, which is declared in <linux/mm.h> with the following prototype:

    int get_user_pages(struct task_struct *tsk,
                       struct mm_struct *mm,
                       unsigned long start,
                       int len,
                       int write,
                       int force,
                       struct page **pages,
                       struct vm_area_struct **vmas);

This function has several arguments:

tsk
A pointer to the task performing the I/O; its main purpose is to tell the kernel who should be charged for any page faults incurred while setting up the buffer. This argument is almost always passed as current.

mm
A pointer to the memory management structure describing the address space to be mapped. The mm_struct structure is the piece that ties together all of the parts (VMAs) of a process's virtual address space. For driver use, this argument should always be current->mm.

start, len
start is the (page-aligned) address of the user-space buffer, and len is the length of the buffer in pages.

write, force
If write is nonzero, the pages are mapped for write access (implying, of course, that user space is performing a read operation). The force flag tells get_user_pages to override the protections on the given pages to provide the requested access; drivers should always pass 0 here.

pages, vmas
Output parameters. Upon successful completion, pages contains a list of pointers to the struct page structures describing the user-space buffer, and vmas contains pointers to the associated VMAs. The parameters should, obviously, point to arrays capable of holding at least len pointers. Either parameter can be NULL, but you need, at least, the struct page pointers to actually operate on the buffer.
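Because start must be page-aligned and len is counted in pages, a driver handed an arbitrary user buffer (for example, the buf and count arguments of a read call) has to round the address down and count every page the buffer touches. A user-space sketch of that arithmetic follows; it assumes 4 KB pages, and the gup_* helper names are hypothetical.

```c
#include <stddef.h>
#include <stdint.h>

/* Assumption: 4 KB pages. */
#define EXAMPLE_PAGE_SHIFT 12
#define EXAMPLE_PAGE_SIZE ((uintptr_t)1 << EXAMPLE_PAGE_SHIFT)

/* Page-aligned address to pass as `start`. */
uintptr_t gup_start(uintptr_t addr)
{
    return addr & ~(EXAMPLE_PAGE_SIZE - 1);
}

/* Offset of the buffer's first byte within its first page. */
size_t gup_first_offset(uintptr_t addr)
{
    return (size_t)(addr & (EXAMPLE_PAGE_SIZE - 1));
}

/* Number of pages (the `len` argument) touched by [addr, addr + count);
 * count must be greater than zero. */
unsigned long gup_npages(uintptr_t addr, size_t count)
{
    uintptr_t first = addr >> EXAMPLE_PAGE_SHIFT;
    uintptr_t last = (addr + count - 1) >> EXAMPLE_PAGE_SHIFT;
    return (unsigned long)(last - first + 1);
}
```

Note that a 32-byte buffer straddling a page boundary needs two pages, and an 8 KB buffer that starts one byte into a page needs three; the first-page offset is needed later to find the data inside the first mapped page.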

get_user_pages is a low-level memory management function, with a suitably complex interface. It also requires that the mmap reader/writer semaphore for the address space be obtained in read mode before the call. As a result, calls to get_user_pages usually look something like:

    down_read(&current->mm->mmap_sem);
    result = get_user_pages(current, current->mm, ...);
    up_read(&current->mm->mmap_sem);

The return value is the number of pages actually mapped, which could be fewer than the number requested (but greater than zero); if no pages at all could be mapped, a negative error code is returned instead.

Upon successful completion, the caller has a pages array pointing to the user-space buffer, which is locked into memory. To operate on the buffer directly, the kernel-space code must turn each struct page pointer into a kernel virtual address with kmap or kmap_atomic. Usually, however, devices for which direct I/O is justified are using DMA operations, so your driver will probably want to create a scatter/gather list from the array of struct page pointers. We discuss how to do this in Section 15.4.4.7.

Once your direct I/O operation is complete, you must release the user pages. Before doing so, however, you must inform the kernel if you changed the contents of those pages. Otherwise, the kernel may think that the pages are "clean," meaning that they match a copy found on the swap device, and free them without writing them out to backing store. So, if you have changed the pages (in response to a user-space read request), you must mark each affected page dirty with a call to:

    void SetPageDirty(struct page *page);

(This macro is defined in <linux/page-flags.h>.) Most code that performs this operation checks first to ensure that the page is not in the reserved part of the memory map, which is never swapped out. Therefore, the code usually looks like:

    if (!PageReserved(page))
        SetPageDirty(page);

Since user-space memory is not normally marked reserved, this check should not strictly be necessary, but when you are getting your hands dirty deep within the memory management subsystem, it is best to be thorough and careful.

Regardless of whether the pages have been changed, they must be freed from the page cache, or they stay there forever. The call to use is:

    void page_cache_release(struct page *page);

This call should, of course, be made after the page has been marked dirty, if need be.

One of the new features added to the 2.6 kernel was the asynchronous I/O capability. Asynchronous I/O allows user space to initiate operations without waiting for their completion; thus, an application can do other processing while its I/O is in flight. A complex, high-performance application can also use asynchronous I/O to have multiple operations going at the same time.

The implementation of asynchronous I/O is optional, and very few driver authors bother; most devices do not benefit from this capability. As we will see in the coming chapters, block and network drivers are fully asynchronous at all times, so only char drivers are candidates for explicit asynchronous I/O support. A char device can benefit from this support if there are good reasons for having more than one I/O operation outstanding at any given time. One good example is streaming tape drives, where the drive can stall and slow down significantly if I/O operations do not arrive quickly enough. An application trying to get the best performance out of a streaming drive could use asynchronous I/O to have multiple operations ready to go at any given time.

For the rare driver author who needs to implement asynchronous I/O, we present a quick overview of how it works. We cover asynchronous I/O in this chapter, because its implementation almost always involves direct I/O operations as well (if you are buffering data in the kernel, you can usually implement asynchronous behavior without imposing the added complexity on user space).

Drivers supporting asynchronous I/O should include <linux/aio.h>. There are three file_operations methods for the implementation of asynchronous I/O:

    ssize_t (*aio_read) (struct kiocb *iocb, char *buffer,
                         size_t count, loff_t offset);
    ssize_t (*aio_write) (struct kiocb *iocb, const char *buffer,
                          size_t count, loff_t offset);
    int (*aio_fsync) (struct kiocb *iocb, int datasync);

The aio_fsync operation is only of interest to filesystem code, so we do not discuss it further here.

The other two, aio_read and aio_write, look very much like the regular read and write methods but with a couple of exceptions. One is that the offset parameter is passed by value; asynchronous operations never change the file position, so there is no reason to pass a pointer to it. These methods also take the iocb ("I/O control block") parameter, which we get to in a moment.

The purpose of the aio_read and aio_write methods is to initiate a read or write operation that may or may not be complete by the time they return. If it is possible to complete the operation immediately, the method should do so and return the usual status: the number of bytes transferred or a negative error code. Thus, if your driver has a read method called my_read, the following aio_read method is entirely correct (though rather pointless):

    static ssize_t my_aio_read(struct kiocb *iocb, char *buffer,
                               size_t count, loff_t offset)
    {
        return my_read(iocb->ki_filp, buffer, count, &offset);
    }

Note that the struct file pointer is found in the ki_filp field of the kiocb structure.

If you support asynchronous I/O, you must be aware of the fact that the kernel can, on occasion, create "synchronous IOCBs." These are, essentially, asynchronous operations that must actually be executed synchronously. One may well wonder why things are done this way, but it's best to just do what the kernel asks. Synchronous operations are marked in the IOCB; your driver should query that status with:

    int is_sync_kiocb(struct kiocb *iocb);

If this function returns a nonzero value, your driver must execute the operation synchronously.

In the end, however, the point of all this structure is to enable asynchronous operations. If your driver is able to initiate the operation (or, simply, to queue it until some future time when it can be executed), it must do two things: remember everything it needs to know about the operation, and return -EIOCBQUEUED to the caller. Remembering the operation information includes arranging access to the user-space buffer; once you return, you will not again have the opportunity to access that buffer while running in the context of the calling process. In general, that means you will likely have to set up a direct kernel mapping (with get_user_pages) or a DMA mapping. The -EIOCBQUEUED error code indicates that the operation is not yet complete, and its final status will be posted later.

When "later" comes, your driver must inform the kernel that the operation has completed. That is done with a call to aio_complete:

    int aio_complete(struct kiocb *iocb, long res, long res2);

Here, iocb is the same IOCB that was initially passed to you, and res is the usual result status for the operation. res2 is a second result code that will be returned to user space; most asynchronous I/O implementations pass res2 as 0. Once you call aio_complete, you should not touch the IOCB or user buffer again.
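The contract between the kernel and an asynchronous method can be modeled without any kernel code. In the toy model below, struct toy_iocb, TOY_EIOCBQUEUED, toy_submit, and toy_complete are hypothetical names, and the queued-status value is a placeholder rather than the kernel's constant. A synchronous IOCB gets its status immediately, while an asynchronous one is parked and reports the "queued" code until a later completion call posts the result, in the way aio_complete does.

```c
#include <stdbool.h>

#define TOY_EIOCBQUEUED 1000   /* placeholder value, not the kernel's */

struct toy_iocb {
    bool is_sync;    /* like is_sync_kiocb(): caller needs the result now */
    bool completed;  /* set by toy_complete(), like aio_complete() */
    long res;        /* final status posted at completion */
};

/* Start an operation whose eventual status is `result`. Synchronous
 * IOCBs get the status right away; others are remembered in *pending. */
long toy_submit(struct toy_iocb *iocb, long result, long *pending)
{
    if (iocb->is_sync)
        return result;
    *pending = result;             /* remember what is needed for later */
    return -TOY_EIOCBQUEUED;
}

/* Later (from a workqueue, say): post the final status. After this,
 * the driver must not touch the IOCB again. */
void toy_complete(struct toy_iocb *iocb, long res)
{
    iocb->res = res;
    iocb->completed = true;
}
```

The point of the model is the two-phase shape: the submit call either finishes the job or hands back "queued," and exactly one completion call follows for each queued operation.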

The page-oriented scullp driver in the example source implements asynchronous I/O. The implementation is simple, but it is enough to show how asynchronous operations should be structured.

The aio_read and aio_write methods don't actually do much:

static ssize_t scullp_aio_read(struct kiocb *iocb, char *buf, size_t count,
        loff_t pos)
{
    return scullp_defer_op(0, iocb, buf, count, pos);
}

static ssize_t scullp_aio_write(struct kiocb *iocb, const char *buf,
        size_t count, loff_t pos)
{
    return scullp_defer_op(1, iocb, (char *) buf, count, pos);
}

These methods simply call a common function:

struct async_work {
    struct kiocb *iocb;
    int result;
    struct work_struct work;
};

/* Defined below; declared here so INIT_WORK can reference it. */
static void scullp_do_deferred_op(void *p);

static int scullp_defer_op(int write, struct kiocb *iocb, char *buf,
        size_t count, loff_t pos)
{
    struct async_work *stuff;
    int result;

    /* Copy now while we can access the buffer */
    if (write)
        result = scullp_write(iocb->ki_filp, buf, count, &pos);
    else
        result = scullp_read(iocb->ki_filp, buf, count, &pos);

    /* If this is a synchronous IOCB, we return our status now. */
    if (is_sync_kiocb(iocb))
        return result;

    /* Otherwise defer the completion for a few milliseconds. */
    stuff = kmalloc(sizeof(*stuff), GFP_KERNEL);
    if (stuff == NULL)
        return result; /* No memory, just complete now */
    stuff->iocb = iocb;
    stuff->result = result;
    INIT_WORK(&stuff->work, scullp_do_deferred_op, stuff);
    schedule_delayed_work(&stuff->work, HZ/100);
    return -EIOCBQUEUED;
}

A more complete implementation would use get_user_pages to map the user buffer into kernel space. We chose to keep life simple by just copying over the data at the outset. Then a call is made to is_sync_kiocb to see if this operation must be completed synchronously; if so, the result status is returned, and we are done. Otherwise we remember the relevant information in a little structure, arrange for "completion" via a workqueue, and return -EIOCBQUEUED. At this point, control returns to user space.

Later on, the workqueue executes our completion function:

static void scullp_do_deferred_op(void *p)
{
    struct async_work *stuff = (struct async_work *) p;

    aio_complete(stuff->iocb, stuff->result, 0);
    kfree(stuff);
}

Here, it is simply a matter of calling aio_complete with our saved information. A real driver's asynchronous I/O implementation is somewhat more complicated, of course, but it follows this sort of structure.

Direct memory access, or DMA, is the advanced topic that completes our overview of memory issues. DMA is the hardware mechanism that allows peripheral components to transfer their I/O data directly to and from main memory without the need to involve the system processor. Use of this mechanism can greatly increase throughput to and from a device, because a great deal of computational overhead is eliminated.

Before introducing the programming details, let's review how a DMA transfer takes place, considering only input transfers to simplify the discussion.

Data transfer can be triggered in two ways: either the software asks for data (via a function such as read) or the hardware asynchronously pushes data to the system.

In the first case, the steps involved can be summarized as follows:

When a process calls read, the driver method allocates a DMA buffer and instructs the hardware to transfer its data into that buffer. The process is put to sleep.

The hardware writes data to the DMA buffer and raises an interrupt when it's done.

The interrupt handler gets the input data, acknowledges the interrupt, and awakens the process, which is now able to read data.

The second case comes about when DMA is used asynchronously. This happens, for example, with data acquisition devices that go on pushing data even if nobody is reading them. In this case, the driver should maintain a buffer so that a subsequent read call will return all the accumulated data to user space. The steps involved in this kind of transfer are slightly different:

The hardware raises an interrupt to announce that new data has arrived.

The interrupt handler allocates a buffer and tells the hardware where to transfer its data.

The peripheral device writes the data to the buffer and raises another interrupt when it's done.

The handler dispatches the new data, wakes any relevant process, and takes care of housekeeping.

A variant of the asynchronous approach is often seen with network cards. These cards typically expect to see a circular buffer (often called a DMA ring buffer) established in memory shared with the processor; each incoming packet is placed in the next available buffer in the ring, and an interrupt is signaled. The driver then passes the network packets to the rest of the kernel and places a new DMA buffer in the ring.
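The ring-buffer arrangement can be illustrated with a small user-space model. This is a sketch under stated assumptions, not a real NIC interface: the descriptor layout, the owned_by_device flag, RING_SIZE, BUF_LEN, and the functions ring_init, device_receive, and driver_poll_ring are all hypothetical. To keep it short, the model recycles the same buffer in each slot, whereas the text describes the driver placing a new buffer in the ring after handing the old one to the kernel.

```c
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

#define RING_SIZE 4      /* hypothetical ring length */
#define BUF_LEN   64     /* hypothetical per-slot buffer size */

/* One descriptor: who owns the slot, and how many bytes were written. */
struct rx_desc {
    bool   owned_by_device;  /* true: slot is available for the device */
    size_t len;
    unsigned char buf[BUF_LEN];
};

struct rx_ring {
    struct rx_desc desc[RING_SIZE];
    unsigned dev_next;   /* next slot the device will fill */
    unsigned drv_next;   /* next slot the driver will consume */
};

static void ring_init(struct rx_ring *r)
{
    memset(r, 0, sizeof(*r));
    for (unsigned i = 0; i < RING_SIZE; i++)
        r->desc[i].owned_by_device = true;   /* hand every slot to the device */
}

/* Device side: place a packet in the next available slot. Returns false
 * (packet dropped) if the driver has not yet returned that slot. */
static bool device_receive(struct rx_ring *r, const void *pkt, size_t len)
{
    struct rx_desc *d = &r->desc[r->dev_next];

    if (!d->owned_by_device || len > BUF_LEN)
        return false;
    memcpy(d->buf, pkt, len);
    d->len = len;
    d->owned_by_device = false;          /* slot now belongs to the driver */
    r->dev_next = (r->dev_next + 1) % RING_SIZE;
    return true;                         /* a real device would interrupt here */
}

/* Driver side, as run from the interrupt handler: pass up every filled
 * slot and give it back to the device. Returns packets consumed. */
static int driver_poll_ring(struct rx_ring *r, size_t *total_bytes)
{
    int n = 0;

    while (!r->desc[r->drv_next].owned_by_device) {
        struct rx_desc *d = &r->desc[r->drv_next];

        *total_bytes += d->len;          /* stand-in for passing the packet up */
        d->owned_by_device = true;       /* slot is available again */
        r->drv_next = (r->drv_next + 1) % RING_SIZE;
        n++;
    }
    return n;
}
```

The ownership flag is what lets both sides walk the ring independently: the device never overwrites a slot the driver has not yet drained, and a full ring shows up as dropped packets rather than corrupted ones.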

The processing steps in all of these cases emphasize that efficient DMA handling relies on interrupt reporting. While it is possible to implement DMA with a polling driver, it wouldn't make sense, because a polling driver would waste the performance benefits that DMA offers over the easier processor-driven I/O.[4]

Another relevant item introduced here is the DMA buffer. DMA requires device drivers to allocate one or more special buffers suited to DMA. Note that many drivers allocate their buffers at initialization time and use them until shutdown; the word allocate in the previous lists, therefore, means "get hold of a previously allocated buffer."

This section covers the allocation of DMA buffers at a low level; we introduce a higher-level interface shortly, but it is still a good idea to understand the material presented here.

The main issue that arises with DMA buffers is that, when they are bigger than one page, they must occupy contiguous pages in physical memory because the device transfers data using the ISA or PCI system bus, both of which carry physical addresses. It's interesting to note that this constraint doesn't apply to the SBus (see Section 12.5), which uses virtual addresses on the peripheral bus. Some architectures can also use virtual addresses on the PCI bus, but a portable driver cannot count on that capability.

Although DMA buffers can be allocated either at system boot or at runtime, modules can allocate their buffers only at runtime. Driver writers must take care to allocate the right kind of memory when it is used for DMA operations; not all memory zones are suitable. In particular, high memory may not work for DMA on some systems and with some devices: the peripherals simply cannot work with addresses that high.

Most devices on modern buses can handle 32-bit addresses, meaning that normal memory allocations work just fine for them. Some PCI devices, however, fail to implement the full PCI standard and cannot work with 32-bit addresses. And ISA devices, of course, are limited to 24-bit addresses only.

For devices with this kind of limitation, memory should be allocated from the DMA zone by adding the GFP_DMA flag to the kmalloc or get_free_pages call. When this flag is present, only memory that can be addressed with 24 bits is allocated. Alternatively, you can use the generic DMA layer (which we discuss shortly) to allocate buffers that work around your device's limitations.
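Whether a buffer is usable by such a device comes down to a mask comparison: every byte of the buffer must lie at or below the highest address the device can drive. The user-space sketch below shows that arithmetic; addr_mask and dma_reachable are hypothetical helpers (addr_mask is similar in spirit to the DMA_BIT_MASK macro found in later kernels), and the physical addresses in the usage are made-up values.

```c
#include <stdbool.h>
#include <stdint.h>

/* Mask covering the low `bits` address bits (valid for 1..64). */
static uint64_t addr_mask(unsigned bits)
{
    return bits >= 64 ? UINT64_MAX : ((UINT64_C(1) << bits) - 1);
}

/* True if every byte of [phys, phys + len) can be reached by a device
 * that drives only the low `bits` address lines. */
static bool dma_reachable(uint64_t phys, uint64_t len, unsigned bits)
{
    uint64_t mask = addr_mask(bits);

    if (len == 0)
        return phys <= mask;
    if (phys + (len - 1) < phys)   /* range wraps around: never reachable */
        return false;
    return phys + (len - 1) <= mask;
}
```

With a 24-bit ISA-style limit, a 1 MB buffer starting at 0x00F00000 ends exactly at 0xFFFFFF and is reachable, while one byte more crosses the 16 MB line and is not; the GFP_DMA zone exists precisely so that allocations never land past that line.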

We have seen how get_free_pages can allocate up to a few megabytes (as order can range up to MAX_ORDER, currently 11), but high-order requests are prone to fail even when the requested buffer is far less than 128 KB, because system memory becomes fragmented over time.[5]
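The relationship between a request size and its allocation order is simple arithmetic: the order is the smallest n such that PAGE_SIZE << n covers the request. The sketch below assumes 4 KB pages and, as in kernels of that era, that valid orders run from 0 to MAX_ORDER - 1, which puts the largest single block at 4 MB. MODEL_PAGE_SIZE, MODEL_MAX_ORDER, and size_to_order are hypothetical names for this user-space model.

```c
#include <stddef.h>

#define MODEL_PAGE_SIZE 4096UL  /* assumption: 4 KB pages, as on x86 */
#define MODEL_MAX_ORDER 11      /* value quoted in the text; orders are 0..MAX_ORDER-1 */

/* Smallest order such that (PAGE_SIZE << order) >= size, or -1 if the
 * request is larger than the biggest block the allocator manages. */
static int size_to_order(size_t size)
{
    int order = 0;

    while ((MODEL_PAGE_SIZE << order) < size) {
        if (++order >= MODEL_MAX_ORDER)
            return -1;
    }
    return order;
}
```

Under these assumptions a 128 KB buffer is an order-5 request (32 physically contiguous pages), which is already large enough to fail on a fragmented system even though it is far below the 4 MB ceiling.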

When the kernel cannot return the requested amount of memory or when you need more than 128 KB (a common requirement for PCI frame grabbers, for example), an alternative to returning -ENOMEM is to allocate memory at boot time or reserve the top of physical RAM for your buffer. We described allocation at boot time in Section 8.6, but it is not available to modules. Reserving the top of RAM is accomplished by passing a mem= argument to the kernel at boot time. For example, if you have 256 MB, the argument mem=255M keeps the kernel from using the top megabyte. Your module could later use the following code to gain access to such memory:

dmabuf = ioremap (0xFF00000 /* 255M */, 0x100000 /* 1M */);
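The two constants passed to ioremap follow directly from the mem= value: the reserved region starts where the kernel's memory ends and extends to the end of installed RAM. The helper below works through that arithmetic under a simplifying assumption (RAM is one contiguous range starting at physical address 0; real memory maps can have holes). struct reserved_region and reserved_tail are hypothetical names used only for this illustration.

```c
#include <stdint.h>

#define MB (UINT64_C(1) << 20)

/* Start and size of the RAM the kernel was told not to use. */
struct reserved_region {
    uint64_t start;
    uint64_t size;
};

/* installed: total RAM; mem_arg: value given as mem= at boot. */
static struct reserved_region reserved_tail(uint64_t installed, uint64_t mem_arg)
{
    struct reserved_region r = { 0, 0 };

    if (mem_arg < installed) {
        r.start = mem_arg;              /* kernel uses [0, mem_arg) */
        r.size  = installed - mem_arg;  /* the rest is left for the driver */
    }
    return r;
}
```

For 256 MB of RAM and mem=255M this yields a start of 255 x 0x100000 = 0xFF00000 and a size of 0x100000, matching the ioremap call above.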

The allocator, part of the sample code accompanying the book, offers a simple API to probe and manage such reserved RAM and has been used successfully on several architectures. However, this trick doesn't work when you have a high-memory system (i.e., one with more physical memory than could fit in the CPU address space).

Another option, of course, is to allocate your buffer with the __GFP_NOFAIL allocation flag. This approach does, however, severely stress the memory management subsystem, and it runs the risk of locking up the system altogether; it is best avoided unless there is truly no other way.

If you are going to such lengths to allocate a large DMA buffer, however, it is worth putting some thought into alternatives. If your device can do scatter/gather I/O, you can allocate your buffer in smaller pieces and let the device do the rest. Scatter/gather I/O can also be used when performing direct I/O into user space, which may well be the best solution when a truly huge buffer is required.
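To see why scatter/gather relaxes the contiguity requirement, consider a buffer assembled from individually allocated pages. The device is given a list of (address, length) segments instead of one large range, and pages that happen to be physically adjacent can be merged into a single segment. The sketch below models that list-building step in user space; struct sg_seg, build_sg_list, SG_PAGE_SIZE, and the page addresses in the usage are hypothetical, and real hardware defines its own descriptor format.

```c
#include <stddef.h>
#include <stdint.h>

#define SG_PAGE_SIZE 4096u   /* assumption: 4 KB pages */

/* One scatter/gather entry (hypothetical layout). */
struct sg_seg {
    uint64_t addr;  /* bus/physical address of the segment */
    uint32_t len;   /* length in bytes */
};

/* Build a segment list from the physical addresses of separately
 * allocated pages, in buffer order, merging pages that are adjacent.
 * Returns the number of segments, or 0 if more than max_segs are needed. */
static size_t build_sg_list(const uint64_t *pages, size_t npages,
                            struct sg_seg *segs, size_t max_segs)
{
    size_t n = 0;

    for (size_t i = 0; i < npages; i++) {
        if (n > 0 && segs[n - 1].addr + segs[n - 1].len == pages[i]) {
            segs[n - 1].len += SG_PAGE_SIZE;   /* extend previous segment */
            continue;
        }
        if (n == max_segs)
            return 0;
        segs[n].addr = pages[i];
        segs[n].len = SG_PAGE_SIZE;
        n++;
    }
    return n;
}
```

For pages at 0x10000, 0x11000, 0x40000, and 0x12000 (in that buffer order), this produces three segments: 8 KB at 0x10000, then 4 KB at 0x40000, then 4 KB at 0x12000. The last page is not merged with the first segment because segments must follow the buffer's logical order.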

A device driver using DMA has to talk to hardware connected to the interface bus, which uses physical addresses, whereas program code uses virtual addresses.

As a matter of fact, the situation is slightly more complicated than that. DMA-based hardware uses bus, rather than physical, addresses. Although ISA and PCI bus addresses are simply physical addresses on the PC, this is not true for every platform. Sometimes the interface bus is connected through bridge circuitry that maps I/O addresses to different physical addresses. Some systems even have a page-mapping scheme that can make arbitrary pages appear contiguous to the peripheral bus.

At the lowest level (again, we'll look at a higher-level solution shortly), the Linux kernel provides a portable solution by exporting the following functions, defined in <asm/io.h>. The use of these functions is strongly discouraged, because they work properly only on systems with a very simple I/O architecture; nonetheless, you may encounter them when working with kernel code.

unsigned long virt_to_bus(volatile void *address);
void *bus_to_virt(unsigned long address);

These functions perform a simple conversion between kernel logical addresses and bus addresses. They do not work in any situation where an I/O memory management unit must be programmed or where bounce buffers must be used. The right way of performing this conversion is with the generic DMA layer, so we now move on to that topic.
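On a PC-style 32-bit system, kernel logical addresses typically differ from physical addresses by a constant (PAGE_OFFSET, commonly 0xC0000000 on 32-bit x86), and ISA/PCI bus addresses equal physical addresses, so the conversion reduces to fixed arithmetic. The user-space model below makes that explicit; MODEL_PAGE_OFFSET, MODEL_BUS_OFFSET, model_virt_to_bus, and model_bus_to_virt are hypothetical, and the nonzero-bridge case is only representable by changing the assumed constant.

```c
#include <stdint.h>

/* Assumptions for this model only: logical addresses start at
 * 0xC0000000, and a bridge adds a fixed physical-to-bus offset
 * (zero on the PC). */
#define MODEL_PAGE_OFFSET 0xC0000000u
#define MODEL_BUS_OFFSET  0x00000000u

static uint32_t model_virt_to_bus(uint32_t logical)
{
    return logical - MODEL_PAGE_OFFSET + MODEL_BUS_OFFSET;
}

static uint32_t model_bus_to_virt(uint32_t bus)
{
    return bus - MODEL_BUS_OFFSET + MODEL_PAGE_OFFSET;
}
```

Nothing in a constant-offset translation can express an IOMMU mapping that differs per buffer, or the substitution of a bounce buffer, which is why this approach breaks down on more complex I/O architectures.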

DMA operations, in the end, come down to allocating a buffer and passing bus addresses to your device. However, the task of writing portable drivers that perform DMA safely and correctly on all architectures is harder than one might think. Different systems have different ideas of how cache coherency should work; if you do not handle this issue correctly, your driver may corrupt memory. Some systems have complicated bus hardware that can make the DMA task easier, or harder. And not all systems can perform DMA out of all parts of memory. Fortunately, the kernel provides a bus- and architecture-independent DMA layer that hides most of these issues from the driver author. We strongly encourage you to use this layer for DMA operations in any driver you write.

Many of the functions below require a pointer to a struct device. This structure is the low-level representation of a device within the Linux device model. It is not something that drivers often have to work with directly, but you do need it when using the generic DMA layer. Usually, you can find this structure buried inside the bus-specific structure that describes your device. For example, it can be found as the dev field in struct pci_dev or struct usb_device. The device structure is covered in detail in Chapter 14.

Drivers that use the following functions should include <linux/dma-mapping.h>.

The first question that must be answered before attempting DMA is whether the given device is capable of such an operation on the current host. Many devices are limited in the range of memory they can address, for a number of reasons. By default, the kernel assumes that your device can perform DMA to any 32-bit address. If this is not the case, you should inform the kernel of that fact with a call to:

int dma_set_mask(struct device *dev, u64 mask);

The mask should show the bits that your device