Introducing Modern System Administration

A system is a group of components and their relationships to one another that together form a complex whole. You are fundamentally trying to navigate the chaos to manage your systems sustainably. There is no one right way to manage systems, but there are paths you can take on your journey to understand your systems, reduce your physical and mental toil, and build a lifelong career tackling interesting challenges.

I've organized this book to provide the resources you need to prepare for your journey to adopt modern system administration technologies, tools, and practices. In this introduction, I will give you some high-level goals that will help you forge your own path to take care of your systems reliably and sustainably.

Map Your Journey

In many ways, system administrators are like hikers embarking into the wilderness. As Figure I-1 shows, we like to think that somewhere out there is a map that will tell us exactly what to do and when to do it, and if we follow that map, we will achieve a perfectly maintained system. We imagine that the path we're about to walk is well lit and that the map we find will have clearly defined milestones and goals.

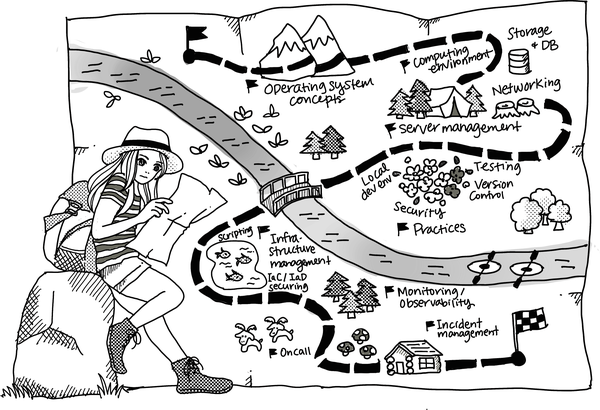

But modern system administration is more like Figure I-2. You can prepare yourself for the journey with some universal tools: the fundamental and critical practices of assembling, monitoring, and scaling any system. You can't predict which specific tools you'll need on your journey or how you're going to need to use them, but you'll be ready to make those decisions and enact them when the time comes. And you don't have to do it alone!

Figure I-1. This image shows what most of us imagine is possible (a clear map with clear goals and a solitary journey) if only we find the right resources and learn the right things. This is not the reality (image by Tomomi Imura).

You must tailor your journey to achieving effective system administration at every organization and on every team you join. Ultimately, the milestones and goals will vary.

When hiking, you don't know each and every turn along the way. Even if you've walked the same path, you may encounter new challenges: a path washed out or wild animals you don't want to disturb. In system administration, you're going to run into unexpected problems (twists and turns) that impact the outcomes of your efforts. So you learn from your mistakes, try different routes, ask for help, and keep trying until you reach your goal.

Figure I-2. There is no such thing as the one resource that will tell us exactly what to do to manage our systems. The path before us is unclear, and the terrain never matches the map, but with the right tools and collaborators we can move forward with confidence that we'll be able to handle whatever lies ahead (image by Tomomi Imura).

This book supports you in establishing the patterns and behaviors that focus your time and energy where they are needed so that you can build quality, reliable, and sustainable systems. The size and scope of your responsibilities will vary. You may be responsible for everything and must balance supporting the whole organization and specific engineering initiatives. You may manage the "IT infrastructure" and how the company conducts business. You may support the specific infrastructure for one product.

When something goes wrong, you need to maintain your systems without harming your own physical and mental health. You are not done when you reach your goal. For a lifelong career, you'll be constantly adjusting to new trails and terrain as technology and practices evolve.

Embrace a Mindset Shift

Being prepared starts with a growth mindset, in which you believe you can grow your capacities and talents over time. You can continue to update your skills and knowledge and persist in facing challenges and failures.

Throughout the book, I share different models to enable you to think about the systems you manage. Models enable understanding and communication and help to explain concepts, represent ideas, and provide common ways of talking to one another. No model is flawless. They aren't meant to be. As you think about the systems that the models represent, remember what Vincent van Gogh wrote, "[Y]our model is not your final aim,"1 and be cautious when the model isn't giving you a good frame to maintain your systems.

Use models like infrastructure as code and the five-layer Internet model to process, visualize, and explain your systems. And build from your experiences to develop new models that advance the practices and technologies in system administration.

At the heart of modern system administration is the fact that your systems are continuously growing in complexity and size as "software eats the world." To be effective, you must recognize change and develop your understanding of what it means to do the job in practice, whether adopting new practices or technologies.

What Is the Job?

You are responsible for building, configuring, and maintaining reliable and sustainable systems, where systems can be specific tools, applications, or services. While everyone within the organization should care about uptime, performance, and security, your perspective focuses on these measurements within the constraints of the organization's or team's budget and the specific needs of the tool, application, or service consumer.

Whether you manage hundreds or thousands of systems, you are a sysadmin if you have elevated privileges on the system. Unfortunately, many people strive to define system administration in terms of the tasks involved or what work the individual does. That's often because the role is not well defined and usually takes on an outsized responsibility for everything that no one else wants to do.

Many describe system administration as the digital janitor role,2 responsible for cleaning up the systems, especially when they aren't working as needed. While the janitor's role in an organization is critical, equating the two is a disservice to both positions.

Closer metaphors for system administrators include plumber, electrician, or HVAC specialist. People take it for granted that modern homes and businesses have running water, electricity, and climate control systems, but these systems require trained specialists to build, install, maintain, and repair them so they run correctly and safely.

Flavors of System Administration

The name for the people who manage systems widely varies (e.g., sysadmin, SRE,3 DevOps engineer, platform engineer, and cloud engineer are just a few). The name of the role may indicate that slightly different skill sets are required. For example, with "SRE," there is often an expectation that engineers are also software engineers with operability skills. With DevOps engineers, there is often an assumption that engineers are strong in at least one modern language and have expertise in continuous integration and deployment. More often, it's just a name and not always a consistent one. Sometimes a team defines the role completely differently and requires specific skills based on the needs of the organization. To avoid a mismatch in expectations, check with the team directly when assessing whether a role is a good fit for you. For example, the acronym SRE can mean site, system, service reliability, or resilience engineering at different organizations.

As an engineering discipline, system administration is one part art and one part science. It's an approach to your work (designing, building, and monitoring your systems) that considers the implications to safety, human factors, government regulations, practicality, and cost. There can be hundreds of different ways to accomplish something. Your knowledge, skills, and experiences will inform which of those many ways you will take while leveraging your analytical skills to monitor for impact and success, identifying when to spend (or save) money or time, and factoring in the cost to humans to support the system.

Embrace Evolving Practices

As technology evolves, the practices to manage the technologies have also adapted. Be prepared to adopt new techniques to stay abreast of changing platforms, reduce a system's impact, and improve its maintainability.

The fundamental sysadmin and dev dynamic changes when you measure your system's reliability and the organization shifts who is responsible for reliability improvements. Today, it's more common for everyone to improve a product's reliability than for a single team to carry the brunt of the support work to keep a system or service running. SRE teams are empowered to help reduce the overall toil of the systems.4

Embrace Collaboration

The pace of change, complexities of our environments, and risks inherent to their failure require the following actions:

- Bringing together expertise from different areas (e.g., development, operations, security, and testing)

- Integrating proposals rather than compromising so that the final solution addresses multiple perspectives

It takes real effort to build the trust and psychological safety that encourage people to voice their opinions and perspectives. When team members have achieved psychological safety with one another, they feel safe to take risks and be vulnerable. For example, an individual on a team who feels a high degree of psychological safety will proactively share when they need help. This established mutual support can help prevent failures in the system.

Encourage a culture that enables and supports people asking probing questions to help everyone come to a shared understanding (we're working toward the same goal) and promotes intellectual courage (experts are fallible). Some questions include the following:

- Why? Why are we doing this? Why does it work this way?

- Could you help me understand your perspective?

- What other ways did you think about solving this?

Tip

Learn more about psychological safety, the number-one key dynamic of high-performing teams that Google's People Operations identified from its research with the re:Work program.

Embracing collaboration leads to working well with others so that when you most need them, your collaborators will be available (and willing) to provide support because you have already built up those relationships and prepared for that eventuality.

Embrace Sustainability

Sustainability is the measurement of a system that enables the humans in the system to thrive, leading healthy lives while working. Regardless of the size and scope of your work, eight measurements inform the sustainability of your work:

- Performance: Measures the system's capability of doing useful work for a period of time. System performance is defined differently depending on the service or product you build.

- Scalability: Measures the system's adaptability to add and remove individual components.

- Availability: Measures the length of the time the system functions as expected.

- Reliability: Measures how well a system consistently performs its specific purpose for a period of time.

- Maintainability: Measures the ease of deploying, updating, and deprecating a system.

- Simplicity: Measures the ease of a new engineer understanding the system.

- Usability

- Observability: Measures how well you can figure out what is going wrong with a system under observation, recognizing not all systems need a high level of observability.
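
To make one of these measurements concrete, here is a minimal Python sketch of availability, expressed as the fraction of a time window during which the system functioned as expected. The `Outage` record and the `availability` function are hypothetical names chosen for illustration, and the sketch assumes that you record the start and end of each outage and that outages do not overlap. Your organization may define and measure availability differently.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Outage:
    """Hypothetical record of one period when the system did not function as expected."""
    start: datetime
    end: datetime


def availability(window_start: datetime, window_end: datetime, outages: list[Outage]) -> float:
    """Return the fraction of the window (0.0 to 1.0) during which the system was up.

    Assumes the outages do not overlap one another.
    """
    window = window_end - window_start
    if window <= timedelta(0):
        raise ValueError("window_end must be after window_start")

    downtime = timedelta(0)
    for outage in outages:
        # Count only the part of each outage that falls inside the window.
        start = max(outage.start, window_start)
        end = min(outage.end, window_end)
        if end > start:
            downtime += end - start

    return 1 - downtime / window


# Example: one outage of 43 minutes 12 seconds in a 30-day window.
window_start = datetime(2024, 1, 1)
window_end = window_start + timedelta(days=30)
outages = [Outage(datetime(2024, 1, 10, 3, 0), datetime(2024, 1, 10, 3, 43, 12))]
print(f"{availability(window_start, window_end, outages):.3%}")
```

Clipping each outage to the window keeps an outage that began before the window, or is still ongoing at its end, from being counted in full.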

In the following chapters, I will share the different technologies and practices that help you meet the goals you set for these measures and, ultimately, improve the sustainability of your systems.

Wrapping Up

Your journey will be specific to your systems and the people who support those systems. No one can provide a perfectly defined checklist that tells you exactly what you need to learn or do and when. Still, you can better prepare yourself with the appropriate toolkit: an understanding of the fundamentals and the key practices of assembling, monitoring, and scaling your systems.

What it means to be a system administrator is constantly evolving. Adopt a growth mindset and foster the talents and skills necessary to sustain a lifelong career as technology and practices change.

Ask for help and build on collaborative practices that enable you to work effectively with your team by building psychological safety. Use models to inform your understanding, and build upon them to advance system administration practices.

Embrace sustainability. You deserve to thrive and have a whole career supporting the systems you manage.

1 Vincent van Gogh quoting Dickens: "[Y]our model is not your final aim, but the means of giving form and strength to your thought and inspiration" in a letter to his brother.

2 Check out the many roles of system administrators in Appendix B of Thomas Limoncelli et al.'s book The Practice of System and Network Administration (Addison-Wesley Professional).

3 Learn more about being an SRE from Alice Goldfuss's blog post "How to Get into SRE" and Molly Struve's blog post "What It Means to Be a Site Reliability Engineer."

4 Learn about the reduction of toil and its impact on teams from Stephen Thorne's Medium article on the tenets of SRE.
